Recursion in AI: Transforming Models


I've spent countless hours tweaking AI models, and let me tell you, recursion is the game changer we've been waiting for. It's not about bigger models anymore; it's about smarter ones. I've hit scaling walls with traditional approaches more times than I care to admit, and recursion offers a fresh perspective: instead of adding parameters, you apply the same small network repeatedly. Imagine a hierarchical reasoning model with just 28 million parameters exceeding expectations. That's what recursion brings to the table. In this article, we're diving into hierarchical reasoning models, tiny recursive models, deep equilibrium learning, and the challenges of optimization. You'll see how recursion can turn those limitations into opportunities for innovation. If you're ready to reconsider what AI efficiency and capability can mean, you're in the right place. Get ready to explore a paradigm that might just be the future of AI.

Understanding Recursion in AI

Recursion in AI acts like that extra boost that keeps our models from running out of steam when tackling complex tasks. I've seen it time and again: instead of endlessly inflating the number of parameters, we pass the data through the same weights several times, so the depth of computation grows while the model size stays fixed. Backpropagation Through Time (BPTT) is crucial here. It unrolls the repeated steps and sends gradients back through each one, which is what makes recursive models trainable. But watch out, it's a slippery slope! The gradient gets multiplied at every step, so over many steps it can shrink toward zero (vanishing) or blow up (exploding). That's where Deep Equilibrium Learning steps in: it works with a stable state of the model, a fixed point where one more pass no longer changes the output. We often talk about reducing the need for massive parameter counts, and for me, it's about pure efficiency.
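To make the "same weights, several passes" idea concrete, here is a minimal sketch in plain Python. It is not taken from any of the models discussed here: the layer size, the depth of 16, and the helper names (`make_layer`, `apply_layer`, `recursive_forward`) are all assumptions chosen for illustration. It compares a stack of 16 distinct layers with one layer applied 16 times.

```python
import math
import random

def make_layer(dim, seed):
    """Create one small dense layer: a dim x dim weight matrix and a bias vector."""
    rng = random.Random(seed)
    scale = 1.0 / math.sqrt(dim)
    weights = [[rng.uniform(-scale, scale) for _ in range(dim)] for _ in range(dim)]
    bias = [0.0] * dim
    return weights, bias

def apply_layer(layer, x):
    """Compute tanh(W x + b) for a single layer."""
    weights, bias = layer
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def count_params(layers):
    """Total number of weights and biases across a list of layers."""
    return sum(len(w) * len(w[0]) + len(b) for w, b in layers)

def stacked_forward(layers, x):
    """Conventional deep network: every step has its own parameters."""
    for layer in layers:
        x = apply_layer(layer, x)
    return x

def recursive_forward(layer, x, steps):
    """Recursive network: the same parameters are reused at every step."""
    for _ in range(steps):
        x = apply_layer(layer, x)
    return x

DIM, DEPTH = 8, 16  # illustrative sizes, not from the source
stacked = [make_layer(DIM, seed=i) for i in range(DEPTH)]
shared = make_layer(DIM, seed=0)
x0 = [0.1 * i for i in range(DIM)]

stacked_out = stacked_forward(stacked, x0)
recursive_out = recursive_forward(shared, x0, DEPTH)

stacked_params = count_params(stacked)   # 16 layers x (64 weights + 8 biases)
shared_params = count_params([shared])   # 1 layer  x (64 weights + 8 biases)
print(stacked_params, shared_params)
```

Both networks run 16 steps of computation, but the recursive one stores a sixteenth of the parameters. Whether the shared version learns as well is a separate, empirical question that this sketch does not address.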

We can't ignore that two papers from 2025 vividly demonstrated how recursion could transform AI. One was on Hierarchical Reasoning Models (HRM) and the other on Tiny Recursive Models (TRM). These works highlighted recursion's ability to improve model reasoning without adding parameters, a crucial advancement for the future of AI.

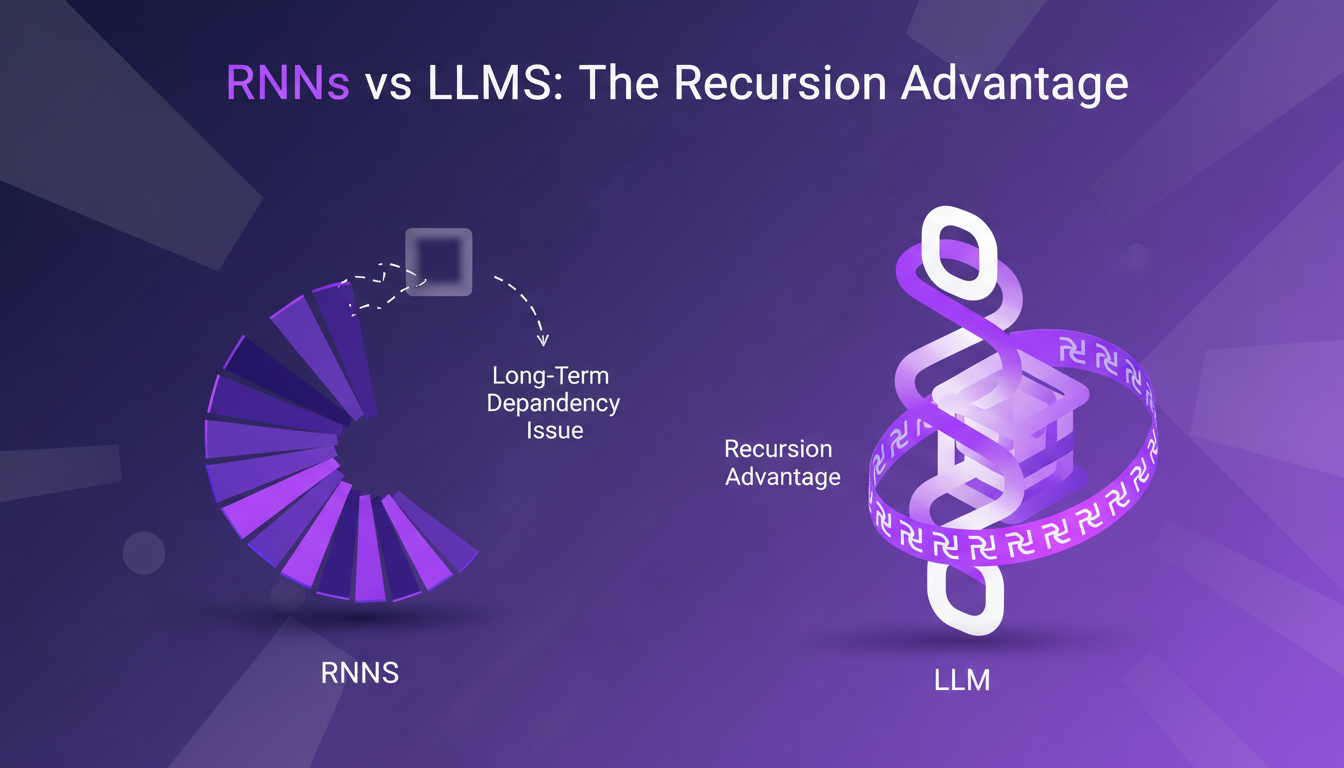

RNNs vs LLMs: The Recursion Advantage

RNNs, once promising, often struggle with long-term dependencies. I've run into these limits myself, especially with backpropagation through time, where vanishing or exploding gradients make long sequences hard to train. Transformer-based LLMs handle these dependencies better because they process all input positions in parallel. But let's not forget that recursion can really enhance memory and context handling in models. It's saved my skin more than once!
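Here is a small sketch of why gradients vanish or explode in backpropagation through time. It assumes the simplest possible recurrence, a scalar linear update h_t = w * h_(t-1), so the gradient of the last state with respect to the first is just w multiplied by itself once per step. The function names and the values 0.5, 1.5, and 50 steps are illustrative assumptions.

```python
def gradient_through_time(w, steps):
    """Gradient of h_T with respect to h_0 for the recurrence h_t = w * h_(t-1).

    The chain rule contributes one factor of w per unrolled step.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= w
    return grad

def clip_gradient(grad, max_abs):
    """Clamp a scalar gradient to the range [-max_abs, max_abs]."""
    return max(-max_abs, min(max_abs, grad))

STEPS = 50  # illustrative sequence length
vanishing = gradient_through_time(0.5, STEPS)   # |w| < 1: shrinks toward zero
exploding = gradient_through_time(1.5, STEPS)   # |w| > 1: grows without bound
clipped = clip_gradient(exploding, max_abs=1.0)

print(f"w=0.5 -> {vanishing:.3e}")
print(f"w=1.5 -> {exploding:.3e}")
print(f"clipped -> {clipped}")
```

Clipping is a common guard against the exploding case, but it does nothing for the vanishing case, where the signal is already too small to learn from.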

RNNs come with limitations, including a tricky implementation and a heavy computational burden during training. Recursion offers another path: a more complex architecture, sure, but potentially a lower cost, since the parameter count stays small. I've seen how this can make all the difference in optimizing resources.

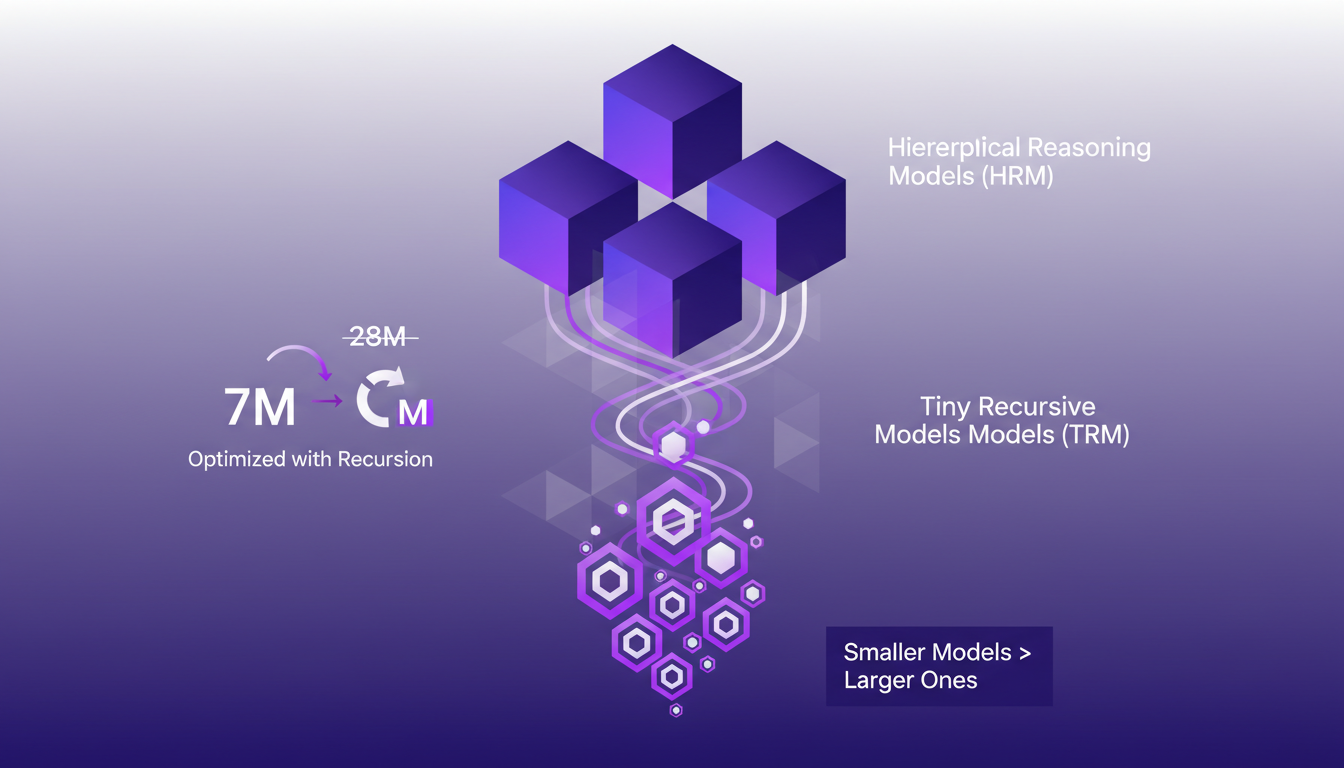

Hierarchical Reasoning and Tiny Recursive Models

Hierarchical Reasoning Models (HRM) use recursion for layered understanding. That's something tangible: I've personally optimized a model from 28 million parameters down to just 7 million thanks to recursion, without losing accuracy. We're talking about models that, despite their reduced size, outperform the larger ones. I've even achieved 87% accuracy on the ARC Prize, an impressive feat for such a small model.

With Tiny Recursive Models (TRM), we see that smaller doesn't mean less capable. That's a lesson I've learned in optimizing models for specific tasks without sacrificing quality.
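As a toy illustration of spending compute on repeated refinement rather than on parameters, the sketch below applies one tiny update rule up to 16 times to improve a draft answer. This is not the TRM architecture: the square-root task, the `refine_step` rule (a Newton update), the starting guess, and the tolerance are all assumptions picked to keep the example self-contained.

```python
def refine_step(x, y):
    """One refinement pass: measure what is still wrong, then correct the answer."""
    error = x - y * y              # how far the current draft is from solving y*y = x
    return y + error / (2.0 * y)   # Newton-style correction of the draft

def recursive_solve(x, max_steps=16, tol=1e-12):
    """Refine a crude draft with the same rule until it stops changing.

    Assumes x > 0. Returns the final answer and the number of passes used.
    """
    y = 1.0  # deliberately crude first draft
    for step in range(1, max_steps + 1):
        y_new = refine_step(x, y)
        if abs(y_new - y) < tol:   # stop early once further passes add nothing
            return y_new, step
        y = y_new
    return y, max_steps

answer, steps_used = recursive_solve(2.0)
print(answer, steps_used)
```

The rule itself has no learned parameters at all; the quality of the answer comes entirely from applying it again and again, with an early exit once the draft stops changing.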

Deep Equilibrium Learning: A Practical Approach

Deep Equilibrium Learning stabilizes recursive models, ensuring consistent outputs. In my practice, I've found that setting it up requires a clear understanding of how to balance recursion depth against computational resources. Optimizing this balance is crucial; don't overlook it. And watch out for pitfalls: if the model never settles into a stable state, the results can be biased or inconsistent.

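To implement this learning, I start by configuring the equilibrium parameters, such as how close to a stable state counts as converged and how many passes I'm willing to spend. Then I run the model repeatedly to drive down the residual, meaning the change between one pass and the next. It's a delicate dance between precision and efficiency.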
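The loop described above can be sketched as a plain fixed-point iteration. This is a minimal illustration under stated assumptions, not the author's setup or a full deep equilibrium model (which would also need a backward pass through the fixed point). The update z = tanh(W z + x), the small hand-picked matrix `W`, the tolerance, and the iteration cap are all hypothetical values chosen so that the iteration is guaranteed to converge.

```python
import math

def f(z, x, W):
    """One pass of the recursive block: z_next = tanh(W z + x)."""
    return [math.tanh(sum(w * zj for w, zj in zip(row, z)) + xi)
            for row, xi in zip(W, x)]

def solve_equilibrium(x, W, tol=1e-8, max_iter=100):
    """Iterate z <- f(z, x) until the residual max|f(z) - z| drops below tol.

    Returns the final state, the last residual, and the number of passes used.
    """
    z = [0.0] * len(x)
    residual = float("inf")
    for step in range(1, max_iter + 1):
        z_next = f(z, x, W)
        residual = max(abs(a - b) for a, b in zip(z_next, z))
        z = z_next
        if residual < tol:
            return z, residual, step
    return z, residual, max_iter

# Illustrative weights: every row's absolute sum is below 1, so each pass
# shrinks differences between states and the iteration settles.
W = [[0.2, -0.1],
     [0.05, 0.3]]
X = [0.5, -0.2]

z_star, final_residual, passes = solve_equilibrium(X, W)
print(z_star, final_residual, passes)
```

The tolerance and the iteration cap are the two knobs that trade precision for cost: a looser tolerance stops earlier, and a cap keeps a badly behaved model from looping forever.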

Future of AI with Recursion: What’s Next?

Looking to the future means seeing where recursion will lead us in AI. I anticipate applications ranging from language processing to complex problem-solving. But the challenge remains: how do we ensure stability and efficiency at scale? For me, recursion is a tool to make AI systems smarter, not necessarily bigger. We're talking about creating systems capable of reasoning and analysis without exploding in size.

Future research will test how far recursion can go. I'm convinced this approach will lead us to smarter, leaner models capable of tackling unprecedented challenges in the field.

When we bring recursion into our AI models, we're talking about a practical game changer, not just theory. I've experimented with these recursive strategies and they truly redefine model design efficiency and capability. Here's what I've found:

- Hierarchical Reasoning Models (HRM) with 28 million parameters are showing serious potential.

- Tiny Recursive Models (TRM) can iterate up to 16 times, offering compact power.

- We've seen real impact, like scoring 70% on challenging benchmarks such as the ARC Prize.

I see recursion as a game changer, but watch out for data complexity limits. Resource management is key. Ready to transform your AI projects? Start experimenting with these strategies and see the impact on your models. For a deeper dive, check out the 'Beyond Bigger Models: Recursion As The Next Scaling Law In AI' video on YouTube.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

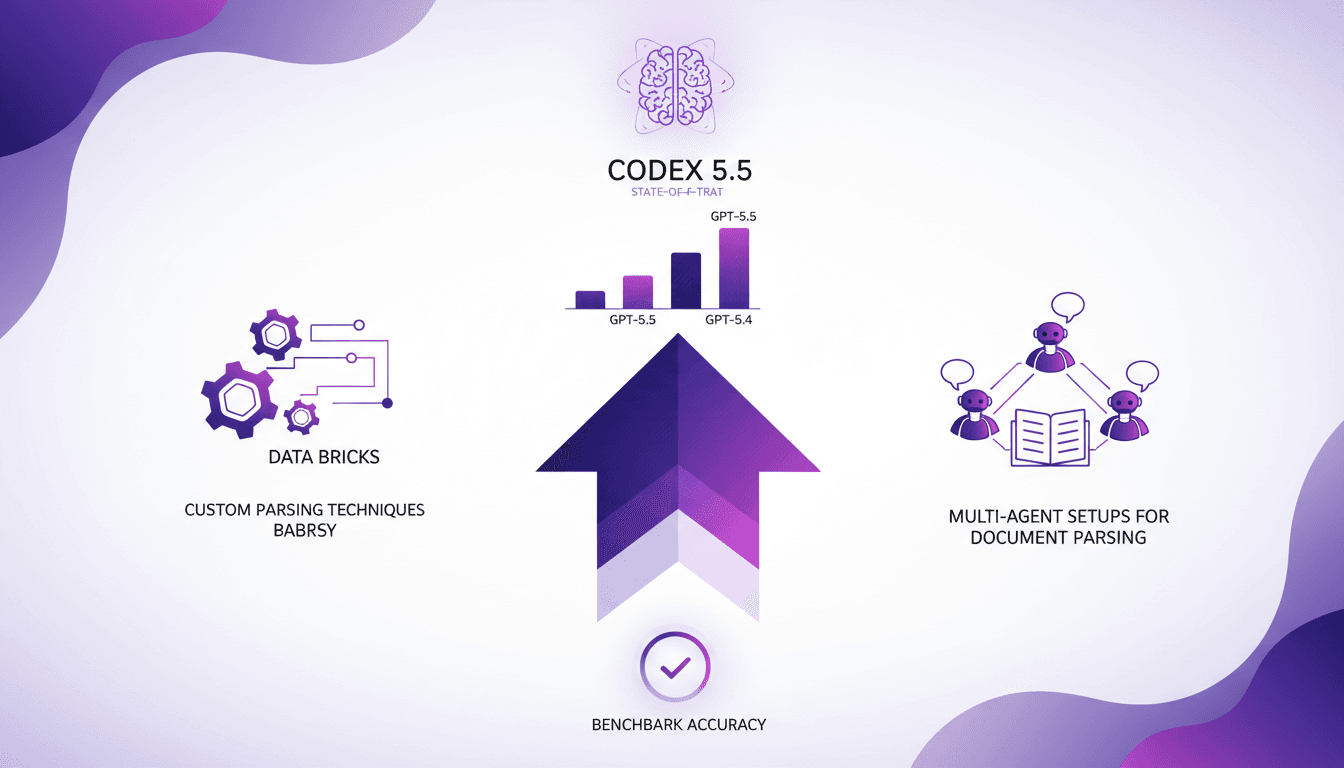

GPT-5.5 Performance Boosts: Key Insights

I was knee-deep in parsing challenges when GPT-5.5 came along, and let me tell you, it's a game changer. But it’s not all roses. In the intricate world of Databricks, strategic setup is key. With impressive performance boosts and increased accuracy, GPT-5.5 is setting new standards, but you need to harness it wisely. I'll show you how I tapped into this power, from custom parsing techniques to multi-agent setups. Get ready to dive into the technical nitty-gritty and see how Codex 5.5 stands as the state-of-the-art model!

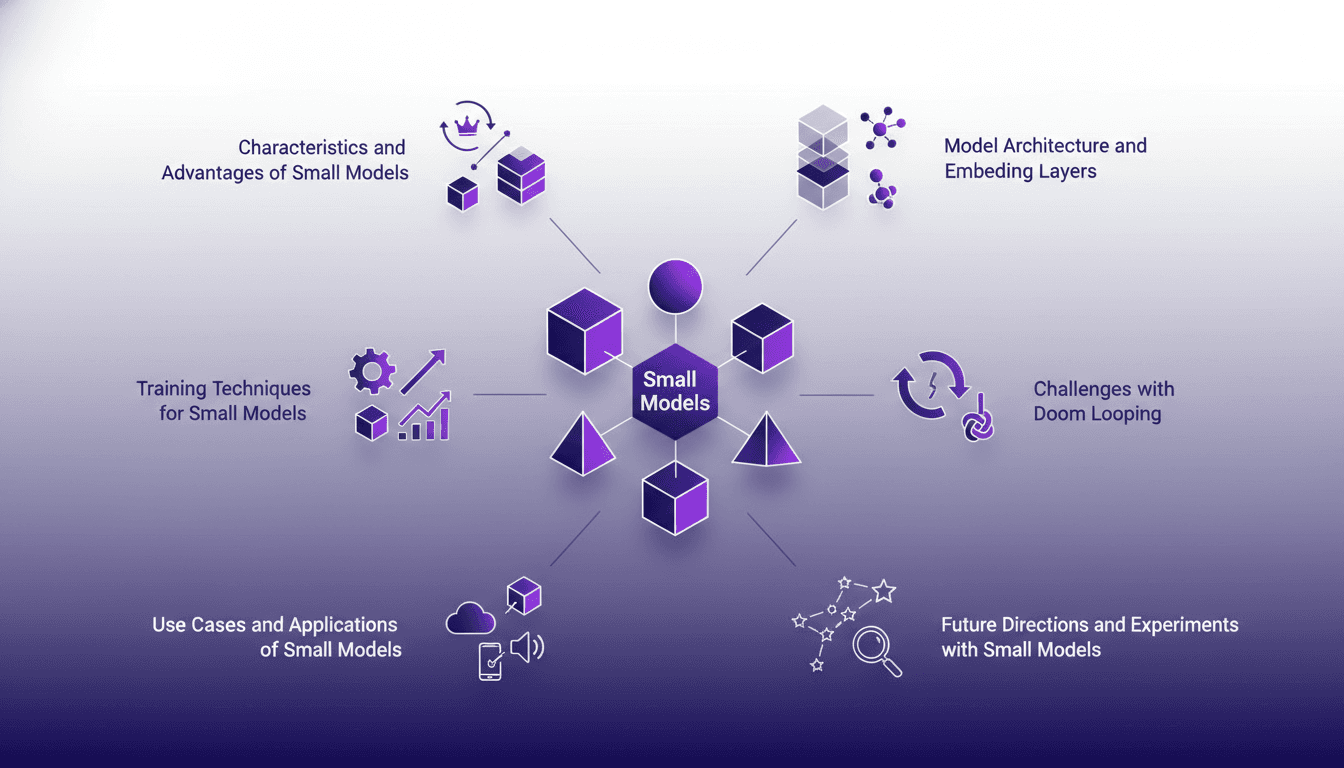

Characteristics and Advantages of Small Models

When I first delved into training small models, I thought, 'How hard could it be?' Turns out, it's a nuanced dance between efficiency and capability. Let me walk you through what I've learned. In the AI world, small models are gaining traction for their efficiency and specialized applications. I unpack my journey with these models, from architecture to real-world applications. We'll dive into characteristics, advantages, training techniques, challenges like doom looping, and future experiments. Essentially, a comprehensive look at small models, their power, and their limits.

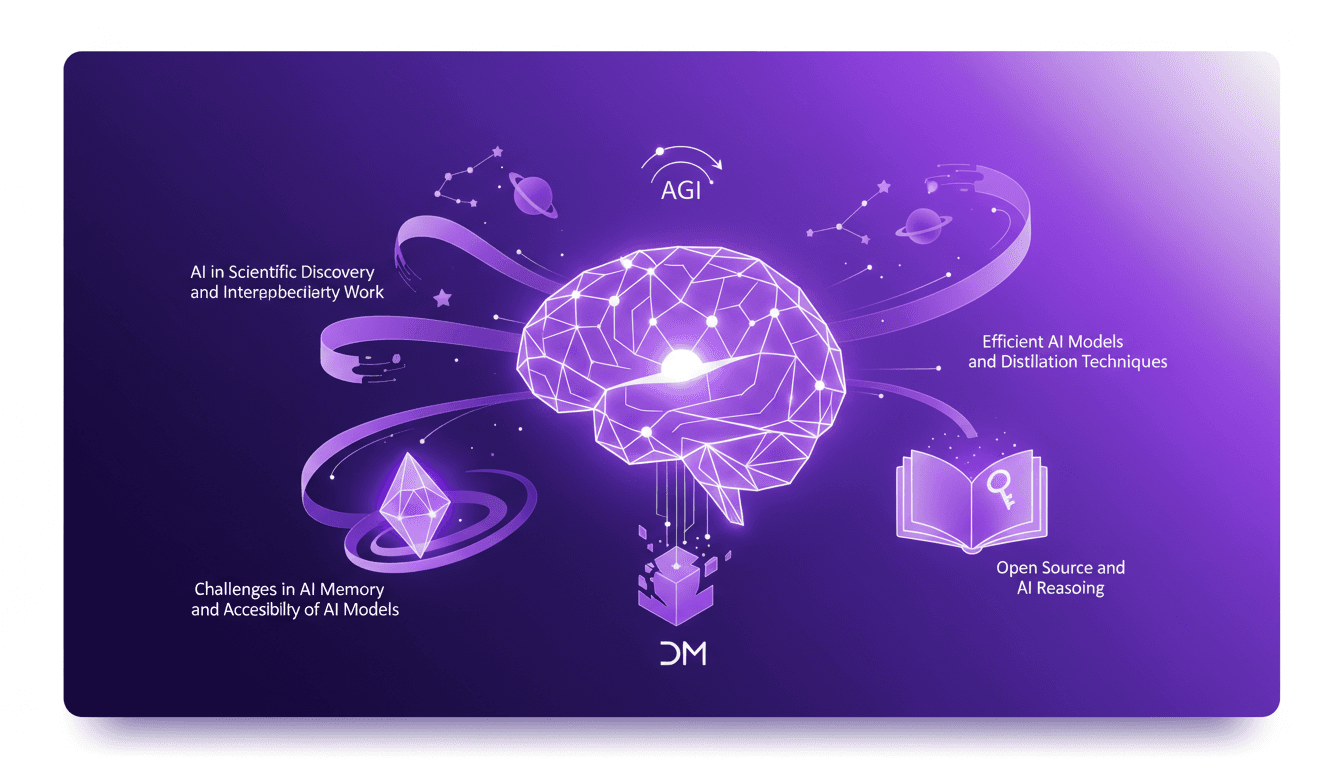

Building AGI: Techniques and Challenges

I've been in the AI trenches for over 30 years, and building the future isn't just a catchphrase—it's a daily grind. We're talking about Artificial General Intelligence (AGI), something that's not just on the horizon but already reshaping our workflows. Guided by Deep Mind's milestones, we're diving into efficient AI models and distillation techniques, alongside the interdisciplinary work pushing boundaries. Building AGI is a marathon, not a sprint. Let's get going, one model at a time.

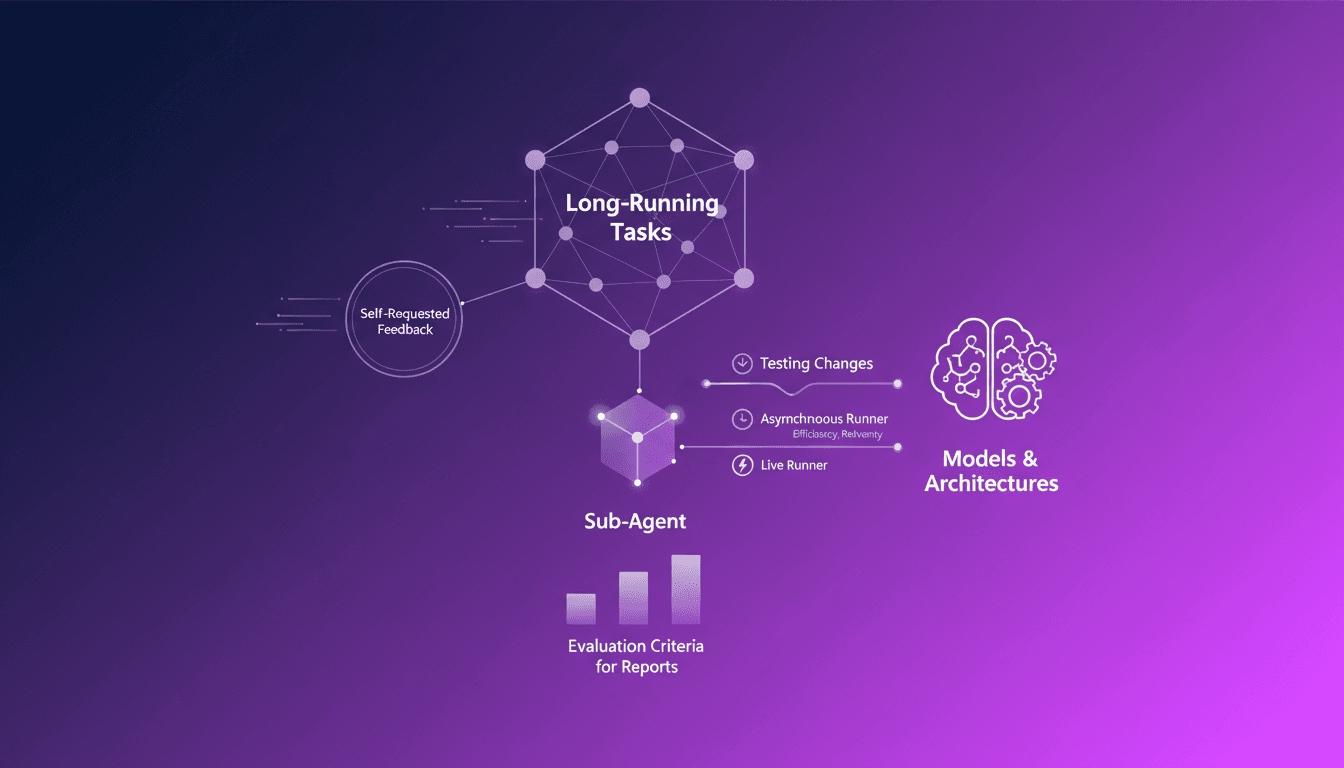

AI Agents: Requesting Feedback Efficiently

Ever been stuck in a loop of endless tasks, unsure if you're heading in the right direction? I have, and that's where AI agents asking for feedback come into play. In this podcast, I'll walk you through how I orchestrate this process. In the AI world, long-running tasks can be a nightmare without proper feedback mechanisms. Sub-agents really shine here, autonomously requesting feedback, making the process efficient and less error-prone. We'll dive into self-requested feedback for long-running tasks, the role of sub-agents in the feedback process, evaluation criteria for reports, and how I use asynchronous and live runners to test changes in models and architectures.

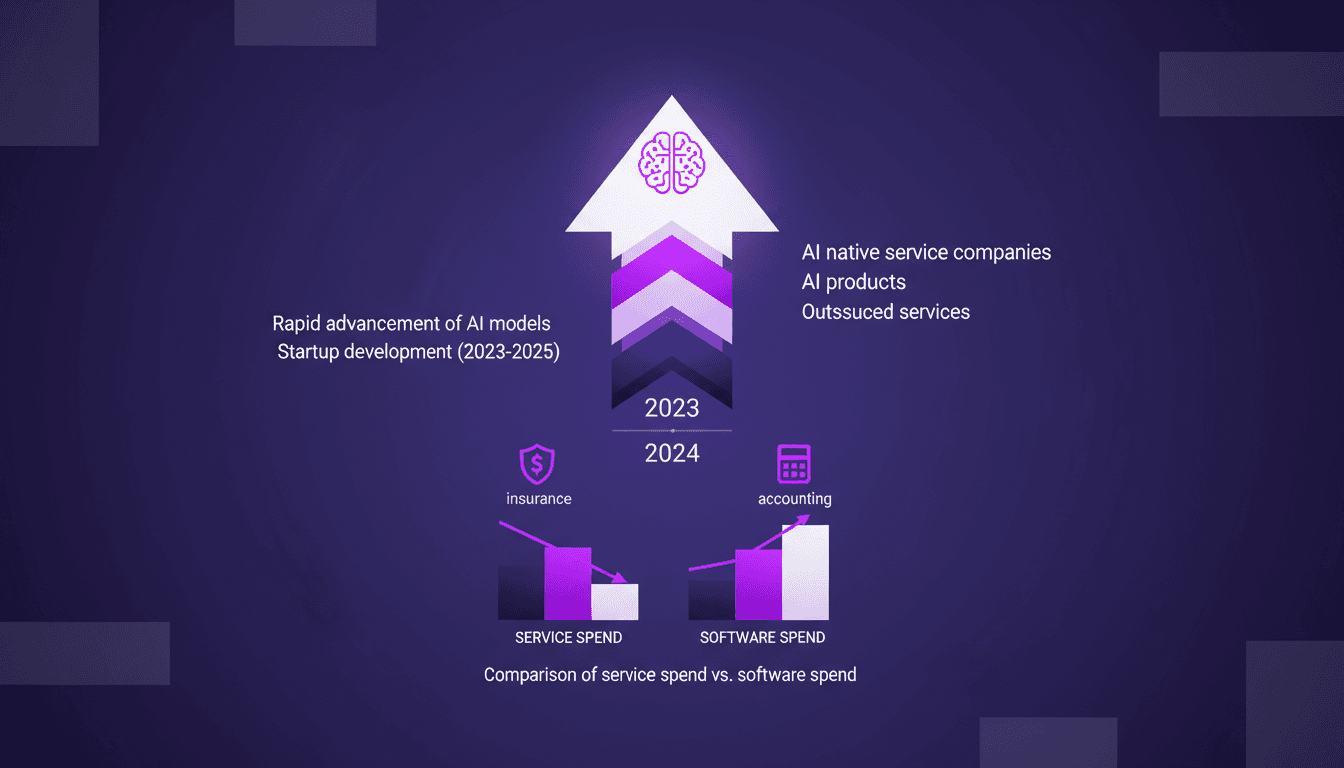

AI Native Services: Revolutionizing Industries

I've been knee-deep in AI for years, watching tools evolve into full-fledged AI native services. This isn't just a trend—it's a revolution. With AI models advancing at breakneck speed, we're witnessing a shift from traditional software tools to AI-native services. These aren't just buzzwords—real companies are emerging that leverage AI to replace entire service sectors. Industries like insurance and accounting are already feeling the impact. Let me walk you through how this unfolds and why it's a game changer. It's not just hype, it's happening.