Characteristics and Advantages of Small Models

When I first delved into training small models, I thought, 'How hard could it be?' Turns out, it's a nuanced dance between efficiency and capability. In the AI world, small models are gaining traction for their efficiency and specialized applications, so let me walk you through what I've learned. I started with the architecture and embedding layers (and quickly realized it's not just a matter of clicking a button). I've also explored training techniques and challenges like doom looping, which have burned me more than once. Then there are the real-world use cases where these models truly shine, and the future directions and experiments I'm currently working on. In short, this is an overview of both the power and the limits of small models.

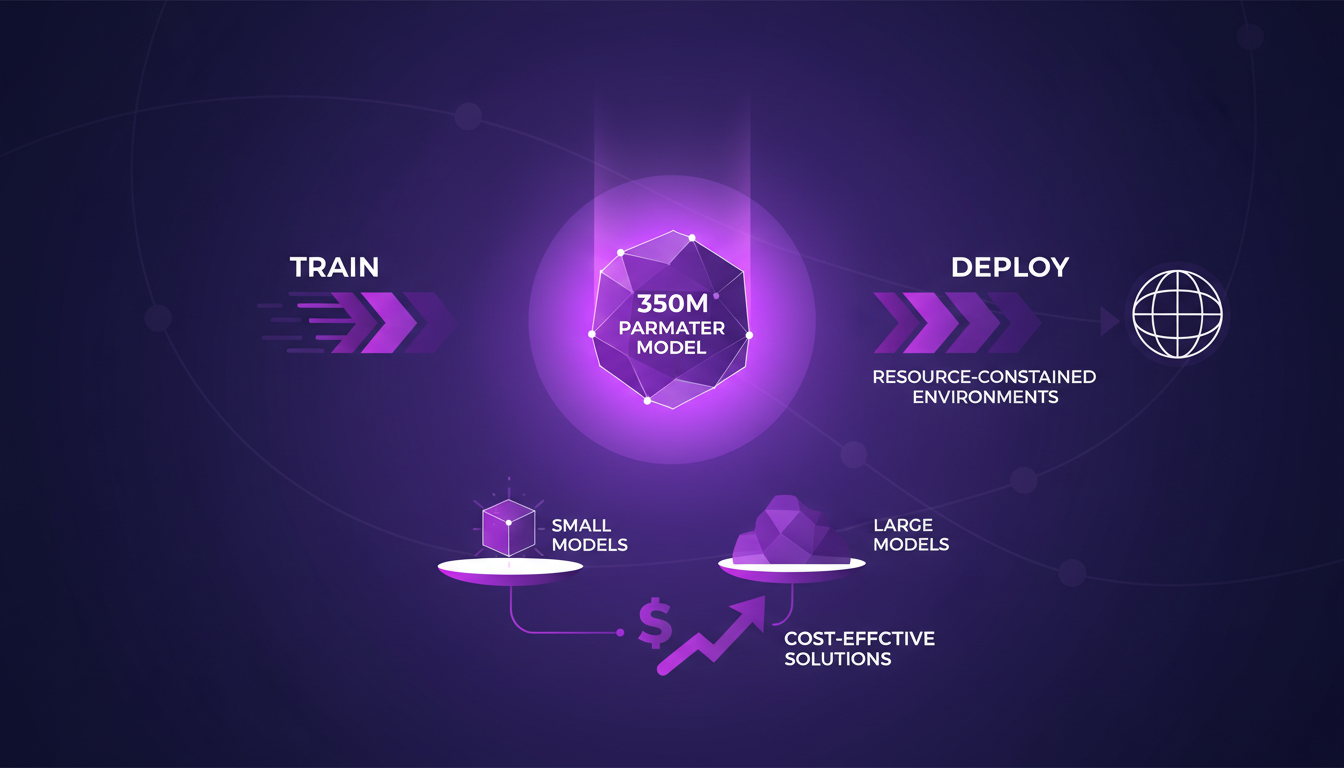

In my experience at Liquid AI, I've often found that small models, like the 350M-parameter one, hit the sweet spot between performance and resource demands. They are faster to train and deploy, which makes them a good fit for environments where resources are constrained. In real-time applications, for example, where latency must stay minimal, their efficiency really pays off.

But watch out: there's a trade-off between model size and accuracy, and the challenge is finding the right balance. I've often seen larger models used where they were overkill and a smaller model would have sufficed. That is where the real advantage lies: a cost-effective solution that still delivers the performance the task requires.

- Fast training and deployment

- Ideal for resource-constrained environments

- Balance between size and accuracy

- Cost-effective solutions for specific tasks

Model Architecture and Embedding Layers

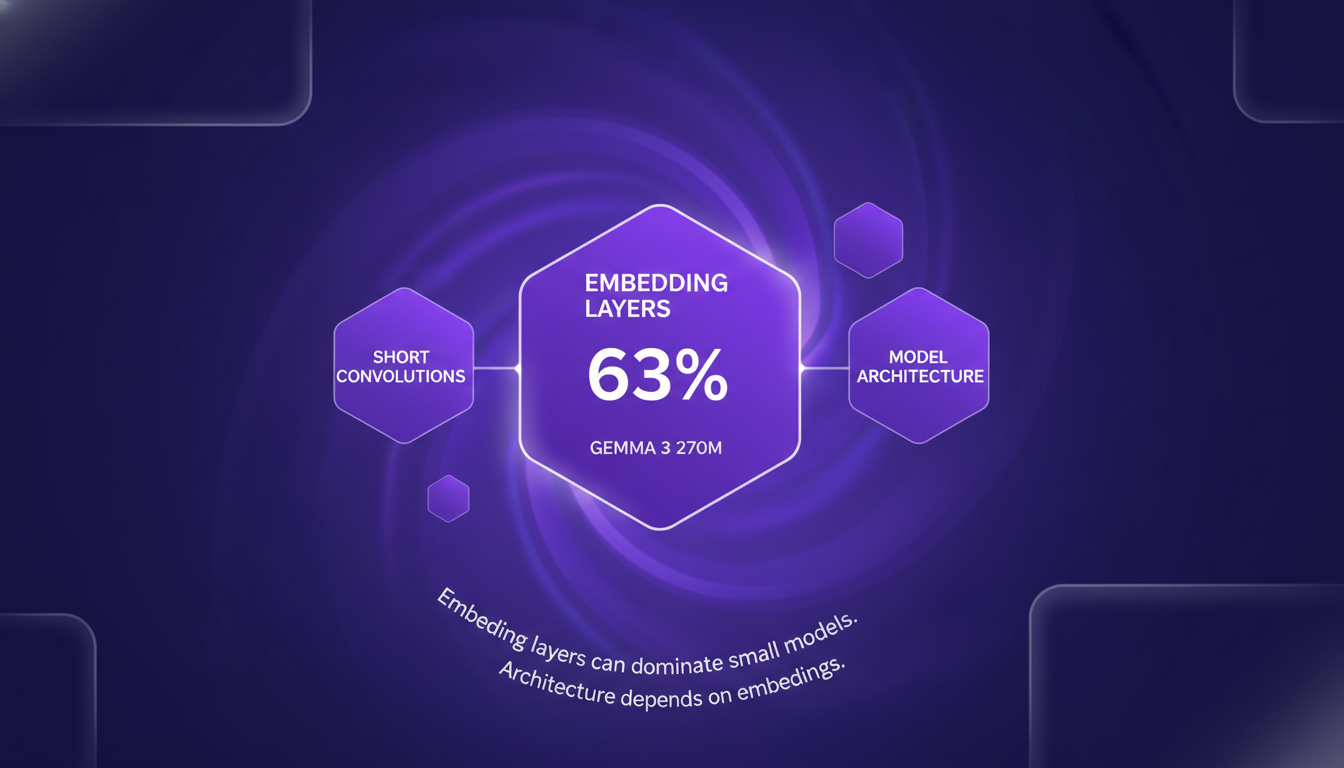

Architecture plays a crucial role in optimizing models for specific tasks. I've experimented with various architectures, and one thing I keep noticing is that embedding layers account for a large share of a small model's parameters. In the Gemma 3 270M model, for example, they make up 63% of the total. That's huge!
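Why embeddings dominate at this scale comes down to arithmetic: the token-embedding matrix alone holds `vocab_size × d_model` parameters, and that term does not shrink along with the rest of the network. The sketch below assumes configuration values for Gemma 3 270M (a 262,144-token vocabulary, hidden size 640, and tied input/output embeddings so the matrix is counted once); treat those numbers as assumptions to check against the model card.

```python
def embedding_params(vocab_size: int, d_model: int, tied: bool = True) -> int:
    """Parameters in the token-embedding matrix (plus the output head if untied)."""
    per_matrix = vocab_size * d_model
    # With tied weights, the output projection reuses the embedding matrix.
    return per_matrix if tied else 2 * per_matrix


def embedding_share(vocab_size: int, d_model: int, total_params: int, tied: bool = True) -> float:
    """Fraction of all parameters that live in the embedding layer(s)."""
    return embedding_params(vocab_size, d_model, tied) / total_params


# Assumed values for Gemma 3 270M: 262,144-token vocabulary, hidden size 640,
# tied embeddings, and a nominal total of 270M parameters.
vocab, d_model, total = 262_144, 640, 270_000_000
emb = embedding_params(vocab, d_model)
share = embedding_share(vocab, d_model, total)
print(f"embedding params: {emb:,} ({share:.1%} of a nominal {total:,})")
```

With these assumed numbers the embedding matrix holds about 168M parameters, roughly 62% of a nominal 270M total; the small gap from the 63% figure comes from rounding the total parameter count. Because the vocabulary term stays fixed while the transformer blocks shrink, its share grows as models get smaller.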

Short convolutions are also key to keeping these models efficient without giving up too much performance; in my tests, they have proven faster than the alternatives I compared them against. Understanding the architecture at this level is invaluable when optimizing a model for a specific task.

- Embedding layers often dominate small models

- Short convolutions are crucial for efficiency

- Optimization of architecture for specific tasks
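To make "short convolution" concrete, here is a minimal sketch of the general idea: a depthwise, causal 1D convolution whose kernel spans only a few tokens. It is an illustration under stated assumptions (NumPy, kernel size 3, no gating or input/output projections), not Liquid AI's implementation.

```python
import numpy as np


def short_causal_conv(x: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Depthwise causal 1D convolution with a short kernel.

    x:      (seq_len, d_model) sequence of hidden states
    kernel: (k, d_model) per-channel weights, with k small (e.g. 3)
    Output position t mixes only x[t-k+1], ..., x[t], so the cost is
    O(seq_len * d_model * k) rather than attention's O(seq_len**2 * d_model).
    """
    seq_len, d_model = x.shape
    k = kernel.shape[0]
    # Left-pad with k-1 zero rows so no future token leaks into position t.
    padded = np.vstack([np.zeros((k - 1, d_model)), x])
    out = np.zeros((seq_len, d_model))
    for j in range(k):
        # kernel[j] weights the token that is (k - 1 - j) steps in the past.
        out += kernel[j] * padded[j:j + seq_len]
    return out


rng = np.random.default_rng(0)
x = rng.standard_normal((8, 4))
kernel = rng.standard_normal((3, 4))
y = short_causal_conv(x, kernel)
print(y.shape)  # (8, 4)
```

Each channel is filtered independently ("depthwise"), so the layer has only `k × d_model` weights and its cost grows linearly with sequence length, which is where the speed advantage over full attention comes from.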

Training Techniques for Small Models

When it comes to training small models, two techniques stand out: Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL). Personally, I always start with SFT to give the model a solid foundation before moving to RL.

But watch out: training small models requires precision, and a slight misstep can quickly waste time and compute. Once well trained, though, these models deploy faster and at lower cost.

- SFT for a solid foundation

- RL for task generalization

- Precision is key to avoiding inefficiencies

- Fast and economical deployment
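SFT typically minimizes next-token cross-entropy on curated prompt/response pairs. A common choice, assumed here rather than taken from the talk, is to mask out the prompt tokens so the model is trained only on the response. A minimal NumPy sketch with hypothetical toy logits:

```python
import numpy as np


def sft_loss(logits: np.ndarray, targets: np.ndarray, loss_mask: np.ndarray) -> float:
    """Masked next-token cross-entropy.

    logits:    (seq_len, vocab) scores; row i predicts targets[i]
    targets:   (seq_len,) integer token ids
    loss_mask: (seq_len,) 1 for response tokens, 0 for prompt tokens
    """
    # Numerically stable log-softmax over the vocabulary.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    # Log-probability the model assigns to each correct token.
    token_lp = log_probs[np.arange(len(targets)), targets]
    # Average negative log-likelihood over response tokens only.
    return float(-(token_lp * loss_mask).sum() / loss_mask.sum())


# Toy example: 4 positions, vocabulary of 5; the first 2 positions are prompt.
rng = np.random.default_rng(0)
logits = rng.standard_normal((4, 5))
targets = np.array([1, 3, 0, 2])
mask = np.array([0, 0, 1, 1])
print(sft_loss(logits, targets, mask))
```

In a real decoder you would first shift inputs and targets by one position so that row i of the logits predicts token i+1; the toy example skips that step to keep the masking logic visible.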

Challenges with Doom Looping

A common pitfall when training models is doom looping, where the model gets stuck in unproductive training cycles. I got burned several times before I learned to spot the early signs and correct course quickly.

Preventing doom looping usually comes down to monitoring training closely and tweaking hyperparameters. The trick lies in balancing exploration and exploitation during training.

- Quick identification of doom looping signs

- Monitoring hyperparameters

- Balance between exploration and exploitation
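One way to catch the early signs of an unproductive loop is to track a training metric and flag when it stops improving. The helper below is hypothetical (the class name, `patience`, and `min_delta` are illustrative assumptions, not from the talk): it raises a flag once the metric has failed to beat its best value by at least `min_delta` for `patience` consecutive updates.

```python
class PlateauMonitor:
    """Flags a metric (e.g. eval loss, lower is better) that has stopped improving.

    Hypothetical helper: `patience` and `min_delta` are illustrative defaults.
    """

    def __init__(self, patience: int = 5, min_delta: float = 1e-3):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.steps_since_improvement = 0

    def update(self, value: float) -> bool:
        """Record a new value; return True once training looks stuck."""
        if value < self.best - self.min_delta:
            # Meaningful improvement: remember it and reset the counter.
            self.best = value
            self.steps_since_improvement = 0
        else:
            self.steps_since_improvement += 1
        return self.steps_since_improvement >= self.patience


monitor = PlateauMonitor(patience=3, min_delta=0.01)
losses = [2.0, 1.6, 1.4, 1.395, 1.398, 1.392]
flags = [monitor.update(loss) for loss in losses]
print(flags)  # [False, False, False, False, False, True]
```

When the flag fires, that is the cue to inspect samples and revisit hyperparameters such as the learning rate, rather than letting the run keep circling.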

Use Cases and Future Directions for Small Models

Small models are well suited to edge devices, where resources are limited, and I've seen them succeed in real-time applications thanks to their speed.

Future experiments focus on expanding capabilities without increasing model size. The 450M-parameter vision-language model (VLM) is a testament to the ongoing improvements in what small models can do.

- Perfect for edge devices

- Success in real-time applications

- Future experiments to expand capabilities

- 450M VLM model as proof of improvement
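Part of why small models fit on edge devices is simple arithmetic: weight memory is roughly the parameter count times the bytes per parameter. The sketch below applies that to the 350M and 450M models mentioned here; it counts weights only and ignores activations, the KV cache, and runtime overhead, so treat the results as lower bounds.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_mb(num_params: int, precision: str) -> float:
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e6


for name, params in [("350M model", 350_000_000), ("450M VLM", 450_000_000)]:
    row = ", ".join(
        f"{p}: {weight_memory_mb(params, p):,.0f} MB" for p in BYTES_PER_PARAM
    )
    print(f"{name} -> {row}")
```

At 4-bit precision, the 350M model's weights come to about 175 MB, compared with roughly 48 GB for a 24B-parameter model stored in bf16.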

Training small models taught me that balancing efficiency with capability is a constant journey. First, I pre-trained a 350-million-parameter model, which was quite a learning curve, especially compared with the 24-billion-parameter giants. Then, I refined the architecture and embedding layers to squeeze out performance while keeping the model lean. But watch out: avoiding doom looping is essential to keep making progress.

- Solid architecture and embedding layers: They're the foundation for an efficient model.

- Targeted training techniques: These make all the difference in getting the most out of small models.

- Avoiding doom looping: Constant vigilance is necessary to avoid going in circles.

Looking ahead, small models could be game changers, especially in constrained environments. So, start experimenting today; the benefits are tangible, and the learning curve is genuinely rewarding. Check out Maxime Labonne's full video on YouTube for deeper insights and share your experiences.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

DreamLIVE in London: Turning Dreams into Reality

Ever stood in a room with 600 dreamers? I did at DreamLIVE in London, where aspirations meet action. We dove deep into topics ranging from micro greens to AI-driven creativity. This wasn't just talk; it was a blueprint for building the future. We examined personal aspirations, sustainable food production, the challenges in motorsports, preservation of native horse breeds, and empowering ethnic minority women in corporate spaces. The diversity of journeys and visions turned this gathering into a true wellspring of inspiration, and I left with renewed energy to build my own dreams.

Building AGI: Techniques and Challenges

I've been in the AI trenches for over 30 years, and building the future isn't just a catchphrase—it's a daily grind. We're talking about Artificial General Intelligence (AGI), something that's not just on the horizon but already reshaping our workflows. Guided by Deep Mind's milestones, we're diving into efficient AI models and distillation techniques, alongside the interdisciplinary work pushing boundaries. Building AGI is a marathon, not a sprint. Let's get going, one model at a time.

Mastering Generative AI: A Practical Guide

I still recall diving into AI coding, thinking generative AI was just another buzzword. Then I realized it’s a real game changer, but only if you know how to harness it. First, I immersed myself in its fundamentals—understanding how these tools transform how we code. Engineers spend barely two hours a day on actual coding; the rest is orchestration. And that’s where AI steps in, boosting productivity and redefining our roles. I’ll walk you through how I navigated this complex landscape, from the environmental impact of AI technologies to prompt engineering and context management. Let's explore how mastering generative AI can revolutionize our approach to software development.

Reusable Rockets: Unlocking Space Capacity

I remember the first time I witnessed a reusable rocket launch. It was a game-changer for space capacity. Now, as we push the boundaries of compute power in space, the demand for specialized chips is skyrocketing. With companies like SpaceX and Stoke Space at the forefront, reusable rockets are transforming our approach to space capacity. But it's not just about getting there—it's about what we do once in orbit. That's where inference chips come into play, optimized for unique space conditions. Let's dive into how we're optimizing electronics for the harsh realities of space.

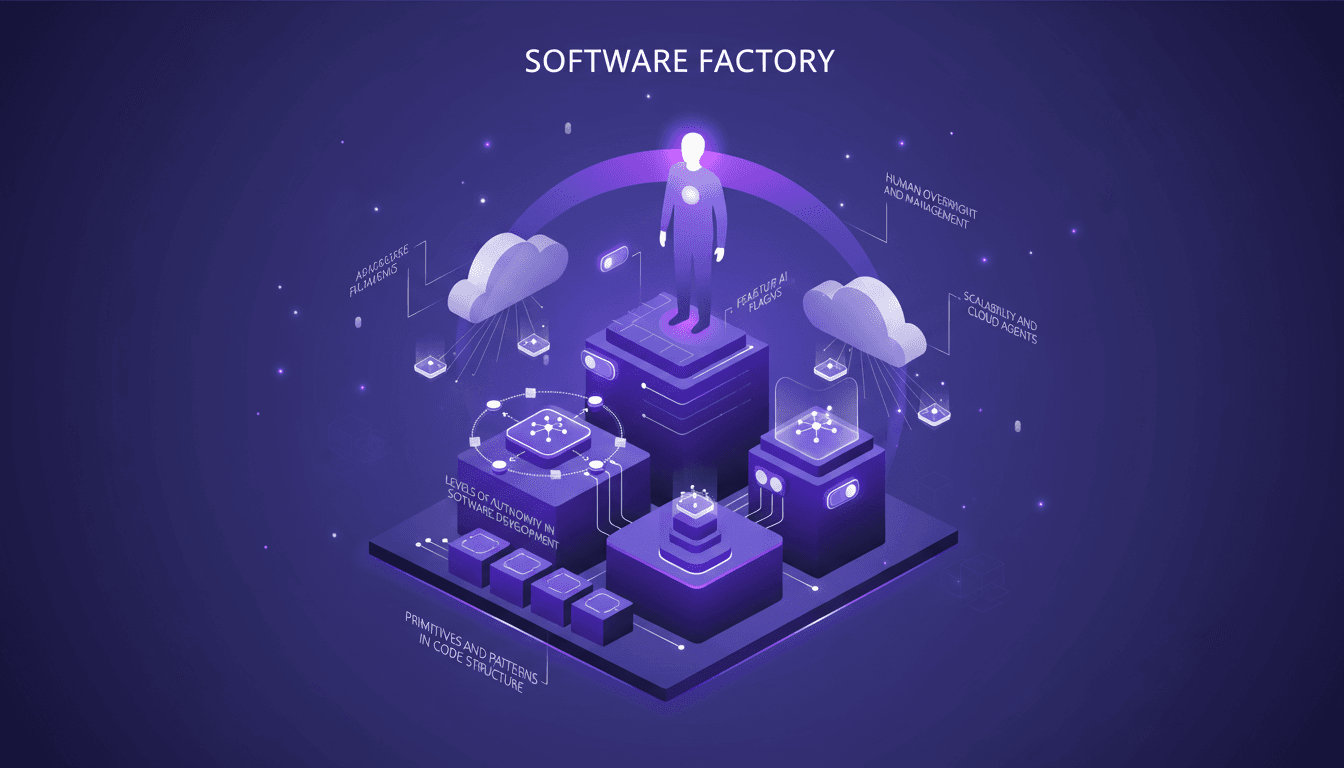

Building Your Software Factory: Key Steps

I remember my first thought about building a software factory. It felt overwhelming, but with a step-by-step approach, it turned manageable. In this article, I walk you through how I set up my own software factory, focusing on efficiency and scalability. We dive into the key components and strategies for success, from the role of AI agents and feature flagging to verification and testing in automated systems. For me, a software factory is more than just automation; it's a paradigm shift that can transform productivity. So how do you pilot this without getting burned? I share my mistakes, successes, and most importantly, the lessons learned.