AI Agents: Requesting Feedback Efficiently

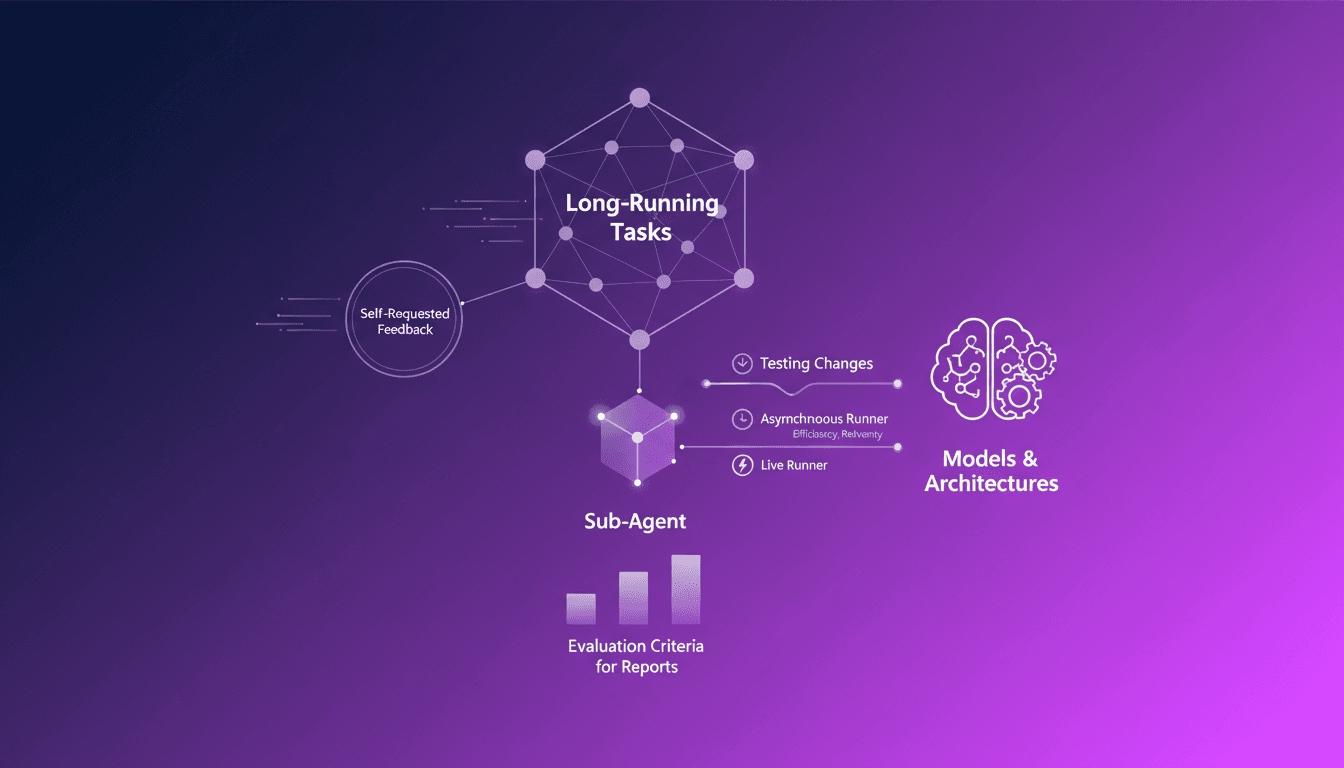


Stuck in an endless loop of tasks, unsure whether you're moving in the right direction? I have been, and what changed things for me was letting AI agents ask for feedback on their own work. Long-running AI tasks without feedback can quickly become a nightmare, so I orchestrated a process where sub-agents request feedback autonomously. This lightens my cognitive load and reduces errors. I'll walk you through how I set up these self-requested feedback loops, the role sub-agents play, and the criteria I use to evaluate reports. We'll also cover how I use asynchronous and live runners to test changes in models and architectures. This isn't just theory; it's practical, everyday stuff.

Understanding Long-Running Tasks

In AI, long-running tasks are those that don't finish in real time. Think of a complex financial report that takes days to process. The challenge is maintaining efficiency and accuracy over that whole period, and I've often had to adjust my processes to keep small errors from accumulating.

This is where feedback becomes crucial. It allows for error correction along the way, preventing downstream disasters. I've adopted AI agents to automate feedback requests, saving precious time that I'd rather spend on strategic analysis than constant monitoring.

"AI agents, when well-orchestrated, turn complex tasks into seamless processes."

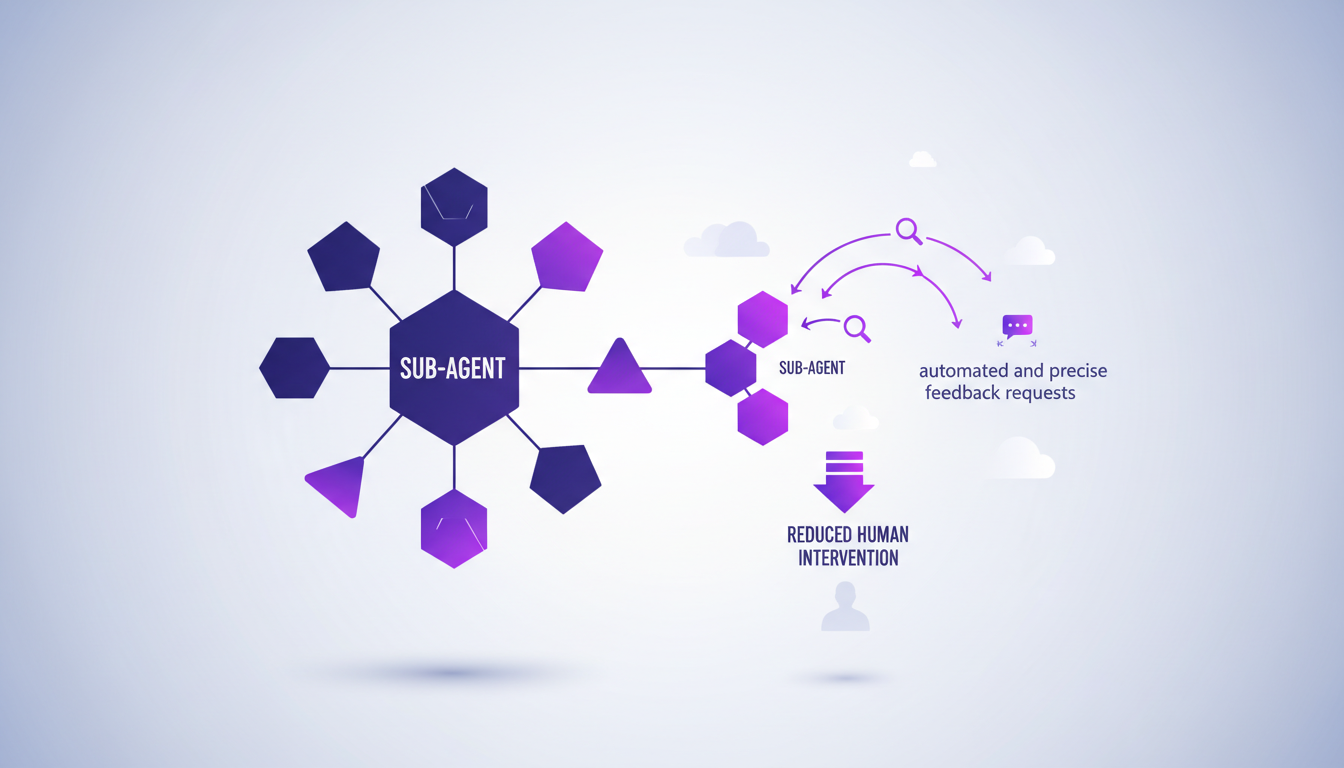

Role of Sub-Agents in Feedback

A sub-agent is a specialized agent for feedback processes. In practice, it makes feedback requests automatically and precisely. I've integrated these sub-agents into my workflow, and the reduction in human intervention has been significant.

Their impact is clear: they streamline the feedback loop by eliminating redundant tasks. In my early days without these tools, each round of feedback required endless meetings. Now, sub-agents deliver efficiency gains I can no longer ignore.

- Automation of feedback requests

- Reduction of human intervention

- Overall efficiency improvement
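To make the idea concrete, here is a minimal Python sketch of a sub-agent that requests feedback at checkpoints during a long task. Everything in it is hypothetical and for illustration only: the names `FeedbackSubAgent`, `run_long_task`, and `toy_reviewer`, the checkpoint interval, and the rule that a rejection stops the run are my assumptions, not the setup described above.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Feedback:
    approved: bool
    note: str = ""


@dataclass
class FeedbackSubAgent:
    """Hypothetical sub-agent whose only job is to request feedback."""
    reviewer: Callable[[str], Feedback]  # a human, a model, or a rule set
    history: List[Feedback] = field(default_factory=list)

    def request(self, draft: str) -> Feedback:
        feedback = self.reviewer(draft)
        self.history.append(feedback)
        return feedback


def run_long_task(steps: List[str], sub_agent: FeedbackSubAgent, every: int = 2) -> List[str]:
    """Run steps in order, pausing for feedback after every `every` steps."""
    done: List[str] = []
    for index, step in enumerate(steps, start=1):
        done.append(step)
        if index % every == 0 or index == len(steps):
            feedback = sub_agent.request(" | ".join(done))
            if not feedback.approved:
                # Assumption: stop early so the error does not propagate downstream.
                break
    return done


def toy_reviewer(draft: str) -> Feedback:
    # Stand-in reviewer: rejects any draft containing the word "error".
    if "error" in draft:
        return Feedback(False, "found an error")
    return Feedback(True)


agent = FeedbackSubAgent(reviewer=toy_reviewer)
steps = ["load", "clean", "join", "error in totals", "report", "publish"]
completed = run_long_task(steps, agent)
print(completed)           # steps that ran before the run was halted
print(len(agent.history))  # number of feedback requests made
```

In this toy run the second checkpoint rejects the draft, so the last two steps never execute. In a real workflow the reviewer would be a model call or a person, and a rejection might trigger a retry rather than a stop.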

Criteria for Evaluating Reports

When it comes to evaluating reports, I've set clear criteria: accuracy, evidence, and clarity. Every claim must be backed by citations or data. AI agents play a key role here, ensuring quick and consistent evaluation. But watch out, not everything is perfect — sometimes a manual review is still necessary for complex cases.
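As a rough illustration, the evidence criterion is the easiest of the three to check mechanically. The sketch below is an assumption on my part, not the actual evaluator: `Claim`, `Evaluation`, `evaluate_report`, and the `review_threshold` parameter are hypothetical names, and accuracy and clarity are deliberately left out because they need a model or a human to judge.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Claim:
    text: str
    citations: List[str]  # sources or data references backing the claim


@dataclass
class Evaluation:
    unsupported: List[str]     # claims with no citation or data
    evidence_score: float      # share of claims that are backed
    needs_manual_review: bool  # True when the score is below the threshold


def evaluate_report(claims: List[Claim], review_threshold: float = 1.0) -> Evaluation:
    """Check the evidence criterion: every claim needs a citation or data."""
    unsupported = [claim.text for claim in claims if not claim.citations]
    total = len(claims)
    # Assumption: an empty report scores 0.0 and is sent to manual review.
    score = (total - len(unsupported)) / total if total else 0.0
    return Evaluation(unsupported, score, score < review_threshold)


report = [
    Claim("Revenue grew in Q3", ["finance_export.csv"]),
    Claim("Churn fell after the pricing change", ["churn_dashboard"]),
    Claim("Support tickets dropped", ["helpdesk_stats"]),
    Claim("Customers prefer the new onboarding", []),
]
result = evaluate_report(report)
print(result.unsupported)
print(result.evidence_score, result.needs_manual_review)
```

With the default threshold of 1.0, a single unsupported claim is enough to flag the report for manual review, which matches the rule that every claim must be backed.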

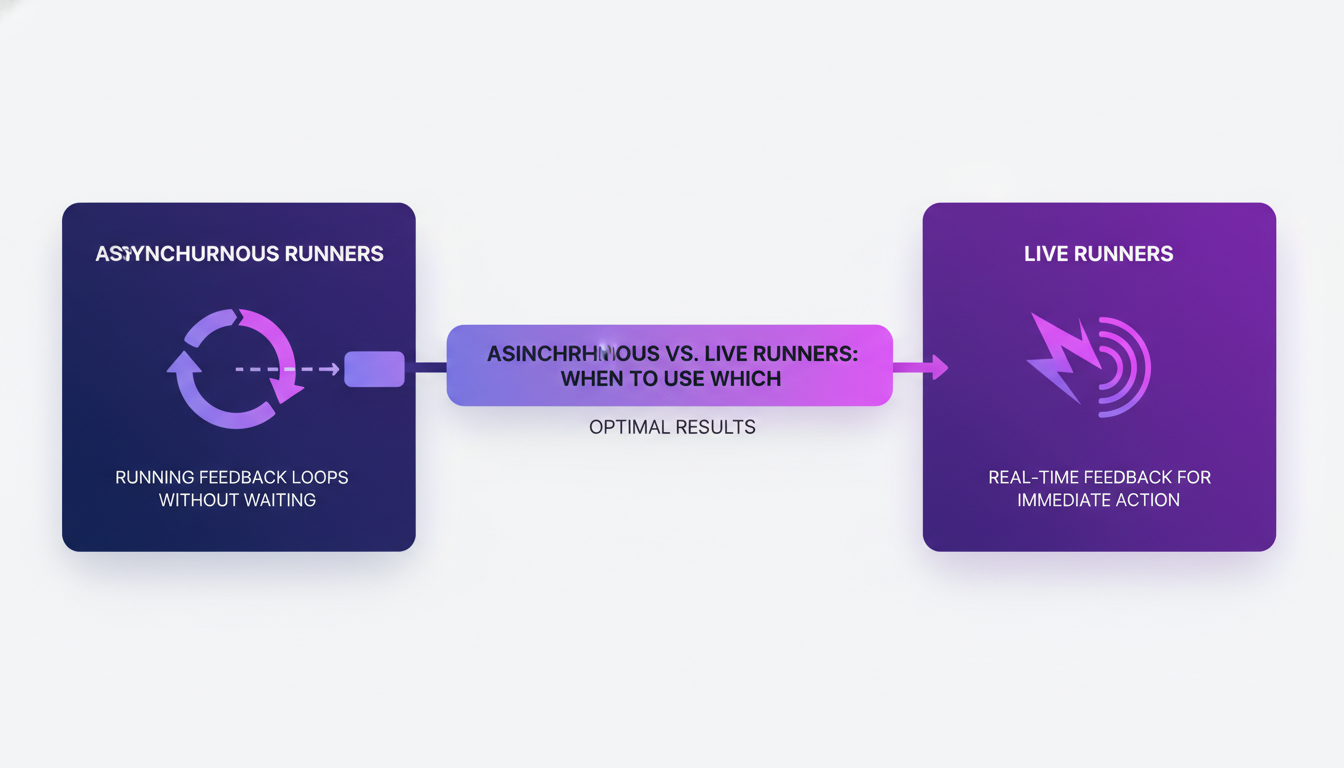

Using Asynchronous and Live Runners

Asynchronous runners let feedback loops run in the background without blocking other work. It's like juggling multiple balls in the air and catching them at different times. Live runners, by contrast, provide real-time feedback for immediate action. I use both, depending on the need.

When should you choose one over the other? For critical tasks requiring an immediate reaction, live runners are invaluable. For ongoing processes, asynchronous runners offer more efficient resource management, which saves both time and cost.

- Cost savings through efficient resource management

- Reduced wait time for feedback

- Strategic choice based on the need for reactivity
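The difference between the two modes can be sketched with Python's standard `asyncio` module. This is an illustrative sketch under my own assumptions: `request_feedback`, `live_runner`, and `async_runner` are hypothetical names, and the `asyncio.sleep` call stands in for a real feedback call to a model, reviewer, or service.

```python
import asyncio
from typing import List


async def request_feedback(task: str, delay: float) -> str:
    # Stand-in for a real feedback call; the delay simulates latency.
    await asyncio.sleep(delay)
    return f"feedback for {task}"


async def live_runner(task: str) -> str:
    """Wait for feedback on one task so it can be acted on immediately."""
    return await request_feedback(task, delay=0.01)


async def async_runner(tasks: List[str]) -> List[str]:
    """Start all feedback requests at once and collect them as they finish."""
    pending = [
        asyncio.create_task(request_feedback(task, delay=0.01 * (len(tasks) - i)))
        for i, task in enumerate(tasks)
    ]
    results = []
    for finished in asyncio.as_completed(pending):
        # Results arrive in completion order, not submission order.
        results.append(await finished)
    return results


async def main():
    live = await live_runner("critical-fix")
    batch = await async_runner(["report-a", "report-b", "report-c"])
    return live, batch


live, batch = asyncio.run(main())
print(live)
print(batch)
```

The live path blocks on a single request, which suits tasks where the next action depends on the answer. The asynchronous path keeps several requests in flight and handles each one whenever it lands, which is where the resource savings come from.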

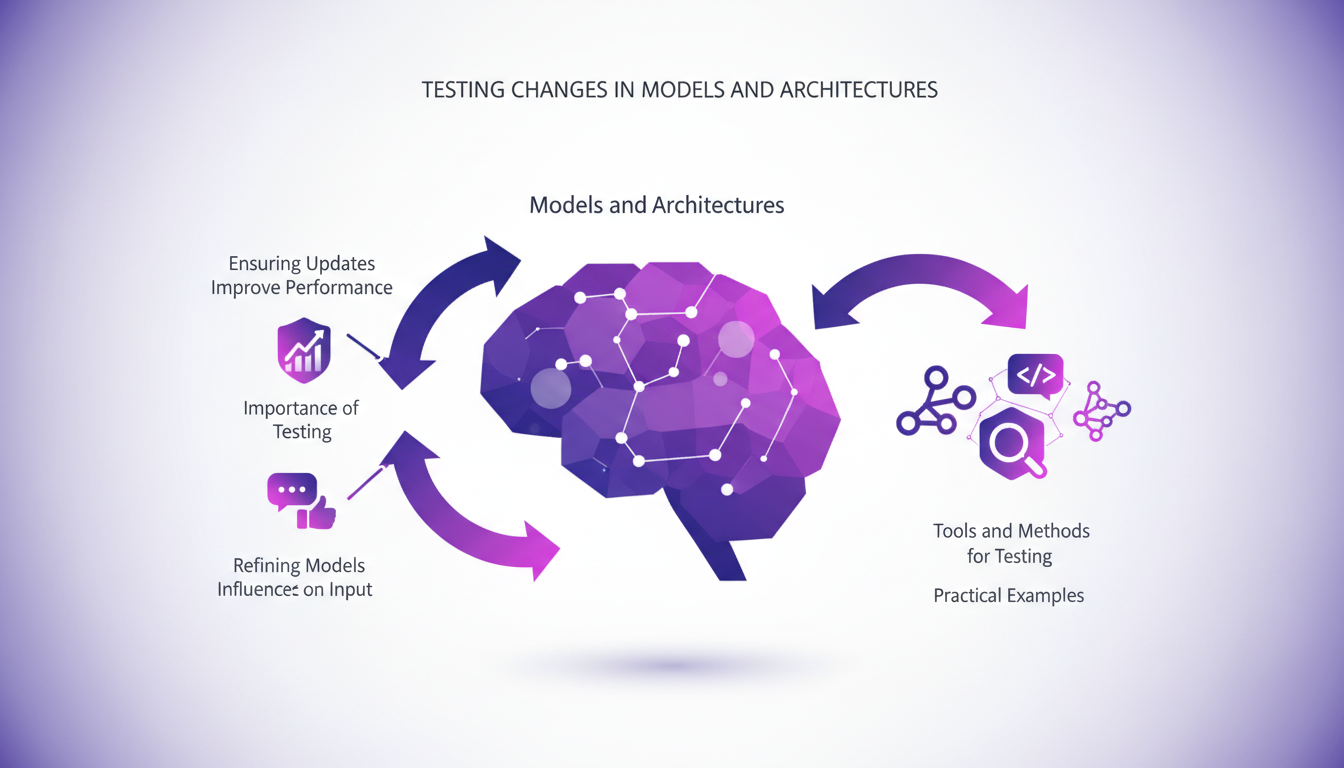

Testing Changes in Models and Architectures

Testing is essential to verify whether updates truly improve performance. Feedback plays a key role here, helping refine models based on the input received. I employ various tools and methods (like benchmarks) to ensure that changes bring the expected improvements.

But watch out, over-reliance on automated feedback can be a trap. I've learned the hard way that relying solely on automated feedback can lead to errors. Sometimes, a human touch is necessary for final evaluation.

- Importance of testing for performance improvement

- Use of feedback to refine models

- Risks of over-reliance on automation
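One way to combine benchmarks with a human check is to compare a candidate against a baseline and only decide automatically when the gap is clear. The sketch below is hypothetical: the `accuracy` and `compare` functions, the exact-match scoring, the `min_gain` margin, and the toy lookup-table "models" are all assumptions for illustration, not the tooling described above.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]
Benchmark = List[Tuple[str, str]]  # (prompt, expected answer)


def accuracy(model: Model, benchmark: Benchmark) -> float:
    """Share of benchmark prompts the model answers exactly right."""
    correct = sum(1 for prompt, expected in benchmark if model(prompt) == expected)
    return correct / len(benchmark)


def compare(baseline: Model, candidate: Model, benchmark: Benchmark,
            min_gain: float = 0.05) -> Dict[str, object]:
    """Accept or reject clear cases; route ambiguous ones to a human."""
    base = accuracy(baseline, benchmark)
    cand = accuracy(candidate, benchmark)
    gain = cand - base
    if gain >= min_gain:
        verdict = "accept"
    elif gain <= -min_gain:
        verdict = "reject"
    else:
        # Assumption: small differences are not trusted to automation alone.
        verdict = "human_review"
    return {"baseline": base, "candidate": cand, "verdict": verdict}


benchmark = [("2+2", "4"), ("3+3", "6"), ("5+5", "10"), ("7+7", "14")]

# Toy models: lookup tables standing in for real model calls.
baseline_answers = {"2+2": "4", "3+3": "6"}
candidate_answers = {"2+2": "4", "3+3": "6", "5+5": "10"}


def baseline(prompt: str) -> str:
    return baseline_answers.get(prompt, "")


def candidate(prompt: str) -> str:
    return candidate_answers.get(prompt, "")


improved = compare(baseline, candidate, benchmark)
unchanged = compare(baseline, baseline, benchmark)
print(improved)
print(unchanged)
```

The middle band is the point of the design: when the benchmark cannot clearly separate two versions, the decision goes to a person instead of being settled by a noisy automated score.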

In the end, mastering these tools and understanding their limits has allowed me to optimize my processes while staying vigilant about result quality.

Integrating AI agents into feedback processes is like putting a turbocharger on long-running tasks. First, it simplifies the management of tasks that drag on. Then, it boosts efficiency by cutting down on errors we'd otherwise make manually. Orchestrating sub-agents and runners is a real game changer for feedback management, but watch out: it can get tricky if not handled right.

- Long-running tasks are no longer a nightmare thanks to AI.

- Sub-agents smooth out the feedback process.

- Evaluation criteria, like citations or data, are key for solid reports.

I see a future where these agents really take the reins to optimize our workflows. It's worth checking out the original video to see how this plays out in practice. Ready to optimize your workflows with AI? Start implementing these strategies today and see the difference for yourself.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

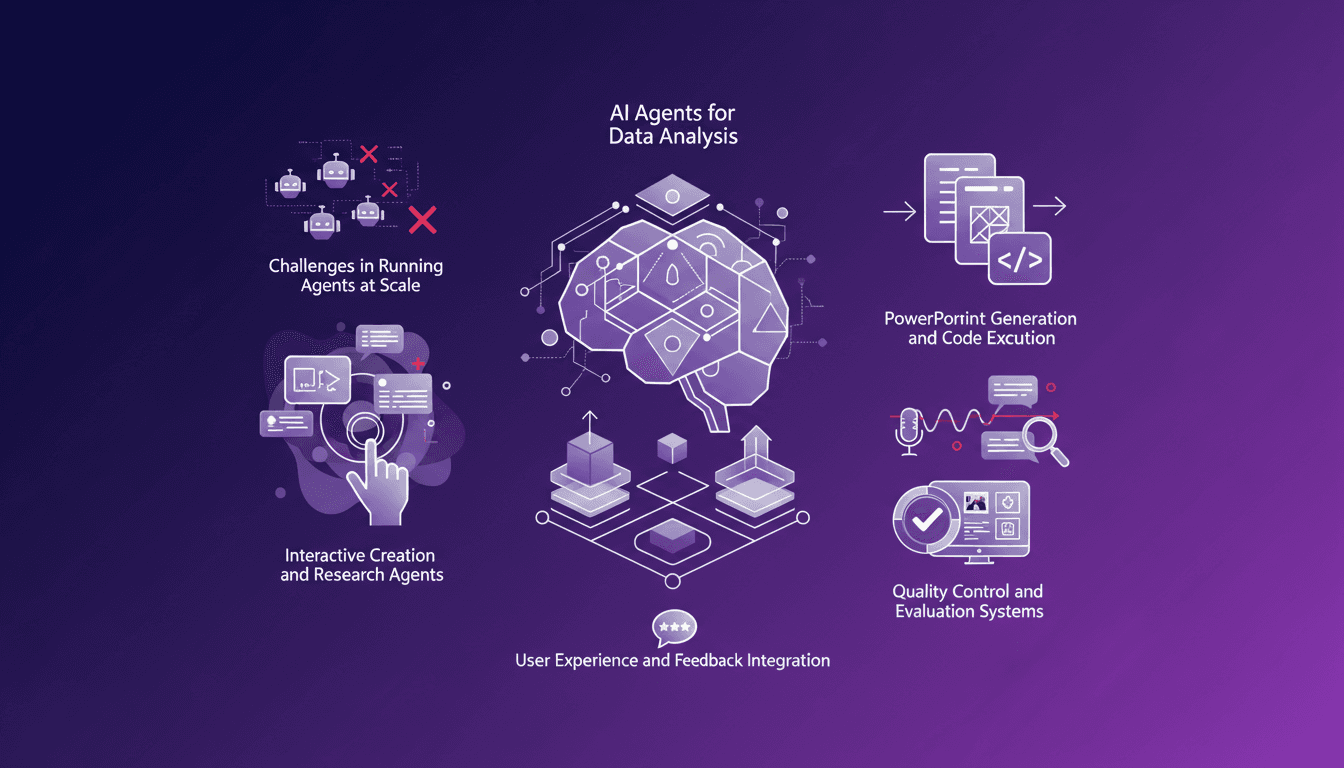

AI Agents for Analysis: Challenges and Solutions

When I say I've spent hours in the trenches orchestrating AI agents for data analysis, I mean it. Generic agents look great in demos, but in real life, you have to juggle robust architectures, integrate user feedback, and more. Take the challenge of spawning 500 agents for a specific tool, for instance—it's a puzzle. Plus, a single analysis run can easily take 30 minutes, and trust me, those minutes add up fast. I'm sharing my solutions, my mistakes, and what truly works.

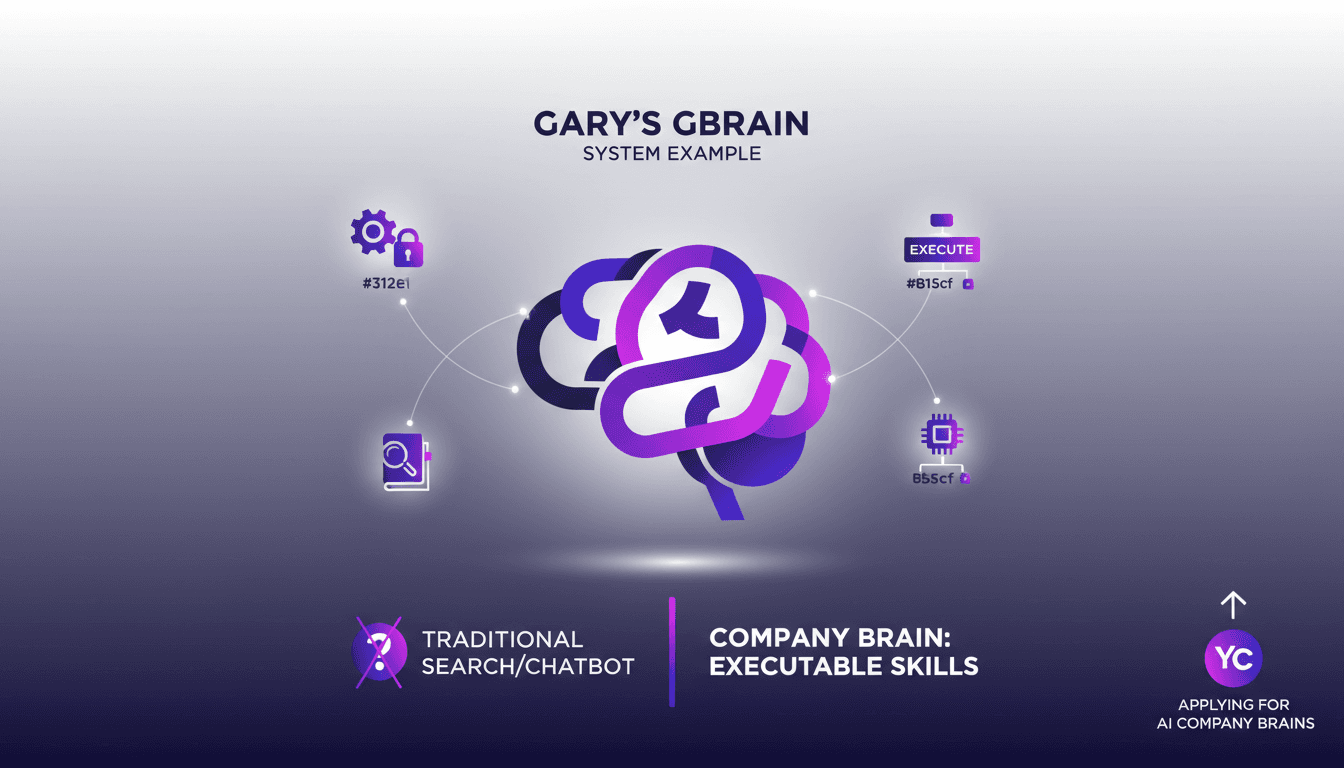

AI Automation: Challenges and Practical Solutions

I vividly remember my first attempt at implementing AI automation in my company—what a mess! But once I grasped the importance of domain knowledge and the 'company brain' concept, things started to make sense. In this article, I share how I tackled these challenges using Gary's GBrain as an example. Too often, companies hit a wall with AI because they overlook the real key: domain knowledge. I'll walk you through how I built a company brain and why every business should have one today.

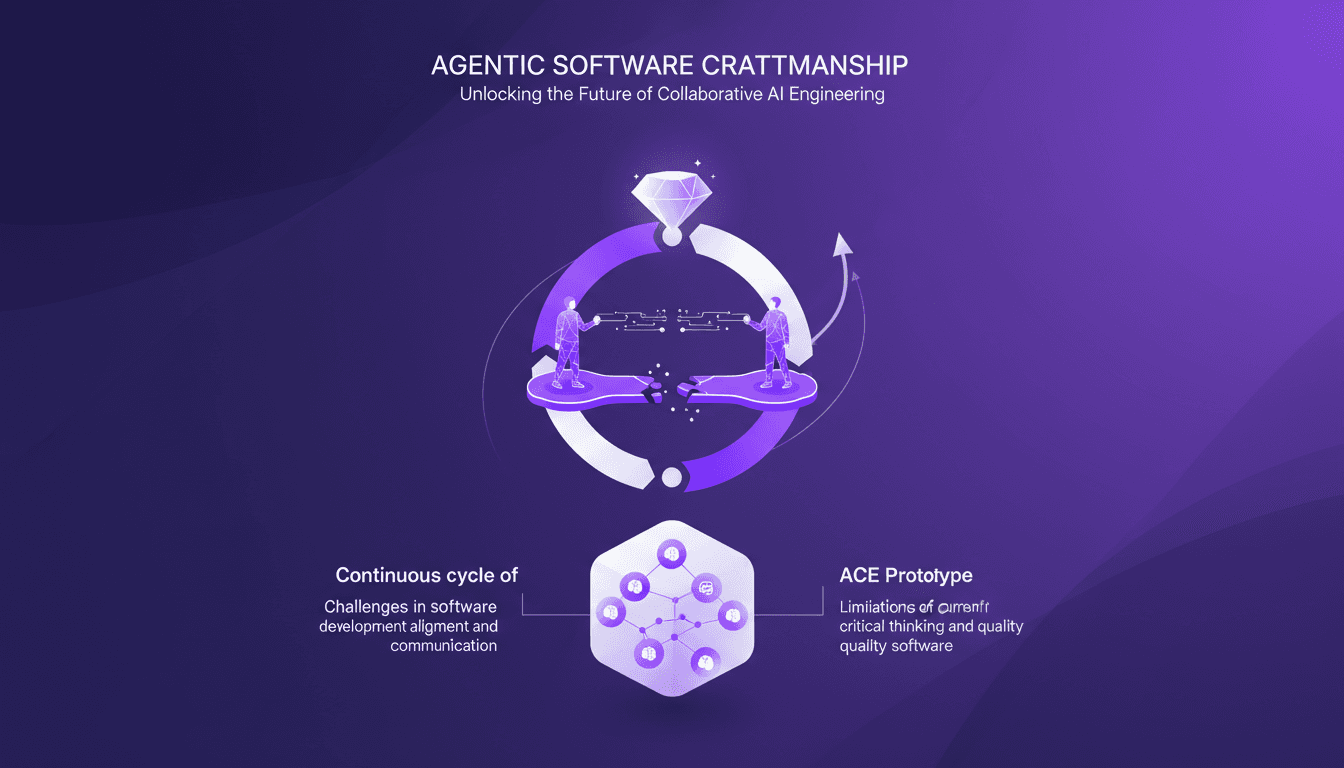

Collaborative AI Engineering: Challenges and Solutions

I dove into the world of collaborative AI engineering with Maggie Appleton's insights, and it was a real game changer. Imagine orchestrating a team of two dozen agents to streamline your development process—sounds ambitious, right? But here's how it plays out in the real world. We often talk about alignment and communication as major hurdles. Current coordination tools aren't always up to the task, especially when managing a continuous cycle of planning and building. The introduction of the ACE prototype shifts the game with real-time collaboration between developers and coding agents. Yet, the real challenge lies in the importance of context and decision-making to reclaim time for critical thinking and quality software. As we move toward the future of agentic development, software craftsmanship remains essential. It's not just about technology, but about redefining our approach to development.

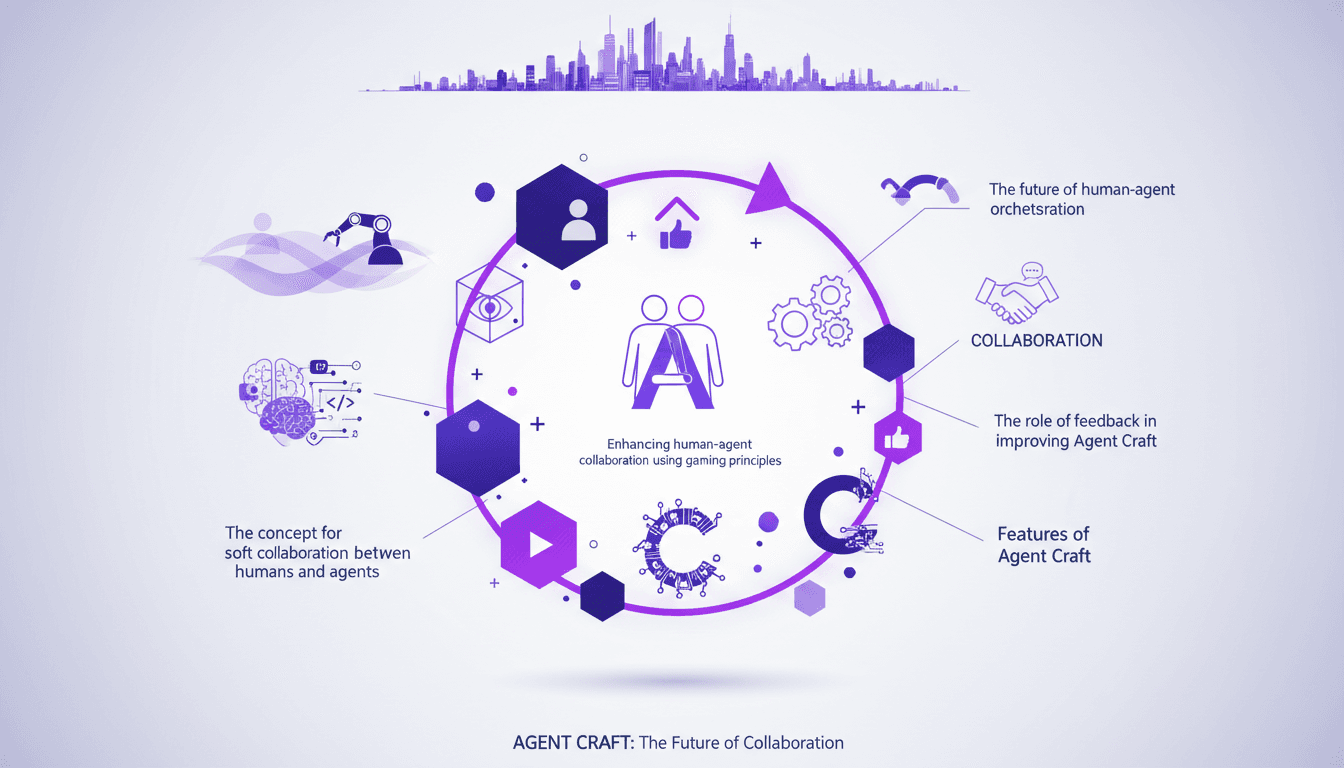

AgentCraft: Scaling Agent Orchestration Efficiently

I dove into AgentCraft headfirst, driven by the need to orchestrate our agents more efficiently. It's like putting the 'orc' in orchestration. Right off the bat, the scale was both daunting and exhilarating. AgentCraft employs gaming principles to enhance collaboration between humans and AI agents. In this article, I share my journey implementing AgentCraft, the challenges faced, and the solutions I found. We dive into visibility, automation, collaboration, and the crucial role of feedback. Trust me, I got burned a few times before nailing the right approach. If you're serious about mastering human-agent orchestration, keep reading.

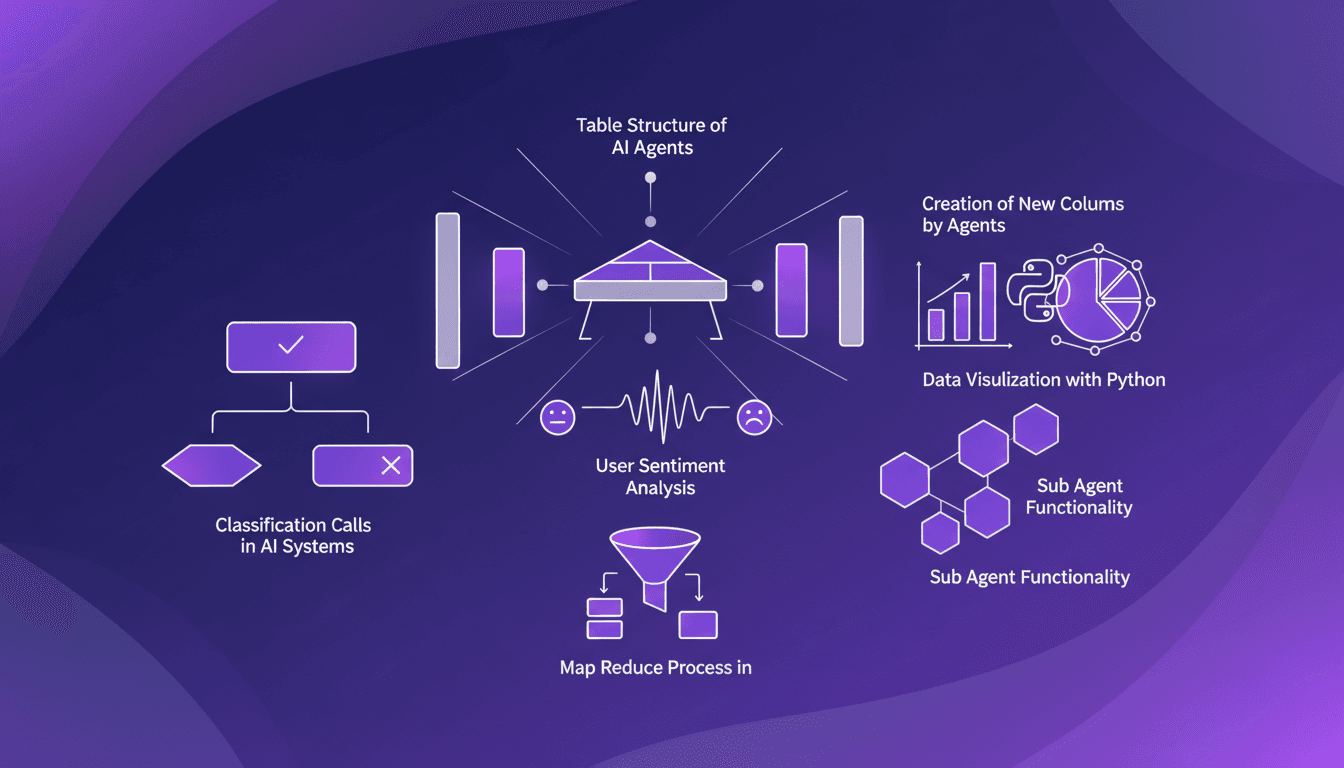

AI Table Structure: Building Efficiently

I've been in the AI trenches, building agents that do more than just process data—they create their own structures. Let's talk about how these AI agents build their own data tables and why it's a game changer. In the AI world, creating dynamic data tables is crucial. It's not just about storing data; it's about making it actionable. How do I do it? I connect my agents to Map Reduce processes, create new columns for user sentiment analysis, and use Python for data visualization. But watch out, if you forget to properly structure your classification calls, you'll end up with a real mess.