GPT-5.5 Performance Boosts: Key Insights

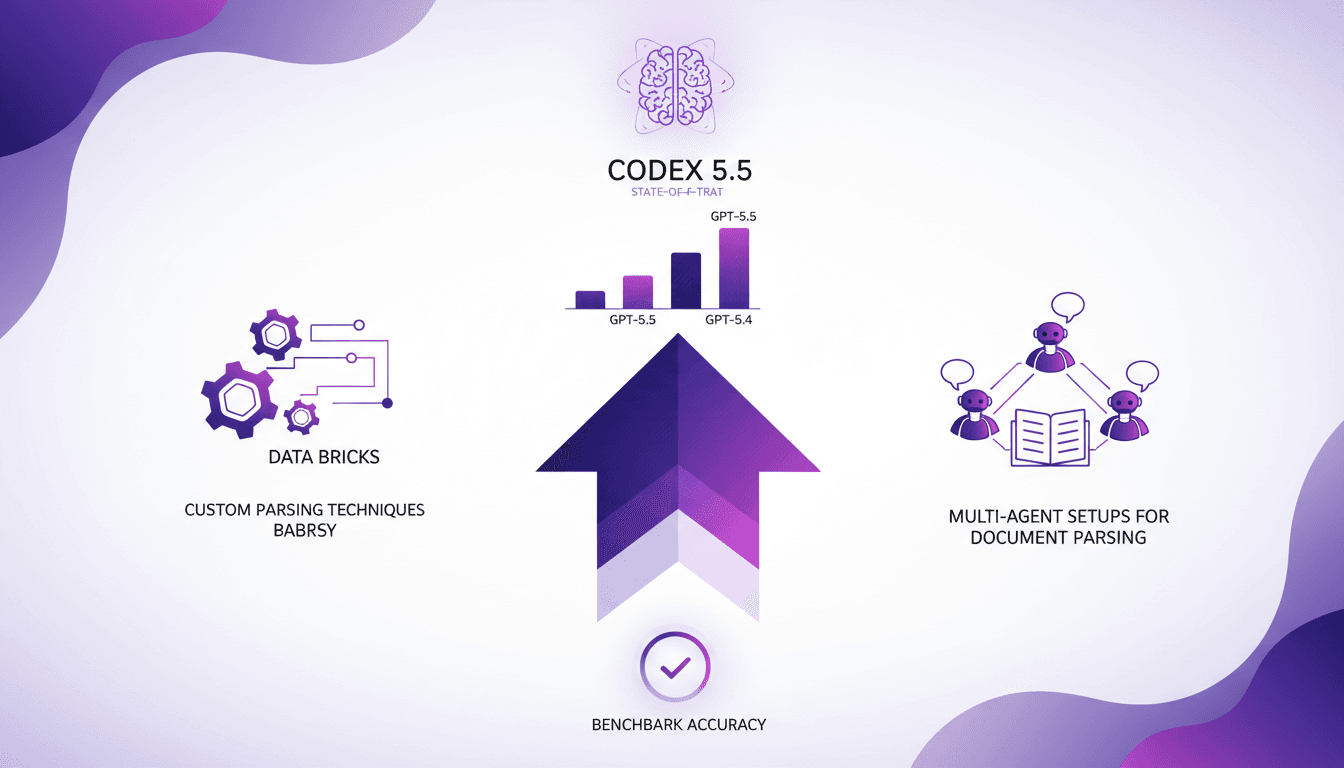

I was knee-deep in parsing challenges when GPT-5.5 came along, and let me tell you, it's a game changer. But it's not all roses. In a complex environment like Databricks, you need a deliberate setup to get real value from it. With a 46% reduction in errors compared to GPT-5.4 and 50% accuracy on benchmarks in the agent harness setting, GPT-5.5 is setting new standards. But here's where it gets tricky: you can't just press a button and expect miracles. I'll show you how I orchestrated custom parsing techniques and multi-agent setups while avoiding common pitfalls. With Codex 5.5, we're dealing with serious power, but you need to tame it to really benefit. Ready to dive into the technical details?

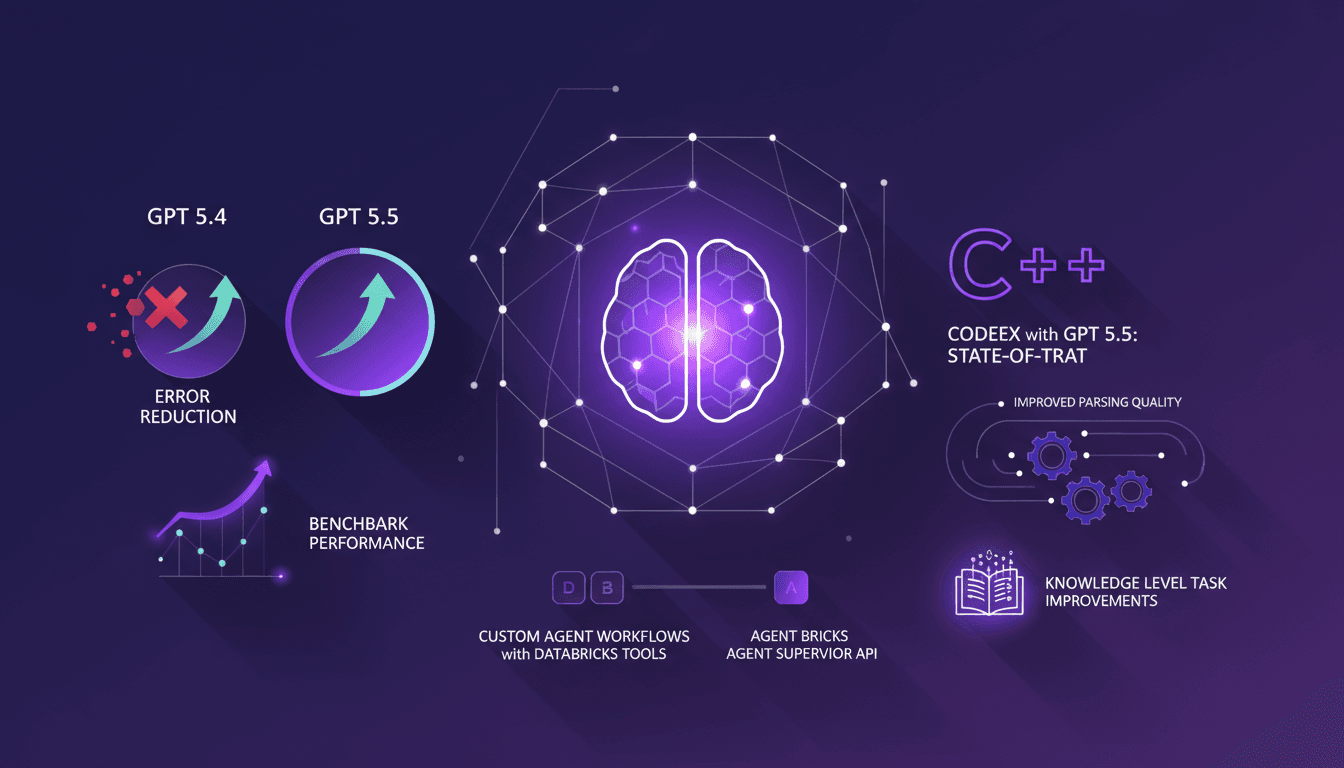

Harnessing GPT-5.5: Performance and Accuracy

Integrating GPT-5.5 into my workflow was like switching from a bicycle to a sports car. We're talking about a 46% reduction in errors compared to GPT-5.4, which is a game changer. But hold your horses, because more power means higher compute costs. Reaching 50% accuracy on benchmarks is no small feat, especially since GPT-5.5 is the only model to break that barrier in the agent harness setting.

In practice, this translates to significant time savings in daily tasks: less manual correction, more trust in the generated outputs. I've literally saved hours each week. However, keep an eye on the cost of these performance gains. Every compute cycle comes at a price, so the upgrade needs a careful trade-off analysis.
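To make the 46% figure concrete, here is a minimal sketch of what a relative error reduction does to an error rate. The baseline of 20 failed tasks out of 100 is a hypothetical number chosen for illustration, not a measurement from my projects, and the function name is my own.

```python
def error_rate_after_reduction(baseline_error_rate: float, relative_reduction: float) -> float:
    """Apply a relative reduction (e.g. 0.46 for 46%) to a baseline error rate."""
    if not 0.0 <= baseline_error_rate <= 1.0:
        raise ValueError("baseline_error_rate must be between 0 and 1")
    if not 0.0 <= relative_reduction <= 1.0:
        raise ValueError("relative_reduction must be between 0 and 1")
    return baseline_error_rate * (1.0 - relative_reduction)


# Hypothetical baseline: the older model fails 20 tasks out of 100.
baseline = 0.20
new_rate = error_rate_after_reduction(baseline, 0.46)

# The reduction is relative: 46% fewer errors, not 46 percentage points fewer.
print(f"baseline error rate: {baseline:.3f}")
print(f"error rate after a 46% reduction: {new_rate:.3f}")
```

The point of the sketch is that the gain scales with how error-prone your current setup is: the lower your baseline error rate, the fewer absolute errors a 46% reduction removes.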

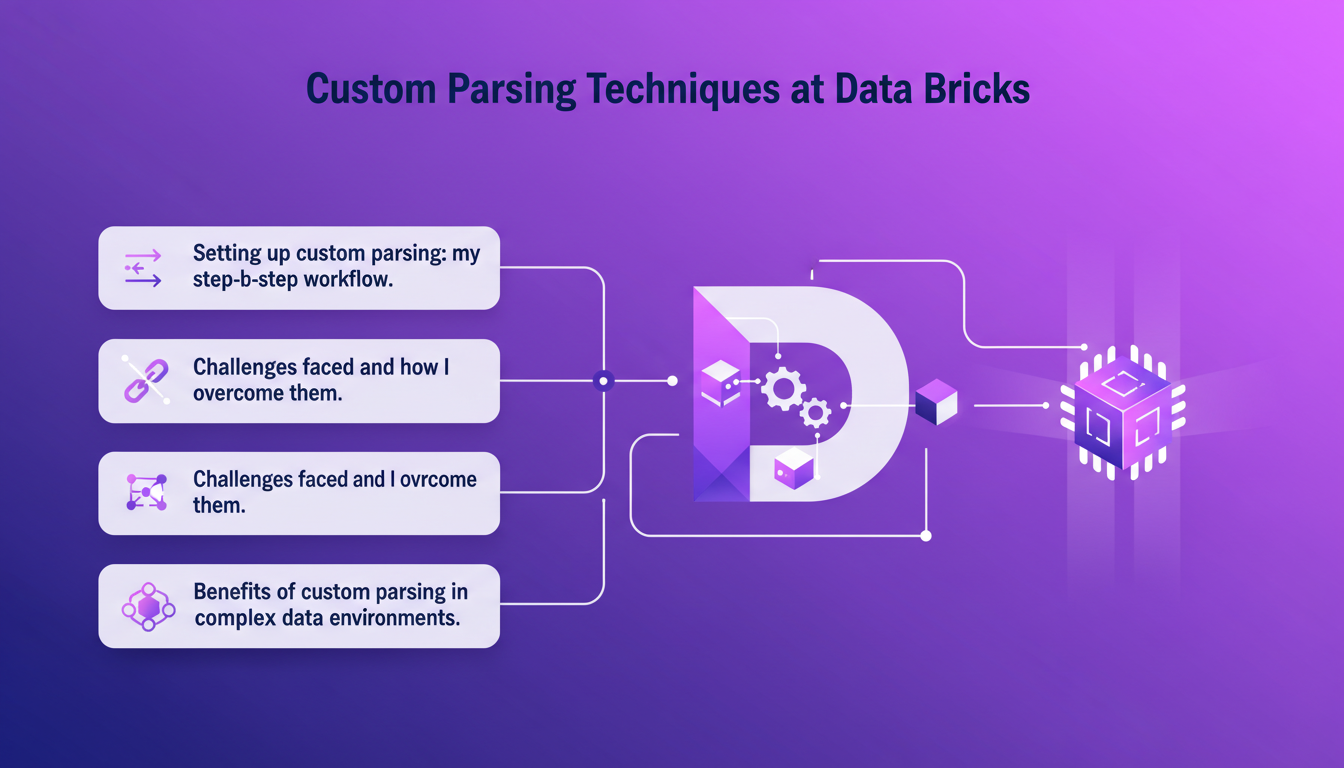

Custom Parsing Techniques at Databricks

At Databricks, we can't afford messy data. Setting up custom parsing for really disorganized documents was a must. The first step was to identify recurring patterns in the data. Then I developed scripts to extract those patterns. It's like solving a puzzle where the pieces are always a bit different.

The challenges? There are always exceptions that break everything. But after a few iterations, I found solutions, such as more flexible regex and advanced text processing libraries. The benefits? Much more reliable data extraction in complex environments. But don't get too excited: sometimes custom parsing isn't worth it if the documents are too varied. In such cases, a standard approach is better.

- Set up custom parsing

- Develop scripts for extraction

- Use flexible regex

- Monitor parsing reliability
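The steps above can be sketched roughly as follows. This is an illustrative example, not the scripts I actually run: the invoice-style identifier, the two patterns, and the names `INVOICE_PATTERNS`, `ParseStats`, and `extract_invoice_id` are all assumptions made up for the sketch. It tries a strict-context pattern first, falls back to a looser one, and tracks the match rate so parsing reliability can be monitored.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical patterns, ordered from most to least specific.
INVOICE_PATTERNS = [
    # "Invoice No: INV-00123", "invoice #  inv00456", ...
    re.compile(
        r"invoice\s*(?:no\.?|number|#)?\s*[:\-]?\s*([A-Z]{2,4}-?\d{3,8})",
        re.IGNORECASE,
    ),
    # Fallback: a bare identifier anywhere in the text.
    re.compile(r"\b(INV-?\d{3,8})\b", re.IGNORECASE),
]


@dataclass
class ParseStats:
    """Counts attempts and successes so reliability can be monitored."""
    total: int = 0
    matched: int = 0

    @property
    def match_rate(self) -> float:
        return self.matched / self.total if self.total else 0.0


def extract_invoice_id(text: str, stats: ParseStats) -> Optional[str]:
    """Return the first identifier found by any pattern, or None."""
    stats.total += 1
    for pattern in INVOICE_PATTERNS:
        match = pattern.search(text)
        if match:
            stats.matched += 1
            return match.group(1).upper()
    return None


stats = ParseStats()
samples = [
    "Invoice No: INV-00123 issued 2024",
    "invoice #  inv00456",
    "Ref INV-789 attached",
    "no identifier here",
]
results = [extract_invoice_id(s, stats) for s in samples]
print(results)
print(f"match rate: {stats.match_rate:.2f}")
```

If the match rate drops on a new batch of documents, that is the signal that the documents have become too varied for the current patterns and that a standard approach may be the better option.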

Multi-Agent Setups for Document Parsing

Now onto multi-agent setups. Orchestrating a multi-agent system requires a solid understanding of how each agent contributes to the whole. I started by defining the role of each agent, then integrated these agents into a cohesive system. It's like conducting an orchestra – each instrument (or agent) needs to be in perfect harmony with the others.

Multi-agent systems offer significant advantages in terms of speed and efficiency. However, be wary of complexity! Too many agents can slow down the system rather than speeding it up. I found the key is finding the right balance – enough agents to be effective, but not so many that you create an IT nightmare.
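Here is a minimal sketch of the "one role per agent" idea, under heavy assumptions: the three roles (cleaner, extractor, validator), the `key: value; key: value` document layout, and every name in the code are hypothetical, and each agent is a plain Python function standing in for what would be a model call in a real setup.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Document:
    text: str
    fields: dict[str, str] = field(default_factory=dict)
    issues: list[str] = field(default_factory=list)


# An agent takes a document, does one job, and passes it on.
Agent = Callable[[Document], Document]

# Hypothetical required fields for this illustration.
REQUIRED_FIELDS = ("name", "date")


def cleaner_agent(doc: Document) -> Document:
    """Role: normalize whitespace."""
    doc.text = " ".join(doc.text.split())
    return doc


def extractor_agent(doc: Document) -> Document:
    """Role: pull 'key: value' pairs out of ';'-separated text."""
    for part in doc.text.split(";"):
        if ":" in part:
            key, value = part.split(":", 1)
            doc.fields[key.strip().lower()] = value.strip()
    return doc


def validator_agent(doc: Document) -> Document:
    """Role: flag missing required fields instead of failing silently."""
    for name in REQUIRED_FIELDS:
        if name not in doc.fields:
            doc.issues.append(f"missing field: {name}")
    return doc


def run_pipeline(doc: Document, agents: list[Agent]) -> Document:
    """Orchestrator: run each agent in order on the same document."""
    for agent in agents:
        doc = agent(doc)
    return doc


pipeline = [cleaner_agent, extractor_agent, validator_agent]
complete = run_pipeline(Document("name:  Acme Corp ;\n date: 2024-05-01"), pipeline)
partial = run_pipeline(Document("name: Beta"), pipeline)
print(complete.fields, complete.issues)
print(partial.fields, partial.issues)
```

Keeping the pipeline as an explicit list makes the balance question visible: adding an agent is one more entry, and so is the latency and failure mode that comes with it.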

Codex 5.5: The State-of-the-Art Model

Why does Codex 5.5 stand out? Simple: it's currently the state of the art in the AI landscape. Integrating it into my systems meant rethinking parts of my infrastructure. It's not just a plug-and-play deal – you need to adapt data flows and often reconfigure APIs to get the most out of the model.

Performance metrics are crucial here. Codex 5.5 isn't just about benchmark scores – it genuinely enhances how tasks are executed. But don't be fooled by the hype. Adopting Codex 5.5 involves trade-offs, particularly in terms of cost and integration effort.

If you're looking to implement Codex 5.5, ensure your team is ready to tackle the technical challenges.

Practical Takeaways and Future Directions

What have I learned from implementing GPT-5.5 and Codex 5.5? The key lessons revolve around efficiency and orchestration. Don't underestimate the need to fully understand your requirements before diving into these technologies. To future-proof, I make sure my setups are scalable and ready for upcoming advancements.

Finally, a cost-benefit analysis is essential. Is the upgrade worth it? Yes, if it's well-orchestrated and aligned with your strategic goals. In the end, efficiency and orchestration are at the heart of everything I do in AI.

- Anticipate future needs

- Analyze costs and benefits

- Align technologies with strategic goals
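For the cost-benefit point, a back-of-the-envelope calculation is often enough to start. The sketch below uses hypothetical numbers (hours saved, hourly rate, extra compute cost) purely for illustration. Replace them with your own figures.

```python
def net_weekly_benefit(hours_saved: float, hourly_rate: float, extra_compute_cost: float) -> float:
    """Value of the time saved per week minus the added compute cost per week."""
    return hours_saved * hourly_rate - extra_compute_cost


def break_even_hours(hourly_rate: float, extra_compute_cost: float) -> float:
    """Hours that must be saved per week for the upgrade to pay for itself."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return extra_compute_cost / hourly_rate


# Hypothetical inputs: 4 hours saved per week, 80 per hour, 200 extra compute per week.
benefit = net_weekly_benefit(hours_saved=4.0, hourly_rate=80.0, extra_compute_cost=200.0)
threshold = break_even_hours(hourly_rate=80.0, extra_compute_cost=200.0)
print(f"net weekly benefit: {benefit:.2f}")
print(f"break-even hours per week: {threshold:.2f}")
```

This deliberately ignores integration effort, which is a one-off cost. In a fuller analysis you would spread that effort over the period you expect to run the setup.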

I've been integrating GPT-5.5 and Codex 5.5 into my recent projects, and here's what I've learned:

- 46% reduction in errors: Impressive, but watch out—proper model setup is crucial to avoid unpleasant surprises.

- 50% benchmark accuracy: A real game changer for workflows, but it requires careful parameter tuning.

- Custom parsing and multi-agent setups at Databricks: Combined, they have truly transformed how I process documents.

These tools can genuinely revolutionize our workflows if used wisely. But there are trade-offs to consider, especially regarding integration complexity and resource needs.

Ready to upgrade your parsing game? I encourage you to dive into these setups yourself. And for a deeper understanding, check out the video "GPT-5.5 is SOTA for Databricks" to see these advancements in action.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Error Reduction in GPT-5.5 with Databricks

I dove into GPT-5.5 with Databricks, and let me tell you, the improvements are not just theoretical. After integrating it into my workflows, I saw a 46% error reduction compared to 5.4. The performance boost, especially with the Agent Supervisor API, is impressive. Parsing quality and task performance have clearly upped their game. Needless to say, my custom agents, with Databricks tools, are now more efficient. But watch out, it's not all perfect; you need to handle these new tools with care to avoid pitfalls. This update, I must admit, has directly impacted my projects, and I'm not stopping here.

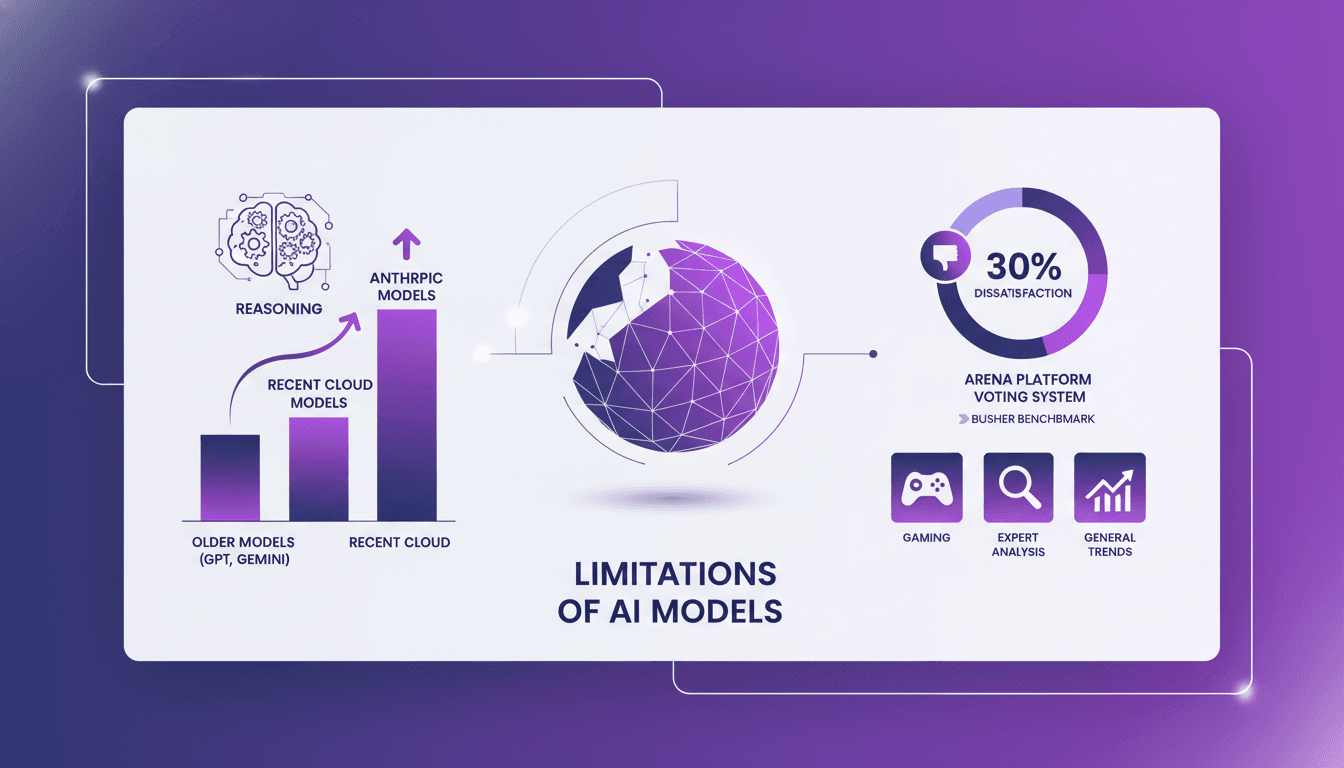

AI Model Limits: What Still Falls Short

I've been knee-deep in AI models, testing and retesting, and let me tell you, there's still a lot they can't handle. I've been burned more than once by putting too much faith in these models. From the 'Busher benchmark' to Arena's voting system, I've seen where models shine and where they stumble. Let's dissect these limitations together and understand the real performance landscape. From recent cloud models to older ones like GPT and Gemini, there are clear trends and specific fields, like gaming, where performance still falls short. Ready to cut through the hype? Let's dive in!

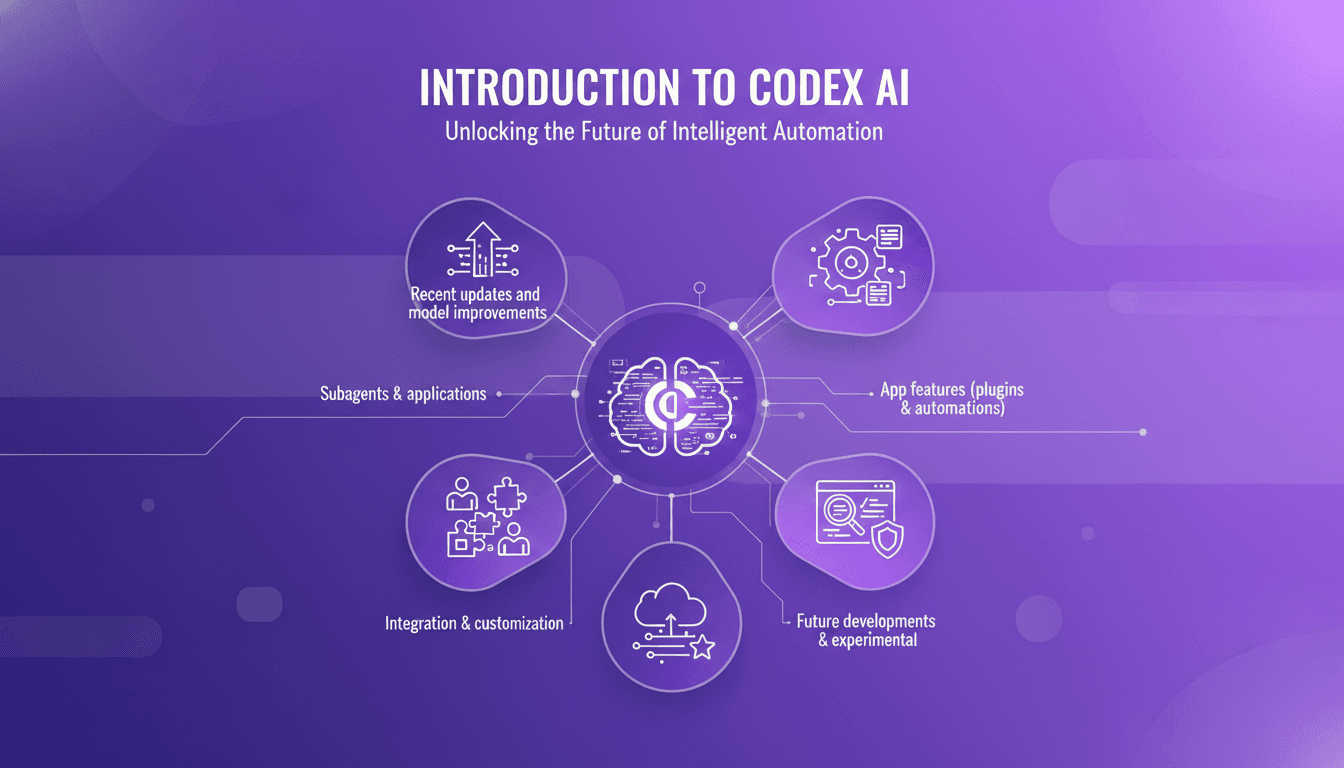

Codex: Recent Enhancements and Integrations

I remember the first time I integrated Codex into our workflow. It was like adding a turbocharger to a well-oiled machine. But watch out, like any powerful tool, understanding its capabilities and limits is key. Codex, with its recent updates, is not just a code assistant; it's a powerhouse for automation and integration. From subagents to security features, it's evolved significantly. Let me show you how to leverage Codex for maximum efficiency. With over three million weekly active users and the latest 5.4 model, the possibilities are massive. Don't get burned by context limits; let's orchestrate Codex to transform your coding environment.

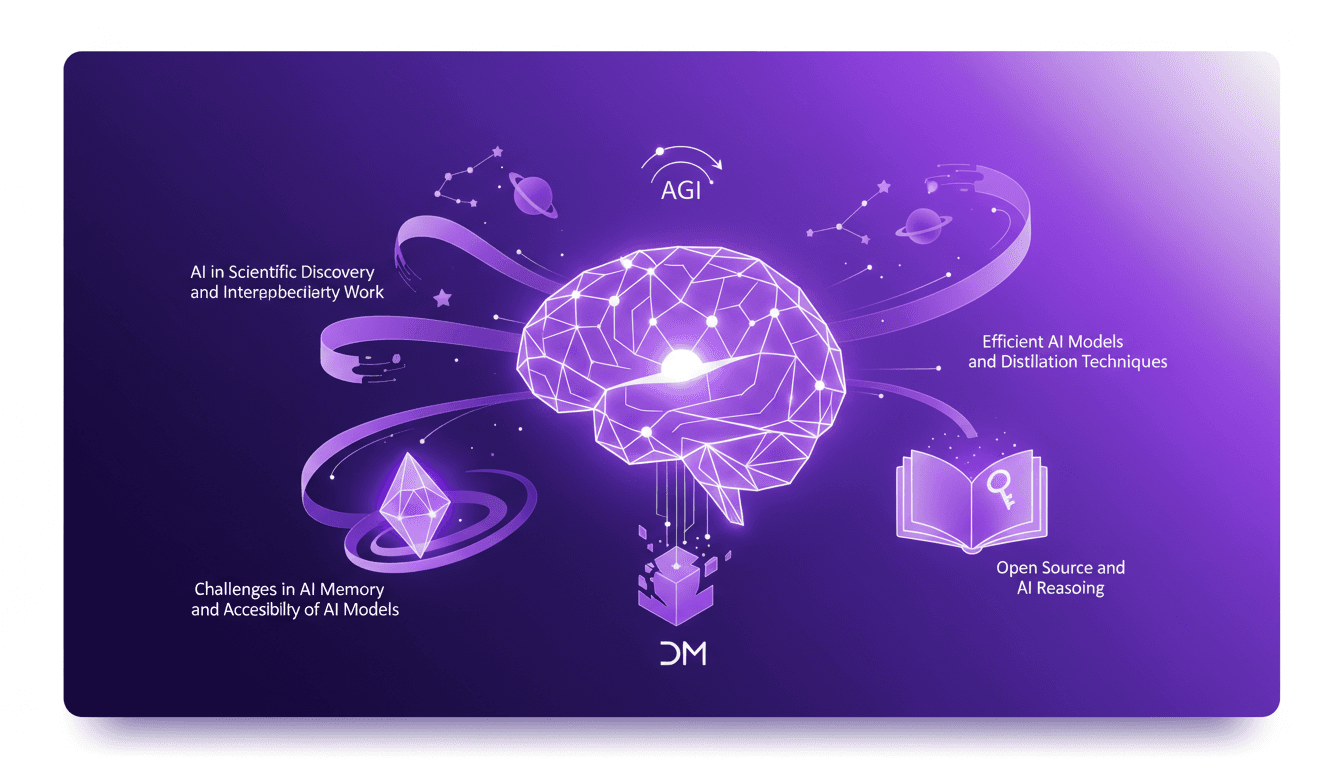

Building AGI: Techniques and Challenges

I've been in the AI trenches for over 30 years, and building the future isn't just a catchphrase—it's a daily grind. We're talking about Artificial General Intelligence (AGI), something that's not just on the horizon but already reshaping our workflows. Guided by Deep Mind's milestones, we're diving into efficient AI models and distillation techniques, alongside the interdisciplinary work pushing boundaries. Building AGI is a marathon, not a sprint. Let's get going, one model at a time.

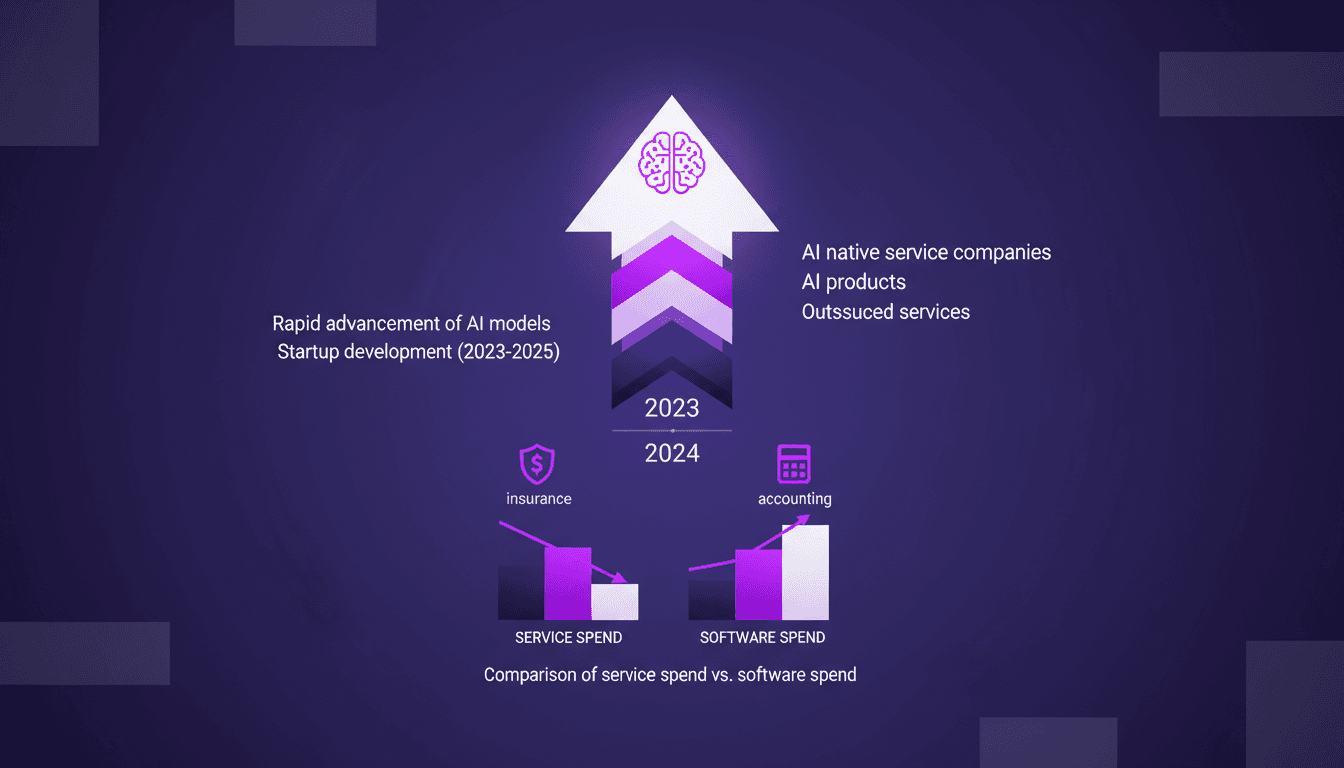

AI Native Services: Revolutionizing Industries

I've been knee-deep in AI for years, watching tools evolve into full-fledged AI native services. This isn't just a trend—it's a revolution. With AI models advancing at breakneck speed, we're witnessing a shift from traditional software tools to AI-native services. These aren't just buzzwords—real companies are emerging that leverage AI to replace entire service sectors. Industries like insurance and accounting are already feeling the impact. Let me walk you through how this unfolds and why it's a game changer. It's not just hype, it's happening.