Mastering LangChain and LangSmith for AI

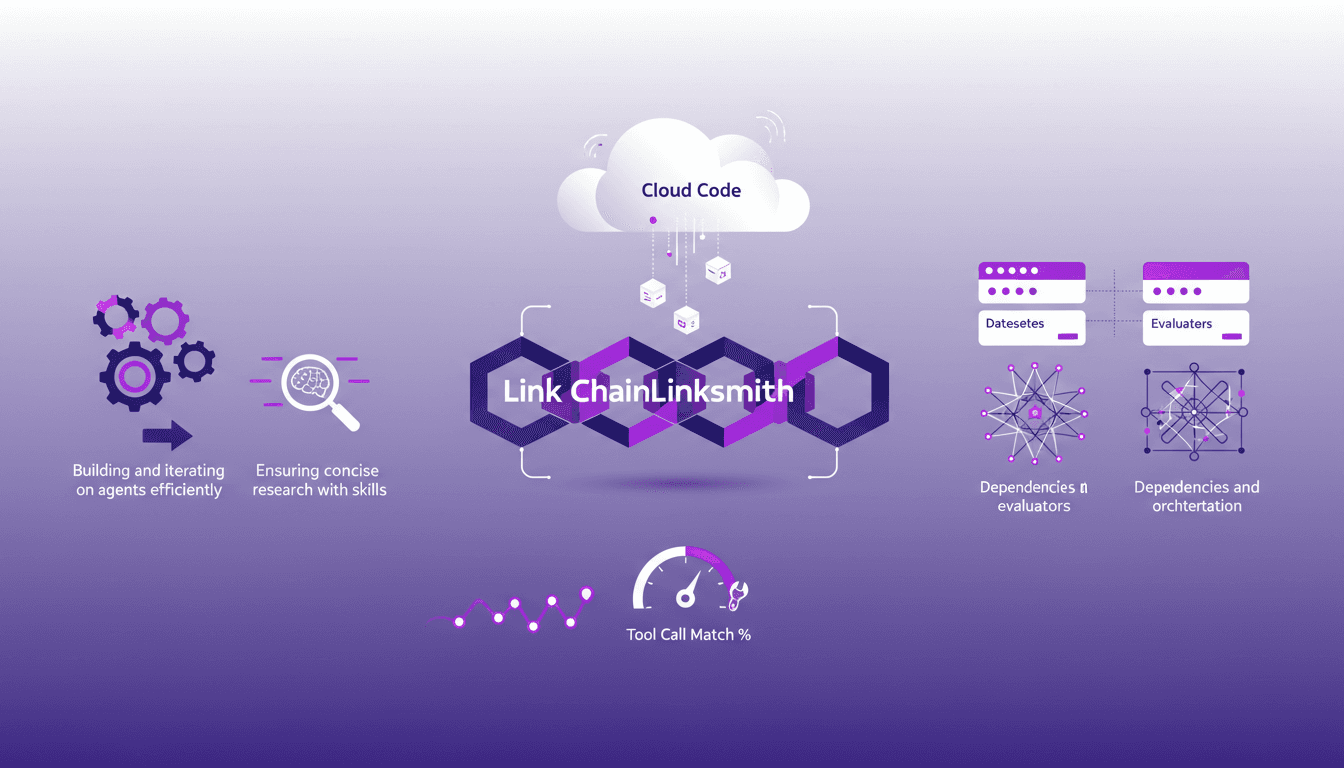

I remember the first time I tried to build an AI agent with LangChain and LangSmith. It felt like piecing together a complex puzzle, but once I got the hang of it, the efficiency gains were undeniable. I used Claude Code to create and iterate on my agents, and I'll show you how I did it. First, I created datasets (the backbone of your agent) and evaluated tool call match percentages to ensure every query hit the mark. Don't underestimate dependencies and orchestration, though: you'll need to capture and analyze traces to avoid getting burned and to get the most out of your agent. In this article, I share the mistakes I made and how I overcame them so you can improve your own agents' performance.

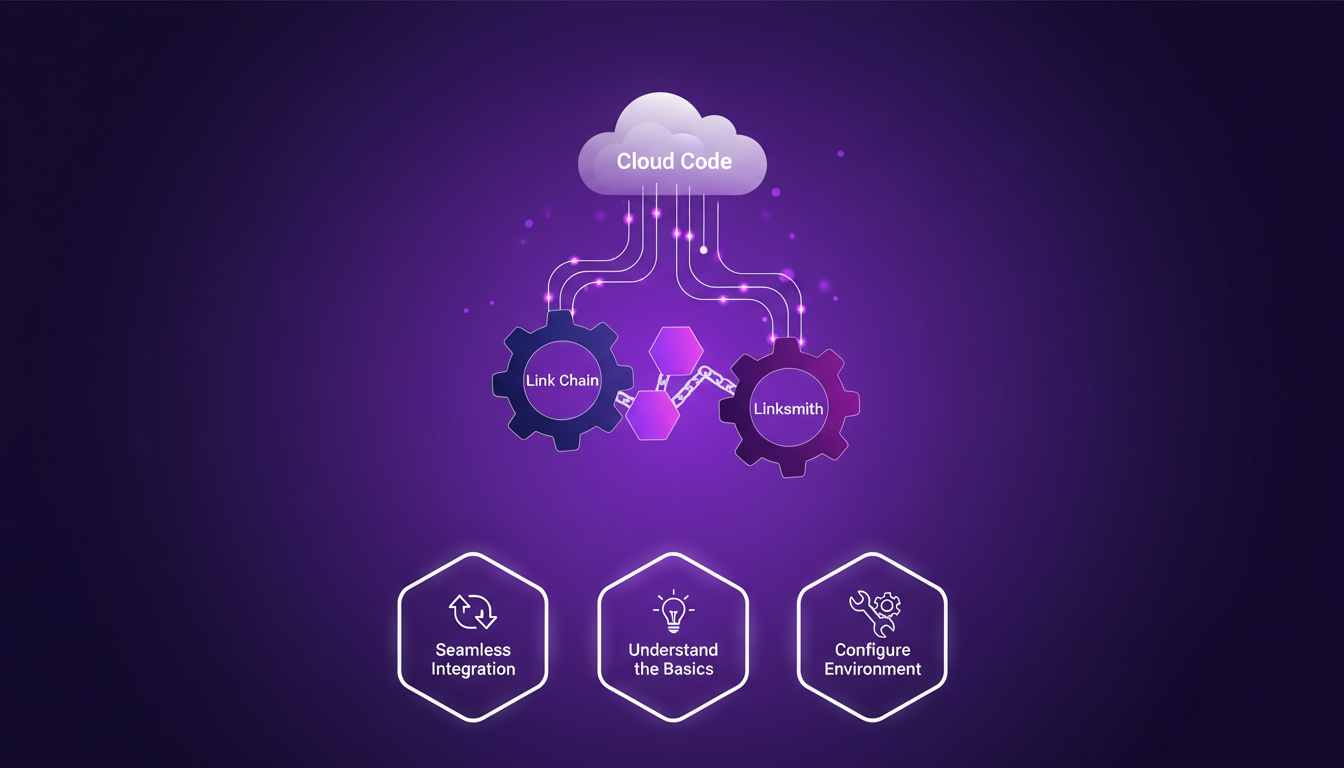

Getting Started with LangChain and LangSmith

Building intelligent agents starts with having the right tools at hand. Connecting LangChain and LangSmith with Claude Code has been a game changer for me, because the three complement each other well. First, configure your environment for these technologies, and make sure your version of Claude Code supports the integrations. I got burned once by running an outdated version that didn't.

Understanding the basics is crucial. LangChain and LangSmith act like assistants that help Claude Code better understand project dependencies and structure. If your Claude Code isn't up to date, though, you may run into compatibility issues.

- Ensure your Claude Code version supports these tools.

- Configure your environment to avoid conflicts.

- Test the integration with simple projects before moving to more complex tasks.
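
As a minimal sketch of the "configure your environment" step, the check below looks for the settings a LangSmith setup typically needs before you run a first test project. The variable names `LANGSMITH_API_KEY` and `LANGSMITH_TRACING` are an assumption that is not stated in this article, so verify them against the documentation for your SDK version. The helper `missing_settings` is a hypothetical name used only for illustration.

```python
import os

# Assumed variable names; confirm them in the LangSmith docs for your version.
REQUIRED_VARS = ("LANGSMITH_API_KEY", "LANGSMITH_TRACING")


def missing_settings(env):
    """Return the required variable names that are absent or empty in env."""
    return [name for name in REQUIRED_VARS if not env.get(name)]


if __name__ == "__main__":
    missing = missing_settings(os.environ)
    if missing:
        print("Missing settings:", ", ".join(missing))
    else:
        print("Environment looks ready for a first test project.")
```

Running a check like this before the first simple project makes configuration problems show up early, rather than in the middle of an agent run.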

Building and Iterating on AI Agents

With the tools in place, I use Claude Code to build a deep research agent. Iteration is key here. Instead of aiming for perfection on the first try, I run concise research queries, analyze the results, and adjust the agent as needed. More complex agents may require additional resources, though, which is a real trade-off for anyone looking to keep costs down.

Working on agents, I've learned that optimization comes through rapid and continuous iterations. I use two test queries, one about Chinese restaurants in New York and San Francisco, and another on AI trends in 2026. These tests help me see how my agent adapts to different types of data.

- Set clear goals for each iteration.

- Use test queries to assess performance.

- Allocate sufficient resources for complex agents.
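
The iteration loop described above can be sketched as a small harness that runs the same test queries on every pass, so results stay comparable between iterations. This is only an illustration: `run_iteration` and `stub_agent` are hypothetical names, and the stub stands in for a real agent that would call a model and tools.

```python
import time

# The two test queries mentioned in the text.
TEST_QUERIES = [
    "Chinese restaurants in New York and San Francisco",
    "AI trends in 2026",
]


def run_iteration(agent, queries):
    """Run each query through the agent and record the answer and elapsed time."""
    results = []
    for query in queries:
        start = time.perf_counter()
        answer = agent(query)
        results.append({
            "query": query,
            "answer": answer,
            "seconds": time.perf_counter() - start,
        })
    return results


def stub_agent(query):
    # Hypothetical stand-in: a real agent would call a model and tools here.
    return f"(stub answer for: {query})"


if __name__ == "__main__":
    for row in run_iteration(stub_agent, TEST_QUERIES):
        print(f"{row['seconds']:.4f}s  {row['query']}")
```

Keeping the query list fixed between iterations is the design choice that matters here: any change in the output can then be attributed to the agent rather than to the inputs.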

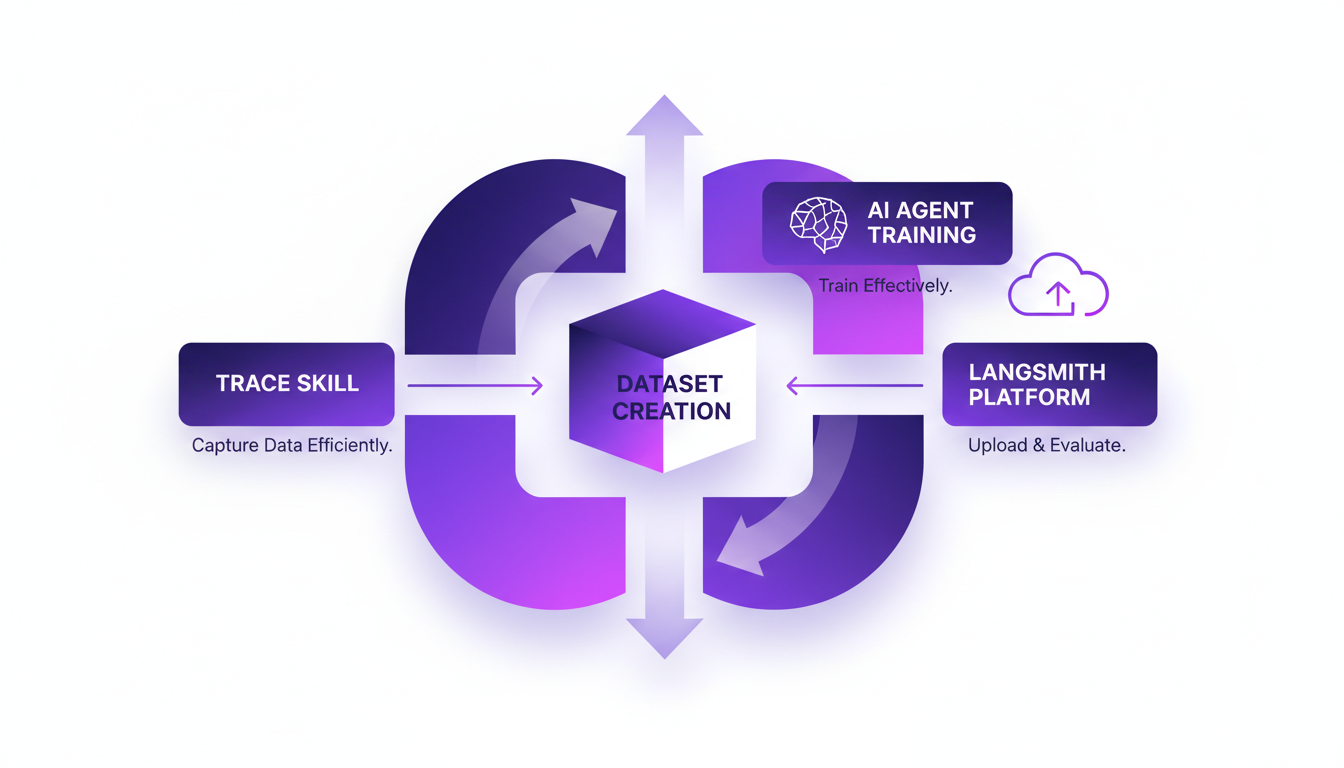

Creating and Using Datasets

To train your agents effectively, you need relevant datasets. I use the trace skill to capture data efficiently. Simple datasets usually work better here: I've learned not to overcomplicate them, because that makes analysis harder.

Once the data is collected, I upload it to LangSmith and use evaluators to refine the results. This ensures the agent is well-trained and ready for real-world testing.

- Use the trace skill to efficiently capture data.

- Keep datasets simple and relevant.

- Use evaluators to refine results.
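
To make the "keep datasets simple" advice concrete, here is a hedged sketch that turns captured traces into a small list of examples, one per distinct query. The trace layout (`query`, `tool_calls`), the tool names, and the function `traces_to_examples` are all assumptions made for illustration. The output is shaped loosely like the inputs/outputs pairs that evaluation datasets commonly use, not copied from a real export.

```python
def traces_to_examples(traces):
    """Turn captured traces into dataset examples, one per distinct query."""
    examples = {}
    for trace in traces:
        query = trace["query"].strip()
        if not query or query in examples:
            continue  # skip empty queries and repeats to keep the dataset small
        examples[query] = {
            "inputs": {"query": query},
            "outputs": {
                "expected_tools": [call["name"] for call in trace["tool_calls"]],
            },
        }
    return list(examples.values())


# Hypothetical traces; the tool names are placeholders.
SAMPLE_TRACES = [
    {"query": "AI trends in 2026",
     "tool_calls": [{"name": "web_search"}, {"name": "summarize"}]},
    {"query": "AI trends in 2026",
     "tool_calls": [{"name": "web_search"}]},
    {"query": "Chinese restaurants in New York and San Francisco",
     "tool_calls": [{"name": "web_search"}, {"name": "web_search"}]},
]

if __name__ == "__main__":
    for example in traces_to_examples(SAMPLE_TRACES):
        print(example)
```

The first trace seen for each query wins, which keeps the example short. In practice you would probably pick the trace you consider correct before uploading it as a reference.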

Evaluating Tool Call Match Percentage

Understanding the tool call match percentage is crucial for assessing your agent's performance. In my experience, aiming for a high match percentage enhances agent efficiency, even though achieving 100% isn't always necessary.

I analyze the results to refine agent operations and ensure the tools used are perfectly suited for the tasks at hand.

- Regularly analyze match percentage to improve performance.

- Compare results to identify areas for improvement.

- Don't always aim for 100%, but ensure high accuracy.
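
The article does not spell out how the match percentage is computed, so the sketch below assumes one simple definition: the share of runs whose sequence of tool names is exactly the expected sequence. Other definitions, such as partial credit per call, are equally plausible. The function name and the tool names are hypothetical.

```python
def tool_call_match_percentage(expected, actual):
    """Percentage of runs whose tool-call sequence equals the expected one."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of runs")
    if not expected:
        return 0.0
    matches = sum(1 for exp, act in zip(expected, actual) if exp == act)
    return 100.0 * matches / len(expected)


# Hypothetical runs: each inner list is the ordered tool names for one query.
EXPECTED = [
    ["web_search", "summarize"],
    ["web_search"],
    ["web_search", "web_search", "summarize"],
    ["web_search"],
]
ACTUAL = [
    ["web_search", "summarize"],
    ["web_search", "web_search"],  # one extra search, so this run does not match
    ["web_search", "web_search", "summarize"],
    ["web_search"],
]

if __name__ == "__main__":
    print(tool_call_match_percentage(EXPECTED, ACTUAL))  # 3 of 4 runs match: 75.0
```

An exact-sequence rule is strict, which is one reason a score below 100% is not necessarily a problem: an agent that runs one extra search may still produce a good answer.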

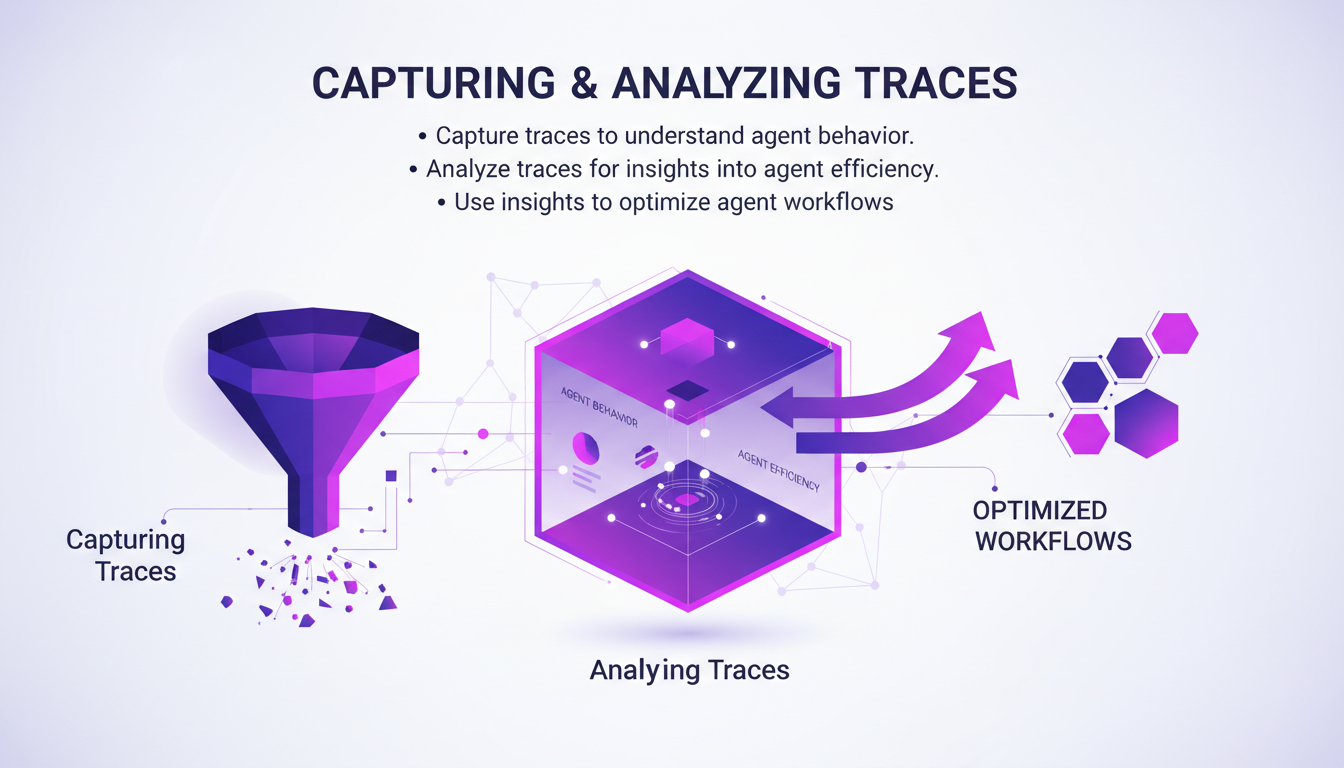

Capturing and Analyzing Traces

Capturing traces is essential to understanding agent behavior. I use these traces to gain insights into agent efficiency and optimize workflows. However, it's important to avoid being overwhelmed by unnecessary data. Focusing on key metrics is paramount.

By analyzing traces, I've been able to pinpoint areas where the agent was wasting time or using resources inefficiently. This has allowed me to optimize workflows and improve overall project efficiency.

- Capture traces to understand agent behavior.

- Analyze them to improve efficiency.

- Avoid data overload by focusing on key metrics.
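
One way to focus on key metrics is to aggregate a trace by step and rank the steps by total time. The sketch below assumes each trace step is a record with a name, a duration in seconds, and a token count. That layout, the step names, and `summarize_steps` are hypothetical, chosen only to illustrate the idea.

```python
from collections import defaultdict


def summarize_steps(steps):
    """Total time, tokens, and call count per step name, slowest first."""
    totals = defaultdict(lambda: {"seconds": 0.0, "tokens": 0, "calls": 0})
    for step in steps:
        entry = totals[step["name"]]
        entry["seconds"] += step["seconds"]
        entry["tokens"] += step["tokens"]
        entry["calls"] += 1
    return sorted(totals.items(), key=lambda item: item[1]["seconds"], reverse=True)


# Hypothetical trace steps for a single agent run.
SAMPLE_STEPS = [
    {"name": "web_search", "seconds": 2.0, "tokens": 300},
    {"name": "summarize", "seconds": 1.5, "tokens": 1200},
    {"name": "web_search", "seconds": 3.0, "tokens": 450},
]

if __name__ == "__main__":
    for name, stats in summarize_steps(SAMPLE_STEPS):
        print(f"{name}: {stats['seconds']:.1f}s, {stats['tokens']} tokens, {stats['calls']} calls")
```

Sorting by time surfaces the slowest step first, while the token column shows that the most expensive step is not always the slowest one.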

In conclusion, using LangChain and LangSmith with Claude Code transforms how we build and iterate on AI agents. By focusing on efficiency and resource economy, you can build robust, adaptive solutions. For those looking to delve deeper, I recommend checking out this practical guide on boosting web search with GPT-5.3.

Integrating LangChain and LangSmith with Claude Code is a real game changer. First, I got my environment set up (a crucial step) before diving into evaluating tool call match percentages. Here's what stood out to me:

- Optimizing AI agents: With these integrations, building and iterating is straightforward, but don't skip those initial setup steps.

- Leveraging precise skills: Concise, targeted research is key to keeping your agents effective.

- Creating and using datasets: Well-constructed datasets and evaluators make all the difference in efficiency.

Looking ahead to 2026, expect these tools to shape AI trends, but don't underestimate the importance of initial setup. Try implementing these strategies in your next AI project and see the difference. Check out the full video to deepen your understanding. Let me know how it goes!

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Boosting Web Search with GPT-5.3: Practical Guide

I've been tweaking search results for years, but integrating GPT-5.3 changed everything. With the latest enhancements, understanding user queries has become more nuanced. In this article, I walk you through how to leverage these advancements for better web search results. We'll dive into the importance of subtext, the enhancements in GPT-5.3, and how they make responses more natural and conversational. You'll see practical cases like planning a biking trip or understanding baseball rule changes. It's a powerful tool, but watch out for context limits—beyond 100K tokens, things get tricky. I'll share how I orchestrated these elements for direct user experience impact.

Nanabano 2: Setup, Comparison, and Tips

I dove into Google Nanabano 2 expecting just another AI tool, but what I found was a real game-changer for image generation. Let me walk you through how I set it up and what makes it tick. With high visual fidelity and multilingual support, Nanabano 2 holds a lot of promise, but how does it perform in the real world? I compared it with the Pro version, explored its features, and tested its rendering and image generation capabilities. In just 12 minutes, I'll show you how to access Nanabano 2, leverage its real-time translation capabilities, and why it might just become your favorite new tool.

WebM MCP: Use Cases and Future Prospects

When I first heard about WebM MCP, I was skeptical. But after diving in, wrapping my head around its APIs, and seeing the potential, I realized it's a game changer for AI agent deployment. Developed by Google and Microsoft, WebM MCP offers a new way to handle media processing with AI agents. In this article, I share my hands-on experience, pitfalls to avoid, and how I integrated this tool into my daily workflow. Imagine managing thousands of tokens for each processed image, with just two APIs to master. I'll guide you through the benefits, use cases, and future prospects of this powerful tool.

AI Optimization: Recursive Self-Improvement vs Fine-Tuning

I remember the first time I ditched fine-tuning for Poetic's recursive self-improvement. It was like trading a bicycle for a jet. The efficiency was mind-blowing, and the cost savings were immediate. In this article, I'll walk you through how this approach can change your AI game. We're diving into cost-effective AI model optimization, Poetic's standout benchmark performance, and the journey from mobile apps to AI. If you're tired of traditional fine-tuning, this is the read you need.

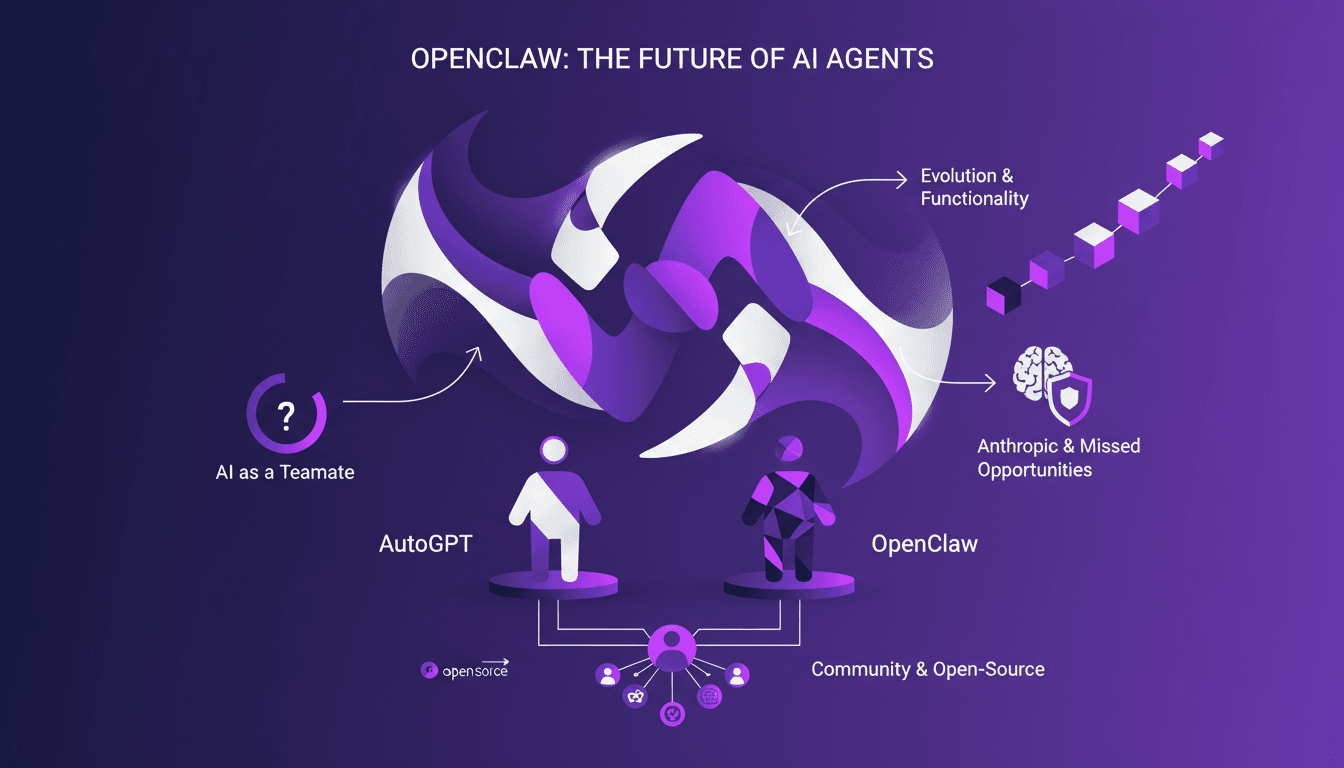

OpenAI Acquires OpenClaw: What It Means for AI

I was in the middle of orchestrating a multi-agent system when the news hit: OpenAI just bought OpenClaw. This isn't just another acquisition; it's a potential game changer for AI agents. OpenClaw, which evolved from Clawdbot to Moltbot, is set to redefine how we view AI as a teammate, not just a tool. With its persistent memory and sandbox environments, OpenClaw promises to transform our workflows. This acquisition could accelerate the integration of open-source AI agents and strengthen community collaboration. Let's dive into the details of what might be a pivotal moment for the future of AI agents.