Mastering AI: Strategies and Tips for Coding

I dove into AI coding headfirst, and it changed how I work. Without a plan, though, you're just spinning your wheels. Here's how I navigated the smart and dumb zones of LLMs, broke down tasks, and used Test-Driven Development (TDD) to keep my projects on track. AI is reshaping software engineering, but it's not as simple as adding AI to your toolkit. You need to understand its nuances (smart zones, task sizing, and efficient session management) to truly harness its power.

First, I had to navigate the smart and dumb zones of LLMs; it's not just about throwing AI into the mix and hoping for magic. Then I learned to break tasks into vertical slices rather than coding in horizontal layers, which made a real difference. Test-Driven Development (TDD) turned out to be crucial for keeping my projects on track. AI is shaking up software engineering, but you need to know how to tame it. You have to manage coding sessions with care and understand when to compact or clear context to avoid wasting tokens. I got burned on this more times than I can count before figuring out how to orchestrate these sessions correctly. So, if you really want to master AI coding, join me in this workshop. We'll tackle it step by step, together.

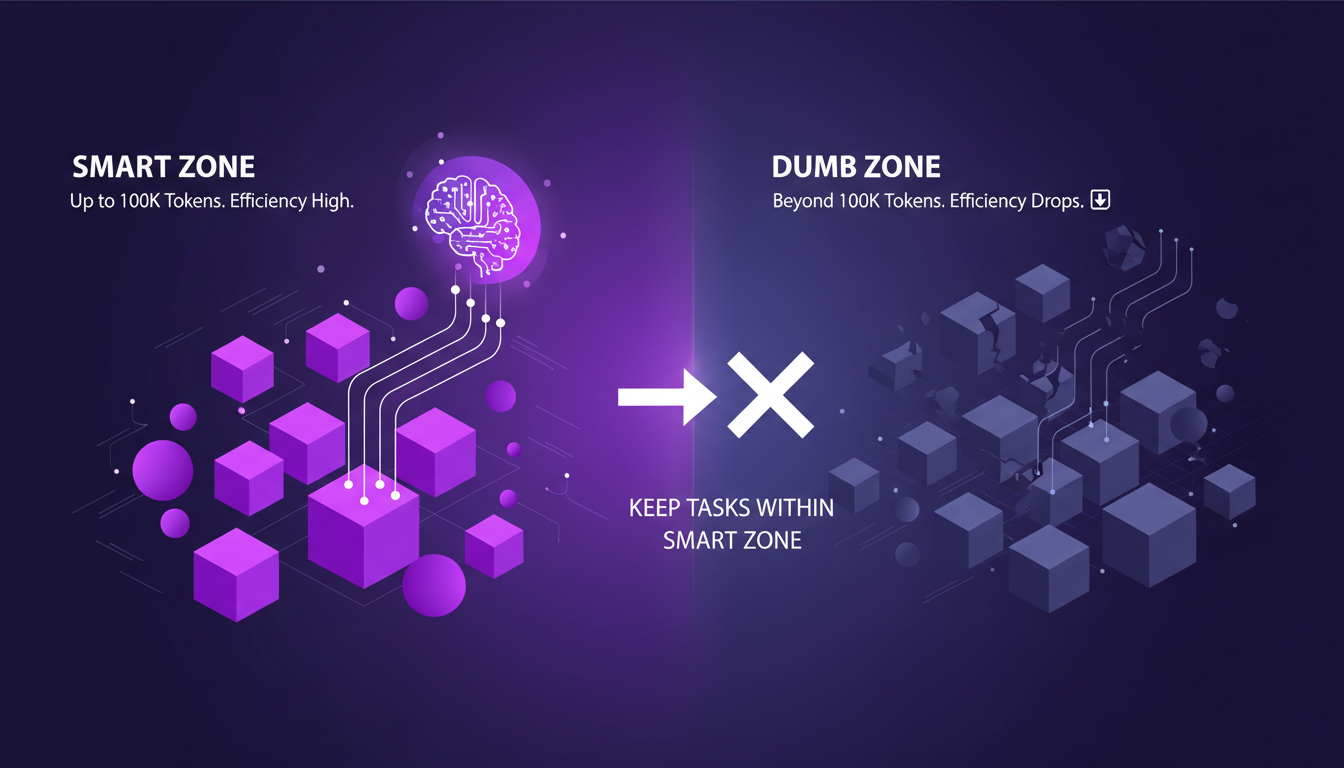

Understanding Smart and Dumb Zones in LLMs

I found that an LLM (Large Language Model) has a smart zone, the range of context where it performs best, which extends up to 100,000 tokens. Beyond that, you're entering the dumb zone, where efficiency drops and performance issues start cropping up. The key is to keep your tasks within the smart zone to get the most out of the model.

Watch your token usage: going past the smart zone leads to performance issues. Working with these models taught me that each task must be sized carefully so that it fits within the smart zone.
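A quick way to keep an eye on this is to estimate how much context a task will consume before starting it. The sketch below is only an illustration: the 100,000 figure comes from the text above, while the four-characters-per-token ratio, the `reserve` headroom, and the function names are assumptions of mine, not an exact tokenizer or anyone's official API.

```python
from __future__ import annotations

# Taken from the article: the smart zone is said to end around 100,000 tokens.
SMART_ZONE_TOKENS = 100_000
# Assumption: about 4 characters per token for English text. Real tokenizers vary.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Roughly estimate the token count of a piece of text (rounded up)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def fits_smart_zone(context_parts: list[str], reserve: int = 10_000) -> bool:
    """Return True if the combined context still leaves `reserve` tokens of headroom."""
    used = sum(estimate_tokens(part) for part in context_parts)
    return used + reserve <= SMART_ZONE_TOKENS
```

If `fits_smart_zone` returns `False` for the files and instructions you plan to send, that is a hint to split the task further rather than to push on.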

"AI is a new paradigm that is changing many things."

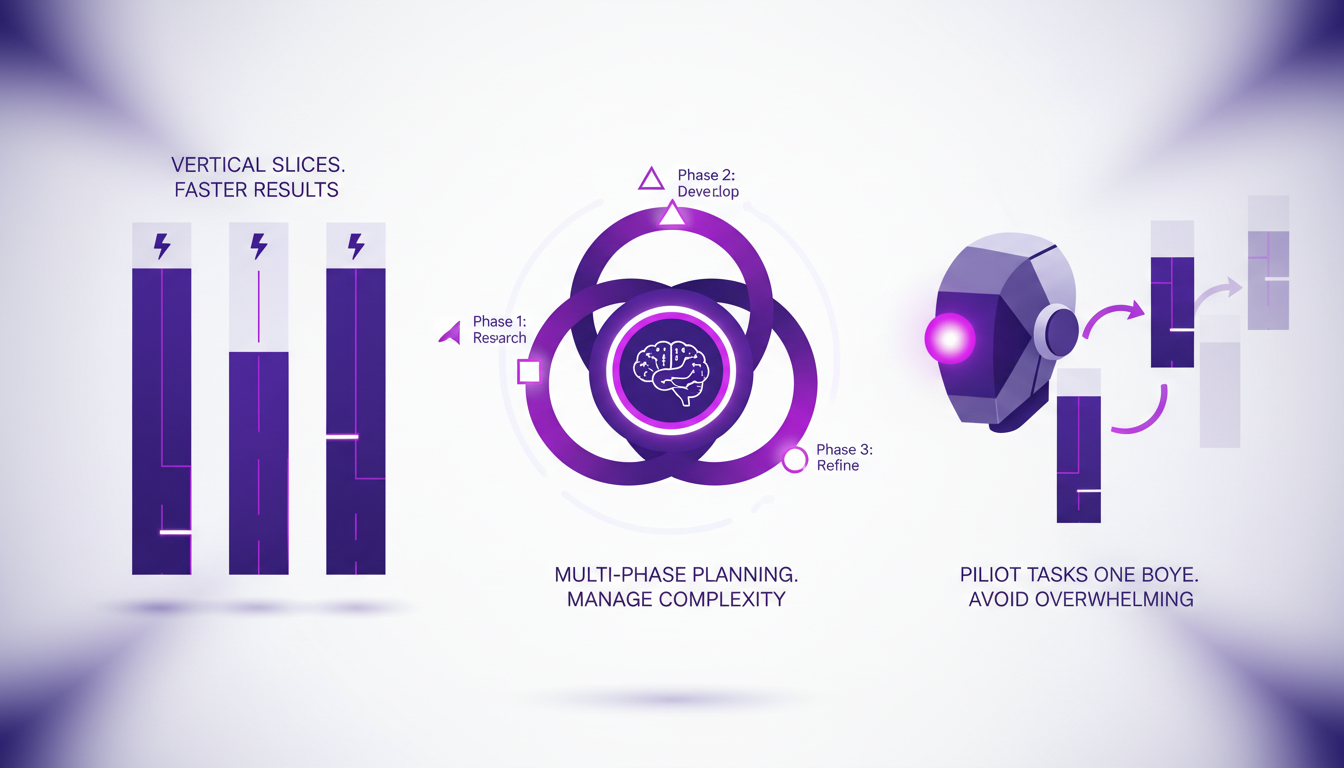

Task Sizing and Multi-Phase Planning

To manage complexity, I break tasks into vertical slices rather than horizontal layers. A vertical slice cuts through every layer needed for one small feature, whereas a horizontal layer builds out a whole tier at once, so slices produce working results faster. Multi-phase plans help me stay focused. I learned to pilot tasks one by one, rather than overwhelming the AI with too much at once.

A tip: increase readability by simplifying your plans. Remember, one agent per sequential plan is often enough. I've seen this work across various projects where phase management becomes a real asset.
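To make the idea of a multi-phase plan concrete, here is a minimal sketch of one way to represent it: each phase is a vertical slice, and a single agent receives only the next unfinished phase. The `Phase` and `Plan` classes, the password-reset example, and the prompt wording are hypothetical and only illustrate the structure.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Phase:
    """One vertical slice: a small feature cut through every layer it needs."""
    name: str
    steps: list[str]
    done: bool = False


@dataclass
class Plan:
    """A sequential plan that hands out one phase at a time."""
    goal: str
    phases: list[Phase] = field(default_factory=list)

    def next_phase(self) -> Phase | None:
        """Return the first phase that is not finished, or None when all are done."""
        return next((p for p in self.phases if not p.done), None)

    def prompt_for_next(self) -> str | None:
        """Build a short prompt covering only the next phase, keeping context small."""
        phase = self.next_phase()
        if phase is None:
            return None
        steps = "\n".join(f"- {step}" for step in phase.steps)
        return f"Goal: {self.goal}\nCurrent phase: {phase.name}\n{steps}"


plan = Plan(
    goal="Let users reset their password",
    phases=[
        Phase("Request reset link", ["form field", "POST endpoint", "store token"]),
        Phase("Set new password", ["token check", "update hash", "confirmation page"]),
    ],
)
```

Marking a phase as `done` before asking for the next prompt keeps the agent on one slice at a time, which matches the "one agent per sequential plan" tip.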

Compacting Conversations in AI Coding

Compacting is about distilling a conversation to its essence: a short summary replaces the full history, whereas clearing wipes the context entirely. I wrap up sessions by dropping unnecessary context, which saves tokens, reduces noise, and focuses the AI's attention on what matters.

Clearing context can prevent sessions from becoming unwieldy. Efficiency is key: compact before you expand. This has helped me keep sessions light and performant.
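The difference between compacting and clearing can be sketched in a few lines. This is an assumption-laden illustration, not how any particular tool implements it: messages are plain dictionaries, `naive_summary` is a hypothetical stand-in for a summary that the model itself would normally write, and `keep_last` is an arbitrary choice.

```python
from __future__ import annotations


def naive_summary(messages: list[dict]) -> str:
    """Hypothetical placeholder: keep the first 60 characters of each old message."""
    lines = [f"{m['role']}: {m['content'][:60]}" for m in messages]
    return "Summary of earlier conversation:\n" + "\n".join(lines)


def compact(messages: list[dict], keep_last: int = 4, summarize=naive_summary) -> list[dict]:
    """Replace older messages with one summary message and keep the recent ones."""
    if len(messages) <= keep_last:
        return list(messages)  # nothing worth compacting yet
    split = len(messages) - keep_last
    older, recent = messages[:split], messages[split:]
    return [{"role": "system", "content": summarize(older)}] + recent


def clear(messages: list[dict]) -> list[dict]:
    """Clearing, by contrast, drops the whole history."""
    return []
```

Compacting keeps a trace of earlier decisions at a fraction of the token cost, while clearing is the blunt option for when the earlier conversation no longer matters.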

Test-Driven Development with AI

Test-Driven Development (TDD) with AI keeps my code reliable and my projects on track. I write tests first, then let the AI suggest implementations. This method helps catch errors early, saving time and resources.

AI can sometimes suggest unexpected solutions, so be ready to iterate. And watch out for over-reliance on AI: human oversight is still crucial.
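Here is a small illustration of that test-first loop. The `slugify` function and its expected behavior are a made-up example: the tests are written by hand first, and the implementation below them stands in for what an AI might propose and what you would then review.

```python
import re


# Step 1: tests written by a human, before any implementation exists.
def test_lowercases_and_joins_words():
    assert slugify("Hello World") == "hello-world"


def test_strips_punctuation_and_extra_spaces():
    assert slugify("  AI: Tips & Tricks!  ") == "ai-tips-tricks"


def test_empty_title_gives_empty_slug():
    assert slugify("") == ""


# Step 2: a candidate implementation, standing in for an AI suggestion.
def slugify(title: str) -> str:
    """Turn a title into a lowercase, hyphen-separated slug."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


def run_tests() -> int:
    """Run every test and return how many passed (an assertion error stops the run)."""
    tests = [
        test_lowercases_and_joins_words,
        test_strips_punctuation_and_extra_spaces,
        test_empty_title_gives_empty_slug,
    ]
    for test in tests:
        test()
    return len(tests)
```

Because the tests exist before the suggestion arrives, an unexpected implementation is easy to judge: either the tests pass or you iterate.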

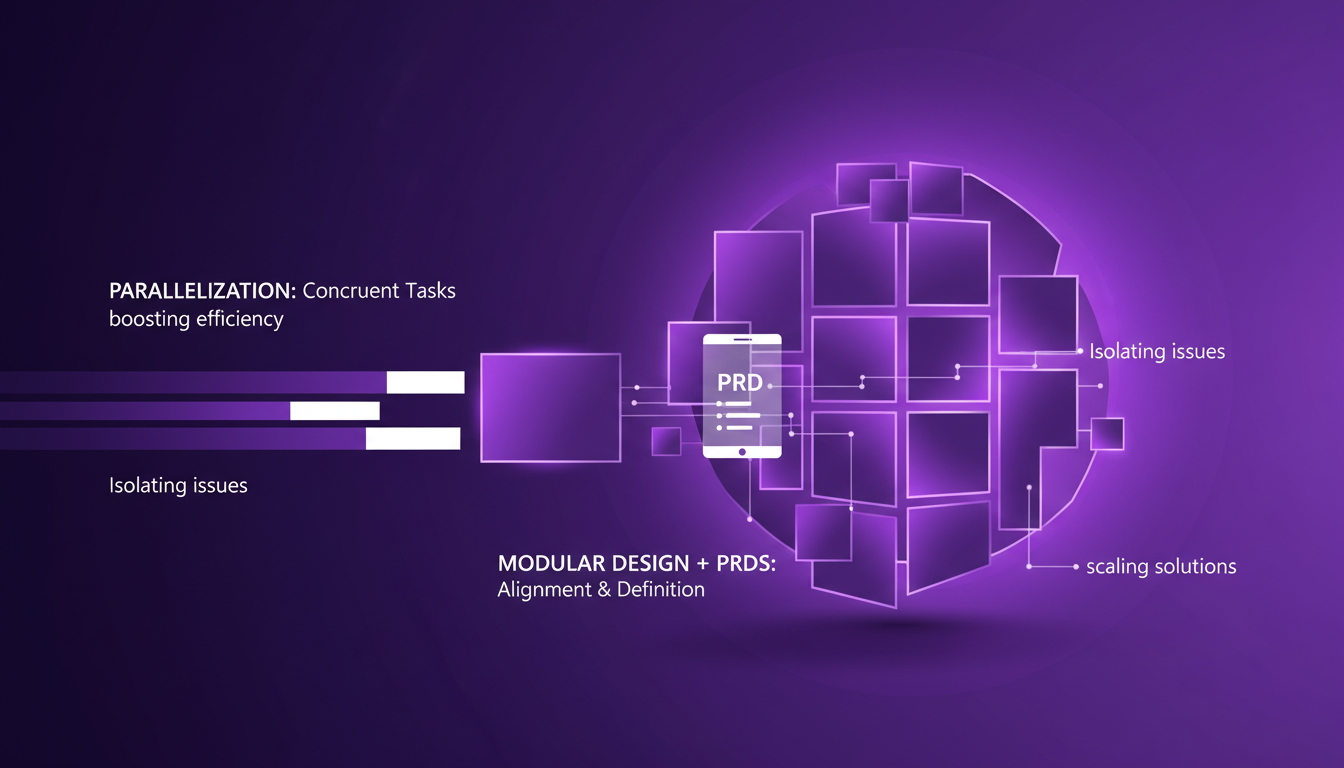

Parallelization and Modular Code Design

Parallelization allows me to run tasks concurrently, boosting efficiency. Modular design helps in isolating issues and scaling solutions. I use Product Requirements Documents (PRDs) to keep everyone aligned and tasks well-defined.

Parallel tasks require careful orchestration to avoid conflicts. Balancing parallel and sequential tasks is a trade-off worth mastering.
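One simple way to reason about conflicts is to look at which files each task is expected to touch. The sketch below groups tasks into batches whose file sets do not overlap, so tasks in the same batch could run in parallel and the batches run one after another. The task names, the file lists, and the greedy grouping strategy are all assumptions for illustration.

```python
from __future__ import annotations


def plan_batches(tasks: dict[str, set[str]]) -> list[list[str]]:
    """Greedily group tasks so that no two tasks in a batch touch the same file."""
    batches: list[tuple[list[str], set[str]]] = []
    for name, files in tasks.items():
        for names, touched in batches:
            if touched.isdisjoint(files):
                # No shared files with this batch: safe to run alongside it.
                names.append(name)
                touched.update(files)
                break
        else:
            # Conflicts with every existing batch: start a new sequential step.
            batches.append(([name], set(files)))
    return [names for names, _ in batches]


# Hypothetical tasks and the files each one is expected to modify.
tasks = {
    "auth-api": {"auth.py", "routes.py"},
    "billing-ui": {"billing.tsx"},
    "auth-tests": {"auth.py", "test_auth.py"},
}
batches = plan_batches(tasks)
```

With these inputs, `auth-api` and `billing-ui` land in the first batch, and `auth-tests` waits for the second because it shares `auth.py` with `auth-api`. This is where modular design pays off: the less files overlap, the more can run concurrently.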

In conclusion, navigating between the smart and dumb zones of LLMs, sizing tasks correctly, compacting conversations, and using TDD with AI are strategies that transform the way I work. Each step in the process demands careful attention to maximize efficiency and avoid pitfalls.

- Keep tasks within the smart zone of LLMs.

- Use multi-phase plans to manage complexity.

- Compact conversations to save tokens.

- Adopt TDD to maintain code reliability.

- Carefully orchestrate parallel tasks to avoid conflicts.

I've realized that AI coding isn't just about the tech but about smart orchestration. First, understanding the difference between smart zones and dumb zones in LLMs changes how you approach every task. Next, task sizing and multi-phase plans can really transform your workflow. Compacting context can also be more efficient than clearing it entirely in AI sessions. Finally, using one agent in a sequential plan and opting for vertical slices over horizontal ones are tips you can't overlook. The numbers speak for themselves: one agent, three vertical slices, five tips for increased readability. Looking ahead, I'm convinced that those who master these AI tools will have a distinct edge. Ready to dive in? Start small, iterate often, and always keep an eye on efficiency. To truly grasp the nuances, I recommend watching Matt Pocock's full video.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

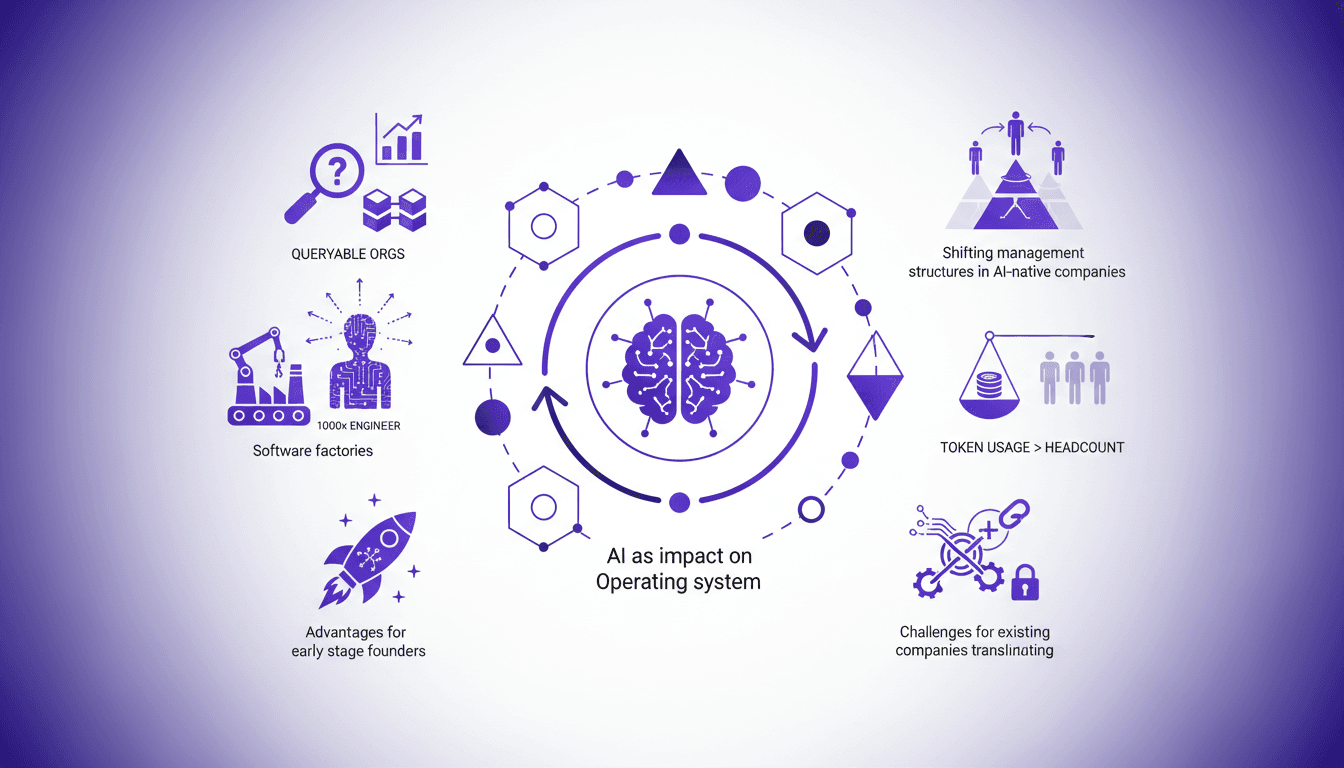

Building an AI Startup: Impact and Strategies

I've spent countless hours in the trenches, building companies from the ground up with AI. AI isn't just a tool—it's the backbone of modern startups. I'll guide you on how to effectively leverage these transformations to turn your company into a more agile and efficient organization. We're diving into closed-loop systems, AI-driven productivity, and maximizing token usage over headcount. Whether you're an early-stage founder or looking to transition an existing company, it's time to dive into the AI universe.

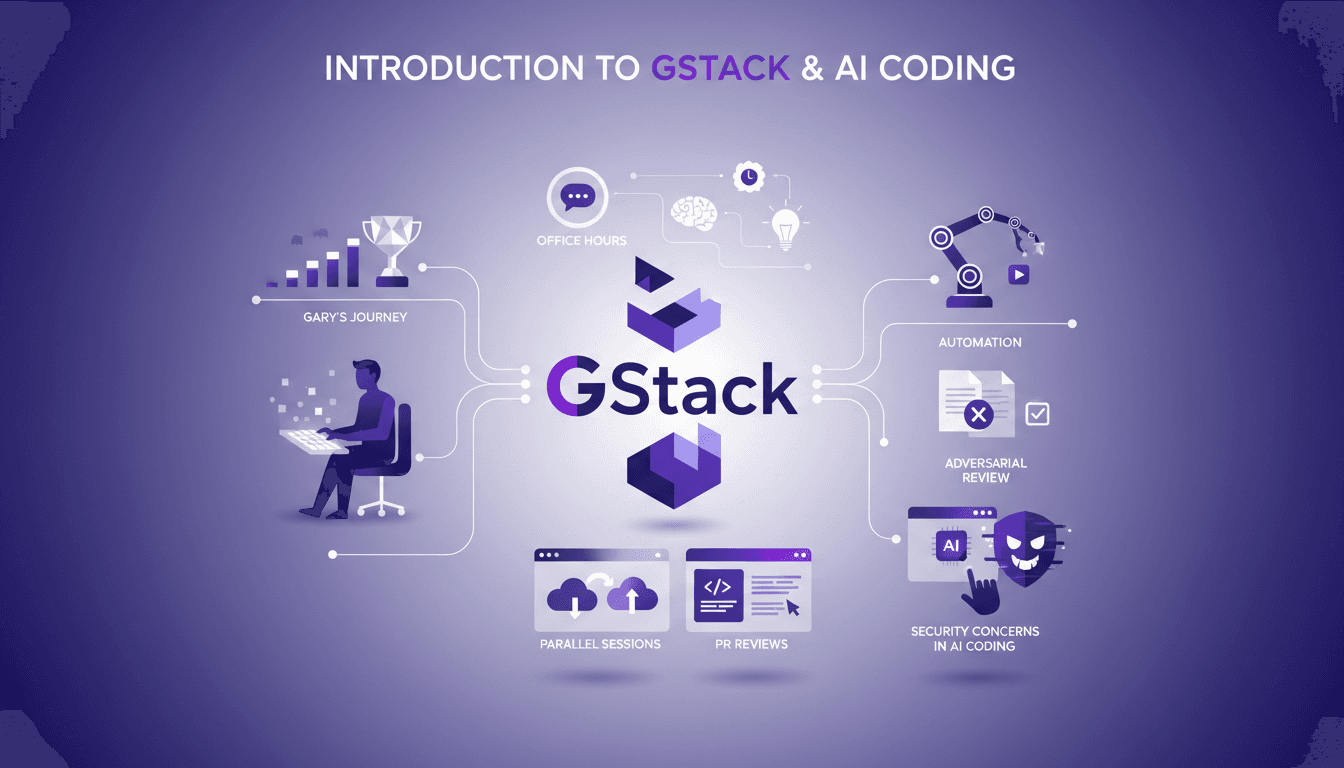

Setting Up GStack: My Experience with Claude Code

I've been deep in the trenches with GStack and Claude Code, and let me tell you, the way Garry Tan orchestrates his workflow is something else. From coding marathons to leveraging office hours for refining startup ideas, there’s a lot to unpack here. GStack is a powerhouse for automation in software development, and Garry has taken it to new heights. In this article, we dive into his tools, his methods, and the lessons we can all learn. We'll explore his use of GStack for automation, parallel cloud code sessions, and how he integrates AI into his process. But watch out, there are security concerns not to overlook.

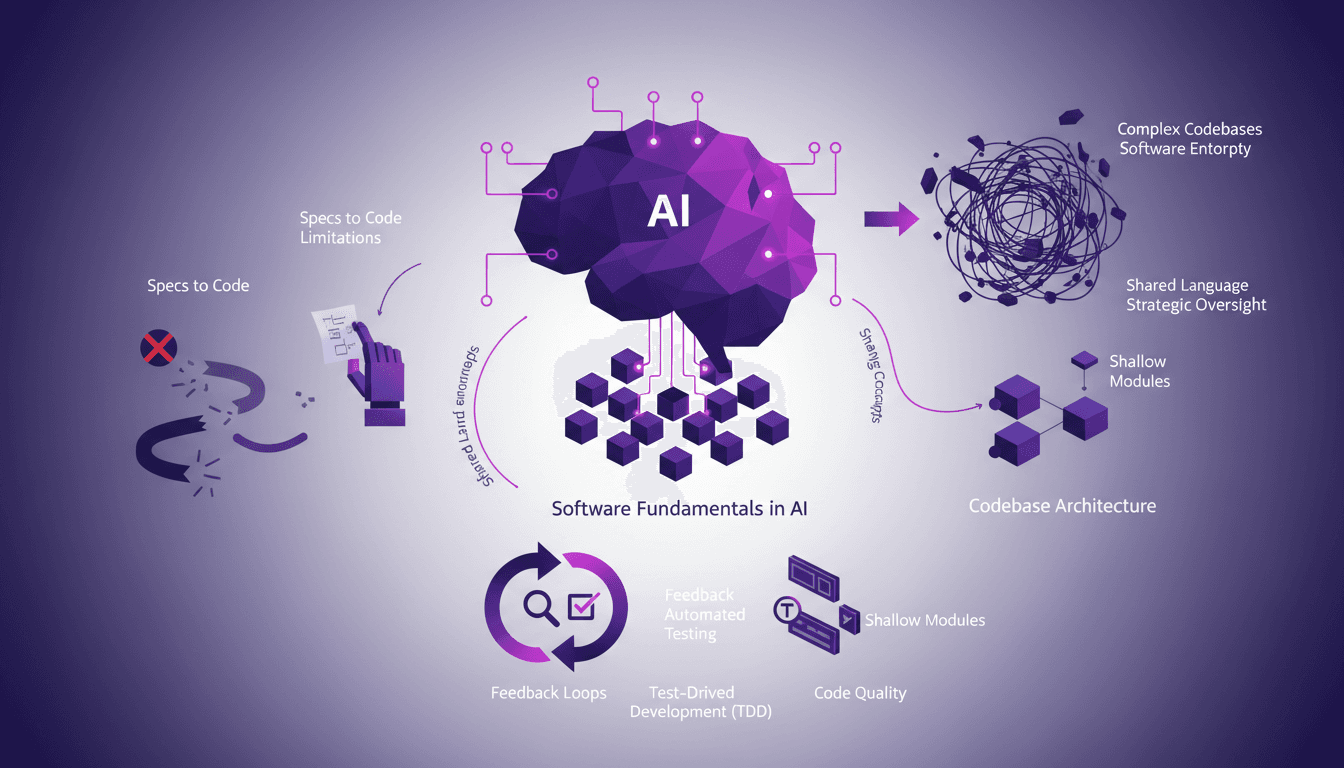

Why Software Fundamentals in AI Matter Now

I've been deep in the trenches of AI development, and if there's one thing I've learned, it's that software fundamentals are not just nice-to-haves—they're game changers. In a world where AI evolves at breakneck speed, it's easy to get caught up in the latest trends and forget the core principles that keep everything running smoothly. This isn't about theory—it's about practical, battle-tested workflows that can save you time and headaches. We'll dive into why the 'specs to code' movement has its limitations, how to manage software entropy, and why shared concepts and strong feedback loops are vital. Test-Driven Development is my third tip and it's crucial for code quality. Let's demystify all this together.

Master Open Source to Boost Your Career

I've been there, navigating the maze of software engineering without a traditional job application. Open source was my ticket. Let me walk you through how I leveraged open source to get hired. In today's tech landscape, open source isn't just a buzzword; it's a career catalyst. Whether it's contributing to projects or building a network, open source offers a pathway to professional growth and personal fulfillment. But beware, contributing to open source comes with its challenges. You'll see how I navigated the hurdles, optimizing my time (spending two to three hours on a single PR proposal) and building an identity within these communities. And then, there's the impact of AI, shifting the game for open source contributors. This podcast episode is here to arm you with the tools to master these resources and boost your career.

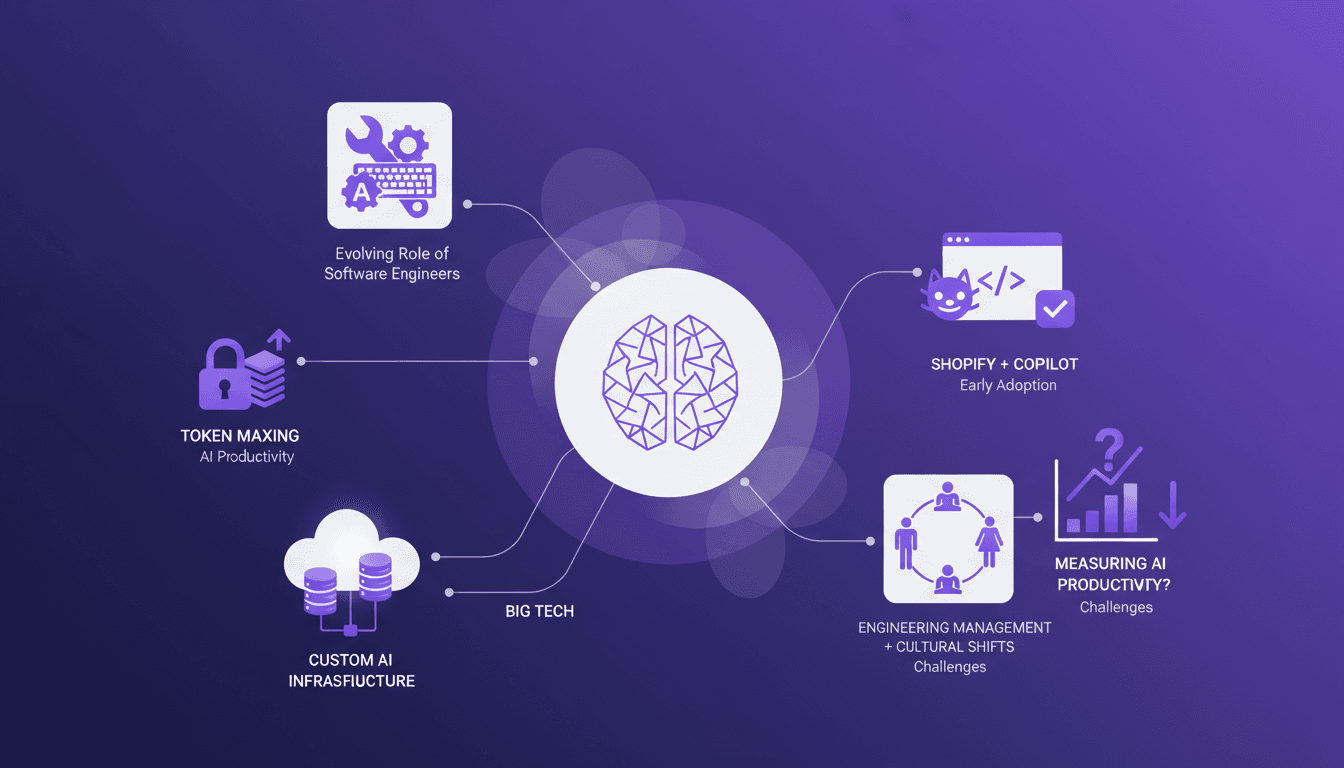

Token Maxing: AI's Revolution in Engineering

I've been in the AI trenches, and let me tell you, the way AI is reshaping software engineering is nothing short of a game changer. But beware, it's not all smooth sailing. In our field, AI tool adoption brings its own set of challenges, like token maxing and the evolving role of engineers. At a recent conference, experts like Gergely Orosz shared valuable insights on these transformations, from productivity impacts to cultural shifts in team management. We will need to navigate these opportunities and challenges to make the most of this technological revolution.