Low-Latency Audio Intelligence: AI Workflow Insights


In contact centers, every minute counts, and I've spent enough time there to know it firsthand. When I set out to automate low-latency intelligence extraction from messy audio streams, I knew I was taking on a big problem. But with the right workflow, the process became far more efficient. The numbers tell the story: over 50% of contact centers identify hiring, training, and productivity as critical barriers. With average call durations of 6.5 minutes and post-call processing times of 6.3 minutes, there's clearly room for improvement. AI can change that by summarizing calls and extracting data from audio. So, how did I do it? I'll share my experience, from the technical architecture to workflow implementation and tangible outcomes, along with a few pitfalls to avoid.

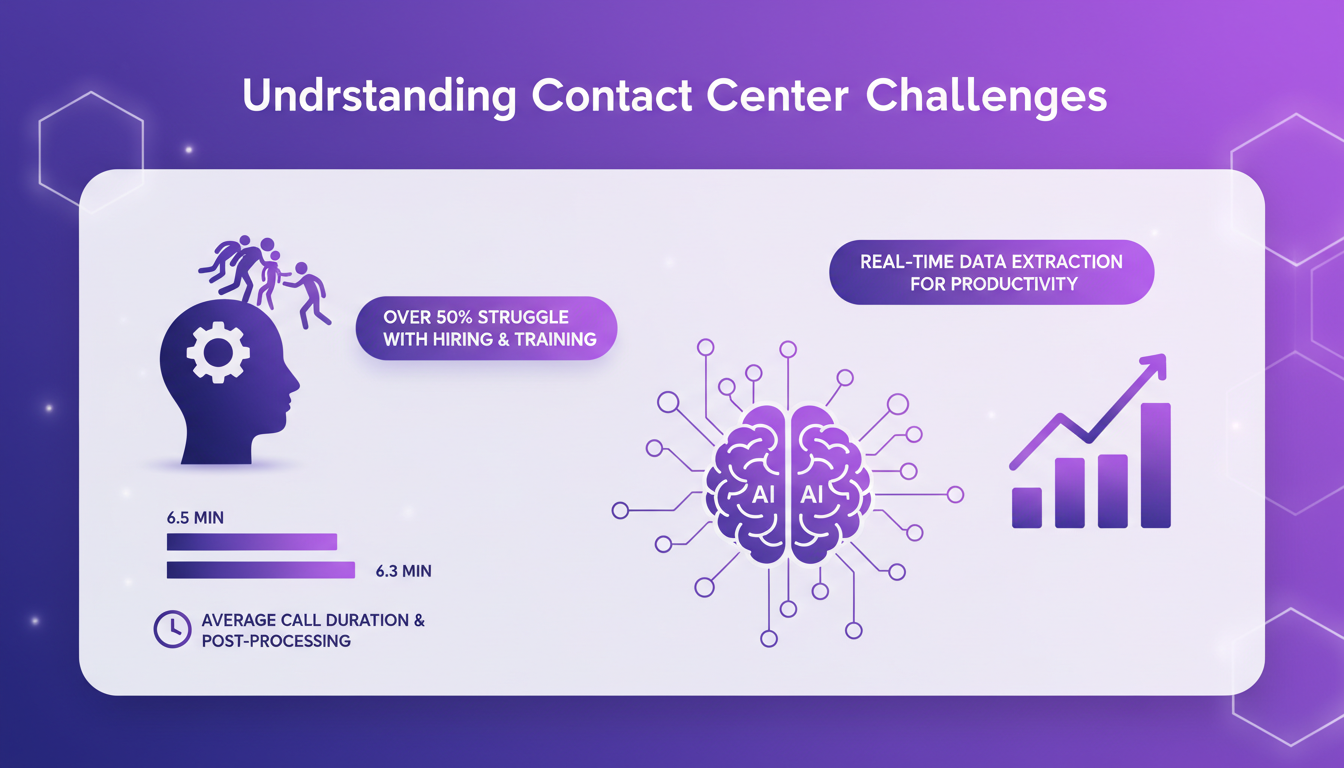

Understanding Contact Center Challenges

Contact center challenges are numerous and often underestimated. Over 50% of contact centers identify hiring and training as critical barriers, which shows how hard it is to keep an effective operational team in place. On average, a call lasts 6.5 minutes, and post-call processing takes nearly as long, at 6.3 minutes. In other words, agents spend almost half of each interaction on wrap-up rather than talking to customers. This is a glaring inefficiency.
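To make the inefficiency concrete, here is a small back-of-the-envelope calculation using the two averages quoted above. The function name is my own, and the figures are the article's averages rather than measurements from any specific center.

```python
def after_call_share(talk_min: float, wrap_min: float) -> float:
    """Fraction of total handle time spent on post-call processing."""
    return wrap_min / (talk_min + wrap_min)


# Averages quoted in the article: 6.5 min on the call, 6.3 min of post-processing.
share = after_call_share(6.5, 6.3)
print(f"Post-call work is {share:.0%} of total handle time")
```

Any reduction in post-call time therefore translates almost one-for-one into agent time freed for customers.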

Real-time data extraction becomes imperative here, both to boost productivity and to balance operator well-being with workload management. The balance is delicate, but necessary to avoid agent burnout.

Automating Summarization and Data Extraction

To address these challenges, I implemented a speech-to-text engine with 90% accuracy. This is a crucial step because without accurate transcription, the rest of the process is compromised. I optimized token usage to reduce processing time, which is critical when every millisecond counts.
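The article does not say how token usage was optimized, so the following is only a minimal sketch of one common approach: strip filler words from the transcript and keep the most recent turns that fit a token budget. The filler list, the whitespace-based token estimate, and the names `strip_fillers` and `fit_to_budget` are all assumptions for illustration; a real system would count tokens with the model's own tokenizer.

```python
FILLERS = {"um", "uh", "erm", "hmm"}  # hypothetical filler list


def strip_fillers(text: str) -> str:
    """Drop filler words, ignoring case and trailing punctuation."""
    words = [w for w in text.split() if w.lower().strip(",.?!") not in FILLERS]
    return " ".join(words)


def approx_tokens(text: str) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return len(text.split())


def fit_to_budget(turns: list[str], budget: int) -> list[str]:
    """Keep the most recent cleaned turns whose estimated tokens fit the budget."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):
        cleaned = strip_fillers(turn)
        cost = approx_tokens(cleaned)
        if used + cost > budget:
            break
        kept.append(cleaned)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


turns = [
    "Um, hello I need help with my bill",
    "Sure, uh, what is the account number",
    "It is 12345",
]
print(fit_to_budget(turns, budget=10))
```

Keeping the latest turns is a design choice, not a rule: for summarization, the opening of the call often carries the customer's intent, so a production version might reserve part of the budget for it.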

By using generative AI and large language models (LLMs), we were able to extract nuanced data in real time. But watch out: there's always a trade-off between speed and accuracy in real-time processing.
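As a rough illustration of LLM-based extraction, the sketch below asks a model for a fixed set of fields and validates the reply. The field names, the prompt wording, and the `llm` callable (any function that takes a prompt and returns text) are assumptions of mine, not the setup described in the original talk.

```python
import json
from typing import Callable

FIELDS = ("intent", "sentiment", "follow_up")  # hypothetical fields


def build_prompt(transcript: str) -> str:
    """Ask for a JSON object with exactly the fields we care about."""
    keys = ", ".join(FIELDS)
    return (
        "Summarize the call and return a JSON object with the keys "
        f"{keys}. Use null when a value is not stated.\n\n"
        f"Transcript:\n{transcript}"
    )


def extract_fields(transcript: str, llm: Callable[[str], str]) -> dict:
    """Call the model and keep only the expected keys; missing ones become None."""
    raw = llm(build_prompt(transcript))
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        data = {}  # malformed reply: fall back to empty fields
    if not isinstance(data, dict):
        data = {}
    return {key: data.get(key) for key in FIELDS}


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, used here only to show the flow."""
    return '{"intent": "billing", "sentiment": "negative", "extra": 1}'


print(extract_fields("Customer disputes a charge.", fake_llm))
```

Validating the reply instead of trusting it is one place where the speed and accuracy trade-off shows up: a stricter check or a retry costs latency, while a lenient one lets bad fields through.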

AI Workflow Implementation and Outcomes

I integrated a workflow that streamlines audio data processing. This significantly reduced post-call processing time. Before, our operators spent almost as much time processing data as talking to customers. With AI, this time was cut by nearly 50%.
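To put that reduction in perspective, here is a quick calculation based on the averages quoted earlier (6.5 minutes per call, 6.3 minutes of post-call work). Treating "nearly 50%" as exactly 50% is my simplification, and the resulting capacity figure is illustrative only.

```python
TALK_MIN = 6.5   # average call duration from the article
WRAP_MIN = 6.3   # average post-call processing time from the article
WRAP_CUT = 0.5   # assumption: "nearly 50%" rounded to exactly 50%

before = TALK_MIN + WRAP_MIN                   # total handle time without AI
after = TALK_MIN + WRAP_MIN * (1 - WRAP_CUT)   # total handle time with the cut
capacity_gain = before / after - 1             # extra calls handled in the same time

print(f"Handle time: {before:.2f} min -> {after:.2f} min")
print(f"Capacity gain: {capacity_gain:.0%}")
```

Halving only the post-call portion does not halve total handle time, because talk time is unchanged; the gain comes entirely from the wrap-up side.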

Sentiment and acoustic analyses became more accurate, but beware of over-optimizing tokens: trimming too aggressively can strip out the context those analyses depend on.

Technical Architecture for Low-Latency Extraction

I built a robust architecture for real-time processing, balancing load between AI models and system resources. Managing resource constraints is a constant challenge, but with scalable solutions, we kept performance steady under load.
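The article doesn't detail the load-balancing mechanism, so here is a minimal sketch of one way to keep model calls from overwhelming system resources: cap the number of concurrent inferences with an `asyncio.Semaphore`. The `analyze` function, the sleep that stands in for inference, and the `stats` dictionary are hypothetical.

```python
import asyncio


async def analyze(segment: str, limiter: asyncio.Semaphore, stats: dict) -> str:
    """Process one audio segment while holding a slot from the limiter."""
    async with limiter:
        stats["active"] += 1
        stats["peak"] = max(stats["peak"], stats["active"])
        await asyncio.sleep(0.01)  # stand-in for a model inference call
        stats["active"] -= 1
        return segment.upper()


async def process_call(segments: list[str], max_concurrent: int, stats: dict) -> list[str]:
    """Run all segments concurrently, but never more than max_concurrent at once."""
    limiter = asyncio.Semaphore(max_concurrent)
    return await asyncio.gather(*(analyze(s, limiter, stats) for s in segments))


def run(segments: list[str], max_concurrent: int = 2) -> tuple[list[str], int]:
    """Return the results in input order and the peak concurrency observed."""
    stats = {"active": 0, "peak": 0}
    results = asyncio.run(process_call(segments, max_concurrent, stats))
    return results, stats["peak"]


results, peak = run(["a", "b", "c", "d"], max_concurrent=2)
print(results, peak)
```

The cap trades a little latency on bursts for predictable resource use; the right value depends on the models and hardware involved.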

Our future roadmap includes continuous improvement and scaling to meet increasing demands. It's an endless journey, but each step brings us closer to a more efficient contact center.

Operator Well-being and Workforce Management

AI tools relieve operators from repetitive tasks, improving workforce management through better data insights. The potential for burnout reduction is real, but it's crucial to monitor and adapt based on operator feedback.

It's important not to overuse technology, and to ensure it genuinely serves the operators rather than overwhelming them.

By integrating low-latency intelligence extraction into contact centers, I've really streamlined workflows. The result? Increased productivity. We all know that hiring, training, and maintaining productivity are a nightmare for over 50% of centers. But with AI, we cut post-call processing time, which averages 6.3 minutes, by nearly 50%. This is concrete stuff, not just theory.

- Automating summarization and data extraction boosts operator efficiency.

- Implementing AI in workflows enhances overall productivity.

- The technical architecture for fast extraction has its challenges, but it's worth it.

Looking forward, there are still challenges to tackle, but the potential is huge. Ready to transform your contact center with AI? Let's talk. And for those who want to dive deeper, I recommend watching Dippu Kumar Singh's original video. It's a goldmine for understanding how to extract intelligence from messy audio streams.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

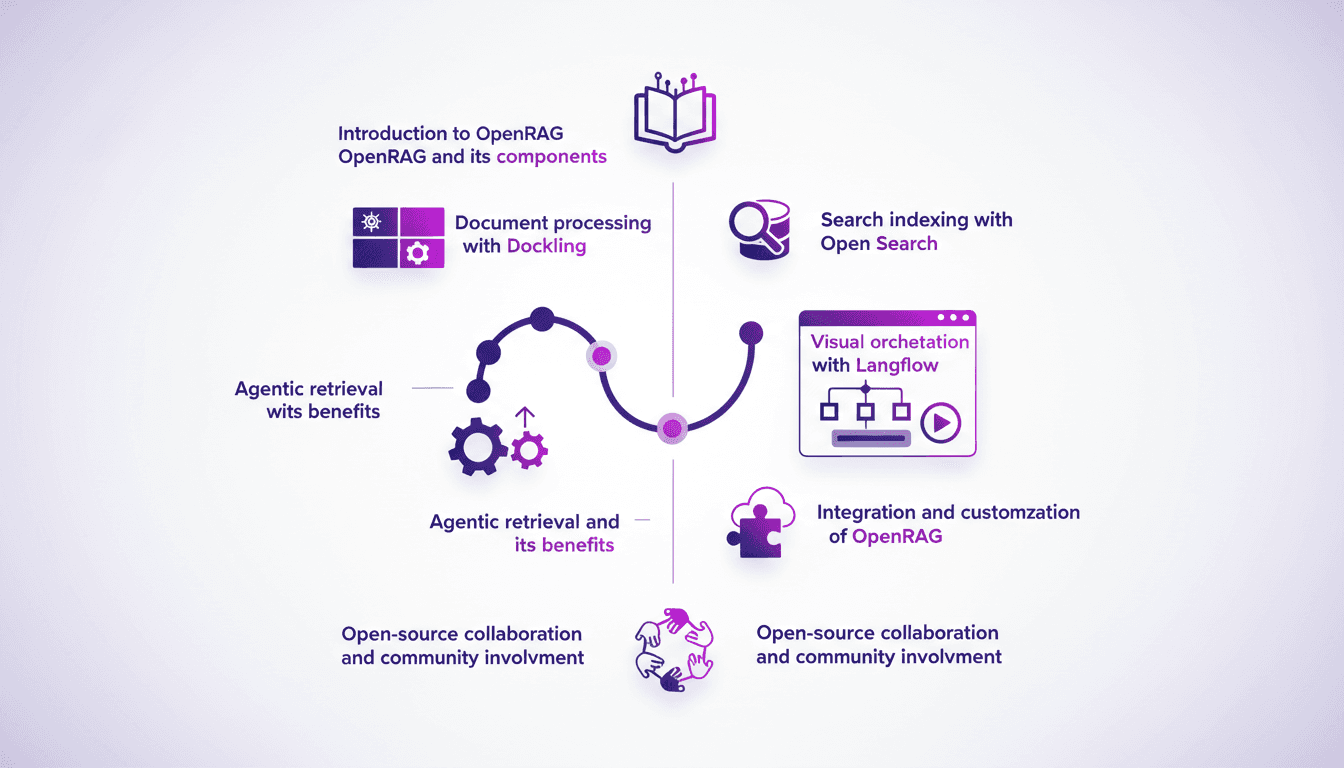

OpenRAG: Building with an Open-Source RAG Stack

Ever tried building a RAG solution from scratch? I have, and let me tell you, OpenRAG is a game-changer. It's not just another toolset—it's a full-stack open-source powerhouse for retrieval augmented generation. In this article, I'll walk you through my experience with OpenRAG, from document processing to search indexing, and how it saves me time and headaches. Let's dive into the components and see how they fit into a robust AI workflow.

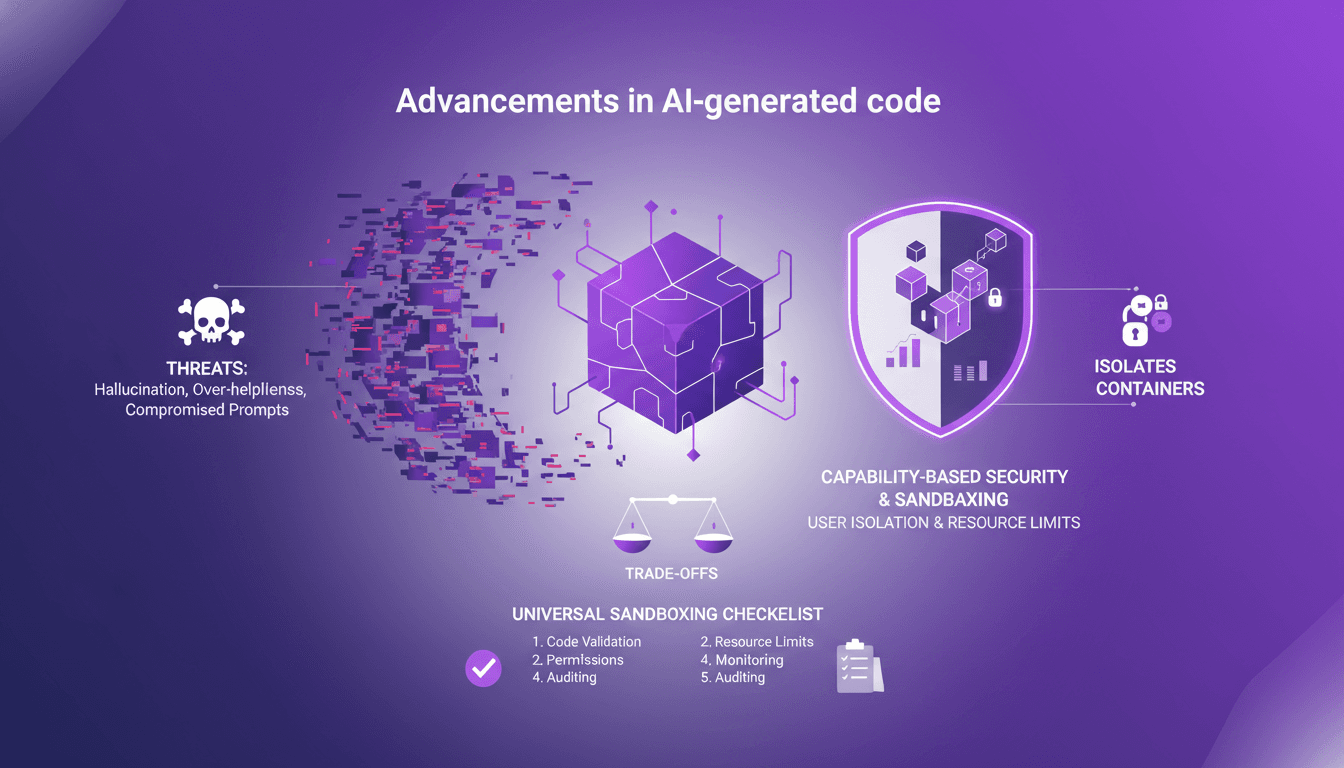

Sandboxing AI Code: Secure Your Projects

I've been burned by AI-generated code more times than I'd like to admit. From hallucinations that crashed my server to overly helpful suggestions that sent me spiraling down rabbit holes, I knew I had to sandbox that AI code. First, I'll walk you through why sandboxing is crucial, then how I set it up to protect my projects. With AI-generated code becoming more prevalent, robust security practices are essential. We’ll explore the threats posed by AI code and how sandboxing can mitigate these risks. (Hint: containers and isolates each have their trade-offs.)

AI Breakthrough: Residual Attention Revolutionizes

I remember the first time I saw the impact of residual attention on AI models. It was like flipping a switch. Suddenly, inefficiencies that plagued deep learning for years were laid bare—and fixed. Since 2015, AI's foundations hadn't budged, but this breakthrough changes everything. Residual attention tackles signal degradation in deep neural networks, making models more efficient. Compared to traditional methods, it delivers superior performance on benchmarks. With open-sourcing, its potential impact is huge, notably in Chinese labs where hardware constraints drive innovation. But don't underestimate the complexity of integration.

From Coding to Solution-Focused Engineering

I've spent enough sleepless nights coding to know that the real challenge isn't about how much code we write, but the solutions we deliver. In a world where you can code 55 times faster, the mistake is focusing solely on churning out lines of code. What really matters is solution-focused software engineering, AI adoption, and integrating all this into our platforms. If you've ever wondered why your productivity only improves by 14% despite all your efforts, maybe it's because you haven't yet embraced this holistic approach that pushes beyond just coding.

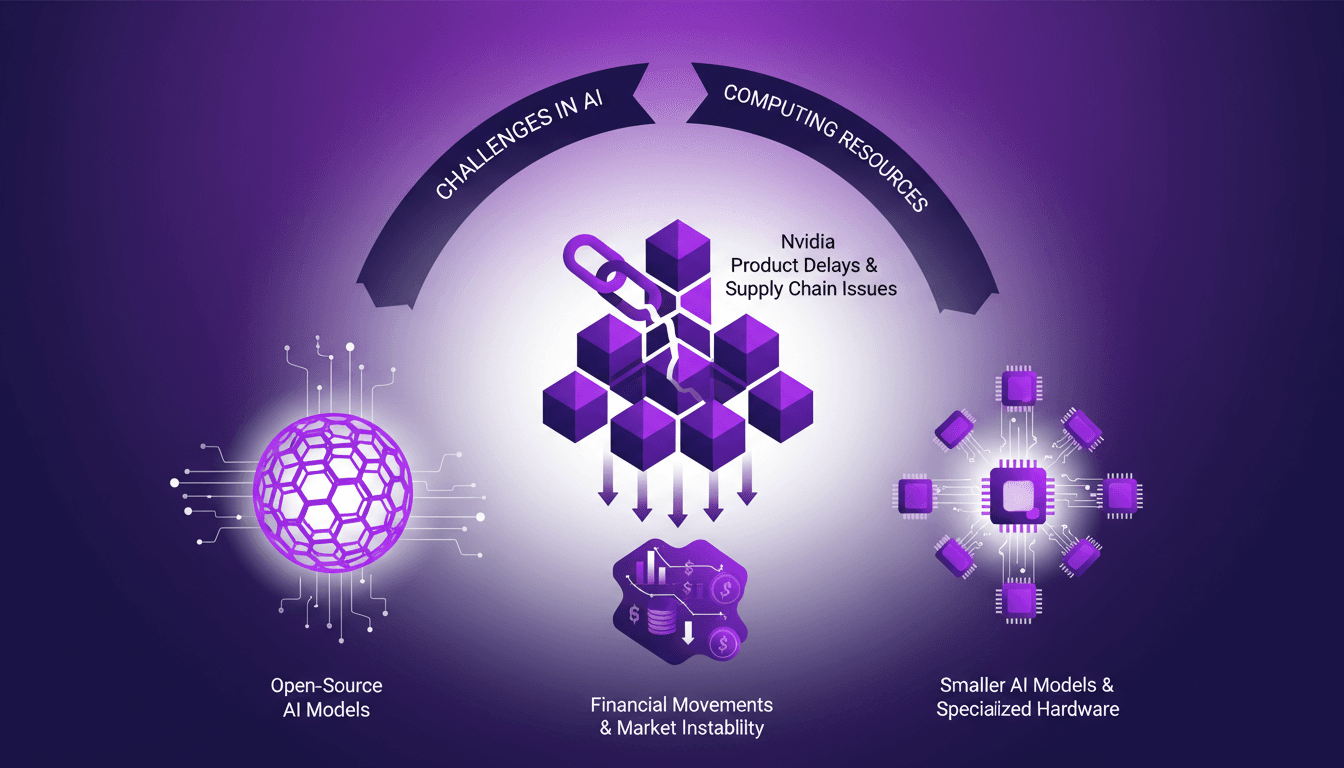

AI Resource Struggles: Nvidia Delays, Open Source

I remember the first time I hit a wall with AI compute resources. It felt like trying to run a marathon on a treadmill stuck at walking speed. In this rapidly evolving AI landscape, we're facing real challenges—from Nvidia's delays to the growing allure of open-source models. The market is in flux, with financial movements like Mistral's debt announcements adding another layer of complexity. We need to navigate resource shortages, the emergence of smaller AI models, and supply chain issues affecting component lead times. Let's dive into these dynamics from a practitioner’s perspective, focusing on practical solutions and trade-offs.