AI Breakthrough: Residual Attention Revolutionizes

I remember the first time I saw the impact of residual attention on AI models. It was like flipping a switch: inefficiencies that had plagued deep learning for years were laid bare and fixed. AI's foundations hadn't budged since 2015, and this breakthrough is a paradigm shift. Residual attention addresses signal degradation in deep neural networks, making models more efficient, and benchmark performance jumps compared to traditional methods. With the technique open-sourced, the potential impact is large, especially in Chinese labs where hardware constraints push innovation. But watch out: integrating this into your projects isn't a walk in the park. You need to understand the technical limits and trade-offs. So let's dive into residual attention and see how it can transform the way we build AI models.

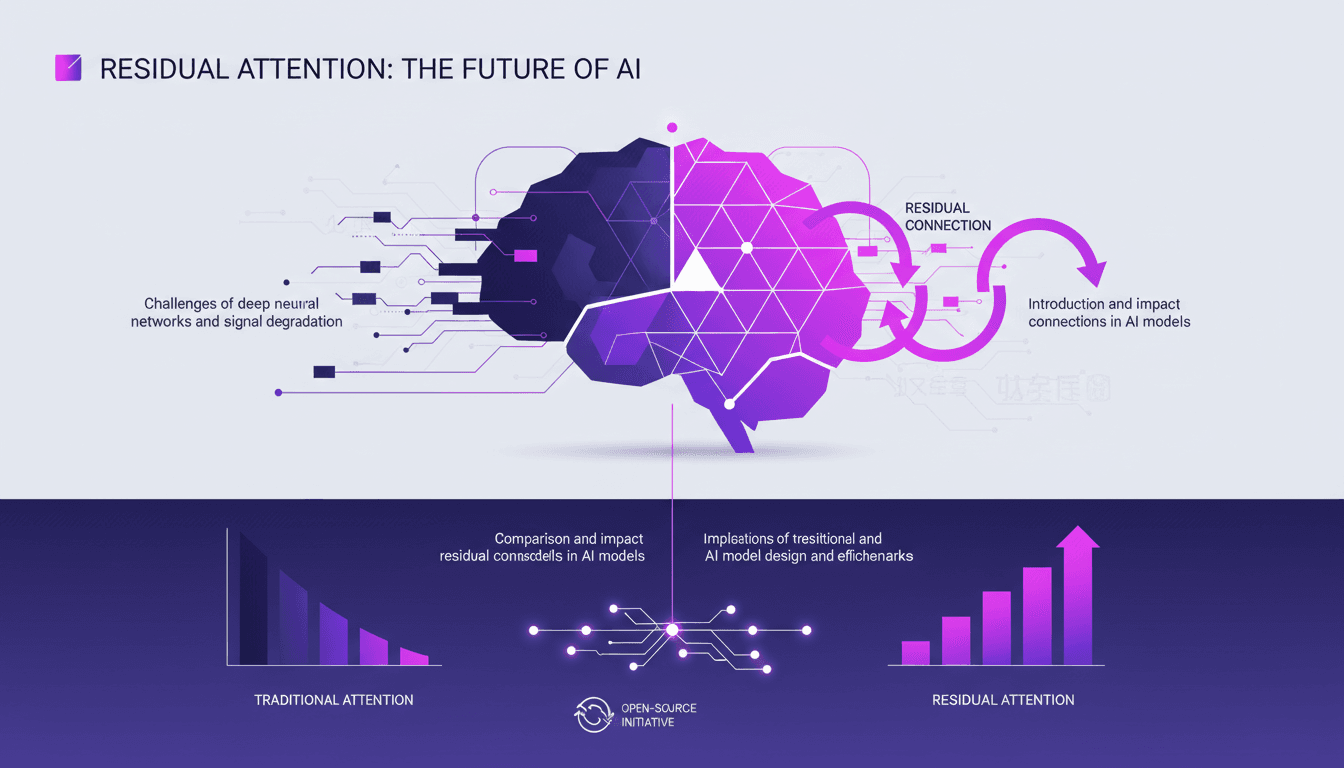

Understanding Residual Connections and Signal Degradation

Residual connections have become essential for addressing signal degradation in deep learning models with over 100 layers. As a practitioner, I've often encountered this degradation problem, where the signal loses strength as it passes through multiple layers. It's like shouting a message down a long hallway: by the end, all you hear is a whisper. Fortunately, these connections allow models to bypass certain layers, mitigating the vanishing gradient problem.
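To make the hallway analogy concrete, here is a minimal sketch in plain Python. It is a toy, not a real network: the layer sizes, the weight scale, and the helper names (`make_stack`, `run_stack`) are all assumptions chosen for illustration. It pushes the same input through 100 small random layers twice, once without and once with a skip path, and prints how much signal is left.

```python
import random

def make_stack(depth, width, scale, seed=0):
    """Build `depth` random width x width weight matrices (toy values)."""
    rng = random.Random(seed)
    return [
        [[rng.gauss(0.0, scale) for _ in range(width)] for _ in range(width)]
        for _ in range(depth)
    ]

def layer(x, weights):
    """One toy layer: a linear map followed by ReLU."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]

def norm(x):
    """Euclidean length of the signal vector."""
    return sum(v * v for v in x) ** 0.5

def run_stack(x, stack, residual):
    """Pass x through every layer, optionally adding the skip path."""
    for weights in stack:
        out = layer(x, weights)
        # Residual connection: the input is added back to the layer output.
        x = [a + b for a, b in zip(x, out)] if residual else out
    return x

x0 = [1.0] * 8
stack = make_stack(depth=100, width=8, scale=0.05)
plain = run_stack(x0, stack, residual=False)
skipped = run_stack(x0, stack, residual=True)

print("input norm:         ", norm(x0))
print("plain stack norm:   ", norm(plain))
print("residual stack norm:", norm(skipped))
```

With these small weights the plain stack shrinks the signal at every layer until almost nothing is left, while the residual stack can never fall below the input here, because each layer only adds a non-negative ReLU output on top of what came in.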

Residual connections were introduced in 2015, and until residual attention came along, AI models were built on that foundation, which had gone untouched for ten years. Residual connections allowed AI models to expand from a few layers to hundreds, enabling more complex and abstract thinking. But watch out: even with this advancement, don't pile on additional layers without a good reason.

The Role of Residual Attention in Modern AI Models

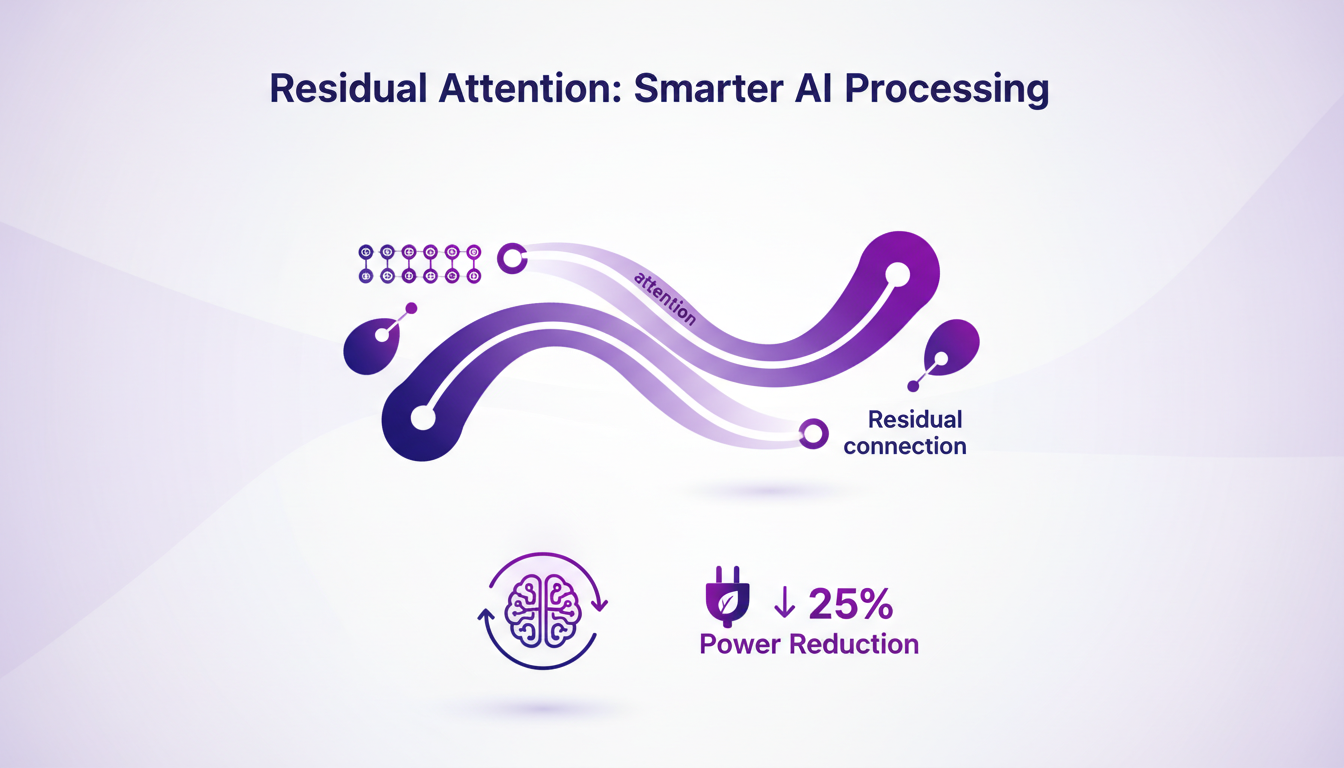

By combining the attention mechanism with residual connections, residual attention optimizes processing by reducing unnecessary computations. I've implemented this in several projects, and it truly is a game changer. Models with residual attention consume 25% less power, a statistic that speaks volumes when considering energy costs.
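The article does not spell out the exact formula, so take the following as one hypothetical reading of "attention plus residual connections", not the published method. A classic residual stream adds up every earlier layer's output with a fixed weight of 1. The sketch below contrasts that with a variant where a query vector scores each earlier output and a softmax turns those scores into mixing weights. The names `standard_residual`, `attention_residual`, `history`, and `query` are all made up for this example.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def standard_residual(history):
    """Classic residual stream: every earlier output is summed with weight 1."""
    width = len(history[0])
    return [sum(h[i] for h in history) for i in range(width)]

def attention_residual(history, query):
    """Hypothetical variant: mix earlier outputs with softmax(query . output)."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, h) / scale for h in history])
    width = len(history[0])
    mixed = [sum(w * h[i] for w, h in zip(weights, history)) for i in range(width)]
    return mixed, weights

# Outputs of three earlier layers (toy values) and a toy query vector.
history = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]

mixed, weights = attention_residual(history, query)
print("fixed sum:        ", standard_residual(history))
print("attention weights:", [round(w, 3) for w in weights])
print("attention mix:    ", [round(v, 3) for v in mixed])
```

The design point is that the weights always sum to 1 and depend on the content, so earlier outputs that match the query get more say and the rest are turned down instead of being added in at full strength.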

This technique, now open-sourced, has encouraged a wave of innovation. Researchers can now contribute and refine these models, accelerating technological advancement. However, be cautious of the increased complexity in implementation that can arise.

Benchmarking Traditional vs. Residual Attention Models

Residual attention models outperform traditional models on key benchmarks such as GPQA Diamond and HumanEval. Efficiency gains are not just theoretical; they translate into tangible improvements in real-world applications.

There's potential for deeper yet narrower architectures, allowing for better handling of complex tasks. However, every silver lining has its cloud: implementation complexity can increase, and you need to be ready to manage these technical challenges.
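A quick back-of-the-envelope calculation shows why "deeper yet narrower" is a real trade and not a free lunch. The sketch below is simplified arithmetic under stated assumptions: it counts only the weights of square dense layers and ignores biases, attention projections, and embeddings. The depth and width values are illustrative and not taken from any real model.

```python
def dense_stack_params(depth: int, width: int) -> int:
    """Weight count for `depth` square dense layers of size width x width.

    Biases and every other component are ignored on purpose.
    """
    return depth * width * width

# Two toy configurations with the same weight budget.
wide_shallow = dense_stack_params(depth=12, width=1024)
narrow_deep = dense_stack_params(depth=48, width=512)

print(f"wide and shallow: {wide_shallow:,} weights")
print(f"narrow and deep:  {narrow_deep:,} weights")
```

Halving the width cuts each layer's weights by four, so the same budget buys four times the depth. That only pays off if the signal survives the extra layers, which is exactly the problem residual paths are meant to address.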

Open-Source Impact and Future Implications

The open-sourcing of residual attention has democratized access to cutting-edge AI technology. Chinese labs, which work under tight hardware constraints, are now turning those constraints into a spur for innovation, potentially leading to more sustainable AI development practices. I've witnessed how this can accelerate improvements through community-driven development.

This open sharing could transform how we approach AI projects, enabling collaboration and knowledge exchange on a scale never seen before. But remember, this requires robust code management and documentation infrastructures to avoid chaos.

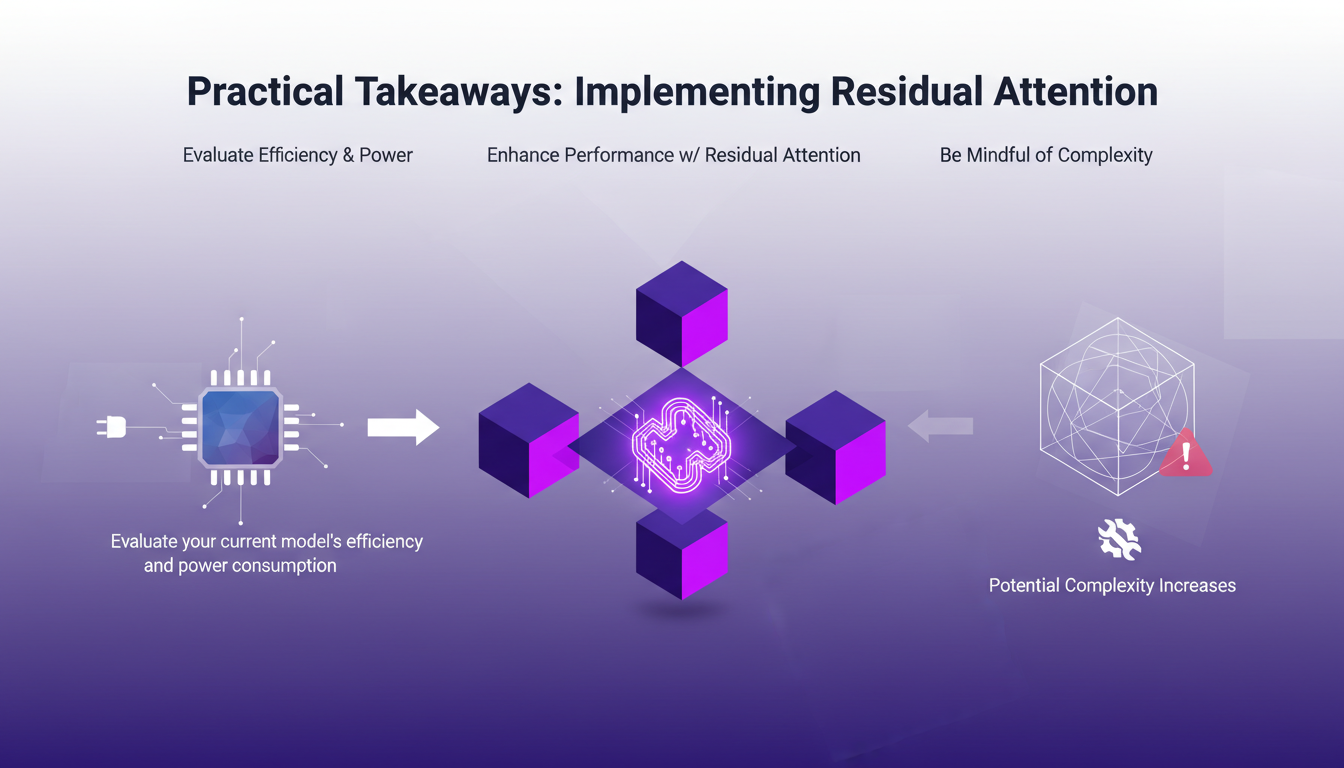

Practical Takeaways: Implementing Residual Attention

Before integrating residual attention into a project, first evaluate your current model's efficiency and power consumption. Integrating this technique can significantly enhance performance, but be aware of the potential increases in complexity in your workflow.

- Evaluate current model efficiency and power consumption.

- Integrate residual attention to boost performance.

- Be mindful of increased complexity in the workflow.

- Weigh the potential cost savings and efficiency gains.

This approach can lead to significant cost savings and efficiency gains, but requires careful management of resources and complexity.
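For the first step, evaluating where you stand today, a small timing harness is usually enough to get a baseline. The sketch below uses only the Python standard library. `toy_model` is a stand-in for your real forward pass, and `median_latency_ms` and `relative_change` are hypothetical helper names. It measures latency only; power draw needs hardware-level tooling that is outside the scope of this sketch.

```python
import statistics
import time

def median_latency_ms(fn, arg, warmup=3, runs=20):
    """Median wall-clock time of fn(arg) in milliseconds."""
    for _ in range(warmup):  # warm caches before measuring
        fn(arg)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(arg)
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)

def relative_change(baseline, candidate):
    """Fractional change; a negative value means the candidate is cheaper."""
    return (candidate - baseline) / baseline

def toy_model(n):
    """Stand-in workload; replace with your model's forward pass."""
    return sum(i * i for i in range(n))

baseline_ms = median_latency_ms(toy_model, 50_000)
print(f"baseline median latency: {baseline_ms:.3f} ms")

# Pure arithmetic example: a drop from 100 units to 75 units is -25%.
print(f"relative change: {relative_change(100.0, 75.0):+.0%}")
```

Run the same harness before and after the change, on the same machine and inputs, and compare the two medians with `relative_change` so the claimed gain is something you measured yourself.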

I dove into the world of AI models, and let me tell you, residual attention is a genuine game changer. First off, by integrating this technique, I've seen efficiency and performance gains without needing massive resources. Second, it ends a decade of stagnation in AI model architecture while solving signal degradation in deep networks. Believe me, with over 100 layers, that was a real headache! But watch out: it doesn't solve everything, and you need to understand how and when to apply it. Looking forward, I'm convinced residual attention will continue to transform our AI projects. I encourage you to explore the open-source resources available and consider how this approach could fit your own projects. For a deeper dive into the topic, check out the original video; it really opens your eyes to the impact of residual connections in AI models. Watch the original video here: https://www.youtube.com/watch?v=kmwSPZgkKVg

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).
