Post Hog Wizard: Boosting Agent Efficiency

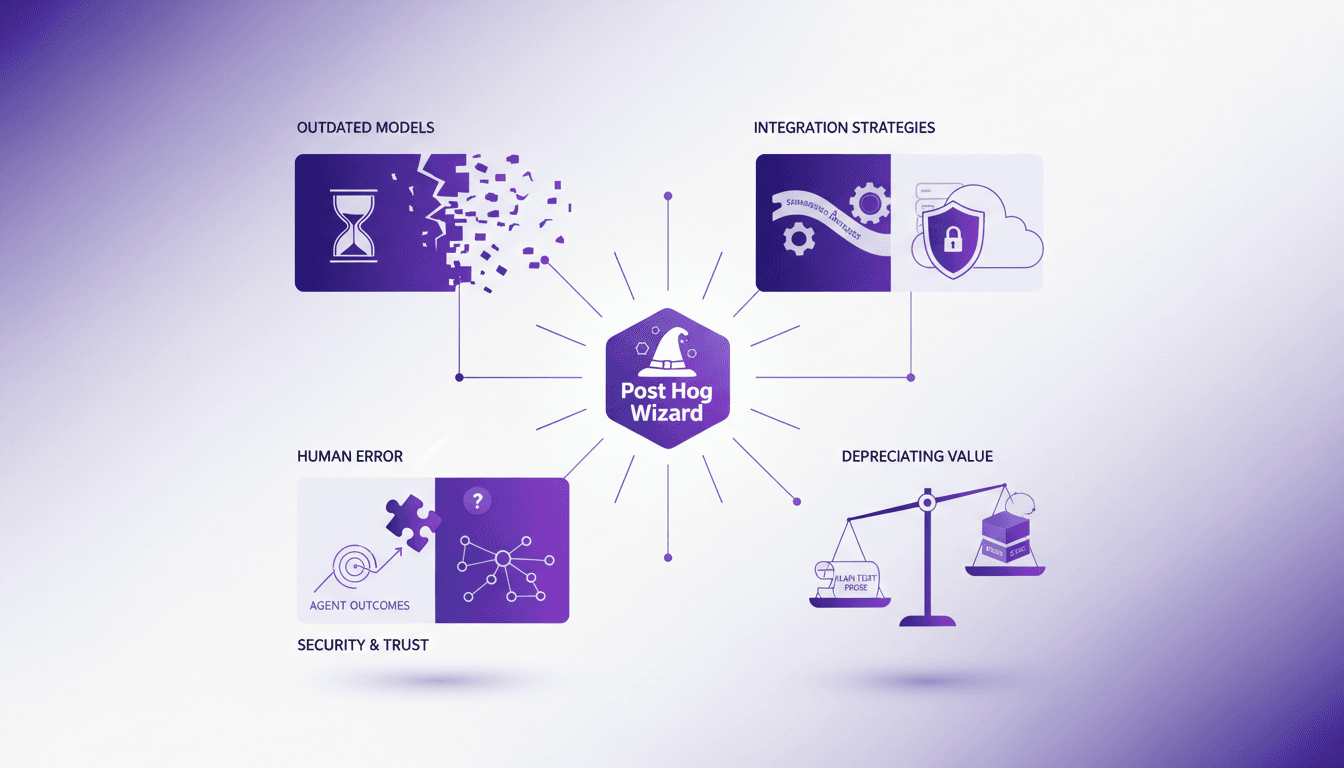

I still remember the first time I fired up Post Hog Wizard. When 15,000 people use a tool every month, it is doing something right. It has its quirks, though: I quickly found out that model rot and human error can turn a smooth integration into a real headache. So, how do I manage? I connect my systems, I orchestrate my data, and I make sure nothing gets lost along the way. In this talk, I'll share how I handle these challenges. We'll cover keeping integrations consistent, how human error affects agent outcomes, and the security you need when running agents on external machines. In short, I'll show you how to keep your code from becoming obsolete and your data from rotting.

Leveraging Post Hog Wizard: Real-World Benefits

Let's start with the Post Hog Wizard itself. I've seen firsthand how this tool can turn two hours of misery into eight minutes of productivity, roughly a 15x speedup. It's used by 15,000 people each month, and about 90% of its functionality is driven by markdown files, which keeps it lightweight yet powerful. User feedback on platforms like Bluesky and Twitter consistently highlights its positive impact.

The time savings are the most striking part. Think about all you can accomplish with those recovered hours. A tip: never underestimate how much markdown files can do to keep an agent lightweight and responsive.

- 15,000 monthly users prove its efficiency.

- Two hours of misery turned into eight minutes of productivity.

- About 90% of its functionality lives in markdown files, which keeps it lightweight.
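To make the markdown point concrete, here is a minimal sketch of how an agent's instructions could be assembled from a folder of markdown files. This is not the Wizard's actual implementation: the `load_instructions` helper, the file names, and the directory layout are all hypothetical and chosen only for illustration.

```python
from pathlib import Path
import tempfile


def load_instructions(directory: Path) -> str:
    """Concatenate every markdown file in `directory` into one prompt.

    Files are read in sorted name order so the result is deterministic.
    (Hypothetical helper, not part of any real tool.)
    """
    sections = []
    for path in sorted(directory.glob("*.md")):
        body = path.read_text(encoding="utf-8").strip()
        # Label each section with its source file so it can be traced later.
        sections.append(f"<!-- {path.name} -->\n{body}")
    return "\n\n".join(sections)


def build_demo_prompt() -> str:
    """Write two made-up instruction files to a temp folder and load them."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "01-setup.md").write_text(
            "# Setup\nInstall the SDK.\n", encoding="utf-8"
        )
        (root / "02-events.md").write_text(
            "# Events\nCapture a signup event.\n", encoding="utf-8"
        )
        return load_instructions(root)


if __name__ == "__main__":
    print(build_demo_prompt())
```

With this kind of layout, changing the agent's behavior means editing prose in a markdown file rather than shipping new code, which is the lightness the talk describes.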

Tackling Model Rot: Staying Ahead of Outdated Data

Model rot is very real. A model is a snapshot of the web as it was when its training data was collected, often months ago, so what it knows may already be out of date. To counter this, I recommend "breadcrumbing" the agent: keeping track of the logic and decisions it used so that each step can be reviewed later.

Keep fine-grained control over which tools the agent can use, and update regularly so the data stays relevant. Without this diligence, I've often seen models lose their usefulness quickly.

- Model rot: A model's knowledge can be 6 to 18 months old.

- Breadcrumbing: A method to keep logic clear.

- Fine-grained control: Essential to prevent obsolescence.
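Breadcrumbing and fine-grained tool control can be sketched together as a small gate that every tool call passes through. This is an assumption about how one might implement the idea, not the approach used in the talk: `ToolGate`, `Breadcrumb`, and the tool names below are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Breadcrumb:
    """One recorded decision: which tool was requested, why, and the outcome."""
    step: int
    tool: str
    reason: str
    allowed: bool


@dataclass
class ToolGate:
    """Runs only allowlisted tools and records a breadcrumb for every request."""
    tools: Dict[str, Callable[[str], str]]
    allowed: Set[str]
    trail: List[Breadcrumb] = field(default_factory=list)

    def call(self, tool: str, arg: str, reason: str) -> str:
        ok = tool in self.allowed and tool in self.tools
        # Record the request whether or not it is permitted.
        self.trail.append(Breadcrumb(len(self.trail) + 1, tool, reason, ok))
        if not ok:
            return f"refused: {tool} is not available"
        return self.tools[tool](arg)


def run_demo() -> ToolGate:
    """Simulate a run with one permitted and one refused tool call."""
    gate = ToolGate(
        tools={
            "read_file": lambda path: f"contents of {path}",
            "shell": lambda cmd: f"ran {cmd}",
        },
        allowed={"read_file"},  # "shell" exists but is deliberately not allowed
    )
    gate.call("read_file", "package.json", "find the framework version")
    gate.call("shell", "npm install", "install the SDK")
    return gate


if __name__ == "__main__":
    for crumb in run_demo().trail:
        print(crumb)
```

The trail doubles as a review log: refused calls show where the agent wanted more than it was given, and the recorded reasons show the logic behind each step.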

Maintaining Consistent Integrations: The 'Model Airplane' Approach

I often compare integrations to model airplanes: precision is key. A consistent integration prevents disruptions in workflows. A regular maintenance schedule lets you spot potential issues early, and the integration has to keep adapting as new data and requirements emerge.

I've learned the hard way that neglecting this approach can lead to strange architectures and unpredictable paths through the problem space. Keep your integrations precise and under control.

- Precision is key: To prevent disruptions.

- Maintenance schedule: To identify issues early.

- Continuous adaptation: In the face of new data and requirements.

Human Error and Security: Minimizing Risks

Human error is inevitable, but post-run interrogations help minimize it. Security concerns also arise when running agents on external machines, so strict protocols are needed to keep data safe.

I've seen agents fail because of human error, which is why balancing trust in automation with human oversight is vital. I recommend interrogating the agent after each run to detect critical issues such as missing tools or contradictory directives.

- Human error: A significant threat to agent performance.

- Data security: Requires strict protocols.

- Post-run interrogations: To reveal critical issues.
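A post-run interrogation can be as simple as a fixed set of questions sent to the agent once it finishes, plus anything the run log already reveals. The sketch below assumes you have a list of tools the agent requested and a list of tools it actually had. The question wording and the `build_interrogation` helper are hypothetical, not taken from the talk.

```python
from typing import List

# Illustrative questions only; adapt the wording to your own agent.
INTERROGATION_QUESTIONS = [
    "Which tools did you want but not have?",
    "Which instructions contradicted each other?",
    "Where did you have to guess?",
]


def missing_tools(requested: List[str], available: List[str]) -> List[str]:
    """Return requested tools that were unavailable, first-seen order, no repeats."""
    have = set(available)
    gaps: List[str] = []
    for name in requested:
        if name not in have and name not in gaps:
            gaps.append(name)
    return gaps


def build_interrogation(requested: List[str], available: List[str]) -> str:
    """Build the follow-up prompt to send to the agent after a run."""
    lines = ["The run is over. Please answer plainly:"]
    lines += [f"- {question}" for question in INTERROGATION_QUESTIONS]
    gaps = missing_tools(requested, available)
    if gaps:
        lines.append(
            "- You requested these unavailable tools: "
            + ", ".join(gaps)
            + ". What did you need them for?"
        )
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_interrogation(["read_file", "shell", "shell"], ["read_file"]))
```

The answers are for a human to read: a missing tool or a contradictory directive is usually a mistake in the instructions, which is exactly the kind of human error this step is meant to surface.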

The Shift from Code to Plain Text Prose: A New Paradigm

As code depreciates in value, clear documentation matters more. Plain text prose offers flexibility and accessibility, and markdown files are a good example of this shift: simple and efficient. Balancing code with prose gives you both technical depth and user-friendliness.

Every time I've faced an aging codebase, I've seen the importance of well-done documentation. It allows for rapid adaptation and maintains the value of a project even as the code becomes obsolete.

- Code: A depreciating asset whose value diminishes over time.

- Plain text prose: Enhances adaptability and performance.

- Balance between code and prose: Ensures technical depth and user-friendliness.

Getting the most out of Post Hog Wizard means balancing precision, adaptation, and foresight. First, I tackle model rot head-on, because it's crucial to keep our data relevant and fresh. Then, I make sure my integrations are consistent; without that, it's a disaster waiting to happen. And finally, I balance code with prose to keep human error at bay, rather than letting the prose become a source of it.

- Each month, 15,000 users run the Post Hog Wizard, which is a strong indicator of its impact.

- In the last six hours, there were two unprompted positive posts: proof it works!

- I've turned two hours of misery into eight minutes of pseudo entertainment, and that's something.

It's a real game changer for data orchestration, but watch out for outdated models and human error. Ready to optimize your data orchestration? Dive into Post Hog Wizard and transform your workflows today. Watch Danilo Campos' original video on YouTube for even deeper insights. See you there?

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

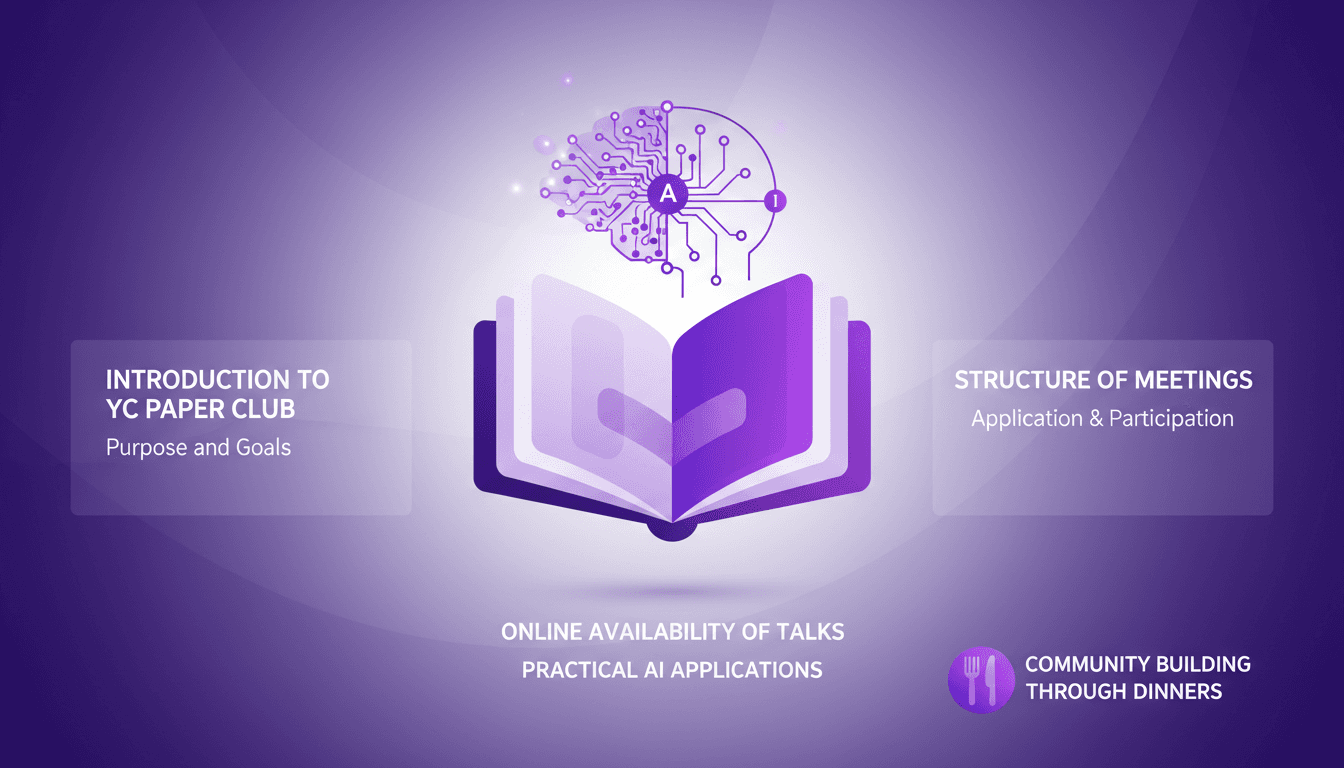

YC Paper Club: Goals and Structure

I joined YC Paper Club last year, and honestly, it's been a game changer for how I understand AI research. Picture a group where we dive deep into AI research papers and discuss practical applications. This isn't just another AI meetup. If you're serious about AI, this is where you need to be. The club keeps it under 100 people, so you really get to build strong connections (and the dinners help). Talks are available online, making it accessible to everyone. But be warned, it's intense — be ready to dig deep into the material and share your insights. It's a space where we build, not just observe.

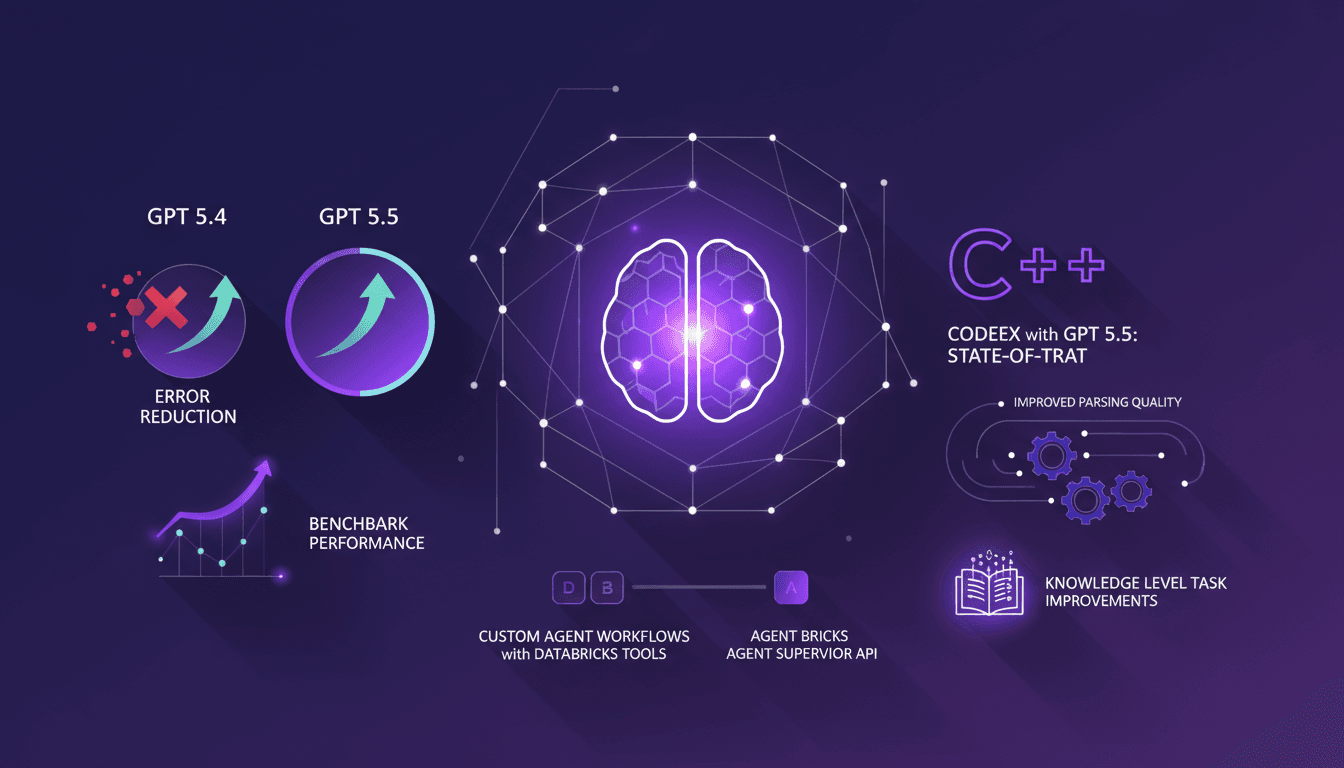

Error Reduction in GPT-5.5 with Databricks

I dove into GPT-5.5 with Databricks, and let me tell you, the improvements are not just theoretical. After integrating it into my workflows, I saw a 46% error reduction compared to 5.4. The performance boost, especially with the Agent Supervisor API, is impressive. Parsing quality and task performance have clearly upped their game. Needless to say, my custom agents, with Databricks tools, are now more efficient. But watch out, it's not all perfect; you need to handle these new tools with care to avoid pitfalls. This update, I must admit, has directly impacted my projects, and I'm not stopping here.

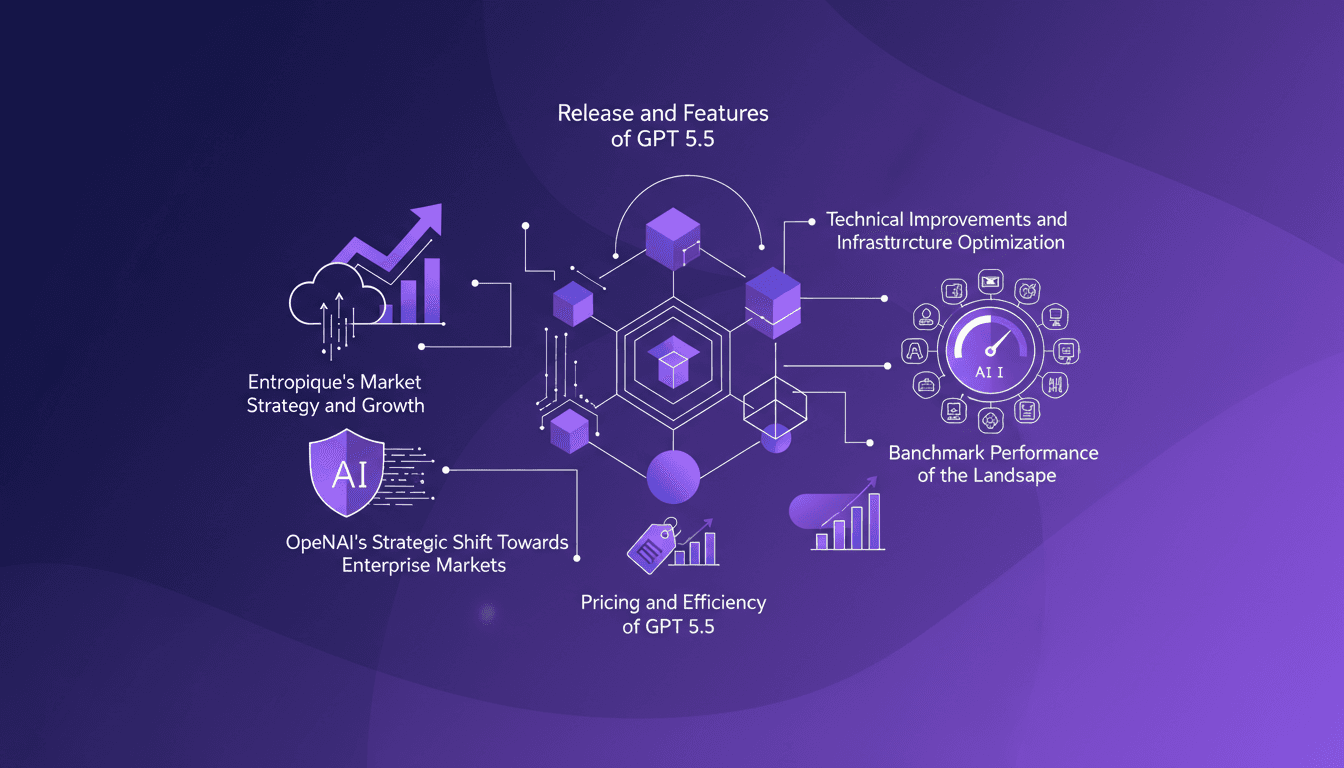

GPT 5.5: Token Speed and Enterprise Strategy

I dove into GPT 5.5 the moment it dropped, and let me tell you, the 20% token speed boost isn't just a number—it's a game changer for real-time applications. But there's more under the hood than just speed. Released on April 23, 2026, this model marks a rapid evolution in OpenAI's offerings. This isn't just about new features; it's a strategic pivot towards enterprise solutions, optimizing infrastructure, and redefining efficiency. We'll explore the release of GPT 5.5, Entropique's market strategy, the impact of Cloud Code on the coding landscape, and how OpenAI is reshaping its approach to conquer the enterprise markets.

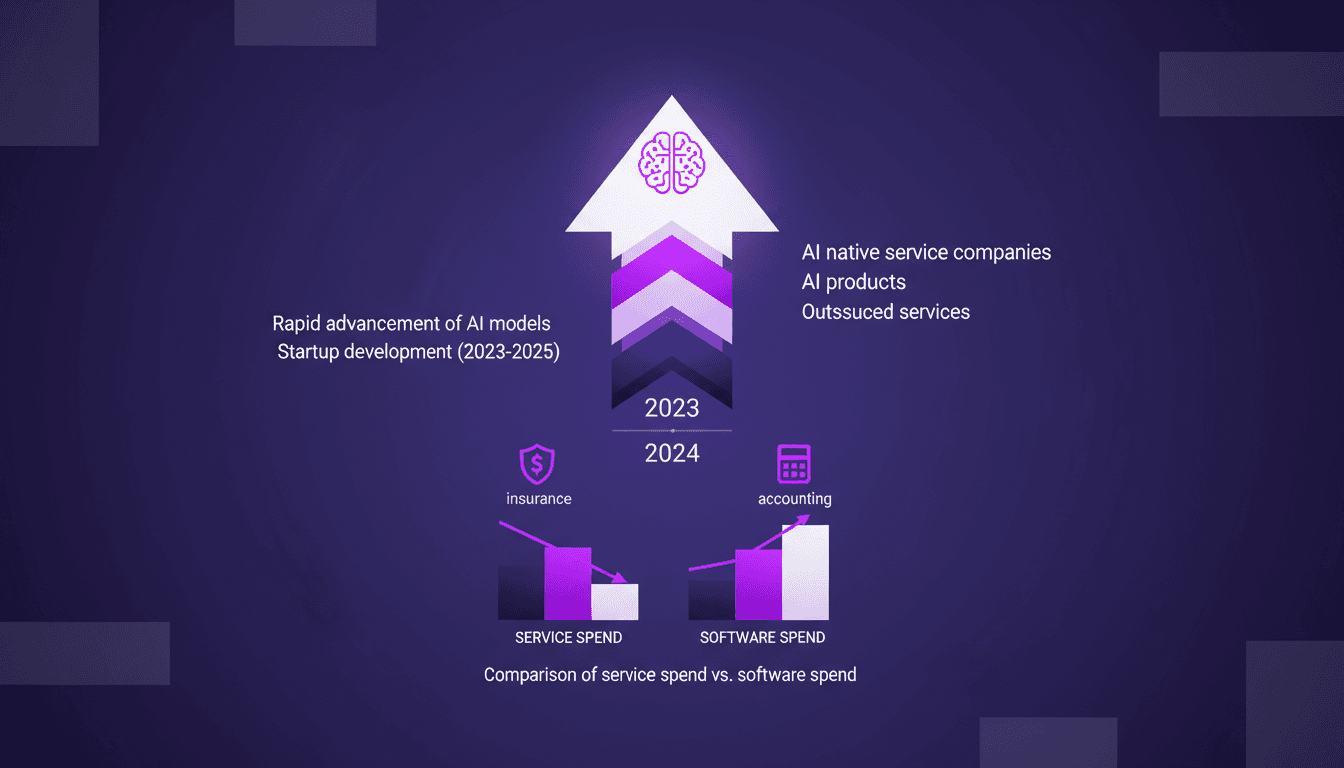

AI Native Services: Revolutionizing Industries

I've been knee-deep in AI for years, watching tools evolve into full-fledged AI native services. This isn't just a trend—it's a revolution. With AI models advancing at breakneck speed, we're witnessing a shift from traditional software tools to AI-native services. These aren't just buzzwords—real companies are emerging that leverage AI to replace entire service sectors. Industries like insurance and accounting are already feeling the impact. Let me walk you through how this unfolds and why it's a game changer. It's not just hype, it's happening.

Teaching AI to Close: 6 Months of Insights

I spent six months training an AI to close deals—230 real estate investors and wholesalers later, I learned that AI's edge isn't speed, but its lack of ego. This journey reshaped my understanding of sales, challenging traditional training methods. In a field that's been taught the same way for a century, AI is changing the game. Let's dive into how AI can optimize sales processes and redefine how we approach prospects. Topics include AI's role in sales, misconceptions in traditional sales training, the importance of diagnosing prospects, and the future of sales with AI. Get ready for a deep dive into the future of sales, where AI might just become your best ally.