GPT 5.4: Context Revolution with 1 Million Tokens

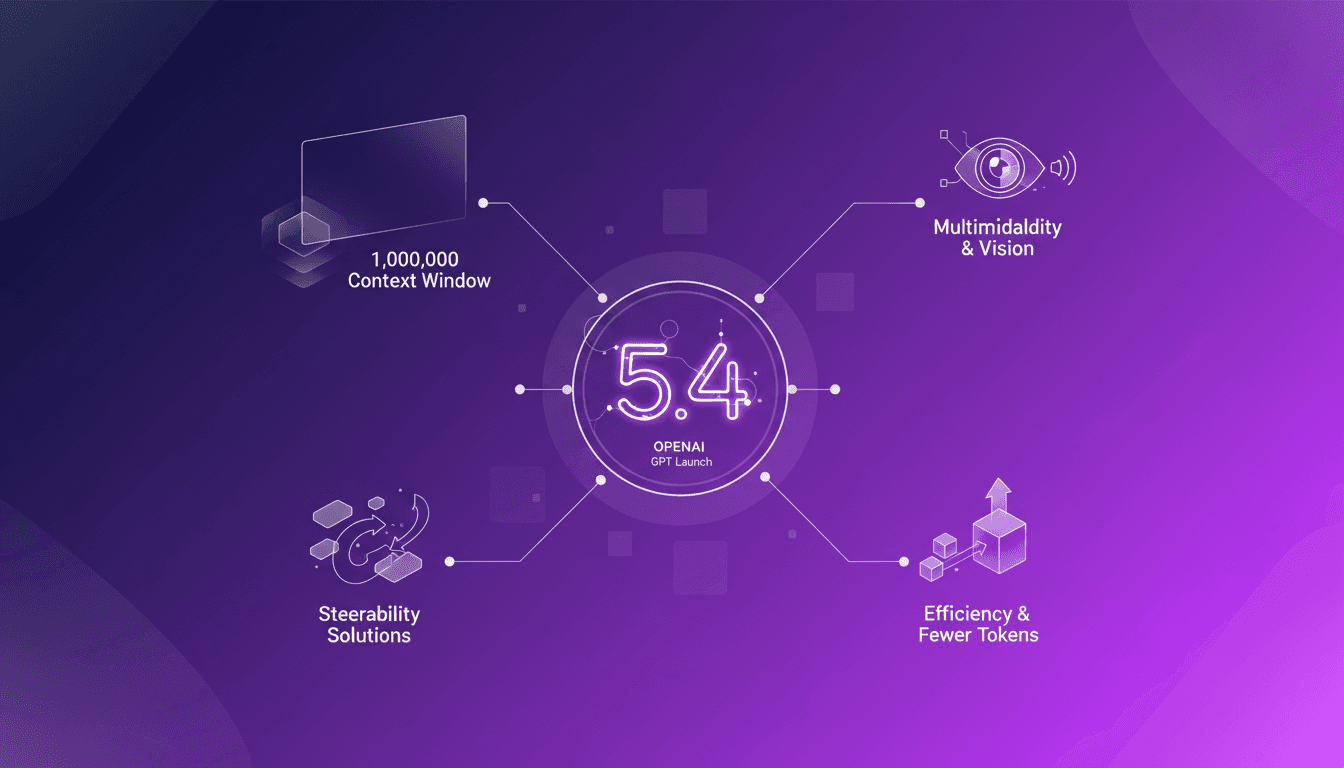

I've been in the trenches with AI models for years, and the launch of GPT 5.4 stands out. The model promises a 1 million token context window, stronger multimodal capabilities, and progress on the notorious steerability problem. Before you dive in headfirst, let's break down what this means for us builders. Imagine orchestrating a project where context is no longer a crushing limit and where vision and text blend seamlessly. GPT 5.4 isn't just a minor update; it's a substantial rework. But watch out for the usual pitfall: don't load your project with promises before you understand the constraints. Let's explore these new features and see how they hold up in real-world applications.

Start with the 1 million token context window. That is a lot of room: no more chopping your data into ridiculous pieces just to make it fit. Then there are the enhanced multimodal capabilities. I've hit frustrating limits in projects where text and images needed to talk to each other, and GPT 5.4 promises to smooth those obstacles. But watch out: steerability is a step forward, not a magic wand, and I've been burned by relying too heavily on it. Finally, getting the same work done with fewer tokens is enticing, but it requires careful management. Let's see how these advancements fit into our daily workflows.

Harnessing the 1 Million Context Window

I kept hitting context limits with previous models, so the 1 million token context window in GPT 5.4 makes a real difference. For complex projects spanning multiple documents, the extended window lets you include more source material without chopping it down or simplifying it.

But watch out: more context means more processing. I noticed that very long prompts slow things down if you're not careful. Sometimes it's faster to break a task into parts, especially when resources are tight.

- Practical applications: Managing multi-document projects.

- Limitations: Increased processing load.

- Efficiency gains: Less need for data simplification.
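
The trade-off between sending everything at once and breaking the task into parts can be sketched as a simple budgeting step. This is a minimal illustration, not an official recipe: `estimate_tokens`, `plan_batches`, and the 4-characters-per-token ratio are assumptions made for the example, and a real project would count tokens with the model's own tokenizer.

```python
from typing import List

# Assumption: roughly 4 characters per token for English text.
# This is a rule of thumb, not an exact tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate a token count from character length."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def plan_batches(documents: List[str], budget_tokens: int) -> List[List[int]]:
    """Group document indices into batches that each fit the token budget.

    Documents stay whole and in order. A single document larger than the
    budget ends up alone in its own batch so the caller can handle it.
    """
    batches: List[List[int]] = []
    current: List[int] = []
    used = 0
    for index, doc in enumerate(documents):
        cost = estimate_tokens(doc)
        # Close the current batch if adding this document would overflow it.
        if current and used + cost > budget_tokens:
            batches.append(current)
            current, used = [], 0
        current.append(index)
        used += cost
    if current:
        batches.append(current)
    return batches


# Three documents of about 100, 200, and 300 estimated tokens.
docs = ["a" * 400, "b" * 800, "c" * 1200]
print(plan_batches(docs, budget_tokens=1_000_000))
print(plan_batches(docs, budget_tokens=300))
```

With a large budget everything fits in one request; with a tight one, the same documents are split into several smaller calls.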

Exploring Multimodality and Vision Tasks

Multimodality is one of the most anticipated aspects of GPT 5.4. Imagine processing text and images together for richer analysis. I've used this in projects where visual analysis complements textual understanding.

However, there's a balance to maintain. More complexity can mean more processing time and resources consumed. Sometimes, it's not necessary to add a vision layer if text is enough.

- Real-world use cases: Document analysis with visual elements.

- Trade-offs: Increased processing time.

- Vision tasks: Enhanced interpretation of visual data.
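
One way to avoid paying for a vision pass when text is enough is a small routing check before the request is built. The sketch below is a hypothetical heuristic: the `Document` class, the `should_use_vision` function, and the keyword list are all invented for illustration and are not part of any GPT 5.4 API.

```python
import re
from dataclasses import dataclass, field
from typing import List


@dataclass
class Document:
    """Hypothetical container: extracted text plus paths to any images."""
    text: str
    image_paths: List[str] = field(default_factory=list)


# Assumption: these words suggest the text depends on a visual element.
VISUAL_HINT = re.compile(r"\b(fig(?:ure)?|chart|diagram|screenshot)\b", re.IGNORECASE)


def should_use_vision(doc: Document) -> bool:
    """Send images only if the document has some and the text refers to them."""
    return bool(doc.image_paths) and bool(VISUAL_HINT.search(doc.text))


report = Document("Sales by region are shown in Figure 2.", ["fig2.png"])
memo = Document("Meeting moved to Tuesday.", ["logo.png"])
print(should_use_vision(report), should_use_vision(memo))
```

A keyword check like this is crude, but it makes the decision explicit and easy to tune per project.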

Tackling the Steerability Problem

Steerability is crucial to avoid model drift. With GPT 5.4, OpenAI has made strides by allowing interruption and redirection of the model's thought process. It's handy when the model starts veering off track.

- Solutions: Interrupt and redirect the process.

- Real-life scenarios: Avoiding misdirection in analysis.

- Trade-offs: Balancing control and flexibility.
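
The interrupt-and-redirect idea can be approximated on the client side, independently of how the model exposes it. The sketch below assumes a streaming source and a drift check that you supply yourself; `generate_with_redirect`, `fake_stream`, and the other names are hypothetical stand-ins, and no real model is called.

```python
from typing import Callable, Iterable, Iterator, List


def generate_with_redirect(
    stream: Callable[[str], Iterable[str]],
    prompt: str,
    is_off_track: Callable[[str], bool],
    correction: str,
    max_redirects: int = 2,
) -> str:
    """Consume a streamed answer; stop and re-prompt when it drifts.

    Returns the first attempt that never triggers the drift check, or the
    last partial attempt if every retry drifts.
    """
    current_prompt = prompt
    pieces: List[str] = []
    for _ in range(max_redirects + 1):
        pieces = []
        drifted = False
        for chunk in stream(current_prompt):
            pieces.append(chunk)
            if is_off_track("".join(pieces)):
                drifted = True
                break  # interrupt: stop reading this attempt
        if not drifted:
            return "".join(pieces)
        # Redirect: restate the task with an explicit correction.
        current_prompt = f"{prompt}\n\n{correction}"
    return "".join(pieces)


def fake_stream(prompt: str) -> Iterator[str]:
    """Stand-in for a model stream; wanders unless told to stay on topic."""
    if "Stay on" in prompt:
        yield from ["Revenue ", "grew ", "12%."]
    else:
        yield from ["Revenue ", "grew, ", "and the weather ", "was nice."]


answer = generate_with_redirect(
    fake_stream,
    "Summarize the quarter.",
    is_off_track=lambda text: "weather" in text,
    correction="Stay on the financial figures only.",
)
print(answer)
```

The `max_redirects` cap is the control-versus-flexibility dial: a low value keeps costs predictable, a higher one gives the model more chances to recover.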

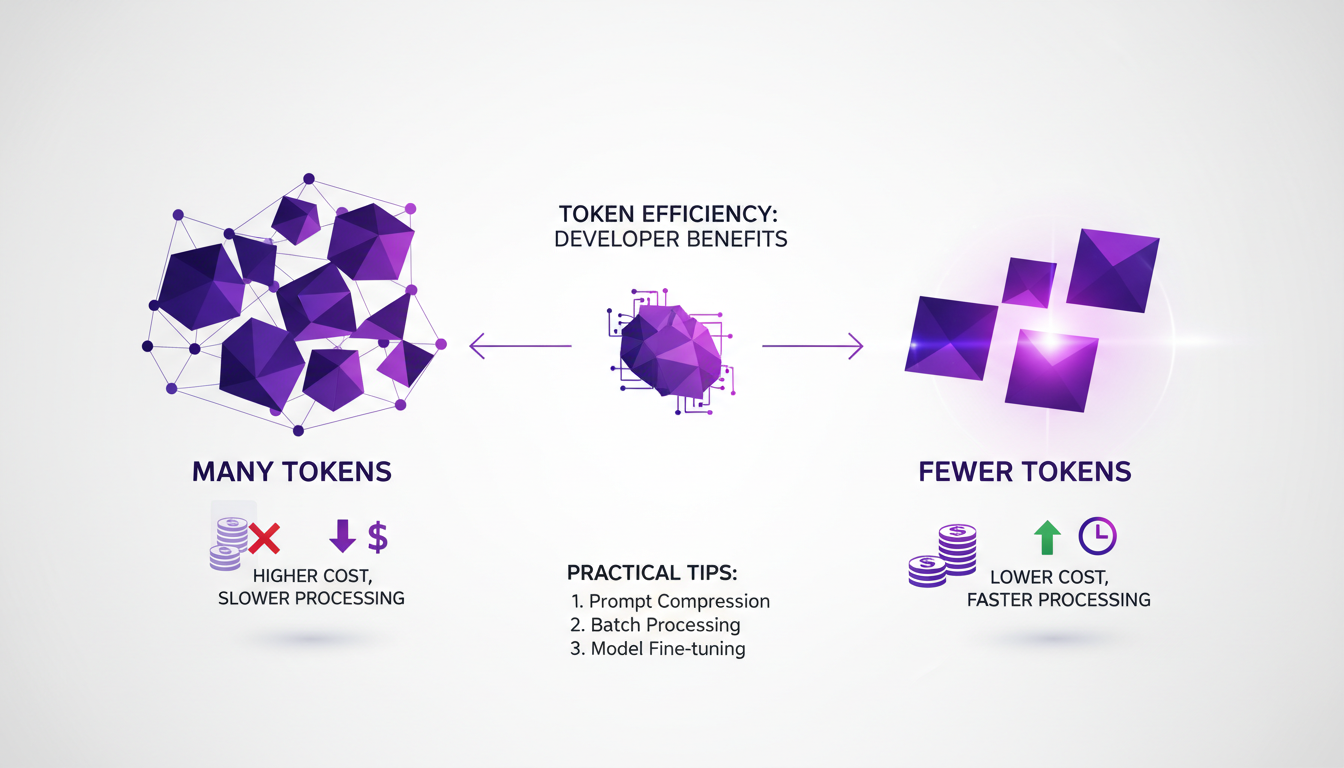

Efficiency Improvements with Fewer Tokens

One of the strengths of GPT 5.4 is its increased efficiency in token usage. Fewer tokens mean reduced costs and shorter processing times. I've optimized my projects by using more concise prompts and cutting unnecessary verbosity.

However, there are times when a lean prompt isn't enough, especially for very detailed projects. In such cases, a hybrid strategy might be necessary: concise prompts for routine steps, fuller context where the detail matters.

- Impact: Reduced costs and processing time.

- Practical tips: Use concise prompts.

- Limitations: May fall short on highly detailed projects.
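
To see how prompt length feeds into cost, a back-of-the-envelope calculation helps. The prices below are placeholders chosen for the arithmetic, not actual GPT 5.4 pricing, and `estimate_cost` is a helper written for this example.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost of one request, given per-million-token prices."""
    total = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    return total / 1_000_000


# Placeholder prices (currency units per million tokens), for illustration only.
INPUT_PRICE = 2.0
OUTPUT_PRICE = 8.0

verbose = estimate_cost(12_000, 800, INPUT_PRICE, OUTPUT_PRICE)
concise = estimate_cost(3_000, 800, INPUT_PRICE, OUTPUT_PRICE)
print(f"verbose prompt: {verbose:.4f}")
print(f"concise prompt: {concise:.4f}")
print(f"saved per call: {verbose - concise:.4f}")
```

The saving per call looks small, but it scales linearly with request volume, which is where concise prompts pay off.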

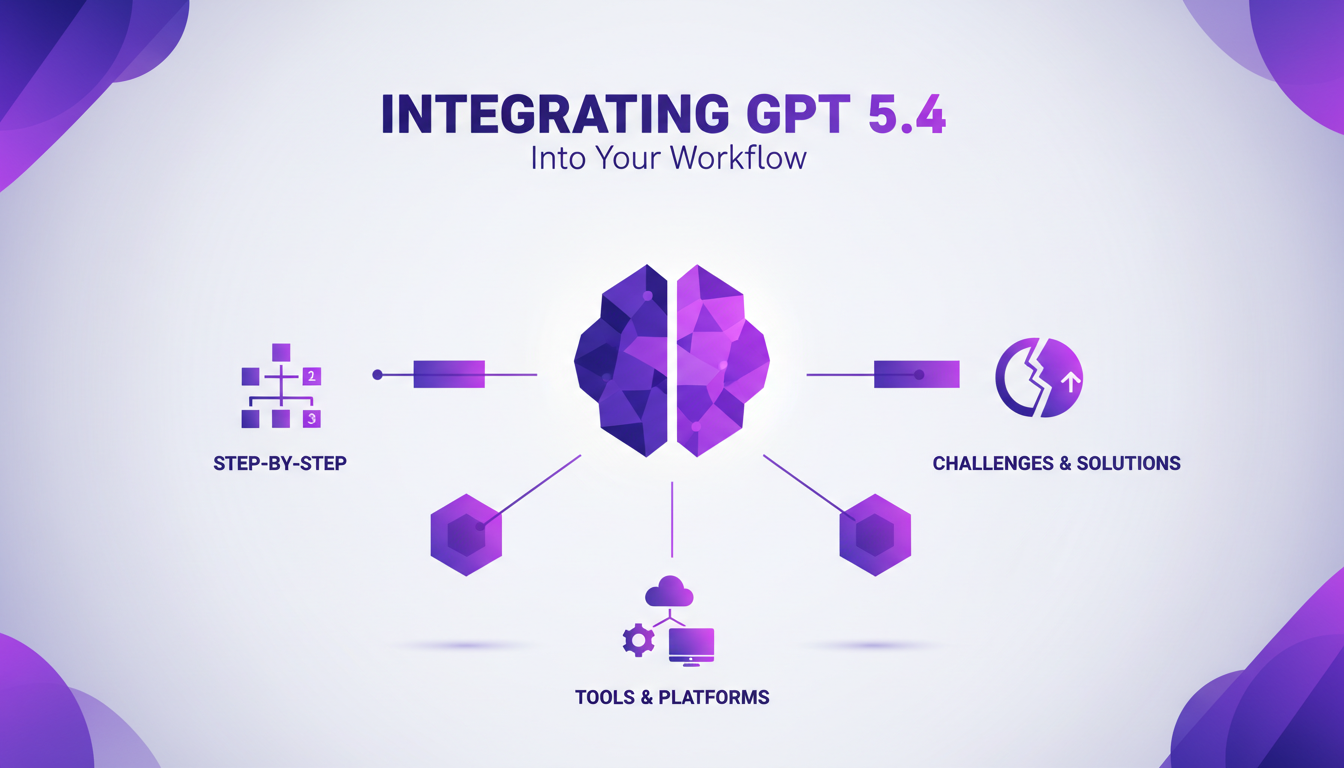

Integrating GPT 5.4 into Your Workflow

Integrating GPT 5.4 has been an adventure. I started by embedding it into my existing systems through custom APIs. Each step posed its own challenges, but the solutions I found have significantly improved my processes.

Looking ahead, I ensure my system is ready to evolve with the next AI advancements. This includes regularly assessing tools and updating my practices.

- Steps: Gradual integration into existing systems.

- Tools: Use of custom APIs.

- Challenges and solutions: Continuous process improvement.

- Future-proofing: System assessment and updates.
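
A thin adapter layer is one way to keep an integration gradual and easy to update when the next model arrives. The sketch below is an assumption about how such a layer could look, not a description of my actual setup: `TextModel`, `StubModel`, and `ReportService` are hypothetical names, and the stub stands in for a real vendor client.

```python
from typing import List, Protocol


class TextModel(Protocol):
    """Minimal interface the application depends on."""

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Stand-in backend for local tests; a real one would call a vendor API."""

    def __init__(self) -> None:
        self.prompts: List[str] = []

    def complete(self, prompt: str) -> str:
        self.prompts.append(prompt)  # record the call for inspection
        return "stub summary"


class ReportService:
    """Application code that only knows about the TextModel interface."""

    def __init__(self, model: TextModel) -> None:
        self._model = model

    def summarize(self, text: str) -> str:
        return self._model.complete(f"Summarize in one sentence:\n{text}")


backend = StubModel()
service = ReportService(backend)
print(service.summarize("Q3 revenue grew while costs stayed flat."))
```

Because `ReportService` depends only on the interface, swapping in a newer model means writing one new backend class rather than touching every call site.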

With GPT 5.4, we're stepping into a new era for AI applications. First, the model offers a 1 million token context window, which changes how much complex information you can handle at once. But watch out: you need to manage this capacity well to avoid skyrocketing resource costs. Next, the multimodality delivers: with text and images together, I can finally orchestrate richer experiences. And finally, steerability, the ability to direct the model, is better handled, but you still have to navigate carefully to avoid unexpected results.

Looking forward, GPT 5.4 promises to streamline our workflows with efficiency I hadn't seen before. Ready to integrate GPT 5.4 into your projects? Let's chat about maximizing its impact. I recommend watching the original video 'OpenAI drops GPT 4.5' for a deeper dive. It's worth it!

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

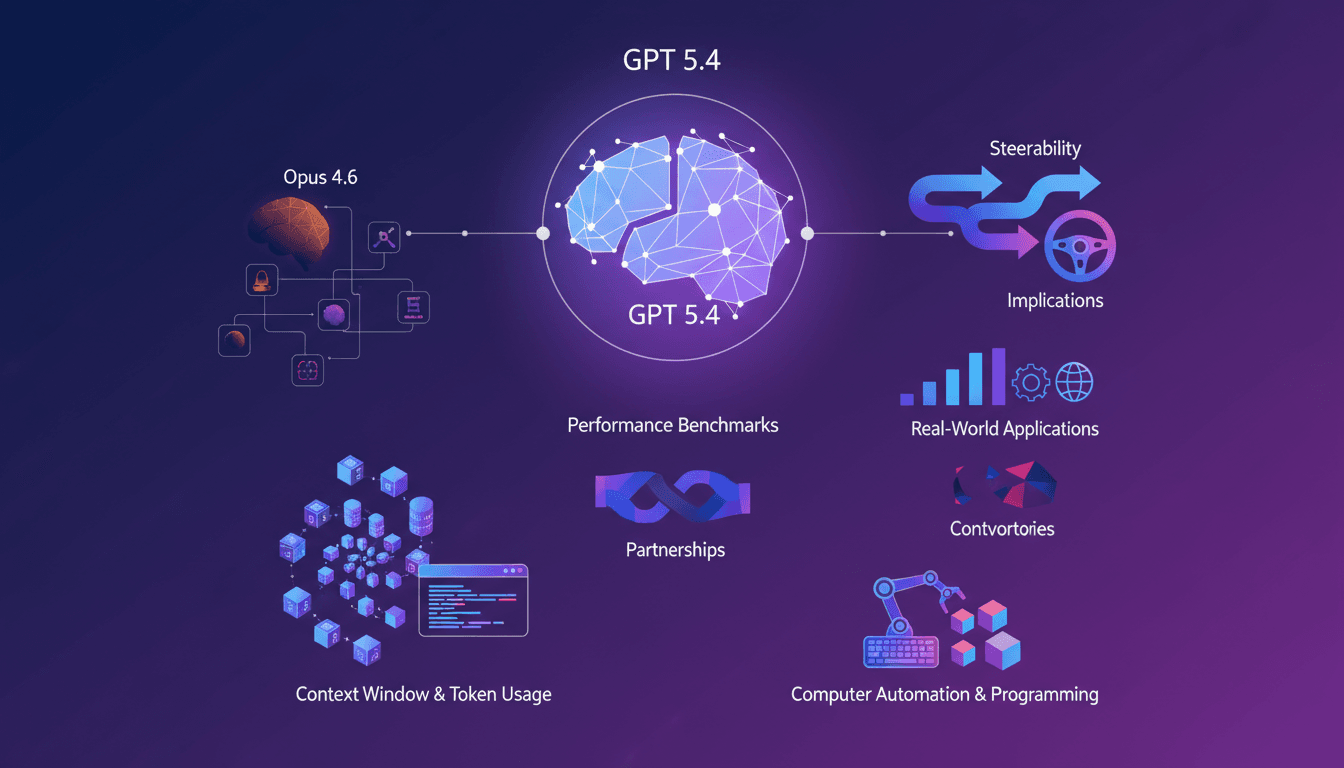

GPT 5.4 vs Opus 4.6: Killer or Just Hype?

I dove headfirst into GPT 5.4 to see if it could dethrone Opus 4.6. Having been burned by overhyped AI promises before, I wanted to separate the noise from the real game changers. GPT 5.4 boasts a massive context window of one million tokens and new steerability features. But is it truly a leap forward or just another iteration with flashy marketing? Let's compare it to Opus 4.6. GPT 5.4's performance in computer automation is impressive, with 90% accuracy. However, even with a score of 75% versus Opus 4.6's 72.7%, is that enough to claim victory? Let's dive into the technical advancements and real-world implications of these features.

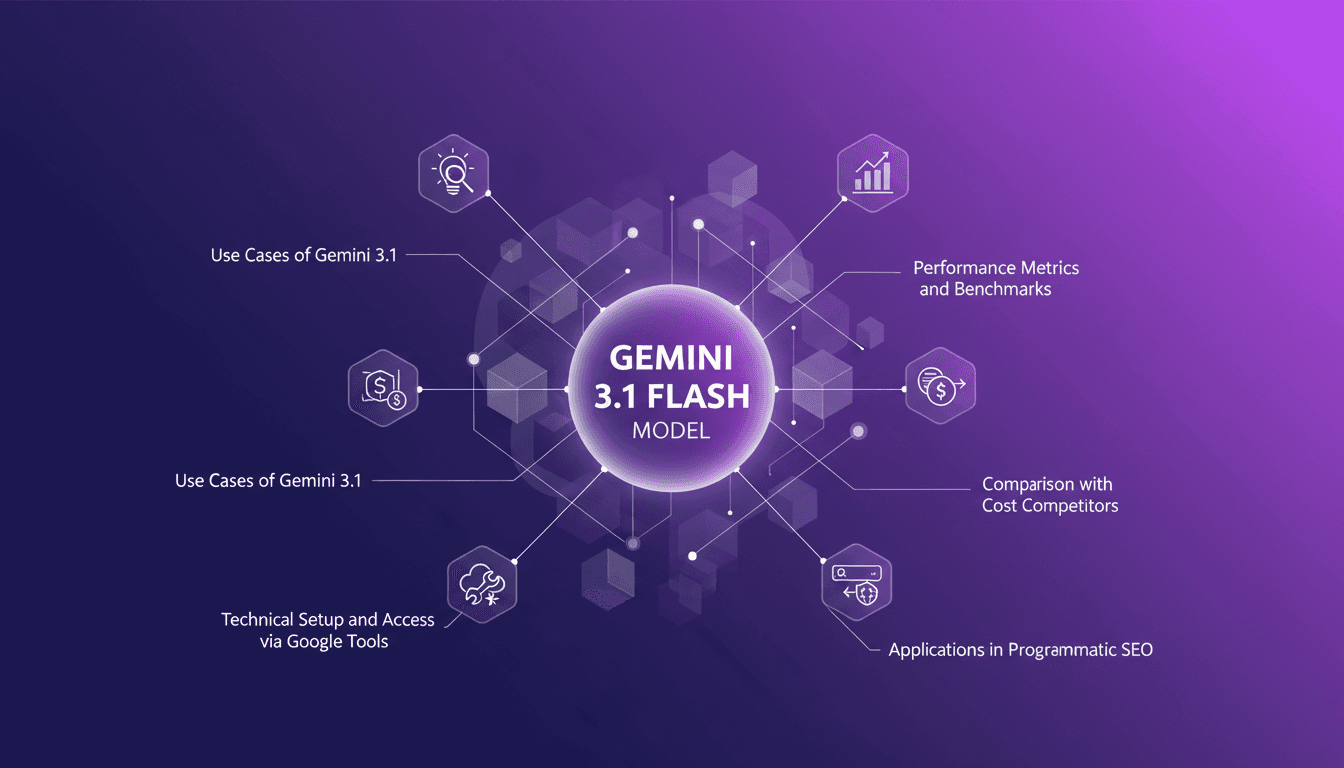

Mastering Gemini 3.1: Flash Lite in 14 Minutes

I dove headfirst into Gemini 3.1 Flash Lite, eager to see if it could truly revolutionize my workflow. Spoiler: It did, but not without a few hiccups along the way. Picture a model that can grasp multimodal data and optimize programmatic SEO in a flash. I tested five different use cases, and for a translation task, it took just one second. But watch out, setting it up with Google's tools isn’t exactly a walk in the park. I'll walk you through how I navigated it all, with candid comparisons to competitors and an eye on cost efficiency. If you're ready to supercharge your SEO, join me on this journey.

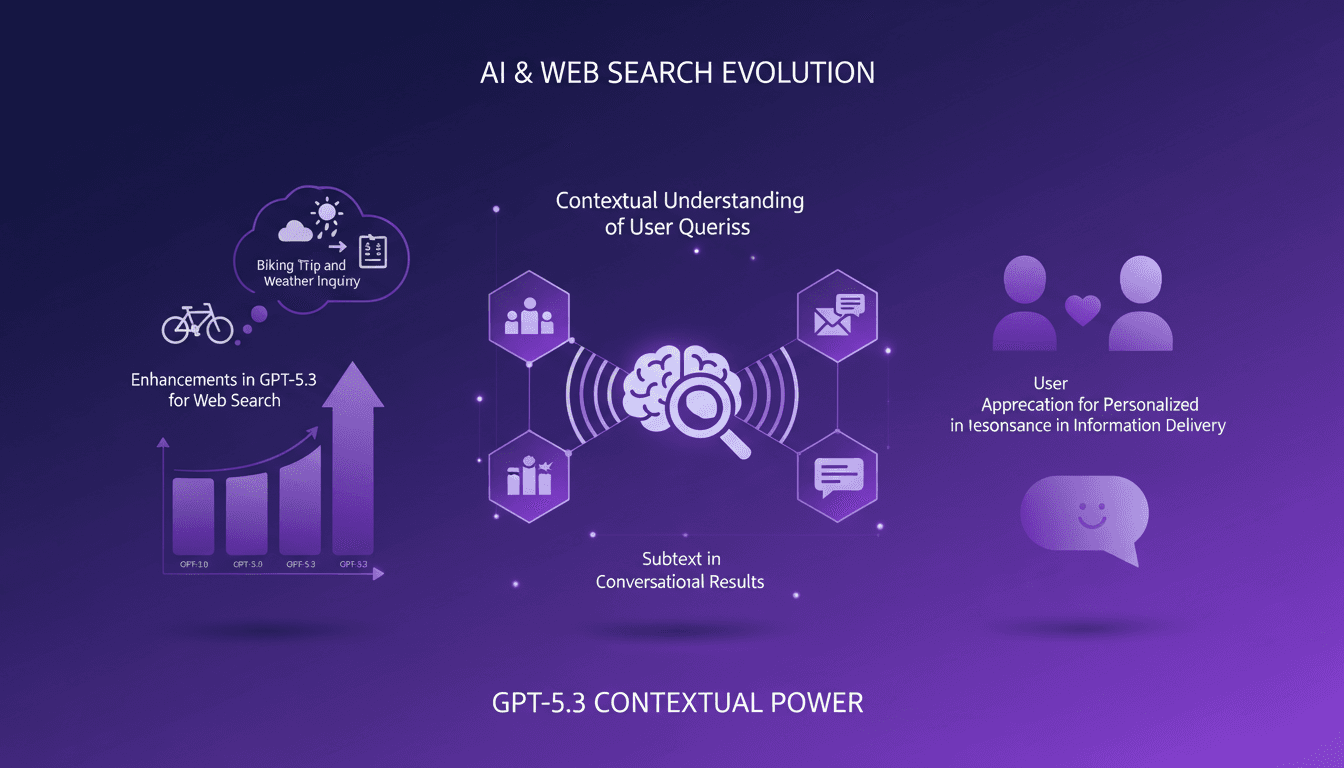

Boosting Web Search with GPT-5.3: Practical Guide

I've been tweaking search results for years, but integrating GPT-5.3 changed everything. With the latest enhancements, understanding user queries has become more nuanced. In this article, I walk you through how to leverage these advancements for better web search results. We'll dive into the importance of subtext, the enhancements in GPT-5.3, and how they make responses more natural and conversational. You'll see practical cases like planning a biking trip or understanding baseball rule changes. It's a powerful tool, but watch out for context limits—beyond 100K tokens, things get tricky. I'll share how I orchestrated these elements for direct user experience impact.

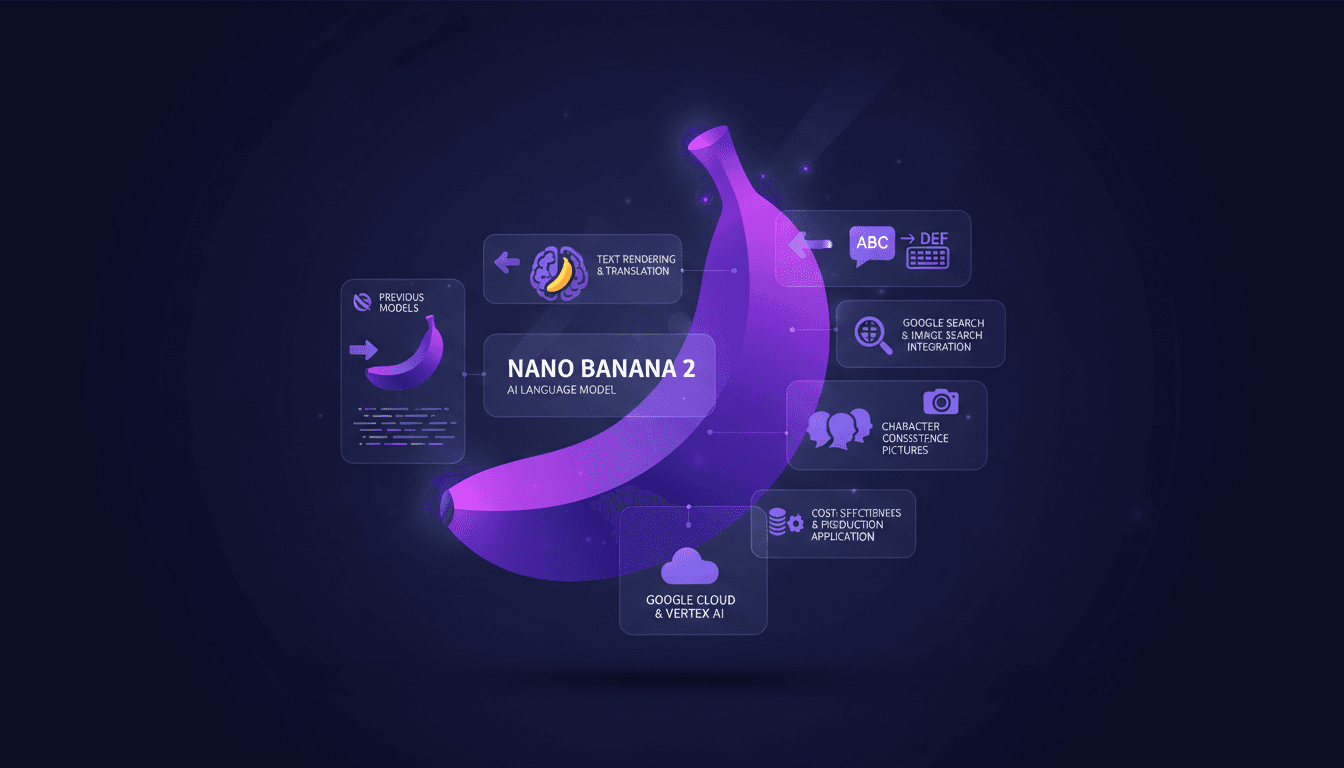

Nano Banana 2: Smaller, Faster, Cheaper

I've been in the trenches with image generation tools, and when Nano Banana 2 hit my workflow, it was a game changer. Smaller, faster, cheaper – it's not just marketing fluff. Let me walk you through how I've leveraged its capabilities to streamline my projects. With enhanced performance and cost-effectiveness, Nano Banana 2 transforms integration with tools like Google Cloud and Vertex AI. For those of us relying on precision and speed, understanding its integration is crucial.

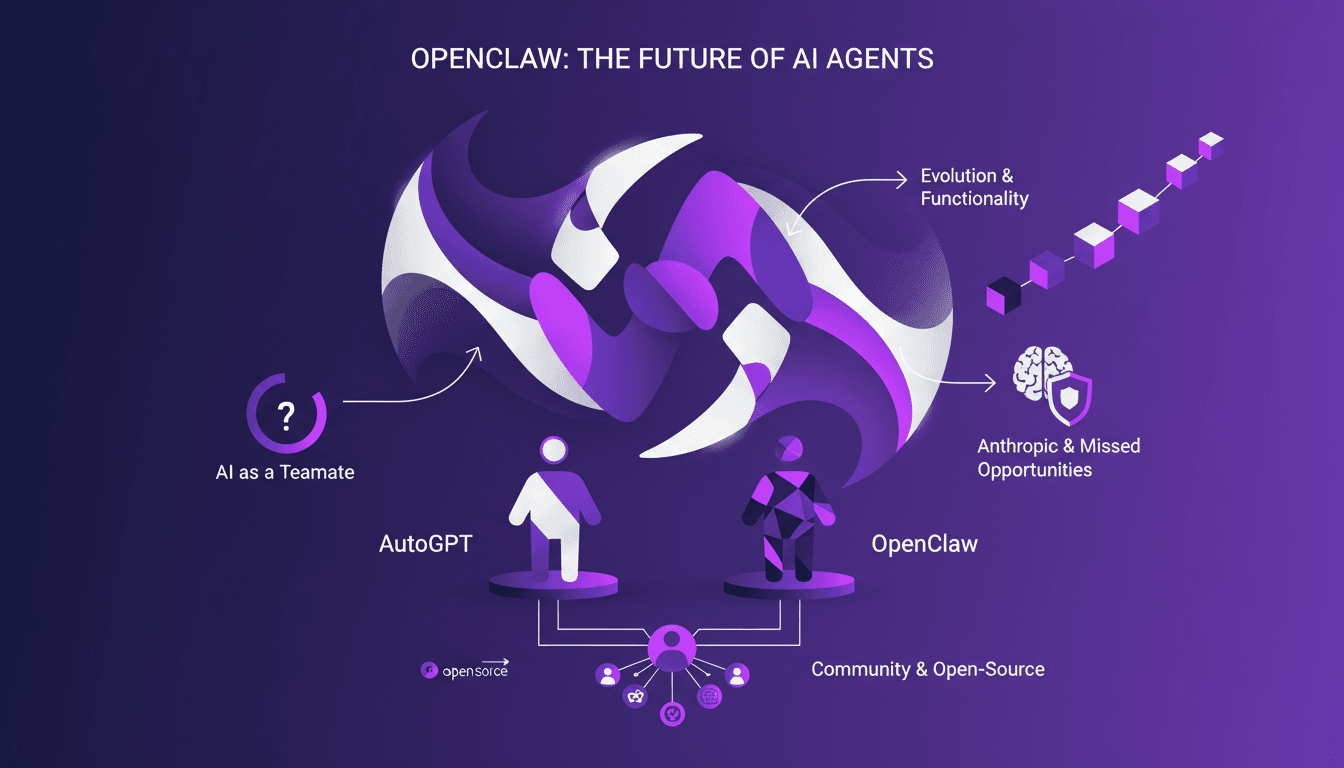

OpenAI Acquires OpenClaw: What It Means for AI

I was in the middle of orchestrating a multi-agent system when the news hit: OpenAI just bought OpenClaw. This isn't just another acquisition; it's a potential game changer for AI agents. OpenClaw, which evolved from Clawdbot to Moltbot, is set to redefine how we view AI as a teammate, not just a tool. With its persistent memory and sandbox environments, OpenClaw promises to transform our workflows. This acquisition could accelerate the integration of open-source AI agents and strengthen community collaboration. Let's dive into the details of what might be a pivotal moment for the future of AI agents.