Mastering Gemini 3.1 Flash Lite in 14 Minutes

I dove headfirst into Gemini 3.1 Flash Lite, eager to see if it could truly revolutionize my workflow. Spoiler: It did, but not without a few hiccups along the way. Picture a model that can grasp multimodal data and optimize programmatic SEO in a flash. I tested five different use cases, and for a translation task, it took just one second. But watch out, setting it up with Google's tools isn’t exactly a walk in the park. I'll walk you through how I navigated it all, with candid comparisons to competitors and an eye on cost efficiency. If you're ready to supercharge your SEO, join me on this journey.

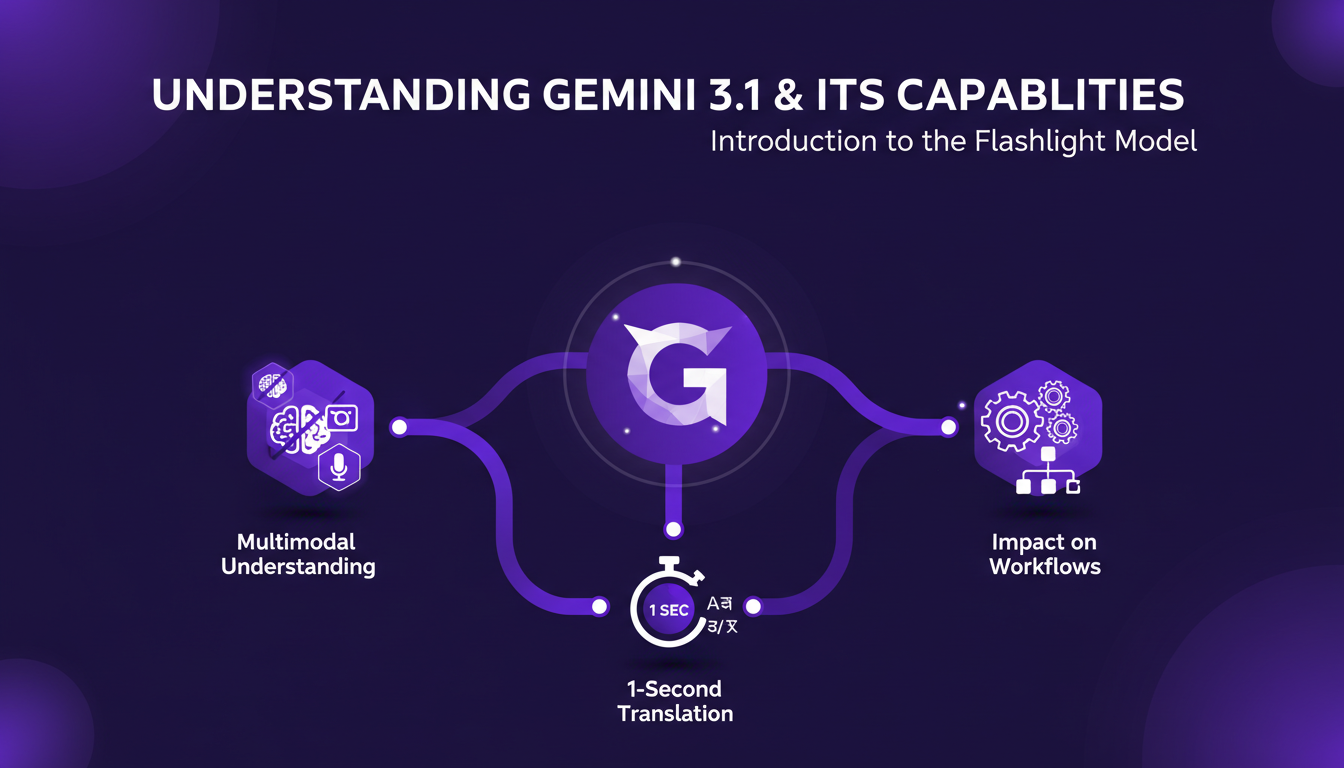

Understanding Gemini 3.1 and Its Capabilities

The Gemini 3.1 Flash Lite model is a true game-changer in the AI world. I've used it in my agency to streamline tedious workflows, and it has changed how we handle them. Thanks to its multimodal understanding, it gets through complex tasks quickly. Picture this: a complete translation in just one second! I now use it for multilingual projects, and the fluidity with which Gemini handles these tasks is impressive. The impact is direct: less time waiting for translations means more time for data analysis.

Multimodal understanding means Gemini can simultaneously process different types of data – text, audio, images – and derive concrete insights. This is particularly useful in processes that require rapid and precise analysis.

Key Takeaways:

- The Gemini 3.1 Flash Lite model is extremely fast and accurate.

- A translation task completed in about one second.

- Direct impact on workflow efficiency.

Performance Metrics and Efficiency

I tested the efficiency of the Gemini 3.1 model on a transcription task involving a 43-minute MP3 file. The result? A 5,274-word transcription completed in just 37 seconds. This is an incredible time saver, especially when dealing with loads of content. The model generates tokens at a speed of 363 tokens per second, which is crucial for real-time processes.

However, there's a trade-off between speed and accuracy. In some cases, the fast output contains minor errors, but overall, the benefits far outweigh the drawbacks.

Key Takeaways:

- 5,274-word transcription completed in 37 seconds for a 43-minute MP3.

- Generation speed of 363 tokens per second.

- Balance between speed and accuracy needed for certain tasks.
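
To put those figures in perspective, here is a small worked calculation. It uses only the numbers quoted above; the variable names are illustrative, and the last figure is simply the quoted generation rate multiplied by the run time, not a measured token count.

```python
# Figures reported in the transcription test above.
AUDIO_MINUTES = 43
WALL_CLOCK_SECONDS = 37
WORDS_TRANSCRIBED = 5_274
TOKENS_PER_SECOND = 363

audio_seconds = AUDIO_MINUTES * 60  # 2,580 seconds of audio

# Seconds of audio processed per second of wall-clock time.
real_time_factor = audio_seconds / WALL_CLOCK_SECONDS

# Transcript words produced per second of wall-clock time.
words_per_second = WORDS_TRANSCRIBED / WALL_CLOCK_SECONDS

# Tokens that would be produced if the quoted rate held for the whole run.
tokens_at_quoted_rate = TOKENS_PER_SECOND * WALL_CLOCK_SECONDS

print(f"Real-time factor: {real_time_factor:.1f}x")        # 69.7x
print(f"Words per second: {words_per_second:.1f}")         # 142.5
print(f"Tokens at quoted rate: {tokens_at_quoted_rate:,}") # 13,431
```

In other words, the file was transcribed roughly 70 times faster than it would take to listen to it.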

Model Routing and Cost Efficiency

One of the most impressive features of Gemini 3.1 is its model routing. This means it can choose the most appropriate model for each task based on cost and speed. This is a significant advantage for companies looking to optimize their resources. I've integrated this feature with Google's infrastructure, and while it requires some initial setup, the savings are well worth the effort.

However, there are pitfalls to avoid. For instance, not properly setting the parameters can lead to higher-than-expected costs. It's crucial to understand how model routing works to avoid costly mistakes.

Key Takeaways:

- Model routing to optimize cost and speed.

- Integration with Google's infrastructure for substantial savings.

- Importance of correctly setting parameters to avoid excessive costs.
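
As a rough illustration of the routing idea, here is a minimal sketch of a router that picks the cheapest tier rated for a task. The tier names, prices, and the 1-to-3 difficulty scale are all placeholders I made up for the example; they are not real model IDs or Google pricing, and this is not how Google's own routing is implemented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str                      # hypothetical label, not a real model ID
    usd_per_million_tokens: float  # placeholder price, not real pricing
    max_difficulty: int            # hardest task level (1-3) this tier should take


# Placeholder tiers, ordered from cheapest to most expensive.
TIERS = [
    ModelTier("lite-tier", 0.10, 1),
    ModelTier("standard-tier", 1.00, 2),
    ModelTier("pro-tier", 5.00, 3),
]


def route(difficulty: int) -> ModelTier:
    """Return the cheapest tier rated for the given difficulty (1-3)."""
    for tier in TIERS:
        if difficulty <= tier.max_difficulty:
            return tier
    raise ValueError(f"no tier handles difficulty {difficulty}")


def estimate_cost(tier: ModelTier, tokens: int) -> float:
    """Estimated spend in USD for a given token volume on a tier."""
    return tier.usd_per_million_tokens * tokens / 1_000_000


if __name__ == "__main__":
    for level in (1, 2, 3):
        tier = route(level)
        print(level, tier.name, f"${estimate_cost(tier, 500_000):.2f}")
```

The pitfall mentioned above shows up clearly here: if every task is labeled difficulty 3 by default, everything lands on the most expensive tier.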

Technical Setup with Google Tools

Accessing Gemini 3.1 through Google tools isn't complicated, but there are a few essential steps to follow so that everything runs smoothly. First, you need to obtain your Google API key. Then, configure your environment using Google AI Studio, which is where you can really leverage the power of Gemini.

Orchestrating tasks to maximize efficiency involves properly configuring prompts and monitoring token usage. Watch out for common errors like forgetting the API key, which can abruptly halt your project.

Key Takeaways:

- Access via Google AI Studio with precise configuration.

- Task orchestration to maximize efficiency.

- Watch out for common setup errors.
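
The two setup habits above (checking the API key early and watching token usage) can be sketched with the standard library alone. This is a minimal example under assumptions: the environment variable name `GEMINI_API_KEY`, the `load_api_key` helper, and the `TokenBudget` class are illustrative choices of mine, not part of any Google SDK.

```python
import os


class MissingApiKeyError(RuntimeError):
    """Raised at startup when no API key is configured."""


def load_api_key(env=None, var_name="GEMINI_API_KEY"):
    """Return the API key, or fail fast with a readable message.

    `var_name` is an assumed variable name; use whatever your setup defines.
    """
    env = os.environ if env is None else env
    key = env.get(var_name, "").strip()
    if not key:
        raise MissingApiKeyError(
            f"{var_name} is not set; create a key and export it before running."
        )
    return key


class TokenBudget:
    """Tracks cumulative token usage against a fixed limit."""

    def __init__(self, limit: int):
        self.limit = limit
        self.used = 0

    def record(self, prompt_tokens: int, output_tokens: int) -> int:
        """Add one call's usage and return the remaining budget."""
        self.used += prompt_tokens + output_tokens
        if self.used > self.limit:
            raise RuntimeError(
                f"token budget exceeded: {self.used} used, limit {self.limit}"
            )
        return self.limit - self.used


if __name__ == "__main__":
    budget = TokenBudget(limit=10_000)
    print(budget.record(prompt_tokens=1_200, output_tokens=800))  # 8000
```

Calling `load_api_key()` at the very start of a script turns a missing key into an immediate, explicit error instead of a failure halfway through a batch.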

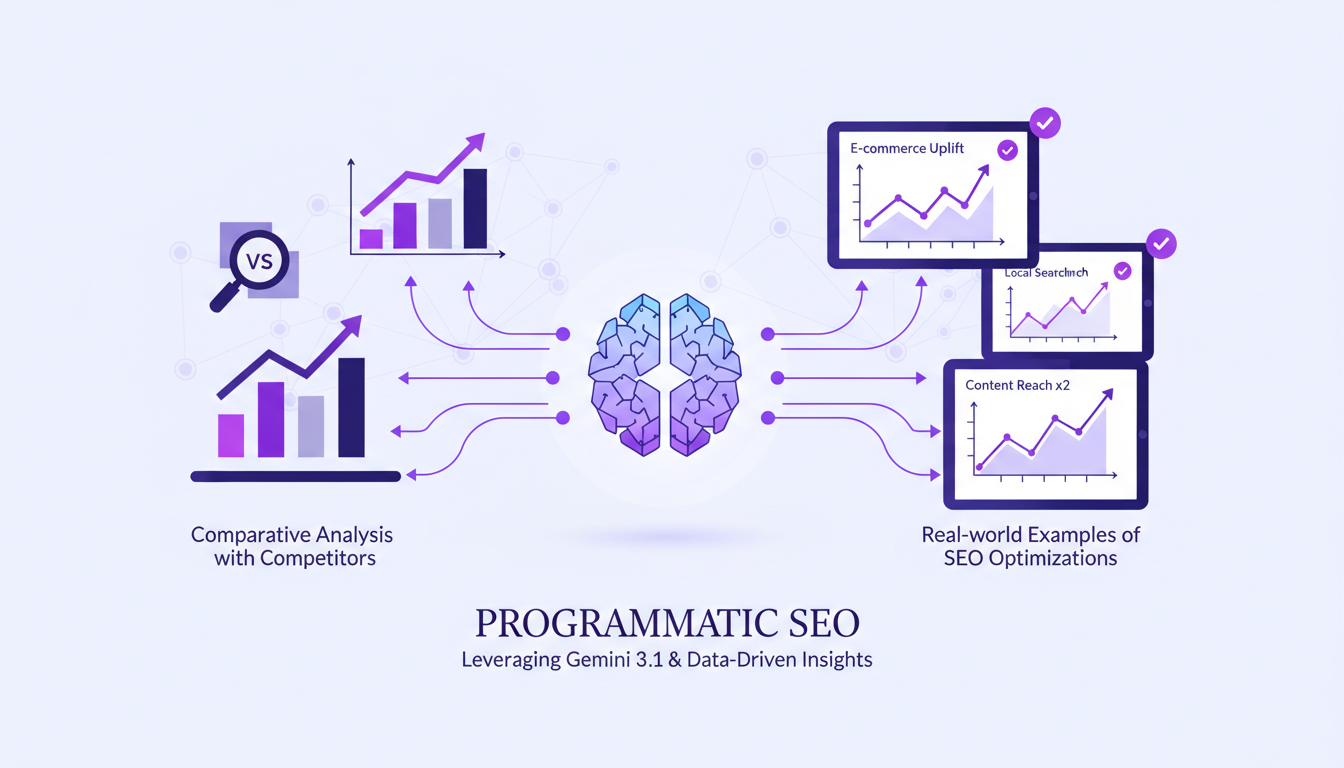

Applications in Programmatic SEO

In the realm of programmatic SEO, Gemini 3.1 is a powerful asset. Its ability to rapidly generate optimized web pages is a major competitive advantage. I've observed initial SEO improvements within just a few days, which is impressive compared to competing models like GPT-5 mini or Grok.

However, there are limitations to consider. The model works best with structured tasks and may require human oversight for fine-tuning. That said, the efficiency gains and results speak for themselves.

Key Takeaways:

- Competitive advantages in programmatic SEO thanks to Gemini 3.1.

- Rapid and effective SEO optimizations.

- Limitations to consider for precise adjustments.
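
Since the model works best with structured tasks, one common way to prepare programmatic SEO work is to turn rows of structured data into one prompt per page. The sketch below shows that step only; the field names (`service`, `city`, `keyword`), the prompt wording, and the sample rows are hypothetical, and sending the prompts to a model is left out.

```python
import re
from string import Template

# Hypothetical prompt template; adjust the wording to your own pages.
PAGE_PROMPT = Template(
    "Write a landing page for $service in $city. "
    "Target keyword: '$keyword'. Use one H1, three H2 sections, "
    "and a meta description under 155 characters."
)

REQUIRED_FIELDS = {"service", "city", "keyword"}


def slugify(text: str) -> str:
    """Lowercase the text and join alphanumeric runs with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def build_prompts(rows):
    """Map each data row to a URL slug and its filled-in prompt."""
    prompts = {}
    for row in rows:
        missing = REQUIRED_FIELDS - row.keys()
        if missing:
            raise ValueError(f"row is missing fields: {sorted(missing)}")
        slug = slugify(f"{row['service']} {row['city']}")
        prompts[slug] = PAGE_PROMPT.substitute(row)
    return prompts


if __name__ == "__main__":
    sample_rows = [
        {"service": "Bike Repair", "city": "Lyon", "keyword": "bike repair lyon"},
        {"service": "Bike Repair", "city": "Nantes", "keyword": "bike repair nantes"},
    ]
    for slug, prompt in build_prompts(sample_rows).items():
        print(slug, "->", prompt)
```

Validating the rows before generating anything keeps the human review step focused on the copy itself rather than on broken inputs.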

Gemini 3.1 Flash Lite is a real game changer when used correctly. First, its speed is impressive: translating in just one second is no small feat. Then, its multimodal understanding opens doors for various use cases (I tested five myself, and it handled them well). But be prepared for some trade-offs: you need to set up the model carefully to avoid losing performance.

- Impressive speed: 1-second translation.

- Multimodal understanding: It held up across the five use cases I tested.

- Efficiency and cost-effectiveness: When configured well, it boosts your efficiency and cuts costs.

I'm excited to see how this technology evolves because it promises significant advancements. If you're ready to integrate Gemini 3.1 into your workflow, dive in with the practical steps I've detailed and start optimizing your processes today. For a deeper dive, watch the original video "Gemini 3.1 Flash Lite in 14 mins!" on YouTube. It’s pure hands-on experience.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Boosting Web Search with GPT-5.3: Practical Guide

I've been tweaking search results for years, but integrating GPT-5.3 changed everything. With the latest enhancements, understanding user queries has become more nuanced. In this article, I walk you through how to leverage these advancements for better web search results. We'll dive into the importance of subtext, the enhancements in GPT-5.3, and how they make responses more natural and conversational. You'll see practical cases like planning a biking trip or understanding baseball rule changes. It's a powerful tool, but watch out for context limits—beyond 100K tokens, things get tricky. I'll share how I orchestrated these elements for direct user experience impact.

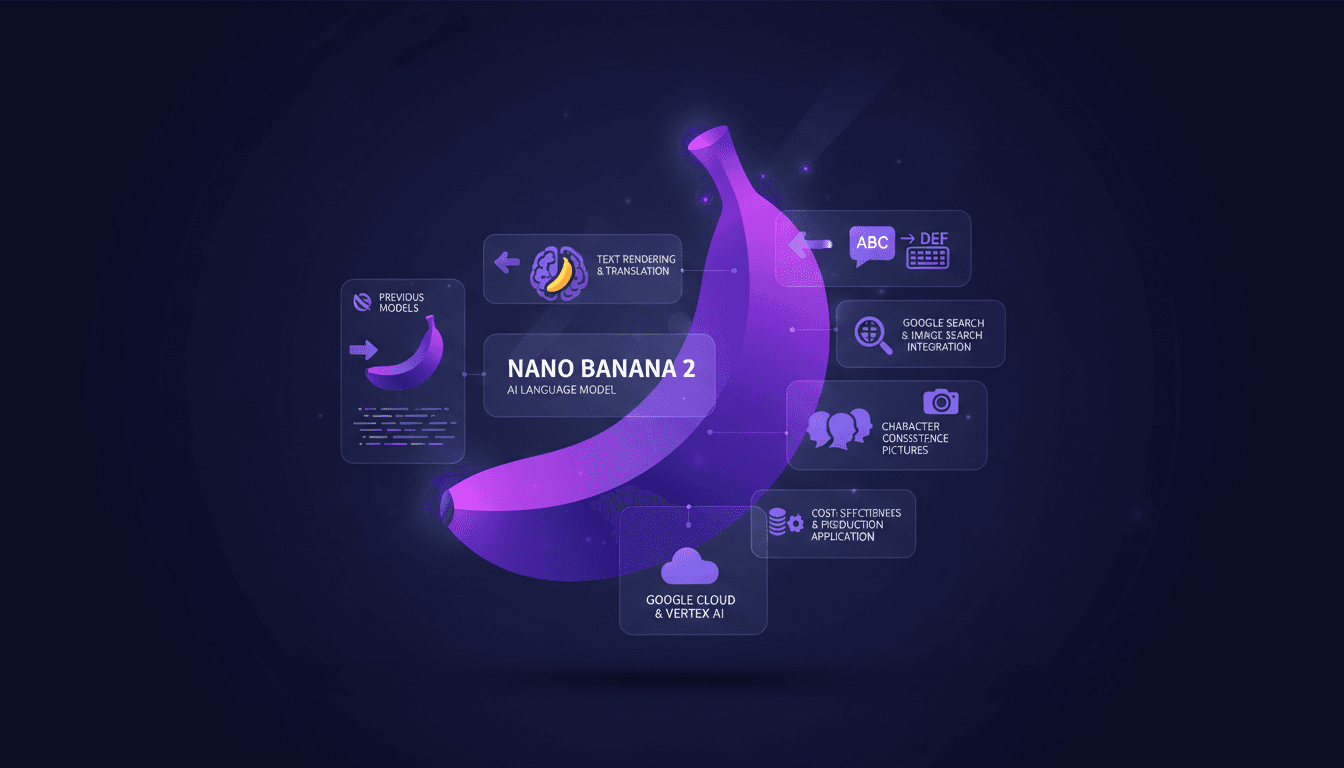

Nano Banana 2: Smaller, Faster, Cheaper

I've been in the trenches with image generation tools, and when Nano Banana 2 hit my workflow, it was a game changer. Smaller, faster, cheaper – it's not just marketing fluff. Let me walk you through how I've leveraged its capabilities to streamline my projects. With enhanced performance and cost-effectiveness, Nano Banana 2 transforms integration with tools like Google Cloud and Vertex AI. For those of us relying on precision and speed, understanding its integration is crucial.

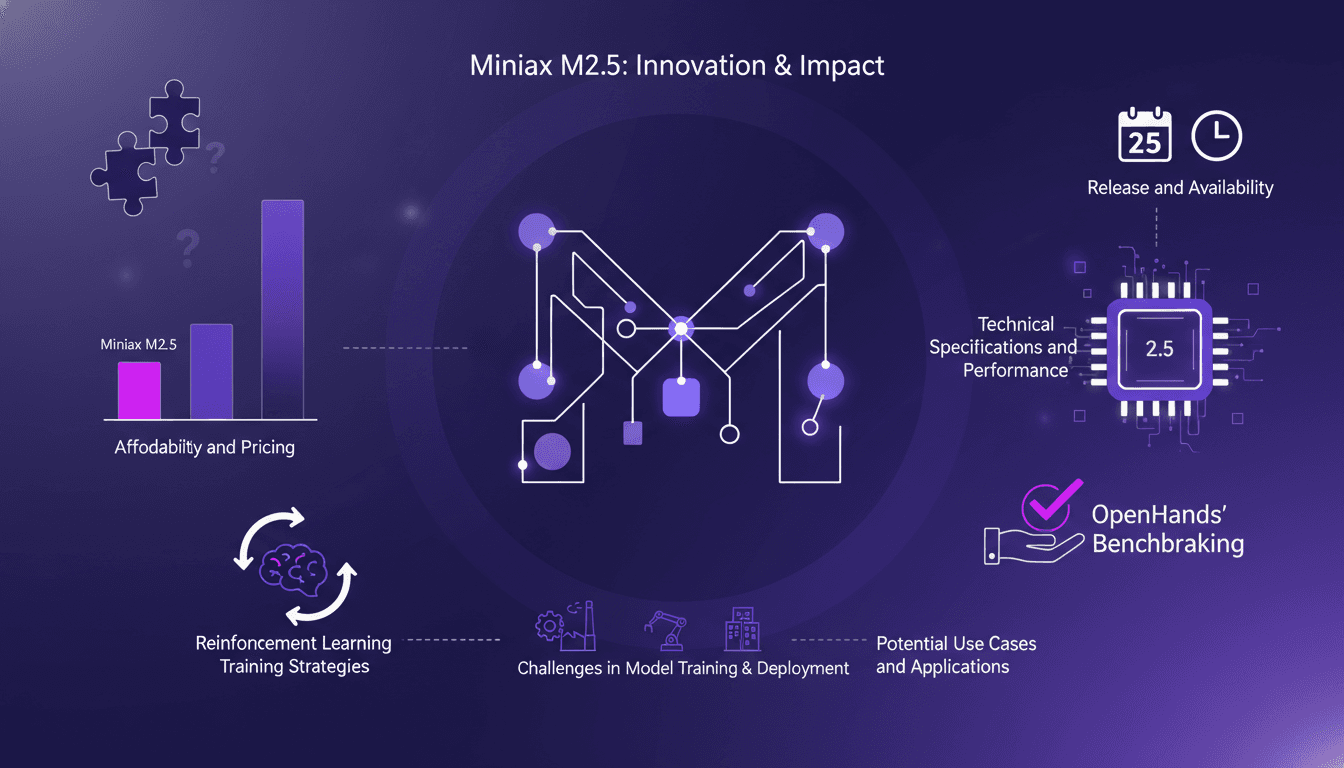

Minimax M2.5: Unpacking Strengths and Limits

I've had my hands on the Minimax M2.5, and trust me, it's not just another model on the shelf. I integrated it into my workflow, and its affordability made me rethink my entire setup. But hold on, it's not just about the price tag. This model stands out with its technical specs and efficiency. So why should the Minimax M2.5 get your attention? We're diving into its strengths, limitations, and how it stacks up against competitors. We'll also discuss reinforcement learning strategies and potential use cases. Get ready, because this model might just shake up your work approach.

WebM MCP: Use Cases and Future Prospects

When I first heard about WebM MCP, I was skeptical. But after diving in, wrapping my head around its APIs, and seeing the potential, I realized it's a game changer for AI agent deployment. Developed by Google and Microsoft, WebM MCP offers a new way to handle media processing with AI agents. In this article, I share my hands-on experience, pitfalls to avoid, and how I integrated this tool into my daily workflow. Imagine managing thousands of tokens for each processed image, with just two APIs to master. I'll guide you through the benefits, use cases, and future prospects of this powerful tool.

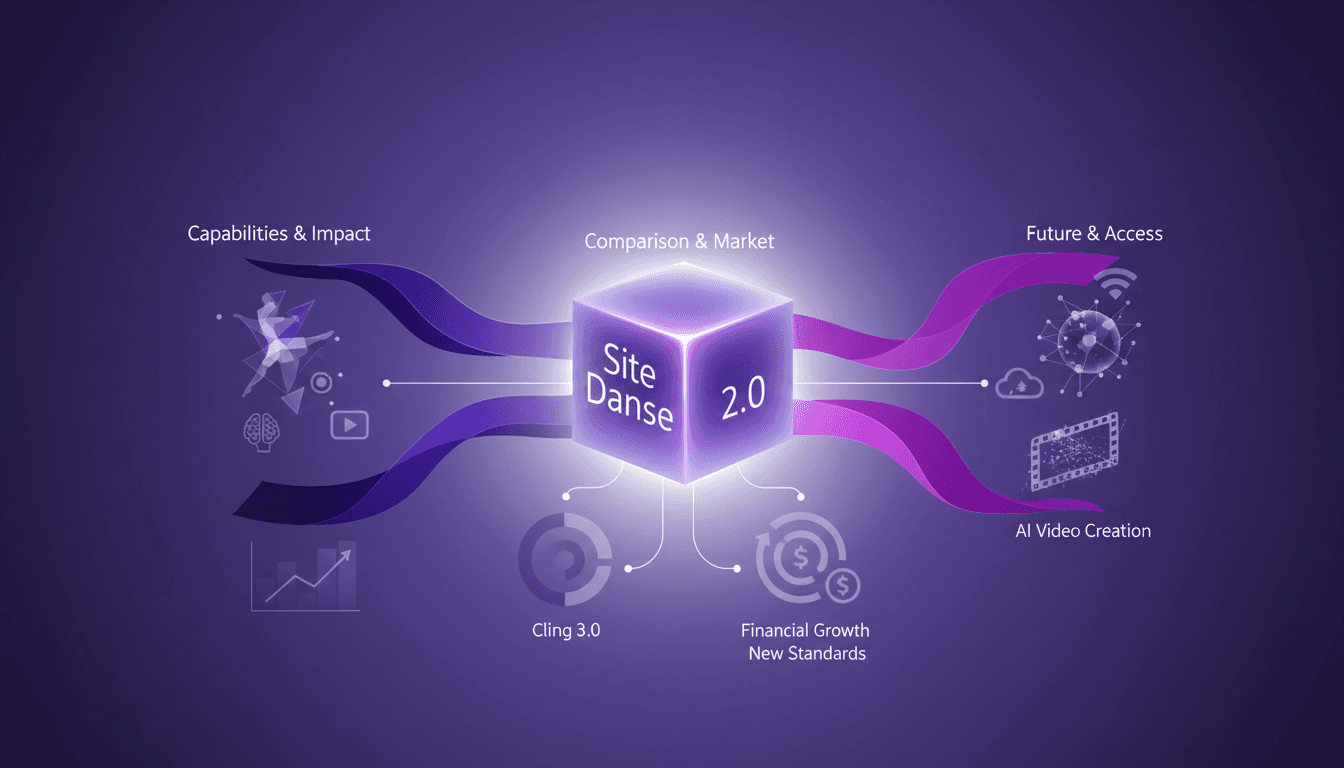

Seedance AI 2.0: Revolutionizing Video Creation

I dove into Seedance AI 2.0 expecting just another AI tool, but what I found was a game changer. This isn't just tech hype—it's a real shift in video creation. With Seedance AI 2.0, we're witnessing a revolution in leveraging AI for video content. It's not just about flashy features; it's about tangible impacts on production workflows. Compared with Cling 3.0 and other models, Seedance AI 2.0 stands out with its technical capabilities and market impact. Chinese companies saw their stocks rise by 10 to 20% in a single trading day. And that native 2048 x 1080 resolution, it's a game changer! I'm sharing how I've integrated it into my workflow and the financial implications to consider. Get ready to see how this technology might redefine the future of video content creation.