Evolving AI Models: Limits and Opportunities

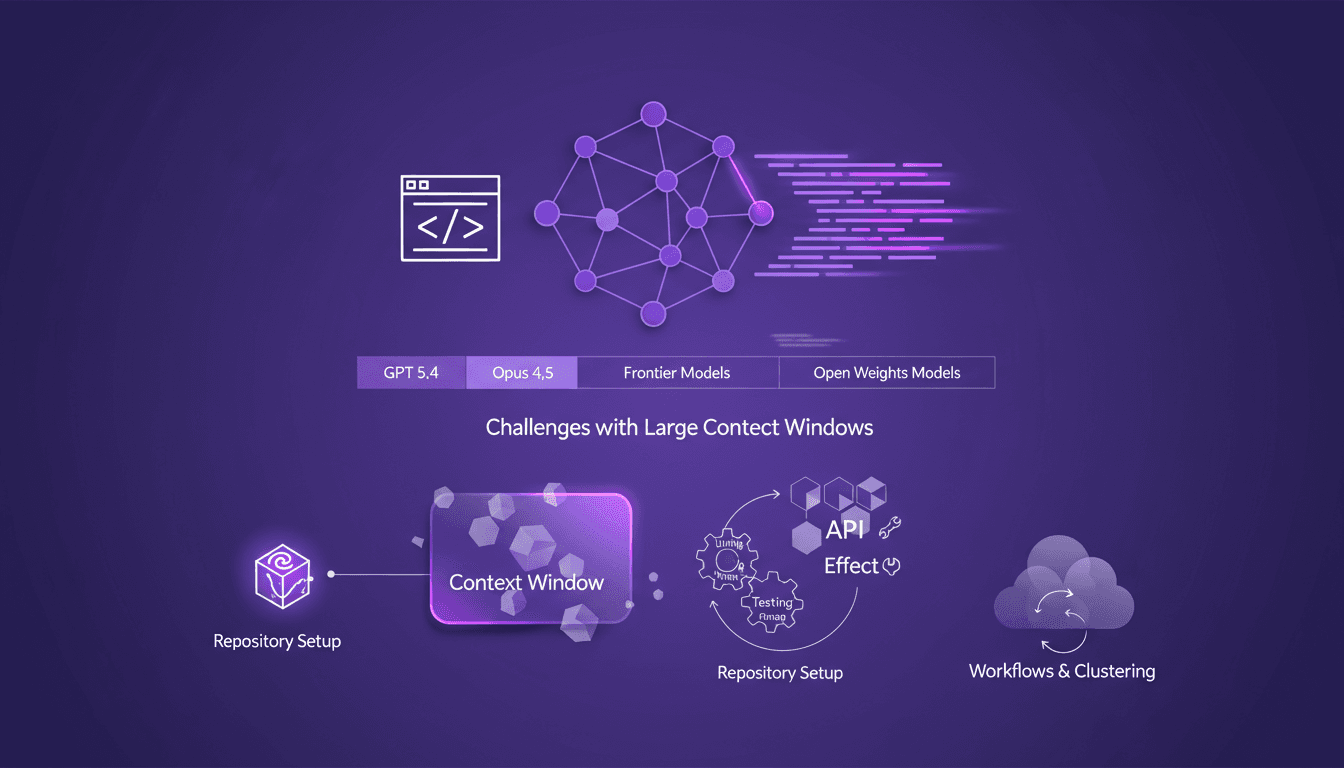

I remember the first time I set up a repository for AI-driven code generation. It changed my workflow overnight, and it was also a minefield. As we move from hand-coding to automated generation, models like GPT 5.4 and Opus 4.5 are reshaping how we work, but they still have limits, especially with large context windows. I learned the hard way that repository setup is crucial. I'll show how I use tools like Effect to orchestrate API development efficiently, and why linting and testing are indispensable in AI-assisted development. I'll also cover two further points: open-weights models lag behind frontier models, and workflows and clustering matter for long-running AI processes. This isn't just theory; it's what I deal with daily. So let's dive into the evolution of AI models, their limits, and their opportunities.

Transitioning from Hand-Coding to AI Automation

Let me tell you, transitioning from hand-coding to AI automation is a bit of a shock at first. I started programming at the age of 12, and for years, hand-coding was my bread and butter. But for the past 6 to 8 months, I haven't written a single line of code manually. It's unsettling but also incredibly liberating. First, you set up your AI models to integrate into your daily workflows. Then, you realize the immense time savings and efficiency it brings. But watch out, there are challenges to tackle.

AI automation is a game changer, but it comes with its own complexities.

Integrating these models into a daily routine isn't without hiccups. The learning curve is steep, especially if you're used to coding every little detail. Once you're over that hump, though, the efficiency gains are immediate: you spend less time on repetitive tasks and more on innovation. Still, don't assume that not writing code by hand means everything is perfect. Human oversight remains crucial.

Understanding AI Model Limitations

Let's talk about context windows, a key concept to grasp. Each model has a limited memory, a window through which it sees the world. With context windows of a million tokens, you'd think you're all set. In practice, a bigger window often means more confusion: the more you load into it, the harder it is for the model to keep track of what matters. I've seen this with both GPT 5.4 and Opus 4.5, and the confusion can set in quickly.

These models, even with their 10 trillion parameters, can't know everything. They learn mainly from code, not human documentation. It's a limitation to keep in mind, especially when using them for real-world applications. Be cautious not to overload the models with too much information at once.
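One way to avoid overloading a model is to budget the context explicitly before sending anything. The sketch below is only an illustration of that idea, not a description of how any particular agent works. The `Snippet` type, the `pack_context` function, the file paths, and the four-characters-per-token estimate are all assumptions made for the example; a real setup would count tokens with the model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    path: str      # hypothetical file path, for illustration only
    text: str
    priority: int  # higher means more relevant to the task at hand


def estimate_tokens(text: str) -> int:
    # Rough assumption: about 4 characters per token.
    # Use the model's real tokenizer for accurate counts.
    return max(1, len(text) // 4)


def pack_context(snippets: list[Snippet], budget: int) -> list[Snippet]:
    """Greedily keep the highest-priority snippets that fit in the token budget."""
    chosen: list[Snippet] = []
    used = 0
    for snippet in sorted(snippets, key=lambda s: s.priority, reverse=True):
        cost = estimate_tokens(snippet.text)
        if used + cost <= budget:
            chosen.append(snippet)
            used += cost
    return chosen


snippets = [
    Snippet("src/api.ts", "x" * 400, priority=3),      # ~100 tokens
    Snippet("docs/notes.md", "x" * 4000, priority=1),  # ~1000 tokens
    Snippet("src/schema.ts", "x" * 800, priority=2),   # ~200 tokens
]
selected = pack_context(snippets, budget=350)
print([s.path for s in selected])
```

With a budget of 350 estimated tokens, the two source files fit and the long notes file is left out. The point is the habit rather than the heuristic: decide what the model needs to see, and leave the rest out even when the window could technically hold it.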

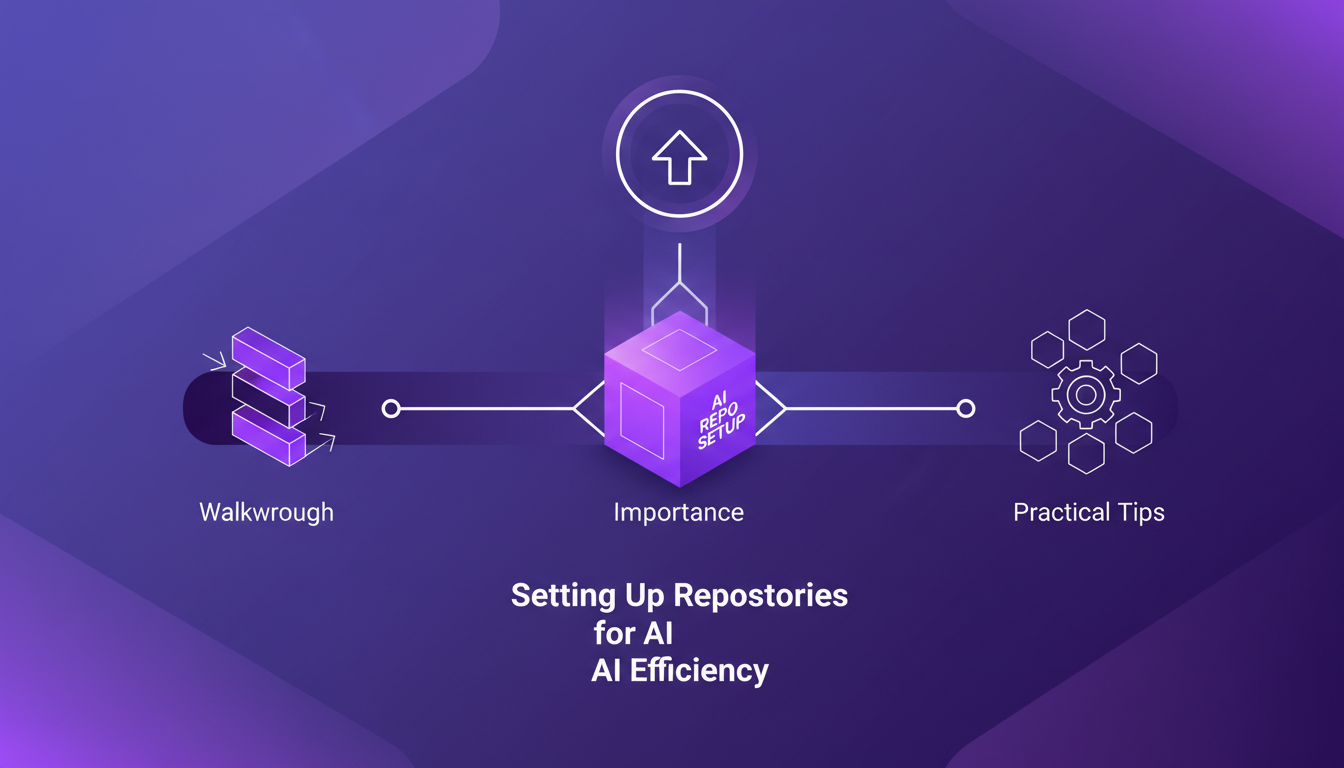

Setting Up Repositories for AI Efficiency

Let's move to something more practical: setting up repositories. This is crucial for the efficiency of AI-driven projects. First, I clone the repo; that's the basic step. Then I configure the repository so the agent can work in it efficiently. It might seem trivial, but a good setup makes all the difference.

Using coding agents with a single tool call is an asset. But you need to ensure these agents have access to the right resources. The performance of the AI model directly depends on this. A well-configured repository avoids performance issues and optimizes model usage.

Enhancing AI Code Generation with Linting and Testing

Linting is usually described as a tool for maintaining code quality. With AI in the loop, it matters even more. Linting and testing guide AI code generation by giving the model concrete feedback on what to fix, and I've seen that firsthand. There is a trade-off, though, between speed and code quality.

Incorporating tests into the development cycle is essential. It ensures that the code generated by AI meets quality standards. And with continuous integration, you ensure every change is validated. It's a balance to find, but one that pays off in the long run.
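The way linting and tests can guide generation is easiest to see as a loop: generate, check, feed the failures back, and try again. The following is a minimal sketch under stated assumptions. `fake_model` is a scripted stand-in for a real model call, `lint` only checks syntax, and `run_tests` is a one-assertion test suite; none of these names come from a real tool.

```python
import ast
from typing import Callable, Optional


def lint(source: str) -> list[str]:
    """Stand-in linter: reports syntax errors only (a real setup would run a proper linter)."""
    try:
        ast.parse(source)
    except SyntaxError as err:
        return [f"syntax error on line {err.lineno}: {err.msg}"]
    return []


def run_tests(source: str) -> list[str]:
    """Stand-in test suite: the generated code must define add(a, b) that sums its arguments."""
    namespace: dict = {}
    exec(source, namespace)  # acceptable in a sketch; sandbox generated code in practice
    add = namespace.get("add")
    if add is None:
        return ["add is not defined"]
    if add(2, 3) != 5:
        return [f"add(2, 3) returned {add(2, 3)!r}, expected 5"]
    return []


def generate_until_green(
    generate: Callable[[list[str]], str], max_attempts: int = 3
) -> tuple[Optional[str], int]:
    """Call the generator repeatedly, passing back lint and test failures as feedback."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        source = generate(feedback)
        feedback = lint(source)
        if not feedback:  # only run tests on code that lints cleanly
            feedback = run_tests(source)
        if not feedback:
            return source, attempt
    return None, max_attempts


def fake_model(feedback: list[str]) -> str:
    # Hypothetical stand-in for a model call: scripted answers that improve with feedback.
    if not feedback:
        return "def add(a, b) return a + b"        # does not parse
    if "syntax error" in feedback[0]:
        return "def add(a, b):\n    return a - b"  # parses, fails the test
    return "def add(a, b):\n    return a + b"      # passes both checks


source, attempts = generate_until_green(fake_model)
print(attempts)
print(source)
```

The speed versus quality trade-off shows up in `max_attempts` and in how strict the checks are: stricter checks catch more problems but cost more iterations.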

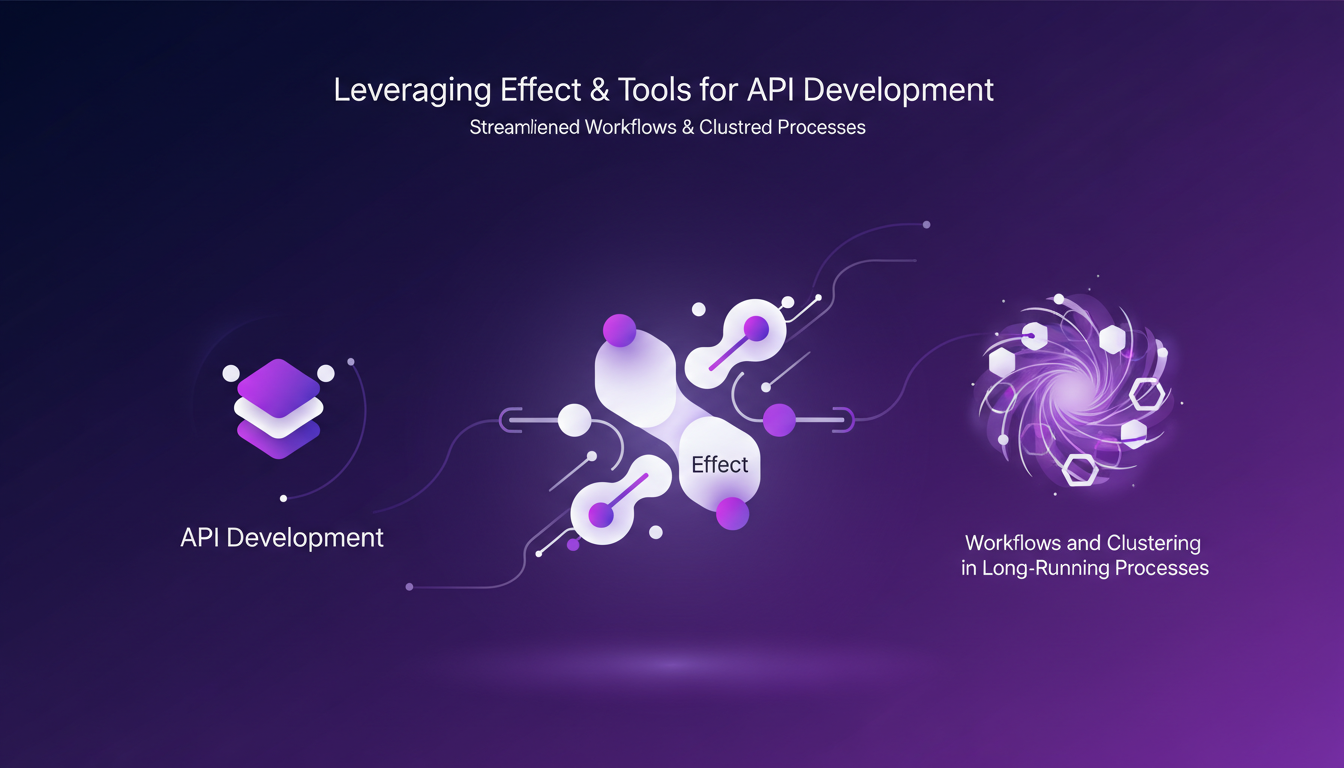

Leveraging Effect and Tools for API Development

Finally, let's talk about tools like Effect for API development. These tools simplify and streamline the development process. With well-defined workflows, you can manage even long-running processes with confidence.
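To make the idea of a well-defined workflow for a long-running process concrete, here is a minimal sketch. It is plain Python and does not use Effect's API; it only illustrates the general technique of checkpointing after each step so that a restarted process resumes where it left off. The `run_workflow` function, the step names, and the checkpoint file are assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable

Step = tuple[str, Callable[[dict], dict]]


def run_workflow(steps: list[Step], checkpoint: Path) -> dict:
    """Run steps in order, saving state after each so a restart skips finished steps."""
    if checkpoint.exists():
        state = json.loads(checkpoint.read_text())
    else:
        state = {"done": [], "data": {}}
    for name, step in steps:
        if name in state["done"]:
            continue  # already completed in an earlier run
        state["data"] = step(state["data"])
        state["done"].append(name)
        checkpoint.write_text(json.dumps(state))
    return state["data"]


calls: list[str] = []
fail_once = {"armed": True}


def fetch(data: dict) -> dict:
    calls.append("fetch")
    return {**data, "raw": [1, 2, 3]}


def transform(data: dict) -> dict:
    calls.append("transform")
    if fail_once["armed"]:
        fail_once["armed"] = False
        raise RuntimeError("simulated crash")
    return {**data, "total": sum(data["raw"])}


steps = [("fetch", fetch), ("transform", transform)]
checkpoint = Path(tempfile.mkdtemp()) / "workflow.json"

try:
    run_workflow(steps, checkpoint)
except RuntimeError:
    pass  # the first run "crashes" after fetch has been checkpointed

result = run_workflow(steps, checkpoint)
print(result)
print(calls)
```

On the second run, `fetch` is skipped because its result is already in the checkpoint, and only `transform` is retried. A production workflow engine adds much more than this (atomic writes, retries, timeouts, distribution across machines), which is the kind of work a dedicated tool takes off your hands.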

But here's the catch: open weights models often lag three to six months behind frontier models. So you need to juggle between the two to get the best of both worlds. The impact on API orchestration is direct, and there's much to gain by optimizing these workflows.

AI is reshaping the way we build and deploy software, and I've been in the trenches with it. Here's what stands out:

- Models like GPT 5.4 and Opus 4.5 are game changers, but watch out for their limits. Large context windows can become a headache, especially beyond 100,000 tokens.

- The shift from hand-coding to automated code generation is happening, but don’t think it’s all smooth sailing. Repository setup is key to making the most of this automation.

- I handle user ID 100 and use a coding agent with a single tool call — it's a setup that works for me.

- Running V4 in production is solid, but still requires some fine-tuning.

The future with these tools is bright, but balancing potential and limitations is crucial. Ready to dive deeper? Start experimenting with these models today to see how they can truly transform your development process. For more practical insights, check out the "Vibe Engineering Effect Apps" video by Michael Arnaldi on YouTube. It's packed with practical knowledge for anyone serious about leveraging this tech.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has built expertise in AI solution architecture that he now applies for large organizations (Atos, BNP Paribas, beta.gouv). He focuses on two areas: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Ralph Loops: Building Simple, Effective AI

I remember the first time I built a Ralph Loop. It was like finding a missing puzzle piece in AI-driven development. Not just theory, but a real workflow that changed how I orchestrate tasks. These loops streamline automation using AI models like GPT 5.8, offering a practical, no-nonsense approach. Imagine orchestrating tasks seamlessly while addressing the challenges and benefits of using AI in software development. In this article, I'll take you through Ralph Loops, their practical applications, and how they can truly transform your workflow. Let's dive into the limits, security and ethical considerations, and scaling these processes in team environments. Yes, the future of AI in automating complex workflows is already here. Ready to dive in?

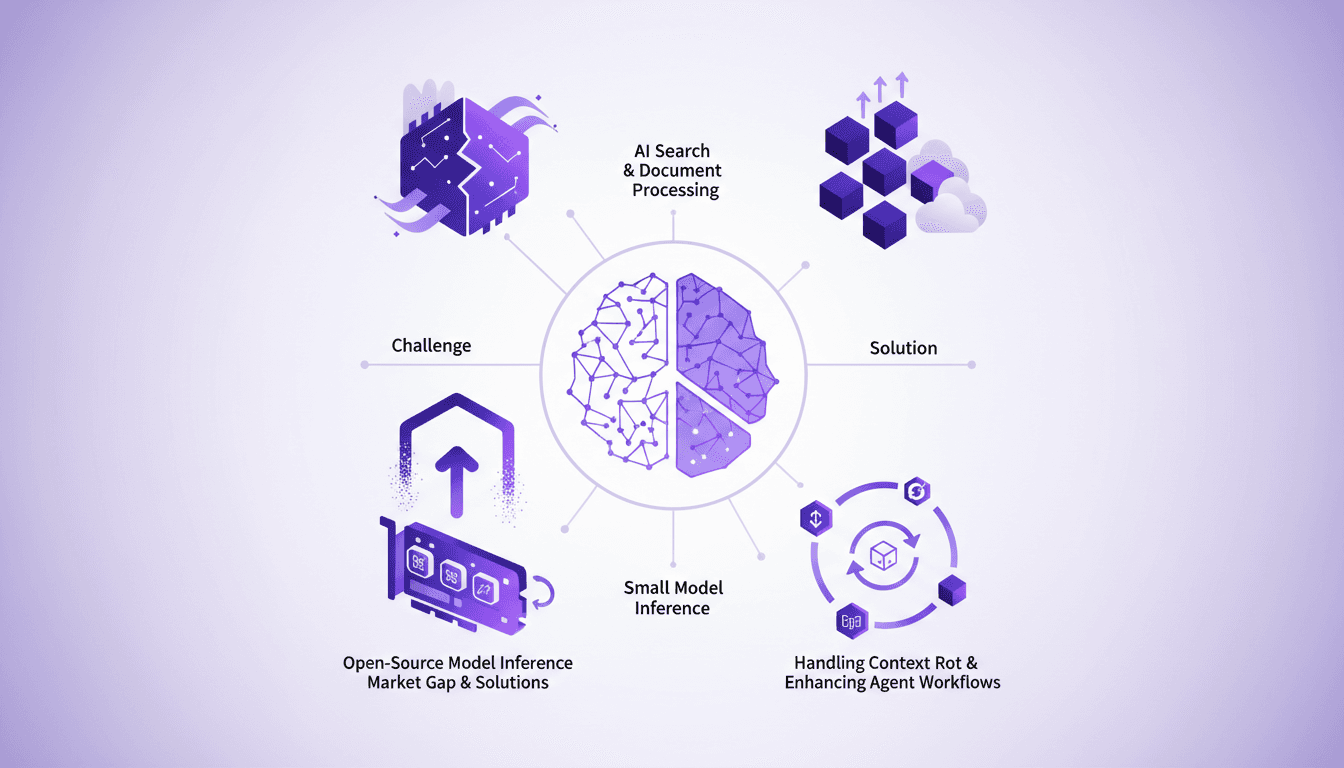

Optimizing AI Models: Our Practical Approach

I saw a gap in the market for small model inference, so I decided to build the infrastructure myself. With over 3 million models on Hugging Face, the lack of effective infrastructure was glaring. Why is this important? Because small models play a crucial role in AI search and document processing. And the challenges? There were plenty, but I tackled them head-on. From context rot to optimizing agent workflows, each step was a learning curve. I orchestrated model swapping for efficient GPU usage while supporting various open-source architectures. In short, we filled a market gap with a robust, scalable solution.

Gemma 4: Transforming Edge AI Deployment

I dove into deploying AI on edge devices with Gemma 4, and let me tell you, it's a game changer. But there are nuances you need to know to make it work seamlessly. Gemma 4 brings new capabilities for processing data locally, reducing latency and bandwidth usage. I set up the Light RT deployment framework, and the performance boost with NPU acceleration is impressive. But don't get too excited just yet — cross-platform integration requires some tweaks. Community support and open-source contributions are key assets. Want to maximize these benefits? I'm sharing what I've learned from the field.

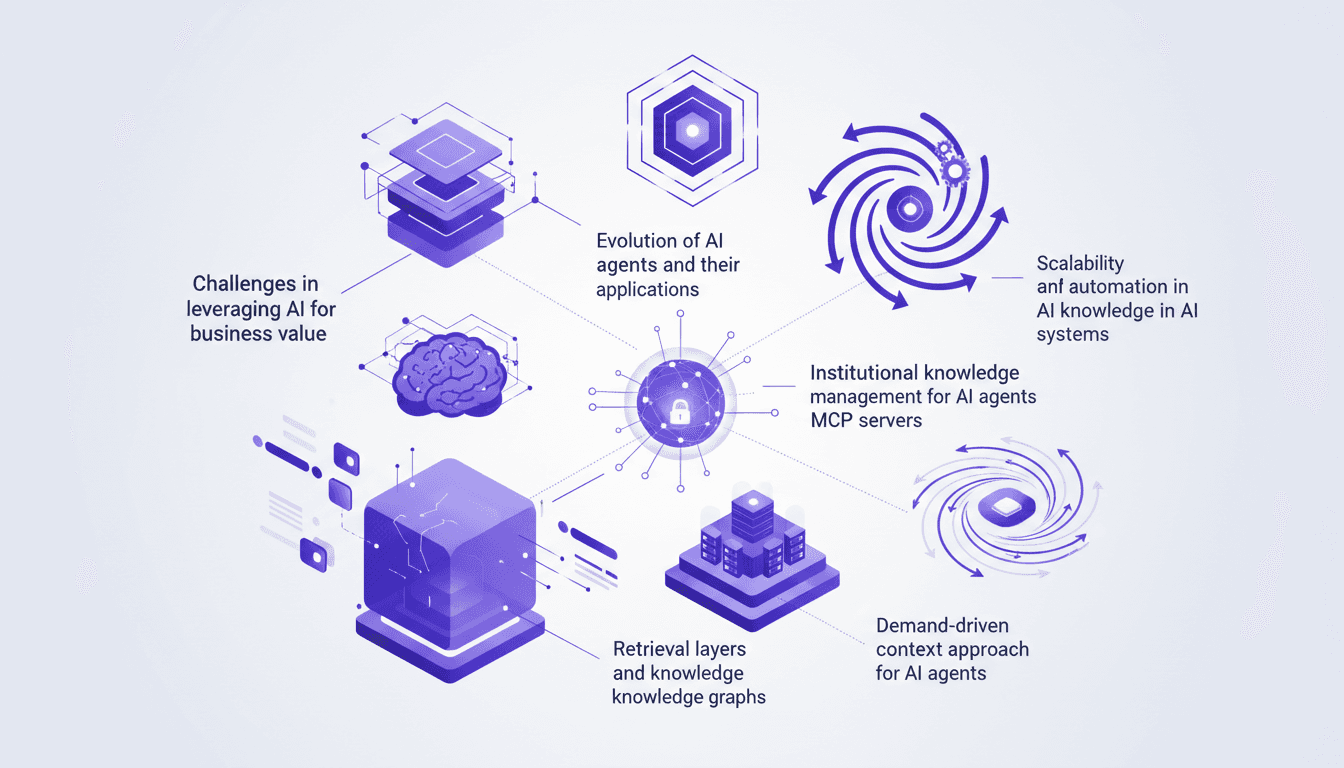

Optimizing AI Agents: Challenges and Solutions

I've been knee-deep in AI agents, wrestling with their intricacies and harnessing their potential. Dive into how I tackled the challenges of integrating AI for real business value. As I explore the evolution of AI agents, their applications, and effective enterprise management, I'm sharing my hands-on experiences. From institutional knowledge management to building MCP servers and a context-driven approach, I'll guide you through optimizing AI agents. Remember: only 20% of your documentation is truly useful, so let's make every word count.

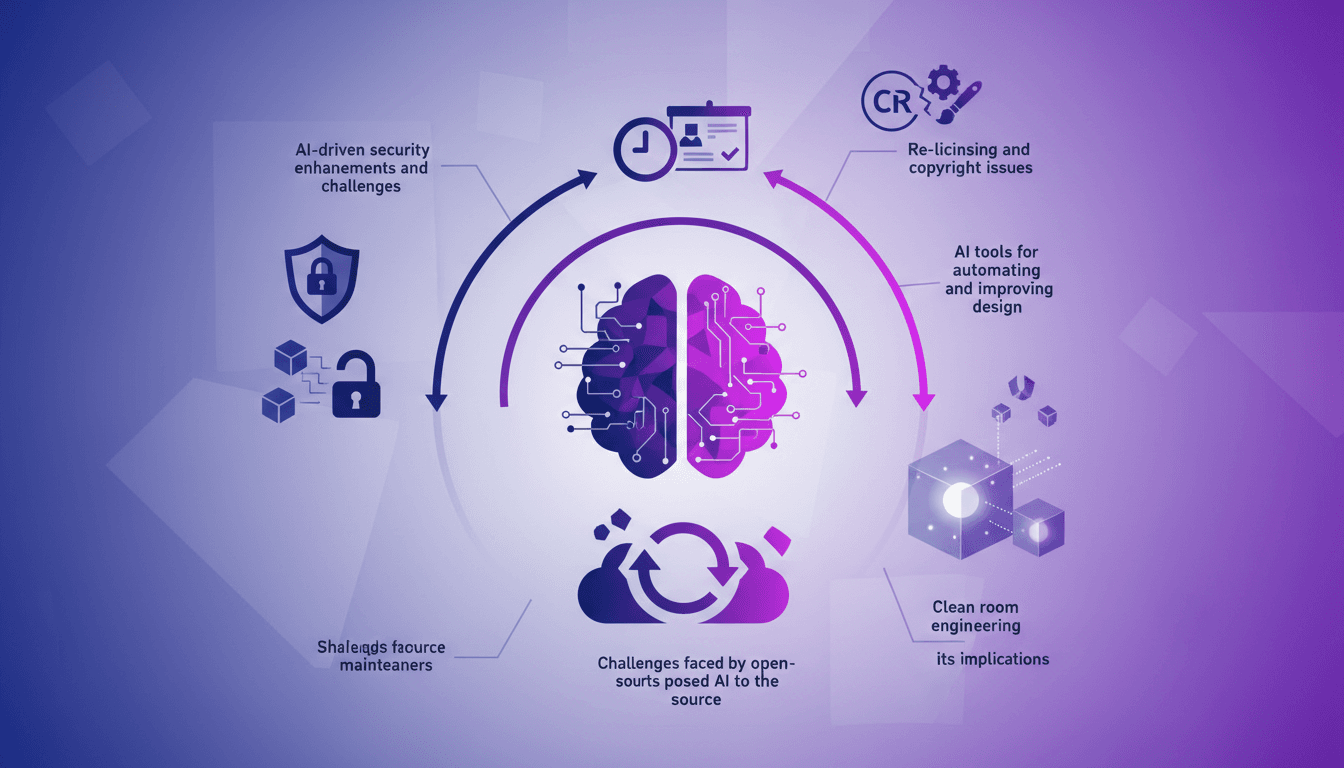

AI's Impact on Open Source: Opportunities

I remember the first time I integrated AI into my open-source project. It was a game changer, but not without its challenges. AI is reshaping the open-source landscape, enhancing security but also threatening traditional business models. Let's discuss the implications of this transformation – from AI tools boosting productivity to re-licensing and copyright issues. Open-source maintainers are facing unprecedented challenges, not to mention threats to existing business models. How is your open-source project adapting to this new era?