Gemma 4: Transforming Edge AI Deployment


I dove into deploying AI on edge devices with Gemma 4, and let me tell you, it's a game changer. But there are nuances you need to know to make it work seamlessly. First, it's crucial to understand how Gemma 4 enables local data processing, which reduces both latency and bandwidth usage. I started by setting up the LiteRT framework for deployment. The performance gains from NPU acceleration are impressive, but watch out: cross-platform integration requires some fine-tuning, and resource budgets need attention too. Even after quantization, the Gemma 4 4B model needs about 4 GB of RAM, which can catch you off guard if you're not ready. I've also found that community support and open-source contributions play a big role in improving the experience. Wondering how to get the most out of these models? I'm sharing my firsthand experience and the pitfalls I learned to avoid after a few missteps.

Understanding Gemma 4 and Edge Models

Gemma 4 is the new frontier of AI on edge devices, where efficiency rules. When I first started tinkering with these models, what immediately stood out was a design optimized for low-resource devices. Picture this: Gemma 4's E2B model uses between 1 and 2 GB of RAM, a small footprint by the standards of today's language models. Step up to a heftier model like the 4B and, after quantization, you're looking at about 4 GB of RAM. That's a big jump in scale from the 270-million-parameter Gemma 3 model. This is concrete stuff. But watch out: it takes careful orchestration, or you'll quickly run out of resources.
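To see why quantization matters so much for these RAM figures, it helps to do the arithmetic on the weights alone: memory ≈ parameter count × bits per weight ÷ 8. The sketch below is a back-of-the-envelope estimate, not a measurement. It assumes 1 GB = 10^9 bytes and ignores the KV cache, activations, and runtime overhead, so which line the quoted ~4 GB figure for the quantized 4B model corresponds to depends on the quantization scheme and runtime, which the source doesn't specify.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Rough memory needed for model weights alone, in GB (1 GB = 1e9 bytes).

    Ignores KV cache, activations, and runtime overhead.
    """
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # A 4-billion-parameter model at common precisions.
    for bits in (16, 8, 4):
        print(f"4B params @ {bits}-bit: {weight_memory_gb(4e9, bits):.1f} GB")
```

Halving the bit width halves the weight footprint: roughly 8 GB at 16-bit, 4 GB at 8-bit, and 2 GB at 4-bit for a 4B-parameter model.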

Benefits and Challenges of AI on Edge Devices

When we talk about AI on edge, the benefits are clear: reduced latency and enhanced privacy. It's a game changer for real-time applications. With edge AI, data transmission costs are also slashed. But I'm not going to lie, there are limits: processing power and storage are constrained on these devices, so you have to carefully balance model complexity against device capability. Believe me, I've had sleepless nights over this.

- Reduced latency for real-time applications

- Enhanced privacy through local processing

- Reduced data transmission costs

- Watch out: processing power and storage are constrained
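One way to make that balancing act concrete is to pick the largest model whose footprint fits in the device's RAM while leaving headroom for the OS and your app. This is a minimal sketch: the model labels are hypothetical, the RAM figures are the rough numbers quoted in this article (taking the upper end of the E2B range), and the 25% headroom is an arbitrary assumption you should tune per device.

```python
from typing import Optional

# Hypothetical labels; RAM figures are the rough values quoted in this article.
MODEL_RAM_GB = {
    "gemma-4-e2b": 2.0,           # upper end of the quoted 1-2 GB range
    "gemma-4-4b-quantized": 4.0,  # quoted post-quantization figure
}


def pick_model(available_ram_gb: float, headroom: float = 0.25) -> Optional[str]:
    """Return the largest model that fits after reserving a `headroom` fraction of RAM.

    Returns None when nothing fits.
    """
    budget_gb = available_ram_gb * (1.0 - headroom)
    fitting = [(ram, name) for name, ram in MODEL_RAM_GB.items() if ram <= budget_gb]
    return max(fitting)[1] if fitting else None


if __name__ == "__main__":
    for ram in (2.0, 4.0, 8.0):
        print(f"{ram:.0f} GB device -> {pick_model(ram)}")
```

With the default headroom, a 2 GB device gets no model, a 4 GB device lands on the E2B model, and an 8 GB device can take the quantized 4B model.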

Gemma 4's New Capabilities and Deployment with LiteRT

What really stands out are the enhanced capabilities of the Gemma 4 models: performance improvements, structured JSON support, and hardware-native compatibility. But what struck me most was the LiteRT framework. Once configured, it integrates smoothly with your edge devices. Quantization plays a crucial role here by reducing RAM requirements. The workflow is simple: first configure LiteRT, then integrate it with your edge device. I've seen this work like a charm, but beware: you need a solid understanding of your platform's requirements.
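To make the quantization step less abstract, here is a minimal sketch of symmetric per-tensor int8 quantization, one common scheme. It is an illustrative assumption: the source doesn't say which scheme Gemma 4 or LiteRT uses, and production toolchains typically use finer-grained (per-channel or block-wise) variants. It shows the core trade: each weight drops from 4 bytes (float32) to 1 byte, at the cost of a rounding error of at most half a quantization step.

```python
from array import array
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w ≈ q * scale, q in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid dividing by zero for an all-zero tensor.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float values."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0, 0.9]
    q, scale = quantize_int8(weights)
    restored = dequantize_int8(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", q)
    print(f"max abs error: {max_err:.5f} (bound: {scale / 2:.5f})")
    # Storage per weight: float32 vs int8.
    print("bytes/weight:", array("f", weights).itemsize, "->", array("b", q).itemsize)
```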

Use Cases and NPU Acceleration

Practical applications are where it gets interesting. Real-time analytics systems and IoT devices both benefit from NPU acceleration. I've tested on iOS and IoT devices, and the performance boost was dramatic: up to 13 times faster. But again, there's a flip side: NPU integration may require additional hardware considerations, and you need to check compatibility with your existing infrastructure. I've had some surprises there, not always pleasant ones.

- Real-time analytics

- NPU acceleration: up to 13x faster

- Potential extra hardware considerations

- Compatibility with existing infrastructure
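Before relying on an "up to 13x" figure for your own workload, it's worth measuring on your target device. Below is a minimal, framework-agnostic timing harness. It assumes you wrap each backend in a zero-argument callable (for example, hypothetical `run_on_cpu` and `run_on_npu` functions that each run one inference); it doesn't call any real NPU API. Taking the median of several runs after a warmup reduces the effect of one-off costs such as first-run compilation or caching.

```python
import time
from statistics import median
from typing import Callable


def median_latency_s(fn: Callable[[], object], warmup: int = 3, runs: int = 20) -> float:
    """Median wall-clock latency of fn() in seconds, after warmup calls."""
    for _ in range(warmup):
        fn()  # discard first runs (compilation, caches, lazy init)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append(time.perf_counter() - start)
    return median(samples)


def speedup(
    baseline: Callable[[], object],
    accelerated: Callable[[], object],
    warmup: int = 3,
    runs: int = 20,
) -> float:
    """Ratio of baseline to accelerated median latency (>1 means faster)."""
    return median_latency_s(baseline, warmup, runs) / median_latency_s(accelerated, warmup, runs)
```

Usage would look like `speedup(run_on_cpu, run_on_npu)`, where both functions are placeholders for your own inference wrappers.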

Cross-Platform Integration and Community Contributions

Cross-platform integration is a major asset (the original talk covers it around the 50-minute mark) because it ensures broad applicability. Thanks to the Apache 2.0 license, open-source contributions are pouring in, driving innovation and support. First assess your platform needs, then leverage community resources. I've seen fruitful collaborations spring up around this license. It's a thriving ecosystem.

- Cross-platform support ensures broad applicability

- Apache 2.0 license facilitates open-source contributions

- Innovation is driven by community involvement

- Start by assessing your platform needs
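As a starting point for assessing your platform needs, a small script can record the basics that drive model choice: OS, CPU architecture, and total RAM. This is a sketch using only the Python standard library. It doesn't detect NPUs (that depends on vendor-specific tooling), and the RAM query relies on `os.sysconf`, which is available on Linux and many Unix-like systems but not on Windows, where the function returns `None`.

```python
import os
import platform
from typing import Optional


def total_ram_gb() -> Optional[float]:
    """Total physical RAM in GB via os.sysconf, or None where unsupported."""
    try:
        pages = os.sysconf("SC_PHYS_PAGES")
        page_size = os.sysconf("SC_PAGE_SIZE")
    except (AttributeError, ValueError, OSError):
        # AttributeError: os.sysconf missing (e.g. Windows);
        # ValueError: the name isn't supported on this platform.
        return None
    if pages <= 0 or page_size <= 0:
        return None  # sysconf can report -1 for indeterminate values
    return pages * page_size / 1e9


def platform_profile() -> dict:
    """Collect the basic facts that constrain which model a device can run."""
    return {
        "os": platform.system(),     # e.g. "Linux", "Darwin", "Windows"
        "arch": platform.machine(),  # e.g. "x86_64", "aarch64", "arm64"
        "ram_gb": total_ram_gb(),
    }


if __name__ == "__main__":
    print(platform_profile())
```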

Working with Gemma 4 models, I've seen firsthand how they revolutionize edge AI deployment. First, these models deliver efficiency and performance gains, with the E2B model using between 1 and 2 GB of RAM. Second, the 4B model's ability to run in about 4 GB of RAM after quantization is a real game changer. But watch out: you need to balance model complexity with device capabilities to avoid hiccups. Finally, leveraging community resources can really optimize your results. With Gemma 4, we're on the brink of a new era for edge AI, but hardware limits remain a reality. Ready to implement Gemma 4 on your edge devices? Dive into the deployment process and join the growing community of edge AI innovators. For a deeper dive, I recommend watching the original video, 'Accelerating AI on Edge' by Chintan Parikh and Weiyi Wang. It could really enrich your understanding!


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has built expertise in AI solution architecture, which he now brings to large organizations (Atos, BNP Paribas, beta.gouv). He focuses on two areas: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

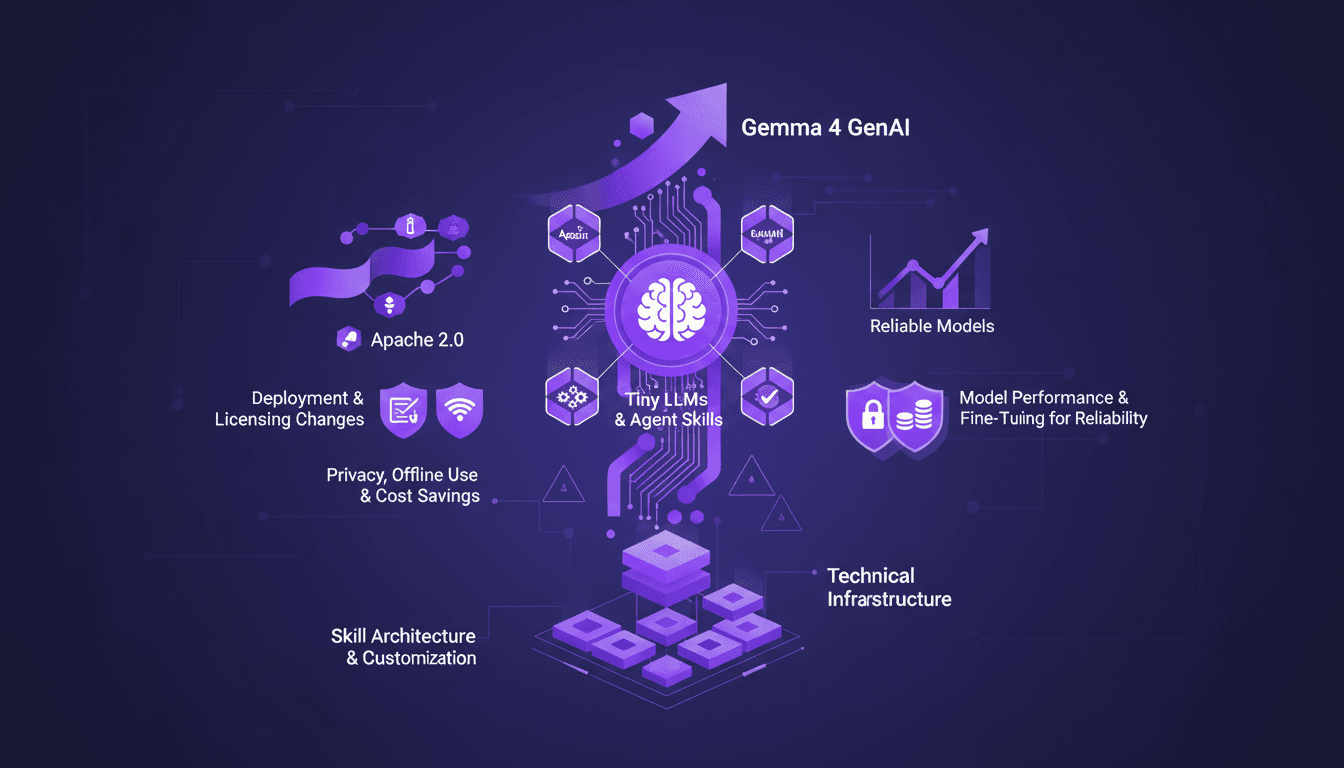

Edge AI: Benefits and Implementing Tiny LLMs

I've spent over a decade diving into Edge AI, and let me tell you, it's a game changer. Running AI models directly on edge devices isn't just a tech trend—it's a practical solution to real-world challenges. With the launch of Gemma 4 and advances in Tiny LLMs, we're witnessing a shift towards more efficient and reliable AI solutions. When it comes to deployment and cost, Edge AI is redefining the landscape with performance gains, privacy enhancements, and offline use. Yet, the true potential lies in the skill architecture and customization of the models. In this talk, we delve into the technical infrastructure needed to run AI on edge devices, deployment and licensing changes, and how Tiny LLMs can transform our current approaches.

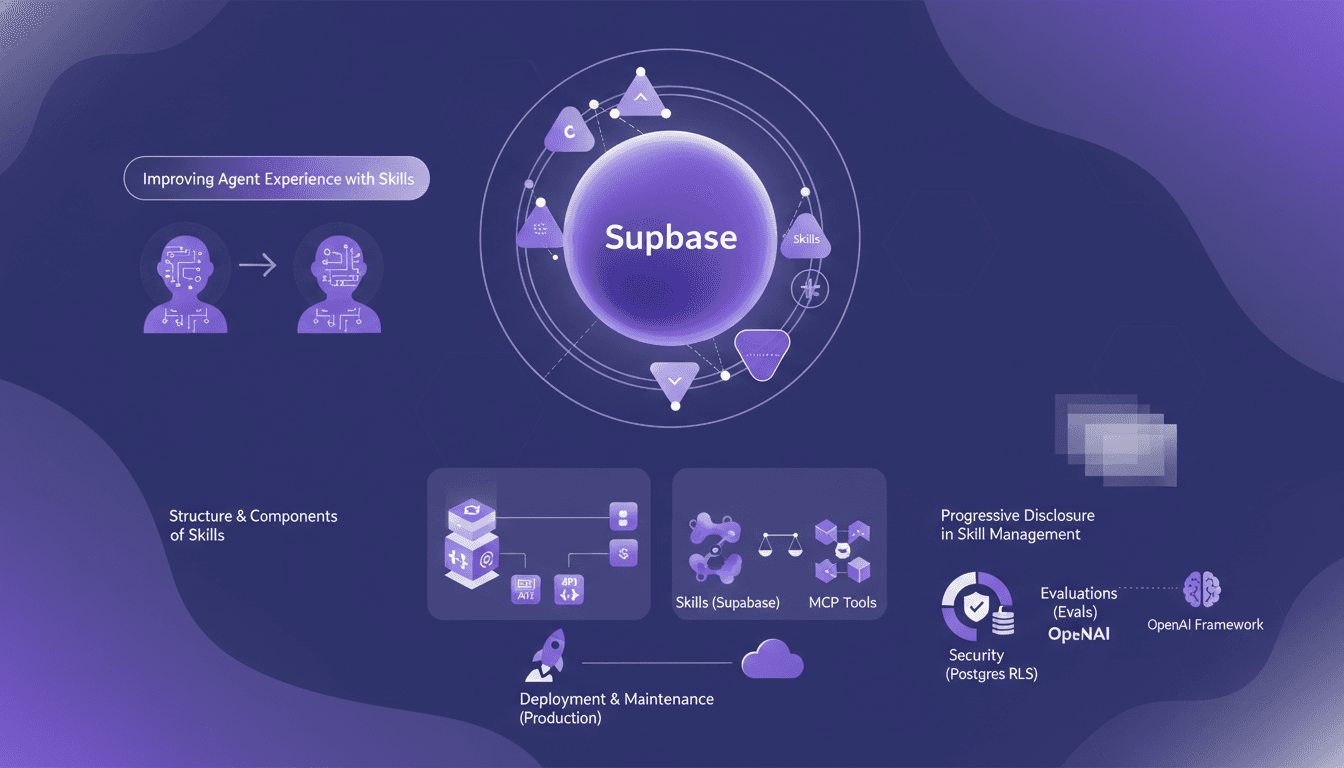

Boosting Agents with Supabase Skills: Our Approach

I spent two months knee-deep in Supabase, crafting skills for our AI agents. Let me walk you through how we made them not just good, but actually effective. In this article, I dive into our approach to enhancing agent experience with Supabase, dissecting the structure and components of skills, and comparing them to MCP tools. We've leveraged evaluations to test agent behavior, not to mention the pivotal role OpenAI’s framework played. From RLS in Postgres to deploying in production — each step came with its hurdles. I’ll explain how I orchestrated all of this and, importantly, what I wish I'd known earlier.

Ralph Loops: Building Simple, Effective AI

I remember the first time I built a Ralph Loop. It was like finding a missing puzzle piece in AI-driven development. Not just theory, but a real workflow that changed how I orchestrate tasks. These loops streamline automation using AI models like GPT 5.8, offering a practical, no-nonsense approach. Imagine orchestrating tasks seamlessly while addressing the challenges and benefits of using AI in software development. In this article, I'll take you through Ralph Loops, their practical applications, and how they can truly transform your workflow. Let's dive into the limits, security and ethical considerations, and scaling these processes in team environments. Yes, the future of AI in automating complex workflows is already here. Ready to dive in?

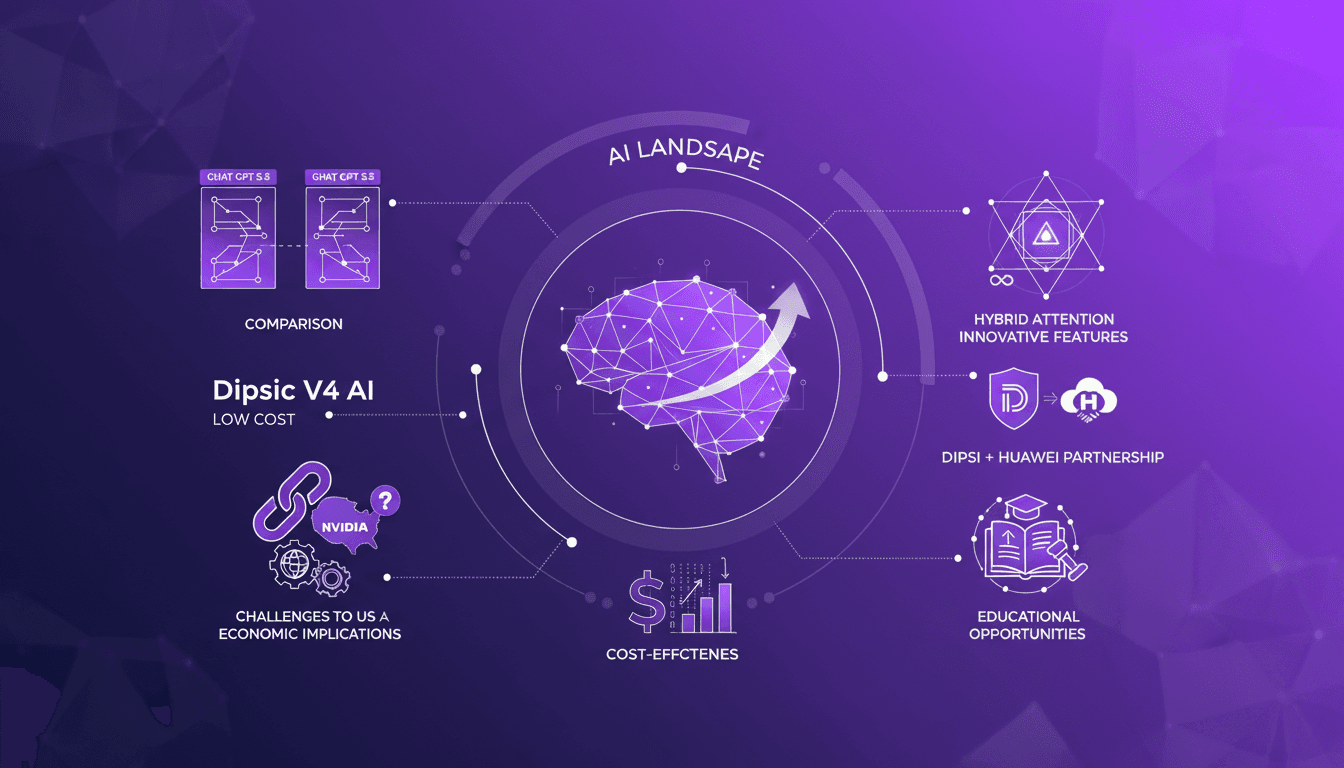

Dipsic V4: AI Revolution, Challenges OpenAI

I've been in the AI trenches for years, watching models evolve. But when I first got my hands on Dipsic V4, I knew we were onto something game-changing. With 1600 billion parameters, this model isn't just another tool in the landscape; it’s a potential disruptor in a space dominated by giants like OpenAI's GPT 5.5. Let me show you why this model is causing such a stir and how it’s rewriting the rules. We’ll dive into its innovative features, aggressive pricing strategy, and what it means for players like Nvidia and OpenAI. Watch out, this could be a game changer.

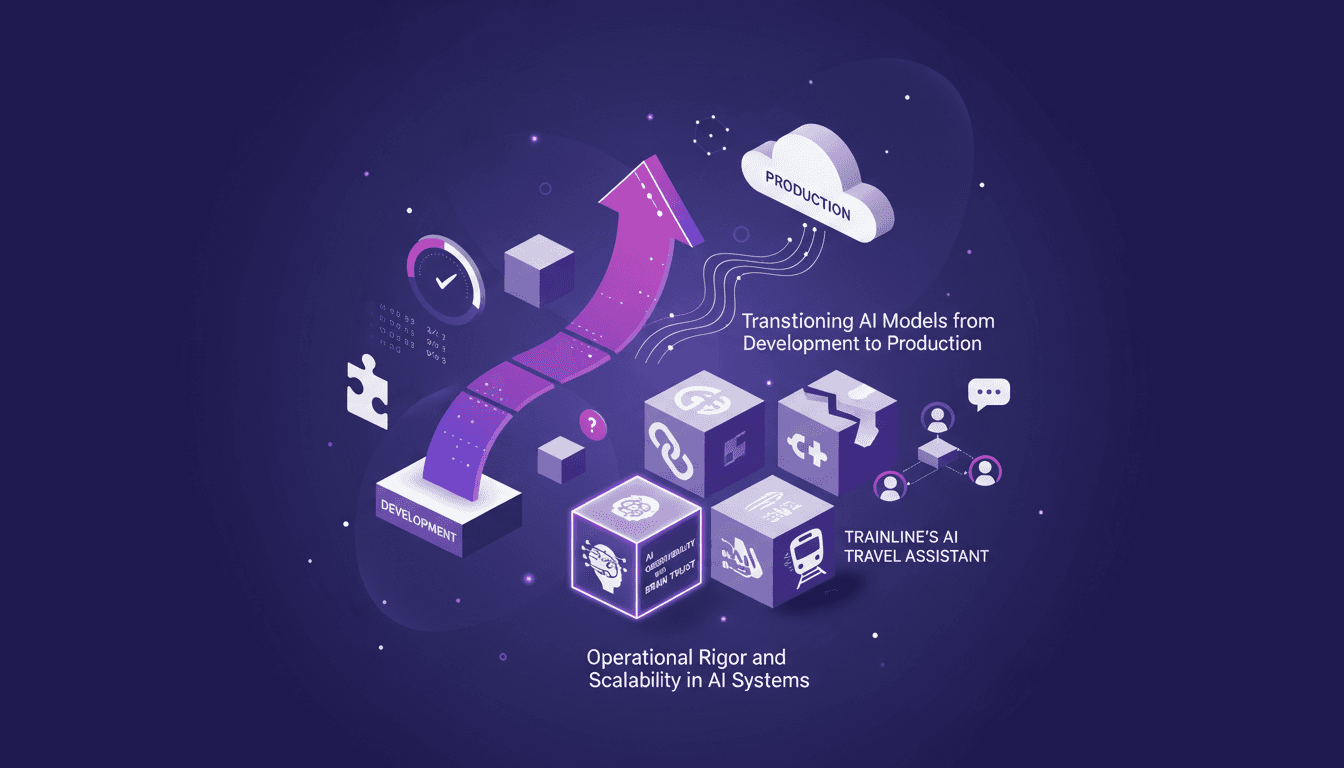

Delivering Quality AI Apps: A Practitioner’s Guide

I've been knee-deep in AI deployment for years, and let me tell you, delivering quality AI applications is no walk in the park. From transitioning models to production to ensuring operational rigor, I've faced—and solved—my fair share of challenges. In this article, I'll walk you through my journey with AI systems, focusing on practical workflows, the tools I rely on, and the pitfalls I've learned to avoid. We'll dive into operational rigor and scalability, transitioning AI models from development to production, and Trainline's AI travel assistant with multi-agent systems. It's a hands-on guide for anyone looking to master the complex art of shipping quality AI apps.