DeepSeek V4: An AI Revolution That Challenges OpenAI

I've been in the AI trenches for years, watching models evolve. But when I got my hands on DeepSeek V4, I knew we were onto something game-changing. With 1.6 trillion parameters (1,600 billion), this model isn't just another name on the list; it's a potential disruptor in a space dominated by giants like OpenAI's GPT 5.5. And this isn't just hype. DeepSeek V4 innovates with a hybrid attention approach, and its aggressive pricing could shake up the market. Consider this: stocks like Zhipu AI and MiniMax have already dropped by 9%. We'll dig into the details of this disruptive model, its political and economic implications, and why Nvidia and the US AI industry should be concerned. I'll also touch on the training programs emerging around this ecosystem. Get ready to rethink your strategies.

Introduction to DeepSeek V4: A Game Changer

DeepSeek V4 is a powerhouse in the AI landscape. With 1.6 trillion parameters (1,600 billion) in the V4 Pro model, we're in a different league. These specs aren't just impressive figures; they change how we use and think about AI day to day. Each request activates only 49 billion of those parameters, roughly 3% of the total, which is where the efficiency comes from. It's like switching from a gas-powered car to an electric one: everything changes, and you feel it immediately.
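To make the ratio concrete, here is a minimal sketch of the arithmetic behind those two figures. The parameter counts are the ones quoted in this article; the function name is just an illustration, not anything from DeepSeek's tooling.

```python
# Figures quoted in this article for the V4 Pro model.
TOTAL_PARAMS = 1_600_000_000_000   # 1,600 billion total parameters
ACTIVE_PARAMS = 49_000_000_000     # 49 billion parameters active per request


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters that a single request uses."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share per request: {fraction:.2%}")  # prints "Active share per request: 3.06%"
```

In other words, each request touches about one parameter in thirty-three, which is why the headline parameter count says little on its own about per-request cost.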

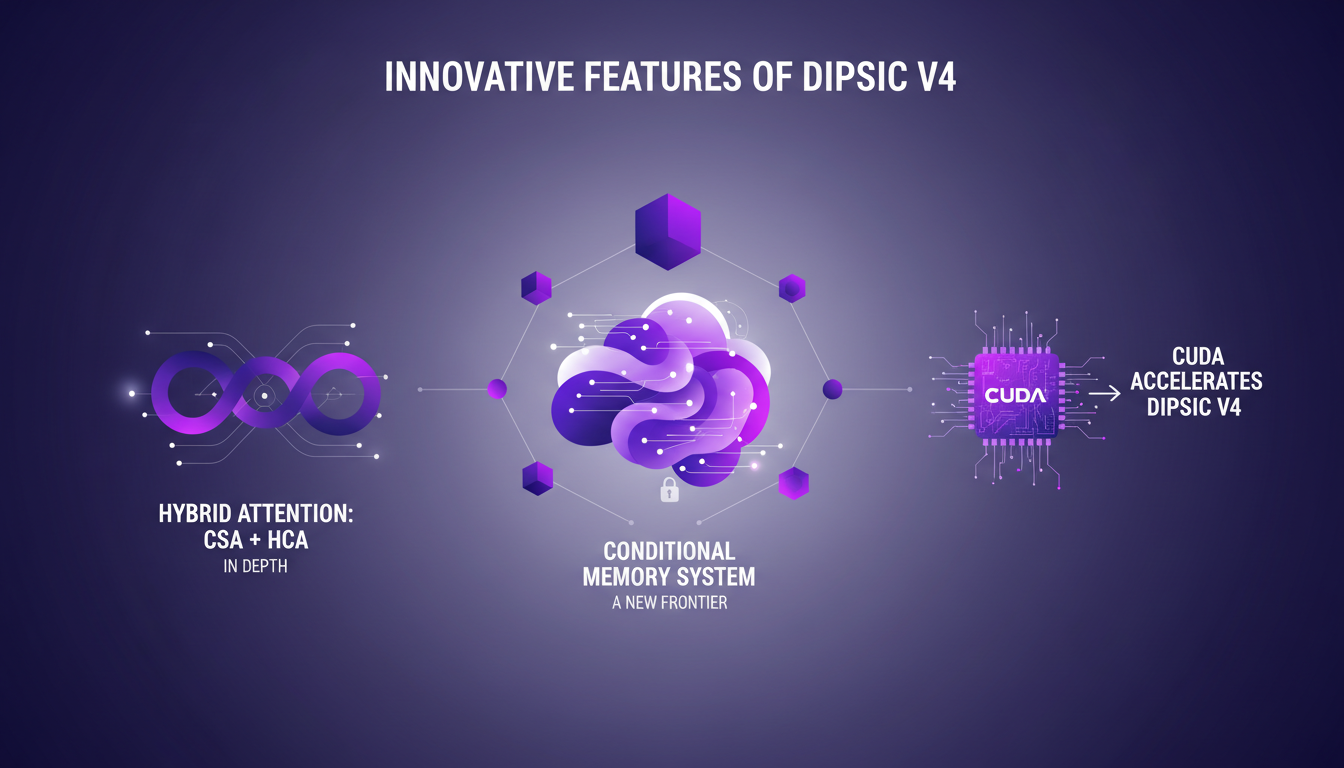

What truly sets DeepSeek V4 apart is its hybrid attention approach: CSA + HCA. In short, it combines the strengths of two attention methods to get better performance than either alone. In practice, this means a dramatic reduction in compute consumption and more efficient use of working memory. DeepSeek V4 doesn't just refine earlier designs; it rewrites the rules.

DeepSeek V4 vs GPT 5.5: Head-to-Head

Speaking of revolutions, let's compare DeepSeek V4 with GPT 5.5. DeepSeek V4 shines in its capacity to handle vast contexts efficiently: up to 1 million tokens, or about 750,000 words. Imagine reading an entire series of novels in one pass. But watch out: GPT 5.5 still holds its ground in certain areas, like the diversity of its commercial applications.
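The token and word figures above imply a rough conversion rate of 0.75 words per token. Here is a small sketch that uses that ratio to estimate how much text fits in the window. The 90,000-word novel length is my own illustrative assumption, and real token counts depend on the tokenizer and the language of the text.

```python
# Ratio implied by the article's figures: 1 million tokens ~ 750,000 words.
WORDS_PER_TOKEN = 750_000 / 1_000_000
CONTEXT_TOKENS = 1_000_000


def tokens_to_words(tokens: int, words_per_token: float = WORDS_PER_TOKEN) -> int:
    """Rough word-count equivalent of a token budget."""
    return round(tokens * words_per_token)


def documents_that_fit(words_per_document: int, context_tokens: int = CONTEXT_TOKENS) -> int:
    """How many documents of a given length fit entirely in the context window."""
    return tokens_to_words(context_tokens) // words_per_document


NOVEL_WORDS = 90_000  # hypothetical length of one novel, for illustration only

print(tokens_to_words(CONTEXT_TOKENS))   # word budget for the full window
print(documents_that_fit(NOVEL_WORDS))   # whole novels that fit at once
```

Under these assumptions, the full window holds eight such novels with room to spare for the prompt and the answer.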

DeepSeek V4's conditional memory system is a major innovation. Inspired by human memory, it lets the model forget irrelevant details and compress information efficiently. On top of that, its handling of the KV cache makes working memory more efficient, allowing more complex tasks without skyrocketing costs.
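To see why the KV cache matters so much at long context, here is a back-of-the-envelope sketch using the textbook size formula for a standard, uncompressed attention cache. This is not DeepSeek's method, and the layer count, head count, and head dimension below are hypothetical values chosen only for illustration, not V4's real configuration.

```python
def kv_cache_bytes(seq_len: int, num_layers: int, num_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Size of an uncompressed KV cache for one sequence.

    The leading 2 accounts for storing one key and one value vector
    per token, per head, per layer. bytes_per_value=2 assumes 16-bit floats.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape, NOT DeepSeek V4's actual configuration.
HYPOTHETICAL_CONFIG = dict(num_layers=60, num_kv_heads=8, head_dim=128)

for tokens in (8_000, 128_000, 1_000_000):
    size_gb = kv_cache_bytes(tokens, **HYPOTHETICAL_CONFIG) / 1e9
    print(f"{tokens:>9,} tokens -> {size_gb:,.1f} GB of KV cache")
```

The cache grows linearly with context length, so a naive cache at 1 million tokens runs to hundreds of gigabytes under these assumptions. That is the cost that compression and selective forgetting aim to cut.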

Innovative Features of DeepSeek V4

Back to the hybrid attention approach, CSA + HCA. By combining these two methods, DeepSeek V4 reduces compute consumption while increasing processing capacity. It's like upgrading from a piston engine to a jet engine.

Then there's the conditional memory system, which is truly the new frontier. It lets the model behave more like a human, forgetting the unnecessary and focusing on the essential. CUDA comes into play in accelerating DeepSeek V4's performance, making processing faster and less energy-intensive.

Cost-Effectiveness and Strategic Partnerships

Let's talk numbers. DeepSeek V4 is offered at a far more competitive price than rivals like GPT 5.5 and Claude 4.7. This strategic positioning changes the market dynamics. The partnership with Huawei is another key piece of the puzzle, bringing a new dynamic to the AI sector.

This partnership with Huawei, built on Chinese chips and software, disrupts the market and directly challenges Nvidia and its CUDA platform. The economic implications are huge, as evidenced by a 9% drop in Zhipu AI and MiniMax stocks. But remember, cost is only part of the equation. Performance and the longevity of the solution must also be considered.

Educational Opportunities and Future Outlook

For those looking to dive into the world of DeepSeek V4, training programs are available. These resources let users get familiar with the models without technical prerequisites. It's a gateway to the future of AI, where continuous learning becomes essential.

The political and economic implications of DeepSeek V4's release are also worth considering. In the context of US-China rivalry, this model becomes a key player. For practitioners, it's crucial to prepare for these changes and anticipate future AI developments.

DeepSeek V4 isn't just another AI model; it's a real leap forward. In practice, I've seen how its hybrid attention approach is a game changer. First, with those 1.6 trillion parameters, the precision is something I haven't encountered before. But watch out: cost is a factor to keep an eye on, even with their aggressive pricing strategy. Then, the strategic partnerships they've set up pave the way for smoother integrations into our workflows. And for those comparing it with GPT 5.5, it's not just about numbers. You need to test in real-world conditions.

Looking ahead, mastering DeepSeek V4 will allow us to transform our AI projects. We can't afford to ignore these cutting-edge technologies. I strongly encourage you to dive into DeepSeek V4's features and explore its potential. For a deeper understanding, watch the original video: it provides essential insights into what DeepSeek has achieved against OpenAI.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

GPT 5.5: Token Speed and Enterprise Strategy

I dove into GPT 5.5 the moment it dropped, and let me tell you, the 20% token speed boost isn't just a number—it's a game changer for real-time applications. But there's more under the hood than just speed. Released on April 23, 2026, this model marks a rapid evolution in OpenAI's offerings. This isn't just about new features; it's a strategic pivot towards enterprise solutions, optimizing infrastructure, and redefining efficiency. We'll explore the release of GPT 5.5, Entropique's market strategy, the impact of Cloud Code on the coding landscape, and how OpenAI is reshaping its approach to conquer the enterprise markets.

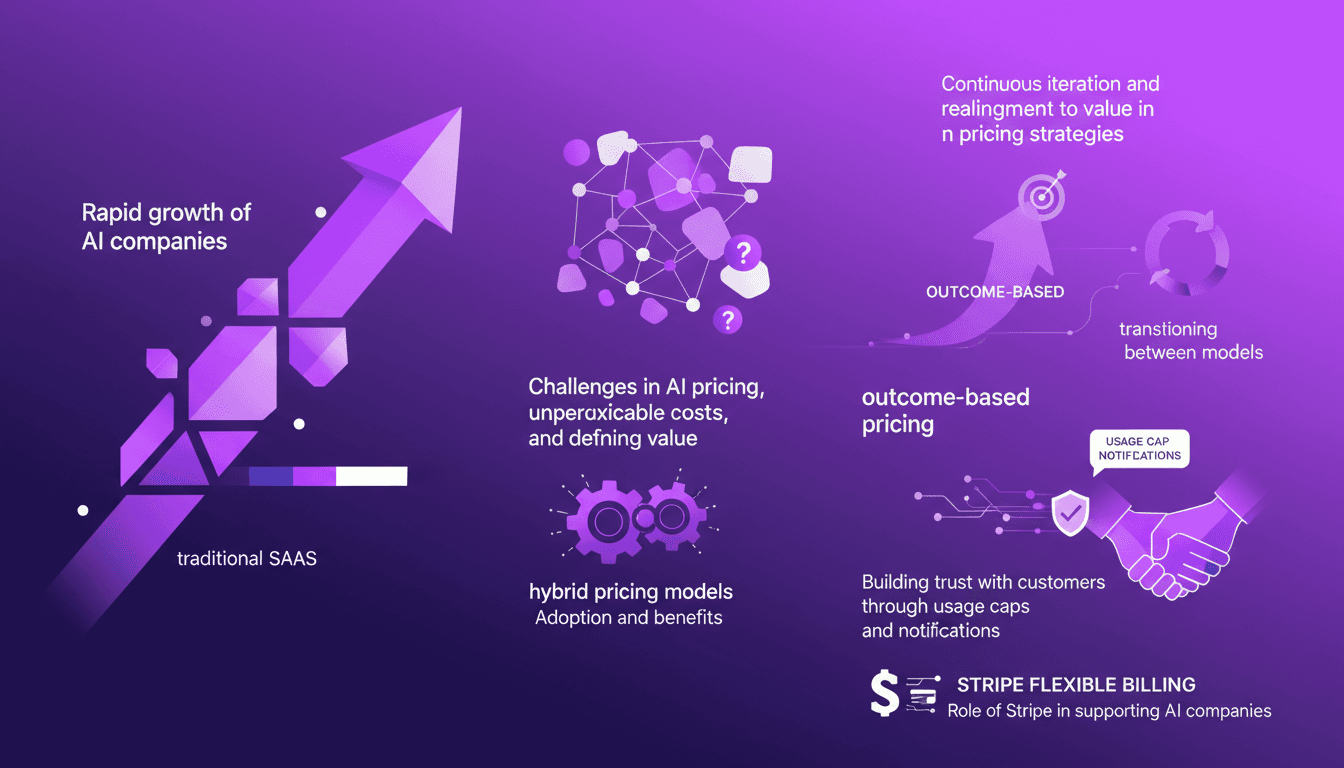

Master AI Pricing: Flexible Hybrid Models

I remember the first time I had to price an AI service. The growth was explosive, but the pricing model felt like a straitjacket. Let's talk about how we can break free. Today, AI companies are outpacing traditional SaaS, but their pricing strategies often lag behind. In this article, I'll share practical insights on adopting flexible and agile monetization strategies for AI products. We'll address the challenges in AI pricing, like unpredictable costs and defining value, and the adoption of hybrid pricing models. We'll also explore transitioning to outcome-based pricing to build trust with customers through usage caps and notifications. Finally, we'll discuss the role of Stripe in supporting AI companies with flexible billing solutions. It's time to rethink our approach to truly align with real value.

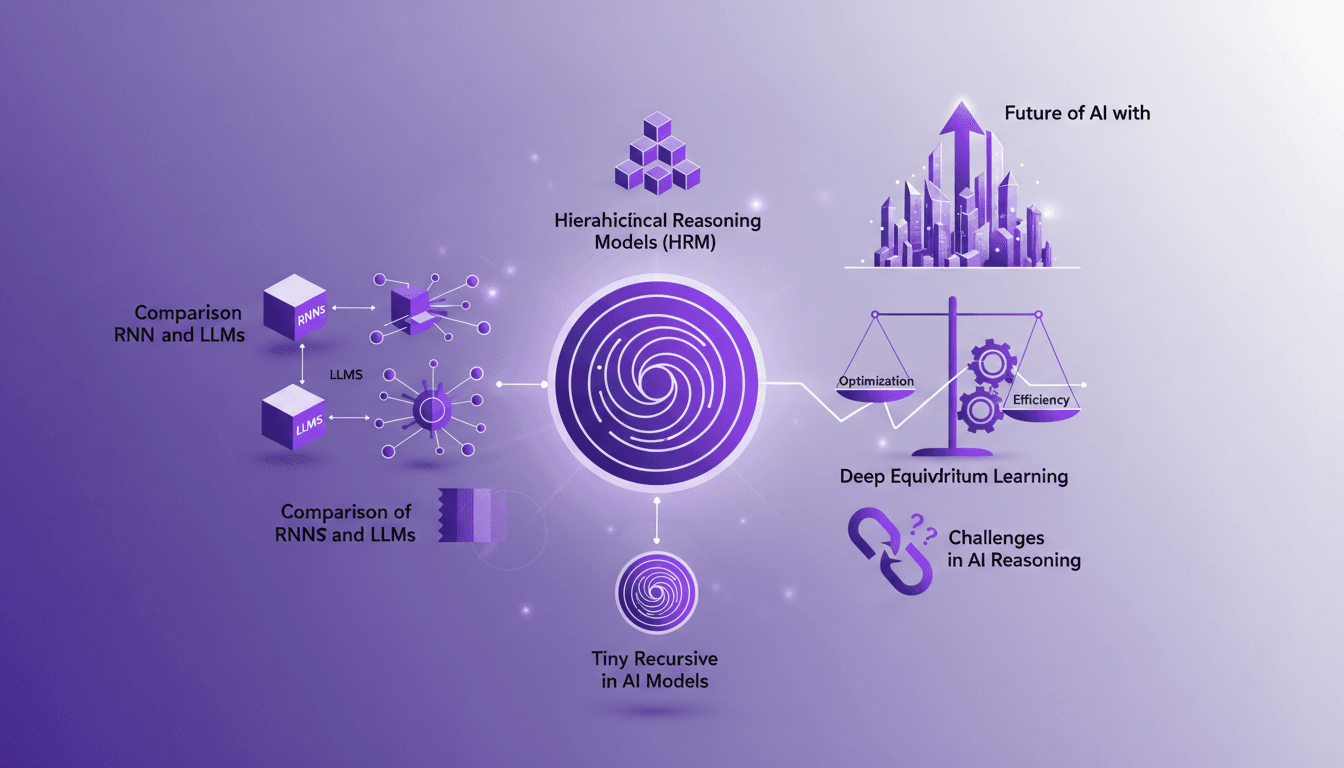

Recursion in AI: Transforming Models

I've spent countless hours tweaking AI models, and let me tell you, recursion is the game changer we've been waiting for. Forget the race for more parameters; now it's about intelligence. While traditional models hit scaling walls, recursion offers a fresh perspective. We're diving into how it could redefine AI efficiency and capability. We'll discuss hierarchical reasoning models, tiny recursive models, deep equilibrium learning, and the challenges of optimization. If you've ever been frustrated by scalability limits, you're going to love this new paradigm.

Supply Chain 2.0: Revolutionizing Semiconductors

I've been knee-deep in semiconductor supply chains for years, and let me tell you, it's not just about making chips; it's about orchestrating a global symphony. First, you tackle the 1,400 process steps, then you navigate through a dozen countries. But what happens when a $300 chip holds up a $50,000 car? That's when things get real. With AI chips at the forefront, every step from manufacturing to delivery must be optimized. Let's dive into how we're handling these challenges and where the opportunities lie.

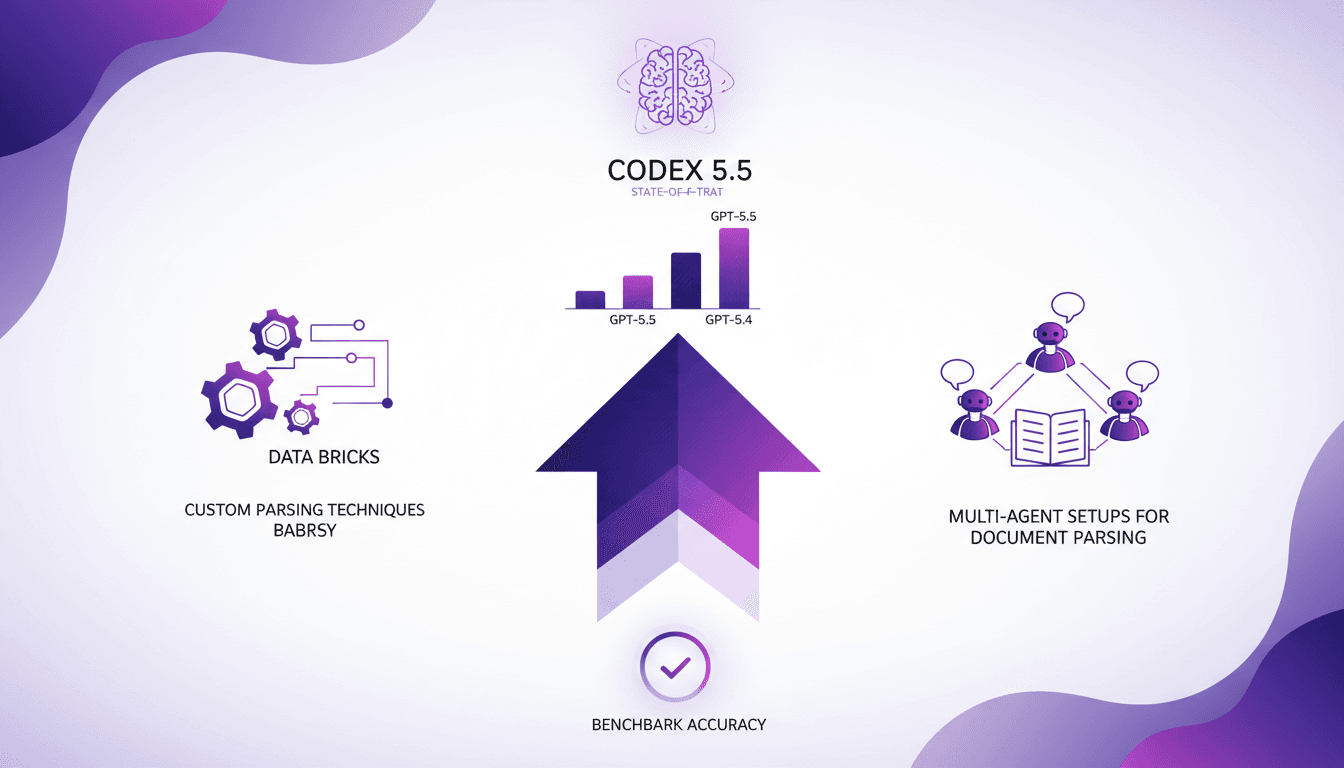

GPT-5.5 Performance Boosts: Key Insights

I was knee-deep in parsing challenges when GPT-5.5 came along, and let me tell you, it's a game changer. But it’s not all roses. In the intricate world of Databricks, strategic setup is key. With impressive performance boosts and increased accuracy, GPT-5.5 is setting new standards, but you need to harness it wisely. I'll show you how I tapped into this power, from custom parsing techniques to multi-agent setups. Get ready to dive into the technical nitty-gritty and see how Codex 5.5 stands as the state-of-the-art model!