Delivering Quality AI Apps: A Practitioner’s Guide

I've been knee-deep in AI deployment for years, and delivering quality AI applications is no walk in the park. Getting models from development into production takes an operational rigor that many teams underestimate. I've been burned more than once, and this article covers what I learned along the way: my practical workflows, the tools I rely on, and the pitfalls to avoid. We'll cover operational rigor and scalability, the transition from development to production, and a behind-the-scenes look at how Trainline runs a multi-agent AI travel assistant. This is a guide for anyone who wants to ship quality AI apps.

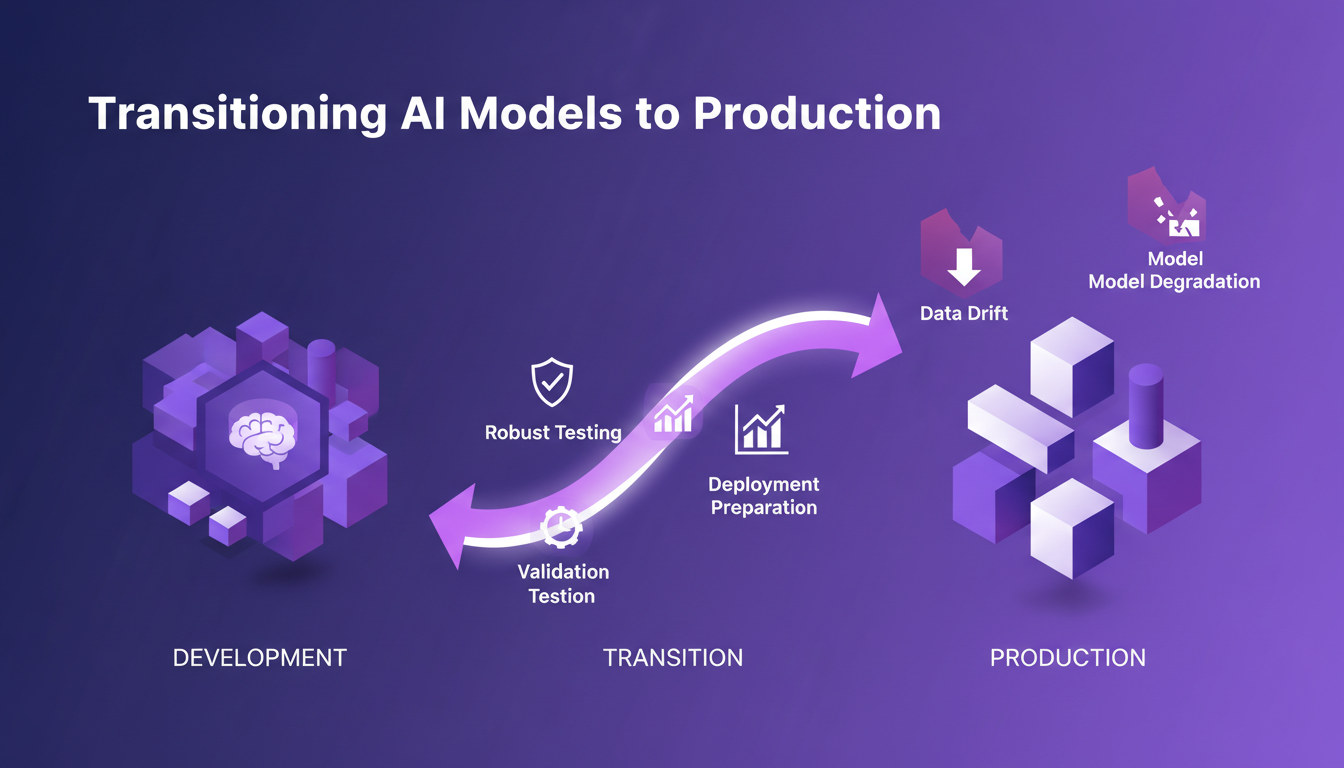

Transitioning AI Models to Production

Moving AI models from development to production is a challenge that can't be overlooked. It's not a formality: the model has to hold up against real-world inputs, which means robust testing and solid validation before deployment.

Watch out for data drift (production inputs diverging from the data the model was built and tested on) and gradual model degradation, both of which can erode performance without any obvious failure. A solid CI/CD pipeline makes it practical to rerun the same checks on every change. I use golden datasets, curated inputs with known-good outputs, as a stable benchmark.

- Robust testing before deployment

- Beware of data drift

- CI/CD pipeline for efficiency

- Golden data sets for reliable benchmarking
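To make the golden dataset idea concrete, here is a minimal sketch of a benchmark gate that a CI/CD step could run before deployment. Everything in it is an assumption for illustration: `GoldenExample`, `golden_accuracy`, `passes_gate`, the `toy_model` stand-in, the exact-match comparison, and the 0.02 tolerance are hypothetical choices, not the setup described in the talk.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class GoldenExample:
    """One curated input paired with its known-good expected output."""
    input: str
    expected: str


def golden_accuracy(model: Callable[[str], str],
                    golden: Sequence[GoldenExample]) -> float:
    """Fraction of golden examples the model answers exactly right."""
    if not golden:
        raise ValueError("golden set must not be empty")
    hits = sum(1 for ex in golden if model(ex.input) == ex.expected)
    return hits / len(golden)


def passes_gate(accuracy: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Pass only if accuracy is no more than `tolerance` below the baseline."""
    return accuracy >= baseline - tolerance


def toy_model(text: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return text.strip().lower()


GOLDEN = [
    GoldenExample("  London ", "london"),
    GoldenExample("PARIS", "paris"),
    GoldenExample("Leeds", "leeds"),
    GoldenExample("York", "York"),  # the toy model gets this one wrong
]

accuracy = golden_accuracy(toy_model, GOLDEN)
gate_ok = passes_gate(accuracy, baseline=0.75)
print(f"accuracy={accuracy:.2f} gate={'pass' if gate_ok else 'fail'}")
```

In a pipeline, the step would exit with a non-zero status when the gate fails, so a regression blocks the release instead of reaching users. Exact match is the simplest comparison; free-text outputs usually need a looser scorer.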

Ensuring Operational Rigor in AI Systems

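Operational rigor isn't just a buzzword; it's essential for scalable AI. I often compare deterministic scoring (rule-based checks that return the same score for the same output) with non-deterministic scoring (such as an LLM grading an answer, where the score can vary between runs). What I've learned is that being clear about which kind of score you are looking at makes the results far easier to trust.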

I use AI observability tools like Braintrust to monitor system health. Parent spans are crucial for tracing and debugging: grouping every step of an interaction under one parent span lets me follow it from start to finish. Continuous monitoring and regular updates then keep the system's integrity intact.

- Difference between deterministic and non-deterministic scoring

- Using Braintrust for observability

- Tracking interactions with parent spans

- Continuous monitoring for system integrity
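The parent span idea is easier to see in code. The sketch below is a toy tracer built on the standard library only. It is not Braintrust's API: `Span`, `Tracer`, and `children_of` are hypothetical names, and the tracer is single-threaded for simplicity. It shows how each step of an interaction gets linked to the span that was open when it started.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


@dataclass
class Span:
    """One timed unit of work, linked to its parent by id."""
    name: str
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    parent_id: Optional[str] = None
    start: float = 0.0
    end: float = 0.0


class Tracer:
    """Collects spans and links each to the span open when it started."""

    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[Span] = []  # currently open spans, innermost last

    @contextmanager
    def span(self, name: str) -> Iterator[Span]:
        parent = self._stack[-1] if self._stack else None
        current = Span(name=name, parent_id=parent.span_id if parent else None)
        current.start = time.perf_counter()
        self.spans.append(current)
        self._stack.append(current)
        try:
            yield current
        finally:
            # Always close the span, even if the wrapped step raised.
            current.end = time.perf_counter()
            self._stack.pop()

    def children_of(self, span: Span) -> List[Span]:
        """Direct children of `span`, in the order they were started."""
        return [s for s in self.spans if s.parent_id == span.span_id]


tracer = Tracer()
with tracer.span("user_request") as root:  # the single parent span
    with tracer.span("retrieve_context"):
        pass  # placeholder for a retrieval step
    with tracer.span("llm_call"):
        pass  # placeholder for a model call

for child in tracer.children_of(root):
    print(child.name, f"{(child.end - child.start) * 1000:.3f} ms")
```

Because every child carries the parent's id, one slow or failing interaction can be pulled out of the logs as a single tree rather than as scattered events.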

AI Observability and Multi-Agent Systems

Working with AI observability tools, especially Braintrust, changed how I debug AI systems. Multi-agent systems are powerful but complex to orchestrate, and I've had to learn how to manage them effectively.

Using LLMs as judges in evaluations is a big step forward, but watch out for their limits: a judge's verdict can change from one run to the next. I manage agent interactions to avoid conflicts and keep the system efficient. There's always a trade-off between flexibility and control.

- Using Braintrust for observability

- Orchestrating multi-agent systems

- LLMs as judges in evaluations

- Managing interactions to avoid conflicts
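Here is a small sketch of how an LLM-as-judge score differs from a deterministic one, and one common way to steady it. The judge is a hypothetical stand-in: `make_noisy_judge` simulates a grader that is usually right and sometimes flips, so no real model or vendor API is called. `exact_match` and `majority_verdict` are illustrative names of my own.

```python
import random
from collections import Counter
from typing import Callable


def exact_match(output: str, expected: str) -> float:
    """Deterministic scorer: the same inputs always give the same score."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def majority_verdict(judge: Callable[[str, str], str],
                     question: str, answer: str, samples: int = 5) -> str:
    """Call a non-deterministic judge several times; keep the most common verdict."""
    if samples < 1:
        raise ValueError("samples must be at least 1")
    votes = Counter(judge(question, answer) for _ in range(samples))
    return votes.most_common(1)[0][0]


def make_noisy_judge(seed: int, flip_rate: float = 0.2) -> Callable[[str, str], str]:
    """Hypothetical stand-in for an LLM judge: usually right, sometimes flips."""
    rng = random.Random(seed)

    def judge(question: str, answer: str) -> str:
        # Toy grading rule, purely for illustration.
        correct = "pass" if "platform" in answer.lower() else "fail"
        if rng.random() < flip_rate:
            return "fail" if correct == "pass" else "pass"
        return correct

    return judge


judge = make_noisy_judge(seed=7)
verdict = majority_verdict(judge, "Where does the train leave from?", "Platform 4")
print("deterministic:", exact_match(" Platform 4 ", "platform 4"))
print("judge (majority of 5):", verdict)
```

An odd number of samples avoids ties between two verdicts. Voting reduces run-to-run noise but not systematic bias, so it still helps to spot-check the judge against human-labelled examples.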

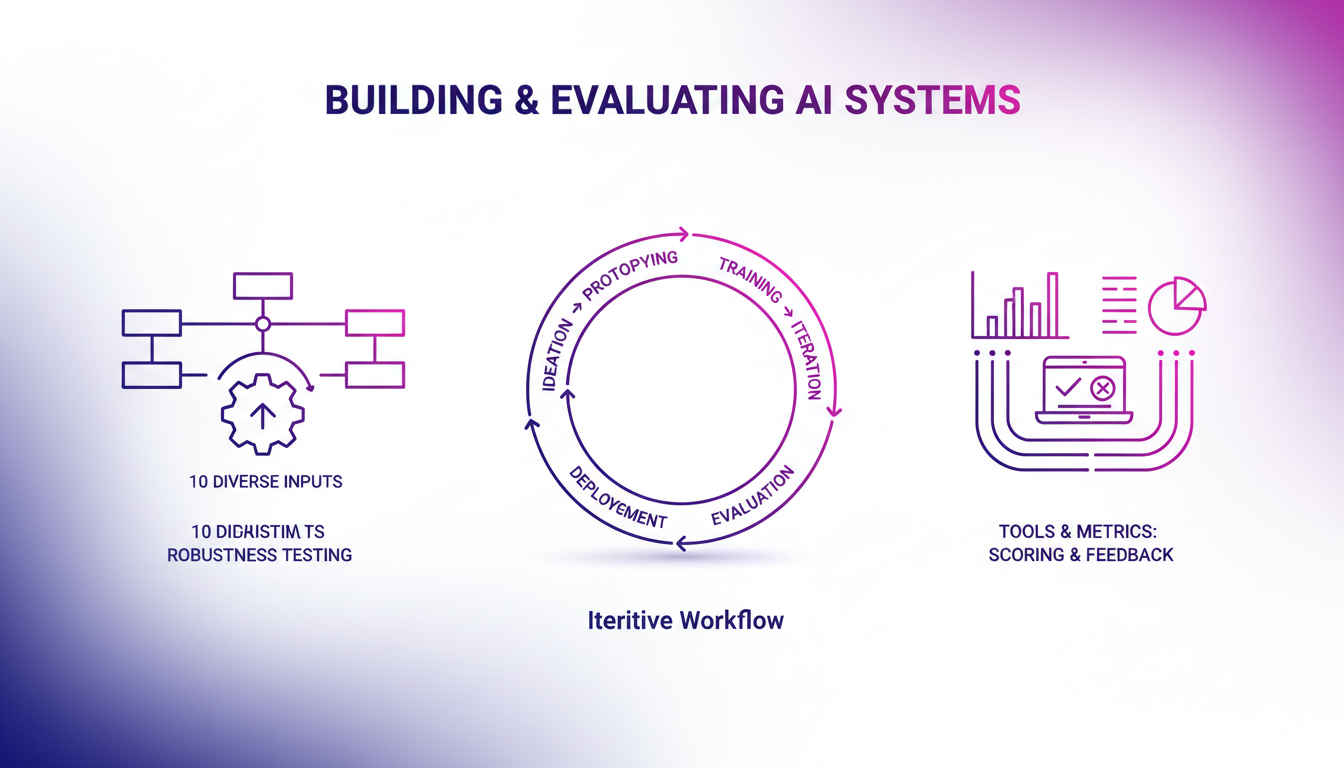

Building and Evaluating AI Systems

Building AI systems is an iterative process that runs from ideation to deployment. Evaluation is key: I create 10 diverse inputs to test system robustness.

I discuss the tools and metrics I use for scoring and feedback loops. Collaboration is crucial; I highlight the tools that facilitate teamwork. Continuous improvement and learning from failures are essential.

- Iterative building process

- Creating diverse inputs for evaluation

- Tools and metrics for scoring

- Collaboration tools for teamwork

- Continuous improvement and learning from failures
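As a sketch of what "10 diverse inputs" can look like in practice, the harness below runs ten cases across several categories and reports where the system fails. The cases, the `toy_assistant` stand-in, and the substring-based pass rule are all assumptions for illustration. They are not Trainline's actual test set or scoring.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Case:
    category: str      # what kind of input this is
    prompt: str        # what the user sends
    must_contain: str  # substring a good answer should include


def toy_assistant(prompt: str) -> str:
    """Hypothetical stand-in for the system under test."""
    p = prompt.lower()
    if "refund" in p:
        return "You can request a refund from your bookings page."
    if "delay" in p:
        return "Your train is delayed; you may be eligible for compensation."
    if "platform" in p:
        return "Platform information appears on the departure board."
    return "Sorry, I can't help with that yet."


CASES = [
    Case("happy_path", "How do I get a refund?", "refund"),
    Case("happy_path", "Is my train delayed?", "compensation"),
    Case("happy_path", "Which platform for the 09:15?", "platform"),
    Case("typo", "How do I get a refnd?", "refund"),
    Case("typo", "Wich platform is it?", "platform"),
    Case("multi_intent", "My train is delayed, can I get a refund?", "compensation"),
    Case("out_of_scope", "What's the weather in Leeds?", "can't help"),
    Case("out_of_scope", "Write me a poem", "can't help"),
    Case("empty", "", "can't help"),
    Case("long_input", "refund " * 200, "refund"),
]


def run_eval(system: Callable[[str], str], cases: List[Case]) -> Dict[str, object]:
    """Run every case and summarise pass rates overall and per category."""
    results = [(c, c.must_contain.lower() in system(c.prompt).lower()) for c in cases]
    by_category: Dict[str, List[float]] = {}
    for case, passed in results:
        by_category.setdefault(case.category, []).append(1.0 if passed else 0.0)
    return {
        "pass_rate": mean(1.0 if passed else 0.0 for _, passed in results),
        "by_category": {cat: mean(scores) for cat, scores in by_category.items()},
        "failures": [case.prompt for case, passed in results if not passed],
    }


report = run_eval(toy_assistant, CASES)
print(report["pass_rate"], report["failures"])
```

The per-category breakdown is the useful part of the feedback loop: it shows whether failures cluster around typos, mixed intents, or edge cases, which tells you what to fix first.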

Managing AI Systems and Continuous Improvement

Managing AI systems requires constant vigilance and adaptability. Remediation processes are essential for handling failures. Continuous improvement isn't optional; it's a core part of my workflow.

I share how I keep the team aligned and motivated through regular updates. Feedback loops play a key role in refining AI models and systems.

- Vigilance and adaptability for management

- Remediation processes for failures

- Continuous improvement at the core of the workflow

- Regular updates to keep the team motivated

- Feedback loops for refining models

Shipping complex AI applications isn't just about technical prowess. It's also about operational rigor and effective collaboration. First, the quality of the application rests on our ability to integrate AI smoothly into existing systems. Second, AI models need to be scalable and well orchestrated to avoid crashing in production. Finally, observability is key: with Braintrust, I could trace individual interactions under a single parent span, which made debugging far easier. But watch out, it still requires constant vigilance.

Looking ahead, I believe transforming your AI deployment strategy starts with an honest assessment of your current workflows. Are you ready to take the leap? I encourage you to watch the full video 'Shipping complex AI applications — Braintrust & Trainline' on YouTube. It's well worth it if you’re serious about evolving your approaches!

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

YC Paper Club: Goals and Structure

I joined YC Paper Club last year, and honestly, it's been a game changer for how I understand AI research. Picture a group where we dive deep into AI research papers and discuss practical applications. This isn't just another AI meetup. If you're serious about AI, this is where you need to be. The club keeps it under 100 people, so you really get to build strong connections (and the dinners help). Talks are available online, making it accessible to everyone. But be warned, it's intense — be ready to dig deep into the material and share your insights. It's a space where we build, not just observe.

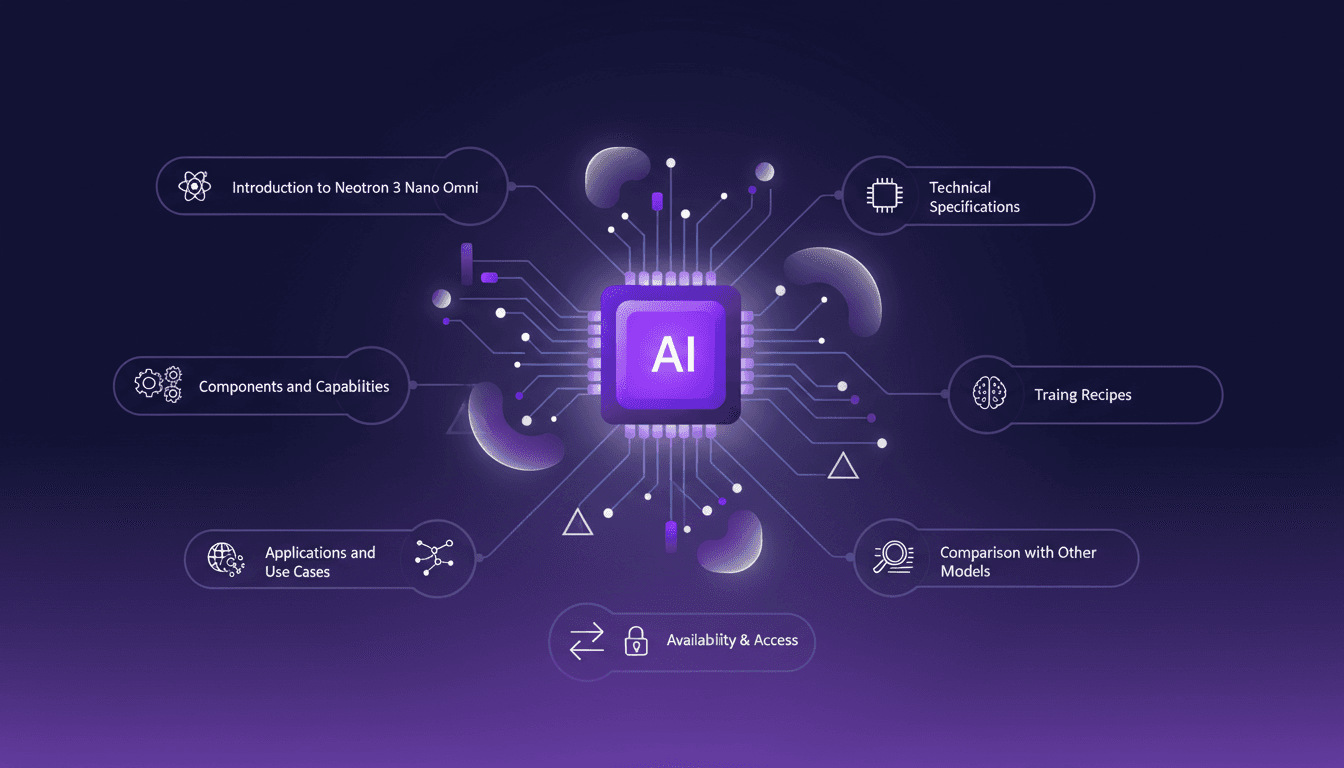

Mastering Neotron 3 Nano Omni: Multimodal Intelligence

I dove into NVIDIA's Neotron 3 Nano Omni and discovered how this powerhouse of multimodal intelligence can redefine our workflows. It's not just hype—it's a game changer, but with some caveats. By combining vision and audio encoding with a transformer mixture of experts model, this tech offers impressive possibilities. I started by connecting the dots between its components, then explored how to harness it effectively and avoid common pitfalls. Whether for software cybersecurity or other applications, Neotron 3 Nano Omni is a powerful tool, but watch out for context limits. I'm sharing my experiences to help you avoid mistakes I made and maximize business impact.

Selling Salam City: Steps and Challenges

In the restaurant business, I've learned that selling a place like Salam City isn't just about numbers. It's about dreams and responsibilities. This is the story of a man torn between his dream to reunite with his wife in America and his ties to his restaurant. I navigated this journey with him, weighing each step and decision. Imagine standing in his shoes: $200,000 for a dream, but also a legacy to let go. Join me in this intricate journey where every choice matters.

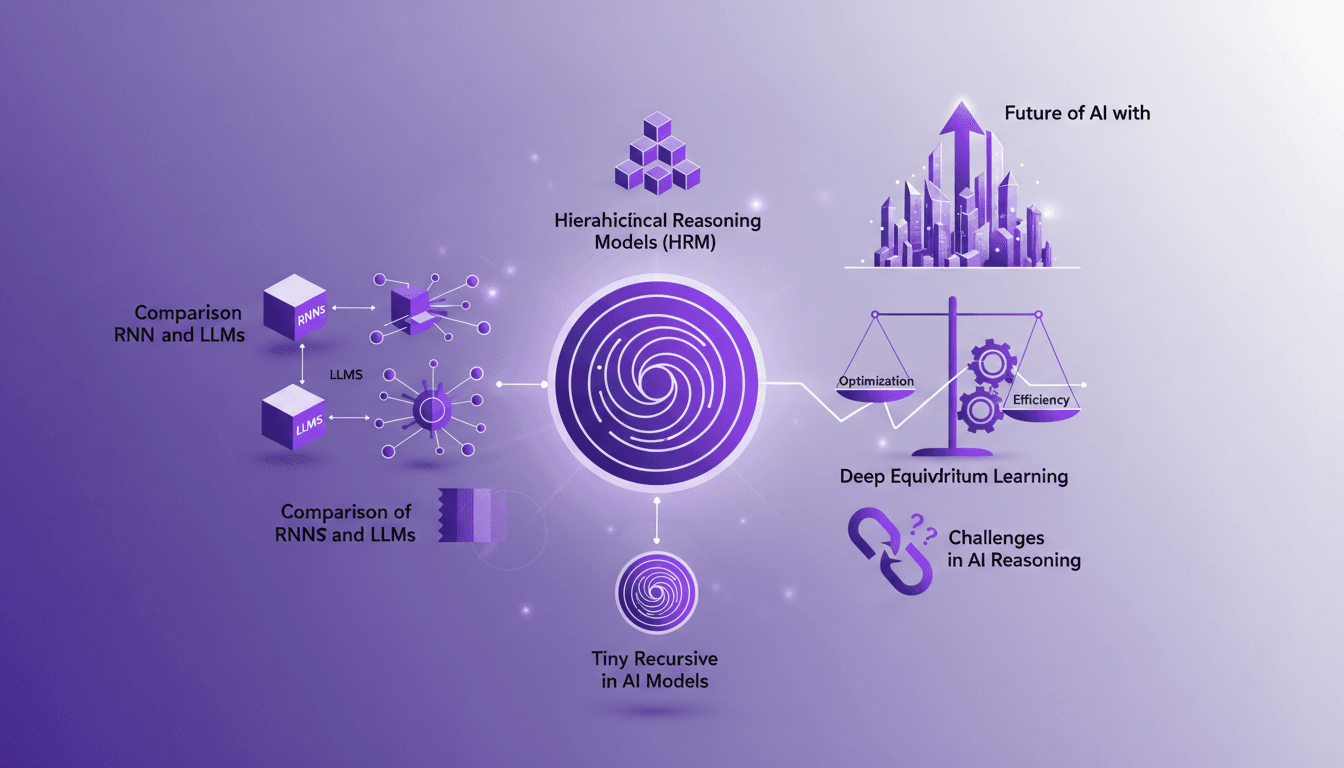

Recursion in AI: Transforming Models

I've spent countless hours tweaking AI models, and let me tell you, recursion is the game changer we've been waiting for. Forget the race for more parameters; now it's about intelligence. While traditional models hit scaling walls, recursion offers a fresh perspective. We're diving into how it could redefine AI efficiency and capability. We'll discuss hierarchical reasoning models, tiny recursive models, deep equilibrium learning, and the challenges of optimization. If you've ever been frustrated by scalability limits, you're going to love this new paradigm.

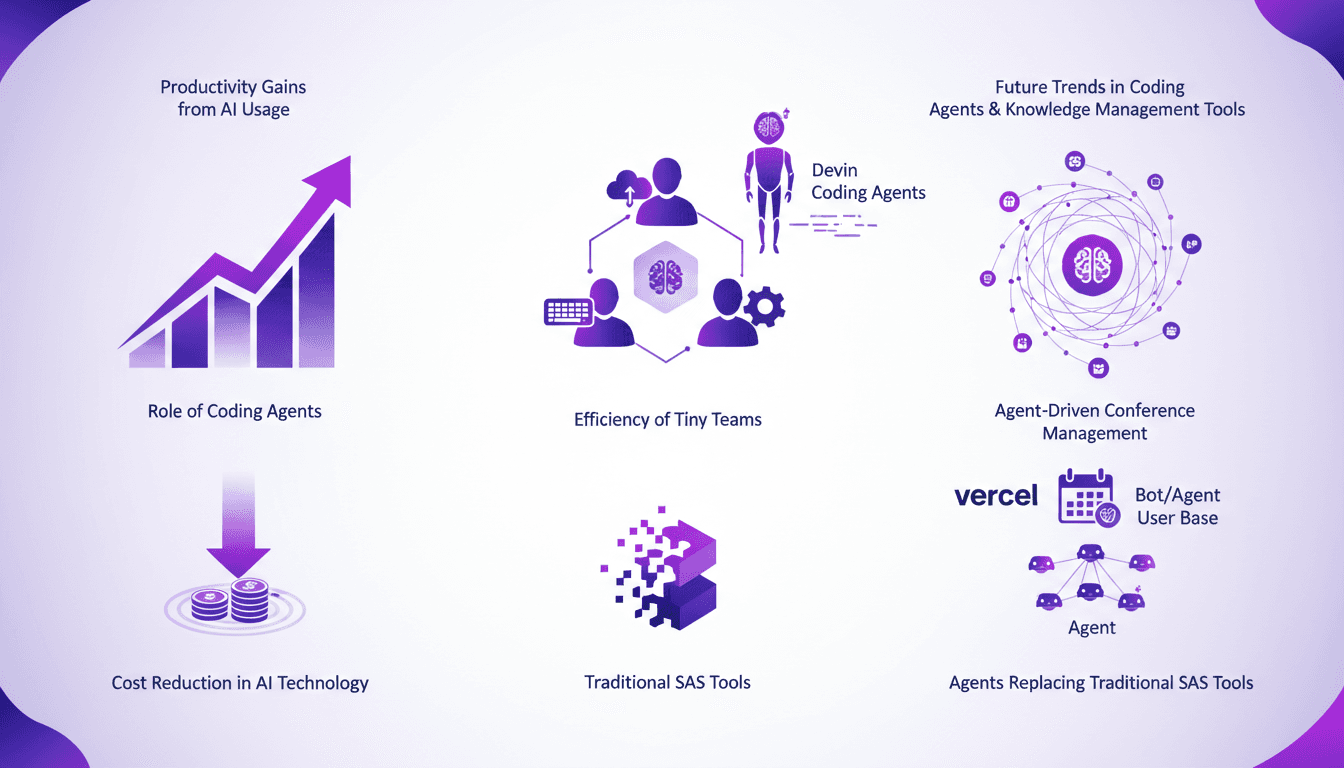

Productivity Gains: AI Agents Empowering Teams

Ever felt like your team's too small to tackle big projects? I did too, until I started leveraging AI coding agents like Devin. These tiny team powerhouses are game-changers. Imagine running a $9 million business with just nine full-timers. With coding agents, it's possible. Let me show you how these tools boost productivity, cut costs, and transform how we work. We're talking about AI costs dropping 100-fold in a few short years. Join me as we explore what Devin and other agents can genuinely do for your team.