Boosting Agents with Supabase Skills: Our Approach

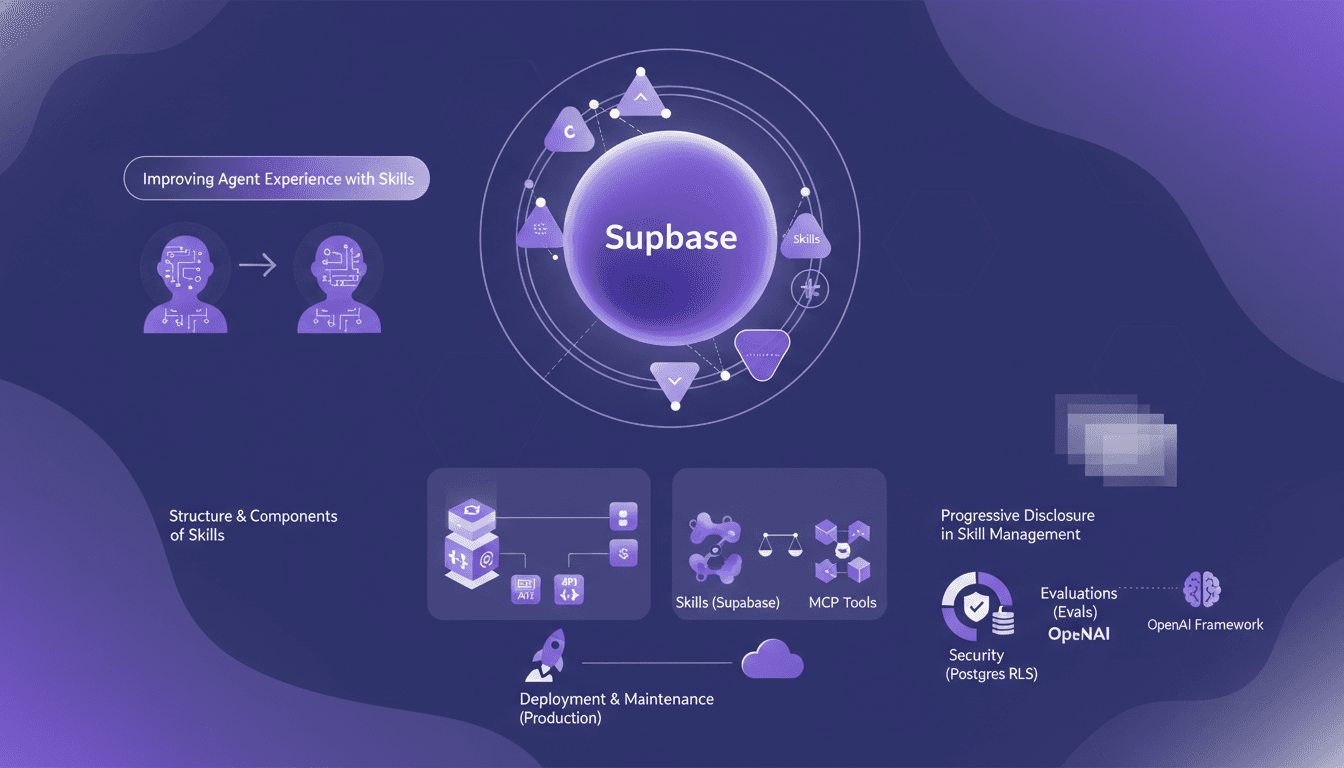

I spent two months knee-deep in Supabase, crafting skills for our AI agents. Let me walk you through how we made them not just good, but actually effective. In this article, I cover our approach to improving the agent experience with Supabase, break down the structure and components of skills, and compare them to MCP tools. We used evaluations to test agent behavior, with OpenAI's framework playing a pivotal role. From RLS in Postgres to deploying in production, each step came with its hurdles. I'll explain how I put all of this together and, importantly, what I wish I'd known earlier.

Agent skills are central to how AI agents work today. At Supabase, we refined ours using evaluations and OpenAI's framework to check that agents behave as intended. Picture setting up your RLS, then wrapping that in middleware, all while juggling agent behavior testing. Along the way I ran into challenges such as security in Postgres and production deployment. With our four-person team, we tested two conditions and built a multi-scenario evaluation structure. I'll share how I steered this work and the lessons I learned, so you can avoid getting burned.

Understanding Skills at Supabase: The Backbone of Our Agents

When we talk about skills at Supabase, we mean a structured format that guides our agents through their tasks. Think of a skill as a folder of instructions and files the agent needs to execute a workflow. At the core of each skill is the SKILL.md file, which holds key information such as the skill's name and description. It works like an index: the agent reads it to know what the skill does and when to use it.
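To make the folder-plus-index idea concrete, here is a minimal sketch of reading a skill's name and description from its markdown file. It assumes the metadata sits in a `---` delimited block of `key: value` lines at the top of the file; the article only says the file contains a name and a description, so the exact layout, the `SkillMeta` class, the `parse_skill_md` helper, and the `rls-policies` example skill are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SkillMeta:
    name: str
    description: str


def parse_skill_md(text: str) -> tuple[SkillMeta, str]:
    """Split a skill file into its metadata and its instruction body."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file must start with a '---' metadata block")
    try:
        # Index of the line that closes the metadata block.
        end = next(i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---")
    except StopIteration:
        raise ValueError("metadata block is never closed") from None

    fields = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)  # split on the first colon only
            fields[key.strip()] = value.strip()

    missing = {"name", "description"} - fields.keys()
    if missing:
        raise ValueError(f"missing required fields: {sorted(missing)}")

    body = "\n".join(lines[end + 1:]).strip()
    return SkillMeta(fields["name"], fields["description"]), body


# Hypothetical skill file, inlined so the example is self-contained.
EXAMPLE = """---
name: rls-policies
description: Write and review Row Level Security policies for Postgres tables.
---
# RLS policies

1. Enable RLS on the table.
2. Add one policy per operation.
"""

meta, body = parse_skill_md(EXAMPLE)
print(meta.name)
print(meta.description)
```

The name and description are the only parts the agent needs up front; the body below the metadata block holds the actual workflow instructions.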

We dedicated two intense months to developing these skills, and they were launched in October or November last year. This investment of time was crucial to fine-tuning agent performance and enhancing their capacity to adapt to varied contexts while remaining efficient.

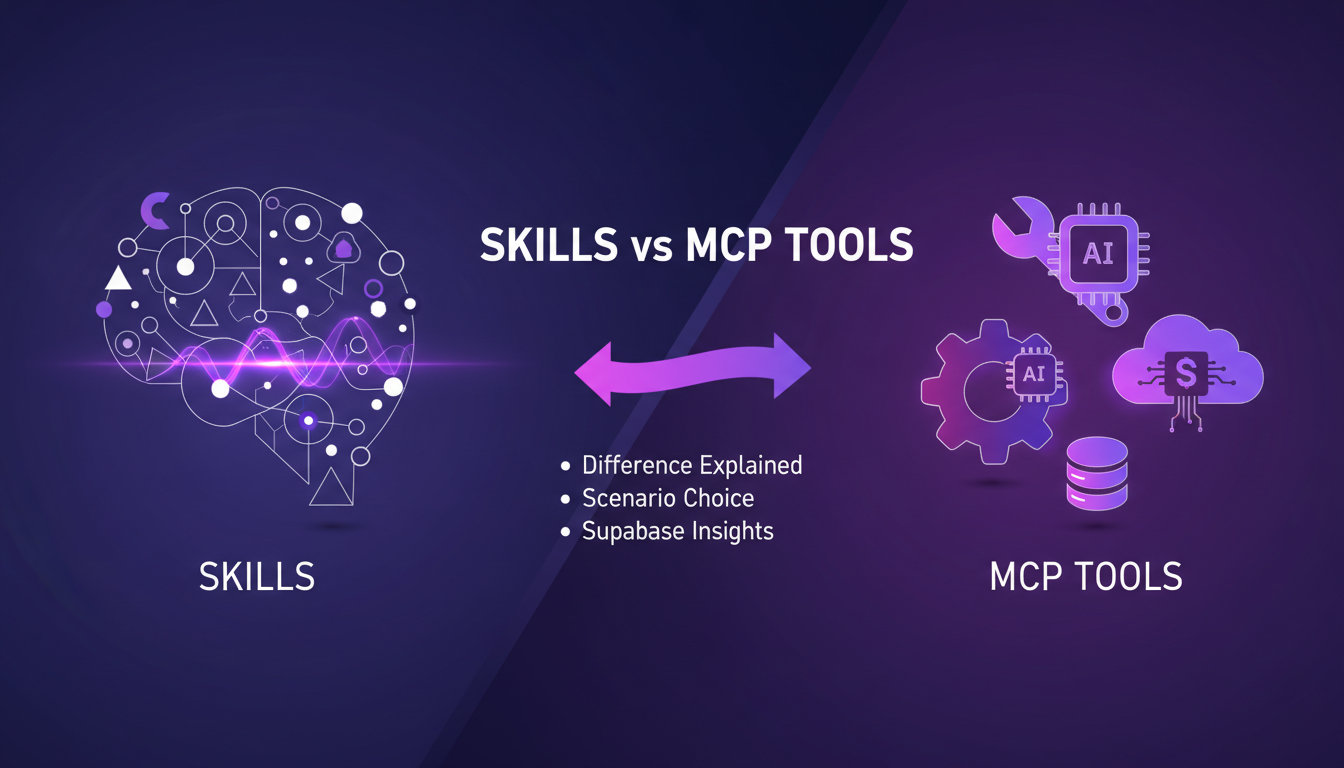

Skills vs MCP Tools: Making the Right Choice

There's always been a debate about using skills versus MCP tools. Skills are ideal for structuring complex operations, while MCP tools offer a more straightforward approach. At Supabase, we've had many discussions about these choices, and what emerged is a recommendation: use both for different purposes.

Skills offer flexibility and contextual adaptation, perfect for situations where the agent needs precise guidance. MCP tools, on the other hand, are preferable for tasks where direct data access is paramount. One doesn't exclude the other, but watch out for the trade-offs: skills can be heavier to maintain, especially if they become overly complex.

- Use skills for complex operations.

- Use MCP tools for direct data access.

- Combine both based on specific needs.

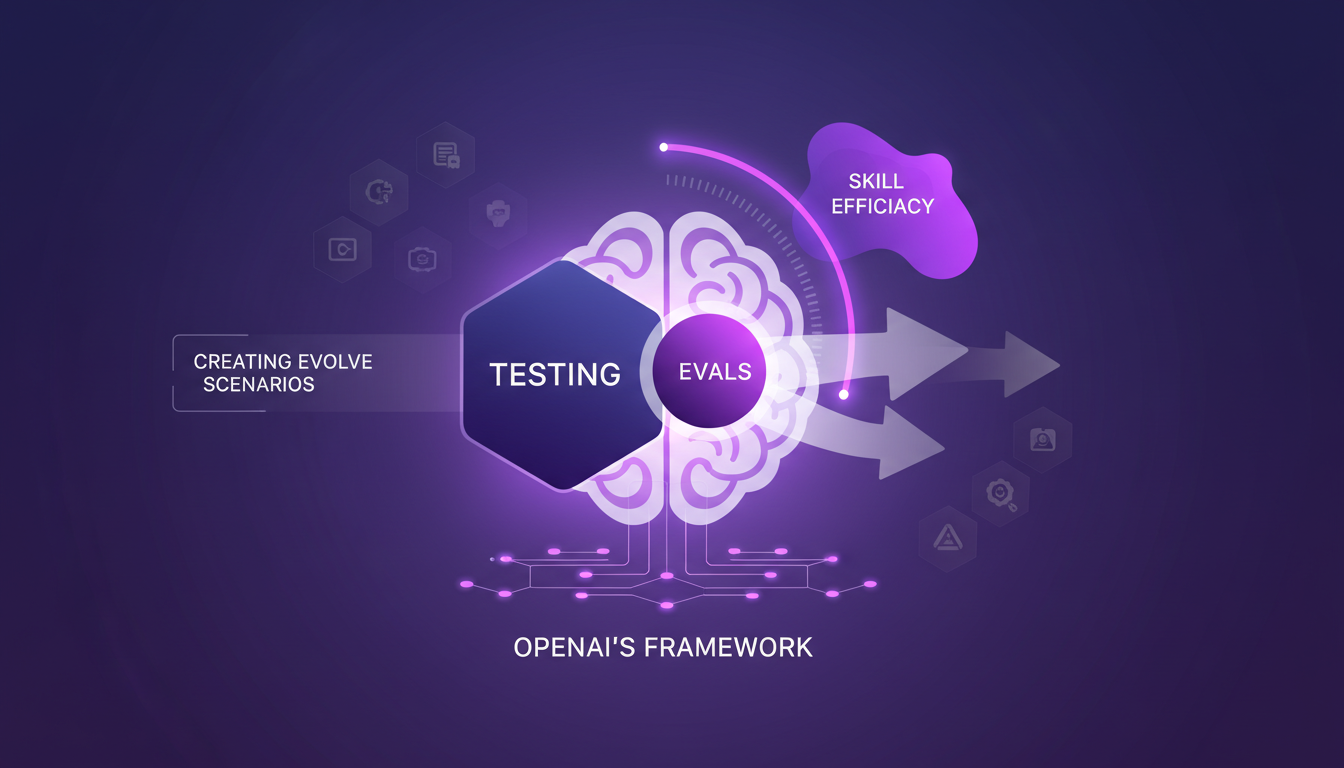

Testing and Evaluating: Ensuring Skill Efficacy

To make sure our skills truly work, we adopted evaluations (evals). The framework OpenAI proposed in January gave us a way to structure our tests. The idea is to test the behavior of an agent or model, which is non-deterministic, by creating eval scenarios.

We tested two specific conditions, and the results were revealing. This allowed us to readjust certain skills and ensure they are optimized for maximum performance. The key here is flexibility – being ready to adjust and adapt based on evaluation results.

- Use evaluations to test skills.

- Use the OpenAI framework to structure tests.

- Build eval scenarios for comprehensive testing.
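A multi-scenario evaluation over two conditions can be sketched roughly as follows. This is an illustration, not the harness we ran: the article does not say what the two conditions were, so the sketch assumes "without the skill" versus "with the skill". The `fake_agent` function stands in for a real agent call, and its success rates are made-up numbers. Because agent output is non-deterministic, each scenario runs many times and the result is reported as a pass rate rather than a single pass or fail.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    prompt: str
    check: Callable[[str], bool]  # grader: True when the output is acceptable


def fake_agent(prompt: str, with_skill: bool, rng: random.Random) -> str:
    """Stand-in for a real agent call (hypothetical behavior)."""
    success_rate = 0.9 if with_skill else 0.5  # made-up numbers for the demo
    if rng.random() < success_rate:
        return "alter table todos enable row level security;"
    return "select * from todos;"


def pass_rate(scenario: Scenario, with_skill: bool, trials: int, rng: random.Random) -> float:
    """Run one scenario repeatedly and return the fraction of passing outputs."""
    passed = sum(
        scenario.check(fake_agent(scenario.prompt, with_skill, rng))
        for _ in range(trials)
    )
    return passed / trials


def run_evals(scenarios: list[Scenario], trials: int = 50, seed: int = 0) -> dict:
    """Evaluate every scenario under both conditions with a seeded RNG."""
    rng = random.Random(seed)
    report = {}
    for s in scenarios:
        report[s.name] = {
            "baseline": pass_rate(s, False, trials, rng),
            "with_skill": pass_rate(s, True, trials, rng),
        }
    return report


scenarios = [
    Scenario(
        name="enable-rls",
        prompt="Lock down the todos table so users only see their own rows.",
        check=lambda out: "enable row level security" in out.lower(),
    ),
    Scenario(
        name="no-unscoped-select",
        prompt="Secure the todos table before exposing it to the client.",
        check=lambda out: "select *" not in out.lower(),
    ),
]

report = run_evals(scenarios)
for name, rates in report.items():
    print(f"{name}: baseline={rates['baseline']:.0%} with_skill={rates['with_skill']:.0%}")
```

Seeding the random generator keeps this demo reproducible. With a real model you would keep the repeated trials and compare pass rates across the two conditions.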

Security and Progressive Disclosure: Safeguarding Our System

Row Level Security (RLS) in Postgres is a key element for controlling data access. At Supabase, we've put security measures in place around what our agents can reach. Progressive disclosure plays a crucial role here: only the information needed at a given moment is revealed, and the agent chooses when to load the rest.

This strategy has had a significant impact on agent development and security. We've learned that sometimes, less information is more beneficial, especially to avoid overload and improve agent efficiency.
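Progressive disclosure can be illustrated with a small lazy loader: the agent's context always carries the short summary, and the full instructions are read from disk only when the agent asks for them. The `LazySkill` class, the `loads` counter, and the folder layout are hypothetical names for this sketch, not our actual implementation.

```python
import tempfile
from pathlib import Path


class LazySkill:
    """Holds cheap metadata up front and defers reading the full instructions."""

    def __init__(self, folder: Path, name: str, description: str):
        self.folder = folder
        self.name = name
        self.description = description
        self._body = None
        self.loads = 0  # counts disk reads, only to make the laziness visible

    def summary(self) -> str:
        """Short line that is always safe to put in the agent's context."""
        return f"{self.name}: {self.description}"

    def instructions(self) -> str:
        """Full instructions, read on first use and cached afterwards."""
        if self._body is None:
            self._body = (self.folder / "SKILL.md").read_text(encoding="utf-8")
            self.loads += 1
        return self._body


with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp) / "rls-policies"
    folder.mkdir()
    (folder / "SKILL.md").write_text(
        "Enable RLS, then add one policy per operation.", encoding="utf-8"
    )

    skill = LazySkill(folder, "rls-policies", "Write Row Level Security policies.")
    context = [skill.summary()]            # always present in the prompt
    loads_before = skill.loads             # nothing read from disk yet
    context.append(skill.instructions())   # loaded only when the agent needs it
    skill.instructions()                   # second call is served from the cache

print(context[0])
print(f"disk reads: {skill.loads}")
```

The design choice is to pay the context cost of the full instructions only for the skills the agent actually uses, which is the "less information is more" effect described above.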

From Development to Deployment: Maintaining Skills in Production

Deploying skills in a production environment is a task in itself. We faced several challenges that we overcame through close collaboration with our product team, which consists of four people. Their role was crucial in ensuring everything ran smoothly.

For maintenance, it's essential to keep skills up-to-date and regularly check their relevance. The business impact is direct: well-deployed skills enhance overall efficiency and strengthen agent performance.

- Challenges of deploying skills in production.

- Importance of the product team in this process.

- Maintenance strategies for long-term efficacy.

Building effective agent skills at Supabase was quite the journey of testing, security, and strategic deployment. Here's what really stood out:

- I crafted eval scenarios to structure our evaluations, which has completely transformed our AI toolkit.

- With a product team of just four, we managed to deploy these skills while keeping security intact.

- Testing under two specific conditions, I saw a significant boost in agent experience.

These skills have become a cornerstone of our AI toolkit. Looking ahead, I'm confident this approach will continue refining our AI agents and enhancing their performance.

Ready to refine your AI agents? Dive into Supabase's approach and see the difference. I highly recommend checking out the original video by Pedro Rodrigues for a deeper understanding of how we made our agents truly effective.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

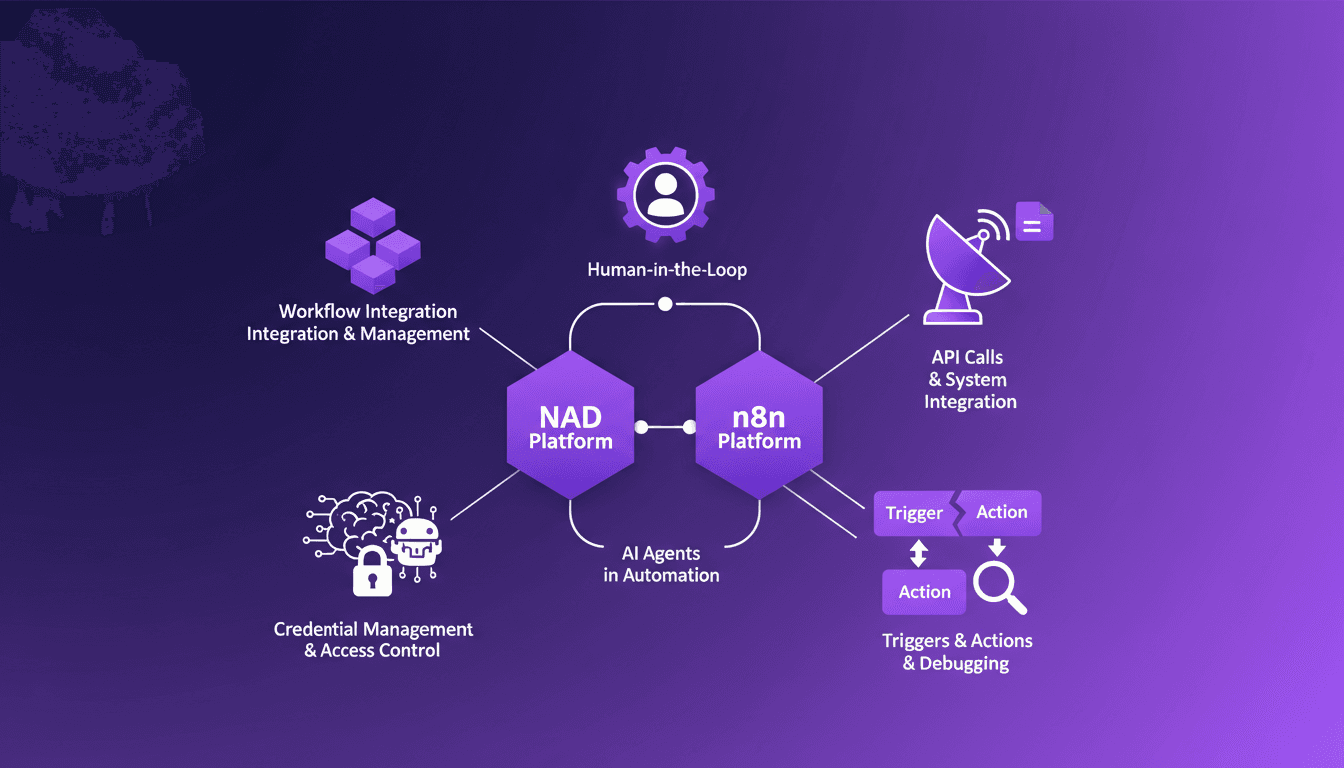

Human-in-the-Loop with n8n: Practical Integration

I dove into n8n and NAD to streamline my workflows, and let me tell you, it's been a game changer. But watch out, every tool has its quirks and limits. In this article, I'll show you how I integrate human-in-the-loop automation using these platforms. Automation isn't just about machines doing all the work. Sometimes you need a human touch to guide the process. That's where human-in-the-loop automation comes in, especially when using platforms like n8n and NAD. We'll explore API integrations, error management, and how to juggle AI agents in your workflows.

Integrating OpenClaw: Optimizing Daily Life

I handed over the keys to my life to an AI agent. Sounds risky, right? Yet, integrating OpenClaw transformed my daily routine in remarkable ways. Picture managing a 3,000-page Obsidian knowledge base, with tasks kicking off at 4 a.m. I’m sharing how I optimized my life with AI, from data management to building reliable routines. Fixing a Netflix payment failure in five minutes made me realize the potential of these tools. But beware, you need to filter and prioritize information, and handle sometimes brittle automations. Ultimately, it's a fascinating journey toward an AI-optimized life.

Software for Agents: Designing for the Future

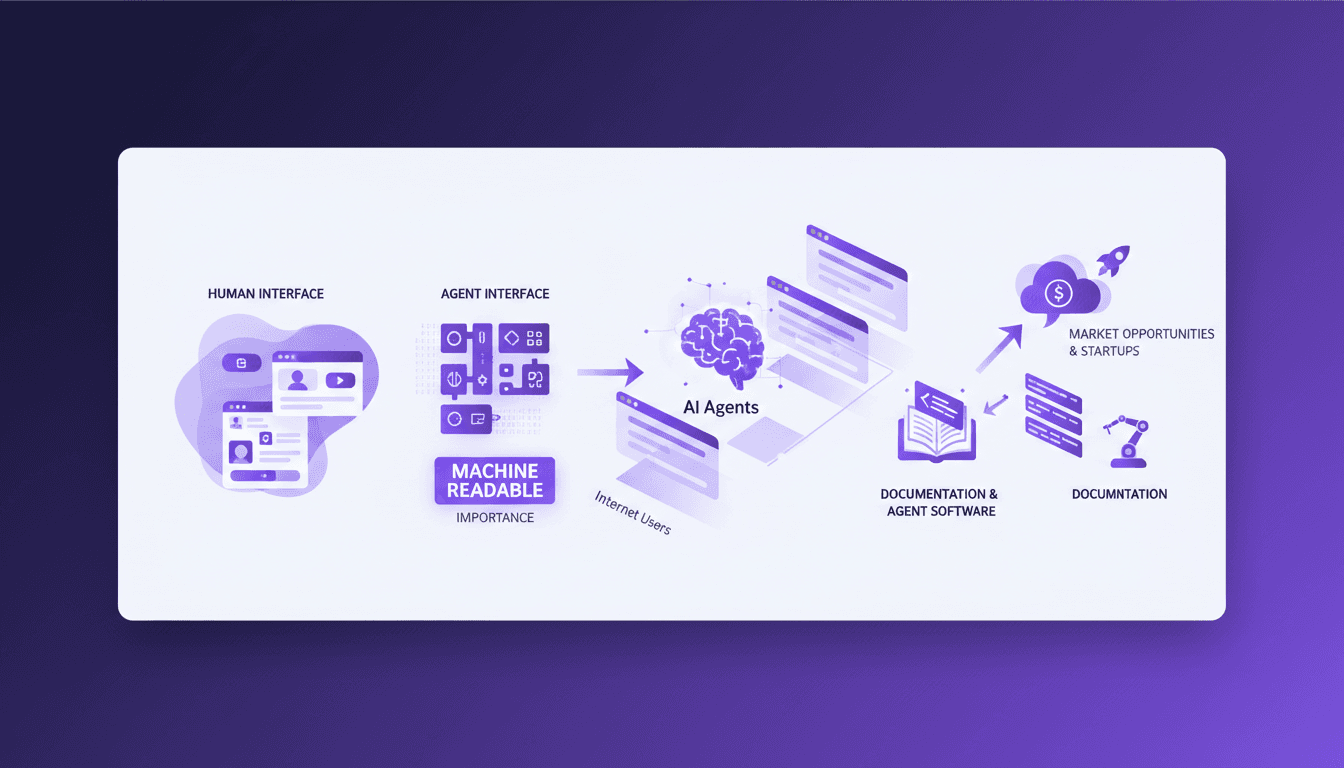

I still remember the moment I realized the next trillion internet users wouldn't be human—it would be AI agents. It hit me during a conference and shifted my whole approach to software design. Gone are the days when human-centric design was enough. Now, it's the era of agents, which means we need to rethink everything—from the interfaces we use to the market opportunities for startups. Machine-readable interfaces like APIs, MCPs, and CLIs are becoming crucial, and clear documentation is no longer optional. If you want to stay ahead, now's the time to pivot and think agent-first. Otherwise, you risk falling behind in this digital revolution.

Delivering Quality AI Apps: A Practitioner’s Guide

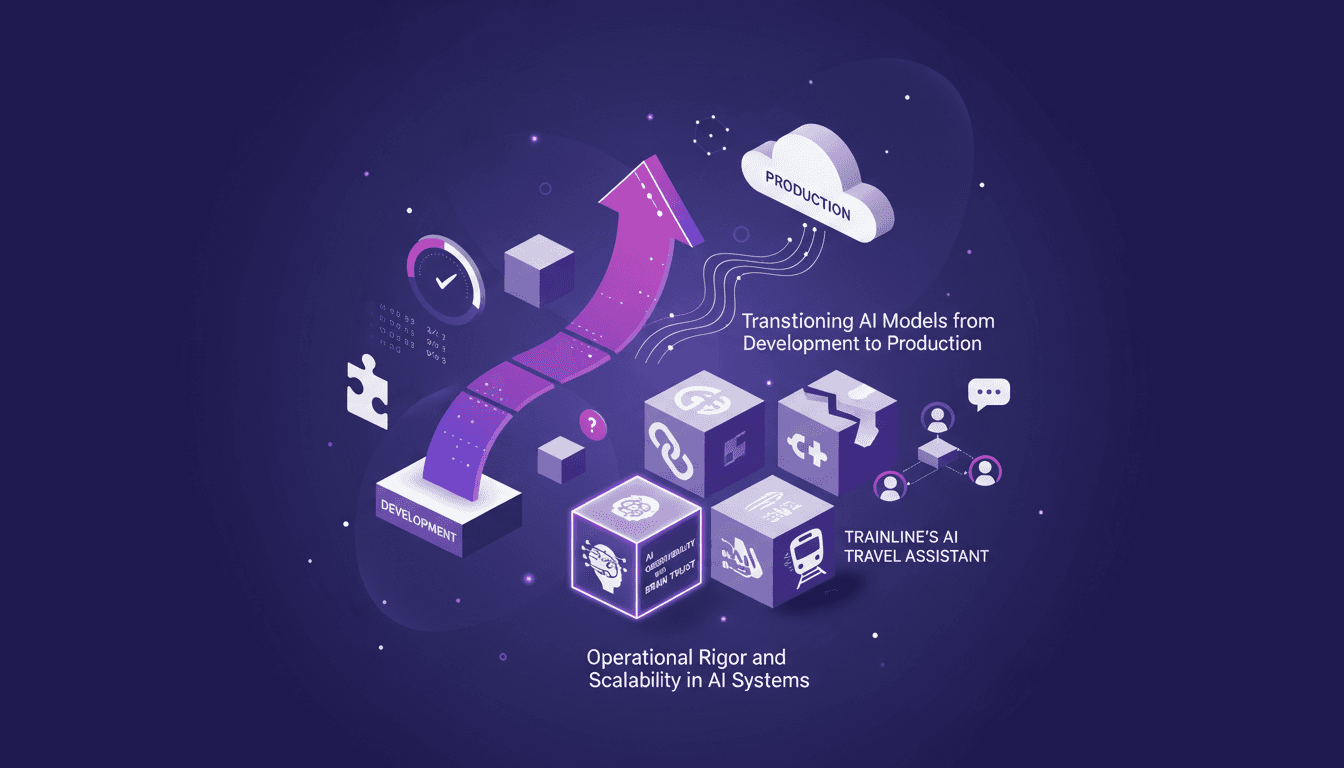

I've been knee-deep in AI deployment for years, and let me tell you, delivering quality AI applications is no walk in the park. From transitioning models to production to ensuring operational rigor, I've faced—and solved—my fair share of challenges. In this article, I'll walk you through my journey with AI systems, focusing on practical workflows, the tools I rely on, and the pitfalls I've learned to avoid. We'll dive into operational rigor and scalability, transitioning AI models from development to production, and Trainline's AI travel assistant with multi-agent systems. It's a hands-on guide for anyone looking to master the complex art of shipping quality AI apps.

Productivity Gains: AI Agents Empowering Teams

Ever felt like your team's too small to tackle big projects? I did too, until I started leveraging AI coding agents like Devin. These tiny team powerhouses are game-changers. Imagine running a $9 million business with just nine full-timers. With coding agents, it's possible. Let me show you how these tools boost productivity, cut costs, and transform how we work. We're talking about AI costs dropping 100-fold in a few short years. Join me as we explore what Devin and other agents can genuinely do for your team.