Code Mode: Slash API Calls Efficiently

I've been in the trenches with API calls, and let me tell you, Code Mode is a game changer. Picture this: a 99.9% reduction in token usage. I was skeptical at first, but when I saw the numbers, I had to admit it was a turning point. In today's tech landscape, where efficiency and security are paramount, Code Mode isn't just a buzzword. It's a practical approach that transforms how we interact with APIs, engage with AI models, and build software, and a real step toward more efficient and secure architectures. We're talking about massive reductions in API-call tokens through code generation, emergent behaviors in AI models, new software architectures built on the 'harness' concept, capability-based security and sandboxing, and even personalized software development with generative UIs. Let's dive in.

Understanding Code Mode and Its Real Benefits

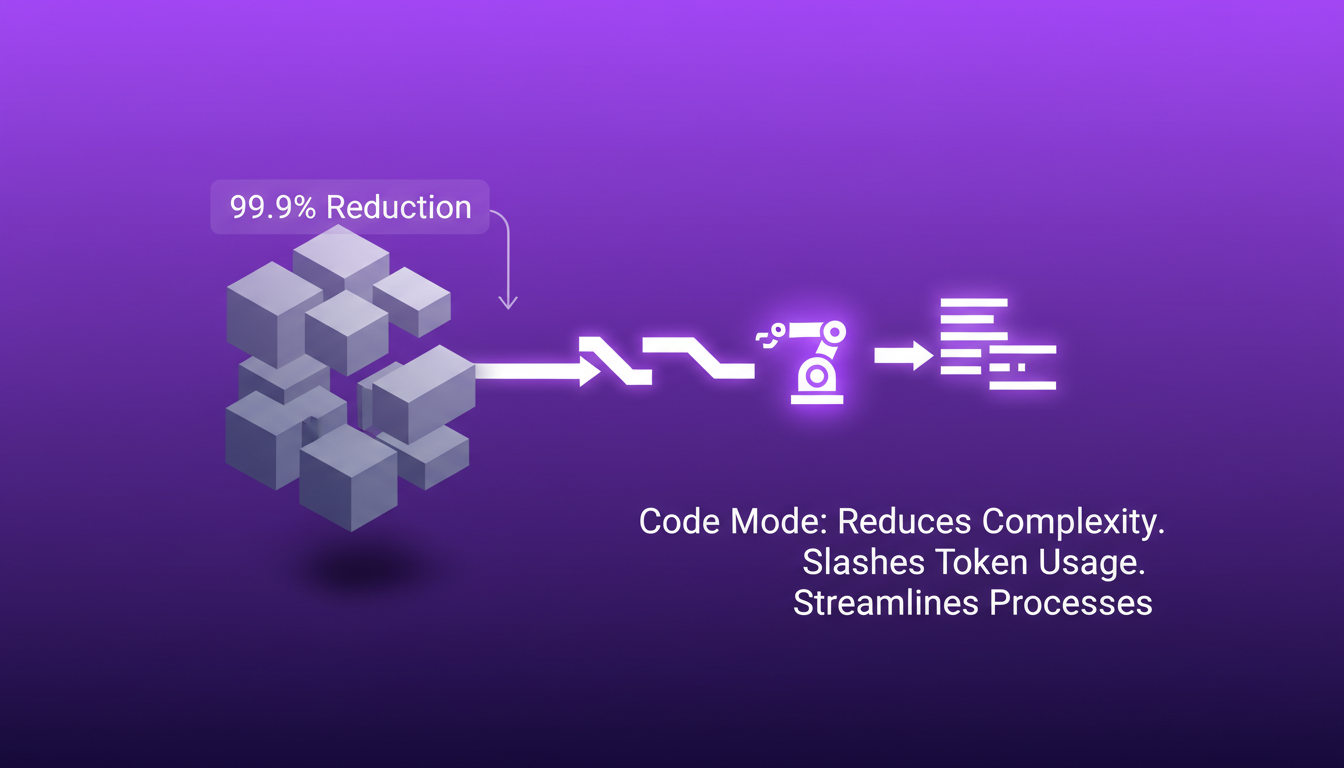

Code Mode isn't just some abstract concept to chat about over coffee; it's a genuine workflow that dramatically reduces complexity. Instead of having the model call tools one at a time and read every intermediate result, you let it write code against an API and run that code in a sandbox. I've personally watched it cut token usage by 99.9%. One change in the process, and everything becomes more efficient: once it's set up properly, the code does the heavy lifting and handles complex tasks without the usual back-and-forth between the model and the environment. It's not a magic bullet, though. The initial configuration can be a real headache, but once you get past it, it's worth the effort.
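To make that difference concrete, here is a minimal TypeScript sketch. Everything in it is hypothetical (the `ZoneApi` interface, its methods, the in-memory backend), and `modelWrittenTask` simply stands in for code a model would write and a sandbox would run. The point is the shape: four API calls happen inside one execution, and only a one-number summary goes back to the model, whereas classic tool calling would route each call and its result through the model's context.

```typescript
// Hypothetical API surface a harness might expose to model-written code.
interface ZoneApi {
  listZones(): Promise<string[]>;
  setSecurityLevel(zone: string, level: "low" | "high"): Promise<void>;
}

// In-memory stand-in for a real backend that counts API calls.
function makeFakeApi(): { api: ZoneApi; calls: () => number } {
  let count = 0;
  const levels = new Map<string, string>([
    ["a.example", "low"],
    ["b.example", "low"],
    ["c.example", "low"],
  ]);
  const api: ZoneApi = {
    async listZones() {
      count++;
      return Array.from(levels.keys());
    },
    async setSecurityLevel(zone, level) {
      count++;
      levels.set(zone, level);
    },
  };
  return { api, calls: () => count };
}

// What a model might write in Code Mode: the whole multi-step task as one program.
// With classic tool calling, each call below would be its own round trip through
// the model, with every intermediate result added to its context.
async function modelWrittenTask(api: ZoneApi): Promise<number> {
  const zones = await api.listZones();
  for (const zone of zones) {
    await api.setSecurityLevel(zone, "high");
  }
  return zones.length; // only this small summary goes back to the model
}

const demo = makeFakeApi();
modelWrittenTask(demo.api).then((updated) => {
  console.log(updated, demo.calls()); // 3 4
});
```

In a real setup the generated function would arrive as text and run in an isolated sandbox; here it is written inline only so the sketch stays self-contained.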

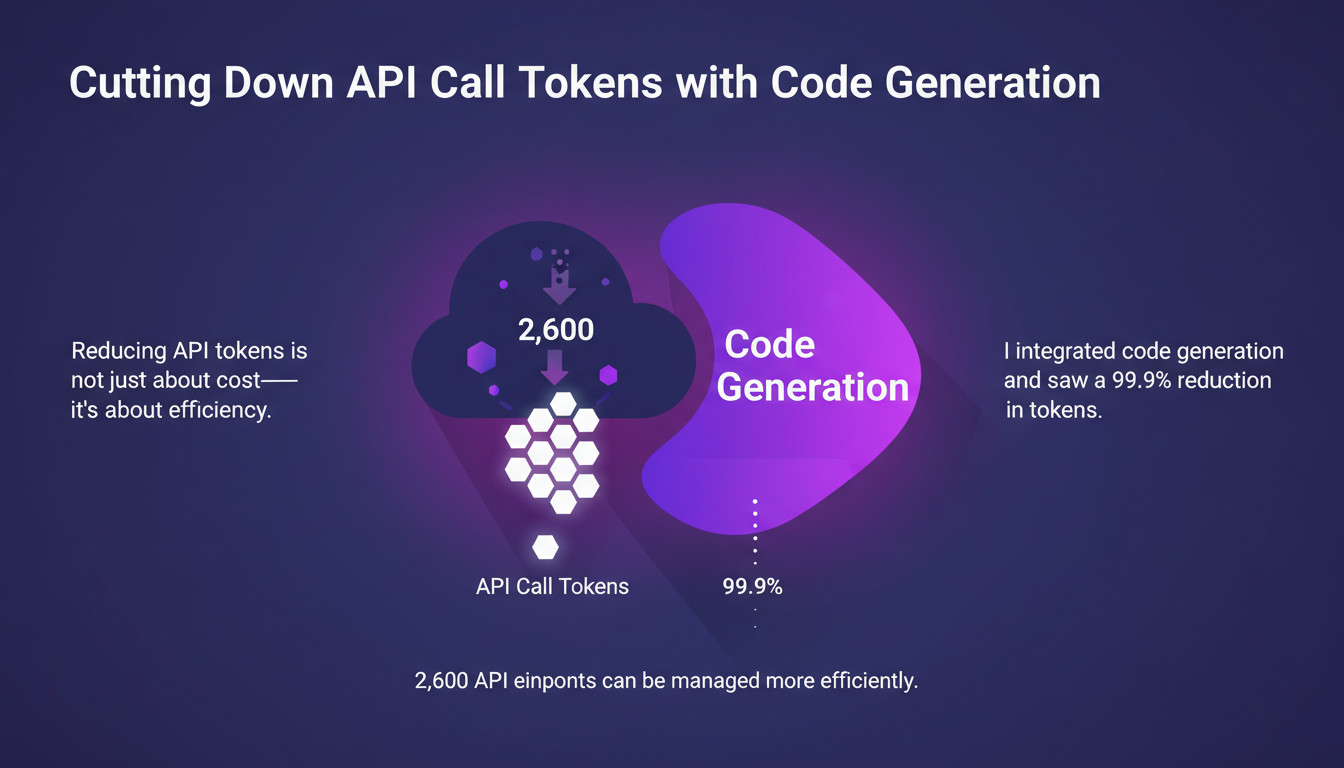

Cutting Down API Call Tokens with Code Generation

Reducing API token usage isn't just about cost; it's about efficiency. By integrating code generation, I saw a 99.9% reduction in the tokens needed, across an API with 2,600 endpoints. It's a radical shift. However, don't rely blindly on automation: always validate generated outputs before acting on them.
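One way to put "validate before you execute" into practice, sketched under assumptions: have the harness inspect the requests that generated code plans to send before any of them go out. The `PlannedRequest` shape, the path prefixes, and the allow/review/reject policy below are all hypothetical; adapt them to your own API.

```typescript
type Method = "GET" | "POST" | "PUT" | "PATCH" | "DELETE";

// A request that generated code intends to send (hypothetical shape).
interface PlannedRequest {
  method: Method;
  path: string;
}

type Verdict = "allow" | "needs-review" | "reject";

// Made-up policy: only known path prefixes are reachable, reads run automatically,
// and anything that writes or deletes waits for a human.
const ALLOWED_PREFIXES = ["/zones/", "/accounts/"];

function checkRequest(req: PlannedRequest): Verdict {
  if (!ALLOWED_PREFIXES.some((prefix) => req.path.startsWith(prefix))) return "reject";
  return req.method === "GET" ? "allow" : "needs-review";
}

// Check a whole plan before executing any part of it.
function reviewPlan(plan: PlannedRequest[]): Record<Verdict, PlannedRequest[]> {
  const result: Record<Verdict, PlannedRequest[]> = { allow: [], "needs-review": [], reject: [] };
  for (const req of plan) {
    result[checkRequest(req)].push(req);
  }
  return result;
}

const verdicts = reviewPlan([
  { method: "GET", path: "/zones/abc/settings" },
  { method: "DELETE", path: "/zones/abc" },
  { method: "POST", path: "/internal/debug" },
]);
console.log(verdicts.allow.length, verdicts["needs-review"].length, verdicts.reject.length); // 1 1 1
```

Keeping the check separate from execution means a human only has to look at the "needs-review" bucket, not at every generated line.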

Concretely, I integrated models capable of generating the code for API calls, which cut the total number of tokens from about 1.2 million to just 1,000. Even so, it's sometimes safer to check the results manually before moving on to the next step.
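A quick sanity check on those numbers, as a back-of-the-envelope TypeScript calculation. The inputs are the figures quoted in this article; the per-endpoint cost is simply derived from them (roughly 460 tokens of definitions per endpoint), not measured.

```typescript
// Figures quoted in this article.
const ENDPOINTS = 2600;
const TOOL_DEFINITION_TOKENS = 1200000; // cost of describing every endpoint up front
const CODE_MODE_TOKENS = 1000; // cost of the Code Mode interface

// Percentage of tokens saved when going from `before` to `after`.
function reductionPercent(before: number, after: number): number {
  return (1 - after / before) * 100;
}

const tokensPerEndpoint = TOOL_DEFINITION_TOKENS / ENDPOINTS;
const reduction = reductionPercent(TOOL_DEFINITION_TOKENS, CODE_MODE_TOKENS);

console.log(tokensPerEndpoint.toFixed(0)); // 462
console.log(reduction.toFixed(2)); // 99.92
```

The takeaway: the classic approach grows linearly with the number of endpoints, while a fixed-size code interface does not, which is why the savings get so large on big APIs.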

Harnessing Emergent Behaviors in AI Models

AI models can surprise you, and not always in a bad way. I've leveraged these emergent behaviors to optimize certain workflows. For instance, a model generated a tic-tac-toe app without us ever coding the game into the system. It's impressive, but watch out: AI isn't a silver bullet. Understand its limits, because sometimes manual intervention is still necessary.

"The ability to generate code quickly and efficiently enables tasks like DDoS protection to be handled in a single execution."

What I've learned: these behaviors can make you more efficient, but treat them as something to verify, not something to depend on.

New Software Architectures: The Harness Concept

The harness, the layer around the model that controls what it can access and runs the code it writes, is crucial for managing complex architectures. I've used this approach to orchestrate multiple services efficiently. The key is balancing flexibility and control. Don't over-engineer: simplicity often works best, and you need to know when to stop.
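Capability-based security is one concrete way to strike that balance. A minimal sketch, with a hypothetical `Api` type and a hypothetical `grant` helper: rather than letting generated code reach for global credentials or a full client, the harness passes in an object that carries only the methods it chose to grant. Object scoping in JavaScript is not a security boundary on its own; in a real setup the generated code would also run in an isolated sandbox with no other access.

```typescript
// Hypothetical full client the harness holds; generated code never sees it directly.
type Api = {
  listZones: () => string[];
  readSettings: (zone: string) => Record<string, string>;
  deleteZone: (zone: string) => void;
};

// Copy only the granted methods onto a fresh, frozen object.
// These are plain functions; class methods relying on `this` would need binding.
function grant<K extends keyof Api>(api: Api, allowed: readonly K[]): Pick<Api, K> {
  const scoped = {} as Pick<Api, K>;
  for (const key of allowed) {
    scoped[key] = api[key];
  }
  return Object.freeze(scoped);
}

const fullApi: Api = {
  listZones: () => ["a.example", "b.example"],
  readSettings: (zone) => ({ zone, securityLevel: "high" }),
  deleteZone: (zone) => {
    throw new Error(`refusing to delete ${zone} in this demo`);
  },
};

// Generated code would receive only this object: it can read, but cannot delete.
const readOnly = grant(fullApi, ["listZones", "readSettings"]);
console.log(readOnly.listZones().length); // 2
console.log("deleteZone" in readOnly); // false
```

The design choice is that authority comes from what you are handed, not from what you can find: if `deleteZone` was never passed in, the code has no way to call it.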

By implementing this structure, I've been able to streamline interactions and reduce friction points. However, don't fall into the trap of trying to control everything. Sometimes, allowing systems a bit of freedom can lead to unexpected yet effective solutions.
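The harness idea can be sketched as a small loop, under heavy assumptions: `generate` is a hypothetical stand-in for the model, programs are plain synchronous functions, and the only capability granted is an `add` function. The harness keeps the control (what the code can touch, how many attempts it gets), the model keeps the freedom (what code to write), and errors are fed back so it can correct itself.

```typescript
type ToolApi = { add: (a: number, b: number) => number };
type Program = (api: ToolApi) => number;
// Hypothetical model interface: task plus the last error (if any) in, program out.
type Generate = (task: string, lastError?: string) => Program;

interface HarnessResult {
  value?: number;
  attempts: number;
  errors: string[];
}

function runHarness(task: string, generate: Generate, maxAttempts = 3): HarnessResult {
  const api: ToolApi = { add: (a, b) => a + b }; // the only capability granted
  const errors: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    // Pass the most recent error (undefined on the first try) back to the "model".
    const program = generate(task, errors[errors.length - 1]);
    try {
      return { value: program(api), attempts: attempt, errors };
    } catch (e) {
      errors.push(e instanceof Error ? e.message : String(e));
    }
  }
  return { attempts: maxAttempts, errors };
}

// Hypothetical stand-in model: fails on the first try, fixes itself once it sees an error.
const fakeModel: Generate = (_task, lastError) =>
  lastError === undefined
    ? () => {
        throw new Error("api.sum is not a function");
      }
    : (api) => api.add(2, 3);

const result = runHarness("add 2 and 3", fakeModel);
console.log(result.value, result.attempts); // 5 2
```

Capping attempts is the "know when to stop" part: the model gets room to recover from its own mistakes, but not an unbounded loop.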

Generative UI and Personalized Software Development

Generative UI allows for personalized user experiences; when I implemented it, I saw a noticeable increase in user engagement. However, beware of over-customization: it can quickly become a maintenance nightmare.

The key is to focus on user needs, not just flashy features. At the end of the day, a well-designed user interface should meet real needs, not just be a showcase of what technology can do. Stay simple, and you'll see the results.
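One way to keep generative UI simple, sketched under the assumption that the model returns a JSON description of the interface rather than raw markup: let it compose only a small, fixed set of components, and do the rendering (and escaping) in your own code. The `UiNode` component set and the `render` function are hypothetical.

```typescript
// The only building blocks the model may use (hypothetical component set).
type UiNode =
  | { type: "heading"; text: string }
  | { type: "text"; text: string }
  | { type: "button"; label: string; action: string }
  | { type: "stack"; children: UiNode[] };

function escapeHtml(s: string): string {
  return s
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

function render(node: UiNode): string {
  switch (node.type) {
    case "heading":
      return `<h2>${escapeHtml(node.text)}</h2>`;
    case "text":
      return `<p>${escapeHtml(node.text)}</p>`;
    case "button":
      return `<button data-action="${escapeHtml(node.action)}">${escapeHtml(node.label)}</button>`;
    case "stack":
      return `<div>${node.children.map(render).join("")}</div>`;
    default:
      // The spec comes from a model, so reject anything outside the fixed component set.
      throw new Error(`unsupported component: ${(node as { type: string }).type}`);
  }
}

const spec: UiNode = {
  type: "stack",
  children: [
    { type: "heading", text: "Your zones" },
    { type: "button", label: "Set <high> security", action: "secure-all" },
  ],
};
console.log(render(spec));
// <div><h2>Your zones</h2><button data-action="secure-all">Set &lt;high&gt; security</button></div>
```

Personalization lives in how the model arranges these pieces; the number of pieces you have to maintain stays small.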

I've brought Code Mode into my workflows, and I can tell you, it's far from being just a theoretical concept. In practice, it's a game changer! First, I saw an incredible reduction in API tokens in under 20 minutes: an API with 2,600 endpoints went from about 1.2 million tokens to roughly 1,000. Then, the emergent behaviors in AI models genuinely enriched my development process. And you can craft new software architectures by building around a 'harness.'

- API Token Reduction: in under 20 minutes, the token cost of 2,600 endpoints dropped from 1.2 million to about 1,000 (99.9%)

- Emergent Behaviors: models produce useful results nobody explicitly built, like the tic-tac-toe app

- New Architectures: 'harnesses' give a clear structure for what the model can access, run, and return

But watch out, you've got to understand the limits and trade-offs, especially with complex architectures. It's a powerful tool, but not magic. To dive deeper, I highly recommend checking out the original video "Code Mode: Let the Code do the Talking - Sunil Pai, Cloudflare". Start integrating Code Mode today and see how it can transform your efficiency and security.

Go ahead, give it a try and share your experiences!

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

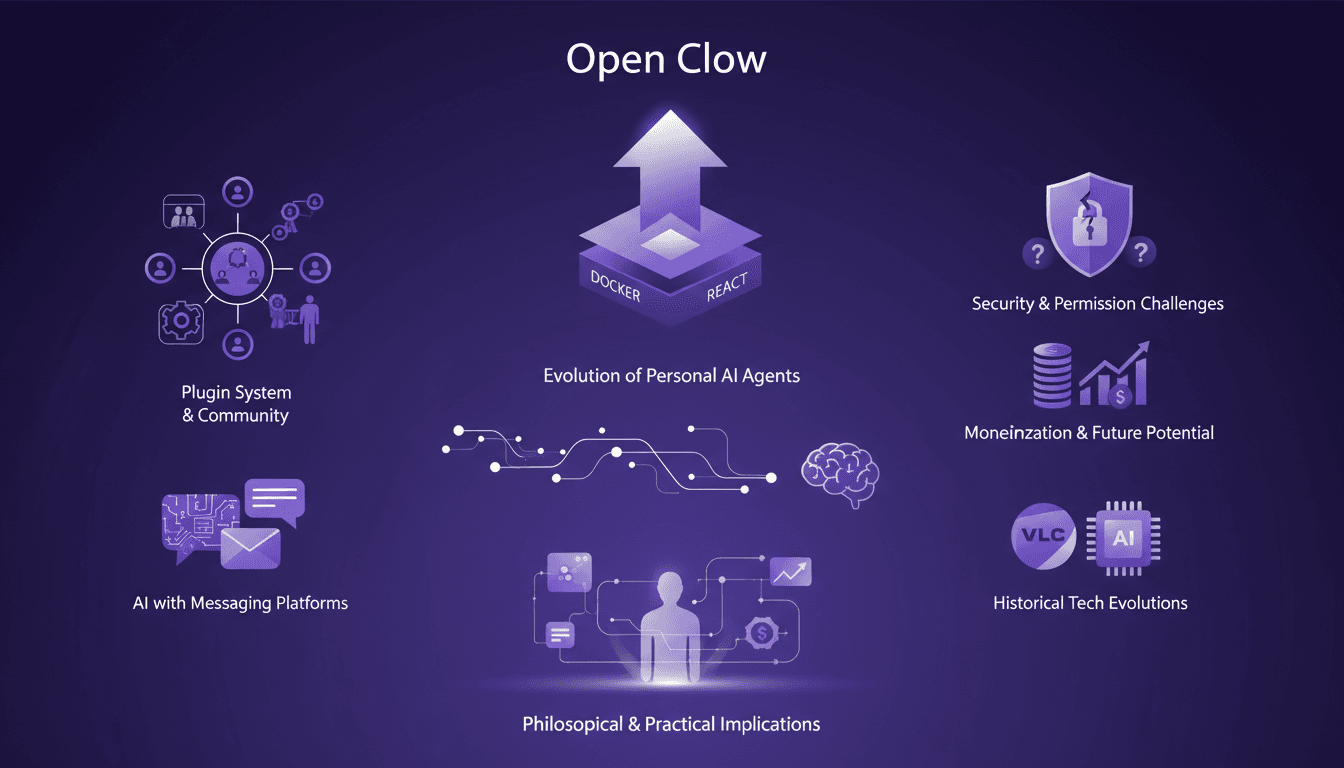

Open Claw Growth & Security: Practical Insights

I jumped into Open Claw five months ago, and it's been a whirlwind ride! This open-source project, boasting 30,000 GitHub stars, isn't just another project—it's a movement. But with growth come challenges, especially in security. I've been in the trenches tackling vulnerabilities (like that Gshjp issue with a CVSS score of 10). Collaborating with major companies also brought its own set of complexities. In this article, I share what's working, what's not, and where we're headed. Get ready for the real story, straight from the trenches.

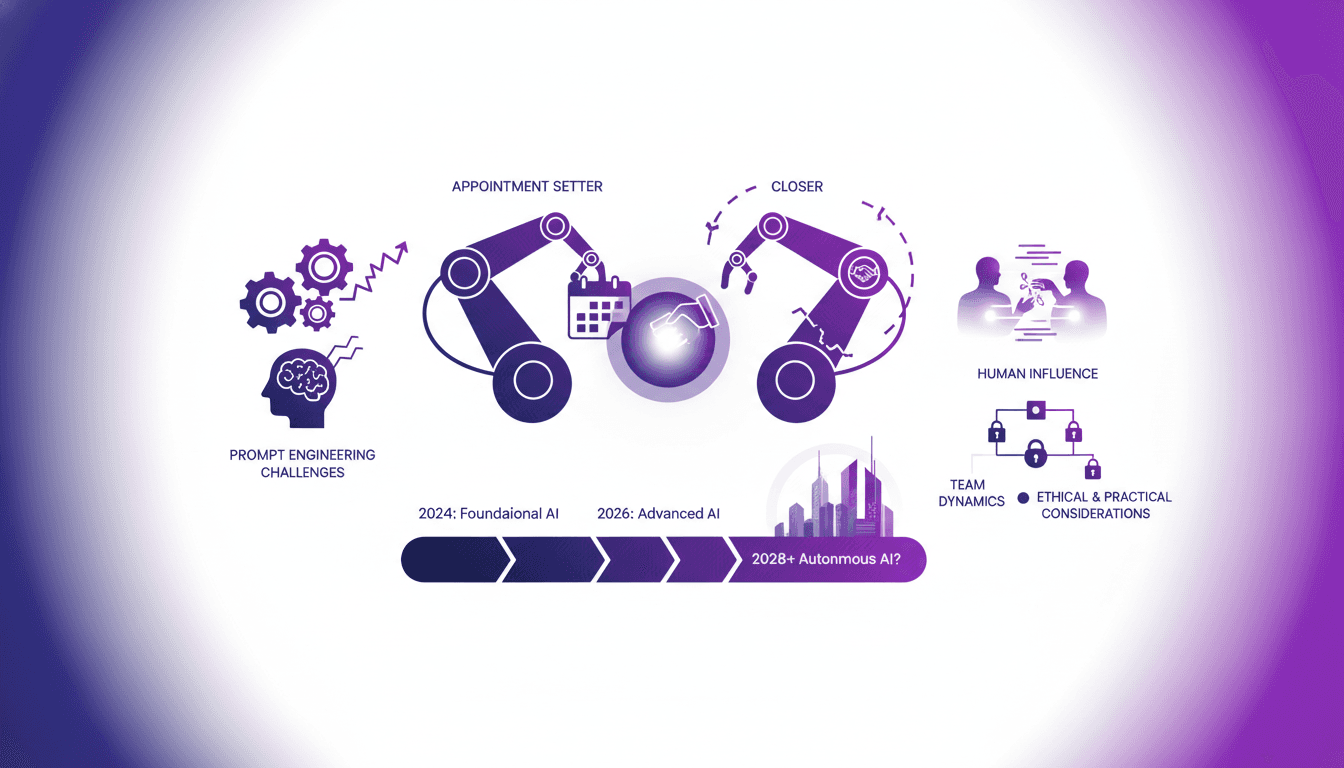

AI in Sales: Current Limits and Future Potential

I've been in sales for over a decade, and AI is seriously shaking things up. But let's be clear: AI isn't closing deals yet, but it's getting closer than you'd think. In this article, I'm diving into the real-world applications of AI in sales, the current limitations, and where we're heading. Let's talk about what works, what doesn't, and what's just around the corner. We'll tackle challenges in prompt engineering for sales, the impact on sales teams and business strategies, and the ethical and practical considerations. Get ready to see how AI might just revolutionize sales in the coming years.

Open Clow Surpasses Docker: Impact and Implications

I clearly remember when Open Clow surpassed Docker and React on GitHub. It felt like witnessing a paradigm shift. Suddenly, personal AI agents were more than just hypothetical—they became a burgeoning movement. With 265,000 stars, Open Clow is reshaping the open-source AI landscape. But it's not just about numbers; it's about the transformation of our daily workflows through these agents. Let's delve into Open Clow's evolution, its plug-in systems, community engagement, and the security challenges it poses. Watch out for permission pitfalls and monetization, because the future of AI is happening now.

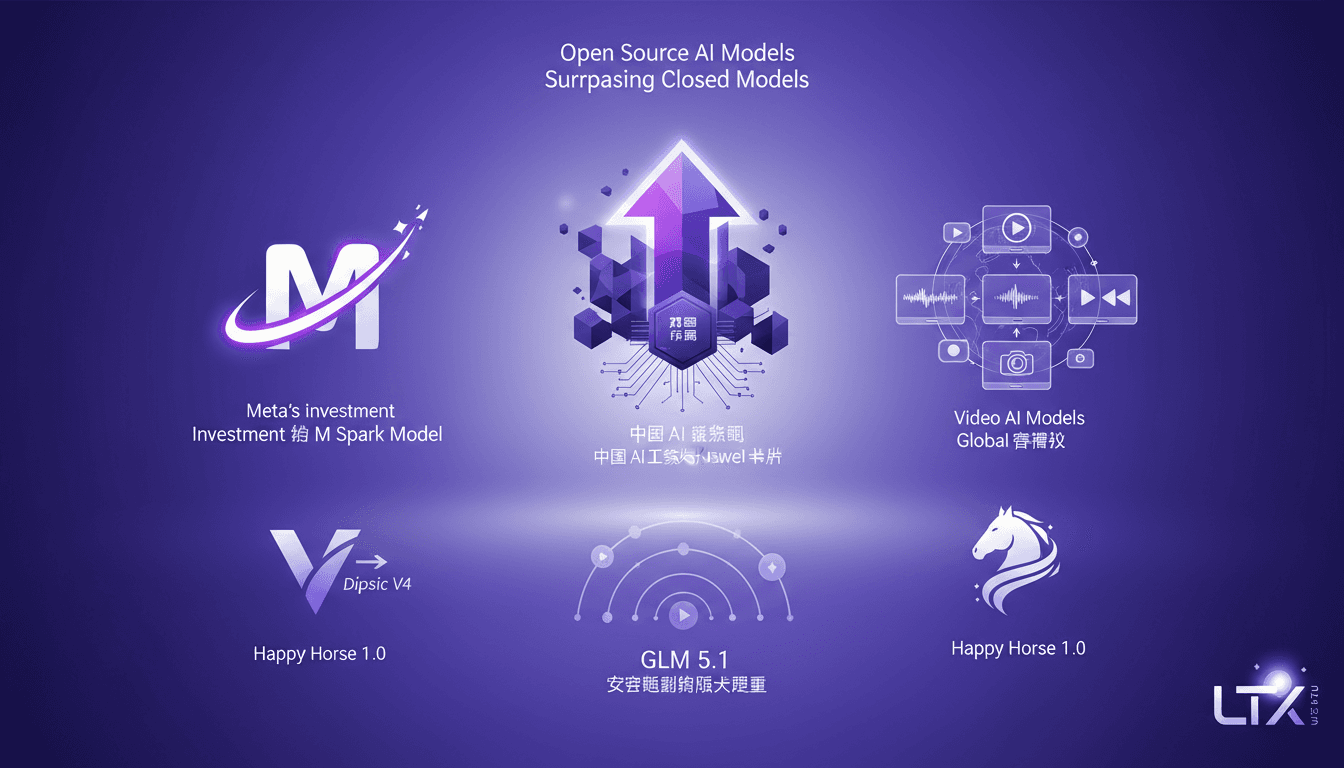

Open Source AI Beats ChatGPT: My Workflow

I've been knee-deep in AI for years, and let me tell you, open source models are shaking things up like never before. When I first saw GLM 5.1 surpassing big names like ChatGPT, I knew we were onto something game-changing. But it's not just about the scores—it's about what we can do with these tools, right in our hands. With scores that give established giants a run for their money, open source is redefining our approach to AI development and deployment. We're diving into how this plays out—from Huawei chips in China to globally competing video models. This transformation is far more than just a tech update—it's a seismic shift in the AI landscape.

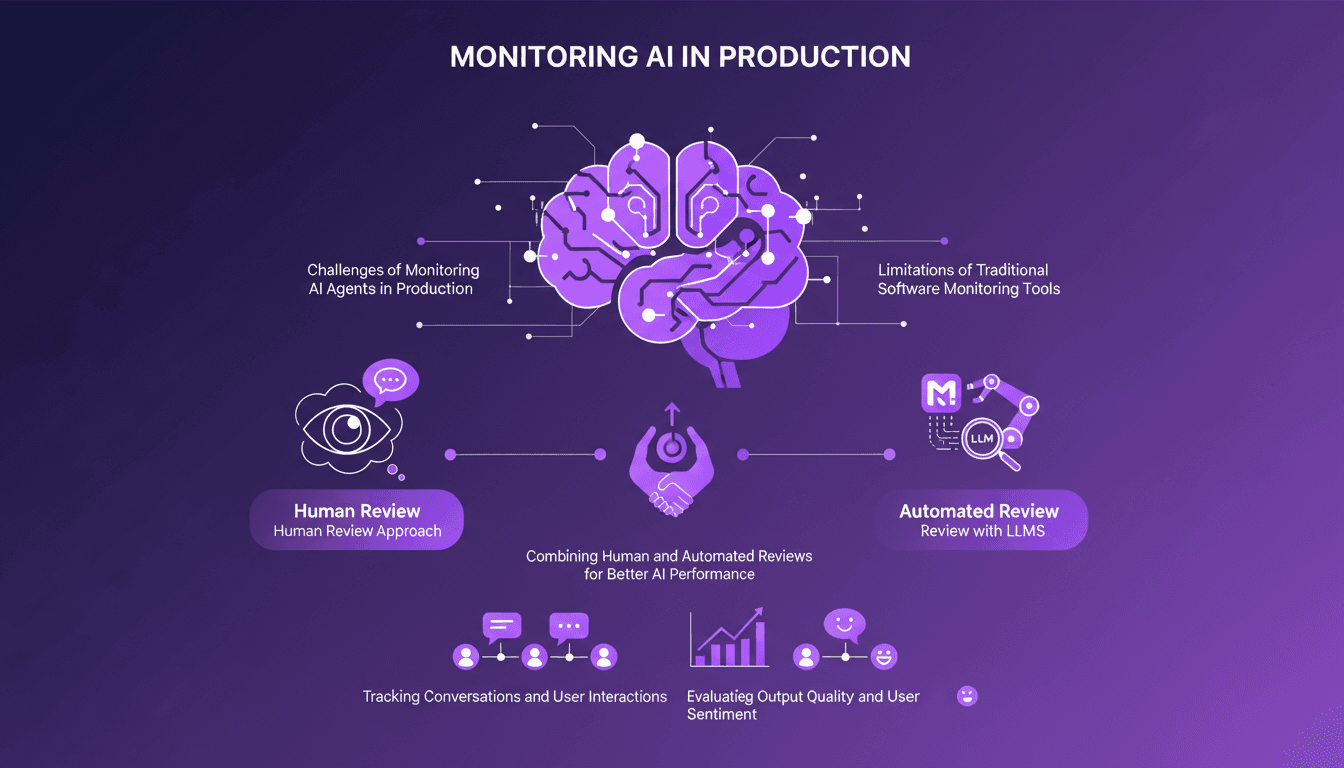

Monitoring AI Agents: Challenges and Solutions

I've been knee-deep in AI production environments, and trust me, monitoring AI agents isn't as straightforward as traditional software. First, I realized that traditional APM tools just don't cut it. With thousands of interactions at stake, ensuring optimal performance is crucial. So I explored new methodologies. LangSmith offers a human review approach and automated review with LLMs for better AI performance. The idea is to combine these two methods to track conversations and evaluate interaction quality. Here's how I tackled the challenge.