Monitoring AI Agents: Challenges and Solutions

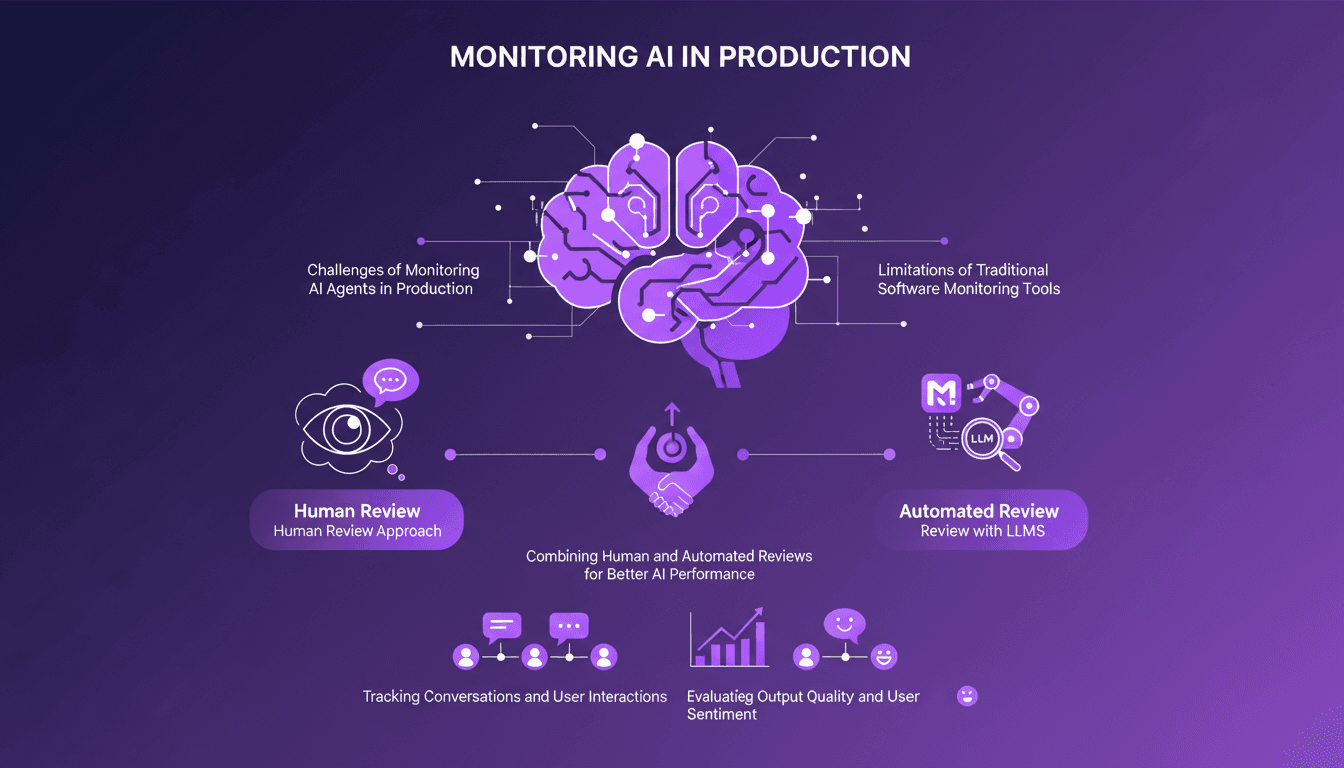

I've been knee-deep in AI production environments, and trust me, monitoring AI agents isn't as straightforward as monitoring traditional software. With thousands of interactions happening, ensuring optimal performance is crucial, so I took the challenge head-on. I started with traditional APM tools and quickly found they fell short. LangSmith offers something intriguing: human review combined with automated LLM review. It's about tracking conversations and evaluating output quality and even user sentiment. Blending the two methods gives a more complete picture, which makes all the difference. Let me break it down for you.

Understanding the Monitoring Challenge

When I first started working with AI agents, it became clear that traditional APM (Application Performance Monitoring) tools just don't cut it. These tools are great for measuring latency or error rates in traditional applications, but when it comes to AI agents, we're diving into the world of natural language. It's complex, to say the least.

AI agents handle human conversations, which means they need to understand context, the tone of conversation, and even user emotions. We're talking about thousands or millions of interactions. Can you imagine the complexity? Effective monitoring must account for all these elements, or we miss out on crucial information.

Recognizing these gaps is the first step toward effective monitoring. If we don't acknowledge them, we risk sticking with tools that miss the nuance of human interactions. And that's a mistake I've made once, not twice.

Why Traditional Tools Fall Short

Traditional APM tools focus on metrics like latency, but they have no notion of language understanding. In AI, it's not just about measuring performance; it's about evaluating output quality, and here, traditional tools fall flat.

User interactions are full of nuances. If you rely solely on these tools, you risk missing valuable insights. It's like trying to enjoy a symphony with earplugs. You miss the essence.

Understanding these limitations helps us pivot to better solutions. And that's key to avoiding going in circles.

LangSmith's Human Review Approach

LangSmith brings humans into the review process. Why? Because there's nothing better for understanding language subtleties. Annotation queues help manage and prioritize these reviews, which lets a team structure its approach and collaborate effectively.

Human feedback provides depth that automation alone can't offer. But it's time-consuming, so we must find the right balance between efficiency and depth. I spent too much time on manual reviews before figuring out how to optimize this process.
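
To make the idea of prioritizing reviews concrete, here is a minimal in-memory sketch of a prioritized annotation queue. It is not LangSmith's API: `ReviewItem`, `AnnotationQueue`, the run IDs, and the priority values are all hypothetical names chosen for illustration, and a hosted queue would also persist items and assign reviewers.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count
from typing import List, Optional


@dataclass(order=True)
class ReviewItem:
    priority: float                      # lower value = reviewed sooner
    order: int                           # tie-breaker: insertion order
    run_id: str = field(compare=False)   # hypothetical identifier of a traced run
    reason: str = field(compare=False)   # why this run was queued


class AnnotationQueue:
    """Toy priority queue for human review (illustrative only)."""

    def __init__(self) -> None:
        self._heap: List[ReviewItem] = []
        self._counter = count()

    def add(self, run_id: str, priority: float, reason: str) -> None:
        item = ReviewItem(priority, next(self._counter), run_id, reason)
        heapq.heappush(self._heap, item)

    def next_for_review(self) -> Optional[ReviewItem]:
        # Pop the most urgent item, or None when the queue is empty.
        return heapq.heappop(self._heap) if self._heap else None

    def __len__(self) -> int:
        return len(self._heap)


queue = AnnotationQueue()
queue.add("run-002", priority=2.0, reason="random sample")
queue.add("run-001", priority=0.0, reason="user gave a thumbs-down")
queue.add("run-003", priority=1.0, reason="low automated score")

first = queue.next_for_review()
print(first.run_id, "-", first.reason)
```

Reviewers always pull the most urgent conversation first, so limited human time goes to the runs most likely to reveal a problem.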

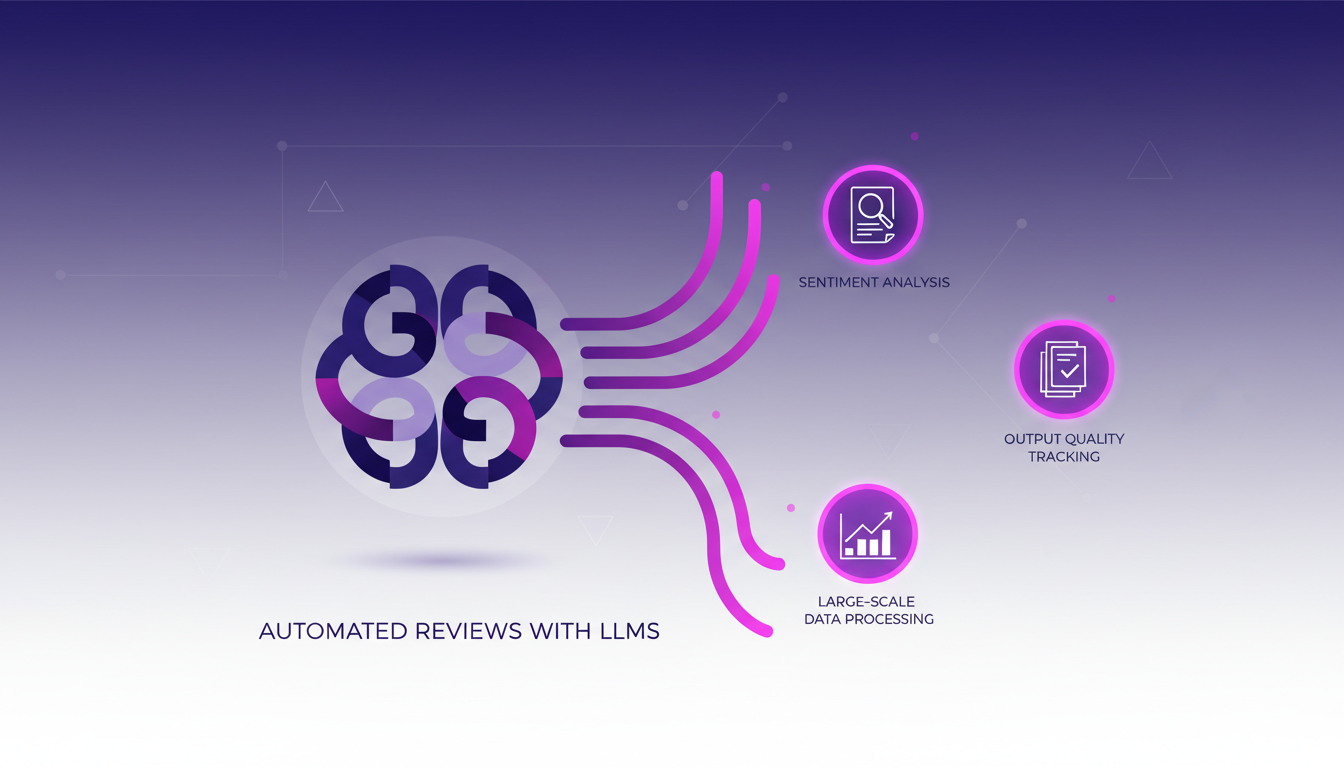

Automated Reviews with LLMs

LLMs (Large Language Models) allow us to automate part of the review process. It's a real time-saver for handling large-scale data. In our case, it helps us track user sentiment and output quality.
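
An automated review of this kind is often built as an "LLM as judge" step. The sketch below is one possible shape, under stated assumptions: `call_llm` stands for whatever model client you use (any function that takes a prompt and returns text), and the prompt wording, the `Review` fields, and the `fake_llm` stub are hypothetical. It does not call LangSmith or any real model.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical judge instructions; the transcript is appended after them.
JUDGE_PROMPT = (
    "You are reviewing a conversation between a user and an AI agent.\n"
    'Reply with JSON only: {"sentiment": "positive|neutral|negative", '
    '"quality": <number between 0 and 1>, "rationale": "<one sentence>"}\n\n'
    "Conversation:\n"
)

ALLOWED_SENTIMENTS = {"positive", "neutral", "negative"}


@dataclass
class Review:
    sentiment: str
    quality: float
    rationale: str


def review_conversation(transcript: str, call_llm: Callable[[str], str]) -> Review:
    """Ask the judge model for a verdict and validate what comes back."""
    raw = call_llm(JUDGE_PROMPT + transcript)
    try:
        data = json.loads(raw)
        sentiment = data["sentiment"]
        quality = float(data["quality"])
        rationale = str(data.get("rationale", ""))
    except (ValueError, KeyError, TypeError, AttributeError):
        # Judge output was not the JSON we asked for: flag it instead of guessing.
        return Review("unknown", 0.0, "unparseable judge output")
    if sentiment not in ALLOWED_SENTIMENTS:
        sentiment = "unknown"
    quality = min(1.0, max(0.0, quality))  # clamp to the expected range
    return Review(sentiment, quality, rationale)


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call so the sketch runs offline.
    return '{"sentiment": "negative", "quality": 0.35, "rationale": "Agent ignored the refund request."}'


result = review_conversation("User: I want a refund.\nAgent: Here is our blog.", fake_llm)
print(result)
```

Validating the judge's output matters as much as the prompt: a malformed reply becomes an explicit "unknown" verdict rather than a silently wrong score.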

But watch out: beyond 100K tokens, it gets tricky. You need to know these models' limits to avoid unpleasant surprises. By combining automation with human intuition, we get far more relevant results.
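
One simple guard against oversized inputs is to trim a conversation to a token budget before sending it to the reviewing model. The sketch below is an assumption-heavy illustration: `estimate_tokens` uses a rough four-characters-per-token heuristic instead of a real tokenizer, the function names are hypothetical, and the 100,000 default simply mirrors the rule of thumb above, not a documented model limit.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    # Rough heuristic (about 4 characters per token); real tokenizers differ.
    return max(1, len(text) // 4)


def fit_to_budget(turns: List[str], max_tokens: int = 100_000) -> List[str]:
    """Keep the most recent turns whose estimated size fits within max_tokens."""
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):          # walk from newest to oldest
        cost = estimate_tokens(turn)
        if used + cost > max_tokens:
            break                         # older turns are dropped
        kept.append(turn)
        used += cost
    kept.reverse()                        # restore chronological order
    return kept


turns = ["a" * 400, "b" * 400, "c" * 400]   # about 100 estimated tokens each
trimmed = fit_to_budget(turns, max_tokens=250)
print(len(trimmed), "of", len(turns), "turns kept")
```

Dropping the oldest turns is only one policy. Summarizing older context or reviewing a long conversation in chunks are alternatives when the early turns matter. Note that this sketch returns an empty list if even the newest turn exceeds the budget, which a caller should handle.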

Combining Approaches for Optimal Performance

One day, I realized that human and automated reviews perfectly complement each other. You get the depth from one and the scalability from the other. This dual approach is the recipe for improving AI agent performance and user satisfaction.

By tracking conversations and interactions, your decisions become more informed. And the business impact is direct: better insights lead to better decisions.
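
A common way to combine the two is to let the automated review score everything and escalate only some conversations to humans. The routing rule below is a sketch under assumptions: the `AutoReview` fields, the 0.5 quality floor, and the 5% spot-check rate are hypothetical values for illustration, not settings from LangSmith.

```python
import random
from dataclasses import dataclass
from typing import Tuple


@dataclass
class AutoReview:
    run_id: str       # hypothetical identifier of a traced run
    quality: float    # automated quality score in [0, 1]
    sentiment: str    # "positive", "neutral", or "negative"


def needs_human_review(
    review: AutoReview,
    rng: random.Random,
    quality_floor: float = 0.5,
    sample_rate: float = 0.05,
) -> Tuple[bool, str]:
    """Decide whether a conversation should go to a human reviewer, and why."""
    if review.quality < quality_floor:
        return True, "low automated quality score"
    if review.sentiment == "negative":
        return True, "negative user sentiment"
    if rng.random() < sample_rate:
        # Spot checks keep the automated judge honest on "good" conversations.
        return True, "random spot check"
    return False, "auto-approved"


rng = random.Random(0)  # seeded so the sketch is reproducible
low = needs_human_review(AutoReview("run-001", 0.2, "neutral"), rng)
angry = needs_human_review(AutoReview("run-002", 0.9, "negative"), rng)
fine = needs_human_review(AutoReview("run-003", 0.9, "positive"), rng, sample_rate=0.0)
print(low, angry, fine)
```

The random spot check is the piece that lets human reviews audit the automated ones: without it, humans would only ever see conversations the judge already flagged.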

- Traditional tools lack nuance for natural language.

- Human reviews provide qualitative understanding.

- LLMs automate reviews efficiently at scale.

- Combining approaches offers a balance between depth and scalability.

The related article "Deploy Agents with A2A on LangSmith" can help you maximize the impact of these combined methods.

I've realized that monitoring AI agents in production isn't about picking one tool or method. It's about orchestrating a blend of human insight and automated efficiency. First, I assess the interactions in production, thousands or even millions of them, and note where traditional tools reach their limits. Then, with LangSmith's dual approach of human reviews plus LLM reviews, I optimize AI performance.

- Performance optimization: LangSmith offers more precise monitoring by blending human reviews with LLMs.

- Limits of traditional tools: Used alone, they fall short when handling thousands of interactions.

- Dual approach: Two complementary methods for comprehensive monitoring.

Looking forward, it's clear this human-automation combo is a game changer for AI monitoring. Ready to enhance your AI monitoring? Start by evaluating your current tools and consider integrating human and automated reviews. For more insights, I recommend watching the video "How to monitor production AI agents: A simple breakdown" on YouTube.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

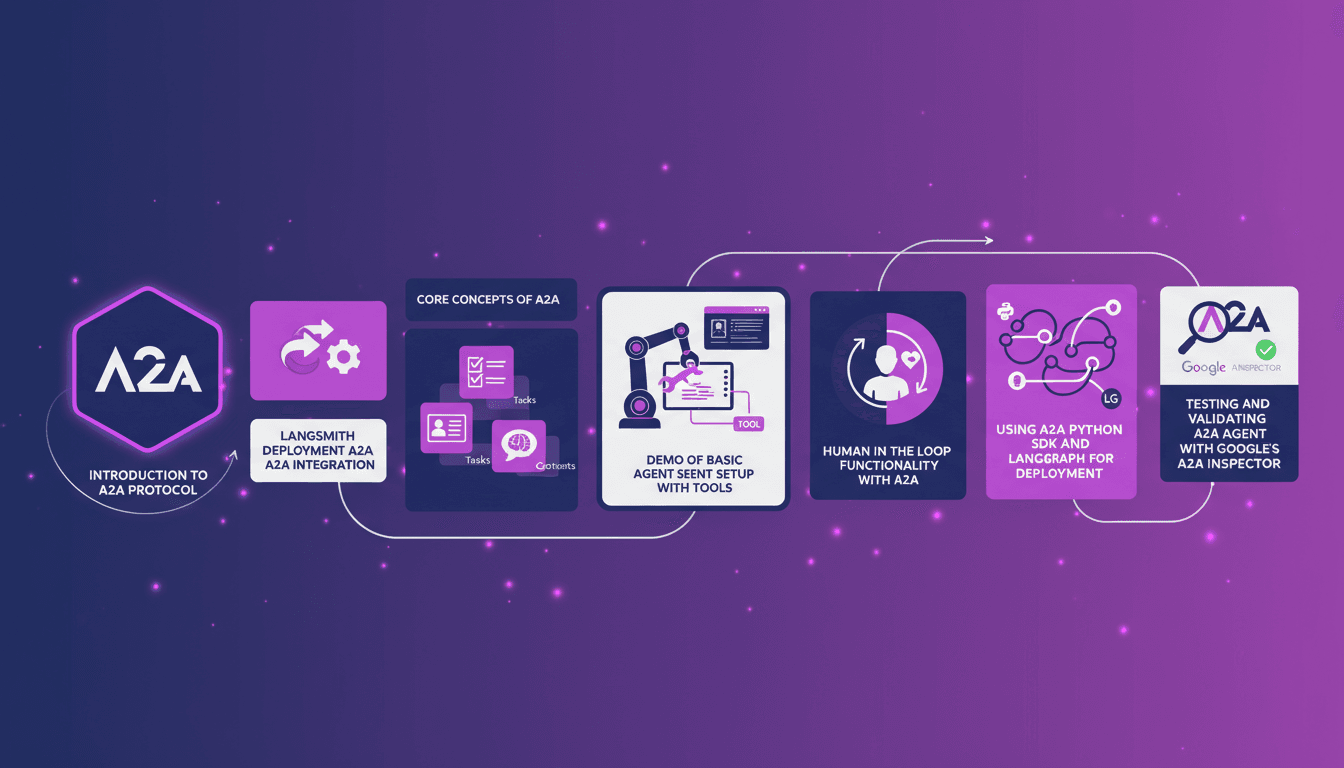

Deploy Agents with A2A on LangSmith

Ever tried deploying agents with the A2A protocol on LangSmith? I did, and it changed my workflow. When Google released A2A in 2024, I was intrigued. Integrating it with LangSmith has honestly streamlined everything, saving time and resources. I'll walk you through how I set it up, the pitfalls I avoided, and how I used LangGraph and the Python SDK to orchestrate it all. We're talking agent cards, tasks, contexts, and of course, testing with Google's inspector. It's powerful stuff, but watch out for limits, especially when you go beyond 100K tokens.

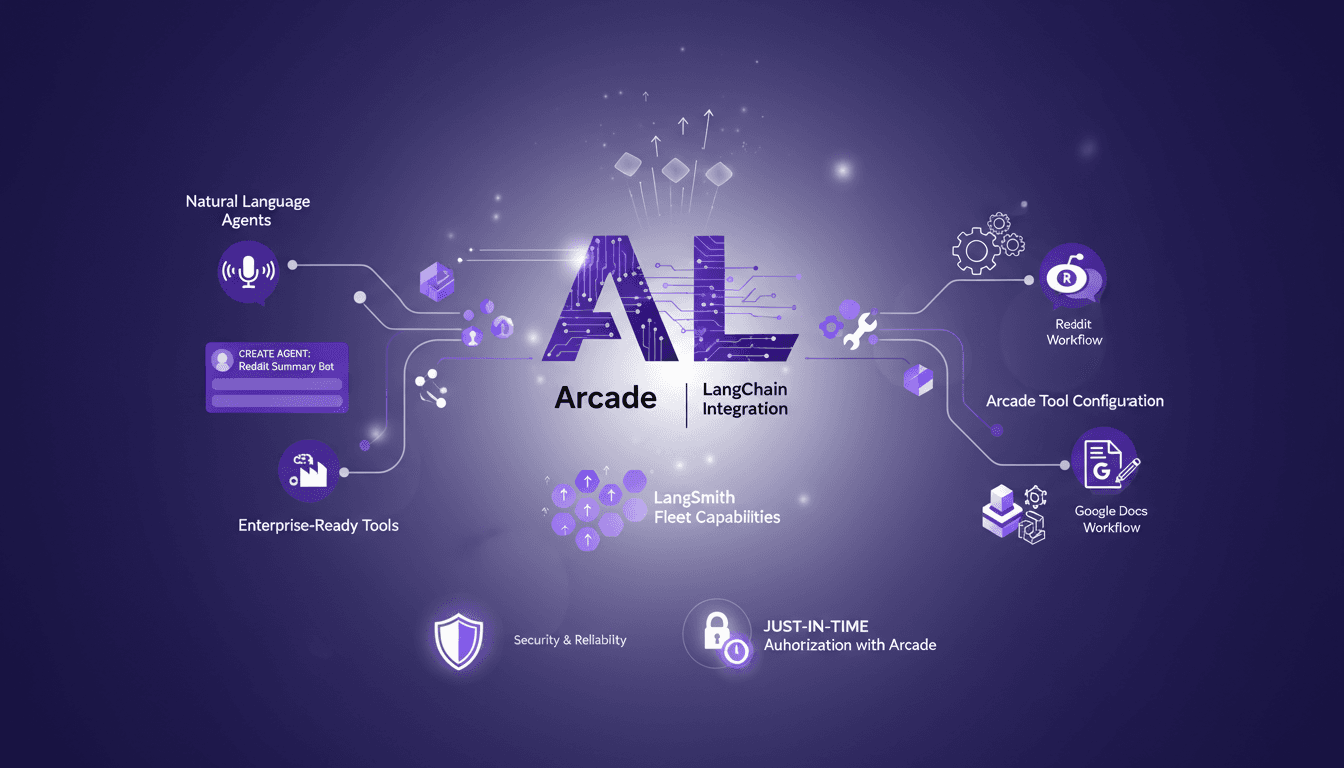

Integrating LangChain with Arcade: A Practical Guide

I dove headfirst into integrating LangChain with Arcade, and let me tell you, the capabilities are game-changing. But, like any powerful tool, it’s all about how you set it up and use it. With over 7,500 Arcade.dev tools now available in LangSmith Fleet, the opportunities for creating AI agents with natural language are unprecedented. However, you need to orchestrate these tools wisely to avoid pitfalls. In this guide, I'll show you how to get the most out of this integration, with concrete examples like using Reddit and Google Docs. And importantly, I'll discuss the challenges of security and reliability in production environments, as well as just-in-time authorization with Arcade. In short, a comprehensive overview for those looking to maximize the impact of their AI projects.

Agentic Engineering: Collaborate with AI

I remember when I first started integrating AI tools into my workflow. It was like discovering a new continent. But the trick wasn't just using AI; it was working with it. That's where agentic engineering comes into play. Today, collaborating with AI goes beyond automation. It's about forging a true partnership with technology. In this article, I'll share how I and other engineers are making this shift—integrating AI models into our development processes, managing context effectively, and configuring AI agents that adapt to our needs. We're no longer passive users; we're active orchestrators. Ready to explore this new frontier?

Teaching Claude to Edit Videos: My Approach

I remember the days of spending two hours manually editing a single YouTube video. Frustrating, right? Then I thought, why not teach Claude to do it for me? In this article, I take you through my journey of automating video editing using Claude's agent skills. We'll go through the tools, techniques, and iterative processes that made it possible. Teaching Claude to edit my videos not only cut the raw video time down by 50% but also transformed my approach to content creation.

Seedance 2.0: Master Fast-Paced Video Production

I remember the first time I tried Seedance 2.0. I was skeptical, but the potential for fast-paced video production was too good to ignore. So, I dove in. Seedance 2.0 is the latest in video production tools, promising speed and creativity. As someone who builds with these tools daily, I’ll walk you through its features, use cases, and how it stacks up against giants like TikTok. Whether it's for global release or personal projects, Seedance 2.0 is a game changer – but watch out for its limitations. ByteDance has really influenced this market, and I'll show you why.