Deploy Agents with A2A on LangSmith

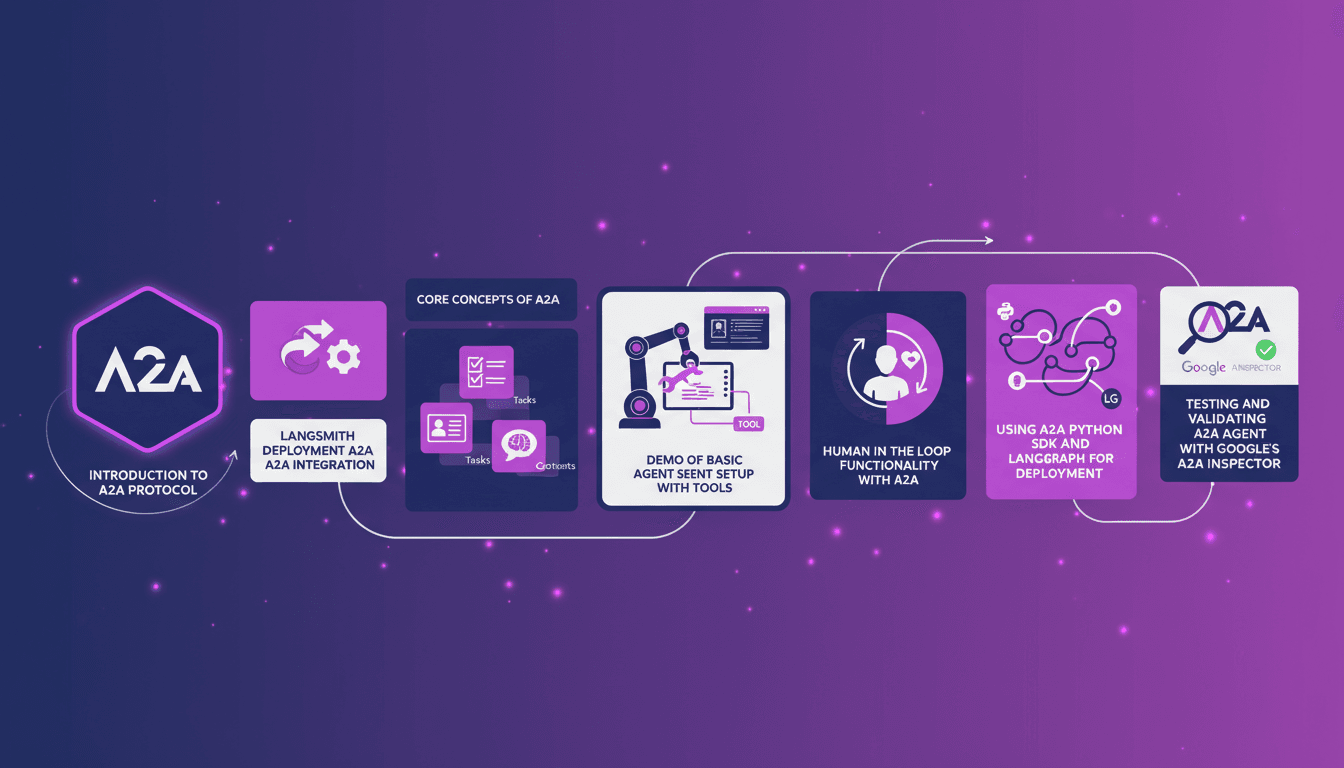

Ever tried deploying agents with the A2A protocol on LangSmith? I did, and it changed my workflow. When Google released A2A in 2025, I was intrigued and thought it could streamline how my agents talk to each other. Integrating it with LangSmith did exactly that: I set up an agent with four basic tools, watched it handle a calculation as simple as 3 * 2 * 2, and saw real time and resource savings. It wasn't without challenges, though. I had to juggle agent cards, tasks, and contexts, implement a human-in-the-loop step, and orchestrate everything with LangGraph and the A2A Python SDK, with Google's A2A Inspector as an essential companion for testing. I'll walk you through how I set it up, the mistakes I made, and the pitfalls to avoid, so you can use this technology without getting burned. It's powerful stuff, but watch out for limits, especially when you go beyond 100K tokens.

Understanding the A2A Protocol

The A2A (Agent2Agent) protocol, released by Google in 2025, standardizes how agents communicate. Agents can interact and coordinate actions regardless of vendor or underlying model. Each agent is described by an agent card, a JSON file summarizing its capabilities, URL, and other essential metadata. A task represents one request-response exchange, and a context groups multiple tasks together, which becomes crucial in multi-turn conversations. But watch out, contexts get complex. I've been burned by context complexity more than once; mastering them is key.
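
To make the vocabulary concrete, here is a minimal sketch of an agent card and of how a context groups tasks. The field names follow the public A2A specification as I understand it, but every value (agent name, URL, skill, task and context IDs) is made up for illustration, and `group_by_context` is a hypothetical helper, not part of any SDK.

```python
import json
from collections import defaultdict

# Illustrative agent card. Values are invented for this example; check the
# field names against the A2A spec version your server implements.
agent_card = {
    "name": "demo-assistant",
    "description": "Tells the time, does simple math, and manages email.",
    "url": "https://example.com/a2a/demo-assistant",  # hypothetical endpoint
    "version": "0.1.0",
    "capabilities": {"streaming": False},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["text/plain"],
    "skills": [
        {
            "id": "calculator",
            "name": "Calculator",
            "description": "Evaluates simple arithmetic expressions.",
            "tags": ["math"],
        }
    ],
}

# Each task is one request-response exchange. The context ID ties several
# tasks into a single multi-turn conversation.
tasks = [
    {"id": "task-1", "contextId": "ctx-A", "text": "What is 3 * 2 * 2?"},
    {"id": "task-2", "contextId": "ctx-A", "text": "Now double it."},
    {"id": "task-3", "contextId": "ctx-B", "text": "What time is it?"},
]


def group_by_context(task_list):
    """Return a mapping of context ID -> ordered list of task IDs."""
    grouped = defaultdict(list)
    for task in task_list:
        grouped[task["contextId"]].append(task["id"])
    return dict(grouped)


if __name__ == "__main__":
    print(json.dumps(agent_card, indent=2))
    print(group_by_context(tasks))
```

Here the first two tasks share `ctx-A`, so the follow-up "Now double it." is part of the same conversation, while `task-3` starts a separate one.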

Integrating A2A with LangSmith

To integrate A2A with LangSmith, I started with the initial configuration, and that's where the real challenges began. It took several attempts to get everything functioning correctly. This step is often underestimated, but it's fundamental to avoiding errors later. Once the integration worked, the efficiency gains were noticeable: agents communicated smoothly, and tasks executed without a hitch. Don't rush the initial settings, because mistakes there cost you time and performance later.

Setting Up Basic Agents with Tools

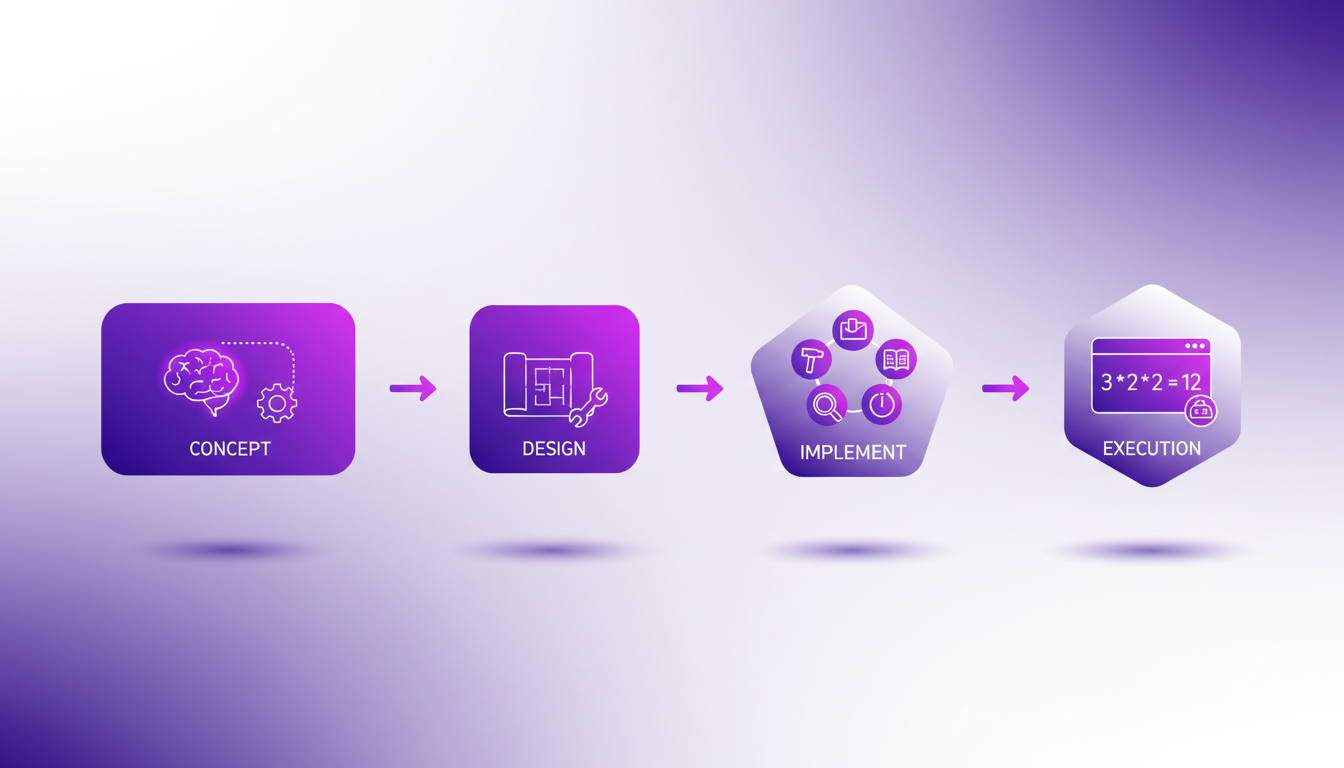

For the basic agent, I used four tools: one for telling the time, one for simple calculations, and two for managing emails. The workflow is straightforward: create the agent first, then add the tools one by one. During the demonstration, I asked the agent to calculate 3 * 2 * 2, and it responded instantly. The real challenge is balancing complexity and functionality: too much complexity and the agent becomes hard to manage; too little and it isn't capable enough. It's a balancing act I've learned to master over time.
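
As a rough sketch of what four such tools could look like, here they are as plain Python functions, independent of any agent framework. The function names, the split of the email tools into "draft" and "send", and the in-memory `OUTBOX` are my assumptions for illustration; in a real agent you would register equivalent functions with your framework's tool mechanism.

```python
import ast
import operator
from datetime import datetime, timezone

# Supported arithmetic operators for the calculator tool.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _eval(node):
    """Recursively evaluate a parsed arithmetic expression."""
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
    raise ValueError("unsupported expression")


def calculate(expression: str):
    """Tool 1: evaluate +, -, *, / on numbers without calling eval()."""
    return _eval(ast.parse(expression, mode="eval").body)


def get_time() -> str:
    """Tool 2: return the current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


OUTBOX = []  # in-memory stand-in for a real mail service (assumption)


def draft_email(to: str, subject: str, body: str) -> dict:
    """Tool 3: build an email draft without sending it."""
    return {"to": to, "subject": subject, "body": body}


def send_email(draft: dict) -> str:
    """Tool 4: 'send' a draft by appending it to the in-memory outbox."""
    OUTBOX.append(draft)
    return f"sent to {draft['to']}"


# Name -> callable registry an agent could dispatch on.
TOOLS = {
    "get_time": get_time,
    "calculate": calculate,
    "draft_email": draft_email,
    "send_email": send_email,
}

if __name__ == "__main__":
    print(TOOLS["calculate"]("3 * 2 * 2"))  # 12
```

Parsing the expression with `ast` instead of calling `eval()` keeps the calculator limited to basic arithmetic, which is one small way to keep a tool simple without making it unsafe.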

Human in the Loop Functionality

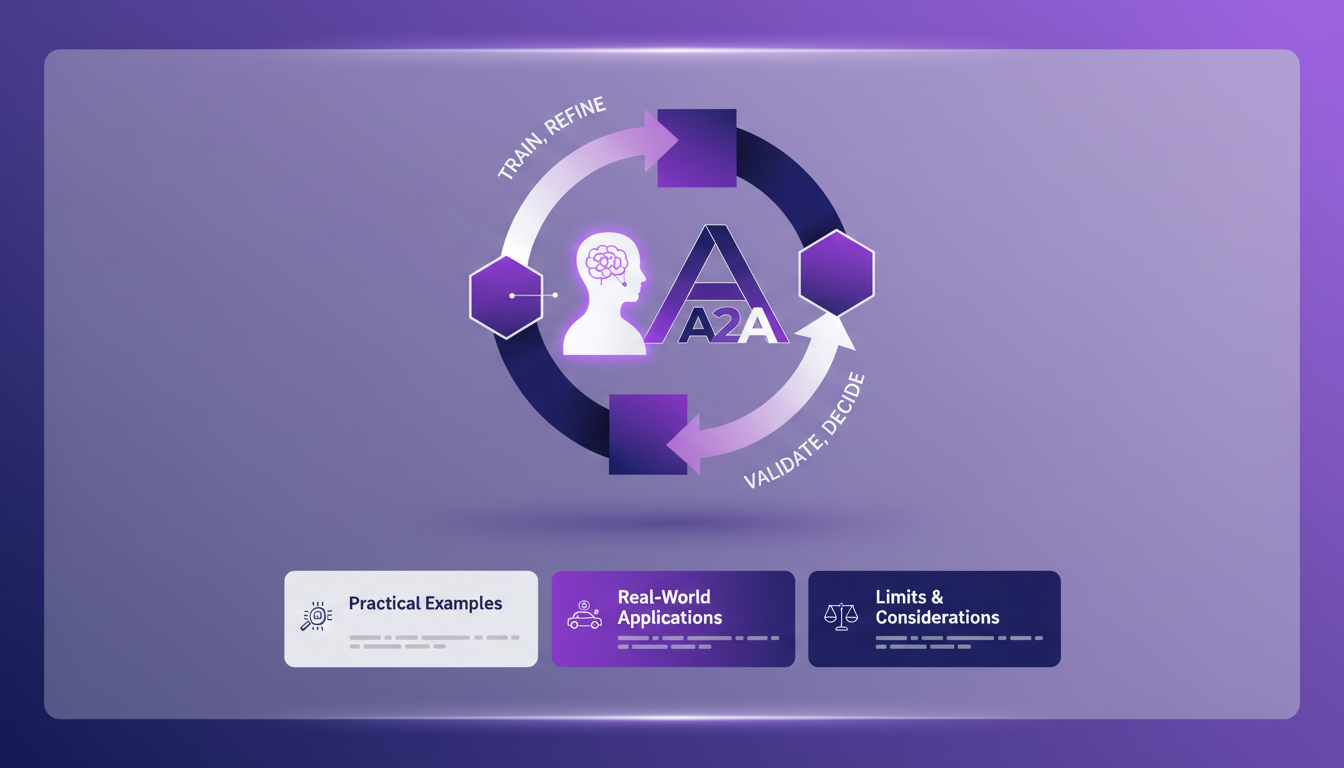

Integrating Human in the Loop (HITL) with the A2A protocol adds an interesting dimension. I used this feature to oversee email sending. It allows validating or modifying agent actions before they're executed. In certain situations, human intervention is necessary to avoid costly mistakes. Costs may rise, but the quality assurance is worth the investment. I recommend using HITL when critical decisions need to be made.
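
The approval step can be sketched as a small gate in plain Python: the agent proposes an action, and nothing runs until a human approves, edits, or rejects it. This is only an illustration of the pattern, not the LangGraph or LangSmith implementation. `PendingAction`, `review`, and `execute` are hypothetical names; `input-required` mirrors the A2A task state used while an agent waits for input, whereas `approved` and `rejected` are labels I chose for this sketch.

```python
from dataclasses import dataclass


@dataclass
class PendingAction:
    """An agent-proposed tool call that is waiting for human review."""
    tool: str
    args: dict
    state: str = "input-required"  # waiting on a human decision


def review(action: PendingAction, decision: str, edited_args=None) -> PendingAction:
    """Apply a human decision: 'approve', 'edit', or 'reject'."""
    if decision == "approve":
        action.state = "approved"
    elif decision == "edit":
        # Merge the human's changes into the proposed arguments, then approve.
        action.args = {**action.args, **(edited_args or {})}
        action.state = "approved"
    elif decision == "reject":
        action.state = "rejected"
    else:
        raise ValueError(f"unknown decision: {decision!r}")
    return action


def execute(action: PendingAction, tools: dict):
    """Run the tool only if a human has approved the action."""
    if action.state != "approved":
        raise PermissionError("action has not been approved by a human")
    return tools[action.tool](**action.args)


if __name__ == "__main__":
    # Hypothetical email tool used only for this demonstration.
    tools = {"send_email": lambda to, subject: f"sent '{subject}' to {to}"}
    action = PendingAction("send_email", {"to": "a@example.com", "subject": "Draft"})
    review(action, "edit", {"subject": "Final version"})
    print(execute(action, tools))
```

The point of the design is that `execute` refuses to run anything still in `input-required`, so an email can't go out by accident before someone has looked at it.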

Deployment and Validation with A2A

For deployment, I used the A2A Python SDK and LangGraph. Testing and validation with Google's A2A Inspector are essential to ensure everything works as planned. But beware of common pitfalls: poor configuration can lead to communication failures. My advice: take the time to properly configure and test each component before final deployment. This way, you'll avoid many headaches and optimize your agents' performance.
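
Before reaching for the inspector, a quick smoke test can catch basic configuration mistakes. The sketch below uses only the standard library instead of the A2A Python SDK, so that nothing about the SDK's API is assumed. The `message/send` method name and message shape follow the A2A specification as I understand it and have changed between spec versions, so check them against the version your server implements. The URL in the usage note is a placeholder, and `build_message_send` and `post_json` are hypothetical helpers.

```python
import json
import uuid
import urllib.request


def build_message_send(text, context_id=None, request_id=1):
    """Build a JSON-RPC 2.0 payload for an A2A-style 'message/send' call."""
    message = {
        "role": "user",
        "parts": [{"kind": "text", "text": text}],
        "messageId": str(uuid.uuid4()),
    }
    if context_id is not None:
        # Reusing a context ID continues an existing multi-turn conversation.
        message["contextId"] = context_id
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "message/send",
        "params": {"message": message},
    }


def post_json(url, payload, timeout=30.0):
    """POST a JSON payload and return the decoded JSON response."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Print the payload only; no network call is made here.
    print(json.dumps(build_message_send("What is 3 * 2 * 2?"), indent=2))
```

To exercise a real deployment, you would call `post_json("https://your-deployment.example/a2a/your-assistant", payload)` with your own endpoint and any authentication headers your deployment requires, then pass the `contextId` from the response back into `build_message_send` for the next turn.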

Deploying agents with A2A on LangSmith is like shifting gears in your workflow. I started by diving into the A2A protocol Google released in 2025. Then I integrated agent cards and tasks into my setup with four tools, and the agent calculated 3 * 2 * 2 in a snap. But watch out: integrating HITL (human in the loop) can be a real headache if not managed properly.

- Take the time to understand the A2A protocol; it's your solid foundation.

- Integrate agent cards and tasks to truly boost efficiency.

- Don't overlook the importance of contexts for optimal setup.

What I take away is that, when mastered, A2A can really transform your projects. But as always, you need to test, adjust, and iterate to avoid nasty surprises. Ready to deploy your own agents? Dive in, test, iterate, and see the impact. I recommend watching the original video for a deeper understanding: YouTube Video.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

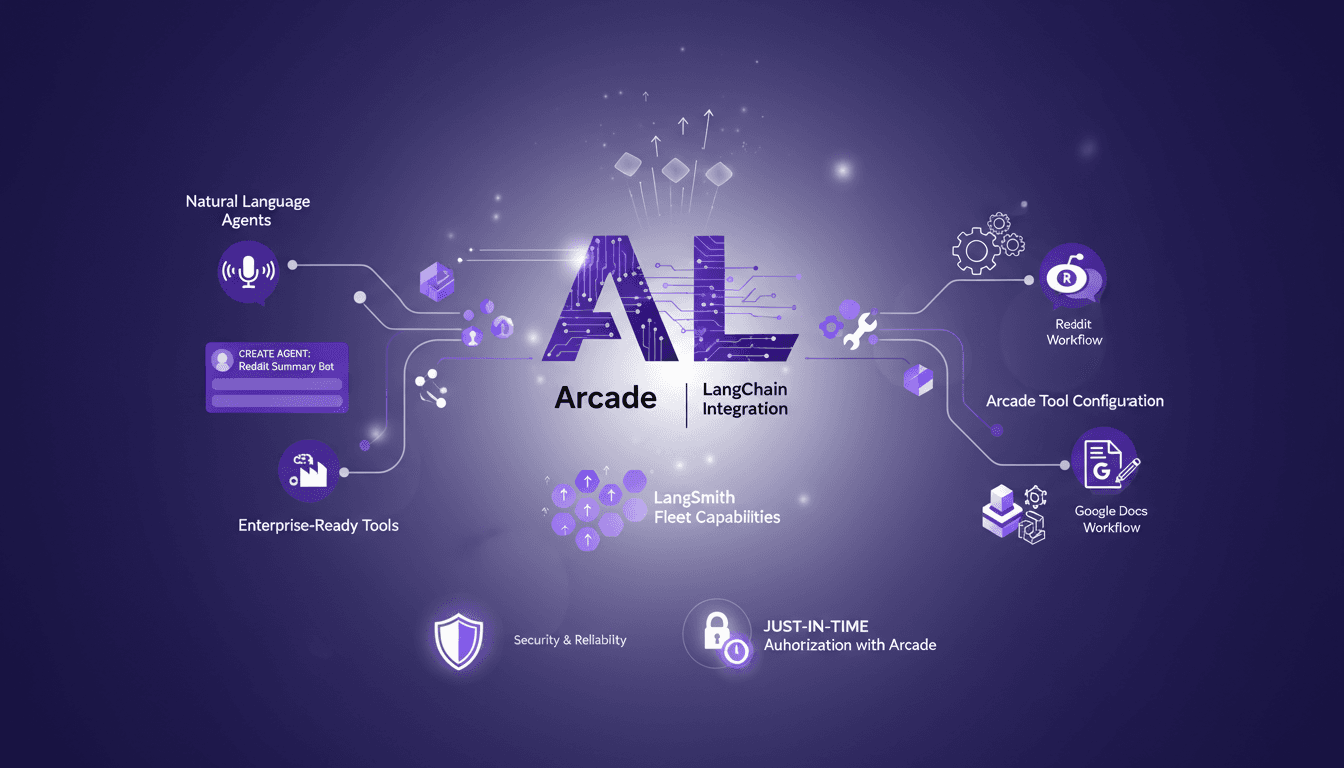

Integrating LangChain with Arcade: A Practical Guide

I dove headfirst into integrating LangChain with Arcade, and let me tell you, the capabilities are game-changing. But, like any powerful tool, it’s all about how you set it up and use it. With over 7,500 Arcade.dev tools now available in LangSmith Fleet, the opportunities for creating AI agents with natural language are unprecedented. However, you need to orchestrate these tools wisely to avoid pitfalls. In this guide, I'll show you how to get the most out of this integration, with concrete examples like using Reddit and Google Docs. And importantly, I'll discuss the challenges of security and reliability in production environments, as well as just-in-time authorization with Arcade. In short, a comprehensive overview for those looking to maximize the impact of their AI projects.

Handling Sales Objections: Practical Techniques

Ever been in a sales meeting where you couldn't quite read the room? I've been there, and here’s how I handle it when objections seem invisible. In sales, recognizing objections—or their absence—is crucial. I'm not talking theory, but real-world scenarios. How can AI lend a hand? I'll share how I uncover hidden motivations through precise questioning and create space for genuine communication. It's not about overusing AI, but integrating it smartly into the sales process. Let’s dive into this tutorial with practical techniques that I use daily to navigate these tricky waters.

Avoid Identity Objections in Car Sales

In the tough world of car sales, I've learned the hard way that identity objections can make or break a deal. The first time I faced it, I stumbled. But now, I navigate these situations with a clear strategy. It's not just about specs or price; it's about who the customer is or wants to be. Let's break down how identity plays a crucial role in sales and how you can turn it to your advantage.

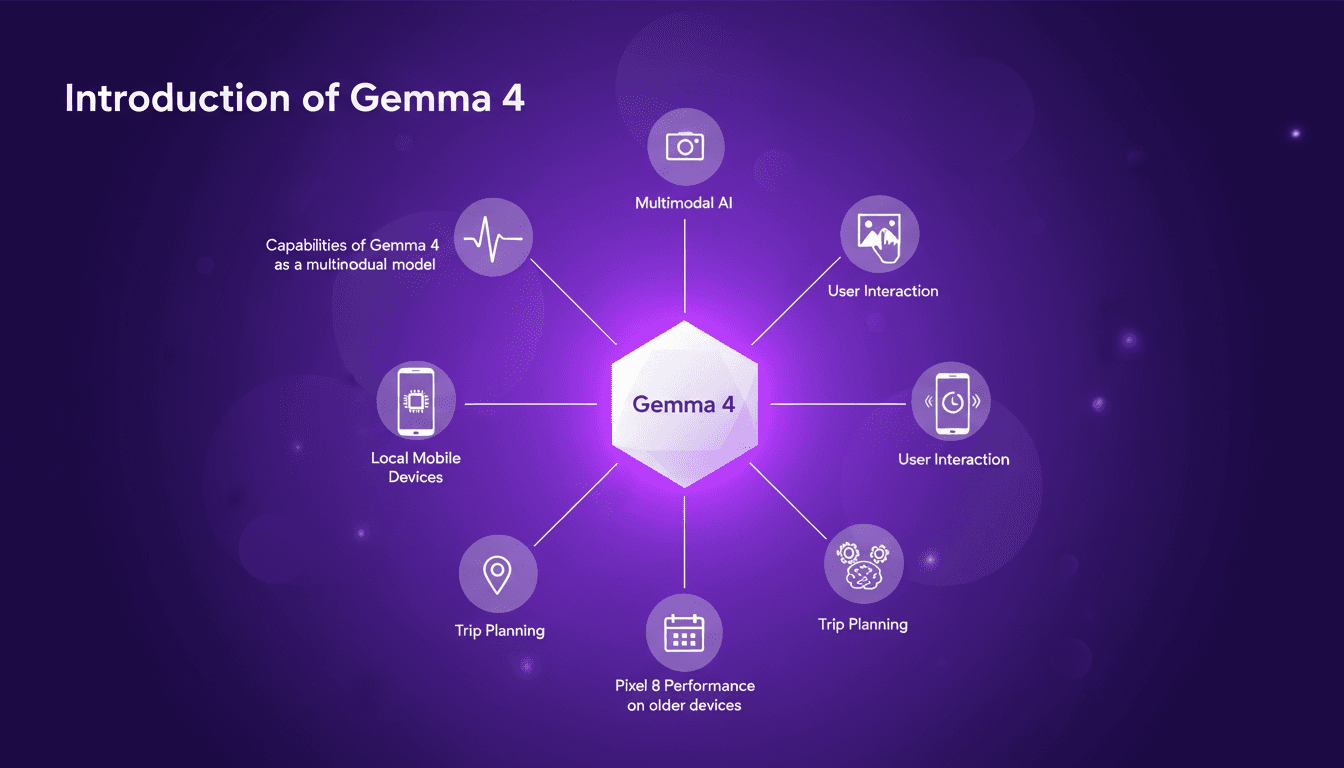

Exploring Gemma 4: Google's Multimodal Model

I just got my hands on Gemma 4, Google's latest AI model, and it's a true multimodal powerhouse! I've started testing it on my mobile devices, and honestly, it's impressive. It's not just about flashy tech; it's about practical applications and efficiency, even on older devices like the Pixel 8. Imagine planning your next trip with an AI that understands not just text but images and voice as well: that's what Gemma 4 promises. I was pleasantly surprised by its performance on older devices. In this tutorial, I'll show you how to make the most of Gemma 4 with the Google AI Edge Gallery. Ready to see how it works?

AI Auto-Evolution: Towards Autonomy

I remember the first time I saw an AI tweak its own code. It was like watching a child learn to walk—thrilling and a bit terrifying. In this article, I'm diving into the world of AI self-improvement, where machines aren't just executing tasks but redefining their capabilities. With AI systems now capable of modifying their own source code, we're witnessing a shift in software evolution. This isn't just a theoretical leap; it's a practical reality impacting industries like e-commerce and automotive. Discover how this AI auto-evolution is transforming key players like Shopify, Stripe, and Tesla, and what it means for the future of AI-driven development.