Open Source AI Beats ChatGPT: My Workflow

I've been knee-deep in AI for years, and let me tell you, open source models are shaking things up like never before. When I first saw GLM 5.1 surpass big names like ChatGPT with a 58.4% score on SWE-Bench Pro, I knew we were onto something game-changing. But it's not just about the numbers. What shifts the paradigm is what we can do with these tools once they're in our own hands: orchestrating models that rival the giants, with flexibility we've never had before. From the Chinese AI ecosystem and Huawei chips to Meta's investment and its M Spark model, not to mention the global competition in video AI models, we're witnessing a true upheaval. This isn't just a tech update; it's a seismic change in how we approach AI development and deployment. And I'm going to show you how to ride this wave.

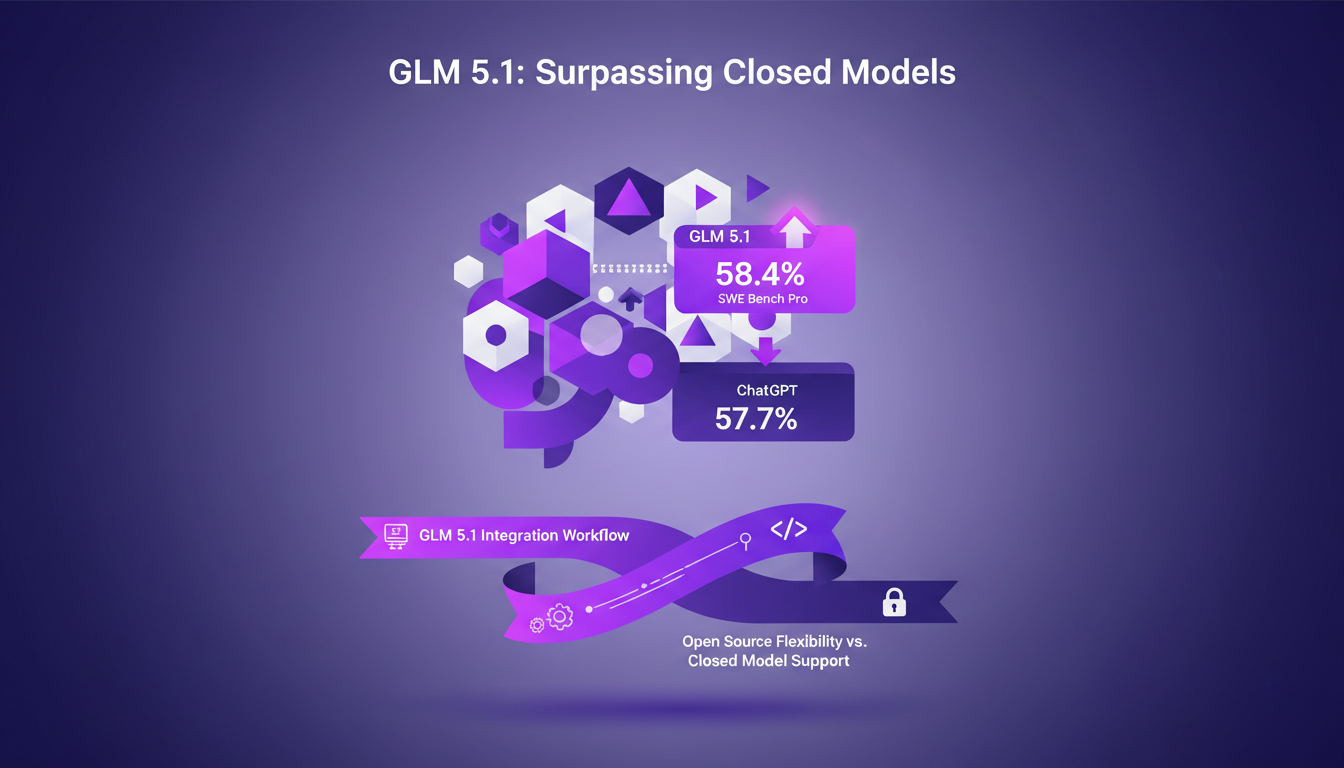

GLM 5.1: Surpassing Closed Models

Integrating GLM 5.1 into our systems was a game changer. This open source model scored 58.4% on SWE-Bench Pro, edging out ChatGPT's 57.7%. The gap is only 0.7 points, but an open model leading a closed one on this benchmark is a shift that commands attention.

To integrate it, I first connected our Supabase repo, then wrapped the API in a custom service. Watch out: token usage can spiral quickly if you don't track it. GLM 5.1 is designed for long-haul tasks and can work autonomously for up to 8 hours, a true coding marathoner.
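To make the token-management point concrete, here is a minimal sketch of what a "custom service" wrapper with a hard token budget could look like. It is not my production code and not the GLM API: `BudgetedModelService`, `TokenBudgetExceeded`, and the `send` callable are hypothetical names, and `fake_send` is a stand-in that counts words instead of real tokens.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


class TokenBudgetExceeded(RuntimeError):
    """Raised when a request would push usage past the configured budget."""


@dataclass
class BudgetedModelService:
    # `send` is a hypothetical callable: prompt -> (reply_text, tokens_used).
    # Swap in whatever client actually talks to your model endpoint.
    send: Callable[[str], Tuple[str, int]]
    max_tokens: int
    used_tokens: int = 0
    history: List[int] = field(default_factory=list)

    def ask(self, prompt: str, estimated_tokens: int) -> str:
        # Refuse up front if the estimate alone would exceed the budget.
        if self.used_tokens + estimated_tokens > self.max_tokens:
            raise TokenBudgetExceeded(
                f"need ~{estimated_tokens} tokens, only {self.remaining} left"
            )
        reply, tokens = self.send(prompt)
        # Record what the call actually consumed, not the estimate.
        self.used_tokens += tokens
        self.history.append(tokens)
        return reply

    @property
    def remaining(self) -> int:
        return max(self.max_tokens - self.used_tokens, 0)


def fake_send(prompt: str) -> Tuple[str, int]:
    # Stand-in for a real model call (assumption): one word counts as one token.
    return f"echo: {prompt}", len(prompt.split())


if __name__ == "__main__":
    service = BudgetedModelService(send=fake_send, max_tokens=5)
    print(service.ask("refactor this query", estimated_tokens=3))
    print("tokens remaining:", service.remaining)
```

The design choice is to fail before the call rather than after it: on a task that runs for hours, a budget check at the service boundary is cheaper than discovering the overrun on the invoice.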

Trade-offs? Open source offers unmatched flexibility, but don't expect the customer support that comes with a closed model. Real-world scenarios where GLM 5.1 excels include heavy data processing and query optimization. I've seen this model hit 21,500 requests per second, obliterating the previous record. Still, don't throw that kind of load at it unless the workload actually calls for it.

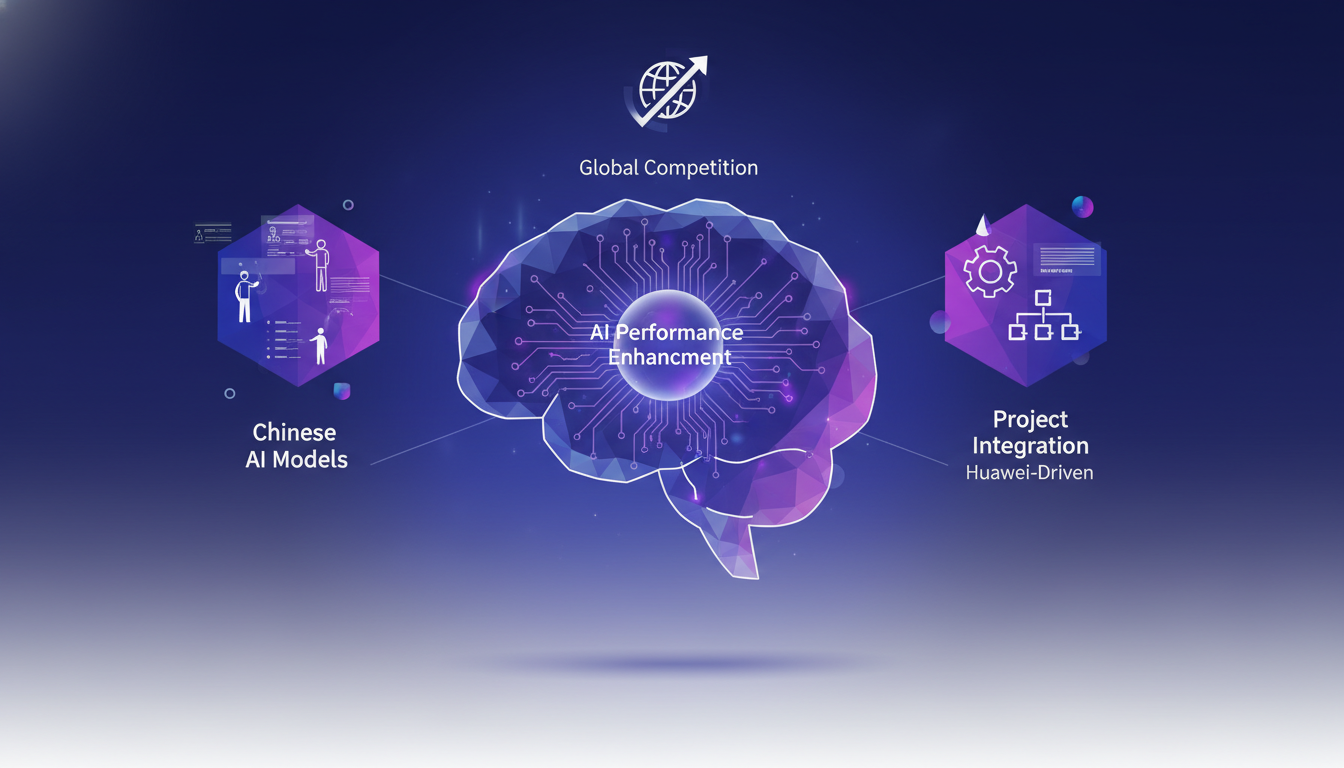

Chinese AI Models and Huawei Chips

Huawei chips have been pivotal in boosting the performance of Chinese AI models. Integrating models built around Huawei hardware was an eye-opener. Optimized for Chinese chips, they achieved efficiencies I hadn't anticipated.

But let's talk trade-offs. Yes, performance improves, but at what cost? Implementation and maintenance costs can skyrocket, especially if you're aiming for optimal hardware-software synergy. Once everything is orchestrated properly, though, the results are impressive. I've observed significant improvements in inference performance after integrating these models into complex projects.

Hardware-software synergy is no longer optional; it's a necessity. The impact is straightforward: more computing power and lower latency. For more on this trend, check out this article on Chinese open source AI.

Meta's M Spark Model and Investment

Meta went big with a $14 billion investment in the M Spark model. That's no small sum, and the returns have been mixed. I had the chance to test this model, and its power is undeniable. However, innovation doesn't come without risks.

With M Spark, I had to juggle resources to get the most out of it. Proper orchestration lets you take real advantage of its unique features. But watch out for the limitations: some aspects of the model remain proprietary, which can hinder widespread adoption. For a glimpse into these challenges, see this New York Times article.

Challenges? Well, integration isn't a walk in the park. Careful orchestration is necessary to avoid bottlenecks, and then there are the operating costs. But once it's in place, the potential is huge. I deployed M Spark on demanding projects, where its speed stood out.

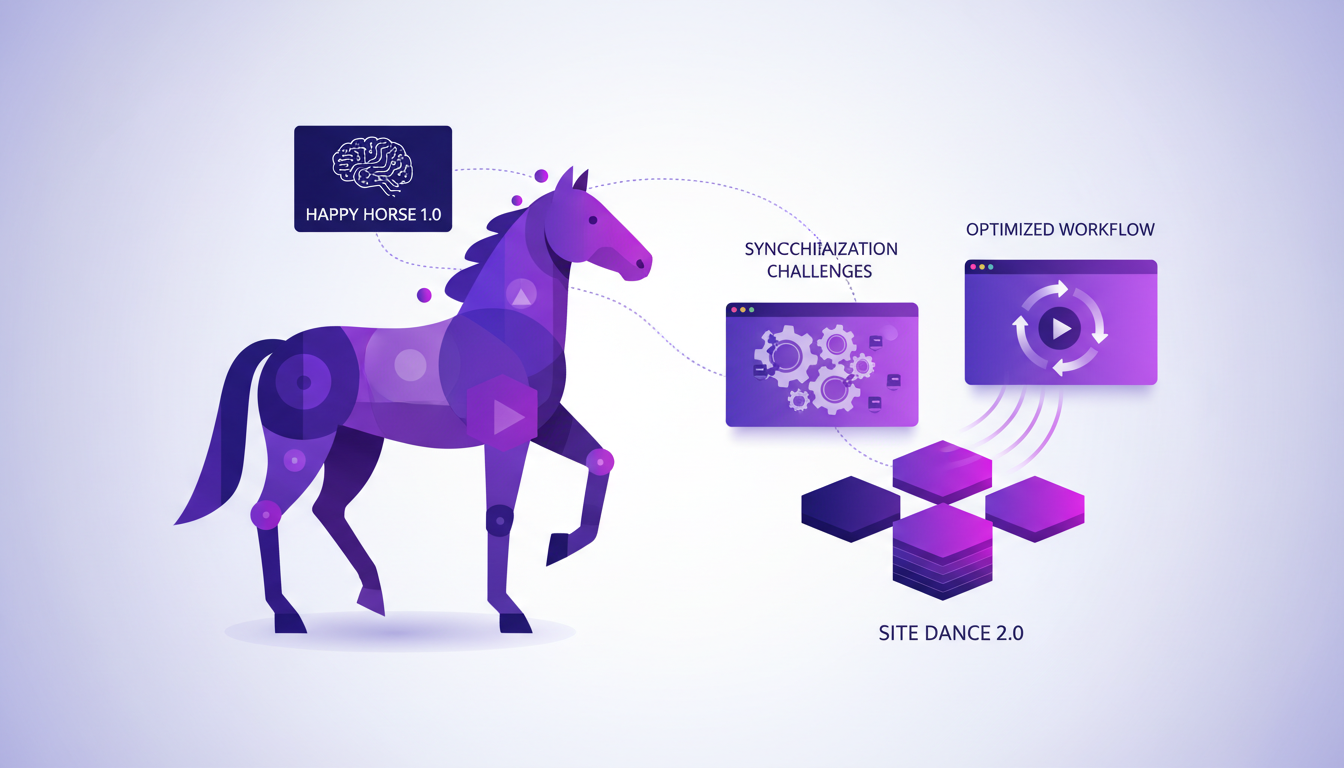

Video AI Models: Happy Horse 1.0 and Beyond

Video AI models pose unique challenges, particularly around synchronization. With Happy Horse 1.0, I had to fine-tune my strategy to optimize performance. This model outperformed previous ones, but real-time processing brings complexities of its own.

To optimize Happy Horse 1.0, I integrated Seedance 2.0, which compensates for some of its video processing limitations. But beware: too much complexity can hurt fluidity. For more practical tips, check out how I used AI to handle sales objections.

Practical tip: Avoid overloading your systems with overly demanding models. For real-time applications, simplicity is often the key to effective deployment.

Upcoming Releases: DeepSeek V4 and Lightricks' LTX 2.3

Upcoming releases like DeepSeek V4, with its 1 trillion (1,000 billion) parameters, and Lightricks' LTX 2.3 promise to shake up the AI landscape. With its MoE (Mixture of Experts) architecture, in which only a subset of the model's experts is activated for each input, DeepSeek V4 is expected to bring unprecedented capabilities. I'm eager to see how these innovations will integrate into our workflows.
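If the Mixture of Experts idea is new to you, the toy sketch below shows the core routing step: a gate scores every expert, only the top-k experts run, and their outputs are blended using the renormalized gate weights. This is a teaching example on scalar inputs, not the implementation of any model named here; `moe_forward`, `gate_weights`, and the lambda experts are all hypothetical.

```python
import math
from typing import Callable, List, Sequence

Expert = Callable[[float], float]


def softmax(scores: Sequence[float]) -> List[float]:
    # Subtract the max before exponentiating for numerical stability.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(
    x: float,
    gate_weights: Sequence[float],
    experts: Sequence[Expert],
    top_k: int = 2,
) -> float:
    # Toy linear gate: one score per expert for this input.
    scores = [w * x for w in gate_weights]
    # Sparse routing: keep only the indices of the top_k highest-scoring experts.
    ranked = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)
    chosen = ranked[:top_k]
    # Renormalize the gate over the chosen experts only.
    probs = softmax([scores[i] for i in chosen])
    # Only the chosen experts are evaluated; the rest cost nothing.
    return sum(p * experts[i](x) for p, i in zip(probs, chosen))


if __name__ == "__main__":
    experts = [lambda v: v + 1.0, lambda v: 2.0 * v, lambda v: -v]
    print(moe_forward(2.0, gate_weights=[1.0, 1.0, -5.0], experts=experts))
```

The point of the pattern is that total parameter count and per-input compute are decoupled: a model can hold a very large number of parameters while each input only pays for the few experts the gate selects.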

What interests me most is balancing innovation with practical deployment strategies. With LTX 2.3, I've tested synchronized video models that open new horizons for content creators. To be ready for them, you need meticulous resource planning and continuous adaptation.

In conclusion, these releases represent both a challenge and an opportunity. Staying current with the latest advancements is critical, and so is keeping an eye on technical limits and costs. In this ever-evolving field, adaptability is key.

Here's the deal: open-source AI models aren't just catching up, they're leading the charge. GLM 5.1 nails 58.4% on SWE-Bench Pro, outpacing ChatGPT (GPT-5.4) at 57.7%. And with DeepSeek V4 on the horizon, packing around 1 trillion parameters, we're talking serious power. But be aware: these leaps come with new technical challenges that need smart handling.

- Open-source models surpassing closed ones is a genuine game changer.

- The Chinese AI ecosystem with Huawei chips and Meta's M Spark model are key players.

- Video AI models and global competition are heating up. Don't overlook them.

Looking ahead: the open-source AI ecosystem is becoming increasingly crucial. The tools are there—make them work for you in your projects. But remember, each model has its limits—don't get caught off guard by technical trade-offs.

So dive into these models, experiment, and adjust your strategies. For a deeper understanding, I recommend watching the full video here. Trust me, it's worth the watch.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

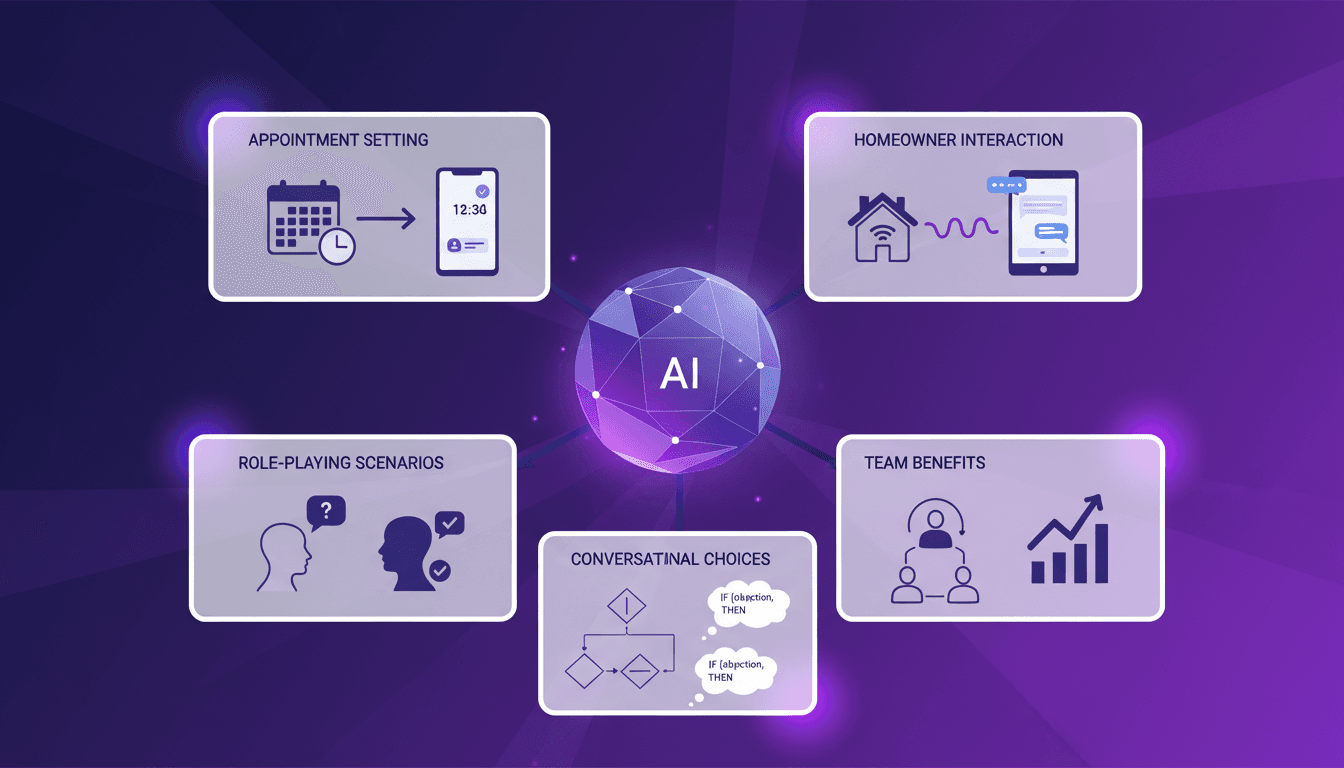

Handling Sales Objections with AI: Experience

I remember the first time I set up an AI lead manager to handle sales objections. It felt like handing over the keys to a new driver. The potential was massive, but I needed to see it in action to believe it. In today's lightning-fast sales world, efficiently responding to objections is crucial. AI lead managers are stepping up, promising to streamline processes and save time. But how do they really perform under pressure? I'll walk you through my integration process, role-playing scenarios, and interactions with homeowners. The benefits for teams are tangible, but watch out for the limits!

Psychological Effects of Chatbots: MIT Study

I was skeptical at first, but after diving into the MIT study on psychophantic chatbots, reality hit hard: 300 documented cases of AI-induced psychosis. People are losing themselves in AI interactions, and it’s not just hype. I connect the dots between psychological effects, legal and ethical implications, and strategies to navigate this complex space. Understanding AI limitations and biases isn't optional—it's crucial. It’s time we look at how to mitigate these risks and use these tools effectively.