Challenges in Building Eval Platforms


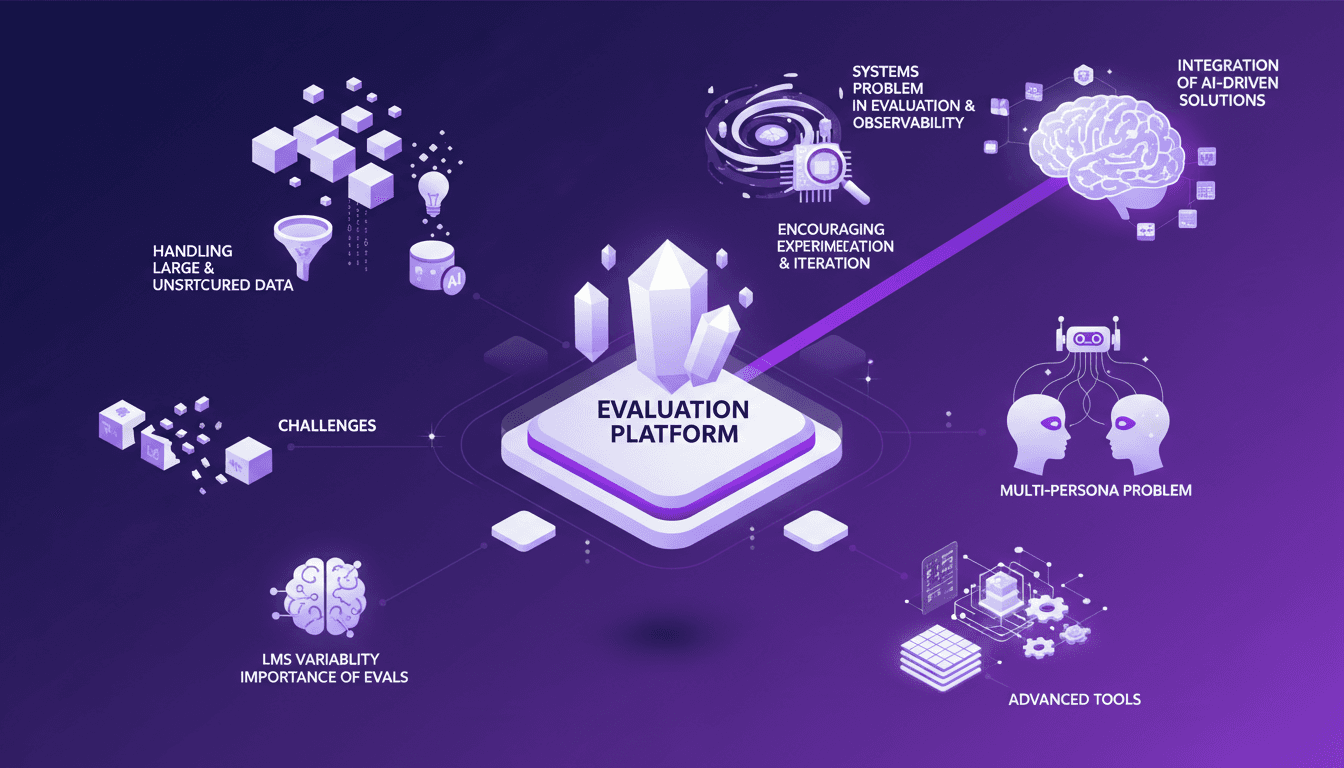

I remember the first time I tried to build an evaluation platform. It felt like trying to fit a square peg into a round hole. Honestly, the complexity, the moving parts, the constant need for iteration: it's a real beast. But once you get it right, the efficiency and insights you gain are game-changing. When you're building on LLMs, these platforms are crucial for understanding output variability and improving agent quality. But make no mistake: building them is no walk in the park. Let me walk you through the challenges I've faced, from moving off spreadsheets onto advanced tools to integrating AI-driven solutions. Evaluation is both a multi-persona problem and a real systems headache, which is why encouraging experimentation and iteration becomes vital. Capturing and handling large, unstructured data is another big piece of the puzzle. So buckle up: I'll show you how I've navigated this maze.

Tackling the Challenges of Eval Platforms

When I first dove into eval platforms, the main challenge was understanding how complex eval observability really is. When you're juggling multiple agents, each with its own set of tasks, you quickly realize that simply watching what they do isn't enough. It's a real puzzle, especially once you factor in the multi-persona problem in agent development: engineers and domain experts are both involved, each with their own expectations.
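
To make the multi-persona point concrete, here is a minimal sketch of a trace record that carries both an agent's nested steps and judgments from different personas. The `Span`, `Annotation`, and `Trace` classes and the persona labels are hypothetical, not the schema of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One step an agent took, e.g. a tool call or an LLM call (hypothetical schema)."""
    name: str
    input: str
    output: str
    children: list["Span"] = field(default_factory=list)


@dataclass
class Annotation:
    """A judgment attached to a trace by a specific persona."""
    persona: str  # illustrative labels such as "engineer" or "domain_expert"
    score: float  # 0.0 to 1.0
    comment: str = ""


@dataclass
class Trace:
    root: Span
    annotations: list[Annotation] = field(default_factory=list)

    def walk(self):
        """Yield every span depth-first so nested agent steps are visible."""
        stack = [self.root]
        while stack:
            span = stack.pop()
            yield span
            # Push children in reverse so they come out in their original order.
            stack.extend(reversed(span.children))

    def scores_by_persona(self) -> dict[str, float]:
        """Average score per persona, so disagreements between personas stand out."""
        totals: dict[str, list[float]] = {}
        for a in self.annotations:
            totals.setdefault(a.persona, []).append(a.score)
        return {p: sum(s) / len(s) for p, s in totals.items()}


trace = Trace(
    root=Span("agent", "refund request", "approved", children=[
        Span("lookup_order", "order 42", "found"),
        Span("llm_decide", "policy + order", "approve"),
    ]),
    annotations=[
        Annotation("engineer", 1.0, "tool calls succeeded"),
        Annotation("domain_expert", 0.0, "refund violates policy"),
    ],
)
print([s.name for s in trace.walk()])  # ['agent', 'lookup_order', 'llm_decide']
print(trace.scores_by_persona())       # {'engineer': 1.0, 'domain_expert': 0.0}
```

The same trace can look perfect to an engineer (every tool call succeeded) and wrong to a domain expert (the decision broke policy), which is exactly why one view of the data isn't enough.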

Next, there's the systems problem in evaluation and observability. Aligning technology with business processes is crucial. And spreadsheets? They just aren't enough anymore. I've seen teams drown in rows and columns before they realized how complex the task at hand really was. Data quickly becomes unmanageable, and believe me, you don't want to be the one explaining why results don't match up.

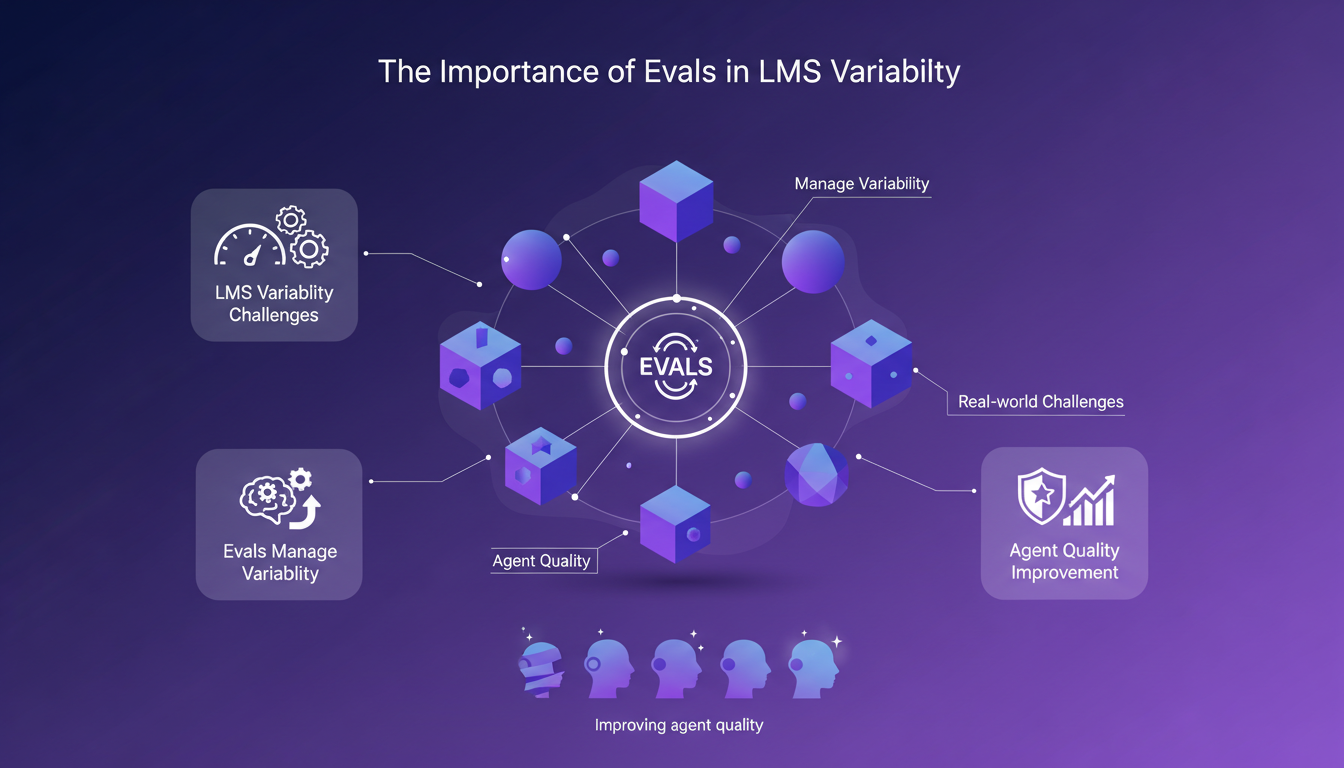

The Importance of Evals in LMS Variability

Evals are like a GPS for managing LLM variability: given how extreme that variability can be, they're an indispensable tool. They improve agent quality by providing constant feedback. This reminds me of when I went from 130 students to 60 and realized how important it was to adjust my teaching methods to maintain consistent quality. It's the same with LLMs: you need to continually adapt and evaluate.
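
Because the same input can produce different outputs from run to run, one common approach is to run each test case several times and look at the spread of scores instead of a single result. This is a minimal sketch: `measure_variability` is a hypothetical helper, the scorer is plain exact match, and the "flaky" agent is simulated with a seeded random generator purely for illustration.

```python
import random
import statistics
from typing import Callable


def measure_variability(
    agent: Callable[[str], str],
    cases: list[tuple[str, str]],
    trials: int = 5,
) -> dict[str, tuple[float, float]]:
    """Run each (input, expected) case `trials` times; return (mean, stdev) of its scores."""
    results = {}
    for prompt, expected in cases:
        # Exact match is a deliberately simple scorer: 1.0 if correct, else 0.0.
        scores = [1.0 if agent(prompt) == expected else 0.0 for _ in range(trials)]
        # pstdev (population stdev) is defined even for a single trial.
        results[prompt] = (statistics.mean(scores), statistics.pstdev(scores))
    return results


# Hypothetical agents used only to exercise the helper.
rng = random.Random(0)


def flaky_agent(prompt: str) -> str:
    # Answers correctly roughly 70% of the time.
    return "4" if rng.random() < 0.7 else "5"


def stable_agent(prompt: str) -> str:
    return "4"


print(measure_variability(stable_agent, [("2+2?", "4")], trials=10))  # {'2+2?': (1.0, 0.0)}
print(measure_variability(flaky_agent, [("2+2?", "4")], trials=10))
```

A nonzero standard deviation on a single case is the signal that one passing run proves very little.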

I learned valuable lessons from that change in class size, and the same idea applies to agents: when the setup changes, the way you evaluate each agent has to change too. That's where platforms like Braintrust prove invaluable, providing a framework for evaluating agent quality.
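
Eval frameworks such as Braintrust are organized, roughly, around three pieces: a dataset of inputs with expected outputs, a task that runs your agent, and scorers that grade each output. The library-free sketch below only shows that shape; it is not Braintrust's API, and `run_eval`, `exact_match`, `contains_expected`, and the stand-in `task` are hypothetical.

```python
from typing import Callable

Scorer = Callable[[str, str], float]  # (output, expected) -> score in [0, 1]


def exact_match(output: str, expected: str) -> float:
    return 1.0 if output.strip() == expected.strip() else 0.0


def contains_expected(output: str, expected: str) -> float:
    # Looser check: passes if the expected answer appears anywhere in the output.
    return 1.0 if expected.lower() in output.lower() else 0.0


def run_eval(
    data: list[dict],
    task: Callable[[str], str],
    scorers: list[Scorer],
) -> dict[str, float]:
    """Run `task` on every input and return the average score of each scorer."""
    totals = {s.__name__: 0.0 for s in scorers}
    for row in data:
        output = task(row["input"])
        for s in scorers:
            totals[s.__name__] += s(output, row["expected"])
    return {name: total / len(data) for name, total in totals.items()}


data = [
    {"input": "capital of France?", "expected": "Paris"},
    {"input": "capital of Italy?", "expected": "Rome"},
]


def task(question: str) -> str:
    # Stand-in for a real agent call.
    return "The capital is Paris." if "France" in question else "Milan"


print(run_eval(data, task, [exact_match, contains_expected]))
# {'exact_match': 0.0, 'contains_expected': 0.5}
```

Keeping several scorers side by side shows how much the verdict depends on how strictly you grade, which is one reason the choice of scorer deserves as much attention as the agent itself.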

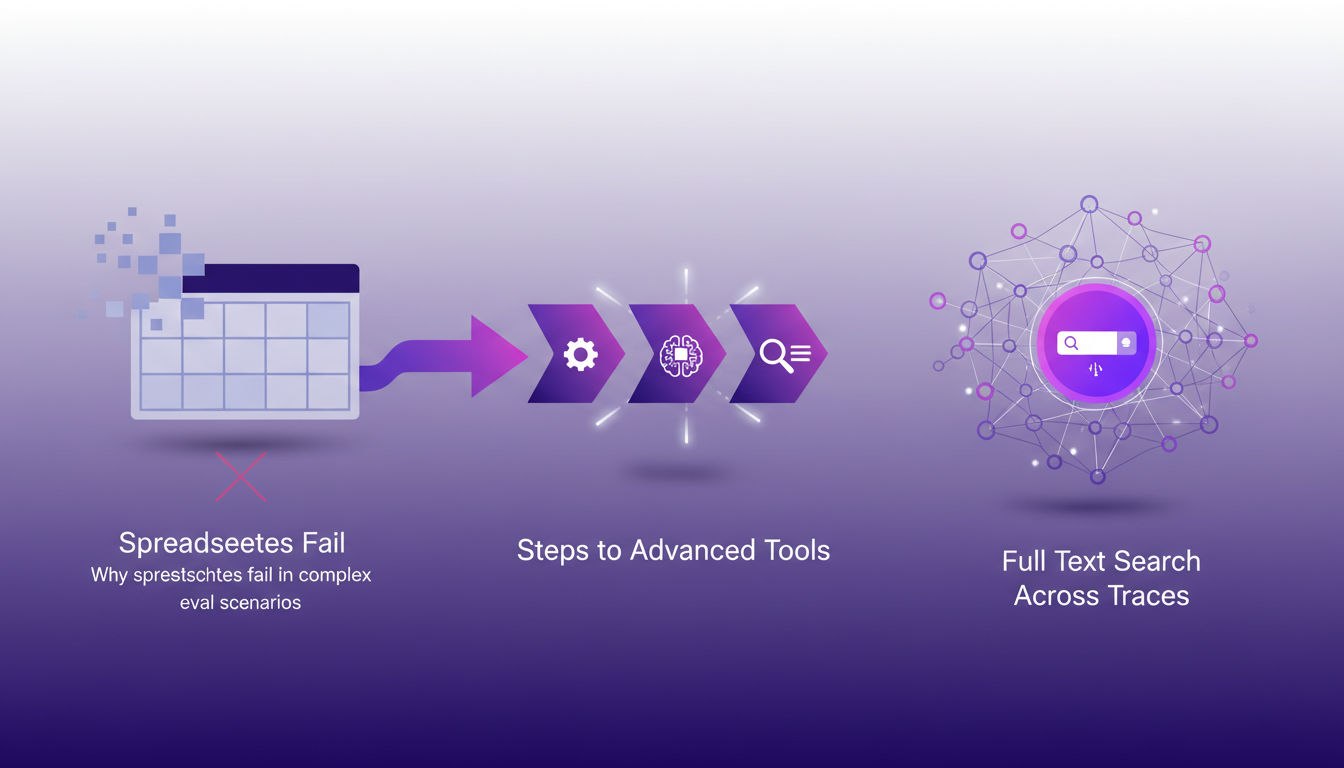

Transitioning from Spreadsheets to Advanced Tools

So why do spreadsheets fail in complex eval scenarios? Because they stop scaling as soon as you need to compare experiments and manage iterations. Moving to purpose-built tools is essential. The first capability I'd recommend is full-text search across traces. It's a game changer.
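
Full-text search is what lets you jump from "the agent said something odd about refunds" to every trace that mentions refunds. Production platforms use a real search engine; this sketch only illustrates the underlying idea, an inverted index from tokens to trace IDs with AND semantics across query terms. The `TraceIndex` class, trace IDs, and trace text are made up for illustration.

```python
import re
from collections import defaultdict


class TraceIndex:
    """Tiny inverted index: token -> set of IDs of the traces containing it."""

    def __init__(self) -> None:
        self._postings: dict[str, set[str]] = defaultdict(set)

    @staticmethod
    def _tokenize(text: str) -> list[str]:
        # Lowercase and split on anything that is not a letter or digit.
        return re.findall(r"[a-z0-9]+", text.lower())

    def add(self, trace_id: str, text: str) -> None:
        for token in self._tokenize(text):
            self._postings[token].add(trace_id)

    def search(self, query: str) -> list[str]:
        """Return IDs of traces containing every query term, sorted for stable output."""
        terms = self._tokenize(query)
        if not terms:
            return []
        # .get avoids inserting empty entries into the defaultdict for unknown terms.
        sets = [self._postings.get(t, set()) for t in terms]
        return sorted(set.intersection(*sets))


index = TraceIndex()
index.add("t1", "User asked for a refund; agent called lookup_order")
index.add("t2", "Agent refused the refund: order too old")
index.add("t3", "User asked about shipping times")

print(index.search("refund"))        # ['t1', 't2']
print(index.search("asked refund"))  # ['t1']
```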

But watch out for pitfalls during the transition. Involving both technical and non-technical team members is crucial; otherwise, you risk losing performance and evaluation persistence. Experiment, but don't overdo it.
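
Persisting every experiment's per-case scores is what makes it possible to compare runs later, and it gives technical and non-technical teammates the same results to look at. A minimal sketch, assuming SQLite (in memory here) as the store; the table layout, helper names, and experiment names are hypothetical.

```python
import sqlite3


def init(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results ("
        " experiment TEXT, case_id TEXT, score REAL,"
        " PRIMARY KEY (experiment, case_id))"
    )


def record(conn: sqlite3.Connection, experiment: str, scores: dict[str, float]) -> None:
    """Persist one experiment's per-case scores (re-running overwrites old rows)."""
    conn.executemany(
        "INSERT OR REPLACE INTO results VALUES (?, ?, ?)",
        [(experiment, case_id, score) for case_id, score in scores.items()],
    )
    conn.commit()


def compare(conn: sqlite3.Connection, baseline: str, candidate: str) -> dict[str, list[str]]:
    """Classify cases present in both experiments as improved, regressed or unchanged."""
    rows = conn.execute(
        "SELECT b.case_id, b.score, c.score FROM results b"
        " JOIN results c ON b.case_id = c.case_id"
        " WHERE b.experiment = ? AND c.experiment = ?"
        " ORDER BY b.case_id",
        (baseline, candidate),
    ).fetchall()
    out: dict[str, list[str]] = {"improved": [], "regressed": [], "unchanged": []}
    for case_id, old, new in rows:
        key = "improved" if new > old else "regressed" if new < old else "unchanged"
        out[key].append(case_id)
    return out


conn = sqlite3.connect(":memory:")
init(conn)
record(conn, "prompt-v1", {"c1": 1.0, "c2": 0.0, "c3": 1.0})
record(conn, "prompt-v2", {"c1": 1.0, "c2": 1.0, "c3": 0.0})
print(compare(conn, "prompt-v1", "prompt-v2"))
# {'improved': ['c2'], 'regressed': ['c3'], 'unchanged': ['c1']}
```

Note that both versions have the same average score; only the per-case comparison reveals that one case got fixed while another broke, which is exactly what a spreadsheet summary tends to hide.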

Handling Large and Unstructured Data

Managing large datasets calls for solid strategies, and unstructured data in particular needs the right tools and techniques. For example, I've used NoSQL databases to balance data volume against processing speed.
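
Trace payloads are often deeply nested and schema-less, because different agents log different fields. One common technique, shown here as a plain-Python sketch rather than a NoSQL database, is to flatten each document into dotted key paths so arbitrary fields can be filtered without declaring a schema up front. The `flatten` and `find` helpers and the field names are hypothetical.

```python
from typing import Any


def flatten(doc: Any, prefix: str = "") -> dict[str, Any]:
    """Flatten nested dicts/lists into {"a.b.0.c": value} pairs."""
    items: dict[str, Any] = {}
    if isinstance(doc, dict):
        for key, value in doc.items():
            items.update(flatten(value, f"{prefix}{key}."))
    elif isinstance(doc, list):
        for i, value in enumerate(doc):
            items.update(flatten(value, f"{prefix}{i}."))
    else:
        # Leaf value: drop the trailing dot from the accumulated path.
        items[prefix[:-1]] = doc
    return items


def find(docs: list[dict], path: str, value: Any) -> list[dict]:
    """Return the documents whose flattened form has `path` equal to `value`."""
    return [d for d in docs if flatten(d).get(path) == value]


traces = [
    {"id": "t1", "metadata": {"model": "a", "tags": ["prod"]}, "spans": [{"tool": "search"}]},
    {"id": "t2", "metadata": {"model": "b"}, "error": {"type": "timeout"}},
]
print(flatten(traces[0]))
# {'id': 't1', 'metadata.model': 'a', 'metadata.tags.0': 'prod', 'spans.0.tool': 'search'}
print([d["id"] for d in find(traces, "error.type", "timeout")])  # ['t2']
```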

I've found that integrating real-time processing can significantly improve the responsiveness of evaluations. The trick is not to get overwhelmed by the volume and to orchestrate your processes properly.
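
One way to keep evaluations responsive as traces stream in is to update summary statistics incrementally instead of recomputing over the full history each time. The sketch below uses Welford's online algorithm for mean and variance; the `monitor` helper, its alert threshold, and the score stream are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class RunningStats:
    """Welford's online algorithm: constant memory, one O(1) update per score."""
    n: int = 0
    mean: float = 0.0
    _m2: float = 0.0  # running sum of squared deviations from the mean

    def update(self, x: float) -> None:
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def variance(self) -> float:
        # Sample variance; undefined for fewer than two points, so report 0.0.
        return self._m2 / (self.n - 1) if self.n > 1 else 0.0

    @property
    def stdev(self) -> float:
        return math.sqrt(self.variance)


def monitor(scores, alert_below: float = 0.8, min_samples: int = 5):
    """Consume scores as they arrive and yield an alert whenever the running mean is low."""
    stats = RunningStats()
    for score in scores:
        stats.update(score)
        if stats.n >= min_samples and stats.mean < alert_below:
            yield f"alert after {stats.n} traces: mean={stats.mean:.2f}"


print(list(monitor([1, 1, 1, 1, 1, 0, 0])))  # ['alert after 7 traces: mean=0.71']
```

Because `monitor` is a generator, it works the same whether `scores` is a list or an unbounded stream fed by a queue.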

Integrating AI-driven Solutions

Finally, how does AI enhance evaluation platforms? By automating certain tasks and providing more accurate analyses. For instance, I've integrated machine learning algorithms to analyze data faster, improving the quality of evaluations.
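
One common way AI automates evaluation is an LLM-as-a-judge scorer: a model receives a rubric plus the output to grade, and its reply is parsed into a score. In this sketch, `call_model` is a hypothetical stand-in for whatever model client you use, and the prompt wording and 1-to-5 scale are assumptions. The important part is parsing defensively, since judge replies don't always follow instructions.

```python
import re
from typing import Callable, Optional

JUDGE_PROMPT = """You are grading an assistant's answer.
Rubric: {rubric}
Question: {question}
Answer: {answer}
Reply with a line of the form "SCORE: <1-5>" followed by a short reason."""


def llm_judge(
    call_model: Callable[[str], str],  # hypothetical client: prompt in, text out
    rubric: str,
    question: str,
    answer: str,
) -> Optional[float]:
    """Return the judge's score normalized to [0, 1], or None if it can't be parsed."""
    reply = call_model(JUDGE_PROMPT.format(rubric=rubric, question=question, answer=answer))
    match = re.search(r"SCORE:\s*([1-5])\b", reply)
    if match is None:
        return None  # surface unparseable replies instead of guessing a score
    return (int(match.group(1)) - 1) / 4


def fake_model(prompt: str) -> str:
    # Canned reply so the example runs without an API key.
    return "SCORE: 4\nMostly correct but misses one edge case."


print(llm_judge(fake_model, "Is the answer factually correct?", "2+2?", "4"))  # 0.75
print(llm_judge(lambda p: "Looks good to me!", "rubric", "q", "a"))            # None
```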

But there are limits. Sometimes, AI-driven solutions can be costly and complex to implement. Encourage experimentation and iteration, but know when to pull back.
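
To keep AI-driven scoring from getting too costly, one simple lever is to estimate the cost up front and, when judging every trace would blow the budget, send only a reproducible random sample to the judge. The helpers below are hypothetical, the token counts and per-token price are placeholder numbers rather than real pricing, and the budget is expressed in tokens to keep the sampling arithmetic in integers.

```python
import random


def estimate_cost(n_traces: int, tokens_per_call: int, usd_per_1k_tokens: float) -> float:
    """Cost of judging every trace once: traces x tokens per call x price per token."""
    return n_traces * tokens_per_call * usd_per_1k_tokens / 1000


def sample_within_budget(
    trace_ids: list[str], tokens_per_call: int, token_budget: int, seed: int = 0
) -> list[str]:
    """Return all traces if they fit the token budget, otherwise a random subset that does."""
    max_traces = token_budget // tokens_per_call
    if max_traces >= len(trace_ids):
        return list(trace_ids)
    # Seeded so the same subset is judged when a run is repeated.
    return random.Random(seed).sample(trace_ids, max_traces)


ids = [f"t{i}" for i in range(100)]
print(estimate_cost(10_000, 2_000, 0.01))
print(len(sample_within_budget(ids, 2_000, 50_000)))  # 25
```

Sampling trades coverage for cost; judging the same seeded subset on every run keeps experiments comparable even when you can't afford to score everything.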

Key Takeaways:

- Avoid data overload by focusing on essentials.
- Use advanced tools to overcome spreadsheet limitations.
- Integrate AI in a controlled manner to enhance processes.

Building evaluation platforms is tough, but with the right approach and tools, you can streamline the process and get much more out of your LLM applications. First, I moved from spreadsheets to advanced tools. It's a game changer, but watch those costs: they can add up fast. Then it's all about iterating and adapting. I started with a class of 130 students and, through iteration, got it down to 60 for optimal efficiency. Finally, the multi-persona problem in agent development can be a real pain if you don't tackle it head-on. Integrating AI, on the other hand, can be a huge asset. Ready to take your evaluation platform to the next level? Start experimenting with AI integration and see the difference it makes. For deeper insights, I recommend Phil Hetzel's video on YouTube.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

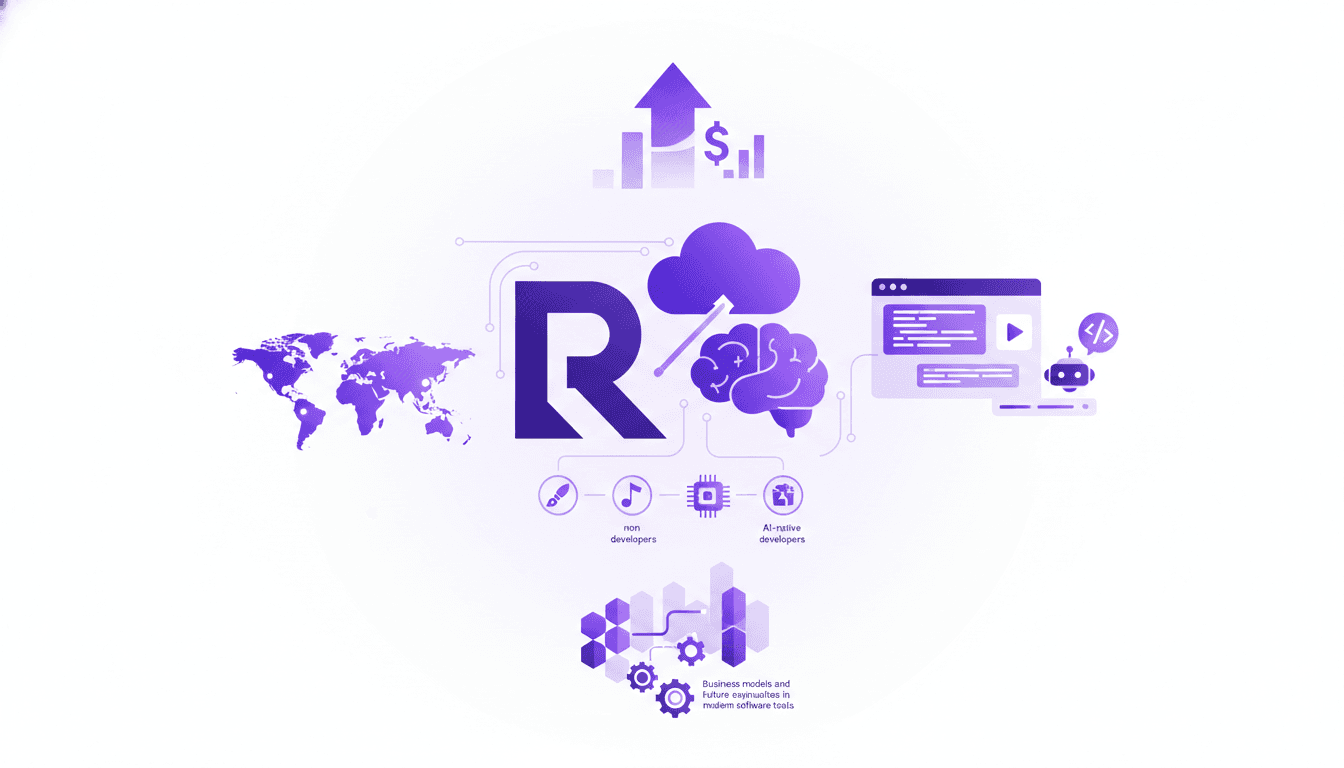

Replit: Democratizing App Development

I've been in the trenches with Replit, and trust me, the way they're reshaping software development is something you need to see. With their mission to democratize app creation, they're opening up a future where programming becomes accessible to all. Their recent $400 million funding, boosting them to a $9 billion valuation, is just the start. Replit isn't just reinventing development for seasoned devs, they're driving towards a future where non-developers and AI-native devs can shine. It's a true paradigm shift, and you'll want to witness it firsthand.

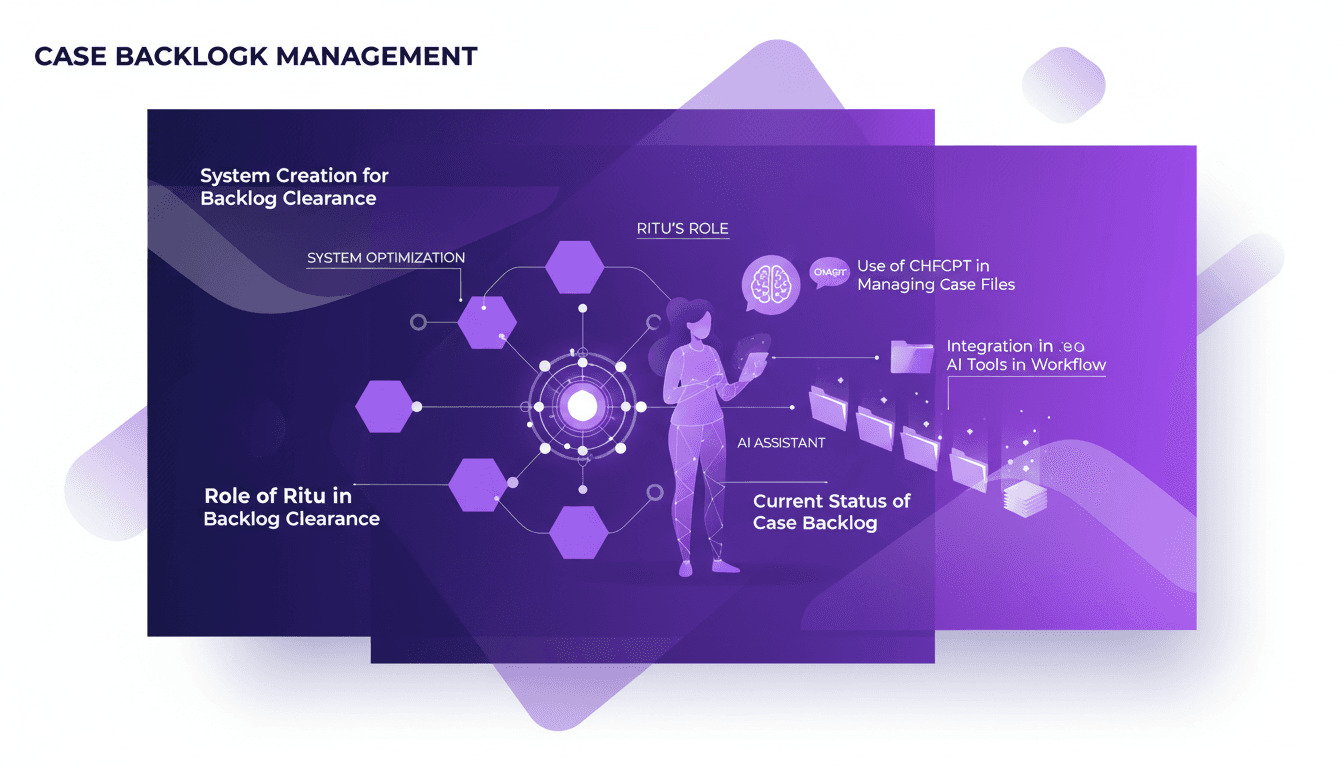

Mastering Case Files with ChatGPT: Efficient Workflow

I've been in the trenches of backlog management, and hitting that zero backlog is a game changer. Using ChatGPT, I built a system that flipped my workflow on its head. First, I integrated Ritu to optimize file processing, then connected ChatGPT to automate and speed things up. The result? Zero backlog and skyrocketed efficiency. But watch out, orchestrating your tools properly is key to avoiding performance pitfalls. If you're looking to master your case files, this one's for you.

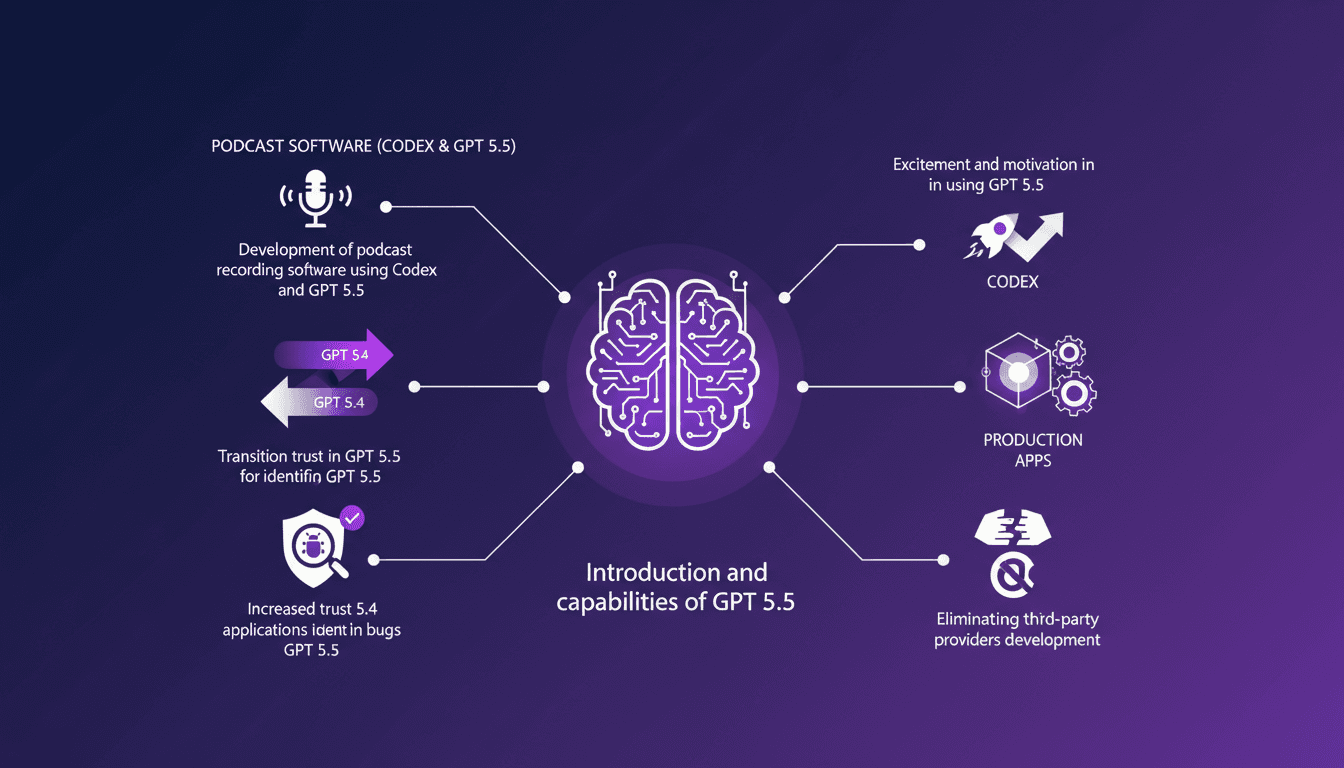

Building with GPT 5.5 and Codex: Cut the Third Parties

I dove headfirst into GPT 5.5, and what a ride it's been! From building podcast software to cutting out third-party providers, this version has changed the game for me. With GPT 5.5, we're not just talking upgrades; we're talking a whole new level of trust and capability. First, I integrated Codex to develop a podcast app and cut the middlemen out. Then, bugs? GPT 5.5 spots them like a pro. Tired of third-party solutions bogging down your productivity? This tool might just be your new secret weapon. Let's dive into some real-world applications together.

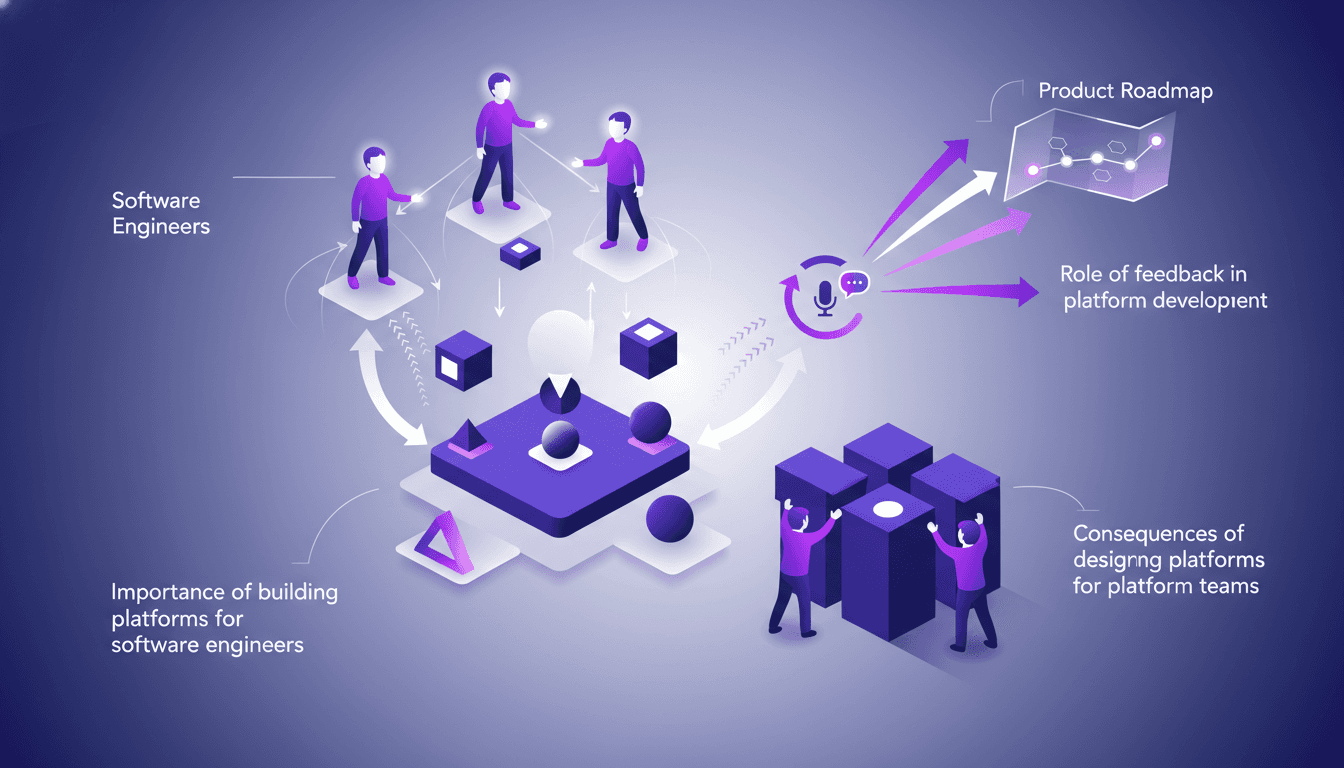

Building Internal Platforms for Engineers

I've been there—stuck in a loop where the platform serves everyone but the folks who actually build with it. Realizing that if your internal platform isn't designed for software engineers means you're missing the mark. In software development, internal platforms are the backbone, but often they're built for the wrong audience. Let's dive into why platforms should cater to software engineers, the pitfalls of designing for platform teams, and how feedback can steer the ship in the right direction. By engineering a platform for those who create and code, we're changing the game. Join me behind the scenes to explore the exchanges between software engineers and platform teams, and see how feedback can reshape a product roadmap.

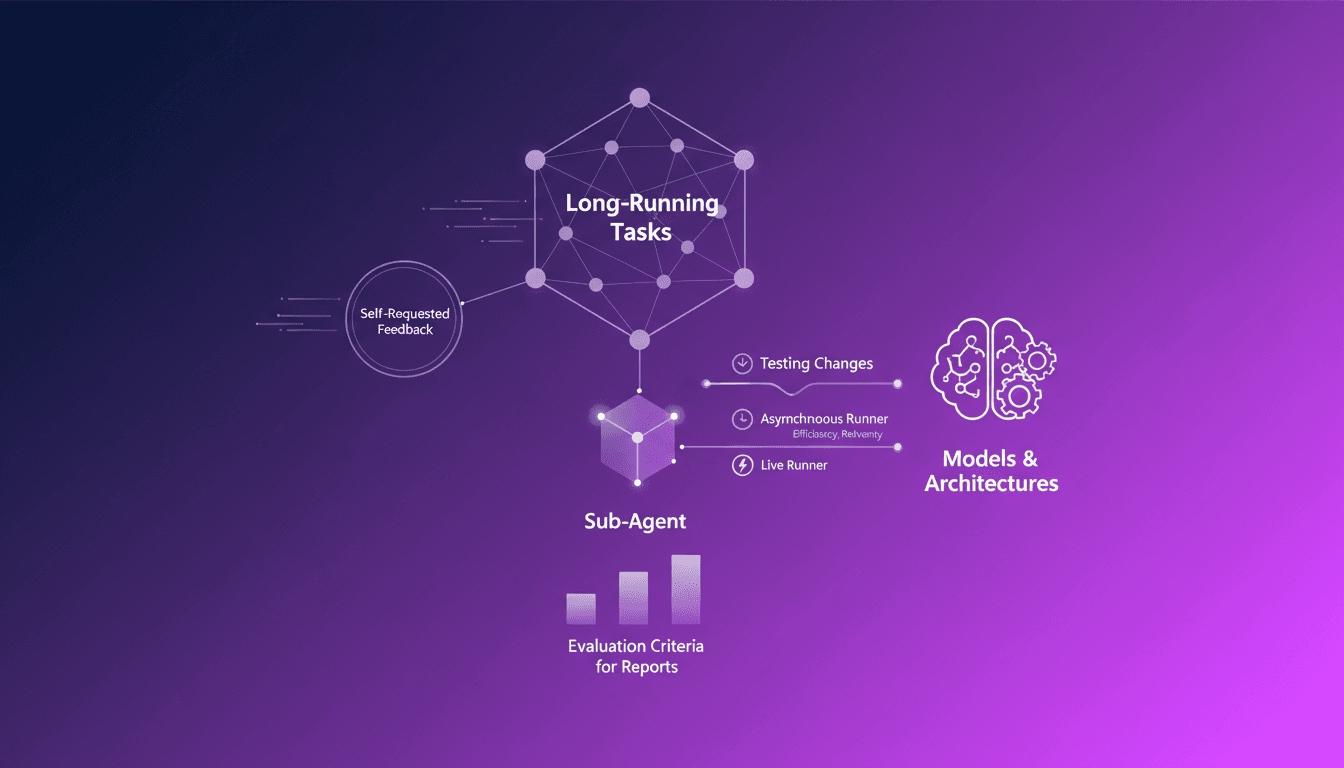

AI Agents: Requesting Feedback Efficiently

Ever been stuck in a loop of endless tasks, unsure if you're heading in the right direction? I have, and that's where AI agents asking for feedback come into play. In this podcast, I'll walk you through how I orchestrate this process. In the AI world, long-running tasks can be a nightmare without proper feedback mechanisms. Sub-agents really shine here, autonomously requesting feedback, making the process efficient and less error-prone. We'll dive into self-requested feedback for long-running tasks, the role of sub-agents in the feedback process, evaluation criteria for reports, and how I use asynchronous and live runners to test changes in models and architectures.