Transformers in Vision: Evolution and Challenges

I remember perfectly the first time I left Convolutional Neural Networks behind and dove into Transformers. It felt like stepping into uncharted territory, full of promise but also full of traps. If you're in the field, you know what I mean. Transformers have completely disrupted vision tasks, but deploying them effectively requires understanding how they evolved and where each variant fits. I'll share my journey, highlight key moments, and offer practical insights. We'll cover the Vision Transformer (ViT) and pretraining techniques, the Swin and ConvNeXt models, and the deployment challenges of the SAM series models. And of course, I'll tell you how I used Roboflow's RF100VL dataset to boost model flexibility. So, if you're ready to dive into the details (and avoid some of the pitfalls I ran into), stick with me.

From CNNs to Transformers: A Paradigm Shift

I've spent years tinkering with Convolutional Neural Networks (CNNs) for my computer vision projects. They dominated vision tasks for a long time, but they hit limits on patterns that depend on long-range context. CNNs rely on strong inductive biases, namely locality and translation equivariance, which makes them great at picking up local features wherever they appear in the image. Then came transformers with their attention mechanisms, where every part of the image can attend to every other part, and suddenly everything changed. We could process visual data in a far more flexible way. This forced me to rethink model architectures and training processes. Of course, there's a trade-off: increased computational demand versus improved accuracy. And believe me, you feel it on the electricity bill.
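To make the contrast concrete, here is a minimal sketch of single-head self-attention in plain Python. It is a simplification for illustration only: the query, key, and value projections are assumed to be the identity (real models learn them), and the function names are mine. The point is that each output token is a weighted mix of all input tokens, with no built-in notion of locality.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head self-attention with identity Q/K/V projections (assumption)."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        # Scaled dot product of this token against every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        # Output is a weighted average of all tokens (the "values").
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, tokens))
            for j in range(dim)
        ])
    return outputs


# Two toy "patch embeddings"; each output row mixes both of them.
mixed = self_attention([[1.0, 0.0], [0.0, 1.0]])
print(mixed)
```

A convolution, by comparison, would only ever combine a token with its immediate neighbours, which is exactly the inductive bias that attention gives up.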

Vision Transformer (ViT): Breaking Down the Model

With the Vision Transformer (ViT), the image is cut into 16x16-pixel patches and fed to the transformer as a sequence of patches. This reduces inductive bias, which is a double-edged sword: on one hand, it allows for more flexible learning, but on the other, training can be chaotic without proper pretraining. I've experimented with self-supervised learning and Masked Autoencoder (MAE) techniques to strengthen this pretraining. But watch out, scaling issues come fast. Self-attention cost grows with the square of the number of patches, and the number of patches grows with the square of the image side length n, so attention compute scales with n to the fourth power in resolution. It can be a real pitfall if you're not careful.
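The arithmetic behind that fourth-power scaling is easy to check. The sketch below assumes square images, non-overlapping 16x16 patches, and counts only token-to-token pairs in one global attention layer (it ignores the class token, the embedding dimension, and the MLP blocks). The helper names are mine, not from any library.

```python
def num_patches(side: int, patch: int = 16) -> int:
    """Number of tokens for a square image cut into non-overlapping patches."""
    if side % patch != 0:
        raise ValueError("side must be divisible by the patch size")
    return (side // patch) ** 2


def attention_pairs(side: int, patch: int = 16) -> int:
    """Token-to-token interactions in one global self-attention layer."""
    tokens = num_patches(side, patch)
    return tokens * tokens


for side in (224, 448, 896):
    print(side, num_patches(side), attention_pairs(side))
```

Doubling the side length from 224 to 448 quadruples the token count (196 to 784) and multiplies the attention pairs by 16, which is the n to the fourth power behaviour in practice.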

Swin and ConvNeXt: The Next Steps in Model Evolution

Swin models introduced hierarchical feature maps and compute attention inside local windows rather than across the whole image, which improves efficiency. It's a real relief when you see the compute bill. Then came ConvNeXt, which revisits convolutional networks with transformer-era design choices, including a 4x4 "patchify" stem. Both aim for a balance between performance and computational cost. It's like choosing between a sports car and a hybrid: it depends on your task-specific requirements and the resources you have available. And honestly, the right pick is not always obvious.
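Here is a rough sketch of why the hierarchical design is cheaper. It assumes a 4x4 stem followed by 2x downsampling between four stages, and a 7x7 attention window; those defaults and the function names are my assumptions for illustration, so check them against the configuration you actually use.

```python
def stage_resolutions(side: int, stem_stride: int = 4, num_stages: int = 4):
    """Feature-map side length at each stage of a hierarchical backbone."""
    sizes = []
    stride = stem_stride
    for _ in range(num_stages):
        sizes.append(side // stride)
        stride *= 2  # each later stage halves the spatial resolution
    return sizes


def global_attention_pairs(feature_side: int) -> int:
    """Pairs if every token attended to every other token."""
    tokens = feature_side * feature_side
    return tokens * tokens


def windowed_attention_pairs(feature_side: int, window: int = 7) -> int:
    """Pairs if attention is limited to non-overlapping window x window blocks."""
    windows = (feature_side // window) ** 2
    tokens_per_window = window * window
    return windows * tokens_per_window ** 2


sizes = stage_resolutions(224)
print(sizes)
print(global_attention_pairs(sizes[0]) // windowed_attention_pairs(sizes[0]))
```

At the first stage of a 224-pixel input (a 56x56 feature map), windowed attention needs 64 times fewer token pairs than global attention would, and the cost now grows linearly with the number of tokens instead of quadratically.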

SAM Models and Deployment Challenges

The SAM series models are impressively flexible, but deploying them is complicated. Getting to something like a 40x speed-up takes careful tuning and a solid understanding of the model's internals. Deployment often hits snags from hardware limitations and integration issues. A tip from experience: it is often faster to start with a simpler model, iterate, and scale up from there.
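Whatever speed-up you are chasing, measure it the same way every time. Below is a minimal timing harness, not a SAM-specific tool: `heavy_model` and `light_model` are hypothetical stand-ins for a large and a small model, and the warmup and run counts are arbitrary defaults.

```python
import statistics
import time


def median_latency(fn, *args, warmup: int = 3, runs: int = 20) -> float:
    """Median wall-clock seconds per call, after a few warmup calls."""
    for _ in range(warmup):
        fn(*args)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(*args)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_fn, candidate_fn, *args, **kwargs) -> float:
    """How many times faster the candidate is than the baseline."""
    baseline = median_latency(baseline_fn, *args, **kwargs)
    candidate = median_latency(candidate_fn, *args, **kwargs)
    return baseline / candidate


# Hypothetical stand-ins: replace with your real inference calls.
def heavy_model(x):
    return sum(i * i for i in range(200_000)) + x


def light_model(x):
    return sum(i * i for i in range(5_000)) + x


print(f"speed-up: {speedup(heavy_model, light_model, 1):.1f}x")
```

I use the median rather than the mean so a single slow run does not skew the number. On a GPU you would also need to wait for the device to finish before stopping the timer, otherwise you only measure how long it takes to queue the work.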

Roboflow's RF100VL Dataset: Flexibility in Action

Roboflow's RF100VL dataset provides a robust foundation for model training. Its diversity is the point: training against varied data helps a model adapt to diverse tasks and improves its robustness. But don't try to use everything the dataset offers; focus on the data relevant to your specific application. I've seen teams get lost in unnecessary data, and trust me, it costs.
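As a sketch of what "focus on relevant data" can look like, the snippet below trims a COCO-style annotation dictionary down to the classes you care about. It assumes your annotations are in COCO-style JSON (`images`, `annotations`, `categories`); whether your copy of RF100VL is in that layout is an assumption to verify, and the toy data and `filter_coco` helper are made up for illustration.

```python
def filter_coco(coco: dict, keep_names: set) -> dict:
    """Keep only the given category names and the images that contain them."""
    keep_ids = {c["id"] for c in coco["categories"] if c["name"] in keep_names}
    annotations = [a for a in coco["annotations"] if a["category_id"] in keep_ids]
    used_images = {a["image_id"] for a in annotations}
    return {
        "images": [im for im in coco["images"] if im["id"] in used_images],
        "annotations": annotations,
        "categories": [c for c in coco["categories"] if c["id"] in keep_ids],
    }


# Toy example (hypothetical data, not taken from any real dataset).
toy = {
    "images": [{"id": 1, "file_name": "a.jpg"}, {"id": 2, "file_name": "b.jpg"}],
    "categories": [{"id": 1, "name": "car"}, {"id": 2, "name": "tree"}],
    "annotations": [
        {"id": 1, "image_id": 1, "category_id": 1},
        {"id": 2, "image_id": 2, "category_id": 2},
        {"id": 3, "image_id": 1, "category_id": 2},
    ],
}

subset = filter_coco(toy, {"car"})
print(len(subset["images"]), len(subset["annotations"]))
```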

Transformers have really revolutionized vision models. Here's what I've learned while integrating them into my projects:

- I started by dissecting the Vision Transformer (ViT) with its 16 by 16 patch size, and quickly saw how switching to Swin and ConvNeXt, with their 4 by 4 patches, optimized performance.

- Be careful with how compute scales with resolution in transformers: it grows with n to the fourth power, which can really load up the system, so you need to manage resolution deliberately.

- The SAM series models bring intriguing solutions, but deployment challenges remain a real headache that I haven't fully solved yet.

Looking ahead, I believe continuing to explore and experiment with these models could further push the boundaries of what we can do with computer vision. If you're ready to dive into the world of transformers, start experimenting with your own datasets and models. Share your insights with the community, because that's how we move forward!

I encourage you to watch the original video "How Transformers Finally Ate Vision" for deeper insights. Trust me, you won't regret it.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Google's $40B Cloud Move: Impacts Unveiled

I never thought I'd see Google throw $40 billion at a cloud competitor. But here we are, and it's shaking up the tech landscape like never before. I'm diving into how this move, alongside advancements in AI and robotics, is reshaping the industry. We'll unpack Google's massive investment, explore the performance of cutting-edge AI models like Happy Horse and Grock 4.3, and examine the latest innovations in robotics. We'll also touch on tech giants' infrastructure investments and a new approach to AI collaboration.

Evolving AI Models: Limits and Opportunities

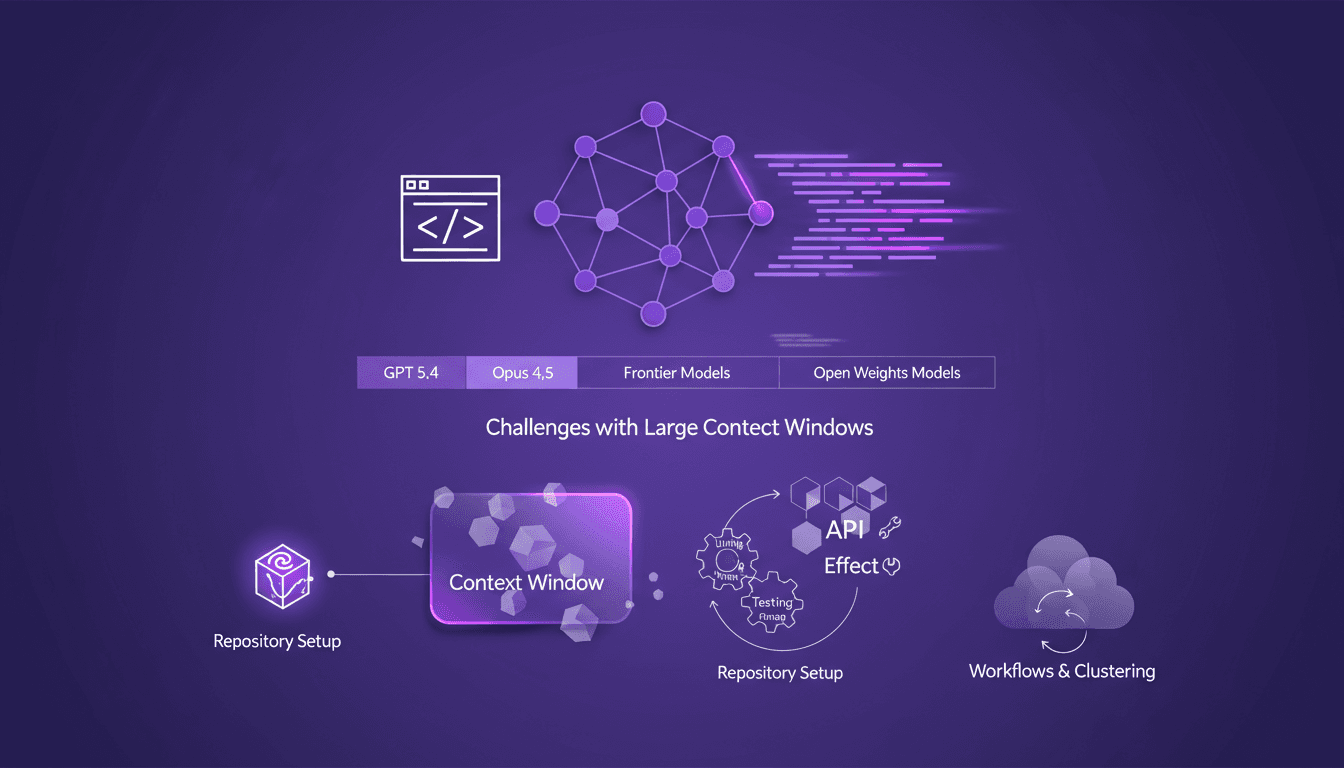

I still remember setting up my first repository for AI-driven code generation. It was a game changer, but also a minefield of potential pitfalls. In this talk, I'm diving into how AI models like GPT 5.4 and Opus 4.5 are reshaping our workflows and where they still stumble. Shifting from hand-coding to automated code generation is what I live daily in the field. But watch out—large context windows can be a real headache. Your repository setup is critical to avoid pitfalls, and I'll share how I use Effect and other tools for efficient API development. Buckle up, because understanding these models and their limitations is crucial for anyone navigating AI-assisted development.

Optimizing AI Models: Our Practical Approach

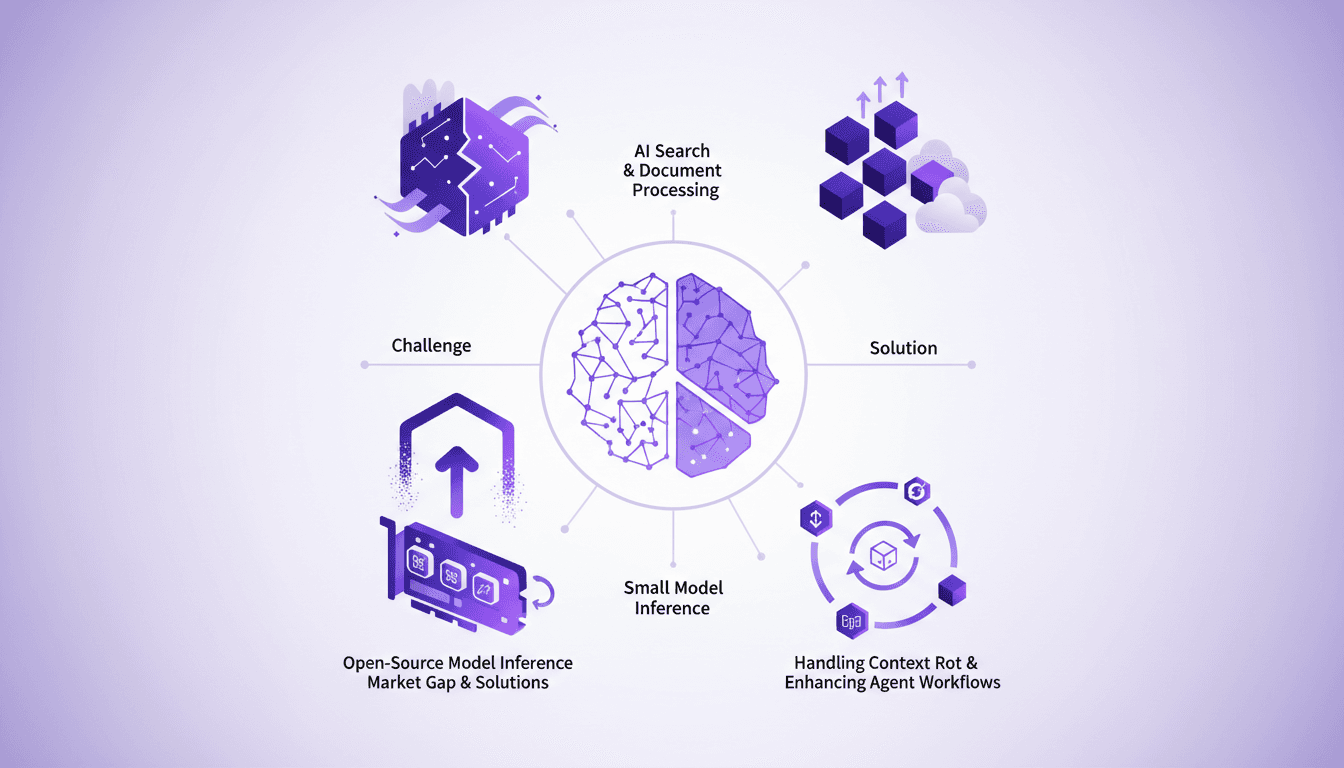

I saw a gap in the market for small model inference, so I decided to build the infrastructure myself. With over 3 million models on Hugging Face, the lack of effective infrastructure was glaring. Why is this important? Because small models play a crucial role in AI search and document processing. And the challenges? There were plenty, but I tackled them head-on. From context rot to optimizing agent workflows, each step was a learning curve. I orchestrated model swapping for efficient GPU usage while supporting various open-source architectures. In short, we filled a market gap with a robust, scalable solution.

Gemma 4: Transforming Edge AI Deployment

I dove into deploying AI on edge devices with Gemma 4, and let me tell you, it's a game changer. But there are nuances you need to know to make it work seamlessly. Gemma 4 brings new capabilities for processing data locally, reducing latency and bandwidth usage. I set up the Light RT deployment framework, and the performance boost with NPU acceleration is impressive. But don't get too excited just yet — cross-platform integration requires some tweaks. Community support and open-source contributions are key assets. Want to maximize these benefits? I'm sharing what I've learned from the field.

Ralph Loops: Building Simple, Effective AI

I remember the first time I built a Ralph Loop. It was like finding a missing puzzle piece in AI-driven development. Not just theory, but a real workflow that changed how I orchestrate tasks. These loops streamline automation using AI models like GPT 5.8, offering a practical, no-nonsense approach. Imagine orchestrating tasks seamlessly while addressing the challenges and benefits of using AI in software development. In this article, I'll take you through Ralph Loops, their practical applications, and how they can truly transform your workflow. Let's dive into the limits, security and ethical considerations, and scaling these processes in team environments. Yes, the future of AI in automating complex workflows is already here. Ready to dive in?