Reducing Overcaveating in GPT-5.3

I remember the first time I ran into overcaveating in AI models. It was like having a conversation with someone who couldn't stop hedging their bets. Frustrating, right? With GPT-5.3, I've finally found a way to get clear answers without all that noise. This version is noticeably better at understanding user intent, which makes interactions more precise and direct. But it's not all smooth sailing. When you configure a model like this, you need to know its contextual limits so you don't get burned by poor performance, which is why I always test the use cases where precision is crucial. If you want to get the most out of GPT-5.3, read on: I'll share my tips for avoiding the pitfalls and maximizing the impact of your AI interactions. It's a real breakthrough, but as always, knowing how to handle it is key.

Understanding Overcaveating in AI Models

Overcaveating is like having a conversation where every statement you make is second-guessed with unnecessary caution. In AI, this means the model adds caveats where none are needed, often misunderstanding the user's intent. I've experienced this firsthand with older models, and it can be quite frustrating. Imagine asking for help with archery physics and getting a safety lecture instead of the trajectory formula you need.

With the GPT-5.3 model, there's a marked improvement. The AI can banter and interpret intentions more naturally, almost like chatting with a friend. But remember, striking the balance between necessary caution and excessive confidence is tricky. I've learned (sometimes the hard way) not to take every answer at face value, especially with older models.

"Overcaveating is like trying to run with weights on your ankles: it slows you down more than it helps."

- Overcaveating harms efficiency and user trust.

- GPT-5.3 improves contextual interpretation.

- Balance between caution and confidence is essential.
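To make "overcaveating" a little more concrete, here is a minimal sketch of how one might score a response for hedging. It is not how GPT-5.3 or any vendor measures this. The phrase list `CAVEAT_PATTERNS` and the helper names `split_sentences` and `caveat_ratio` are my own illustrative assumptions, and the sentence splitter is deliberately naive.

```python
import re

# Hypothetical list of hedging phrases; tune it for your own domain.
CAVEAT_PATTERNS = [
    r"\bplease consult\b",
    r"\bi(?: am|'m) not an? (?:expert|professional)\b",
    r"\bit(?: is|'s) important to note\b",
    r"\bplease be (?:careful|cautious)\b",
    r"\bfor safety reasons\b",
    r"\bi cannot guarantee\b",
]


def split_sentences(text: str) -> list[str]:
    """Naive splitter: breaks after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def caveat_ratio(text: str) -> float:
    """Return the fraction of sentences matching at least one caveat pattern."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    flagged = sum(
        1
        for sentence in sentences
        if any(re.search(p, sentence, flags=re.IGNORECASE) for p in CAVEAT_PATTERNS)
    )
    return flagged / len(sentences)
```

A ratio near zero suggests a direct answer, while a high ratio on a harmless question (like the archery example above) is a hint that the model is hedging more than it needs to. Keyword matching like this is crude, so treat the number as a rough signal rather than a verdict.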

Comparing GPT-5.3 to Older Models

When comparing GPT-5.3 to its predecessors, it's like moving from a trainee to a seasoned expert. The improvements are evident, especially in handling humor. Previously, joking with AI was like trying to entertain a robot programmed never to laugh. Now, it understands context and responds appropriately.

These enhancements are not just for show. They translate into better user engagement, which is a game changer in many scenarios. Technically, the AI is more adept at avoiding overcaveating, thanks to a finer grasp of context.

- Improved handling of humor and jokes.

- Increased user engagement.

- Reduced overcaveating through better contextual understanding.

Handling Technical Queries with Precision

I've had the chance to use GPT-5.3 for complex technical queries, and the difference is noticeable. For example, questions about fluid dynamics or code optimization now get answered without the unnecessary caveats that bogged down past interactions.

Precision in technical interactions is crucial, especially in niche fields. With less overcaveating, the AI can provide more direct and useful responses, which has a tangible impact on daily workflows.

- Precise handling of technical questions.

- More direct responses due to reduced overcaveating.

- Positive impact on daily workflows.
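Since I keep insisting on testing the use cases where precision is crucial, here is a rough sketch of what such a check could look like. Everything in it is an assumption for illustration: `PrecisionCase`, `run_cases`, and `fake_model` are hypothetical names, and `fake_model` is only a stand-in so the example runs offline. In practice you would pass in a function that calls whichever model client you actually use.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PrecisionCase:
    prompt: str
    must_contain: list[str]      # facts the answer needs to include
    must_not_contain: list[str]  # hedging phrases treated as unnecessary here


def run_cases(ask: Callable[[str], str], cases: list[PrecisionCase]) -> list[str]:
    """Return human-readable failures; an empty list means every case passed."""
    failures = []
    for case in cases:
        answer = ask(case.prompt).lower()
        for needle in case.must_contain:
            if needle.lower() not in answer:
                failures.append(f"{case.prompt!r}: missing {needle!r}")
        for phrase in case.must_not_contain:
            if phrase.lower() in answer:
                failures.append(f"{case.prompt!r}: unwanted caveat {phrase!r}")
    return failures


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call (assumption, not a real API)."""
    return "Ignoring drag, the range is R = v^2 * sin(2*theta) / g."


CASES = [
    PrecisionCase(
        prompt="What is the range of an arrow launched at angle theta?",
        must_contain=["sin(2*theta)"],
        must_not_contain=["consult a professional"],
    )
]

if __name__ == "__main__":
    for failure in run_cases(fake_model, CASES):
        print(failure)
```

Substring checks are blunt, but a handful of cases like this can catch the two failure modes that matter most here: the answer you needed is missing, or it arrives buried under caveats you did not ask for.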

Model Safety and Precision in GPT-5.3

Safety in the GPT-5.3 model has been enhanced without increasing overcaveating. It's like fine-tuning a car's brakes to be more effective without unnecessarily slowing down the drive. I've observed that the model can now assess intentions without falling into paranoia.

There's always a trade-off between safety and precision. But with GPT-5.3, this balance is better managed, translating into a positive business impact, especially in sectors where AI needs to be both safe and responsive.

- Enhanced safety without sacrificing precision.

- Balance between safety and user intent.

- Positive business impact.

Practical Use Cases of AI Interaction

With GPT-5.3, practical use cases are expanding. Whether in healthcare, finance, or even content creation, AI proves to be a major asset. In my experience, I've used it to optimize customer support processes, reducing response time while increasing customer satisfaction.

However, like any tool, there are limitations and trade-offs. For instance, in highly specific situations, the AI might need more information to be fully effective.

- Expanding use cases across various sectors.

- Process optimization through AI.

- Limitations and trade-offs in specific cases.

In conclusion, the GPT-5.3 model marks a significant step forward in AI interactions, particularly by reducing overcaveating and enhancing contextual precision. It's a powerful tool that, when used correctly, can transform daily processes.

With GPT-5.3, there's a real leap forward on several fronts. First, I notice a significant reduction in overcaveating during interactions, without any loss of safety. Next, the model understands user intent better, which makes exchanges smoother and more relevant. Finally, these improvements add up to more efficient and trustworthy AI communication. It's a game changer, but watch out for the limits: always validate outputs in critical use cases. I encourage you to integrate GPT-5.3 into your workflows, see the difference for yourself, and share your experiences. For more details, check out the video "Reducing Overcaveating in GPT-5.3 Instant" on YouTube.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

AI Optimization: Recursive Self-Improvement vs Fine-Tuning

I remember the first time I ditched fine-tuning for Poetic's recursive self-improvement. It was like trading a bicycle for a jet. The efficiency was mind-blowing, and the cost savings were immediate. In this article, I'll walk you through how this approach can change your AI game. We're diving into cost-effective AI model optimization, Poetic's standout benchmark performance, and the journey from mobile apps to AI. If you're tired of traditional fine-tuning, this is the read you need.

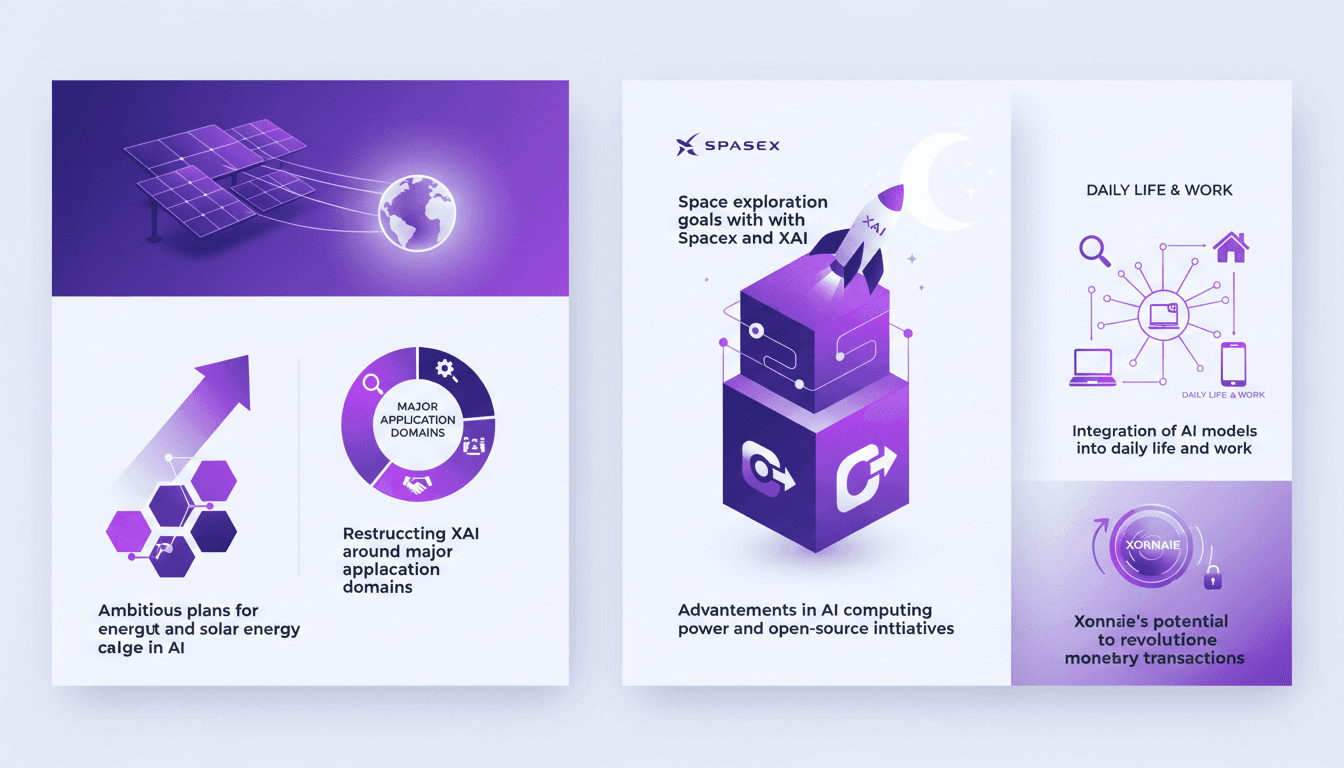

XAI's Ambitious Solar and AI Plans

I was at the XAI 2026 conference, and let me tell you, Elon Musk didn't hold back. From solar energy capture to AI advancements, it was a glimpse into the future. I'm connecting the dots on how we're going to tackle these ambitious plans. XAI, amidst its strategic restructuring, is pushing the boundaries of AI and energy. We're talking astronomical computing power, reorganizing around major application domains, and integrating AI models into our daily lives. Not to mention Xonnaie, potentially revolutionizing monetary transactions. Let's dive into this world-shaking conference.

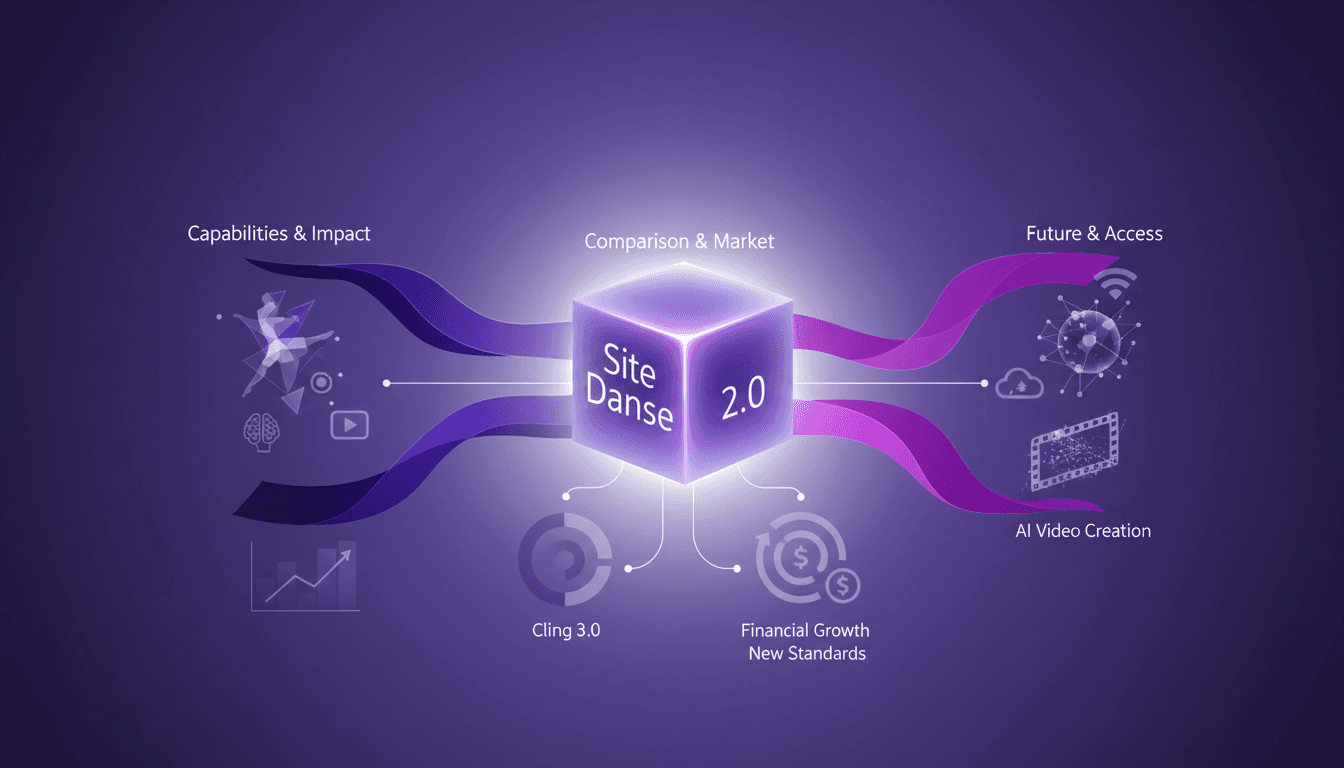

Seedance AI 2.0: Revolutionizing Video Creation

I dove into Seedance AI 2.0 expecting just another AI tool, but what I found was a game changer. This isn't just tech hype—it's a real shift in video creation. With Seedance AI 2.0, we're witnessing a revolution in leveraging AI for video content. It's not just about flashy features; it's about tangible impacts on production workflows. Compared with Cling 3.0 and other models, Seedance AI 2.0 stands out with its technical capabilities and market impact. Chinese companies saw their stocks rise by 10 to 20% in a single trading day. And that native 2048 x 1080 resolution, it's a game changer! I'm sharing how I've integrated it into my workflow and the financial implications to consider. Get ready to see how this technology might redefine the future of video content creation.

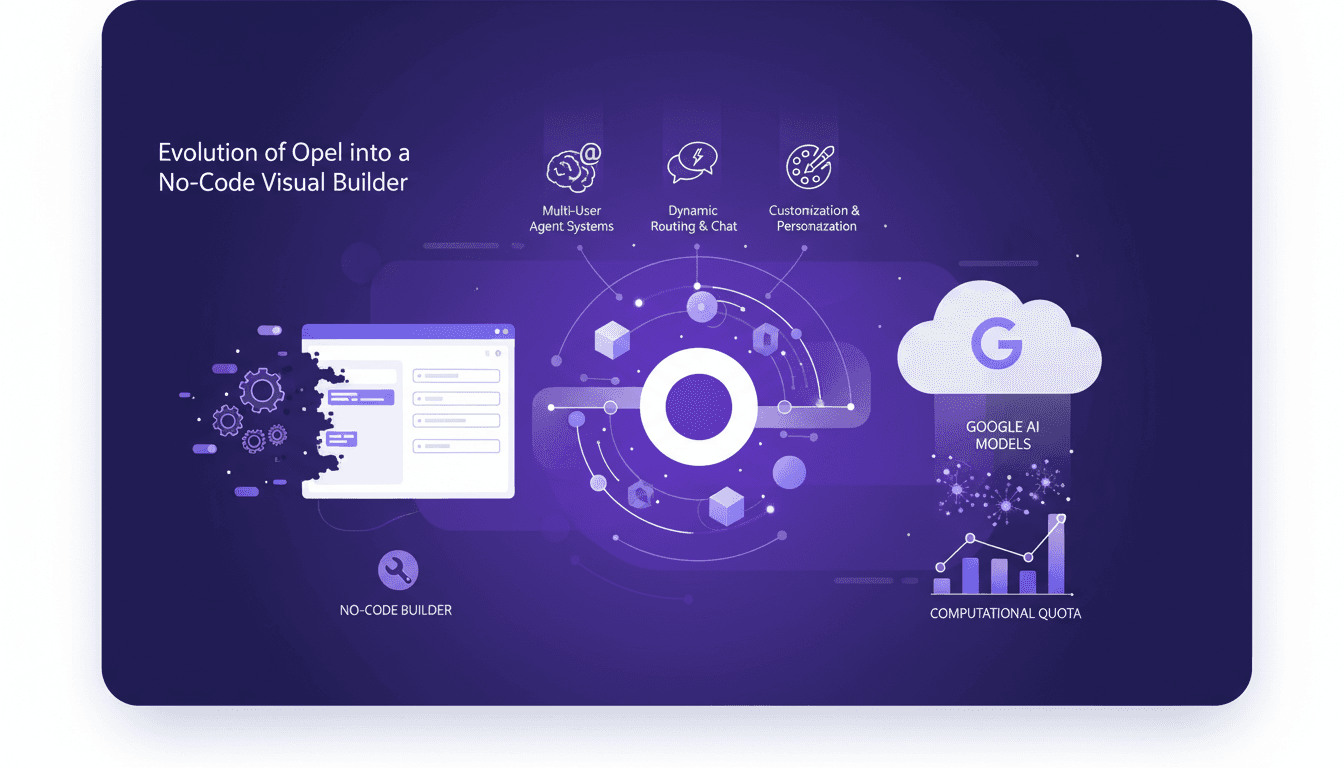

Opel's New Features: Generative AI in Action

I remember when I first started with Opel, it was a game changer for building no-code solutions. Now, with Google's latest upgrades, we're talking about a whole new level of efficiency and power. Let me walk you through how I leverage these tools in my daily workflow. Opel has evolved from a simple tool into a robust no-code visual builder, thanks to Google's integration of generative AI models. This article dives into the new features, customization options, and practical applications. Between dynamic routing, interactive chats, and multi-user systems, Google is really pushing the limits. But watch out for those quotas, they can quickly become a headache.

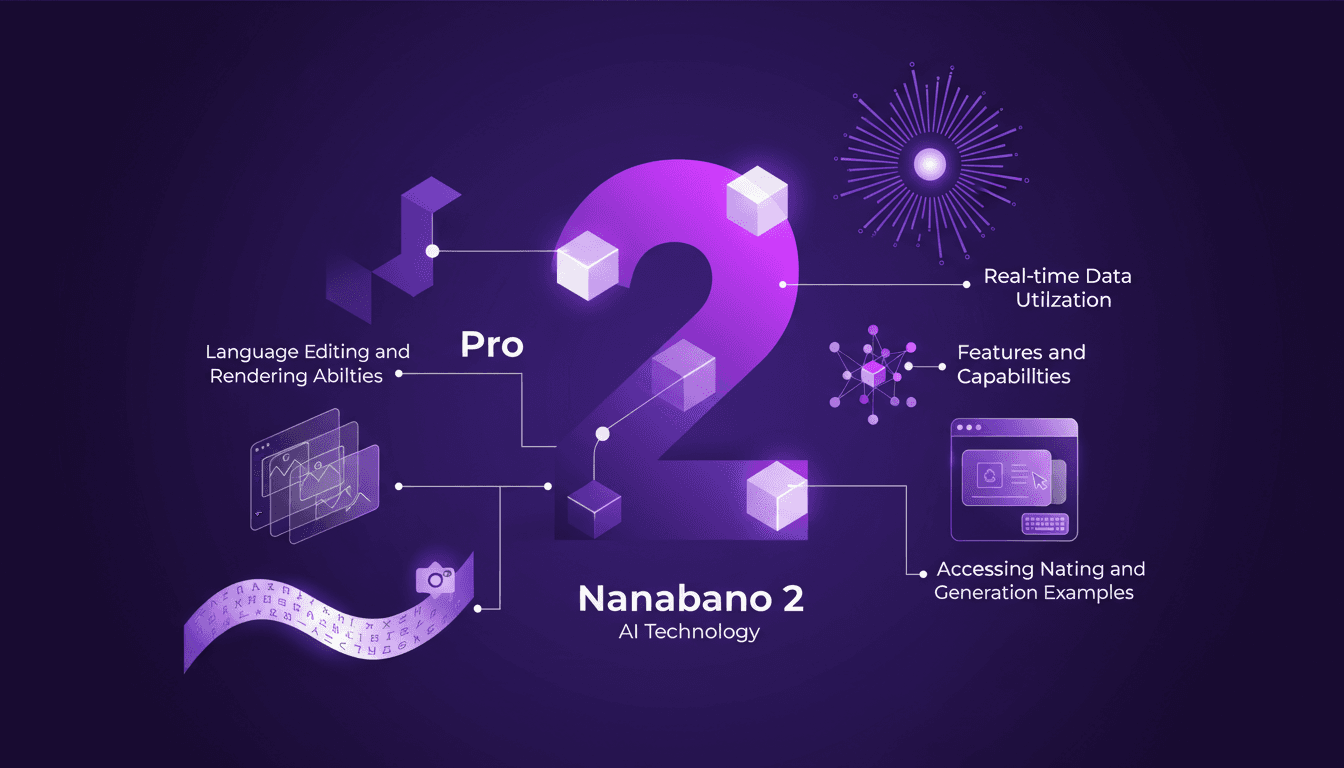

Nanabano 2: Setup, Comparison, and Tips

I dove into Google Nanabano 2 expecting just another AI tool, but what I found was a real game-changer for image generation. Let me walk you through how I set it up and what makes it tick. With high visual fidelity and multilingual support, Nanabano 2 holds a lot of promise, but how does it perform in the real world? I compared it with the Pro version, explored its features, and tested its rendering and image generation capabilities. In just 12 minutes, I'll show you how to access Nanabano 2, leverage its real-time translation capabilities, and why it might just become your favorite new tool.