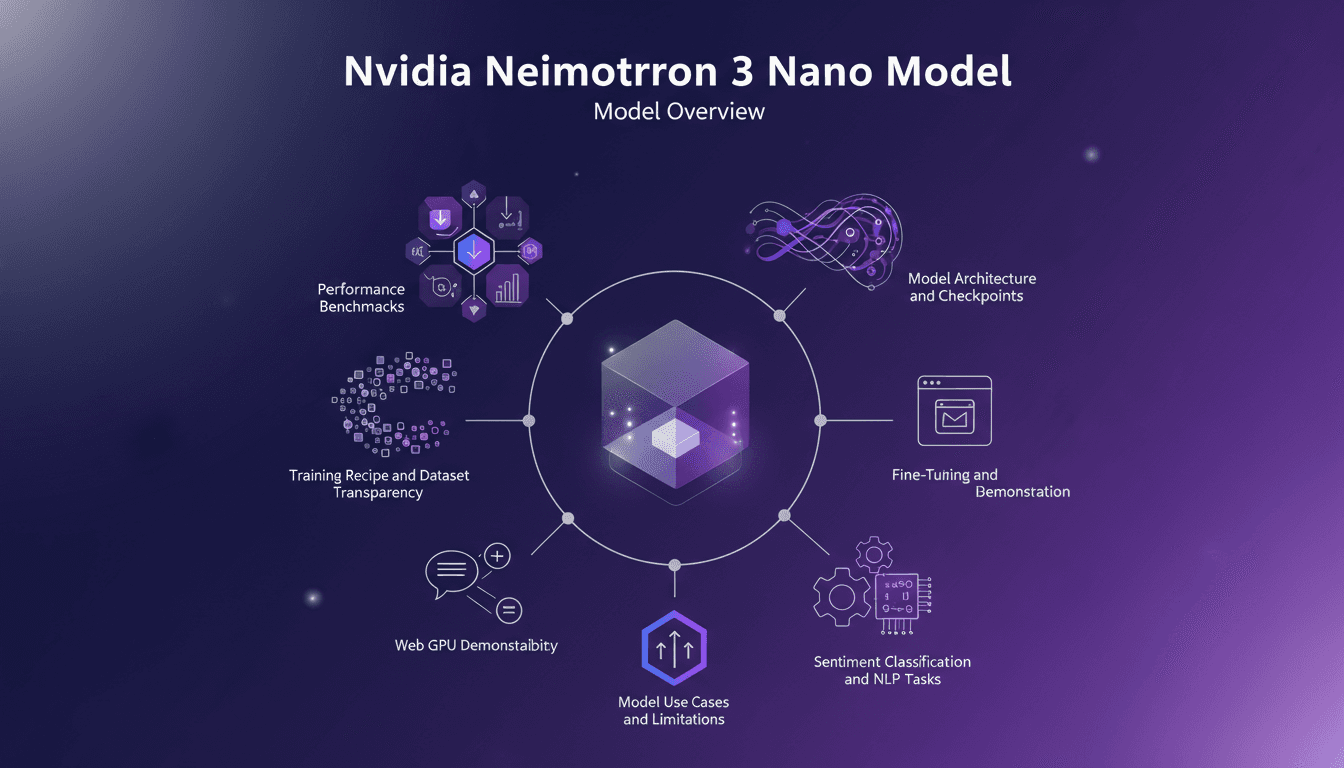

Nvidia Nemotron 3 Nano: LLM for the Edge Explained

I got my hands on the Nvidia Nemotron 3 Nano, and it's a game changer for edge computing. With 4 billion parameters, this model is set to push the boundaries of edge AI. But beyond the hype, how does it actually perform? I'll take you through setting it up, what worked, and what to watch out for. We'll look at the model architecture and performance benchmarks, and I'll share insights on use cases and limitations. Get ready to dive into the world of the Nemotron 3 Nano and see how it stacks up against models like Qwen 3.5, which it beats by 10 percentage points on IFBench.

I got my hands on the Nvidia Nemotron 3 Nano, and trust me, it's a game changer for edge computing. Picture a model with 4 billion parameters, crafted to push AI beyond current boundaries. But before we get too excited, let's see what this really means in practice. First, I set it up (not without a few hitches, I'll admit) and tested its performance, stacking it against models like Qwen 3.5. Spoiler: it outperforms Qwen by 10 percentage points on IFBench! I'm going to walk you through the model's architecture, checkpoints, and how it handles sentiment classification and other NLP tasks. But beware, there are limitations you need to know about. Get ready, because in this video, we're diving into everything the Nemotron 3 Nano can do, and how you can integrate it into your own workflow.

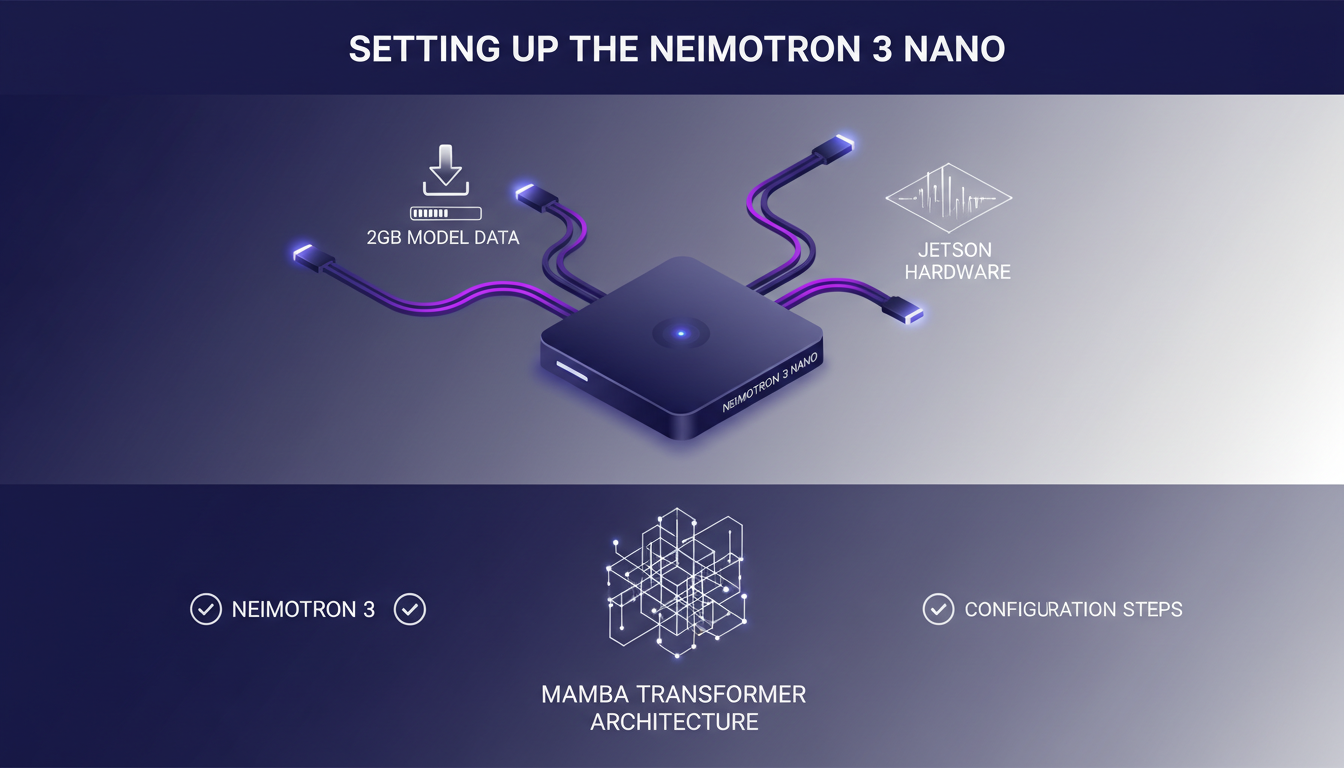

Setting Up the Nemotron 3 Nano

Setting up the Nemotron 3 Nano is akin to connecting a rocket to its launch pad. It all starts with connecting to the Jetson hardware. If you've done this before, it's a breeze, but watch out for compatibility issues. I've had my share of headaches with driver versions that don't play nice with the hardware.

Next, I downloaded 2 GB of model data for the WebGPU demo. Make sure your bandwidth can handle it, or you'll be grumbling. Once that's done, configuring the hybrid Mamba-Transformer architecture is key. I'll walk you through the crucial steps.
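
To get a feel for what that 2 GB download means on your connection, here is a back-of-the-envelope sketch. The function name `download_seconds` and the connection speeds are illustrative assumptions, and the estimate ignores protocol overhead and server-side throttling.

```python
def download_seconds(size_gb, speed_mbps):
    """Estimate transfer time for size_gb gigabytes at speed_mbps megabits per second."""
    if speed_mbps <= 0:
        raise ValueError("speed_mbps must be positive")
    megabits = size_gb * 8 * 1000  # 1 GB = 8,000 megabits in decimal units
    return megabits / speed_mbps


# Hypothetical connection speeds, chosen only for illustration.
for speed in (10, 100, 1000):
    print(f"{speed:>4} Mbps: about {download_seconds(2, speed):.0f} s")
```

At 100 Mbps the 2 GB payload takes roughly 160 seconds; on a 10 Mbps link, plan for closer to half an hour.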

Quantization is crucial for performance. It stores the model's weights at lower numeric precision, which cuts memory usage, and in my setup it did so without noticeably sacrificing accuracy. Don't underestimate this aspect, or you'll end up with sluggish performance.
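
To make the idea concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python, plus a rough weight-memory estimate. This is a textbook simplification and an assumption on my part: it is not necessarily the scheme used for the Nemotron checkpoints or the demo, and the weight values are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


def weight_memory_gb(num_params, bits_per_weight):
    """Rough size of the weights alone, ignoring activations and runtime overhead."""
    return num_params * bits_per_weight / 8 / 1e9


# Made-up weights, purely for illustration.
weights = [0.12, -0.53, 0.98, -1.27, 0.0]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized:", quantized)
print("max round-trip error:", max_error)
for bits in (16, 8, 4):
    print(f"4B params at {bits}-bit: {weight_memory_gb(4_000_000_000, bits):.1f} GB")
```

The round-trip error stays within half a quantization step, while the weight footprint for 4 billion parameters drops from about 8 GB at 16-bit to about 2 GB at 4-bit.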

Model Architecture and Performance

The Nemotron 3 Nano outperforms Qwen 3.5 by 10 percentage points on IFBench, and that really matters. With its 4 billion parameters, it's a powerhouse, compared to the 3.54 billion of Qwen 3.5. But remember, more parameters also mean more complexity.
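
A quick note on units: a gap in percentage points is not the same as a relative improvement in percent. The sketch below shows the difference. The two scores are hypothetical placeholders, not the actual IFBench results, which I'm not reproducing here.

```python
def percentage_point_gap(score_a, score_b):
    """Absolute difference between two benchmark scores given in percent."""
    return score_a - score_b


def relative_gain_percent(score_a, score_b):
    """Improvement of score_a over score_b, relative to score_b."""
    return (score_a - score_b) / score_b * 100


# Hypothetical scores, chosen only to illustrate the arithmetic.
model_a, model_b = 60.0, 50.0
print("gap in percentage points:", percentage_point_gap(model_a, model_b))
print("relative gain in percent:", relative_gain_percent(model_a, model_b))
```

With these placeholder numbers, a 10 percentage point gap corresponds to a 20% relative gain, which is why the two phrasings should not be used interchangeably.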

Distillation and reinforcement learning are the secret sauce behind its performance. Essentially, the knowledge of a larger model is distilled into this smaller one, and reinforcement learning is then used to fine-tune it. But this has its limits; more complexity can mean longer processing times.
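
For readers new to distillation, here is a minimal sketch of the classic soft-target loss, where a student is pushed to match a teacher's temperature-softened output distribution. This is the generic textbook formulation, assumed here for illustration; Nvidia's actual training objective may differ, and the logits are made up.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with temperature scaling."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


# Made-up logits over three classes.
teacher = [2.0, 1.0, 0.1]
print("untrained student:", distillation_loss(teacher, [0.5, 0.5, 0.5]))
print("matching student: ", distillation_loss(teacher, teacher))
```

The loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, which is what gives the smaller model a richer training signal than hard labels alone.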

Training Recipe and Dataset Transparency

Nvidia's transparency about its training recipe is refreshing. You can see exactly which datasets were used, which is rare these days. That transparency makes it easier to understand the results and to learn from potential errors.

Dataset choices significantly impact results. I navigated these decisions by focusing on diverse datasets to avoid biases. Reinforcement learning also plays a role here, significantly influencing training outcomes.

WebGPU and Hardware Compatibility

Running the model on WebGPU was surprisingly smooth. I'll walk you through the process. Jetson hardware compatibility is crucial: I've tested various setups, and some turned out to be finicky.

Fine-tuning can be tricky. I had to adjust certain parameters to achieve optimal performance. But beware, not all hardware setups are created equal. Be vigilant about your hardware specifications.

Practical Use Cases and Limitations

Sentiment classification was a breeze with this model. I'll show you how I set it up. NLP tasks are where the Nemotron shines. I've tested specific tasks and the results have been impressive.
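
Here is a minimal sketch of how a prompt-based sentiment classifier can be wired around a small LLM. It is an illustration, not necessarily the setup from the video: the label set, the prompt wording, and `fake_generate` (a stand-in for whatever text-generation call your runtime exposes) are all assumptions.

```python
LABELS = ("positive", "negative", "neutral")


def build_prompt(text):
    """Wrap the input in an instruction that constrains the answer to LABELS."""
    options = ", ".join(LABELS)
    return (
        f"Classify the sentiment of the following text as one of: {options}.\n"
        f"Text: {text}\n"
        "Sentiment:"
    )


def parse_label(model_output):
    """Map free-form model output to a known label, or 'unknown'."""
    lowered = model_output.strip().lower()
    for label in LABELS:
        if lowered.startswith(label):
            return label
    return "unknown"


def classify(text, generate):
    """Run one classification; `generate` is any callable from prompt to text."""
    return parse_label(generate(build_prompt(text)))


def fake_generate(prompt):
    """Hypothetical stand-in for a real model call, so the sketch runs offline."""
    return " Positive."


print(classify("The setup was smoother than I expected.", fake_generate))
```

Keeping the parser strict, with an explicit `unknown` fallback, matters on small models, which sometimes answer with a full sentence instead of a bare label.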

But don't overestimate its capabilities. There are limitations to consider. Efficiency and cost are always concerns, and I've optimized both through quantization tweaks and judicious resource use.

- Hardware compatibility is essential for optimal performance.

- Beware of the pitfalls of biased datasets.

- Always optimize for cost and efficiency.

Diving into the Nvidia Nemotron 3 Nano has been quite the ride. It's a powerhouse for edge computing, but not without its quirks. Here's what stood out to me:

- With 4 billion parameters, the Nemotron 3 Nano outshines Qwen 3.5's 3.54 billion, scoring 10 percentage points higher on IFBench.

- Its architecture and checkpoints offer serious leverage, but getting the setup right can be a bit tricky.

- When it comes to dataset transparency and training recipes, it's a real game changer, but don't expect a plug-and-play solution.

For those ready to explore further, I suggest you test the Nemotron 3 Nano in your own setup. The results can be transformative, but patience is key. Ready to dive deeper? Check out the full video here: [YouTube link]. You'll see firsthand how it can impact your projects.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

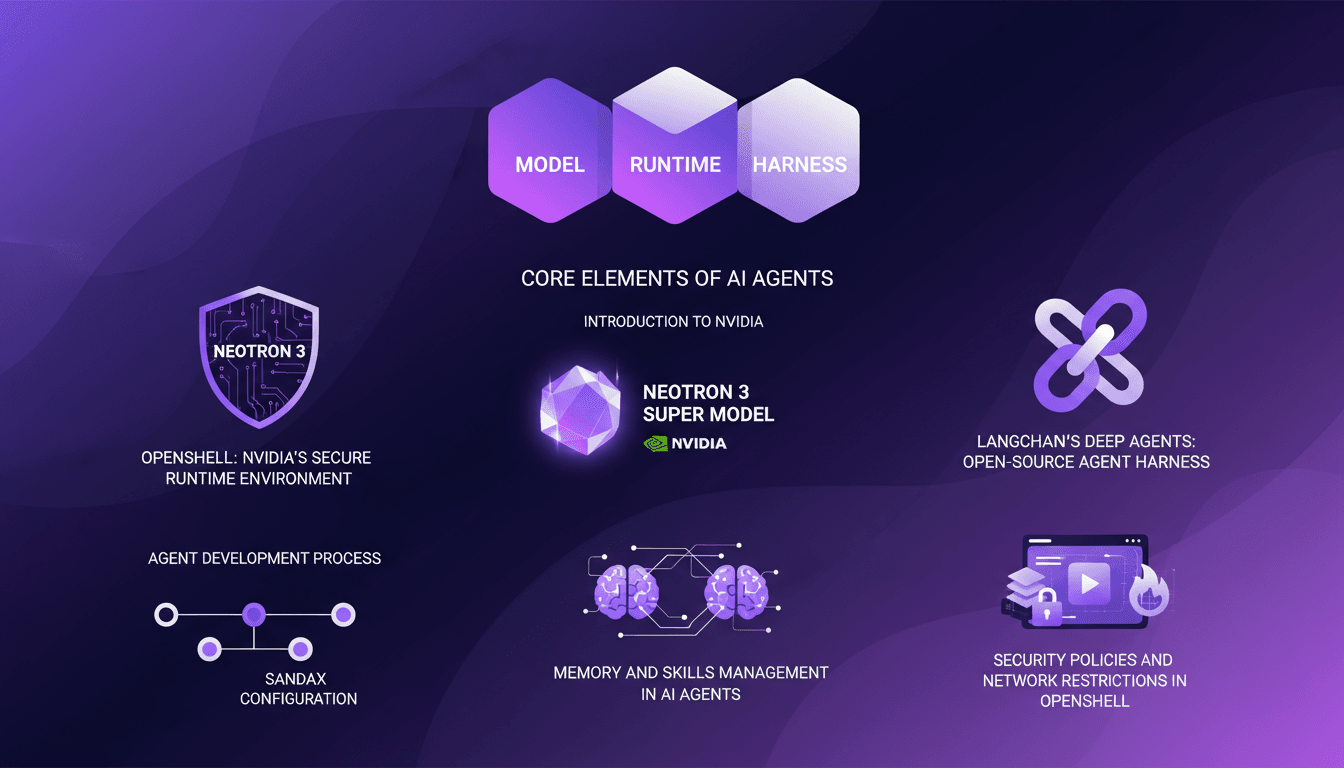

LangChain & Nvidia: Create Your AI Agent

I dove headfirst into building AI agents using LangChain and Nvidia's latest tech, and it's been a game changer. First, I connected my Neotron 3 model, then secured the runtime with OpenShell. LangChain's Deep Agents helped me craft an open-source harness, and juggling the agent's memory and skills was both complex and fascinating. But watch out, the security policies and network restrictions in OpenShell can be tricky. If you're looking to build your own AI agent, I break down how I orchestrated it all.

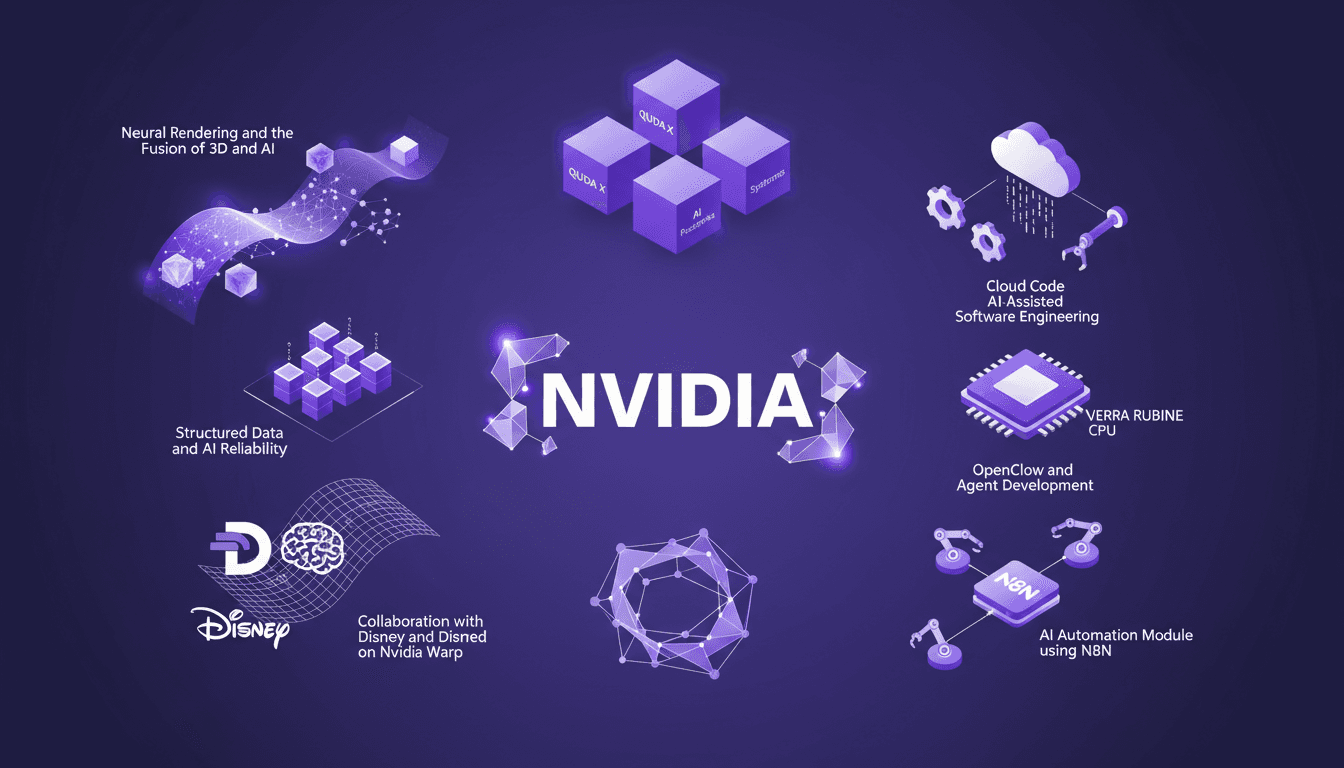

NVIDIA GTC 2026: Unveiling New Platforms

I've attended countless conferences, but NVIDIA's GTC 2026 was a real game changer. They unveiled platforms that are set to redefine AI and computing. Picture CPUs that transform how we code, neural rendering that fuses 3D and AI, and collaborations with Disney and Deep Mind on Nvidia Warp. It's massive, and the impact on us builders is direct. We're talking about QUDA X, AI-assisted software engineering, and even 45° water-cooled systems. We've got years of work ahead, but also incredible opportunities. Let's dive into what was unveiled.

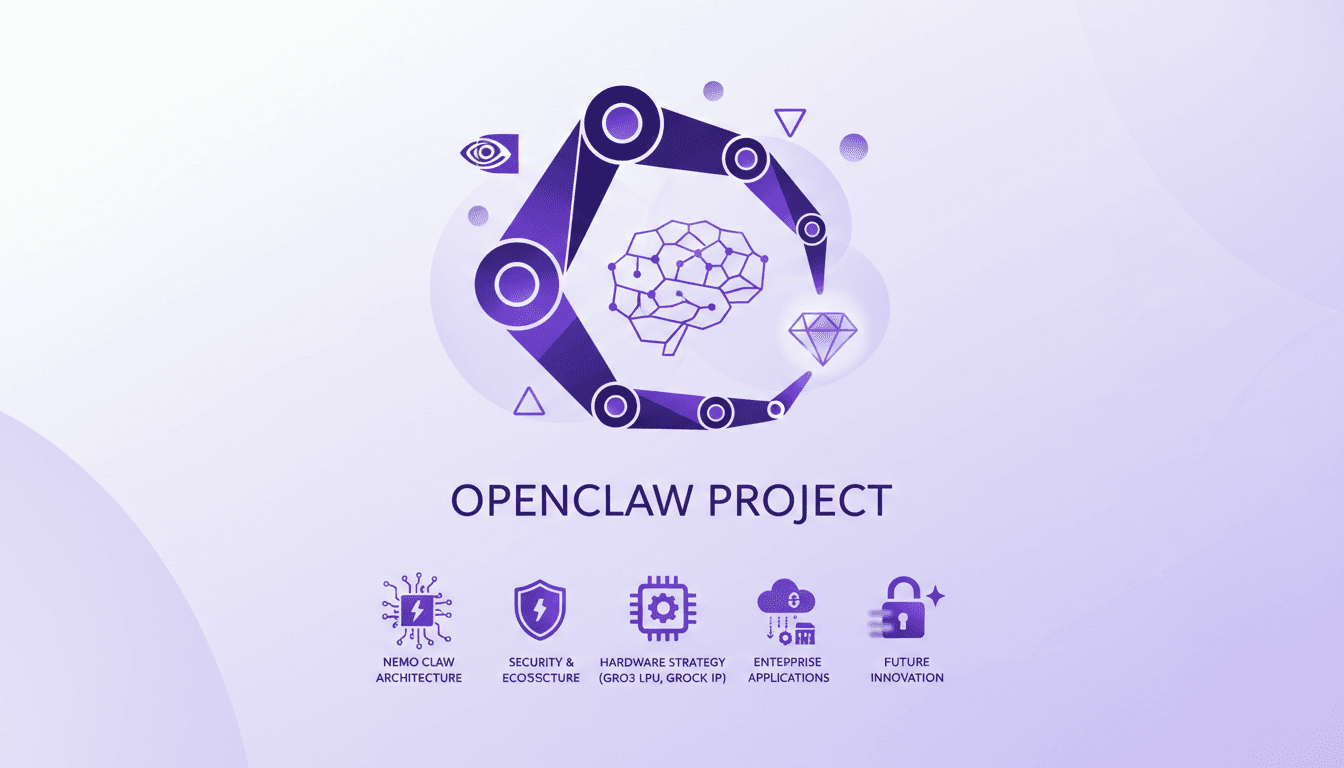

Nvidia and OpenClaw: Integrating Nemo Claw

I dove into the Nvidia GTC 2026 keynote expecting the usual tech updates, but stumbled upon a real game-changer: Nvidia's involvement in the OpenClaw project with Nemo Claw. Nvidia isn't just tagging along; they're reshaping the landscape. OpenClaw started from scratch and already boasts over 50 variations on GitHub. With Nemo Claw's entry, security, hardware integration, and enterprise applications are being reinvented. Nvidia's hardware strategy is no joke, especially with Gro 3 LPU chips and Grock IP integration. But watch out—data privacy remains a hot topic in this space.

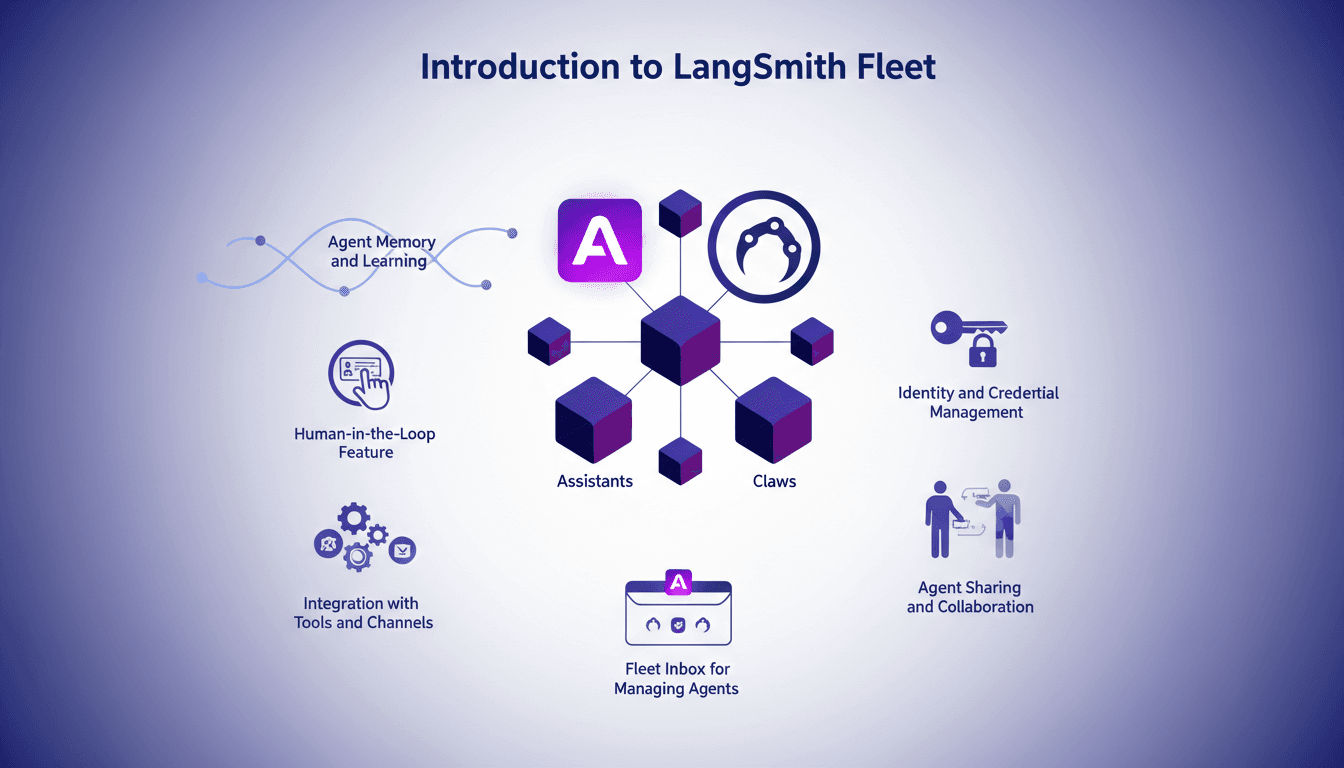

LangSmith Fleet: Quick and Efficient Start

I jumped into LangSmith Fleet thinking it was just another tool. But once I integrated it with my workflow, I realized it was a game changer. Let me walk you through how I set it up, the pitfalls I encountered, and the efficiencies I gained. LangSmith Fleet offers a robust platform for managing AI agents, whether you're dealing with assistants or claws. Understanding agent memory, leveraging human-in-the-loop features, integrating with tools and channels... This isn't theoretical; it's practical with a direct impact on your daily workflow.

Crafting Effective Soundscapes in Videos

I still remember the first time I realized the power of sound in a video. It was a simple project, but the moment I added background music, everything transformed. That's when I knew audio wasn't just an accessory; it was a game changer. Today, in the media landscape, sound plays a pivotal role in shaping viewer perception. Whether through subtle theme repetition or strategic use of music, audio elements can make or break your content. In this video, I share how I crafted effective soundscapes and the impact of auditory repetition on atmosphere creation.