LangChain & Nvidia: Create Your AI Agent

I dove headfirst into building AI agents using LangChain and Nvidia's latest tech, and it's been a game changer. First, I connected my Neotron 3 model, then secured the runtime with OpenShell. LangChain's Deep Agents helped me craft an open-source harness, and juggling the agent's memory and skills was both complex and fascinating. But watch out, the security policies and network restrictions in OpenShell can be tricky. If you're looking to build your own AI agent, I break down how I orchestrated it all.

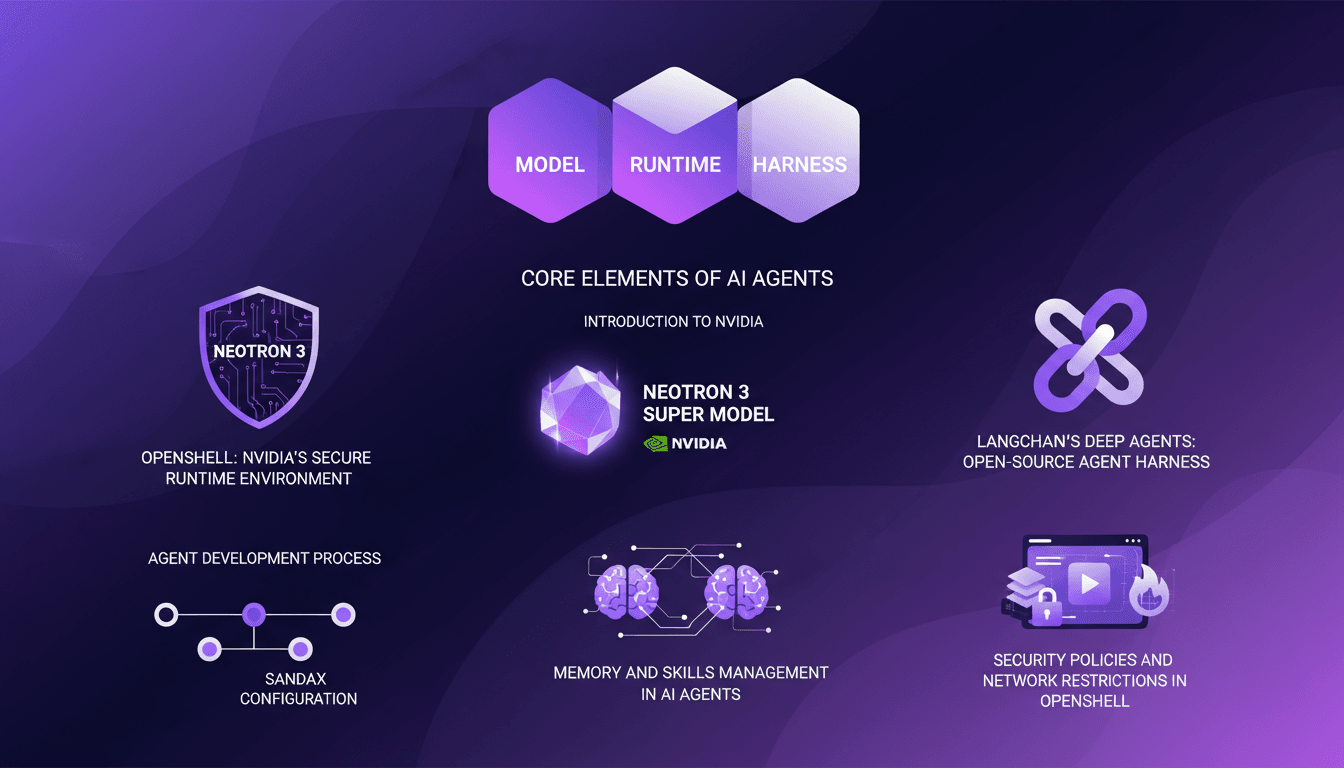

I dove into building AI agents with LangChain and Nvidia's latest tech, and it's been a game changer. Picture this: three core elements—a model, a runtime, and a harness. First, I connected Nvidia's Neotron 3, a super model that outpaces GPTOS in accuracy and speed. Then, I secured the runtime with OpenShell, ensuring my data stayed protected. LangChain's Deep Agents provided the open-source harness to orchestrate it all. But watch out, managing security policies and network restrictions in OpenShell requires attention. (Trust me, I got burned more than once.) Now, the agent's memory and skills are set and ready to deploy. In this tutorial, I'll show you how I implemented this ambitious setup.

Understanding AI Agent Architecture

In the realm of AI agents, the structure revolves around three core components: the model, the runtime, and the harness. These components form the backbone of agent efficiency for systems like Claude Code, Manis, and OpenClaw. The performance of an agent is directly tied to how these elements are orchestrated. For instance, I've found that Neotron 3, Nvidia's latest model, outperforms GPTOS in both accuracy and speed. Choosing components is a balancing act, especially when aiming for maximum efficiency without skyrocketing costs.

Orchestrating these components requires a solid understanding of their respective roles. The model serves as the central intelligence, the runtime executes tasks, and the harness links the two. But watch out, each choice involves trade-offs. For instance, a more performant model like Neotron 3 might demand greater resources, which could be a constraint for budget-limited projects.

Getting Started with Nvidia's Neotron 3

Neotron 3 represents a significant leap in AI model technology. Recently released by Nvidia, this super model offers precision and speed that surpass GPTOS. Integrating it into my workflow improved performance dramatically. However, integrating Neotron 3 isn't without its challenges. For example, I encountered compatibility issues with existing environments, requiring configuration adjustments.

To start, ensure compatibility of APIs and adjust runtime parameters. Once these steps are complete, Neotron 3 becomes a powerful asset. But don't underestimate the time needed for these initial adjustments. Poor preparation can lead to suboptimal performance or service interruptions.

Harnessing LangChain's Deep Agents

LangChain offers an open-source harness that simplifies AI agent integration. I found this tool particularly useful for context engineering, a crucial step in enhancing agent performance. By setting up the development environment and testing sandbox, I managed to balance complexity and performance, which is key to avoiding unnecessary overhead.

The sandbox is your safe testing ground. I typically start by configuring my environment variables before testing agents within the sandbox. This allows for simulating real-world scenarios without risking the production system. However, be careful not to overcomplicate the system with excessive configurations that could slow down the process.

Executing and Managing AI Agent Memory

Memory management is a crucial aspect of executing AI agents. I've often used a middleware for memory handling, allowing for efficient data management in the background. Integrating a composite backend also facilitates large-scale data management, but watch out for common pitfalls like memory overload, which can slow down the agent.

It's essential to clearly define memory limits for each agent to avoid cross-thread and cross-sandbox conflicts. With the right setup, agents can then execute commands, write, and read files, enhancing the agent's learning capabilities.

Security and Policy Management with OpenShell

Nvidia's OpenShell provides a secure runtime environment for AI agents. By implementing robust security policies, agents can be executed with different permission sets, on GPU-accelerated environments for instance. However, constantly balancing security and functionality is crucial to avoid hindering regular operations.

The lessons learned from my initial implementations taught me that managing network restrictions can significantly impact agent performance. It's vital to regularly review security policies to adapt to new requirements without compromising agent functionality.

- AI agents rely on a model, a runtime, and a harness.

- Neotron 3 offers superior performance over GPTOS.

- LangChain facilitates context engineering with its open-source harness.

- Effective memory management is essential to avoid slowdowns.

- Security and functionality must be balanced in OpenShell.

Building an AI agent with LangChain and Nvidia's tools is all about orchestrating the right mix. First, I make sure to nail the three core components: the model, the runtime, and the harness. Then, I deploy Neotron 3, Nvidia's supermodel that basically puts GPTOS to shame in terms of accuracy and speed. Finally, I wrap it all up in OpenShell, Nvidia’s secure runtime environment. But heads up, each piece has its limits—OpenShell is fantastic for security but can be a bit of a beast to set up.

- Key takeaways:

- 3 core elements: model, runtime, harness.

- Neotron 3: supermodel released a week ago.

- OpenShell: secure runtime environment.

Looking forward, I see these tools as game changers, but you really need to master them to get the most out of them. Ready to build your own AI agent? Dive into the setup with LangChain and Nvidia and transform your workflow. For a deeper dive, I recommend watching the original video—trust me, it's worth it!

Frequently Asked Questions

Thibault Le Balier

Co-fondateur & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

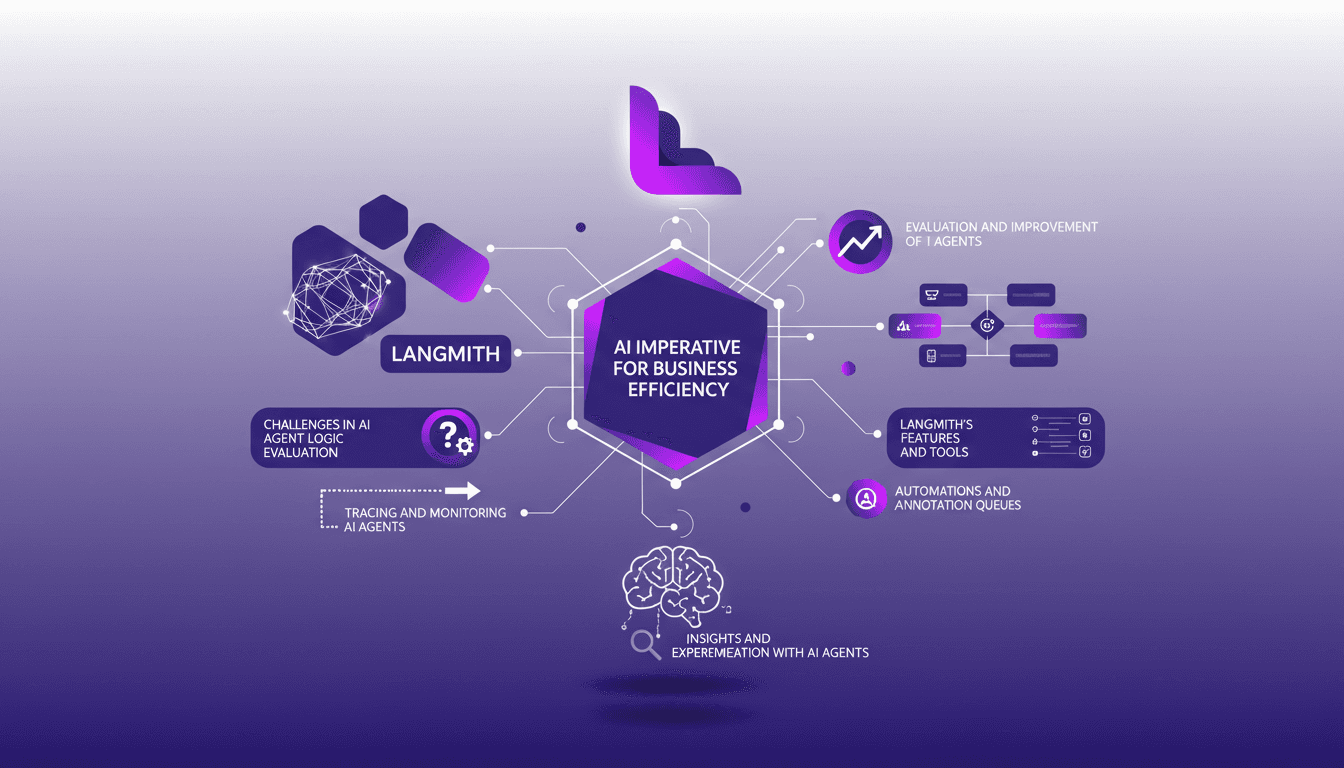

Debugging and Evaluating AI Agents with LangSmith

I've been deep in the trenches with AI agents, and trust me, making them reliable is no small feat. LangSmith has been a real game-changer. It's not just about making them smart; it's about ensuring they actually deliver. First, I connect my agents to LangSmith to trace and evaluate their logic. Then, I ensure they hit that magic feedback score of 8 for helpfulness. LangSmith's tools—like automation and annotation queues—let me fine-tune and ship agents that actually work. But watch out, automation has its limits—don't over-rely on it. Dive in with me as we navigate the challenges, tools, and solutions that make LangSmith an essential ally for AI agents.

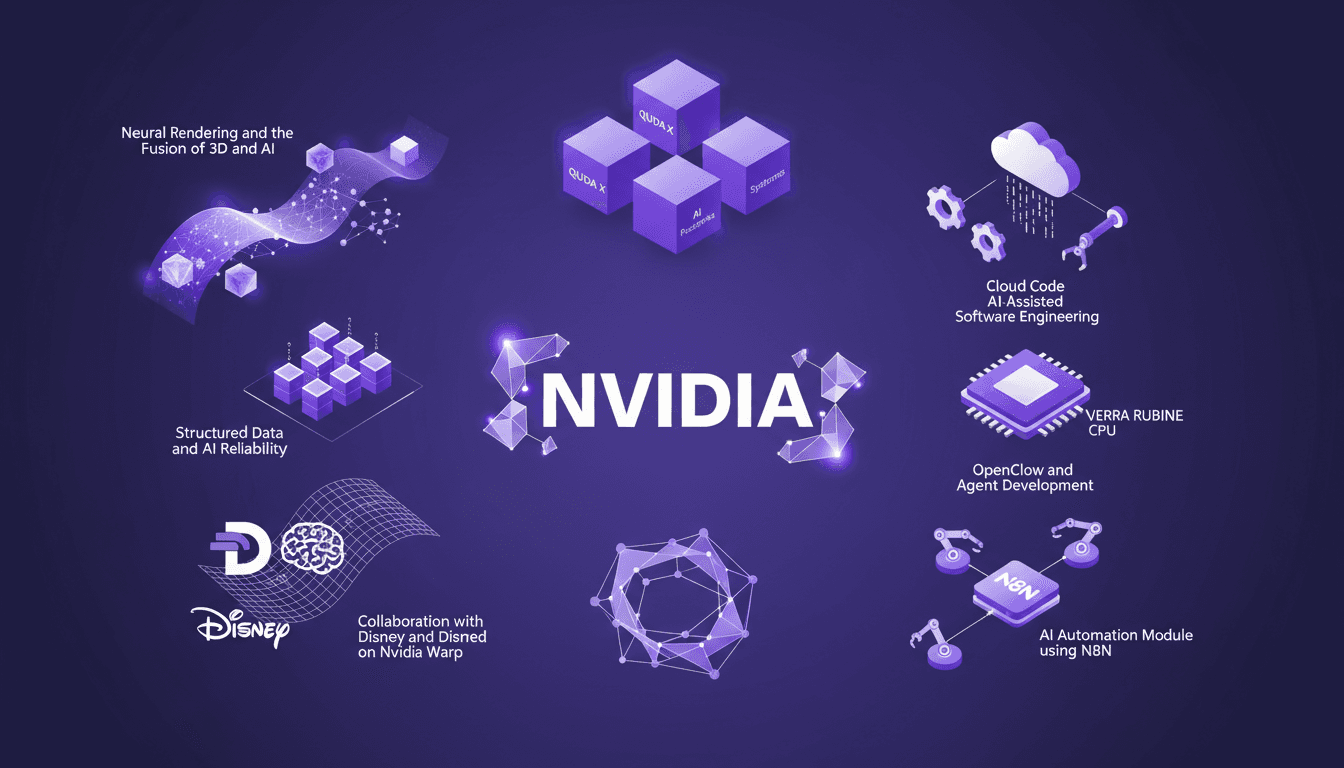

NVIDIA GTC 2026: Unveiling New Platforms

I've attended countless conferences, but NVIDIA's GTC 2026 was a real game changer. They unveiled platforms that are set to redefine AI and computing. Picture CPUs that transform how we code, neural rendering that fuses 3D and AI, and collaborations with Disney and Deep Mind on Nvidia Warp. It's massive, and the impact on us builders is direct. We're talking about QUDA X, AI-assisted software engineering, and even 45° water-cooled systems. We've got years of work ahead, but also incredible opportunities. Let's dive into what was unveiled.

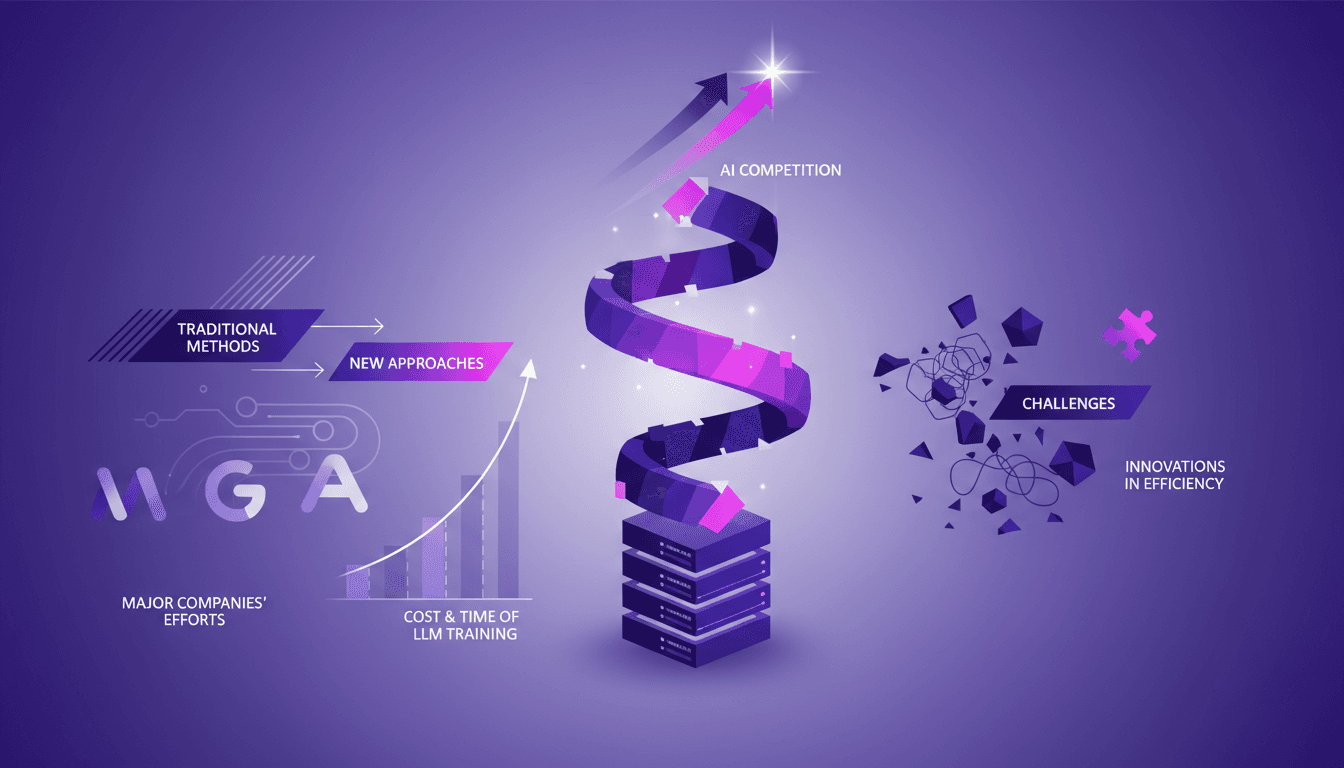

AI Improvement: Cutting Costs and Time

I remember the first time I heard about 'recursive self-improvement' in AI—it sounded like sci-fi. But diving into it, I realized it's the future—if we can manage the costs and time. Training an LLM costs hundreds of millions and takes months. I'm right in the thick of this race to develop faster and cheaper solutions. Let's break down how we're tackling this, the challenges in model training, and why traditional methods just won't cut it anymore. Trust me, this is practical stuff, not corporate fluff.

Aerospace Engineer: My Practical Journey

Ever dreamt of reaching for the stars, literally? I did, and it led me to become an aerospace engineer at NASA. But dreams aren't enough—they need a practical roadmap. In this live stream, I share how I built mine, from investing in rocket companies to developing platforms that disrupt industries. We dive into Vibe coding, the Consult Sphere platform, and how these tools turn aspirations into tangible outcomes. Plus, let's not overlook the importance of community, credibility, and brand value in all of this.

Nvidia and OpenClaw: Integrating Nemo Claw

I dove into the Nvidia GTC 2026 keynote expecting the usual tech updates, but stumbled upon a real game-changer: Nvidia's involvement in the OpenClaw project with Nemo Claw. Nvidia isn't just tagging along; they're reshaping the landscape. OpenClaw started from scratch and already boasts over 50 variations on GitHub. With Nemo Claw's entry, security, hardware integration, and enterprise applications are being reinvented. Nvidia's hardware strategy is no joke, especially with Gro 3 LPU chips and Grock IP integration. But watch out—data privacy remains a hot topic in this space.