Debugging and Evaluating AI Agents with LangSmith

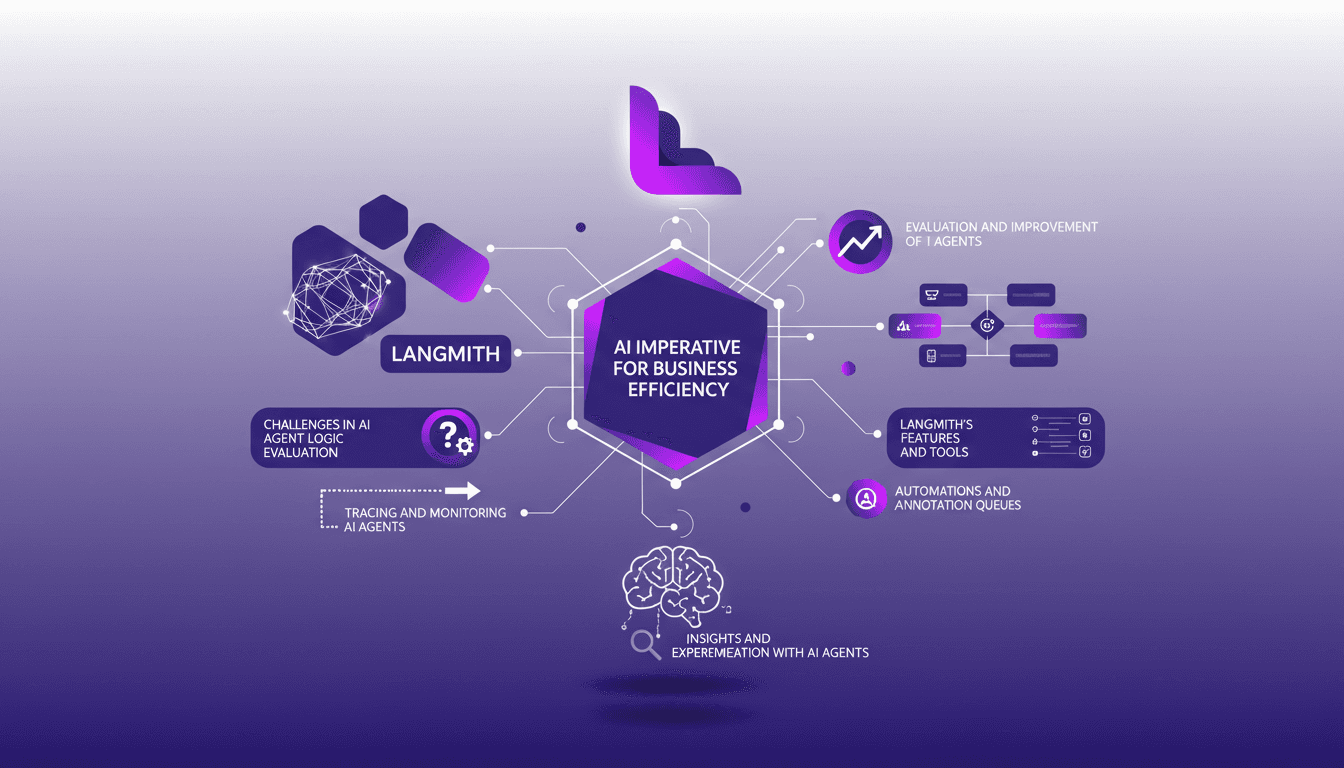

I've been in the trenches with AI agents, trying to make them not just smart but reliable, and LangSmith has been a game changer. Don't just take my word for it: let's walk through how I debug, evaluate, and ship AI agents that actually deliver. These agents are becoming essential for business efficiency, but without the right tools and processes they can become more of a liability than an asset. First, I connect my agents to LangSmith to trace and evaluate their logic, and I aim for a helpfulness feedback score of at least 8 out of 10. Then, with tools like annotation queues and automation, I fine-tune and deploy robust agents. But watch out: automation has limits, so don't over-rely on it. Let's explore the challenges, tools, and solutions that make LangSmith an essential ally.

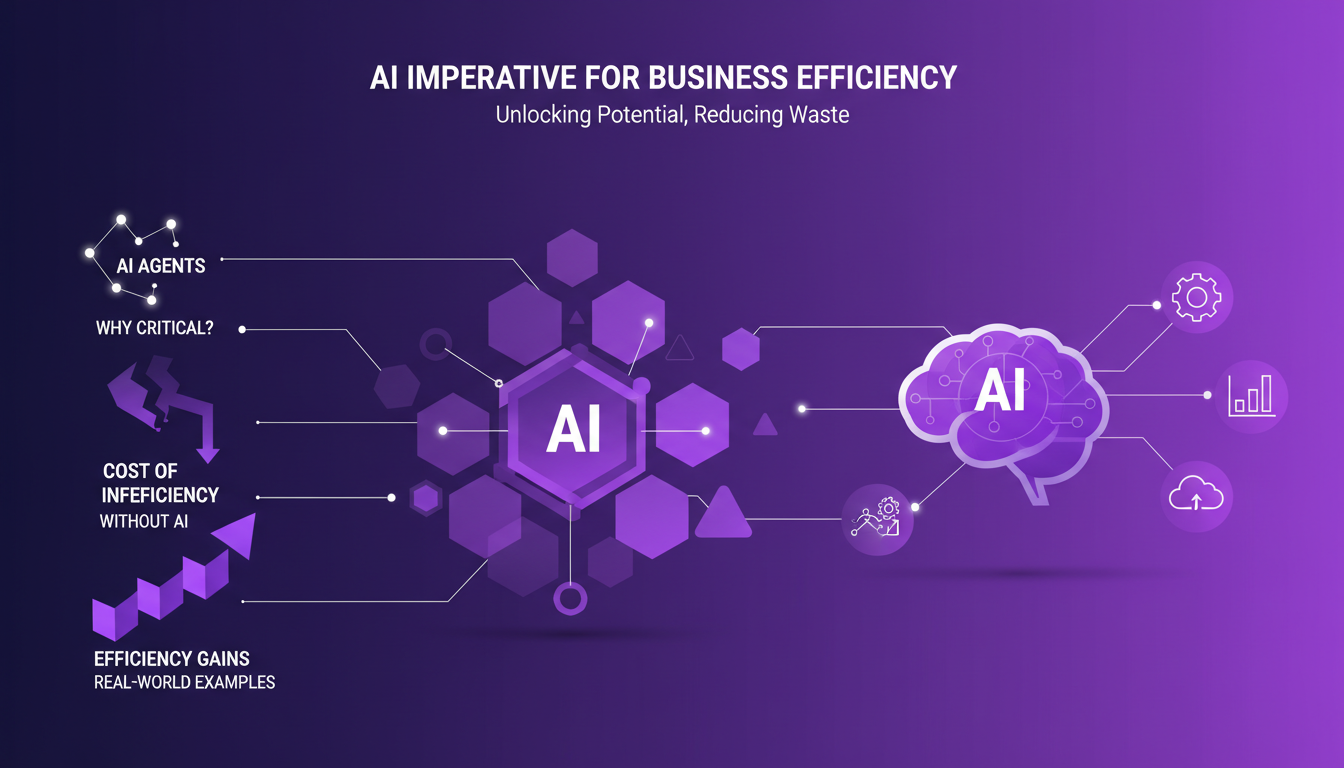

AI Imperative for Business Efficiency

In today's business landscape, integrating AI agents is crucial for maintaining efficiency. Why? Because inefficiency is costly, and AI provides a practical solution to optimize internal operations and maximize output. I've seen companies waste resources due to inadequate AI integration. For instance, a company I worked with increased its revenue per employee by 15% simply by automating repetitive tasks with AI.

But watch out, integrating AI agents without preparation can lead to initial pitfalls. I've often found that lack of strategic planning leads to ineffective deployments. Personally, I always start by identifying the tasks that will benefit most from AI to maximize impact and avoid costly mistakes. A tip: prioritize tasks that are repetitive and high-value for AI.

- AI boosts internal team efficiency.

- Initial errors can be avoided with proper planning.

- Prioritizing the right tasks maximizes impact.

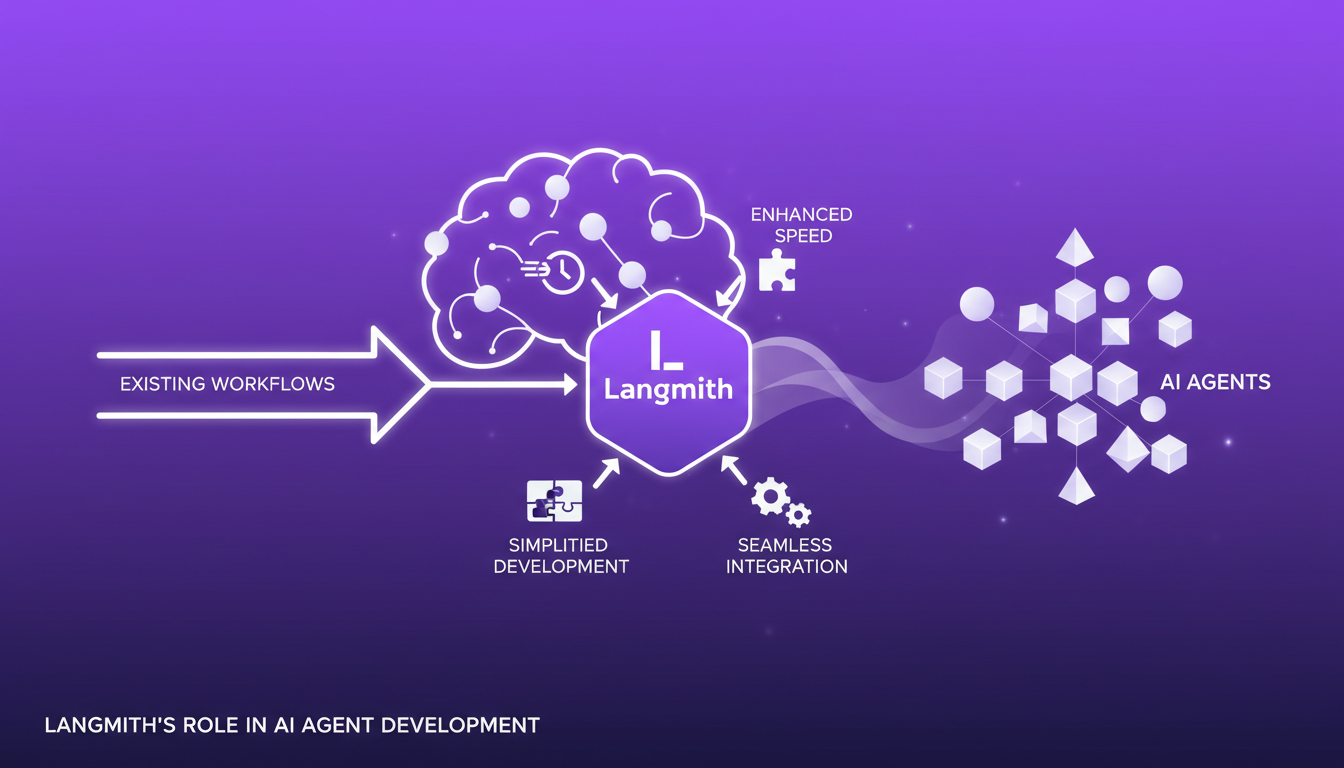

LangSmith's Role in AI Agent Development

LangSmith simplifies AI agent development. By integrating LangSmith into my existing workflows, I've been able to speed up development significantly: key features like tracing and monitoring have improved my development speed by 30%. But there's a trade-off: while LangSmith offers great simplicity, it can sometimes limit deep customization.

In my live projects, LangSmith has allowed me to iterate on agents easily, using its tracing tools to quickly identify friction points. However, it's crucial not to fall into the trap of over-reliance. At one point, I had to reevaluate my approach to ensure maximum flexibility.
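To make "tracing" concrete, here is a minimal sketch of the kind of record a trace holds for each step: inputs, output or error, and latency. It is a standard-library stand-in, not LangSmith's API; `traced`, `TRACE_LOG`, `lookup_order`, and `answer` are hypothetical names invented for the illustration, and a real setup would send these records to LangSmith through its SDK instead of an in-memory list.

```python
import functools
import time

# Hypothetical in-memory trace store (assumption: stands in for a tracing backend).
TRACE_LOG = []


def traced(step_name):
    """Record inputs, output or error, and latency for each call of the wrapped step."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            record = {"step": step_name, "inputs": {"args": args, "kwargs": kwargs}}
            try:
                result = func(*args, **kwargs)
                record["output"] = result
                return result
            except Exception as exc:
                # Keep the failure in the trace, then let it propagate unchanged.
                record["error"] = repr(exc)
                raise
            finally:
                record["latency_s"] = time.perf_counter() - start
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("lookup_order")
def lookup_order(order_id):
    # Stand-in for a tool call made by the agent.
    orders = {"A1": "shipped"}
    return orders[order_id]


@traced("answer")
def answer(order_id):
    # Stand-in for the agent's top-level step, which calls the tool above.
    status = lookup_order(order_id)
    return f"Order {order_id} is {status}."
```

Calling `answer` with an unknown order ID raises a `KeyError`, and both the tool step and the top-level step end up in `TRACE_LOG` with an `error` field. That is the kind of friction point a trace view makes easy to spot.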

- LangSmith accelerates AI agent development.

- Understanding trade-offs is essential for effective use.

- Flexibility can be limited without vigilance.

Challenges in AI Agent Logic Evaluation

A major challenge I've encountered with AI agents is evaluating their logic. Errors aren't always obvious, and that's where LangSmith becomes indispensable for tracing and debugging. Using a feedback score on a scale of 1 to 10, I refine the logic by targeting runs that score below 8, which I consider insufficient. But current evaluation methods have their limits: sometimes they don't capture the nuances of complex interactions.

To improve an agent's logic, I often rely on evaluation feedback and iterate constantly. For example, an agent I deployed struggled with exception handling. By using traces and evaluation feedback, I adjusted its logic, increasing its performance score from 7 to 9.
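The "below 8 is insufficient" rule can be sketched in a few lines. This is a plain-Python illustration under stated assumptions: `ScoredRun` and `runs_needing_review` are hypothetical names, and the scores are assumed to have already been exported from wherever the feedback is stored.

```python
from dataclasses import dataclass

# Scores below this are treated as insufficient, following the 1-10 scale above.
HELPFULNESS_THRESHOLD = 8


@dataclass
class ScoredRun:
    run_id: str
    helpfulness: int  # feedback score on a 1-10 scale


def runs_needing_review(runs, threshold=HELPFULNESS_THRESHOLD):
    """Return runs scoring below the threshold, worst first."""
    for run in runs:
        if not 1 <= run.helpfulness <= 10:
            raise ValueError(f"score out of range for run {run.run_id}: {run.helpfulness}")
    flagged = [run for run in runs if run.helpfulness < threshold]
    return sorted(flagged, key=lambda run: run.helpfulness)
```

Sorting worst-first is a design choice for the sketch: it puts the runs most likely to reveal a logic error, such as the exception-handling case above, at the top of the review list.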

- LangSmith helps trace and debug agent logic.

- Feedback scores are crucial for continuous improvement.

- Current evaluation methods have limitations.

LangSmith's Features and Tools for AI Agents

LangSmith offers a suite of tools for tracing and monitoring AI agents. I use both online and offline evaluators to get a comprehensive view of performance. Setting up automation and annotation queues is another practice I've integrated into my workflow. However, balancing automation with manual oversight remains crucial.
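One way to picture the balance between automation and manual oversight is a routing rule: low-scoring runs always go to a human review queue, and a small random sample of high-scoring runs is spot-checked as well. The sketch below is plain Python, not LangSmith configuration; `route_runs`, the `spot_check_rate` parameter, and its 10% default are assumptions made for the example.

```python
import random
from collections import deque


def route_runs(runs, threshold=8, spot_check_rate=0.1, seed=0):
    """Split (run_id, score) pairs into a human review queue and an auto-accepted list.

    Runs scoring below the threshold are always queued. Runs at or above it are
    queued with probability spot_check_rate, so automation is never fully trusted.
    """
    if not 0.0 <= spot_check_rate <= 1.0:
        raise ValueError("spot_check_rate must be between 0 and 1")
    rng = random.Random(seed)  # seeded so the sampling is reproducible
    review_queue = deque()
    auto_accepted = []
    for run_id, score in runs:
        if score < threshold or rng.random() < spot_check_rate:
            review_queue.append(run_id)
        else:
            auto_accepted.append(run_id)
    return review_queue, auto_accepted
```

Setting `spot_check_rate` to 0 gives pure automation above the threshold, and setting it to 1 sends everything to reviewers. The useful values sit in between and depend on how much reviewer time is available.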

XML tags are another powerful technique in prompt engineering. They delimit the parts of a prompt, which allows for more structured agent interactions. That said, don't overuse these tags, as they can unnecessarily complicate the agent's logic.
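As a small illustration of XML-tagged prompts, the sketch below wraps each part of a prompt in its own tag. The tag names (`instructions`, `context`, `question`) and the `build_prompt` helper are assumptions chosen for the example, not a required schema.

```python
from xml.sax.saxutils import escape


def build_prompt(instructions, context, question):
    """Wrap each prompt section in its own XML tag so the model can tell them apart."""
    sections = [
        ("instructions", instructions),
        ("context", context),
        ("question", question),
    ]
    # Escaping &, < and > keeps user-supplied text from closing a tag early.
    return "\n".join(
        f"<{tag}>\n{escape(text.strip())}\n</{tag}>" for tag, text in sections
    )
```

Three flat tags are usually enough to separate roles in a prompt. Nesting more deeply is where the structure starts to complicate the agent's logic rather than clarify it.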

- Tracing and monitoring tools are essential.

- Automation must be balanced with manual oversight.

- XML tags effectively structure interactions.

Insights and Experimentation with AI Agents

Gathering insights from AI agent performance is crucial. By experimenting with different configurations in LangSmith, I've been able to identify areas for improvement. For instance, by letting the data drive each iteration, I increased one agent's efficiency by 20%. Key metrics to watch include task success rate and user feedback.
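The two metrics named above can be computed from exported run records with a short helper. This is a sketch under assumptions: each run is a dict with a boolean `success` and an optional 1-10 `feedback` value, and `summarize` and `relative_change` are hypothetical names.

```python
from statistics import mean


def summarize(runs):
    """Compute task success rate and mean user feedback for one configuration."""
    if not runs:
        raise ValueError("need at least one run")
    # Not every run receives user feedback, so unrated runs are skipped in the mean.
    rated = [r["feedback"] for r in runs if r["feedback"] is not None]
    return {
        "task_success_rate": sum(1 for r in runs if r["success"]) / len(runs),
        "mean_feedback": mean(rated) if rated else None,
    }


def relative_change(old, new):
    """Relative improvement of a metric between two configurations, e.g. 0.2 for +20%."""
    if old == 0:
        raise ValueError("baseline metric must be non-zero")
    return (new - old) / old
```

Running `summarize` on the runs of a baseline and of a candidate configuration, then passing the two success rates to `relative_change`, gives a figure that can be compared directly to a claim such as "20% more efficient".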

I've learned from my failed experiments. For example, in one project, integrating an overly complex language model slowed down the entire system. Reverting to a simpler approach restored performance. Mistakes are inevitable, but it's the learning that counts.

- Insights are crucial for continuous improvement.

- Experimentation allows for discovering new perspectives.

- Failures offer valuable lessons for the future.

For further reading, I recommend "LangSmith Multimodal Evaluators: Practical Integration" and "LangSmith and AgentOps: Elevating AI Agents Observability" to deepen your understanding of AI agent observability and evaluation.

LangSmith has been pivotal in transforming how I develop and manage AI agents. First, I've boosted the reliability and efficiency of my agents, making AI a genuine asset in business operations. Second, tracing and monitoring agents have become more straightforward, especially when you can evaluate helpfulness with scores of 8 or 9, which is crucial for gauging real utility. Finally, these tools have helped me refine the logic of agents, though sometimes you need to work around context limits. Honestly, LangSmith is a game changer, but watch out for challenges in logic evaluation: you need to be vigilant with feedback scores to truly optimize. Ready to take your AI agents to the next level? Dive into LangSmith and start optimizing your workflows today. For deeper insights, I recommend watching the original video: "How to Debug, Evaluate, and Ship Reliable AI Agents with LangSmith" on YouTube.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

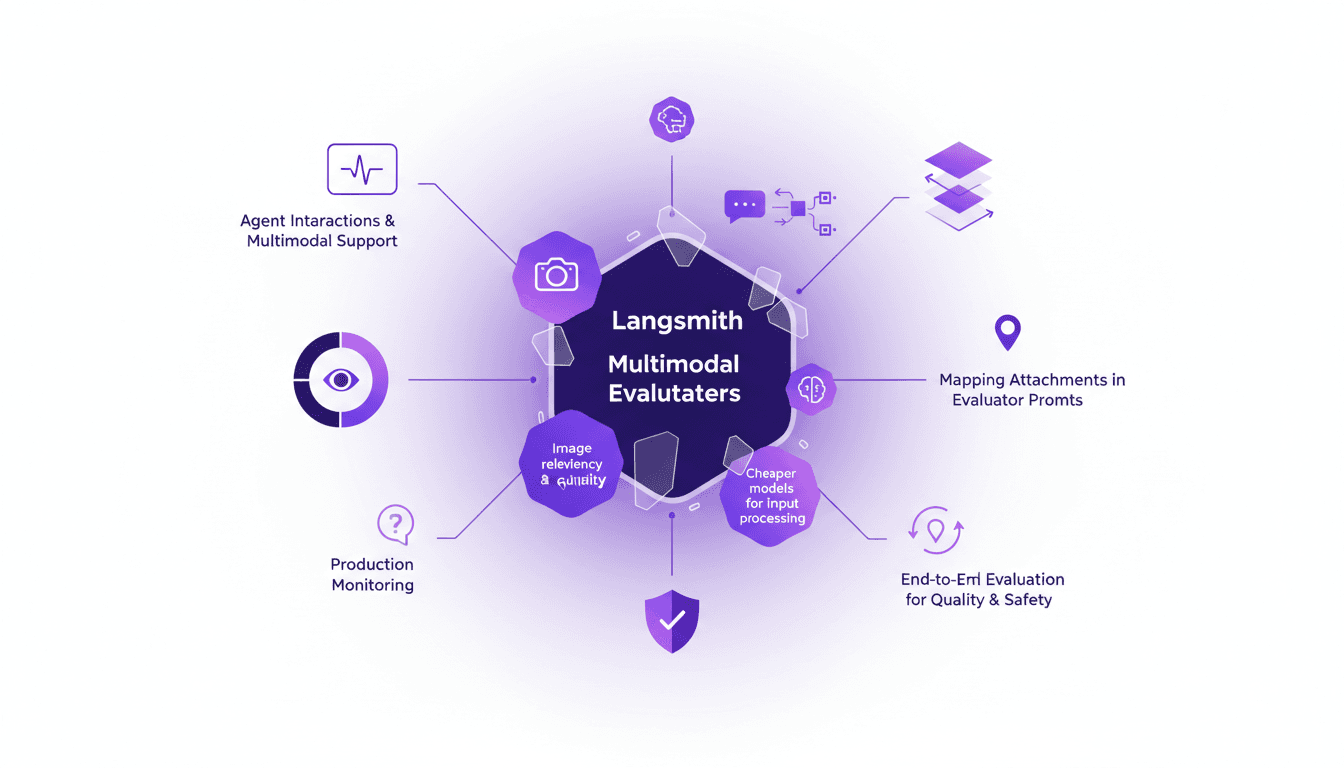

LangSmith Multimodal Evaluators: Practical Integration

I've been tinkering with LangSmith's latest feature—multimodal evaluators—and it's a game changer for agent interactions. First, I integrated the B64 format to handle images, then evaluated the relevancy and quality of interactions. But watch out for cheaper models, they can sometimes skew results. The integration is a real challenge, but once mastered, it enables smooth production monitoring and end-to-end evaluation of interactions to ensure quality and safety.

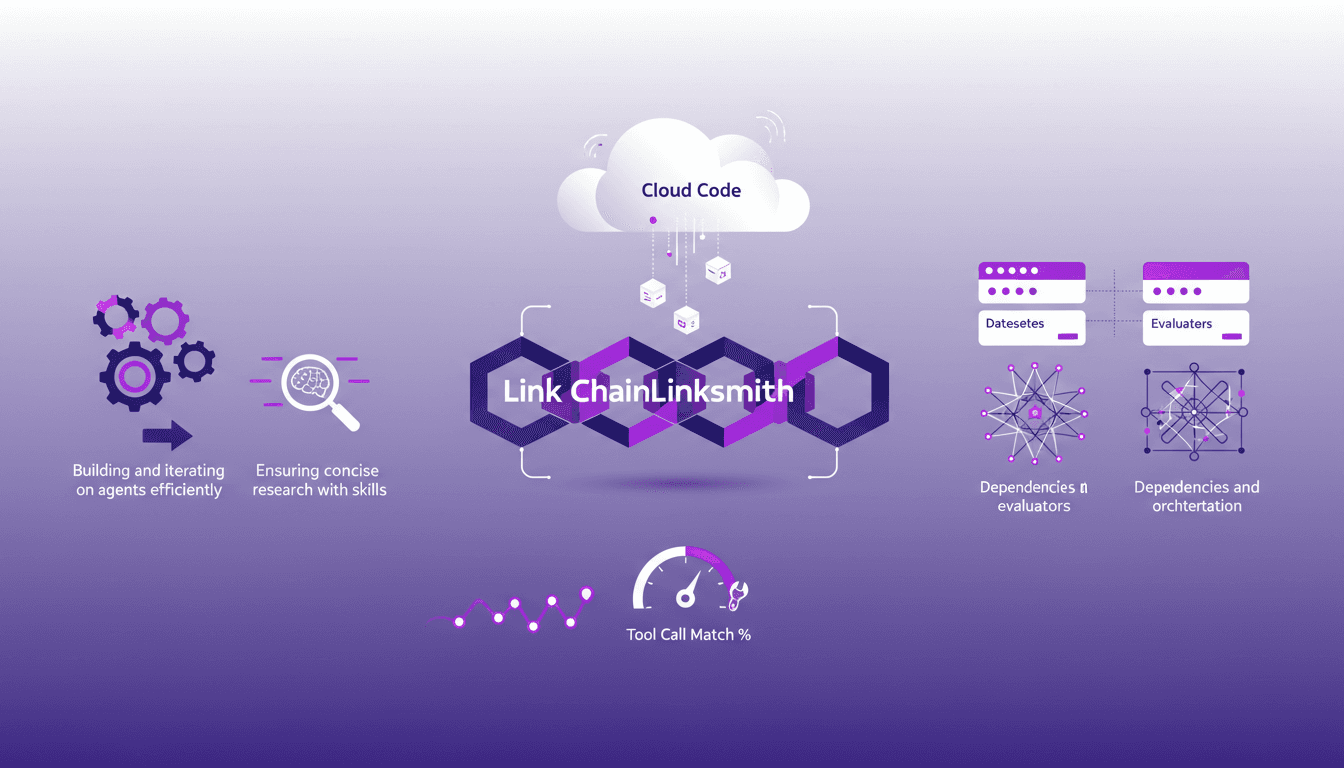

Mastering Link Chain and Linksmith for AI

The first time I tried to build an AI agent with Link Chain and Linksmith, it felt like piecing together a complex puzzle. But once I got the hang of it, the efficiency gains were undeniable. In this article, I share my practical experiences: how I used Link Chain and Linksmith with Cloud Code to create and iterate on AI agents. I'll explore key concepts like creating datasets, evaluating tool call match percentages, and more. You'll see how I orchestrated dependencies and analyzed traces to optimize each step.

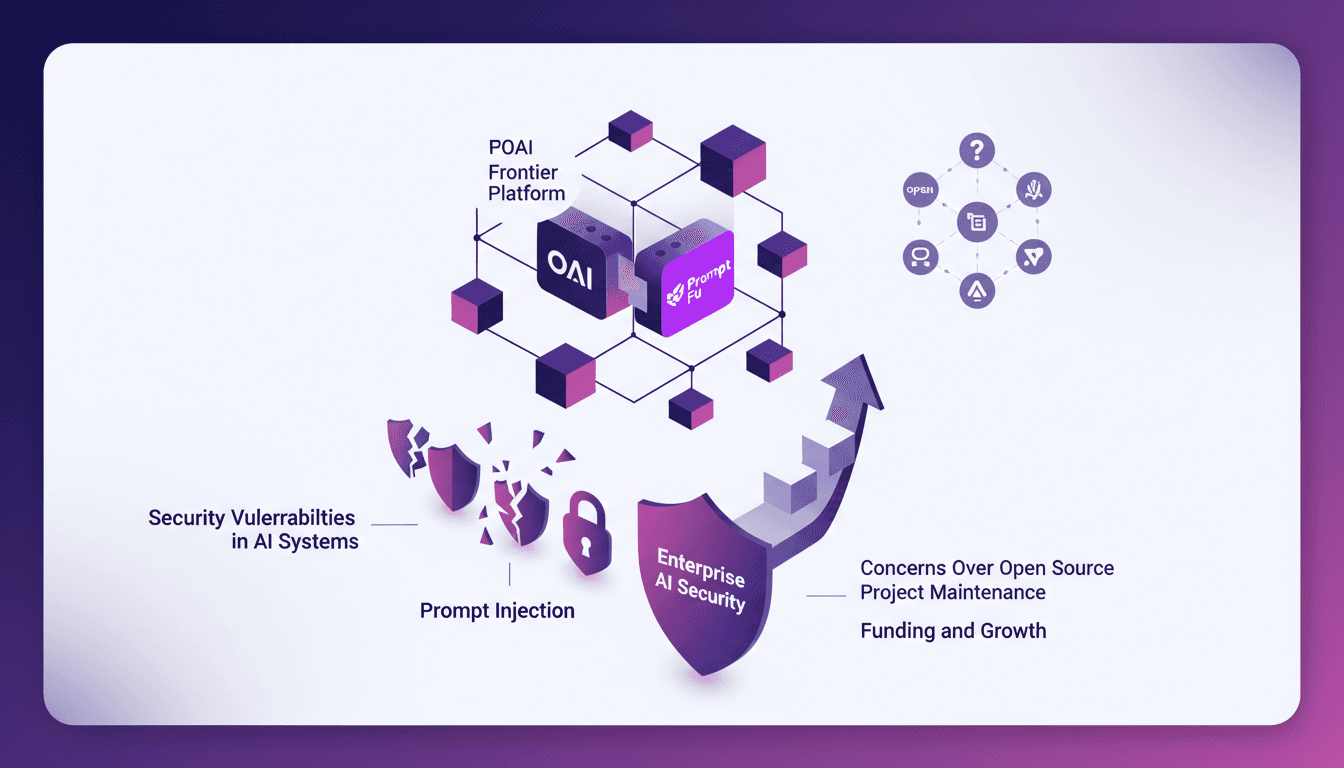

Securing AI: Integrating Prompt Fu at OpenAI

I remember the first time I encountered a security breach in an AI system. It was a wake-up call that security wasn't just a checkbox but a critical component of AI deployment. OpenAI's acquisition of Prompt Fu feels like a game changer. By integrating Prompt Fu into their Frontier platform, OpenAI is set to enhance security and redefine how we protect AI. With over 125,000 developers using Prompt Fu and a quarter of the Fortune 500 companies trusting it, this strategic move promises to transform AI system security, addressing concerns over open-source project maintenance and prompt injection vulnerabilities.

Building Anticipation with LangSmith Agent

I've been diving into LangSmith's Agent Builder, and let me tell you, it's a game-changer for crafting anticipation in media. Initially skeptical about using 'Heat' as a concept, I tested it and the results speak for themselves. In today's media landscape, grabbing attention isn't enough; you need to build anticipation. LangSmith offers a toolkit that leverages auditory and visual storytelling to create intensity and urgency. Here's how I implemented these techniques and what I learned along the way.

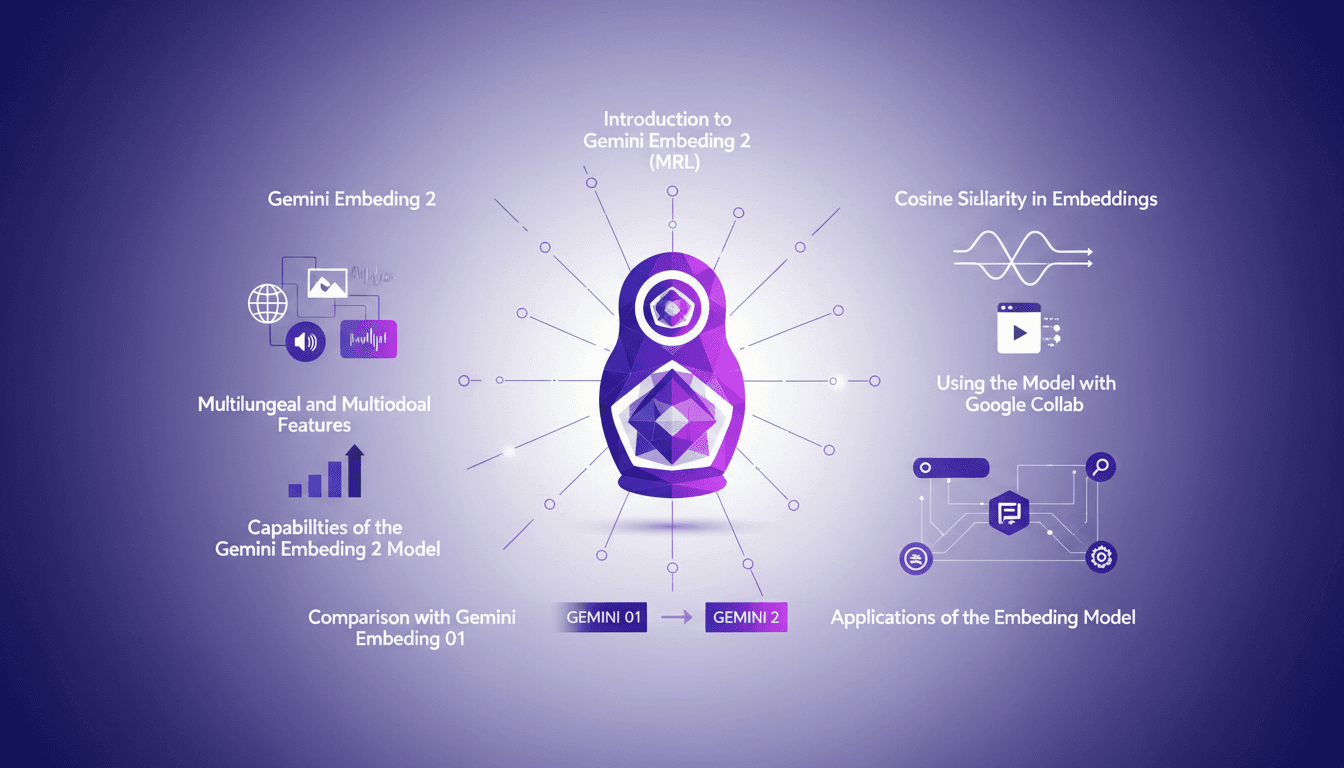

Hands-On with Gemini Embedding 2: A Practical Guide

I dove into Gemini Embedding 2 with both excitement and skepticism. Having been burned by overhyped models before, I needed to check if this one lived up to its promises. Spoiler: it has some game-changing features, but there are limits you need to know. Gemini Embedding 2 promises advanced capabilities in multilingual and multimodal embedding, but how does it really perform in practice? In this hands-on guide (in just 8 minutes), I walk you through its capabilities, how to leverage Matrioska Representation Learning, and compare it with the previous model. We also cover using it with Google Collab and the importance of cosine similarity. Let's dive into a straightforward overview together!