LangSmith Multimodal Evaluators: Practical Integration

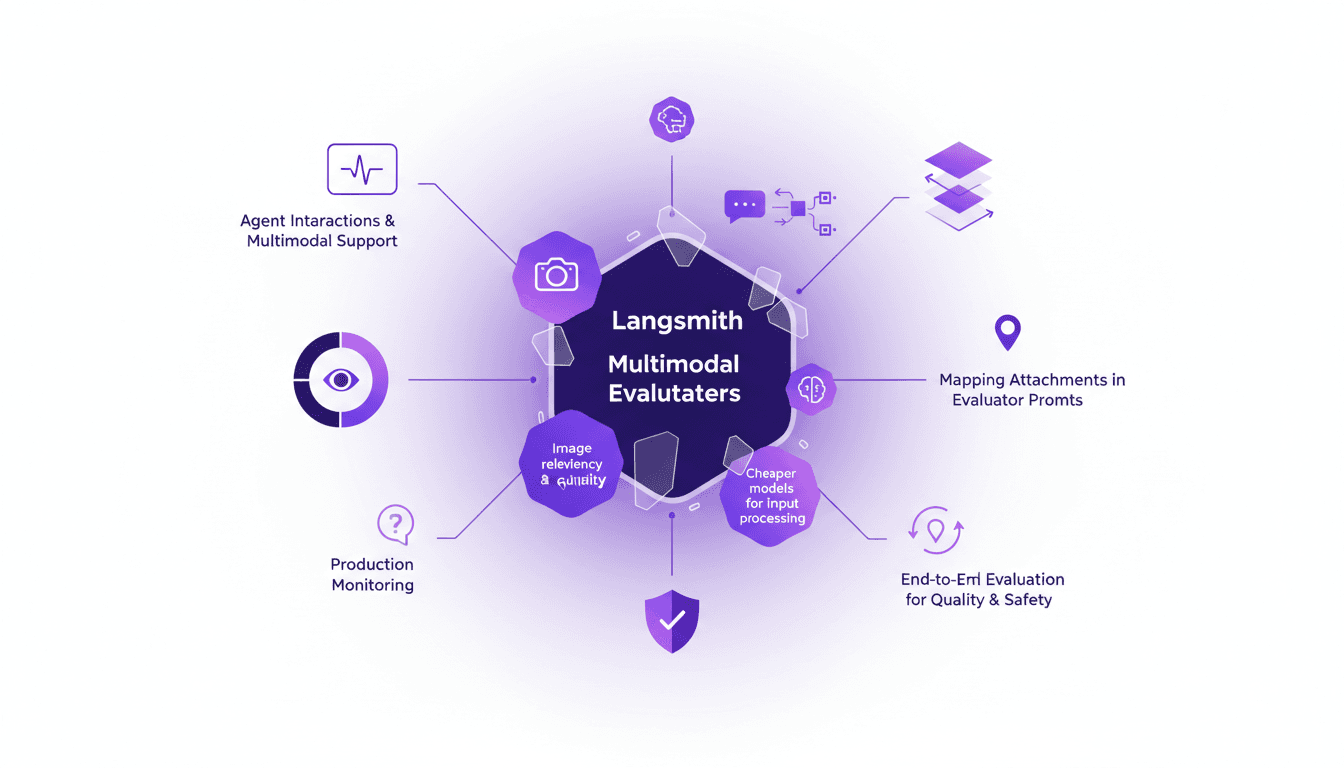

I've been tinkering with LangSmith's latest feature—multimodal evaluators—and it's a game changer for agent interactions. First, I integrated the B64 format to handle images, then evaluated the relevancy and quality of interactions. But watch out for cheaper models, they can sometimes skew results. The integration is a real challenge, but once mastered, it enables smooth production monitoring and end-to-end evaluation of interactions to ensure quality and safety.

I've recently dived headfirst into LangSmith with their new feature—multimodal evaluators. It's really a game changer for agent interactions, especially when dealing with images and other media. Imagine being able to assess the relevancy and quality of visual interactions as smoothly as text. First, I integrated the B64 image format, which streamlined the process. Then, I used cheaper models for processing multimodal inputs, but watch out, they can sometimes skew your results. Integrating attachments in evaluator prompts is another crucial step. And of course, production monitoring is essential to ensure these interactions run safely and effectively. But don't get me wrong, this journey is filled with pitfalls. I've learned, often the hard way, to identify traps and optimize the workflow.

Setting Up Multimodal Evaluators in LangSmith

First, I integrated the multimodal evaluators into my existing LangSmith setup. Understanding the importance of multimodal support for agent interactions was key. With interactions evolving beyond just text, multimodal support is indispensable. So, I prioritized evaluating image relevancy and quality right from the start.

Decoding the B64 Format and Attachments

I had to wrap my head around the B64 format for image processing. Mapping attachments correctly in evaluator prompts is crucial. I found that using cheaper models for processing multimodal input saved costs. Don't overlook the importance of attaching metadata correctly.

- B64 image format: essential for evaluation.

- Accurate mapping of attachments needed.

- Use of economical models to cut costs.

Monitoring Multimodal Interactions in Production

I set up production monitoring to track multimodal interactions. Monitoring allowed me to quickly identify and resolve issues with image quality. Frequent checks prevent bigger problems down the line. Automated alerts for when things go off the rails were invaluable.

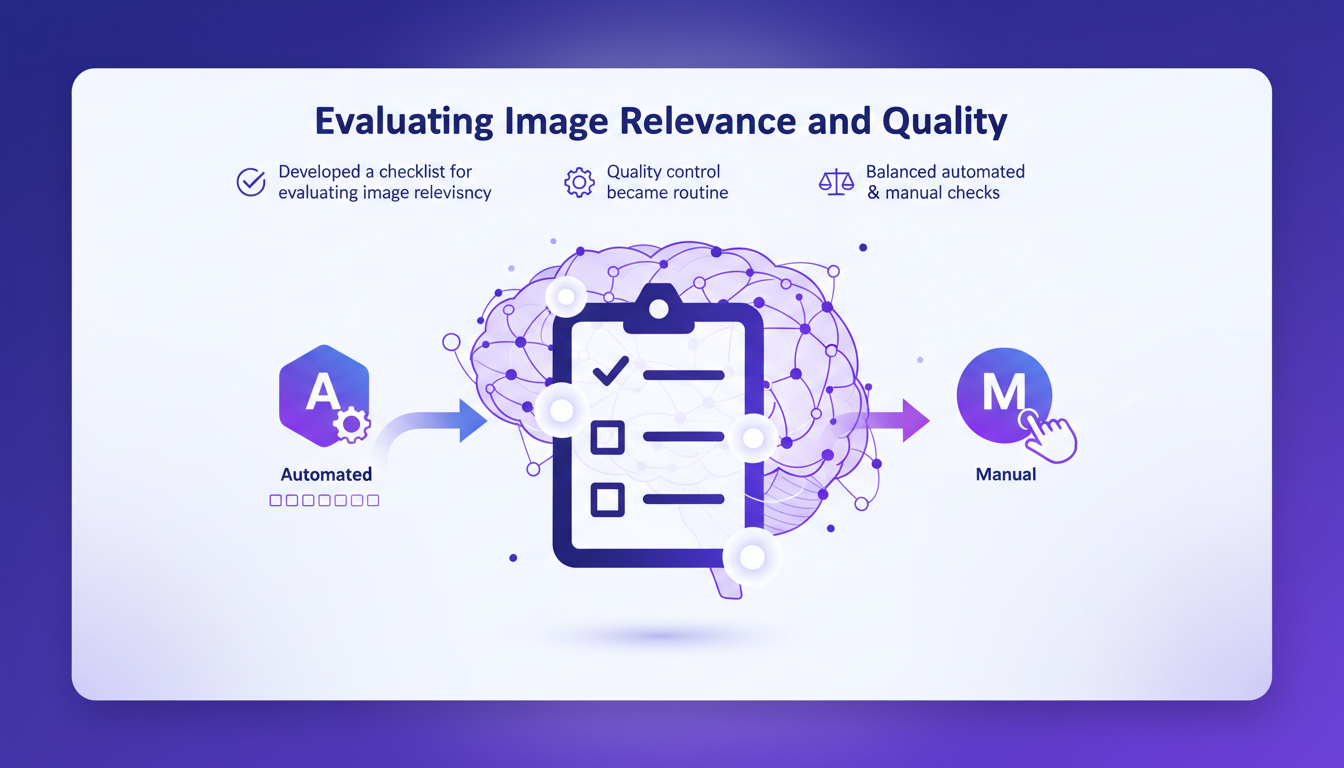

Evaluating Image Relevance and Quality

I developed a checklist for evaluating image relevancy in interactions. Quality control became a routine part of my workflow. I balanced between automated evaluations and manual checks. Keep an eye on the trade-off between speed and accuracy.

- Checklist for image relevance.

- Quality control integrated into workflow.

- Balance between automated and manual.

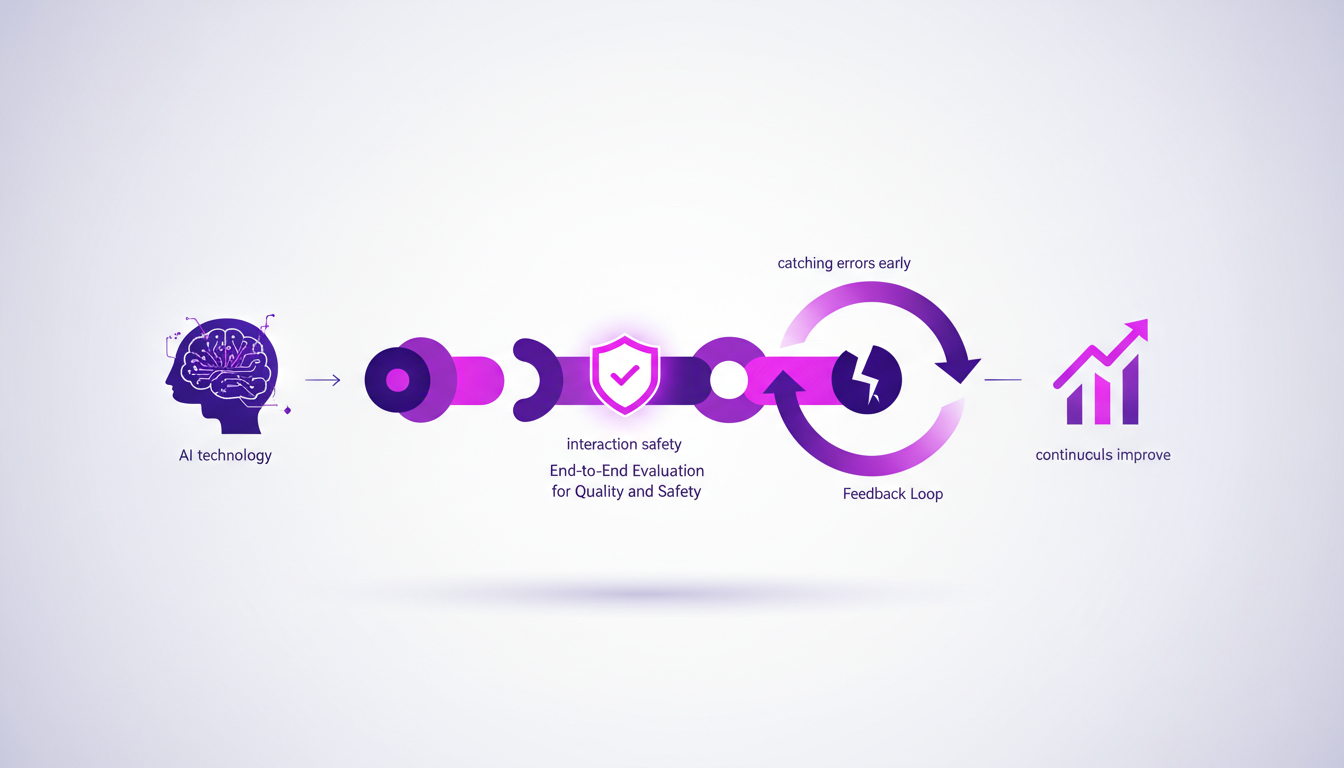

End-to-End Evaluation for Quality and Safety

I implemented end-to-end evaluations to ensure interaction safety. This holistic approach helped me catch errors early. I used feedback loops to continuously improve the evaluation process. Don't underestimate the power of a thorough end-to-end check.

- Holistic evaluations for safety.

- Early error detection.

- Continuous improvement via feedback loops.

Mastering Gemini 3.1 might give you ideas on optimizing multimodal workflows.

Integrating multimodal evaluators in LangSmith has been a true game changer in how I handle agent interactions. By honing in on image relevancy and quality, I've achieved more reliable and efficient results. Here are some key takeaways:

- Using B64 format for images has streamlined the evaluation of their quality and relevance in interactions.

- Thorough evaluations are crucial to optimizing agent performance.

- Integrating attachments and multimodal formats enriches overall interactions.

Looking ahead, these multimodal evaluators can really be a game changer, but remember to keep monitoring and iterating to fine-tune the details. I encourage you to start experimenting with these multimodal evaluators in your setup. Trust me, the key is in the details. For a deeper dive and better understanding, check out the original video: Introducing Support for Multimodal Evaluators in LangSmith.

Frequently Asked Questions

Thibault Le Balier

Co-fondateur & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

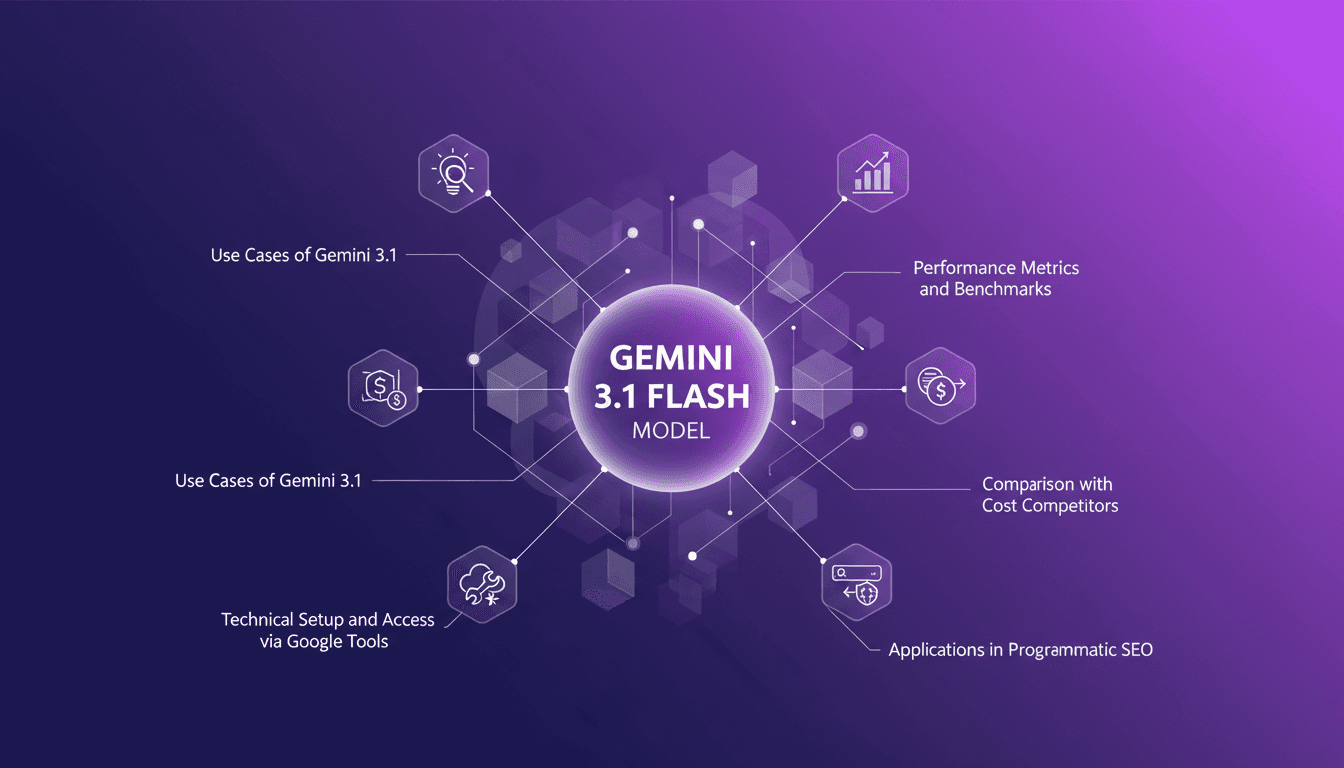

Mastering Gemini 3.1: Flash Lite in 14 Minutes

I dove headfirst into Gemini 3.1 Flash Lite, eager to see if it could truly revolutionize my workflow. Spoiler: It did, but not without a few hiccups along the way. Picture a model that can grasp multimodal data and optimize programmatic SEO in a flash. I tested five different use cases, and for a translation task, it took just one second. But watch out, setting it up with Google's tools isn’t exactly a walk in the park. I'll walk you through how I navigated it all, with candid comparisons to competitors and an eye on cost efficiency. If you're ready to supercharge your SEO, join me on this journey.

Boosting Web Search with GPT-5.3: Practical Guide

I've been tweaking search results for years, but integrating GPT-5.3 changed everything. With the latest enhancements, understanding user queries has become more nuanced. In this article, I walk you through how to leverage these advancements for better web search results. We'll dive into the importance of subtext, the enhancements in GPT-5.3, and how they make responses more natural and conversational. You'll see practical cases like planning a biking trip or understanding baseball rule changes. It's a powerful tool, but watch out for context limits—beyond 100K tokens, things get tricky. I'll share how I orchestrated these elements for direct user experience impact.

GPT 5.4: Performance, Cost, and Controversy

I just integrated GPT 5.4 into my workflow, and let me tell you, it's a game changer—but not without its quirks. OpenAI has just released GPT 5.4, and between boosted efficiency and cost management, it's a complex terrain of trade-offs. Priced at $15 per million tokens, it looks tempting, but watch out for the 295% surge in uninstalls on February 28th. Scoring 83% on the GDP val benchmark, surpassing Opus 4.6, GPT 5.4 promises a lot, but beware of the pitfalls. Let's dive into the technical details and potential professional impacts this new version might have.

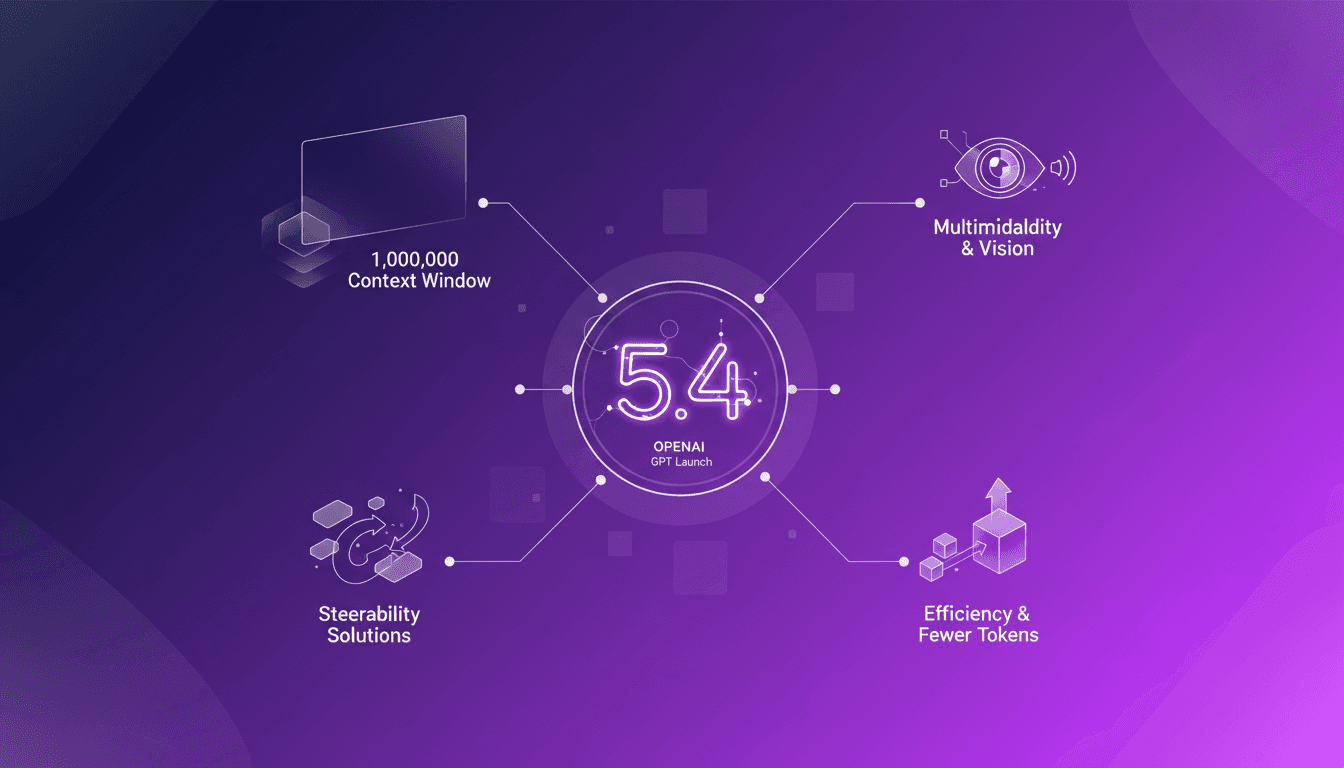

GPT 5.4: Context Revolution with 1 Million

I've been in the trenches with AI models for years, and let me tell you, the launch of GPT 5.4 is a game changer. This model promises a massive leap with its 1 million context window, enhanced multimodal capabilities, and solutions to the notorious steerability problem. But before you dive in headfirst, let's break down what this means for us builders. Imagine orchestrating a project where context isn’t a crushing limit anymore, where vision and text blend seamlessly. GPT 5.4 isn’t just a simple update; it’s a reinvention of the wheel, but watch out for the usual pitfalls: don’t overload your project with promises without understanding the constraints. Let's explore these new features and see how they stack up in real-world applications.

Building Anticipation with LangSmith Agent

I've been diving into LangSmith's Agent Builder, and let me tell you, it's a game-changer for crafting anticipation in media. Initially skeptical about using 'Heat' as a concept, I tested it and the results speak for themselves. In today's media landscape, grabbing attention isn't enough; you need to build anticipation. LangSmith offers a toolkit that leverages auditory and visual storytelling to create intensity and urgency. Here's how I implemented these techniques and what I learned along the way.