AI Improvement: Cutting Costs and Time


I distinctly remember the first time 'recursive self-improvement' in AI crossed my path. It sounded like something out of a sci-fi novel. But then I dove into it and realized it could be the future, provided we can bring down the cost and time involved. Right now, training a new large language model (LLM) from scratch costs hundreds of millions of dollars and takes months. Yet here I am, in the thick of it, working on faster and cheaper solutions. Here, I cover how we're orchestrating these innovations, why traditional methods are lagging, and how major AI players are tackling these challenges. This is hands-on, real practice, not some sterile theoretical analysis.

Understanding Recursive Self-Improvement

Recursive self-improvement is what many consider the holy grail of AI. In practical terms, it's a system that improves itself: the AI takes part in making the next version of itself smarter. It's fascinating, but it seemed like a distant dream until I took a closer look. Initially, I was skeptical, thinking it was one of those ideas too good to be true. But once I saw early implementations, I understood the potential of the method. We're talking about automation at a level where machines can drive part of their own learning, and that's where the real value lies.

Ultimately, recursive self-improvement could transform AI development. It's a process where automation plays a key role, reducing the need for human intervention. I've seen projects where this approach significantly reduced development times. However, beware of the limits: a system that learns by itself can also learn to reproduce its own mistakes, which requires careful monitoring.
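To make the monitoring point concrete, here is a minimal sketch of a self-improvement loop with a gate: a self-proposed change is kept only if a held-out evaluation score improves. Everything in it is an assumption for illustration. The "model" is a single number, and `gated_self_improvement`, `toy_propose` and `toy_evaluate` are hypothetical names, not anyone's actual training pipeline.

```python
import random
from typing import Callable, List, Tuple


def gated_self_improvement(
    model: float,
    propose: Callable[[float, random.Random], float],
    evaluate: Callable[[float], float],
    steps: int,
    seed: int = 0,
) -> Tuple[float, List[float]]:
    """Keep a self-proposed change only when the held-out score improves."""
    rng = random.Random(seed)
    best_score = evaluate(model)
    history = [best_score]  # best held-out score after each step
    for _ in range(steps):
        candidate = propose(model, rng)
        score = evaluate(candidate)
        # The gate: a candidate that scores worse (or the same) is discarded,
        # so a mistake is not carried into the next iteration.
        if score > best_score:
            model, best_score = candidate, score
        history.append(best_score)
    return model, history


# Toy stand-ins (hypothetical): the "model" is one number and the
# held-out score is higher the closer that number is to TARGET.
TARGET = 3.0


def toy_propose(model: float, rng: random.Random) -> float:
    return model + rng.uniform(-0.5, 0.5)


def toy_evaluate(model: float) -> float:
    return -abs(model - TARGET)


final_model, history = gated_self_improvement(
    0.0, toy_propose, toy_evaluate, steps=200
)
```

The gate is only as good as the evaluation behind it. If the held-out score itself is flawed, the loop will happily optimize toward that flaw, which is why human review of the evaluation still matters.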

The Cost and Time of Training LLMs

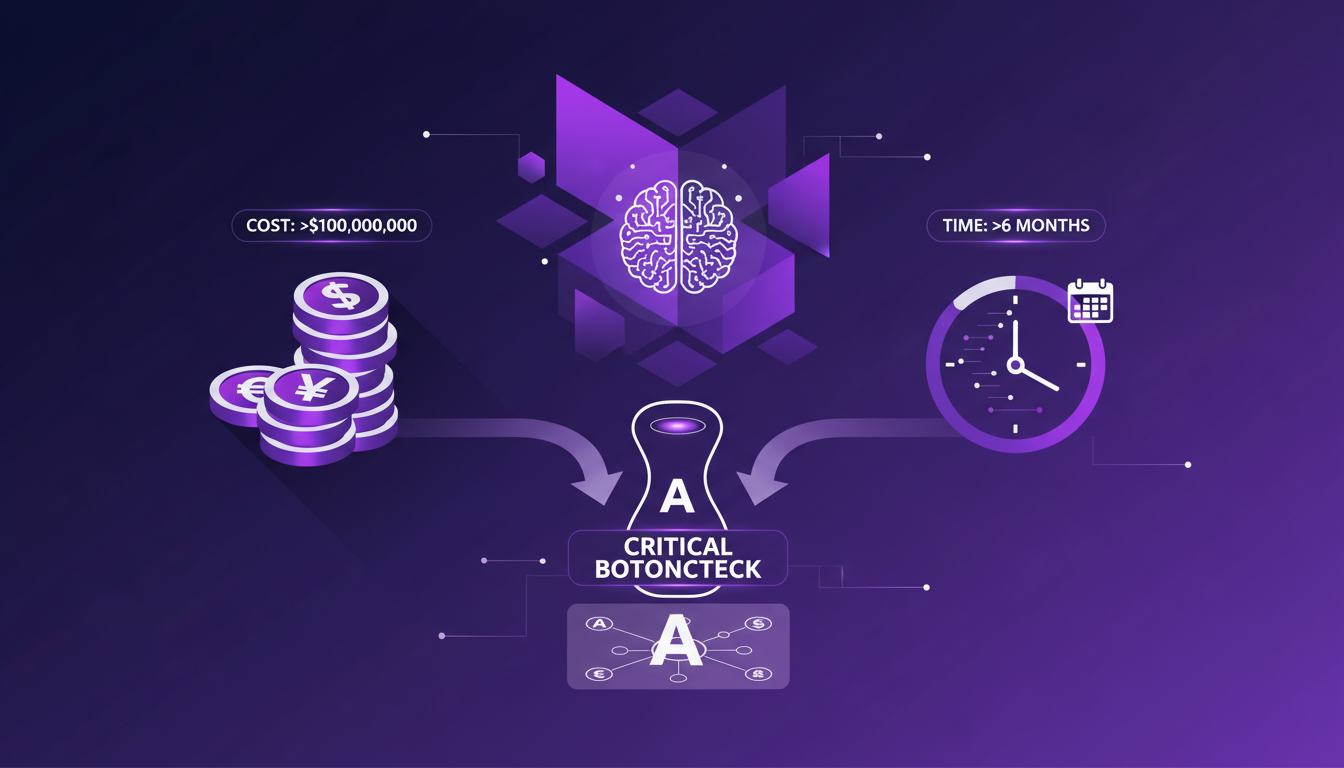

Let's move on to one of the main bottlenecks of recursive self-improvement: the cost and time of training LLMs (large language models). When we talk numbers, we're talking hundreds of millions of dollars to train a new model from scratch, and a single training run can take months. I've been involved in several projects where the initial budget was significantly exceeded because the models turned out to be more complex than planned. Delays are commonplace.

Why are these factors critical bottlenecks? Simply because, apart from giants like Anthropic or OpenAI, few players can afford such expenses. And even with the budget, there's still the time. This is where recursive self-improvement can be a game-changer, optimizing each step of the process to save both time and money. But do not underestimate the initial complexity of setting up these systems.
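A quick back-of-the-envelope sketch shows why this matters for a loop that retrains at every improvement step. The figures are placeholders I picked to match the orders of magnitude above (hundreds of millions of dollars, months per run), not measured numbers, and `TrainingRun` and `loop_budget` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingRun:
    cost_usd: float
    duration_months: float


def loop_budget(run: TrainingRun, improvement_steps: int) -> TrainingRun:
    """Total cost and wall-clock time when every step retrains sequentially."""
    if improvement_steps < 0:
        raise ValueError("improvement_steps must be non-negative")
    return TrainingRun(
        cost_usd=run.cost_usd * improvement_steps,
        duration_months=run.duration_months * improvement_steps,
    )


# Placeholder figures (assumptions): one from-scratch run costs
# 200 million dollars and takes 3 months.
baseline = TrainingRun(cost_usd=200e6, duration_months=3)

# Five improvement steps, each retraining from scratch.
from_scratch = loop_budget(baseline, improvement_steps=5)

# Same five steps if each step were 10x cheaper and 10x faster
# (a hypothetical improvement factor, purely for comparison).
cheaper_step = TrainingRun(baseline.cost_usd / 10, baseline.duration_months / 10)
optimized = loop_budget(cheaper_step, improvement_steps=5)

print(f"from scratch: ${from_scratch.cost_usd:,.0f}, {from_scratch.duration_months:g} months")
print(f"optimized:    ${optimized.cost_usd:,.0f}, {optimized.duration_months:g} months")
```

Because the steps run one after another, cost and time both scale linearly with the number of iterations. Any saving per step is multiplied across the whole loop.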

Comparing Traditional and New Approaches

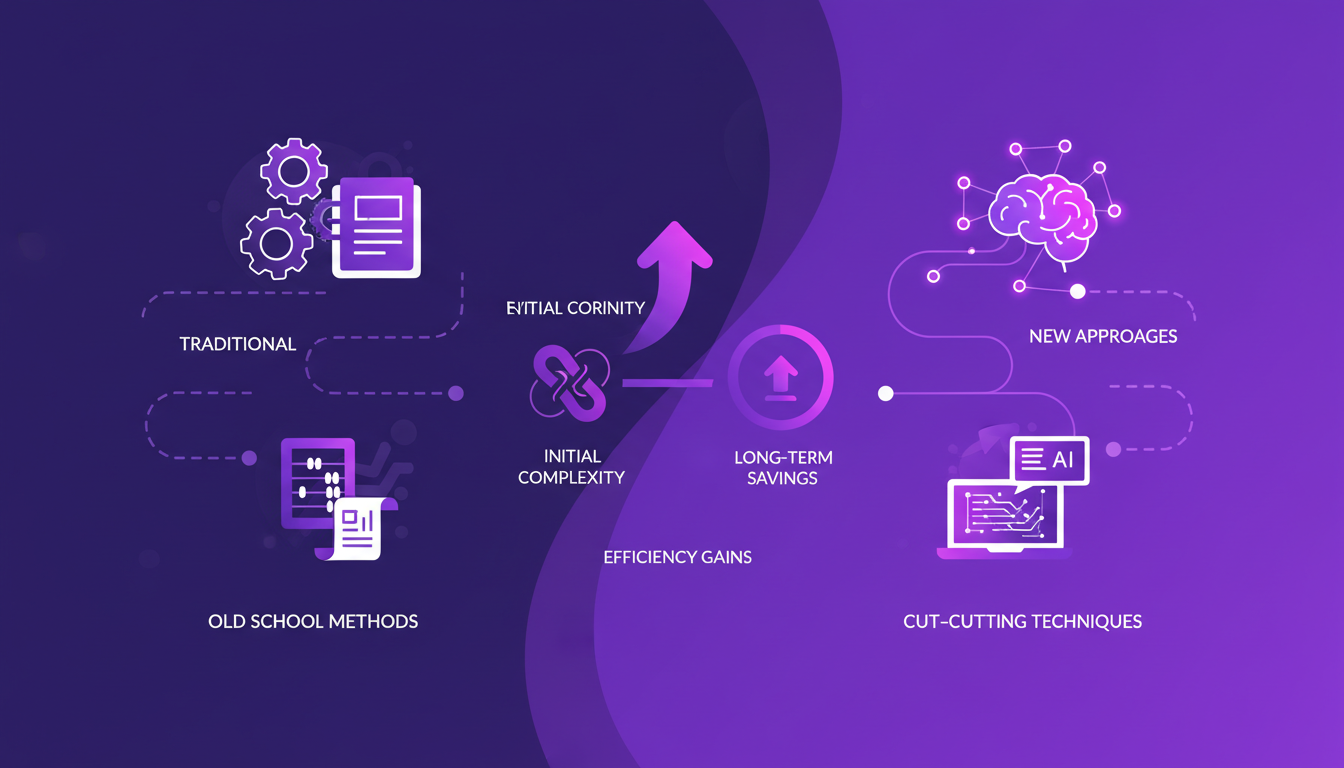

In my journey, I've had the opportunity to transition from traditional methods to new approaches in AI training. The classic methods, while effective, are often resource-intensive. The new techniques, on the other hand, focus on efficiency. Take a simple example: LLMs versus traditional AI. With an LLM, you can build a chatbot in a few hours, whereas it would take weeks with the old methods.

Of course, there are trade-offs. The new methods may seem complex at first and require a significant initial learning curve. But in the long run, the savings and efficiency are undeniable. I've experienced this transition myself, and it's not without its pains, but the outcome is often worth the effort.

Efforts by Major Players: Anthropic, OpenAI, Google

Giants like Anthropic, OpenAI, and Google are always at the cutting edge with their AI initiatives. These companies are investing heavily in recursive self-improvement and in training new models for each improvement step. I've had the chance to collaborate with some of these entities, and the balance between collaboration and competition is fascinating to observe.

In these collaborations, navigating between knowledge sharing and protecting one's own advancements is crucial. What is certain is that these actors drive innovation while keeping a close eye on costs and efficiency. And even if we are not all at their level, it's possible to draw inspiration from their practices to boost our own projects.

Innovations and the Future of AI Efficiency

Finally, let's talk about the innovations shaping the future of AI. With recursive self-improvement, it's possible to envisage faster and cheaper improvements. I've seen projects where models were optimized in real time, drastically reducing resource requirements. However, beware of the limits: continuous optimization can introduce unintended biases if it is not well controlled.

I dream of a future where AI is not only smarter but also more accessible. And that's what recursive self-improvement promises. Of course, the road is long, and there are pitfalls to avoid, but current trends hint at immense potential. For those looking to dive in, I recommend starting with readings on the topic, like this article on recursive self-improvement.

In conclusion, recursive self-improvement is much more than a futuristic concept. It's a reality being built today, ready to transform how we design artificial intelligence.

So, in the AI realm, recursive self-improvement is truly a game changer. First, it targets the biggest costs: think hundreds of millions of dollars and several months to train an LLM from scratch. Then, innovative methods are shaking up traditional approaches as major companies continue to push boundaries. But watch out: these new methods come with trade-offs. They require tight orchestration and a deep understanding of the technical challenges.

Looking ahead, I believe embracing these advancements is about playing an active role in AI efficiency. Now’s the time to not just keep up, but to lead the charge.

Join me in exploring these innovations. Check out the original video for a deep dive and let’s see how we can be at the forefront of AI advancements. You can find it here: This Is The Holy Grail Of AI.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Aerospace Engineer: My Practical Journey

Ever dreamt of reaching for the stars, literally? I did, and it led me to become an aerospace engineer at NASA. But dreams aren't enough—they need a practical roadmap. In this live stream, I share how I built mine, from investing in rocket companies to developing platforms that disrupt industries. We dive into Vibe coding, the Consult Sphere platform, and how these tools turn aspirations into tangible outcomes. Plus, let's not overlook the importance of community, credibility, and brand value in all of this.

Debugging and Evaluating AI Agents with LangSmith

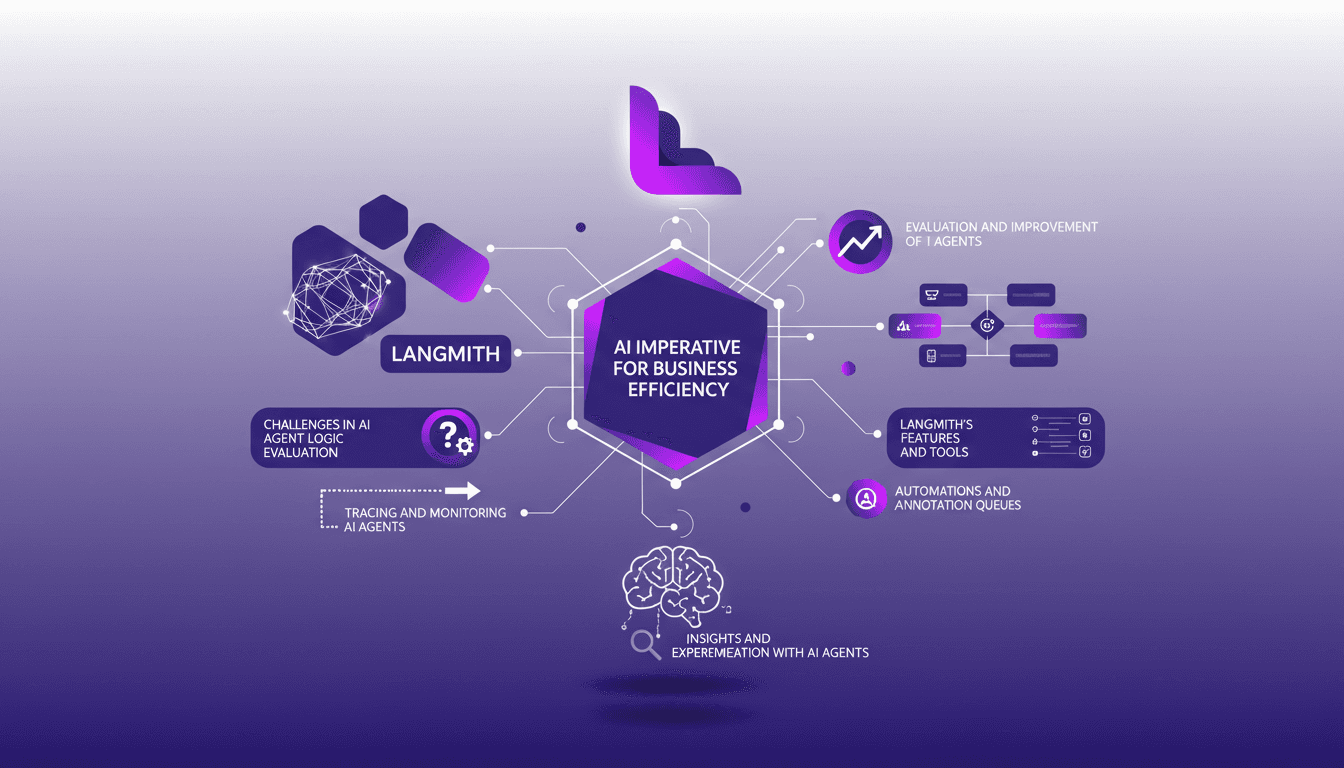

I've been deep in the trenches with AI agents, and trust me, making them reliable is no small feat. LangSmith has been a real game-changer. It's not just about making them smart; it's about ensuring they actually deliver. First, I connect my agents to LangSmith to trace and evaluate their logic. Then, I ensure they hit that magic feedback score of 8 for helpfulness. LangSmith's tools—like automation and annotation queues—let me fine-tune and ship agents that actually work. But watch out, automation has its limits—don't over-rely on it. Dive in with me as we navigate the challenges, tools, and solutions that make LangSmith an essential ally for AI agents.

Scaffolding Ads: A Winning Strategy

I once offered 50 quid to anyone willing to put up an ad for my business on a scaffold. It was a crazy idea, but it sparked something bigger: the potential of unconventional spaces in entrepreneurship. In the business world, thinking outside the box can lead to unexpected opportunities. Whether it's placing an ad in unusual spots or dreaming big about your career, I'm sharing practical insights here. We also touch on business ideas and teaching, job opportunities and dreams, and even my observations on vaping and smoking. Let's dive into this casual conversation.

Starting an Events Company: Practical Steps

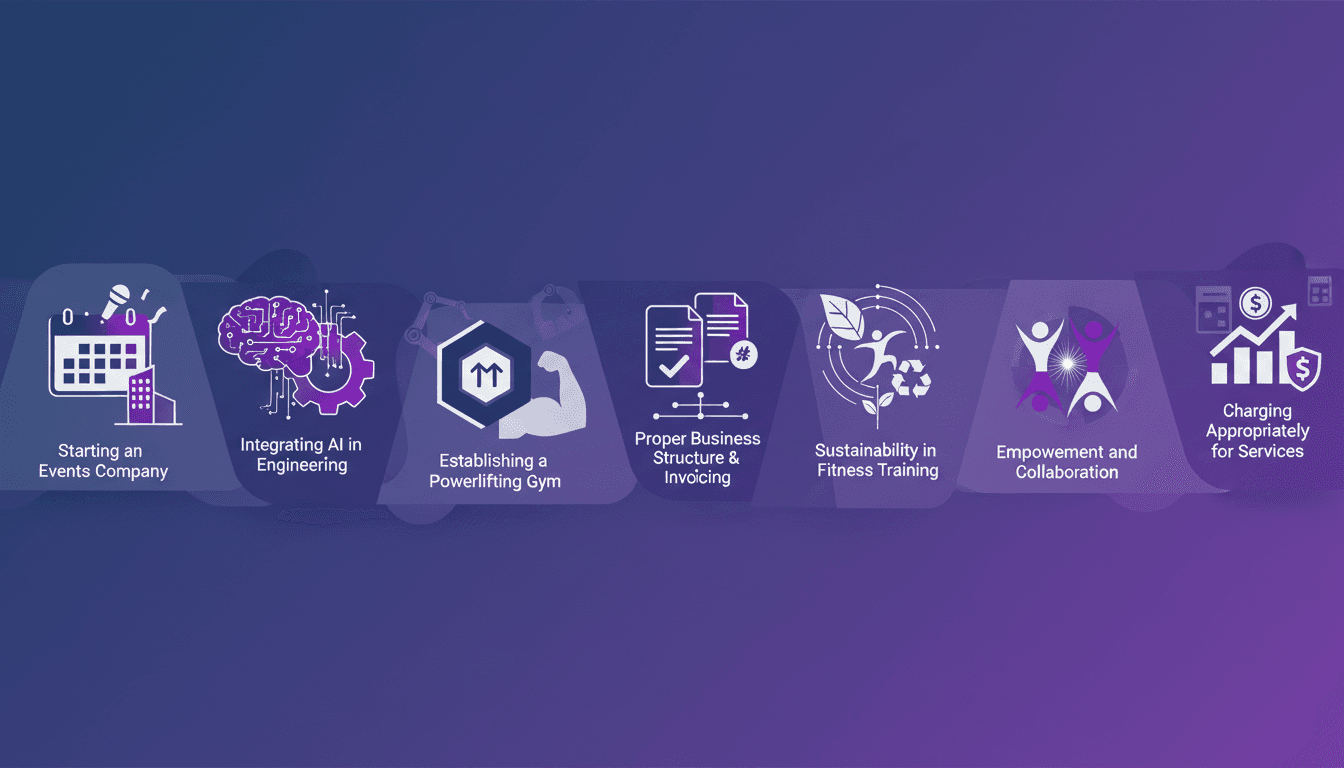

Starting an events company felt like stepping off a cliff—pure excitement mixed with a fair bit of panic. At 27, with just six months of savings, I took the plunge. Spoiler alert: it was worth it. In this article, I lay out how I structured my business, integrated AI into engineering, and even launched a powerlifting gym. We’ll talk numbers, business impact, and how to avoid getting burned by inefficient practices. Whether you're in events, tech, or fitness, here’s how to move from dreaming to doing.

Nvidia and OpenClaw: Integrating Nemo Claw

I dove into the Nvidia GTC 2026 keynote expecting the usual tech updates, but stumbled upon a real game-changer: Nvidia's involvement in the OpenClaw project with Nemo Claw. Nvidia isn't just tagging along; they're reshaping the landscape. OpenClaw started from scratch and already boasts over 50 variations on GitHub. With Nemo Claw's entry, security, hardware integration, and enterprise applications are being reinvented. Nvidia's hardware strategy is no joke, especially with Gro 3 LPU chips and Grock IP integration. But watch out—data privacy remains a hot topic in this space.