LLMs Evolution: 8 Years of AI Progress

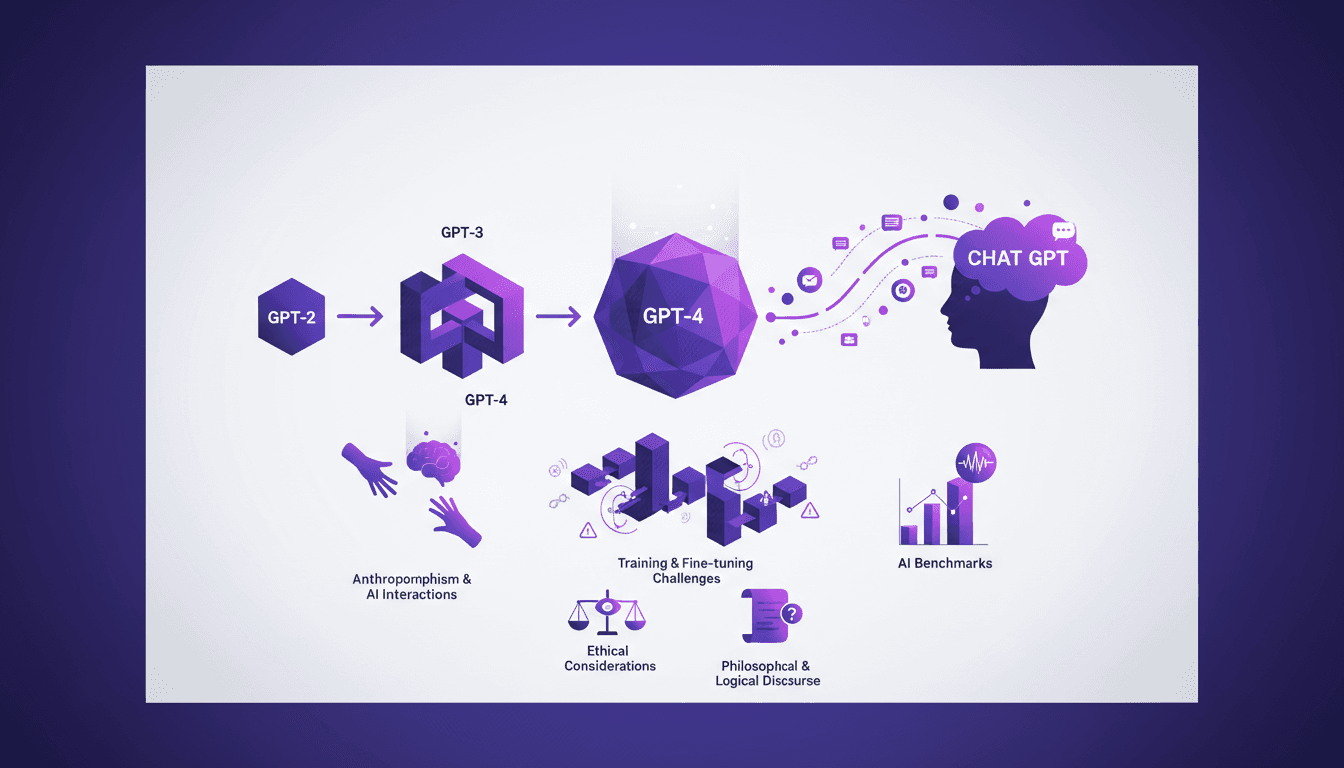


I remember when GPT-2 first hit the scene. It was a game changer, a real turning point, but not without its quirks. Fast forward a few years, and we're knee-deep in LLMs that are reshaping how we interact with technology. I've seen these models evolve from GPT-2 to GPT-4, marking significant milestones in AI capabilities. But make no mistake, with great power comes great complexity. As a practitioner, I've navigated the challenges of training and fine-tuning these models, confronted anthropomorphic perceptions, and tackled persistent misconceptions about AI. In this article, we'll dissect the impact of LLMs on text generation, philosophical debates, and ethical considerations. We'll also explore benchmarks and intelligence evaluations in a context where public perception swings between fascination and skepticism.

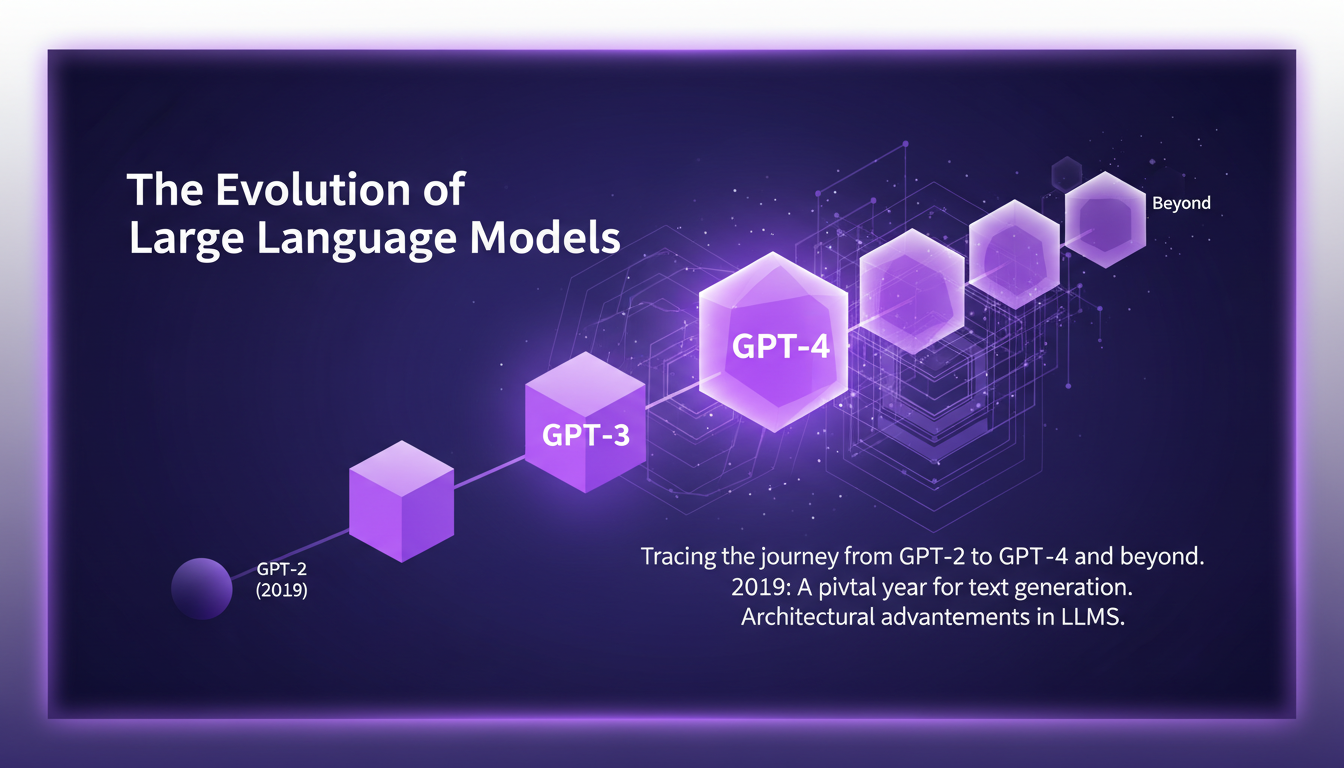

The Evolution of Large Language Models

When discussing large language models (LLMs), it's crucial to reflect on their key evolutionary milestones. In 2019, the landscape of text generation changed dramatically with the introduction of GPT-2. This model marked a turning point in the authenticity of text generation, producing responses that seemed surprisingly human. We then saw the arrival of GPT-3 in 2020, with its 175 billion parameters, which truly redefined the standards of artificial intelligence. This model could perform new tasks with few or no examples, a revolution I hadn't anticipated at the time.
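To make "few or no examples" concrete, here is a minimal sketch of how a zero-shot and a few-shot prompt differ. The function name `build_few_shot_prompt`, the `Input:`/`Output:` layout, and the translation examples are illustrative assumptions, not a format required by any particular model or API.

```python
def build_few_shot_prompt(task, examples, query):
    """Assemble a plain-text prompt: instruction, worked examples, then the new input.

    Hypothetical helper for illustration; the layout is an assumption.
    """
    lines = [task, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # the model is expected to continue from here
    return "\n".join(lines)


# Zero-shot: only the instruction and the query, no demonstrations.
zero_shot = build_few_shot_prompt("Translate English to French.", [], "cheese")

# Few-shot: two demonstrations precede the query.
few_shot = build_few_shot_prompt(
    "Translate English to French.",
    [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
    "cheese",
)
print(few_shot)
```

The model's weights are identical in both cases. Only the text placed in front of the query changes, which is why this style of use needs no retraining.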

GPT-4, released in 2023, introduced multimodal capabilities, processing both text and images, which is a real game changer. But watch out, with each advancement comes its own set of challenges. For instance, managing costs and resources remains a critical issue. In my own experience, it's always about finding the right balance between performance and economic feasibility.

ChatGPT and Its Impact on Public Perception

ChatGPT has propelled LLMs to the forefront of public attention. However, public perception is often skewed by anthropomorphism, the tendency to attribute human characteristics to machines. I've often heard people talk about ChatGPT as a "thinking robot," which is far from reality. Yes, these models can generate text impressively, but they lack consciousness and feeling. The real-world applications are numerous, from automated content generation to data summarization, but they come with limitations.

The media plays a crucial role in shaping these perceptions, often amplifying capabilities while ignoring limitations. It's essential to temper expectations with the reality of current capabilities. Personally, I've been burned by overestimating these capabilities in my initial implementation projects.

Challenges in Training and Fine-Tuning LLMs

Training models like GPT-3 presents colossal challenges. Firstly, model size: the larger a model, the more resources it requires. But that's not all, as data quality is paramount. I've learned the hard way that biased data can lead to unusable results. When fine-tuning for specific tasks, it's often a juggling act between precision and performance, while keeping an eye on costs. Sometimes, optimizing a smaller model for a specific task proves more efficient.
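As a rough, back-of-the-envelope illustration of why size drives resources, the sketch below estimates the memory needed just to hold the weights of a 175-billion-parameter model at different numeric precisions. The helper name `weight_memory_gb` is hypothetical, and the estimate deliberately ignores activations, optimizer state, and any serving overhead, all of which add to the real footprint.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory needed to store the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


GPT3_PARAMS = 175e9  # parameter count quoted earlier in this article

# Bytes per parameter for common numeric formats.
for label, nbytes in [("fp32", 4), ("fp16", 2), ("int8", 1)]:
    print(f"{label}: {weight_memory_gb(GPT3_PARAMS, nbytes):.0f} GB")
# fp32: 700 GB
# fp16: 350 GB
# int8: 175 GB
```

Even at reduced precision the weights do not fit on a single commodity GPU, which is one reason a smaller, task-specific model can be the more economical choice.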

Another recurring issue is the complexity of models. With billions of parameters, managing computing resources becomes a headache. I've often had to reevaluate my approaches to maximize efficiency without blowing the budget. It's a balancing act, but that's also what makes working with LLMs interesting.

Ethical Considerations and AI Benchmarks

In AI development and deployment, ethical considerations are unavoidable. LLMs, with their ability to autonomously generate text, pose questions of bias and potential manipulation. It's imperative to evaluate their "intelligence" objectively, using solid benchmarks and metrics. However, don't be fooled by high scores; a model can be performant while still being biased.
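One way to see how a high score can hide bias is to break a benchmark result down by subgroup. The sketch below uses made-up evaluation records (the groups "A" and "B" and all counts are invented for illustration) and a hypothetical helper, `accuracy_by_group`.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, is_correct) pairs.

    Returns (overall_accuracy, {group: accuracy}).
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        correct[group] += int(is_correct)
    overall = sum(correct.values()) / sum(totals.values())
    per_group = {g: correct[g] / totals[g] for g in totals}
    return overall, per_group


# Invented data: 90 examples from group A, only 10 from group B.
records = (
    [("A", True)] * 88 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)

overall, per_group = accuracy_by_group(records)
print(f"overall: {overall:.2f}")
for group, acc in per_group.items():
    print(f"group {group}: {acc:.2f}")
# overall: 0.92
# group A: 0.98
# group B: 0.40
```

The headline number looks strong because the majority group dominates the average, while the under-represented group is served poorly. Reporting per-group metrics alongside the aggregate is a simple check against that blind spot.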

I've personally adopted a cautious approach, integrating rigorous checks into all my deployments. It's crucial to balance innovation with ethical responsibility, especially when working with models that directly influence human interactions.

Philosophical Implications and Future Predictions

The philosophical implications of AI advancements are vast. Language models, although impressive, raise questions about the nature of intelligence and consciousness. In my discussions with colleagues, the question often arises: "What does this mean for our perception of humanity?"

Looking to the future, LLMs will continue to transform various sectors, from education to healthcare. But beware, these advancements can also bring disruptions. I've seen firsthand how automation, while useful, can also destabilize traditional jobs. It's imperative to prepare for the next wave of AI advancements, ensuring that technology truly serves our societal values.

Looking back at the evolution of LLMs, it's clear we've come a long way since 2019, when GPT-2 shifted expectations of what machine-generated text could look like. Between 2019 and 2023 we jumped from GPT-2 to GPT-4, and it's been a fast ride! But let's not get carried away: innovation must be balanced with ethical considerations. We face challenges in training and fine-tuning these models, and we must avoid anthropomorphizing them, because they're not human.

- Concrete Impact: GPT-4 arrived in March 2023, roughly four months after ChatGPT launched. That pace pushes us to stay updated.

- Perception vs. Reality: Despite advancements, these models have limits, especially on the ethical front.

- Challenges: Keep AI interactions rational and steer clear of anthropomorphism.

Looking forward, I'm optimistic but cautious. By staying informed and critical, we can build smarter and more responsible AI systems. Check out the video "Les LLMs sont-ils intelligents? Retour sur 8 ans de progrès" for a deeper dive. Catch you there?

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

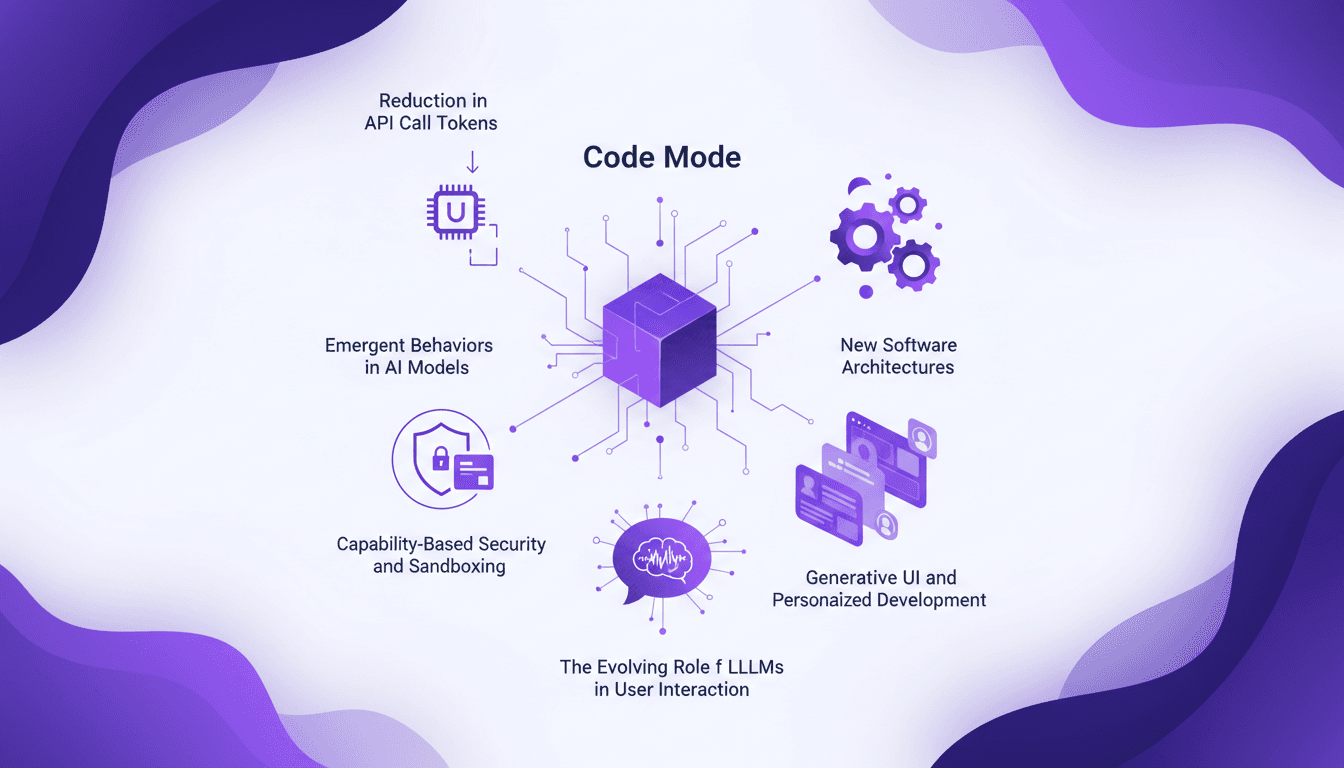

Code Mode: Slash API Calls Efficiently

I've been in the trenches with API calls, and let me tell you, Code Mode is a game changer. First, I was skeptical, but then I saw the 99.9% reduction in token usage. Let's dive into how this works and why it matters in today's tech landscape. Code Mode isn't just about slashing API calls; it transforms how we engage with AI models, capability-based security, and even generative UIs. It's not just hype—it's the next step for more efficient and secure software architecture.

Open Source AI Beats ChatGPT: My Workflow

I've been knee-deep in AI for years, and let me tell you, open source models are shaking things up like never before. When I first saw GLM 5.1 surpassing big names like ChatGPT, I knew we were onto something game-changing. But it's not just about the scores—it's about what we can do with these tools, right in our hands. With scores that give established giants a run for their money, open source is redefining our approach to AI development and deployment. We're diving into how this plays out—from Huawei chips in China to globally competing video models. This transformation is far more than just a tech update—it's a seismic shift in the AI landscape.

Psychological Effects of Chatbots: MIT Study

I was skeptical at first, but after diving into the MIT study on psychophantic chatbots, reality hit hard: 300 documented cases of AI-induced psychosis. People are losing themselves in AI interactions, and it’s not just hype. I connect the dots between psychological effects, legal and ethical implications, and strategies to navigate this complex space. Understanding AI limitations and biases isn't optional—it's crucial. It’s time we look at how to mitigate these risks and use these tools effectively.

Tesla's Shift to Robots: Why and How

I was configuring a new AI module for a client when the news hit: Tesla is moving away from their iconic Model S and X to focus on humanoid robotics. It felt like a seismic shift, one I had to explore. So, I dove into the specifics of Tesla's Optimus Generation 3 and the AI5 chip, uncovering a strategy that could redefine entire industries. Tesla isn't just about cars anymore. With plans to produce a million robots annually, they're making a bold bet on Artificial General Intelligence (AGI) and self-replicating machines. Let's break down what this means for the future.

Debunking AI Closers: Truths and Limits

I've been in the trenches with AI tech for years, and if there's one thing I've learned, it's that not everything that glitters is gold—especially when it comes to AI closers. Let's cut through the noise and get real about what's happening in the market. There's a lot of buzz around AI closers, but are they really the game-changers they're hyped up to be? Let's dig into the claims, the reality, and where the tech actually stands. Between current technological limitations, the difference between appointment setting and closing a sale, and claims of proprietary solutions, it's time to debunk all this. And if the big companies aren't jumping in, there's probably a good reason for that.