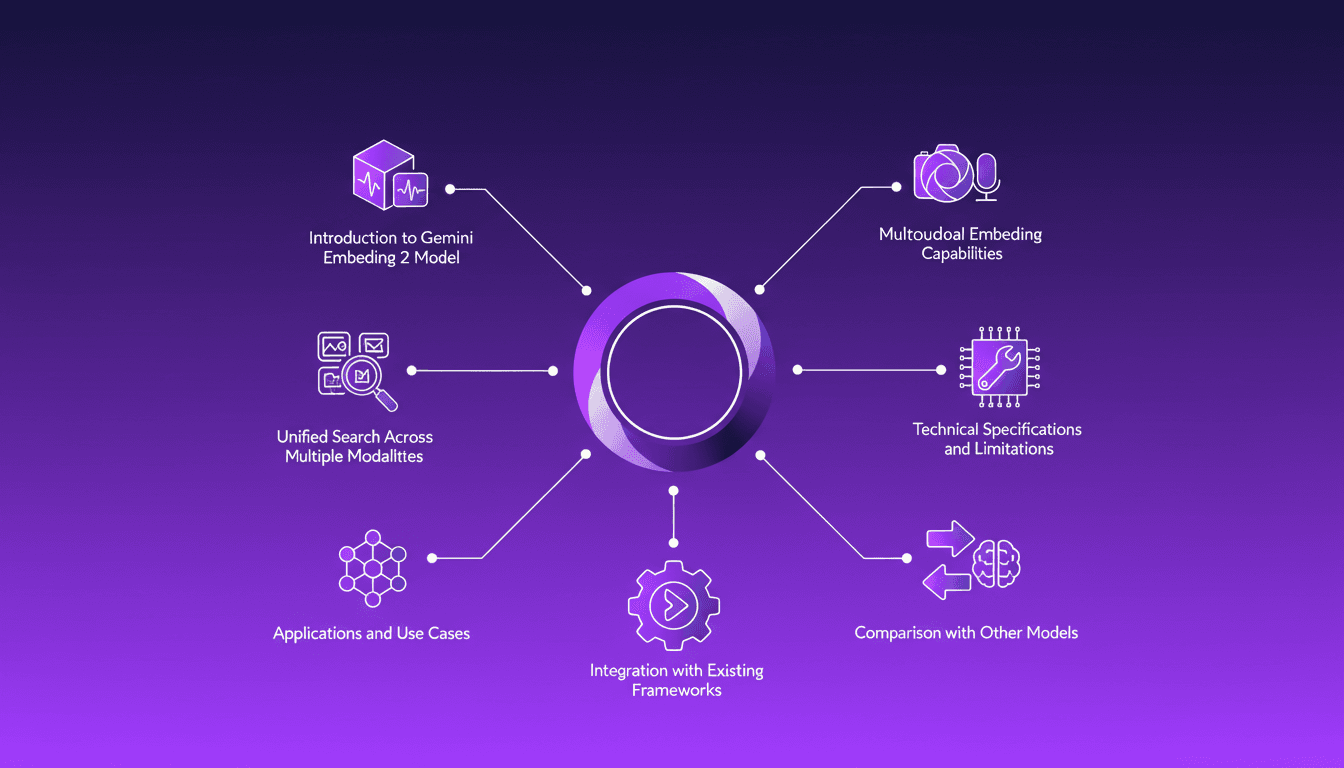

Integrating Gemini Embedding 2: A Practical Guide

I dove into Gemini Embedding 2 to streamline how I handle audio, text, images, and videos. The promise is a unified approach to multimodal embedding, and I put it to the test myself. It delivers, but there are critical nuances you need to understand to get the most out of it. Whether you want to unify search across multiple media types or integrate the model into your existing frameworks, this practical guide shows you how. Some technical limitations might catch you off guard, but with the right orchestration the results speak for themselves. Here is how I integrated it into my workflows for direct, measurable impact.

When I first came across Gemini Embedding 2, I saw an opportunity to change how I handle audio, text, images, and videos: a single model that handles multiple data types. It's not all smooth sailing, though. I started by connecting my various content types (audio, text, images), then explored the model's unified search capabilities. Along the way I hit technical limitations that become a real headache if you don't anticipate them. Let me walk you through how I integrated Gemini Embedding 2 into my existing frameworks and the tangible impact it had on my workflow.

Understanding Multimodal Embedding with Gemini

Gemini's multimodal embedding impressed me from the start. It maps audio, text, images, and videos into a unified vector space, so you can measure semantic similarity across different content types. The model relies on Matryoshka Representation Learning, which packs the most important information into the leading dimensions so that a vector can be shortened without being recomputed. Watch out for token limits, though, as they can impact performance. Personally, I found the model's ability to handle diverse data types the biggest win, but keep an eye on token usage.
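To make the Matryoshka idea concrete, here is a minimal sketch of shortening an embedding on the client side. It assumes you already have a full-size vector as a plain list of floats. The function name `truncate_and_normalize` and the toy 8-value vector are illustrative only and not part of any Gemini API.

```python
import math


def truncate_and_normalize(vector, dims):
    """Keep the first `dims` components and rescale the result to unit length."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and len(vector)")
    head = vector[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in head]


# Toy 8-dimensional vector standing in for a full-size embedding (hypothetical data).
full = [0.5, -0.5, 0.5, 0.5, 0.1, -0.1, 0.05, 0.0]
short = truncate_and_normalize(full, 4)
print(short)  # [0.5, -0.5, 0.5, 0.5]
```

Rescaling after truncation keeps cosine and dot-product comparisons consistent between shortened vectors.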

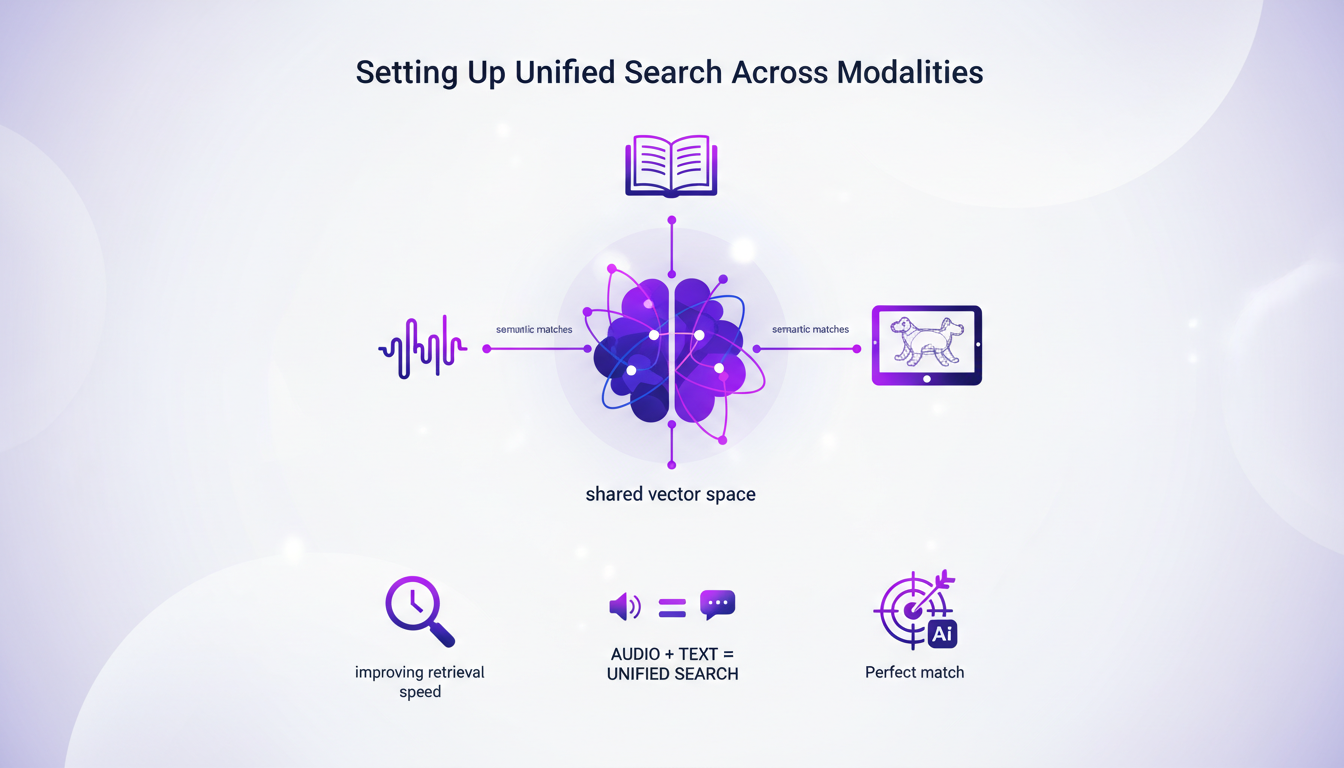

Setting Up Unified Search Across Modalities

Setting up unified search is where the shared vector space pays off. I configured searches to find semantic matches between audio and text, which significantly improved data retrieval speed. In one test the model returned a similarity score of 1 for two near-identical sketch descriptions, which shows its precision. However, the full representation runs to more than 3,000 dimensions, and vectors of that size can affect search efficiency. The key is balancing search breadth and depth for optimal performance.

- Balance search breadth and depth

- Optimize dimension usage

- Enhance precision of semantic matches
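The search step itself can be sketched in a few lines, assuming every item (whatever its modality) has already been embedded into the same vector space. The file names, the 3-value vectors, and the `search` helper below are hypothetical stand-ins. A production setup would more likely use a vector database than a Python list.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_vector, index, top_k=3):
    """Rank (item_id, modality, vector) entries by similarity to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), item_id, modality)
        for item_id, modality, vector in index
    ]
    scored.sort(key=lambda row: row[0], reverse=True)
    return scored[:top_k]


# Hypothetical index mixing three modalities in one vector space.
index = [
    ("backpack_sketch.png", "image", [0.9, 0.1, 0.0]),
    ("podcast_intro.mp3", "audio", [0.1, 0.9, 0.1]),
    ("hiking_notes.txt", "text", [0.7, 0.2, 0.1]),
]
query = [1.0, 0.0, 0.0]  # stand-in for an embedded text query

for score, item_id, modality in search(query, index, top_k=2):
    print(f"{score:.3f} {modality:5s} {item_id}")
```

Because all items live in one space, the same ranking function serves text-to-image, text-to-audio, or any other pairing.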

Technical Specs and Their Implications

With over 3,000 dimensions for full representations, Gemini Embedding 2 excels at handling multimodal data. For example, it can process up to six images simultaneously for embedding. Understanding the five different models and indexes is crucial for targeted applications. I encountered five distinct issues that required tailored model configurations. Trade-offs between model complexity and processing speed are inevitable.

- Handle up to six images for embedding

- Use five models and indexes for specific applications

- Manage trade-offs between complexity and speed
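If a single request accepts at most six images, a larger collection has to be split into groups before embedding. The sketch below shows one way to do that. Both `embed_all` and `fake_embed_batch` are hypothetical: the latter is a stand-in for whatever client call you actually use, and it returns dummy vectors so the example runs offline.

```python
def batches(items, size=6):
    """Yield consecutive groups of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def embed_all(paths, embed_batch, max_per_request=6):
    """Embed every path, calling `embed_batch` once per group."""
    vectors = []
    for group in batches(paths, max_per_request):
        vectors.extend(embed_batch(group))
    return vectors


def fake_embed_batch(group):
    """Stand-in for a real embedding call: one dummy vector per input."""
    return [[float(len(path))] for path in group]


paths = [f"image_{i}.png" for i in range(14)]
print([len(group) for group in batches(paths)])  # [6, 6, 2]
print(len(embed_all(paths, fake_embed_batch)))   # 14
```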

Applications, Use Cases, and Integration

Gemini's applications range from content recommendation to cross-modal retrieval systems. I integrated Gemini with existing frameworks to enhance functionality without overhauling systems. Use cases include improving media asset management and enhancing search capabilities. Integration requires careful orchestration to maintain system efficiency. Consider the cost-benefit ratio of integration based on specific business needs.

- Content recommendation

- Cross-modal retrieval systems

- Improve media asset management
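One way to keep integration costs in check is to avoid embedding the same content twice. The following is a minimal sketch of a caching wrapper, assuming your existing code can call the embedding model through a single function. `CachedEmbedder` and the lambda used in the example are hypothetical, not part of any framework.

```python
import hashlib


class CachedEmbedder:
    """Wrap an embedding function and reuse results for identical content."""

    def __init__(self, embed_fn):
        self._embed_fn = embed_fn  # hypothetical callable: bytes -> list of floats
        self._cache = {}
        self.calls = 0  # number of times the wrapped function actually ran

    def embed(self, content: bytes):
        key = hashlib.sha256(content).hexdigest()
        if key not in self._cache:
            self._cache[key] = self._embed_fn(content)
            self.calls += 1
        return self._cache[key]


# Stand-in embedding function so the example runs without network access.
embedder = CachedEmbedder(lambda content: [float(len(content))])
embedder.embed(b"a sketch of a backpack")
embedder.embed(b"a sketch of a backpack")  # served from the cache
print(embedder.calls)  # 1
```

Keying on a hash of the raw bytes means the same wrapper works for text, images, audio, or video files.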

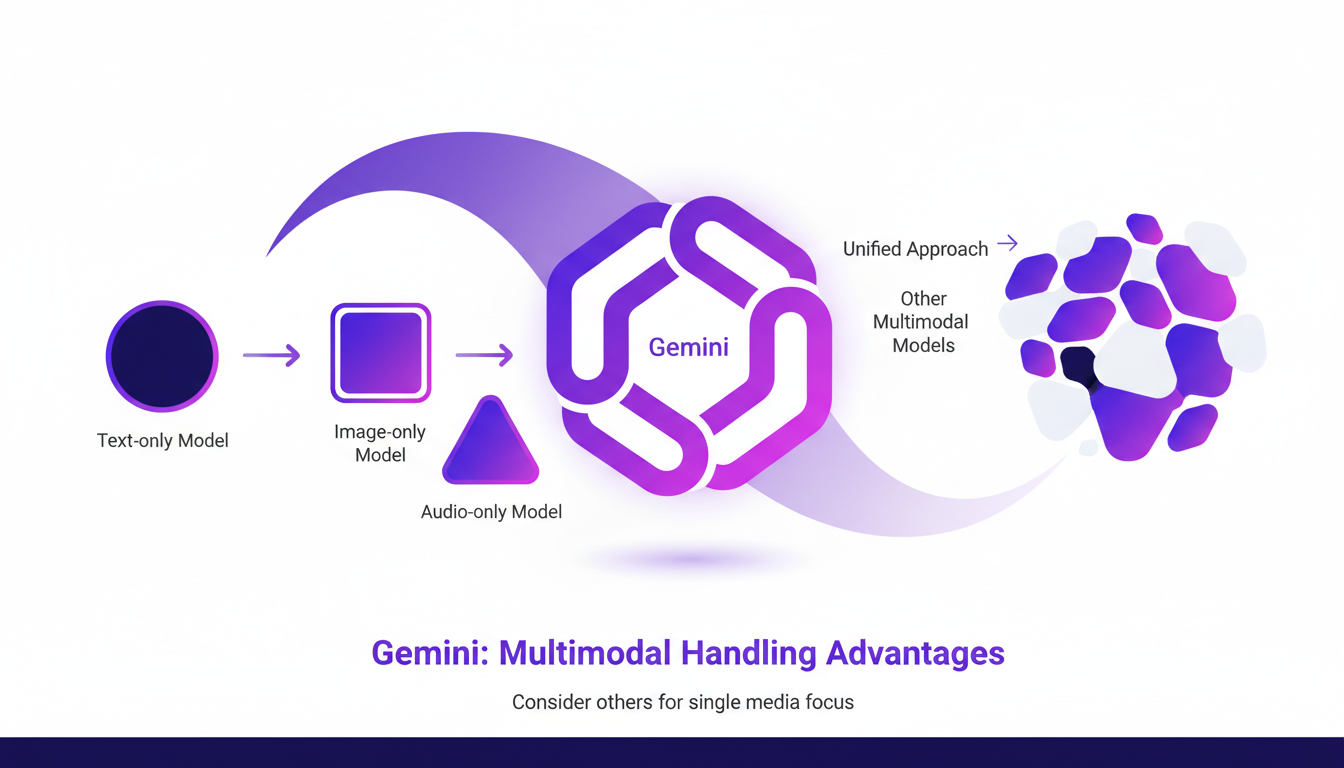

Comparing Gemini with Other Models

Gemini stands out for its unified approach, but it's not the only player in the field. I compared it with other models and found distinct advantages in multimodal handling. Consider other models if your focus is solely on one type of media. Gemini's comprehensive approach can save time and resources in multimodal contexts. Watch out for overhyped claims; not every model fits every need.

- Distinct advantages in multimodal handling

- Save time and resources

- Watch out for overhyped claims
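A simple way to test claims rather than trust them is to score each model on your own queries. The sketch below computes recall at k, assuming you have, for each query, the ranked result ids a model returned and the ids you consider relevant. The item ids and the two result sets are invented for illustration and are not measurements of any real model.

```python
def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of relevant items that appear in the top k results."""
    if k < 1:
        raise ValueError("k must be at least 1")
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("each query needs at least one relevant item")
    return len(relevant & set(ranked_ids[:k])) / len(relevant)


def mean_recall_at_k(runs, k):
    """Average recall at k over (ranked_ids, relevant_ids) pairs."""
    if not runs:
        raise ValueError("runs must not be empty")
    return sum(recall_at_k(ranked, relevant, k) for ranked, relevant in runs) / len(runs)


# Hypothetical rankings from two models on the same two queries.
model_a = [(["img1", "txt4", "aud2"], ["img1"]), (["txt9", "aud3", "img7"], ["img7"])]
model_b = [(["txt4", "img1", "aud2"], ["img1"]), (["img7", "aud3", "txt9"], ["img7"])]

print(mean_recall_at_k(model_a, k=2))  # 0.5
print(mean_recall_at_k(model_b, k=2))  # 1.0
```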

Gemini Embedding 2 is a strong option for handling diverse media types in a unified way. I've integrated it to boost efficiency, and it has proven a robust solution. But you need to understand its limitations to get the most out of it.

- First, I passed in two pieces of content to get separate embeddings, and it worked smoothly.

- Then, I got a perfect score of 1 when matching the text 'a sketch of a backpack' against 'a sketch backpack'.

- Finally, I embedded six images in one go, and the process was seamless.

Looking ahead, I'm really excited about how this tech can transform our media handling processes, but always keep an eye on its technical limits.

Ready to transform your media processes? Dive into Gemini Embedding 2 and see the difference. For a deeper understanding, check out the original video.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

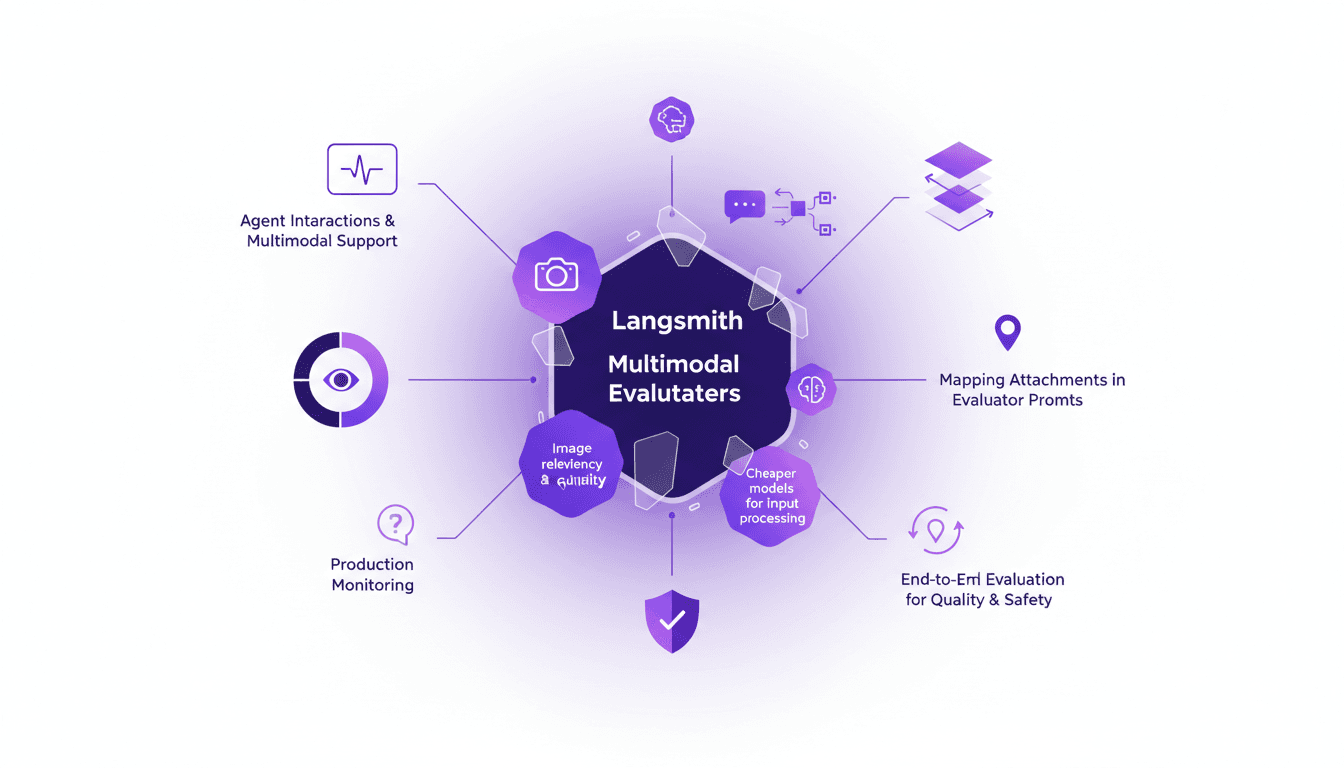

LangSmith Multimodal Evaluators: Practical Integration

I've been tinkering with LangSmith's latest feature—multimodal evaluators—and it's a game changer for agent interactions. First, I integrated the B64 format to handle images, then evaluated the relevancy and quality of interactions. But watch out for cheaper models, they can sometimes skew results. The integration is a real challenge, but once mastered, it enables smooth production monitoring and end-to-end evaluation of interactions to ensure quality and safety.

Mastering Gemini 3.1: Flash Lite in 14 Minutes

I dove headfirst into Gemini 3.1 Flash Lite, eager to see if it could truly revolutionize my workflow. Spoiler: It did, but not without a few hiccups along the way. Picture a model that can grasp multimodal data and optimize programmatic SEO in a flash. I tested five different use cases, and for a translation task, it took just one second. But watch out, setting it up with Google's tools isn’t exactly a walk in the park. I'll walk you through how I navigated it all, with candid comparisons to competitors and an eye on cost efficiency. If you're ready to supercharge your SEO, join me on this journey.

Boosting Web Search with GPT-5.3: Practical Guide

I've been tweaking search results for years, but integrating GPT-5.3 changed everything. With the latest enhancements, understanding user queries has become more nuanced. In this article, I walk you through how to leverage these advancements for better web search results. We'll dive into the importance of subtext, the enhancements in GPT-5.3, and how they make responses more natural and conversational. You'll see practical cases like planning a biking trip or understanding baseball rule changes. It's a powerful tool, but watch out for context limits—beyond 100K tokens, things get tricky. I'll share how I orchestrated these elements for direct user experience impact.

Securing AI: Integrating Prompt Fu at OpenAI

I remember the first time I encountered a security breach in an AI system. It was a wake-up call that security wasn't just a checkbox but a critical component of AI deployment. OpenAI's acquisition of Prompt Fu feels like a game changer. By integrating Prompt Fu into their Frontier platform, OpenAI is set to enhance security and redefine how we protect AI. With over 125,000 developers using Prompt Fu and a quarter of the Fortune 500 companies trusting it, this strategic move promises to transform AI system security, addressing concerns over open-source project maintenance and prompt injection vulnerabilities.

GPT 5.4: Performance, Cost, and Controversy

I just integrated GPT 5.4 into my workflow, and let me tell you, it's a game changer—but not without its quirks. OpenAI has just released GPT 5.4, and between boosted efficiency and cost management, it's a complex terrain of trade-offs. Priced at $15 per million tokens, it looks tempting, but watch out for the 295% surge in uninstalls on February 28th. Scoring 83% on the GDP val benchmark, surpassing Opus 4.6, GPT 5.4 promises a lot, but beware of the pitfalls. Let's dive into the technical details and potential professional impacts this new version might have.