GPT 5.5 Instant: Revolution and Comparison

I've been diving deep into OpenAI's latest release, the GPT 5.5 Instant model, and this isn't just another upgrade: it's a genuine game changer in the AI landscape. The promises are big, with stronger multimodal capabilities, an AI detection accuracy of 98%, and clear performance gains. But how does it really stack up against its predecessors? First, I compared its multimodal capabilities with previous versions, and the difference is stark. Then I tested its efficiency and performance, and the results are impressive. But watch out, there are limits, especially regarding access via chatgpt.com. I'll also touch on the API and how it might redefine future use cases, and to top it all off, I'll pit the model against Claude Haiku 4.5. This isn't just theoretical: I'm sharing what I've tested firsthand and how I had to orchestrate my workflows to get the most out of this new version.

Unpacking GPT 5.5 Instant: What's New

I kick off each day by diving into the latest tech advancements, and GPT 5.5 Instant caught my eye right away. This model promises a 7% improvement in multimodal reasoning over previous versions. Don't be fooled: 7% might seem modest, but in the AI world that's a significant leap forward. One of the most striking features is its 98% AI detection accuracy, which makes practical use cases far more reliable.

In my initial interactions, I noticed that responses were not only faster but also more accurate. It was as if the model anticipated my questions before I even asked them. I tested this new release on chatgpt.com, where it's available for free to all users, and the difference from the previous model is stark. As a builder, every millisecond counts, and this speed is a valuable time-saver.

Comparing GPT 5.5 Instant to Previous Models

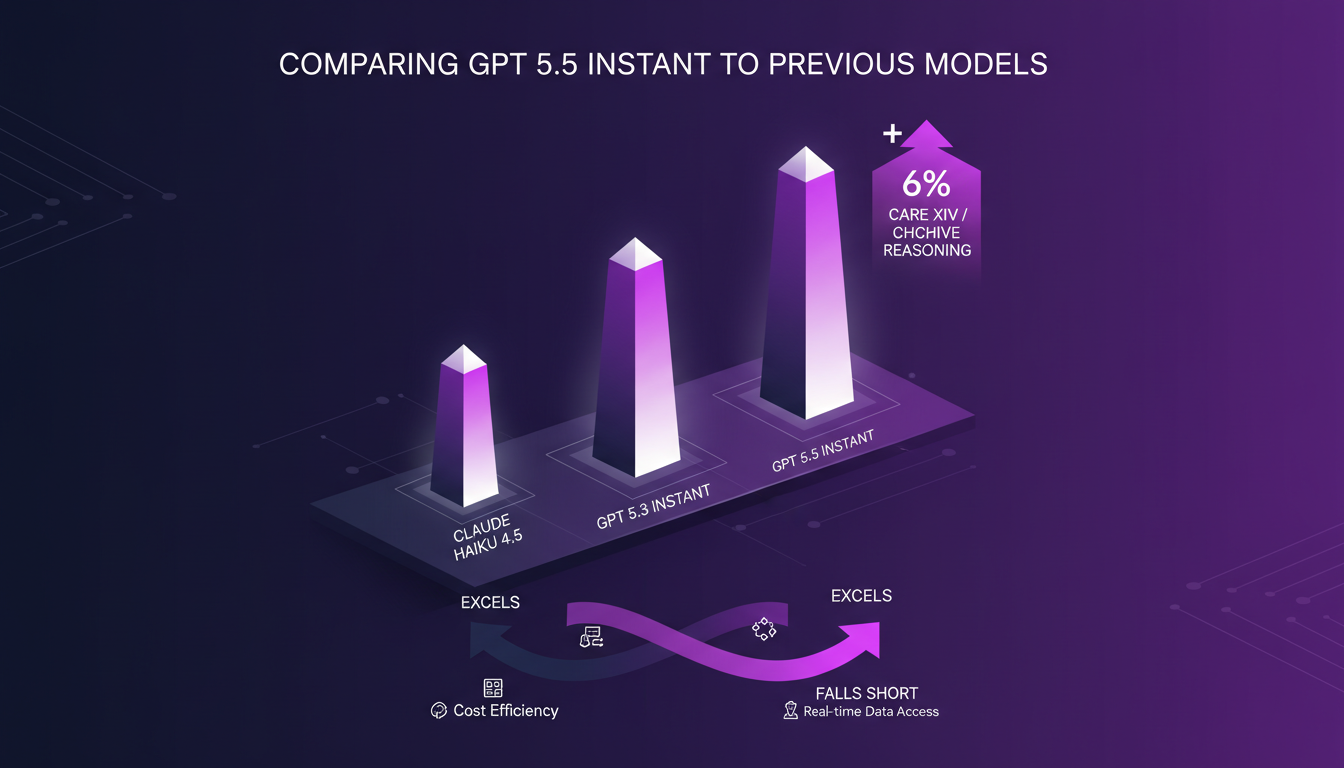

Now for a direct comparison. I tested GPT 5.5 Instant against GPT 5.3 Instant and Claude Haiku 4.5. The first thing I noticed was a 6% improvement on CharXiv reasoning, a chart-understanding benchmark. It's in these tasks that GPT 5.5 truly shines, though some trade-offs remain.
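A "6% improvement" can mean two different things: 6 percentage points on the benchmark score, or a 6% relative gain over the old score. The article doesn't say which, so here is a minimal sketch of both calculations. The scores below are made-up placeholders, not published results for any model.

```python
def absolute_gain(old_score: float, new_score: float) -> float:
    """Gain in percentage points between two benchmark scores given in percent."""
    return new_score - old_score


def relative_gain(old_score: float, new_score: float) -> float:
    """Gain expressed as a percentage of the old score."""
    if old_score == 0:
        raise ValueError("old_score must be non-zero for a relative gain")
    return (new_score - old_score) / old_score * 100


# HYPOTHETICAL scores for illustration only.
old, new = 60.0, 66.0
print(f"{absolute_gain(old, new):.1f} points")     # 6.0 points
print(f"{relative_gain(old, new):.1f}% relative")  # 10.0% relative
```

The same jump reads as 6 points or 10% depending on the convention, so it's worth checking which one a benchmark table uses before comparing models.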

So where does GPT 5.5 really excel? Speed and visual intelligence are its strong points. But watch out: when tasks become too complex, the model can still stumble. I also found that in some cases, Claude Haiku 4.5 had a slight edge in token usage cost. In practice, this means that if your application requires high precision and you can absorb the extra cost, GPT 5.5 Instant is your best bet.
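Token cost differences only matter once you multiply them by your real traffic. A minimal sketch of that arithmetic, assuming per-million-token pricing for input and output; the prices and the names `model_a` and `model_b` are hypothetical placeholders, not the actual rates for GPT 5.5 Instant or Claude Haiku 4.5.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Per-million-token prices in USD (placeholder values below)."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request with the given token counts."""
    return (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000


def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int,
                 requests_per_month: int) -> float:
    """Cost in USD for a month of identical requests."""
    return request_cost(pricing, input_tokens, output_tokens) * requests_per_month


# HYPOTHETICAL prices, not published rates for any real model.
model_a = Pricing(input_per_million=1.00, output_per_million=4.00)
model_b = Pricing(input_per_million=0.80, output_per_million=4.00)

for name, pricing in [("model_a", model_a), ("model_b", model_b)]:
    print(name, round(monthly_cost(pricing, 2_000, 500, 100_000), 2))
# model_a 400.0
# model_b 360.0
```

Plug in the published prices and your own token counts; a "slight edge" per request can turn into a noticeable line item at scale.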

Multimodal Capabilities: The Real Deal

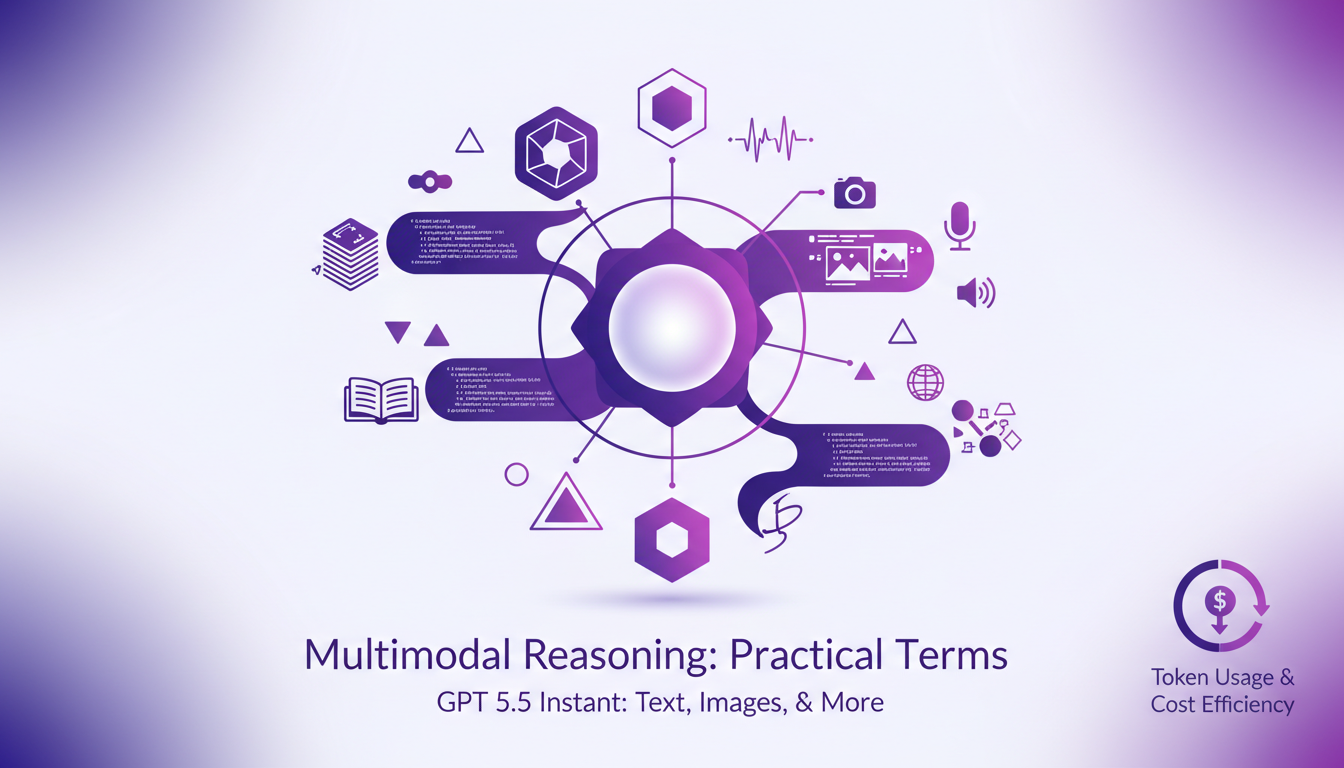

Let's talk about multimodal capabilities, an area where GPT 5.5 Instant truly makes a difference. The ability to handle both text and images is a real game-changer. Imagine a tool that can not only read your words but also understand your images. That's exactly what I experienced when testing this model.
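To make "text and images in one request" concrete, here is a minimal sketch that builds a request body in the content-parts shape used by OpenAI's Chat Completions API (a `text` part plus an `image_url` part carrying a base64 data URL). It only builds the payload and sends nothing. The model id `gpt-5.5-instant` is a hypothetical placeholder, since the API isn't available yet and its final parameters may differ.

```python
import base64


def image_to_data_url(image_bytes: bytes, mime_type: str = "image/png") -> str:
    """Encode raw image bytes as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_multimodal_request(model: str, prompt: str, image_bytes: bytes) -> dict:
    """Build a Chat Completions-style body pairing one text part with one image part."""
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url",
                     "image_url": {"url": image_to_data_url(image_bytes)}},
                ],
            }
        ],
    }


# "gpt-5.5-instant" is a HYPOTHETICAL model id used for illustration.
body = build_multimodal_request("gpt-5.5-instant", "What does this chart show?", b"\x89PNG\r\n")
print([part["type"] for part in body["messages"][0]["content"]])  # ['text', 'image_url']
```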

But there's a catch: token usage. While the model is more efficient, complex tasks can consume more tokens, increasing costs. I learned the hard way that exceeding context limits can quickly lead to suboptimal performance. So, use it wisely, especially for heavy multimodal tasks.
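One way to stay under context limits is to trim conversation history to a token budget before each call, keeping the most recent messages. A minimal sketch, assuming a crude estimate of about four characters per token for English text; a real tokenizer will give different counts, and the budget you pass in is your own choice, not a documented limit for this model.

```python
def estimate_tokens(text: str) -> int:
    """Rough estimate: ~4 characters per token (an assumption, not a real tokenizer)."""
    return max(1, len(text) // 4)


def trim_history(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose estimated total fits within budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # newest first
        cost = estimate_tokens(message)
        if used + cost > budget:
            break  # stop at the first message that would overflow the budget
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 400, "b" * 400, "c" * 400]  # ~100 estimated tokens each
print(len(trim_history(history, budget=250)))  # 2
```

Dropping the oldest turns first keeps the context closest to the current question, which is usually what matters most for the next answer.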

Performance and User Experience

In terms of performance, GPT 5.5 Instant doesn't disappoint. I've observed significant efficiency gains, with faster processing and reduced latency. Testing on chatgpt.com, the improvement in user experience is evident: responses are not only faster but also more relevant.

Benchmark tests confirm these impressions. But while the numbers are impressive, I remain cautious about accessibility: for some users, the gains in speed and accuracy may not justify the higher token costs. For those seeking a balance between performance and accessibility, though, GPT 5.5 Instant remains a solid option.
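If you want to check latency claims against your own workload, a small timing harness is enough to start. A minimal sketch: it times any zero-argument callable with `time.perf_counter` and reports median and max latency. `fake_model_call` is a hypothetical stand-in for a real request, so swap in your own client code.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[], object], runs: int = 5) -> dict:
    """Time `call` several times and report median and max latency in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be >= 1")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    return {"median_ms": statistics.median(samples), "max_ms": max(samples), "runs": runs}


def fake_model_call() -> str:
    # HYPOTHETICAL stand-in: sleeps 10 ms instead of calling a model.
    time.sleep(0.01)
    return "ok"


print(measure_latency(fake_model_call, runs=3))
```

The median is less sensitive than the mean to an occasional slow request, which is why the sketch reports it alongside the worst case.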

API Availability and Future Use Cases

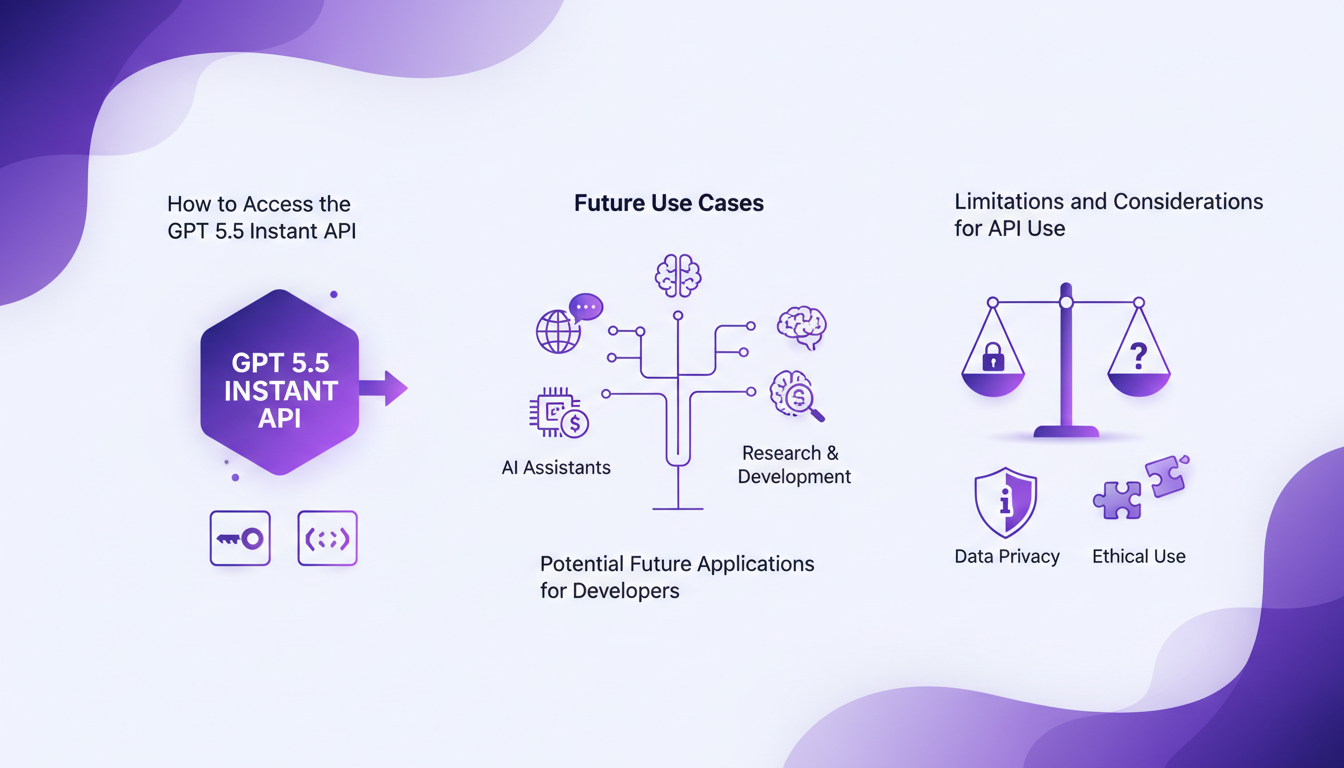

API access to GPT 5.5 Instant will be an exciting opportunity for developers. The API isn't available yet, but the prospects for future applications are vast, whether for integrations into existing applications or for new, innovative projects.

But be wary of the limits: using the API will require careful token management to avoid runaway costs. Compared with current API offerings, GPT 5.5 Instant looks set to be a robust option for those who want to leverage the latest AI advancements.
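A simple client-side guard is one way to keep API spending in check: reserve the estimated cost of each call against a spend cap and refuse calls that would exceed it. A minimal sketch; the class name `TokenBudget`, the cap, and the prices are hypothetical, and since output length isn't known in advance, you'd reserve against the maximum output you allow per call.

```python
class TokenBudget:
    """Client-side guard that refuses calls once a spend cap would be exceeded."""

    def __init__(self, cap_usd: float, input_per_million: float, output_per_million: float):
        self.cap_usd = cap_usd
        self.input_per_million = input_per_million
        self.output_per_million = output_per_million
        self.spent_usd = 0.0

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated USD cost of one call."""
        return (input_tokens * self.input_per_million
                + output_tokens * self.output_per_million) / 1_000_000

    def charge(self, input_tokens: int, max_output_tokens: int) -> bool:
        """Reserve the call's worst-case cost; return False if it would exceed the cap."""
        cost = self.cost(input_tokens, max_output_tokens)
        if self.spent_usd + cost > self.cap_usd:
            return False
        self.spent_usd += cost
        return True


# HYPOTHETICAL cap and prices: each 1,000-in / 1,000-out call costs $0.005 here.
budget = TokenBudget(cap_usd=0.012, input_per_million=1.0, output_per_million=4.0)
print([budget.charge(1_000, 1_000) for _ in range(3)])  # [True, True, False]
```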

GPT 5.5 Instant is a real game changer, but like any tool, it has its limits. Here are the key takeaways:

- An AI detection accuracy of 98%, which is massive for refining our analyses.

- The GPT 5.5 Instant model, with its multimodal capabilities, simplifies tasks that once required multiple distinct tools.

- In terms of efficiency, the improvement is clear, but watch out for performance limits in complex contexts.

Looking ahead, I'm excited to see how these new capabilities will transform our daily workflows. It's a powerful tool, but mastering its subtleties is crucial to avoid pitfalls.

Ready to dive into GPT 5.5 Instant? Head over to chatgpt.com to test its capabilities today. And for a deeper understanding, watch the original video on YouTube. It's like chatting with a colleague who has already explored the field.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

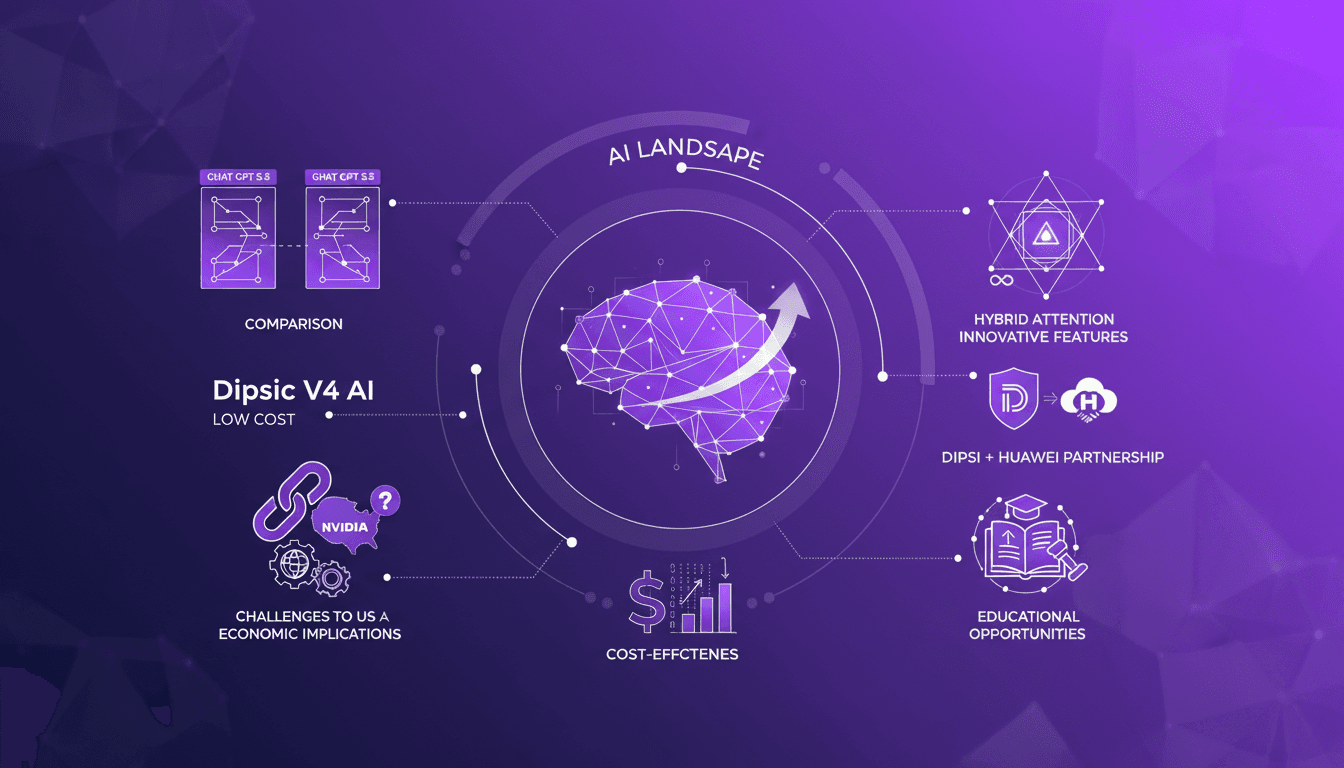

Dipsic V4: AI Revolution, Challenges OpenAI

I've been in the AI trenches for years, watching models evolve. But when I first got my hands on Dipsic V4, I knew we were onto something game-changing. With 1600 billion parameters, this model isn't just another tool in the landscape; it’s a potential disruptor in a space dominated by giants like OpenAI's GPT 5.5. Let me show you why this model is causing such a stir and how it’s rewriting the rules. We’ll dive into its innovative features, aggressive pricing strategy, and what it means for players like Nvidia and OpenAI. Watch out, this could be a game changer.

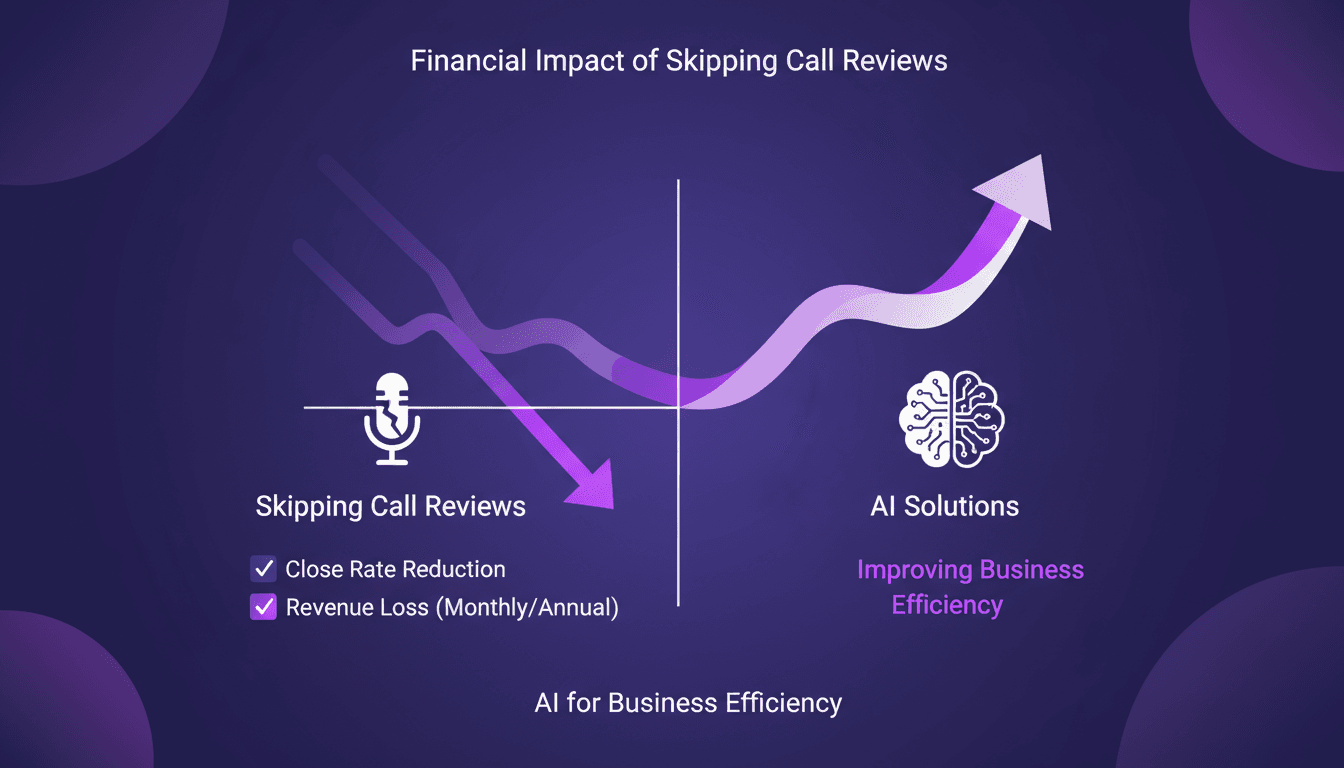

Stop Losing $360K: AI for Call Reviews

I was bleeding $360,000 annually without even realizing it. The culprit? Skipping call reviews. In the fast-paced sales world, these small oversights can cost you big. I turned things around using AI to analyze my calls and boost my close rates. We're talking practical solutions here, not theory. First, I identified gaps in my current processes, then integrated AI to fill them. The result? A direct increase in efficiency and revenue protection. Beware not to underestimate the potential impact of these tools. Sometimes, a simple tweak can be a game changer.

Integrate Codex and Google Calendar for Efficient Meetings

Ever been caught scrambling before a sales meeting, trying to gather customer details and polish your presentation at the last minute? Yeah, I've been there, and it's not a good look. But now, I streamline my prep with Codex, integrating it seamlessly with Google Calendar and Salesforce to get all the info I need in seconds. In this guide, I'll show you my workflow and the tools I rely on daily. Heads up: I'll share the mistakes that tripped me up, so you don't have to go through the same hassle.

Evolving Role of Software Engineers: Key Insights

I've been in the trenches of software engineering long enough to see our roles evolve. We started as code writers, became system architects, and now, we're orchestrators of complex ecosystems. The rise of advanced language models has reshaped our daily workflows. When I configure an architecture, I'm not just coding anymore; I'm designing entire systems. These models amplify our expertise—they don't replace it. But remember, a good engineer remains the author of their applications, even with a powerful tool at hand. Curious about how these shifts redefine our profession? Let's dive into this fascinating world.

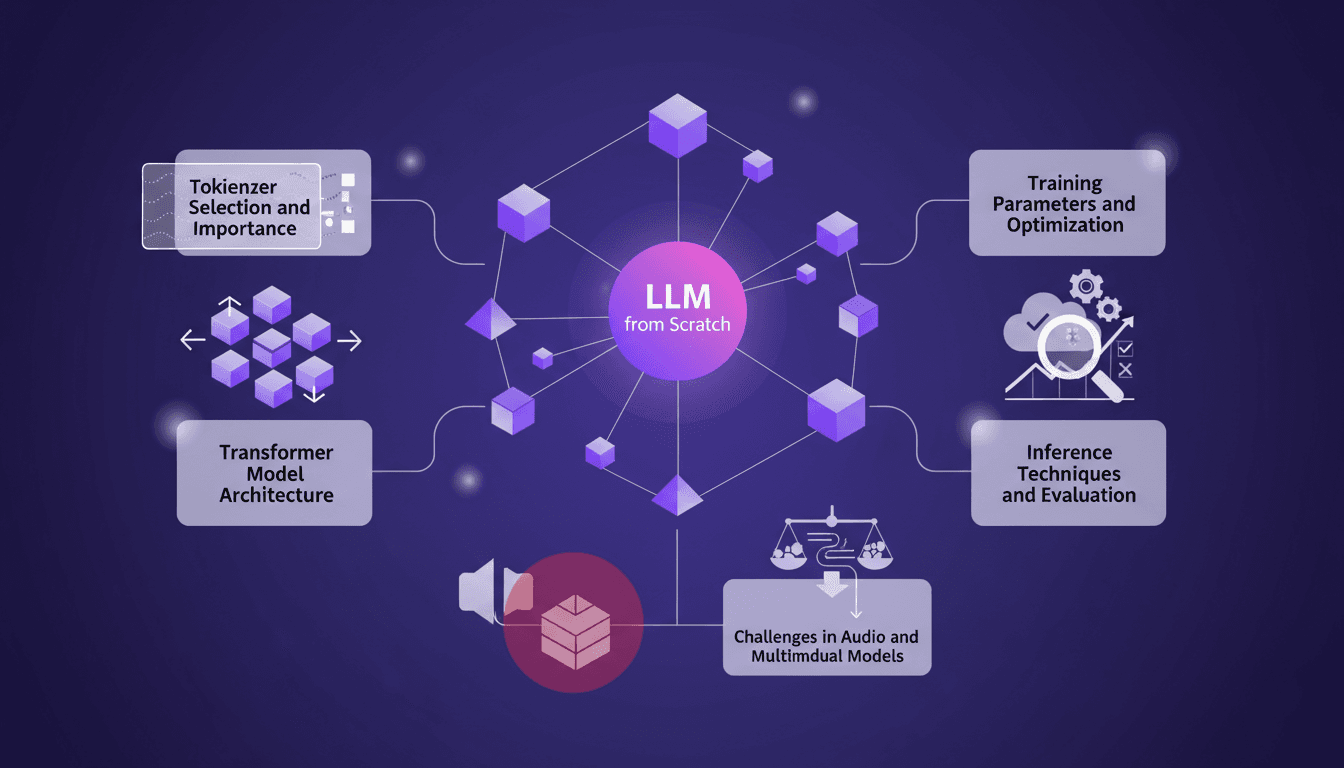

Training an LLM from Scratch: Practical Guide

I remember the first time I decided to train a large language model from scratch. It felt like climbing a mountain with no map. But once you get the hang of it, it's like orchestrating a symphony. In this guide, I'll take you through my journey of building an LLM locally, inspired by Andre Karpathy's Nano GPT. We'll dive into tokenizer selection, Transformer model architecture, training parameters, and much more. I'll share the mistakes I made, the solutions I found, and how I optimized for efficiency. This is a practical guide for anyone wanting to truly understand each step of the process without wasting time on unnecessary details.