GPT 5.4 Mini: Performance and Cost Compared

I've been digging into the new GPT 5.4 Mini and Nano models, and they look like real game changers. As always, though, there are trade-offs to navigate. With AI models evolving rapidly, the GPT 5.4 series offers intriguing options for developers looking to balance performance and cost. First, I hooked up the Mini to see how it stacks up. At 54.4% on SWE-bench Pro, it holds its own, especially given the reduced cost. Then I gave the Nano a spin; it scores 52.5% and suits apps where every millisecond counts, though you should still watch latency in practice. In this tutorial, we'll explore how these models fit into daily workflows and, most importantly, where they stand out from the competition. We'll cover access and usage options for the Mini, specific use cases, and cost comparisons with other market players.

Getting to Know GPT 5.4 Mini and Nano

For such compact models, GPT 5.4 Mini and Nano surprised me: they score 54.4% and 52.5% respectively, which stands out in their size class. Both are tailored for agentic use cases and computer vision tasks, and knowing those strengths is key to picking the right model for a given task.

What strikes me is how efficiently these models handle complex tasks. Their low cost doesn't mean compromised performance; quite the opposite. As a developer, I choose between Mini and Nano based on the task's complexity and on whether speed or accuracy matters more.
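One way to make that choice explicit is a small routing function. This is only a sketch: the model identifiers, the `Task` fields, and the 300 ms threshold are hypothetical placeholders chosen for illustration, not values from OpenAI's documentation.

```python
from dataclasses import dataclass

# Hypothetical identifiers: check the provider's model list for the real names.
MINI = "gpt-5.4-mini"
NANO = "gpt-5.4-nano"


@dataclass
class Task:
    needs_deep_reasoning: bool  # e.g. multi-step debugging or code review
    latency_budget_ms: int      # how long the caller can wait for a reply


def pick_model(task: Task, tight_budget_ms: int = 300) -> str:
    """Prefer accuracy for hard tasks, speed for simple ones on a tight budget."""
    if task.needs_deep_reasoning:
        return MINI
    if task.latency_budget_ms <= tight_budget_ms:
        return NANO
    return MINI


print(pick_model(Task(needs_deep_reasoning=False, latency_budget_ms=150)))
print(pick_model(Task(needs_deep_reasoning=True, latency_budget_ms=150)))
```

The threshold is the part most worth tuning against your own latency measurements.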

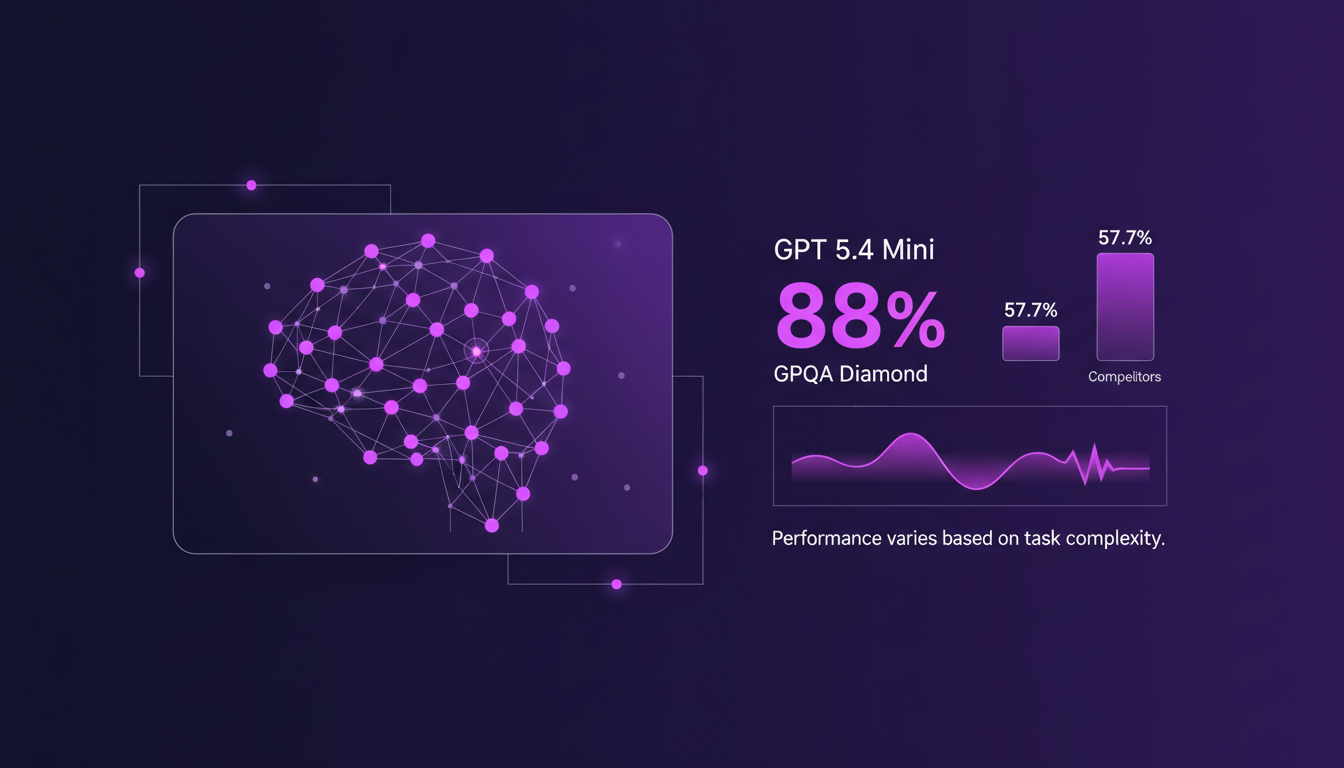

Performance Metrics: What the Numbers Say

When I started benchmarking GPT 5.4 Mini, its 88% score on GPQA Diamond was truly impressive. It also competes with the larger GPT 5.4, which posts a 57.7% overall score against the Mini's 54.4%. These differences matter most on complex tasks, where a higher score generally means more reliable answers to intricate queries.

I tested these models against competitors, and GPT 5.4 Mini's 54.4% on SWE-bench Pro confirmed its suitability for high-reasoning tasks. Be careful with the most complex tasks, though: there, even small performance gaps can be significant.
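To put those gaps in perspective, the short sketch below computes how far Mini and Nano sit behind the larger GPT 5.4, using only the scores quoted in this article. It assumes all three numbers come from the same benchmark, which the article implies but does not state for every model.

```python
# Scores quoted in this article, in percent. Assumption: all three come from
# the same benchmark, so they can be compared directly.
SCORES = {"GPT 5.4": 57.7, "GPT 5.4 Mini": 54.4, "GPT 5.4 Nano": 52.5}


def gap_to(reference: str, model: str) -> tuple[float, float]:
    """Return (gap in points, gap as a percentage of the reference score)."""
    ref, score = SCORES[reference], SCORES[model]
    absolute = ref - score
    relative = absolute / ref * 100
    return round(absolute, 1), round(relative, 1)


for name in ("GPT 5.4 Mini", "GPT 5.4 Nano"):
    points, percent = gap_to("GPT 5.4", name)
    print(f"{name}: {points} points behind GPT 5.4 ({percent}% relative)")
```

A gap of a few points looks small, but on a hard task set it means several more failures per hundred attempts.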

Cost Efficiency: Navigating the Trade-offs

Cost is always a factor, and the GPT 5.4 models are competitively priced. Compared to larger models, Mini and Nano offer substantial savings in specific scenarios. Token pricing and output volume are critical, so watch out for hidden costs.

GPT 5.4 Mini costs $4.50 per million output tokens, making it significantly cheaper than its larger counterparts. The Nano is cheaper still, but it might not always suffice for complex tasks, and sometimes spending a bit more upfront saves headaches later on.
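As a rough budgeting aid, here is a minimal sketch that turns the quoted output price into a cost estimate. It covers output tokens only: input tokens are billed separately and no input price is given here, so treat the result as a lower bound. The workload figures are hypothetical.

```python
from decimal import Decimal

# Output-token price quoted in this article for GPT 5.4 Mini.
MINI_OUTPUT_USD_PER_MILLION = Decimal("4.50")


def output_cost_usd(
    output_tokens: int,
    usd_per_million: Decimal = MINI_OUTPUT_USD_PER_MILLION,
) -> Decimal:
    """Cost of generated tokens only; input tokens are not included."""
    if output_tokens < 0:
        raise ValueError("output_tokens must be non-negative")
    cost = Decimal(output_tokens) * usd_per_million / Decimal(1_000_000)
    return cost.quantize(Decimal("0.0001"))


# Hypothetical workload: 2,000 requests a day, about 500 output tokens each.
daily_output_tokens = 2_000 * 500
print(f"Estimated daily output cost: ${output_cost_usd(daily_output_tokens)}")
```

Using `Decimal` rather than floats keeps the totals exact when many small costs are summed.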

Real-World Use Cases: Where GPT 5.4 Shines

I've used GPT 5.4 Mini for real-time applications, and latency is minimal. Agentic use cases benefit greatly from the model's efficiency. For computer vision tasks, there's a notable improvement in processing speed.

Efficiency in these scenarios directly translates to business impact. The gains in speed and accuracy in tasks like software debugging or code review are palpable and justify the integration of GPT 5.4 Mini into daily workflows.

Access and Usage: Getting Started with GPT 5.4 Mini

Setting up GPT 5.4 Mini is straightforward, but there are nuances. I found the usage options flexible, with API access being a plus. Latency improvements mean faster results in high-demand environments.

To get started, configure your access via OpenAI's platform. With a 400k-token context window and reduced access costs, GPT 5.4 Mini is worth considering for anyone looking to optimize costs without sacrificing performance.
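For API access, a request might look like the sketch below. It uses only the Python standard library against OpenAI's Chat Completions endpoint. The model identifier `gpt-5.4-mini` is an assumption, so check the platform's model list for the exact name, and the `build_request` and `ask` helpers are names invented for this illustration.

```python
import json
import os
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"
MODEL_ID = "gpt-5.4-mini"  # assumption: verify the exact identifier on the platform


def build_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build the HTTP request without sending it."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the first reply.

    Needs network access and an OPENAI_API_KEY environment variable.
    """
    request = build_request(prompt, os.environ["OPENAI_API_KEY"])
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a key set in the environment, `ask("Review this function for bugs: ...")` would return the model's reply as a string. Keeping request construction separate from sending makes the payload easy to inspect and test offline.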

In summary, GPT 5.4 Mini and Nano are powerful tools for anyone looking to balance cost and performance in their AI tasks. Test them yourself to see where they fit into your current workflows.

I've been working with GPT 5.4 Mini and Nano, and honestly, I'm impressed by how well these models balance performance and cost. Here are some key takeaways:

- GPT 5.4 scored 57.7%, making it a solid option for ramping up your AI toolkit.

- For lighter needs, GPT 5.4 Mini and Nano hold their own with scores of 54.4% and 52.5%, respectively.

- On the budget front, these models remain competitive against other market options. They're a real game changer, but smart orchestration is crucial to get the most out of your setup.

Looking forward, I encourage you to dive into your own projects with GPT 5.4. It's through experimenting that you'll see where it can take you. For a comprehensive overview, watch the full video "GPT 5.4 Mini in 5 mins!" on YouTube. Efficiency and smart orchestration are truly key.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large organizations (Atos, BNP Paribas, beta.gouv). He focuses on two areas: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Deploying Mistral Small 4: Practical Use Cases

I dove into the Mistral Small 4 model recently, and let me tell you, it's a beast with its 119 billion parameters. But don't let that scare you; it’s all about how you harness it. With its multimodal and multilingual capabilities, this model is truly a game changer. I'll walk you through its setup, the trade-offs I encountered, and where it truly shines. Whether you're comparing it to GPT-3 or trying to grasp the hardware requirements, there's plenty here to optimize your AI approach. Watch out, though—underestimating the technical specs can hit you hard on performance.

Grok TTS: Fast and Cost-Effective API Integration

Ever been burned by overpriced TTS solutions that don't deliver? I have. That's why I switched to Grok TTS. It's fast, cheap, and integrates like a dream. With support for over 20 languages and inline emotion tags, it's a game changer. But watch out, don't get dazzled just by the price; it's crucial to know how to integrate it effectively into your applications. Let's compare it with 11 Labs and see why Grok TTS might be the solution you've been waiting for.

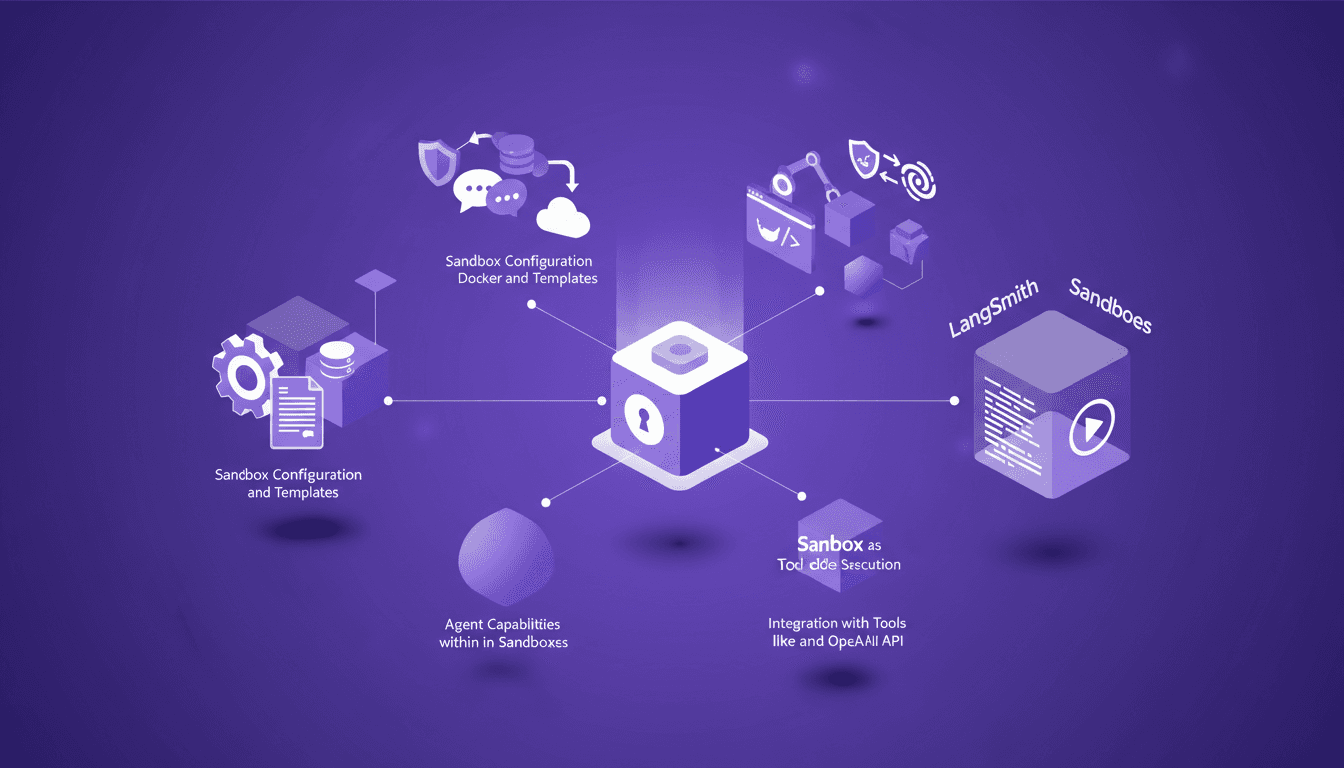

LangSmith Sandboxes: Secure Code Execution

I've been hands-on with LangSmith Sandboxes for a while now, and let me tell you, spinning up a secure environment in just a second or two is a game changer. But there's more under the hood that makes this tool indispensable for secure code execution. Whether you're testing new code snippets or running complex simulations, understanding how to configure and leverage these sandboxes can save you time and headaches. We'll dive into agent capabilities, security measures, and integration with tools like Docker and the OpenAI API. Ready to transform your workflow?

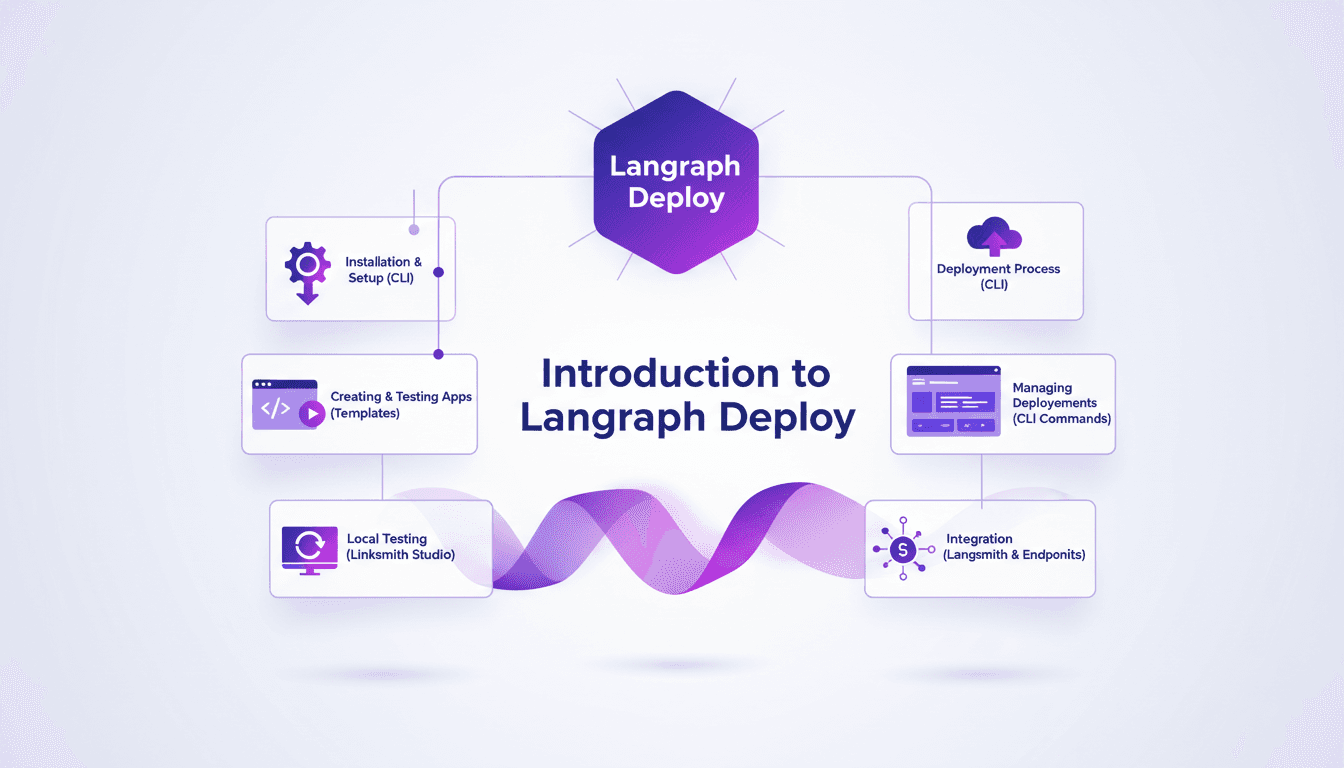

Deploy Agents Easily with Langraph CLI: A Practical Guide

Deploying agents shouldn't be a pain. With Langraph CLI, I've slashed my deployment time down to mere minutes. First, I set up the CLI installation with the straightforward 'uv tool install langraph cli' command. Then, I test my applications locally using Langsmith Studio, allowing for quick iterations (essential to dodge any production mishaps). After that, I spin up a new Langraph application with 'langraph new' and I'm ready for deployment. I'll walk you through how I integrated with Langsmith, managed my deployments, and used the available endpoints—all from the terminal in just a few commands. Trust me, once you experience this ease, there's no going back.

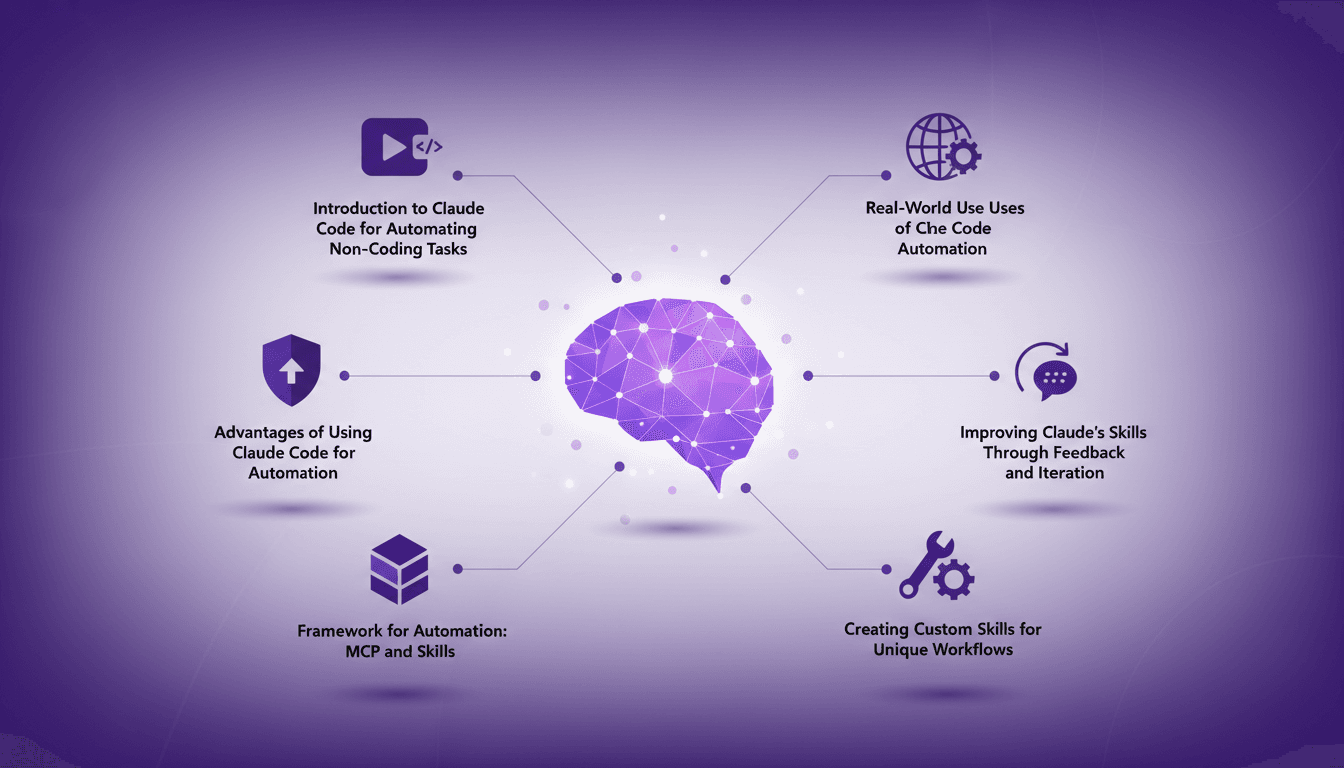

Automate Without Coding Using Claude Code

I still remember the moment I realized I could automate my tasks without writing a single line of code. It felt like uncovering a secret weapon. With Claude Code, I turned repetitive tasks into efficient workflows, saving time and reducing errors. In this article, I'll show you how I did it, covering the frameworks, real-world applications, and how you can tailor it to your unique needs. If efficiency is your goal, you won't want to miss this.