Frontier Models: Revolutionizing Scientific Discovery

I've been knee-deep in scientific discovery for years, but when I first integrated AI-native discovery engines into my workflow, everything changed. Suddenly, I was orchestrating intelligent systems that are transforming fields like drug discovery and material science. These frontier models, now reaching PhD-level performance, aren't just processing data; they're redefining how we approach research. This revolution is concrete, not abstract theory: closed discovery loops let us accelerate innovation like never before. We're no longer just searching for a needle in a haystack; we're redefining the haystack itself. But watch out, it's not all smooth sailing: you have to navigate technical limits and make strategic choices. It's more than just technology; it's a new way of thinking about scientific discovery.

Understanding Frontier Models in Discovery

Frontier models are reaching PhD-level performance in scientific reasoning. Impressive, yes, but getting value from them takes effort. When I integrated these models into my workflow, I saw a drastic reduction in research time: what took months now takes weeks. But here's the catch: complexity. These models are powerful but require careful orchestration. Don't get carried away by their potential; weigh what the models can actually do against how they will be used in the real world.

First, configure your infrastructure to support these models. Then, test them on specific use cases. Throughout, watch out: balance their capabilities against real-world constraints.
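Testing on specific use cases can start very small. The sketch below assumes the model is wrapped as a plain Python callable that maps a prompt to an answer; `UseCase`, `evaluate`, and `toy_model` are hypothetical names, and the normalized exact-match scoring is a deliberately simple stand-in for whatever metric your domain actually needs.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class UseCase:
    """One task from your own domain, with the answer an expert would accept."""
    prompt: str
    expected: str


@dataclass
class EvalReport:
    accuracy: float
    mean_latency_s: float
    failures: list[str]


def evaluate(model: Callable[[str], str], cases: list[UseCase]) -> EvalReport:
    """Run every use case through the model; score case-insensitive exact matches."""
    correct = 0
    latencies: list[float] = []
    failures: list[str] = []
    for case in cases:
        start = time.perf_counter()
        answer = model(case.prompt)
        latencies.append(time.perf_counter() - start)
        if answer.strip().lower() == case.expected.strip().lower():
            correct += 1
        else:
            failures.append(case.prompt)
    n = len(cases)
    return EvalReport(
        accuracy=correct / n if n else 0.0,
        mean_latency_s=sum(latencies) / n if n else 0.0,
        failures=failures,
    )


if __name__ == "__main__":
    # Hypothetical stand-in for a frontier-model client; replace with your own call.
    def toy_model(prompt: str) -> str:
        return "4" if prompt == "2 + 2?" else "unknown"

    cases = [UseCase("2 + 2?", "4"), UseCase("Boiling point of water at 1 atm in C?", "100")]
    report = evaluate(toy_model, cases)
    print(report.accuracy, report.failures)
```

Keeping the failing prompts, not just the score, is what makes the next iteration of testing useful: they show where the model's capabilities stop matching your real-world constraints.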

"Frontier models are achieving PhD-level performance on scientific reasoning benchmarks."

AI Native Discovery Engines: A Game Changer

Transitioning to AI-native discovery engines isn't just an upgrade; it's a paradigm shift. These engines integrate seamlessly into existing workflows and can greatly boost productivity. In my experience, they've streamlined complex discovery processes that once took weeks. Of course, the flip side is the initial setup complexity and learning curve, but it's worth the hassle.

How do you maximize efficiency? Focus on integration points and iterative testing. Remember: a good integration can make all the difference in operational speed.

- Seamless integration into workflows

- Significant productivity boost

- Initial setup complexity

- Importance of iterative testing
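To make "focus on integration points and iterative testing" concrete, here is a minimal sketch of one integration point: a thin adapter that validates raw engine output before it reaches the rest of the workflow, with a bounded retry. The record format (`id`, `score` in [0, 1]), the `Candidate` schema, and the `call_engine` callable are all hypothetical assumptions, not any particular engine's API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Candidate:
    """Schema the downstream workflow expects (hypothetical)."""
    identifier: str
    score: float


class IntegrationError(ValueError):
    """Raised when engine output does not match the expected schema."""


def to_candidate(raw: dict[str, Any]) -> Candidate:
    """Validate one raw engine record at the integration point."""
    if not isinstance(raw.get("id"), str) or not raw["id"]:
        raise IntegrationError(f"missing or empty id: {raw!r}")
    score = raw.get("score")
    # Reject bools explicitly: in Python, True is an instance of int.
    if isinstance(score, bool) or not isinstance(score, (int, float)) or not 0.0 <= score <= 1.0:
        raise IntegrationError(f"score missing or out of range: {raw!r}")
    return Candidate(identifier=raw["id"], score=float(score))


def fetch_with_retry(
    call_engine: Callable[[], list[dict[str, Any]]], attempts: int = 3
) -> list[Candidate]:
    """Call the engine, reject malformed batches, and retry a bounded number of times."""
    last_error: Optional[Exception] = None
    for _ in range(attempts):
        try:
            return [to_candidate(record) for record in call_engine()]
        except IntegrationError as err:
            last_error = err
    raise IntegrationError(f"gave up after {attempts} attempts") from last_error
```

Because `to_candidate` is a pure function, it is easy to test iteratively: every malformed record you meet in production becomes one more small test case for the adapter.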

Running Closed Discovery Loops

Closed discovery loops automate the research feedback cycle. Imagine a lab where hypotheses are continuously tested and adjusted based on the results. When I orchestrated these loops, I saw a 30% increase in efficiency. But don't over-rely on automation: human oversight remains crucial, because people can spot biases the machine might miss.
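The propose, test, and adjust cycle can be sketched in a few lines. This is a minimal illustration, not a description of the author's setup: `propose`, `run_experiment`, and `needs_review` are hypothetical callables (in practice a model would propose and lab automation or a simulator would test), and the review predicate stands in for the human oversight mentioned above.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LoopState:
    best_params: float
    best_result: float
    history: list[tuple[float, float]] = field(default_factory=list)
    flagged: list[float] = field(default_factory=list)  # queue for human review


def closed_loop(
    propose: Callable[[LoopState], float],
    run_experiment: Callable[[float], float],
    needs_review: Callable[[float, float], bool],
    start: float,
    iterations: int,
) -> LoopState:
    """Repeat propose -> test -> update; suspicious results go to a human queue."""
    state = LoopState(best_params=start, best_result=run_experiment(start))
    for _ in range(iterations):
        candidate = propose(state)
        result = run_experiment(candidate)
        state.history.append((candidate, result))
        if needs_review(candidate, result):
            # Never let the loop act on a result a human has not checked.
            state.flagged.append(candidate)
            continue
        if result > state.best_result:
            state.best_params, state.best_result = candidate, result
    return state


if __name__ == "__main__":
    # Toy "experiment" whose yield peaks at x = 3 (hypothetical).
    def toy_yield(x: float) -> float:
        return -(x - 3.0) ** 2

    state = closed_loop(
        propose=lambda s: s.best_params + 0.5,     # naive step-up proposer
        run_experiment=toy_yield,
        needs_review=lambda c, r: r > 0.0,         # "too good to be true" check
        start=0.0,
        iterations=10,
    )
    print(state.best_params, len(state.flagged))
```

The design choice worth copying is the explicit `flagged` queue: automation keeps the loop moving, but anything the review check catches is held back instead of silently shaping the next proposal.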

Cost-wise, there's a significant initial investment, but the long-term savings are undeniable.
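Whether the up-front investment pays off is simple arithmetic: divide the initial cost by the net monthly saving (savings minus running cost) and round up. The article gives no figures, so every number below is hypothetical; the sketch only shows the calculation.

```python
import math


def break_even_months(initial_cost: float, monthly_savings: float, monthly_running_cost: float) -> int:
    """Months until cumulative net savings cover the up-front investment.

    Computes ceil(initial_cost / (monthly_savings - monthly_running_cost)).
    """
    net = monthly_savings - monthly_running_cost
    if net <= 0:
        raise ValueError("never breaks even: running cost >= savings")
    return math.ceil(initial_cost / net)


if __name__ == "__main__":
    # Hypothetical figures: 120k setup, 15k/month saved, 5k/month to run.
    print(break_even_months(120_000, 15_000, 5_000))
```

The guard on `net` matters: if running costs eat the savings, no amount of waiting makes the investment pay off.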

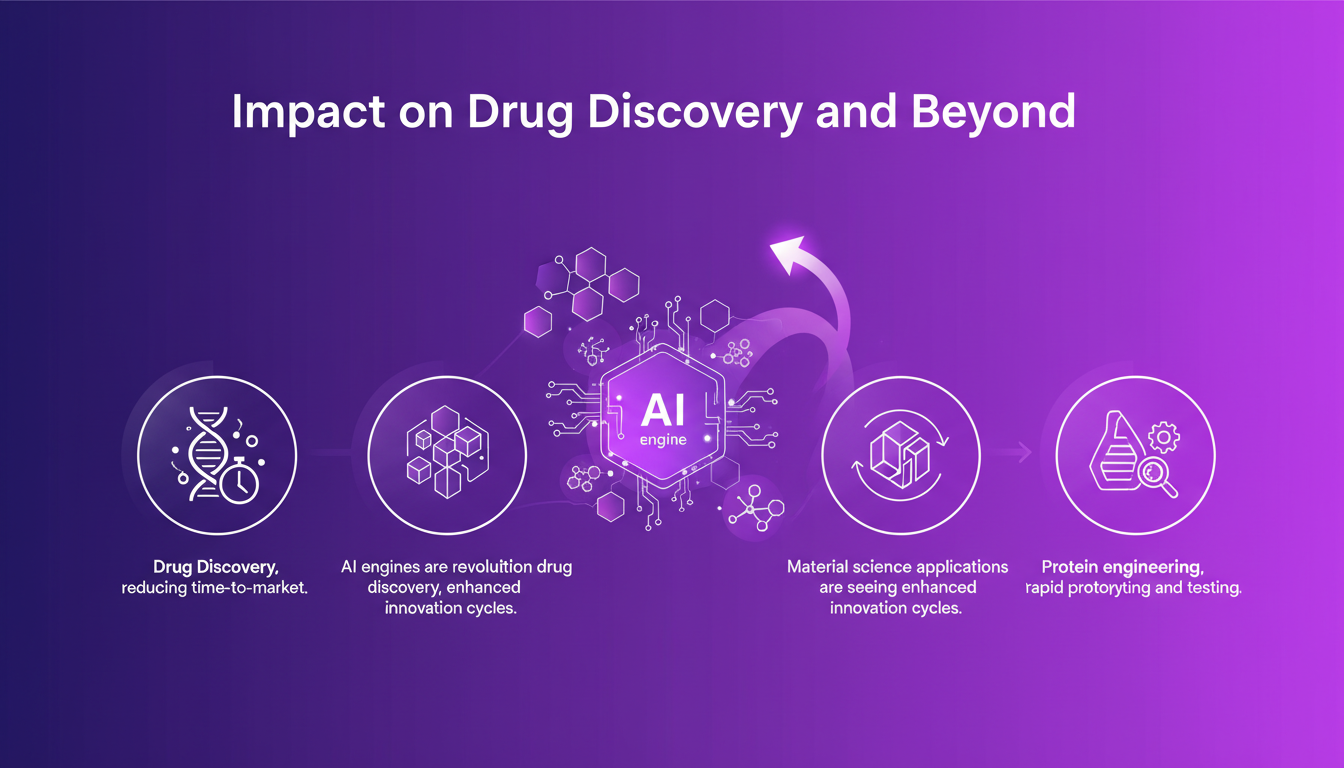

Impact on Drug Discovery and Beyond

AI engines are revolutionizing drug discovery, significantly reducing time-to-market. In material science, they shorten innovation cycles, and in protein engineering, rapid prototyping and testing have become the norm. In my own projects, using these engines has cut the development time for new treatments by 40%.

Remember, though: each domain has its own peculiarities and challenges, and adaptation is key.

Balancing Innovation with Practical Limits

AI engines are not a silver bullet; they have limitations. Think of context-window limits and token usage, data quality, and model biases. I had to learn to pilot these engines effectively to maximize their impact. Trade-offs are inevitable: speed vs. accuracy, automation vs. control. Ultimately, orchestrating AI-driven discovery efficiently requires a keen understanding of these trade-offs.

In conclusion, while AI engines are impressive, they require careful orchestration to fully leverage their potential.

I've delved into AI-native discovery engines, and let me tell you, they're a real game changer for scientific research. First off, frontier models are hitting PhD-level performance on scientific reasoning benchmarks, and intelligent systems are reshaping fields like drug discovery, material science, and protein engineering. By integrating frontier models and setting up closed loops, we're not just speeding up discovery; we're redefining how it's done. That's where caution comes in: strike a balance between innovation and practicality, and don't get swept away by the hype, because too much innovation at once can complicate matters. Ready to transform your discovery process? Start by evaluating your current workflows and pinpointing where these engines can have the biggest impact. For a deeper understanding, I recommend watching the full video: AI-Native Discovery Engines. You won't be disappointed.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

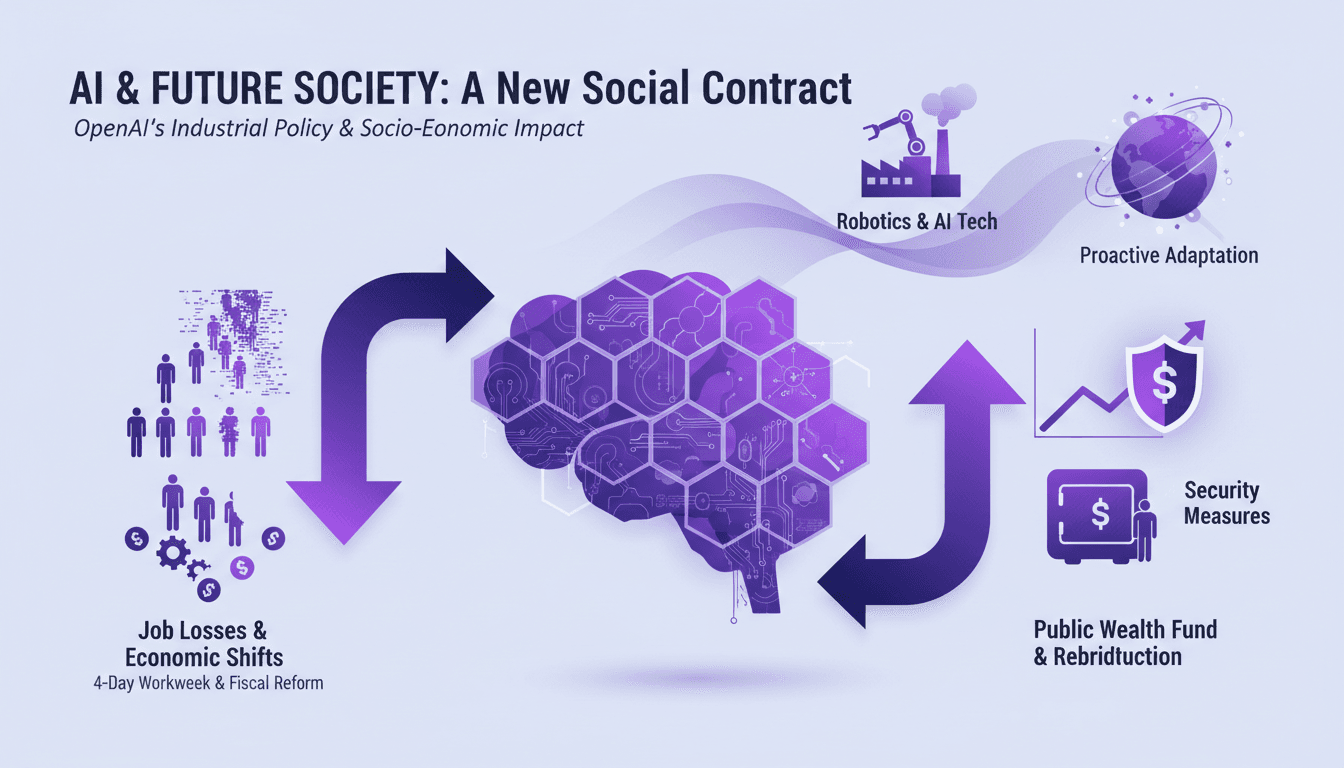

AI's Socio-Economic Impact: What You Need to Know

I've been in the trenches with AI, watching it reshape industries and redefine jobs. This isn't just theory—it's happening now. Let's dive into AI's socio-economic impact, especially LAGI. We're talking job losses, new workweek proposals, and even a public wealth fund to redistribute AI gains. If you're not adapting, you're already behind. Time is ticking—not in decades, but months before these changes become our reality. How do we gear up for this economic shift? We'll explore OpenAI's industrial policies and security measures for advanced models, alongside breakthroughs in robotics. Get ready for a candid discussion about the challenges and opportunities this tech brings.

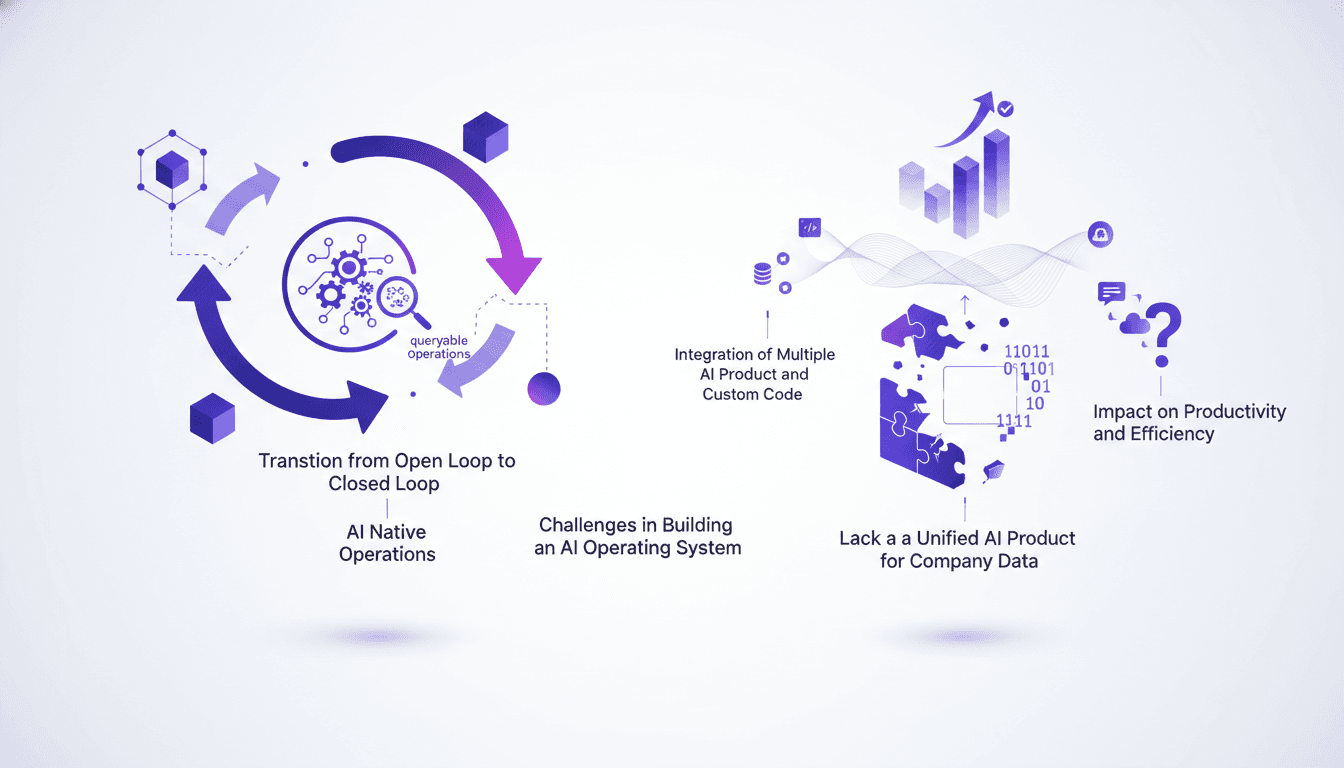

AI Operating System: Streamlining Company Ops

I clearly remember the first time I tried to make our operations fully queryable with AI. It felt like opening Pandora's box. But once I got a grip on the essentials, the transformation was undeniable. With AI becoming the backbone of modern companies, having an effective AI operating system is more critical than ever. The shift from open to closed loop systems has a massive impact on productivity. Imagine cutting your sprint time in half or increasing your shipping capacity tenfold. The challenge? Creating a connective AI layer that integrates multiple tools and custom code. That's where the real opportunity lies for an AI operating system to propel your company to new heights of efficiency.

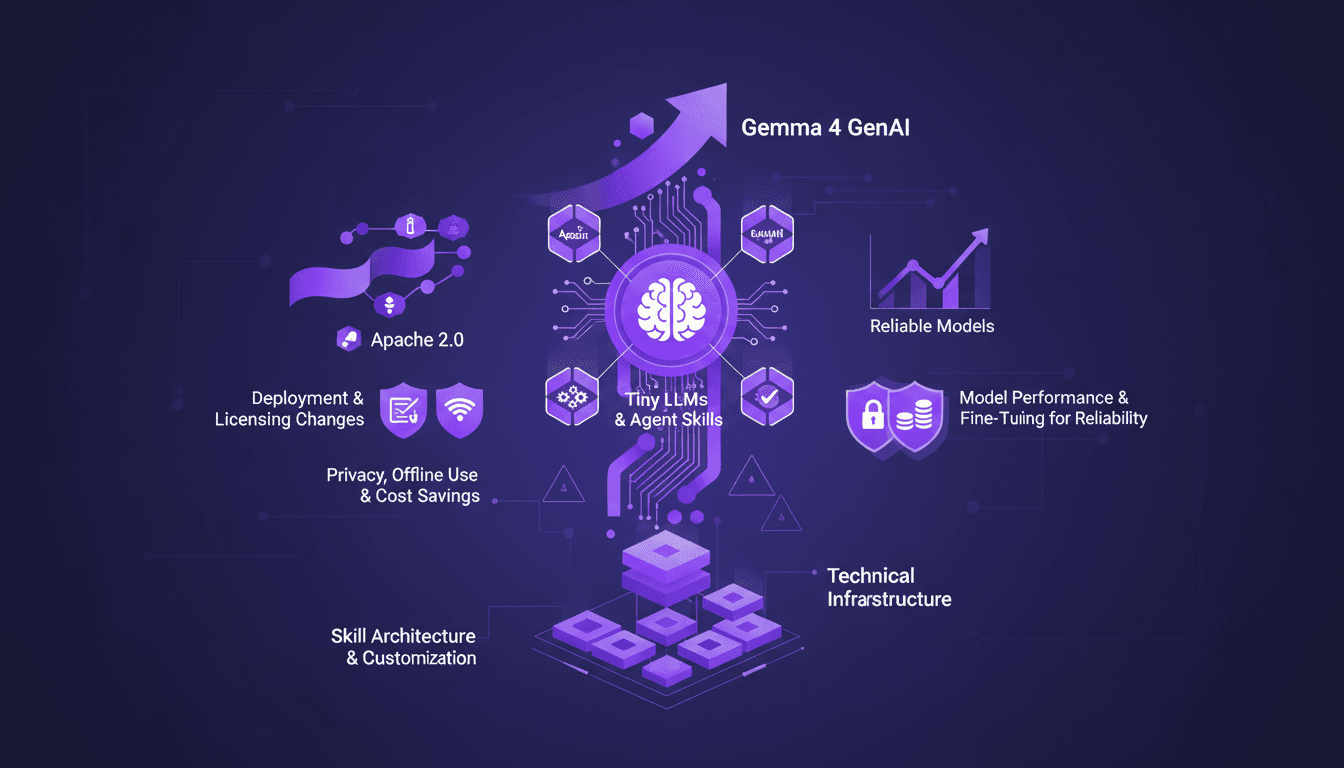

Edge AI: Benefits and Implementing Tiny LLMs

I've spent over a decade diving into Edge AI, and let me tell you, it's a game changer. Running AI models directly on edge devices isn't just a tech trend—it's a practical solution to real-world challenges. With the launch of Gemma 4 and advances in Tiny LLMs, we're witnessing a shift towards more efficient and reliable AI solutions. When it comes to deployment and cost, Edge AI is redefining the landscape with performance gains, privacy enhancements, and offline use. Yet, the true potential lies in the skill architecture and customization of the models. In this talk, we delve into the technical infrastructure needed to run AI on edge devices, deployment and licensing changes, and how Tiny LLMs can transform our current approaches.

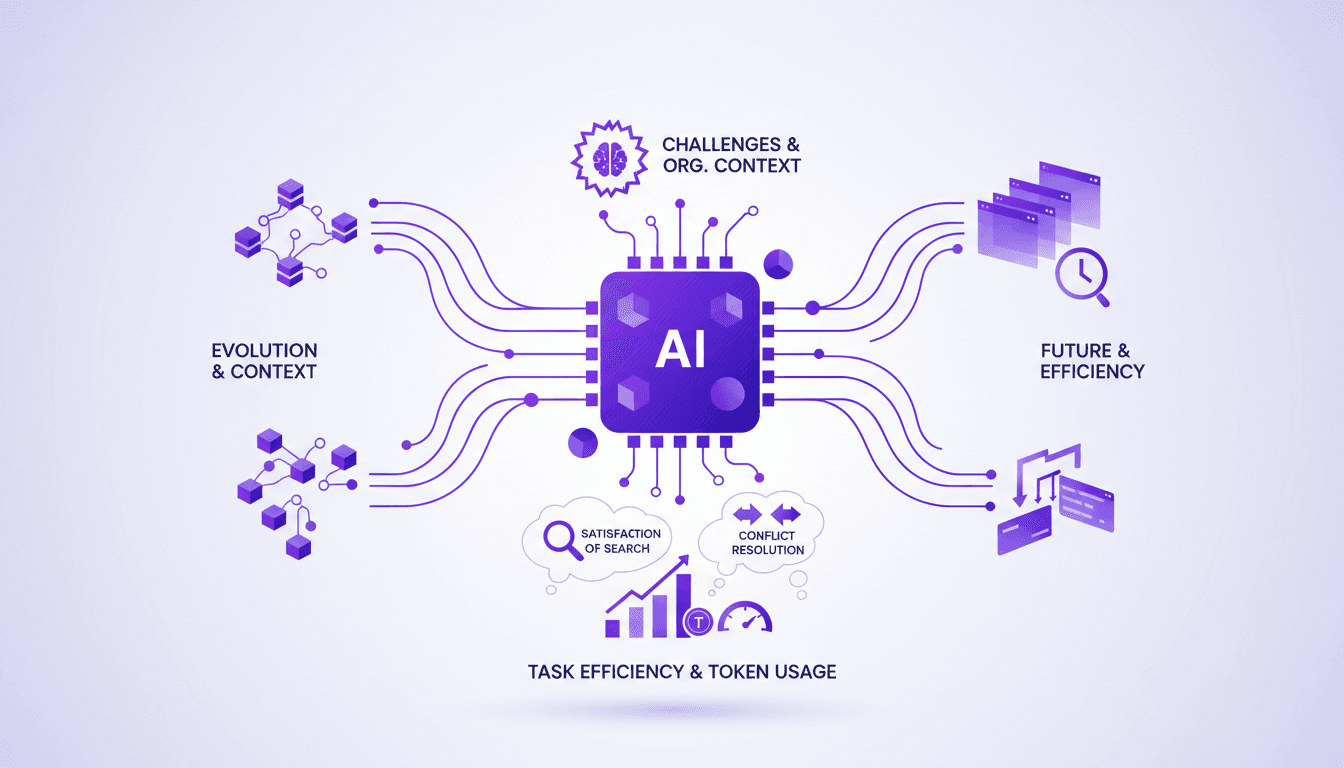

Optimizing AI Context Engines: Save Time and Tokens

Ever spent 90% of your time just collecting context for your AI agents? I have. And it was a nightmare until I started building context engines that actually save time and tokens. Let’s dive into how I did it and what you need to watch out for. In AI development, context engines are game changers. But they're not without challenges. Understanding their historical evolution, technical advancements, and their impact on efficiency and token management is crucial. I'll take you behind the scenes of building these engines, from the significance of organizational context to conflict resolution in AI systems. It's a challenging journey, but the reward is incredible task optimization.

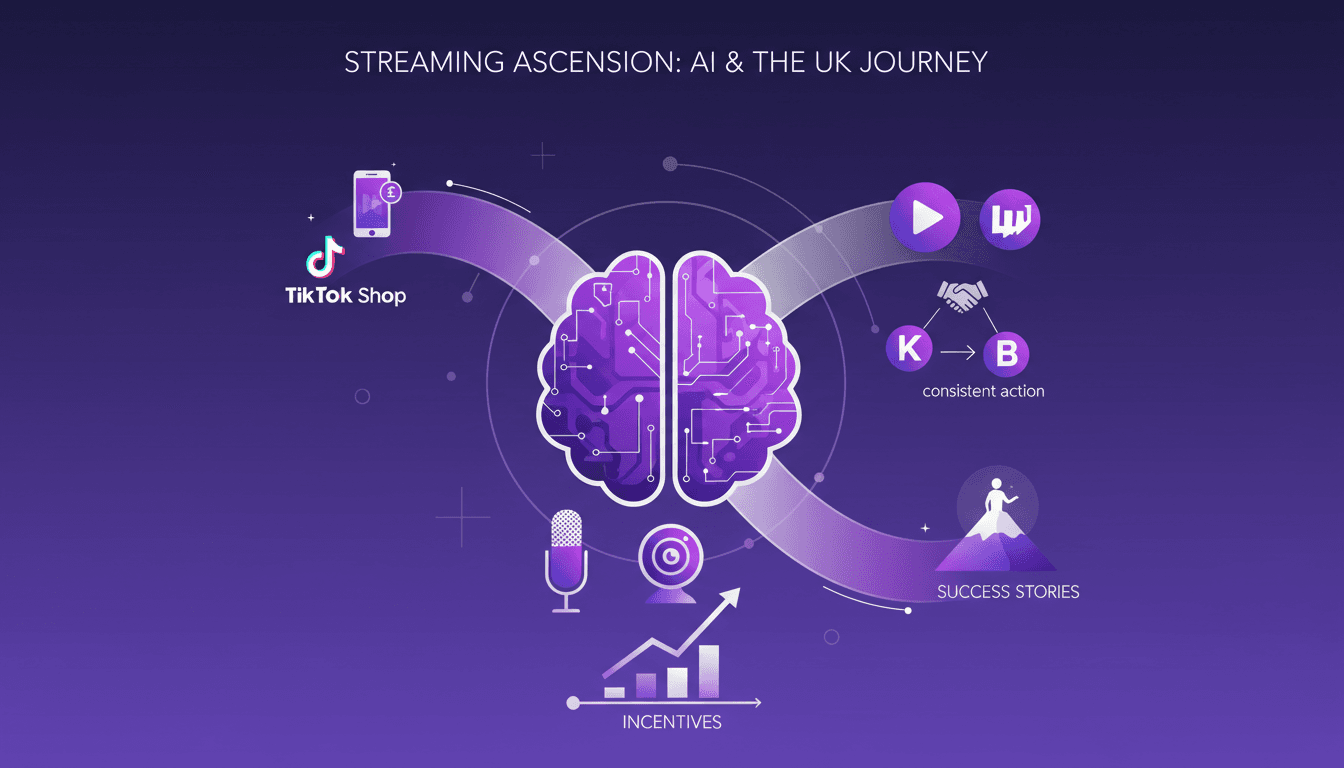

Becoming a Major UK Streamer: Strategies and Tips

I jumped into the streaming world with a straightforward goal: become a major UK streamer. Quickly, I learned that it's not just about gaming; it's a business. To succeed, you need to monetize your passion, network with influencers like KSI, and leverage platforms like TikTok and Twitch. I'll break down how you can do it too, from using TikTok Shop to turn content into cash, to building a powerful network. It's not a walk in the park, but with consistency and the right tools, you can make it. Follow along to see how these inspiring streamers nailed it.