Generative Models at Scale: Optimization Focus

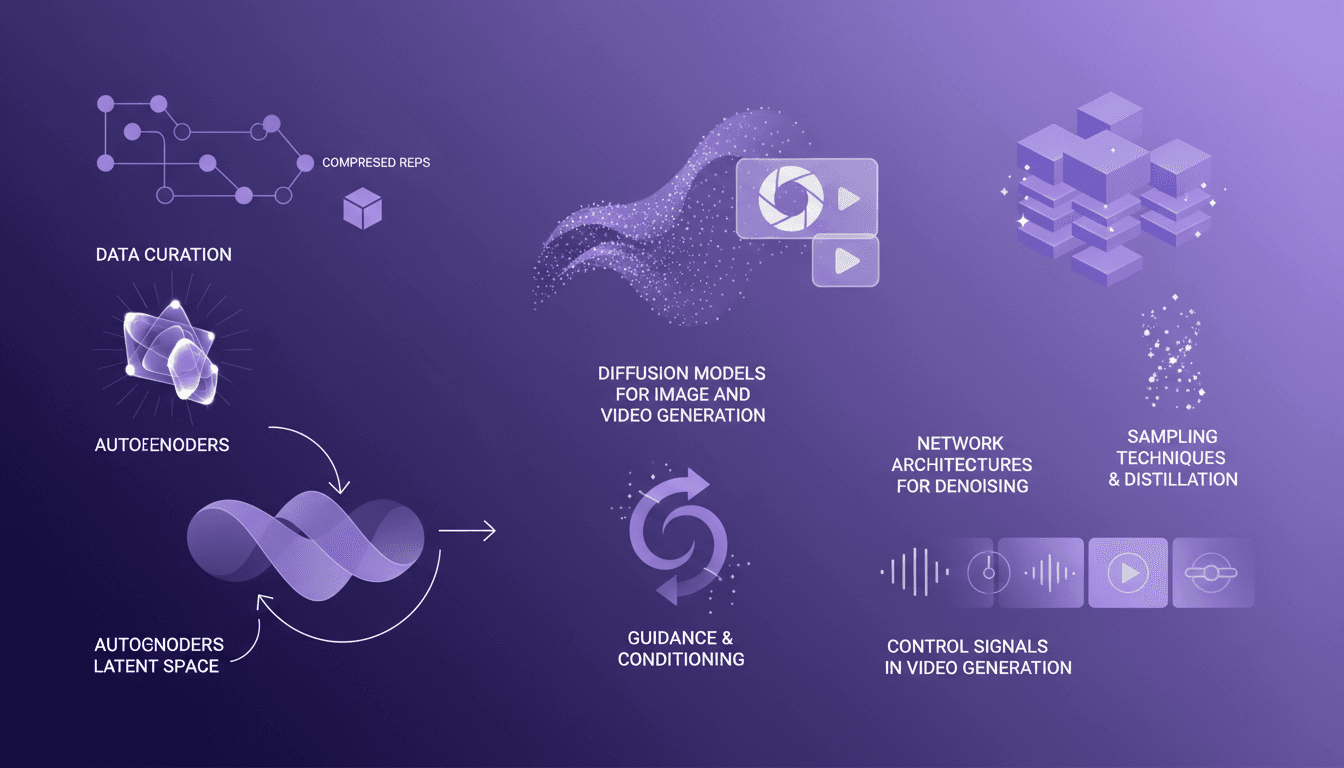

I've spent countless hours wrestling with generative models, and let me tell you, it's a wild ride. First, you need to curate your data like a pro; without that, you're building on sand. Building large-scale generative image and video models isn't just about algorithms; it's about orchestrating a symphony of data, compression, and computation. This conference talk covers data curation for large-scale models, compressed representations and autoencoders, and diffusion models for image and video generation. It also gets into latent space and decoding, guidance and conditioning in generative models, network architectures for denoising, sampling techniques and distillation, and control signals in video generation. Get ready for a fast-paced journey: we're moving at 30 frames per second!

Data Curation: The Foundation of Scale

When I ventured into building large-scale models, the first lesson I learned was the crucial importance of data curation. Without well-prepared data, even the most advanced model will yield mediocre results. Personally, I handle gigabytes of data, and believe me, it's a real challenge.

First, I avoid common pitfalls in data preparation, such as leaning too heavily on unfiltered data. I prioritize quality over quantity because a poorly curated dataset can lead to biased and ineffective training. To manage large data volumes, I use tools like pandas and Apache Spark, which make it easier to process and clean massive datasets. In practice, that comes down to two things:

- Setting up automated systems for data collection and filtering.

- Using sampling techniques to reduce dataset size while maintaining relevance.
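To make the two points above concrete, here is a minimal sketch of a filter-then-sample pass in plain Python. It is an illustration under stated assumptions, not the pipeline from the talk: the record fields `caption` and `score`, the threshold, and the `curate` helper are all hypothetical, and a real pipeline at this scale would more likely run on Spark.

```python
import random


def curate(records, min_score, sample_size, seed=0):
    """Filter by a quality score, drop exact duplicates, then subsample.

    `records` is a list of dicts with hypothetical keys "caption" and "score".
    """
    seen = set()
    kept = []
    for rec in records:
        # Quality gate: skip items scored below the threshold.
        if rec["score"] < min_score:
            continue
        # Exact-duplicate removal, keyed on the caption text.
        if rec["caption"] in seen:
            continue
        seen.add(rec["caption"])
        kept.append(rec)

    # Only subsample when the filtered set is larger than requested.
    if len(kept) <= sample_size:
        return kept
    rng = random.Random(seed)  # seeded so the subset is reproducible
    return rng.sample(kept, sample_size)


if __name__ == "__main__":
    data = [
        {"caption": "a cat", "score": 0.9},
        {"caption": "a cat", "score": 0.95},
        {"caption": "blurry", "score": 0.1},
        {"caption": "a dog", "score": 0.8},
    ]
    print(curate(data, min_score=0.5, sample_size=10))
```

Filtering before sampling means the sample budget is spent only on items that already passed the quality gate.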

Compressed Representations and Autoencoders

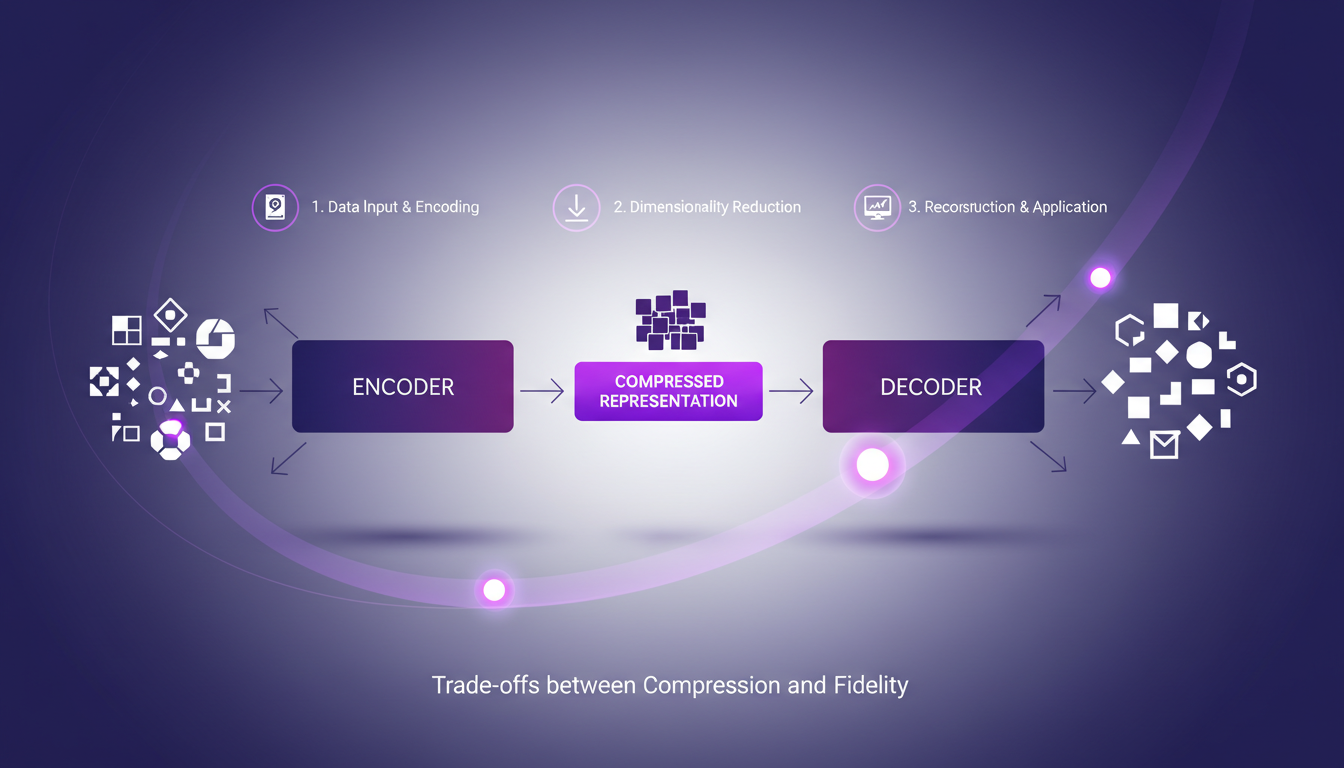

Autoencoders have become essential for me in reducing data size. What's great is that they compress data while keeping most of the information that matters. The compression is lossy, though, so there is always a trade-off between compression ratio and fidelity.

To integrate autoencoders into my workflow, I start by ensuring my data is well-normalized. Then, I train the autoencoder to produce compressed latent representations. This lets the downstream model handle high-resolution images efficiently, for instance by shrinking gigabytes of raw data to mere megabytes.

- Implementing autoencoders to create compact latent representations.

- Balancing compression to maintain data quality.

The key is not to over-compress, as you risk losing crucial details that can impact model performance. Compressed representation is essential for scalability, especially when working with 1080p video at 30 fps.
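A quick back-of-the-envelope calculation shows why compression matters at 1080p and 30 fps. The sketch below counts raw values for 30 seconds of uncompressed 8-bit RGB video and compares that with a latent grid. The downsampling factors (8x spatial, 4x temporal) and the 16 latent channels are purely illustrative assumptions, not figures from the talk.

```python
def raw_video_values(width, height, fps, seconds, channels=3):
    """Number of raw values (one byte each for 8-bit RGB)."""
    return width * height * channels * fps * seconds


def latent_video_values(width, height, fps, seconds,
                        spatial_factor, temporal_factor, latent_channels):
    """Number of values in a latent grid under assumed downsampling factors."""
    latent_w = width // spatial_factor
    latent_h = height // spatial_factor
    latent_frames = (fps * seconds) // temporal_factor
    return latent_w * latent_h * latent_channels * latent_frames


if __name__ == "__main__":
    raw = raw_video_values(1920, 1080, fps=30, seconds=30)
    # Hypothetical autoencoder: 8x spatial, 4x temporal, 16 latent channels.
    latent = latent_video_values(1920, 1080, 30, 30,
                                 spatial_factor=8, temporal_factor=4,
                                 latent_channels=16)
    print(f"raw values:    {raw:,}")      # about 5.6 GB at one byte per value
    print(f"latent values: {latent:,}")
    print(f"reduction:     {raw // latent}x fewer values")
```

Under these assumed factors the generative model works on 48 times fewer values than the raw pixels, which is where the scalability comes from. Pushing the factors higher shrinks the latent further but costs fidelity at decode time.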

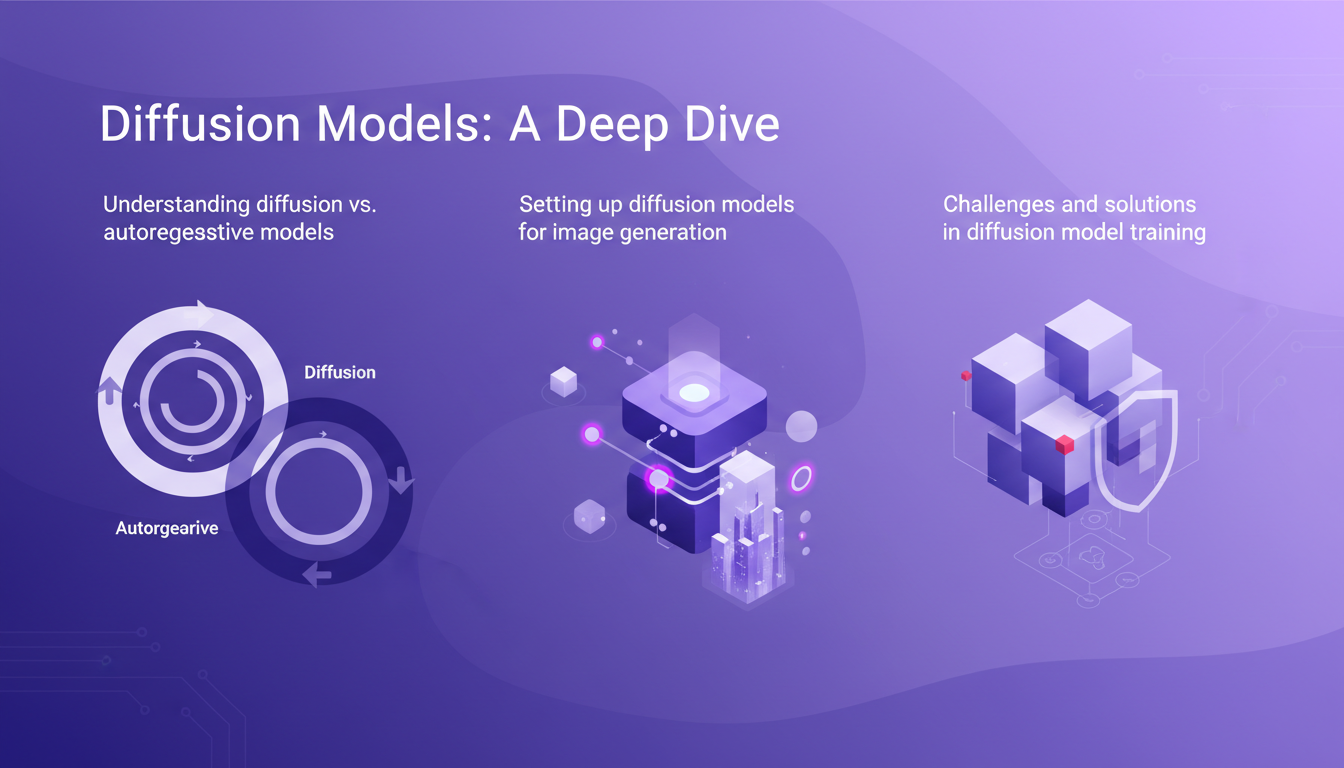

Diffusion Models: A Deep Dive

Diffusion models have been game-changing for me in image generation. Compared with autoregressive models, they tend to be a better fit for audiovisual data such as images and video. Setting up a diffusion model for image generation involves several steps, but the key is understanding the corruption (noising) process, the denoising process, and the final decoding from latent space back to pixels.

One challenge is training these models, which requires careful management of latent space and decoding. I make sure noise is added progressively during training, and that the denoiser learns to undo it: at high noise levels it has to recover the global structure, and at low noise levels it fills in the fine details.

- Understanding the difference between diffusion and autoregressive models.

- Optimizing the corruption and denoising process for training.
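The corruption step can be sketched in a few lines. This is a minimal illustration of the standard Gaussian forward process, in which a clean latent is blended with noise according to a signal level. The simplified cosine-shaped schedule and the names `alpha_bar` and `corrupt` are assumptions made for the example, not the schedule used in the talk.

```python
import math
import random


def alpha_bar(t, num_steps):
    """Assumed cosine-shaped signal level: 1.0 at t=0 (clean), ~0.0 at t=num_steps."""
    return math.cos(0.5 * math.pi * t / num_steps) ** 2


def corrupt(x0, t, num_steps, rng):
    """Blend a clean vector x0 with Gaussian noise at timestep t.

    Returns the noisy vector and the noise that was added. In a common
    training setup the denoiser sees (x_t, t) and is trained to predict
    that noise.
    """
    a = alpha_bar(t, num_steps)
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(a) * x + math.sqrt(1.0 - a) * n for x, n in zip(x0, noise)]
    return xt, noise


if __name__ == "__main__":
    rng = random.Random(0)
    clean = [1.0, -2.0, 0.5]
    for t in (0, 250, 500, 1000):
        noisy, _ = corrupt(clean, t, num_steps=1000, rng=rng)
        print(t, round(alpha_bar(t, 1000), 3), [round(v, 3) for v in noisy])
```

At `t=0` the input comes back unchanged, and at the final step almost nothing of the original signal is left, so the denoiser is trained across the whole range between those extremes.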

Guidance and Conditioning in Generative Models

Guidance plays a crucial role in improving model outputs. For me, conditioning the model is an essential technique: it gives better control over what gets generated, which matters even more when control signals drive video generation. Lesson learned: you need to balance control signals to avoid artificial-looking results.

Guidance directly impacts the quality of generated content, and I've learned to avoid common mistakes in model conditioning, like over-dependence on one type of control signal.

- Effective conditioning techniques for generative models.

- Impact of guidance on the quality of generated content.

Ultimately, it's about making subtle but impactful adjustments to achieve more natural and realistic results.
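The article does not say which guidance method is meant. Assuming it is classifier-free guidance, one widely used approach, the core operation is a simple extrapolation between an unconditional and a conditional prediction. The function name and the example numbers below are illustrative only.

```python
def guided_prediction(uncond, cond, scale):
    """Classifier-free guidance on two denoiser outputs of equal length.

    scale = 0.0 returns the unconditional prediction,
    scale = 1.0 returns the conditional prediction,
    scale > 1.0 pushes further in the direction of the condition.
    """
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


if __name__ == "__main__":
    uncond = [0.0, 1.0]  # prediction without the prompt or control signal
    cond = [1.0, 3.0]    # prediction with the prompt or control signal
    for scale in (0.0, 1.0, 2.0):
        print(scale, guided_prediction(uncond, cond, scale))
```

The single `scale` knob is where the balance mentioned above shows up: larger values follow the condition more closely, but pushed too far they tend to produce over-saturated, artificial-looking samples.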

Network Architectures and Sampling Techniques

When I build denoising models, choosing the right network architecture is crucial. I've tested different approaches, and the ones that work best balance network complexity with speed. Techniques like stratified sampling can significantly boost model efficiency.

The distillation process, often underrated, speeds up generation by reducing the number of sampling steps needed to produce a good sample. It's truly a game changer.

- Architectural choices to optimize speed and accuracy.

- Sampling techniques to enhance model efficiency.
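Distillation itself requires training a student model, which does not fit in a short snippet. The sketch below only illustrates the sampling-side idea it serves: visiting a small, evenly spaced subset of the training timesteps instead of all of them. The helper name and the 1000 and 50 step counts are assumptions made for the example.

```python
def strided_timesteps(num_train_steps, num_sample_steps):
    """Pick evenly spaced timesteps, from noisiest (largest) to cleanest (0)."""
    if not 1 <= num_sample_steps <= num_train_steps:
        raise ValueError("num_sample_steps must be between 1 and num_train_steps")
    last = num_train_steps - 1
    if num_sample_steps == 1:
        return [last]
    # Integer arithmetic keeps the endpoints exact and the values distinct.
    ascending = [(i * last) // (num_sample_steps - 1)
                 for i in range(num_sample_steps)]
    return ascending[::-1]


if __name__ == "__main__":
    schedule = strided_timesteps(num_train_steps=1000, num_sample_steps=50)
    print(schedule[:5], "...", schedule[-3:])
    print("network evaluations:", len(schedule))
```

Each timestep costs one denoiser evaluation, so 50 steps instead of 1000 means 20 times fewer network calls per sample. Whether quality holds up at that step count depends on the sampler and on how the model was trained or distilled.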

"Distillation in diffusion models reduces steps while enhancing sample quality."

In summary, by finely tuning architecture and sampling techniques, I've been able to create models that are more robust and faster, without sacrificing quality.

Building generative models at scale isn't just about picking the right algorithm. It's about how you orchestrate data, compression, and computation strategies effectively. Here are the key takeaways I gathered:

- Data Curation: Start with meticulous data curation. If your data's a mess, your models will just mirror that chaos.

- Compressed Representations and Autoencoders: I use these to shrink data size without losing the essentials. It saves time and computing power.

- Diffusion Models for Image and Video: Generating 1080p video at 30 fps for 30 seconds is doable, but watch out for the resources you'll need.

Looking forward, these techniques could really transform how we design generative models. But remember, the resources must match the ambition. Ready to dive deeper? Go watch Sander Dieleman's full talk on YouTube. It's a goldmine for anyone who wants to really push their models forward.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

AI in Emotional Sales: A Game-Changer Unveiled

Yesterday, I thought AI couldn't close emotional sales. Then I built a system around it and watched my deal closures take off. So, how did I do it? Let's dive into AI's potential to transform sales processes, especially on the emotional front. Building the right architecture is a game-changer for operational efficiency and leaner operations. Companies can not only run leaner but also close more deals. Be warned, failing to adopt these technologies in time might leave you behind. Let's uncover why mastering this new tech wave is crucial.

Imagen 2.0: Revolutionizing Image Generation

When I first got my hands on Imagen 2.0, I was blown away by its potential. We're talking about generating 2K resolution images with multilingual support. The first thing I did was integrate it into my workflow, and the improvement is tangible. The advancement in resolution and detail is a real game changer, but watch out for technical limits in multi-image generation. Compared to previous models and DALL-E, Imagen 2.0 really stands out. This isn't about theory; I'm talking about daily impact on my practice. If you're aiming to innovate, this is the tool to explore.

Launching a Clothing Brand at 14: My Journey

I remember being 14 and deciding to launch my own clothing brand. It felt like climbing Everest with just a backpack. But I did it, and here's how you can too. Many believe you need to be an adult to start a business, but with the right mix of passion, strategy, and inspiration, you can turn a simple idea into a thriving enterprise. In this article, I share my journey, the challenges I faced as a young entrepreneur, and the inspiration I drew from successful entrepreneurs. Launching a brand at that age is like juggling school and business, but it's doable with the right content strategies and unwavering passion.

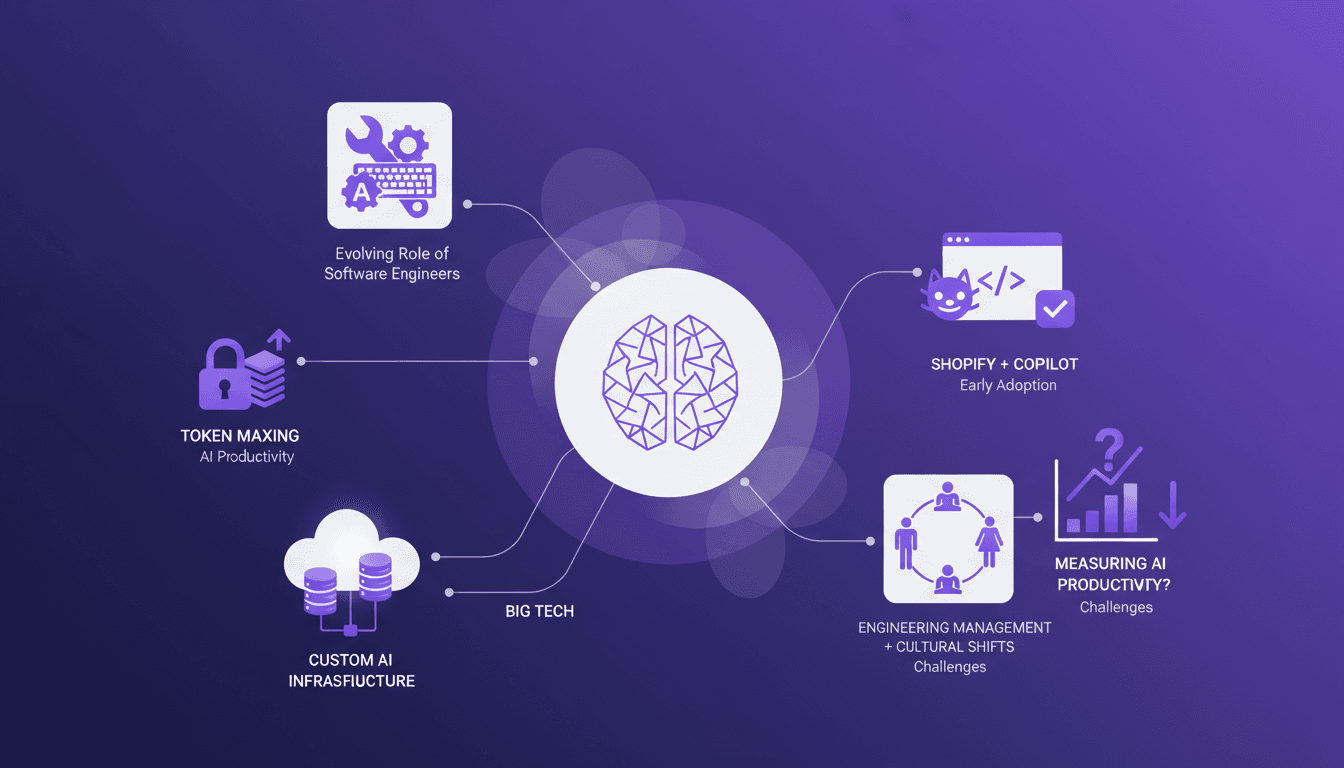

Token Maxing: AI's Revolution in Engineering

I've been in the AI trenches, and let me tell you, the way AI is reshaping software engineering is nothing short of a game changer. But beware, it's not all smooth sailing. In our field, AI tool adoption brings its own set of challenges, like token maxing and the evolving role of engineers. At a recent conference, experts like Gergely Orosz shared valuable insights on these transformations, from productivity impacts to cultural shifts in team management. We will need to navigate these opportunities and challenges to make the most of this technological revolution.

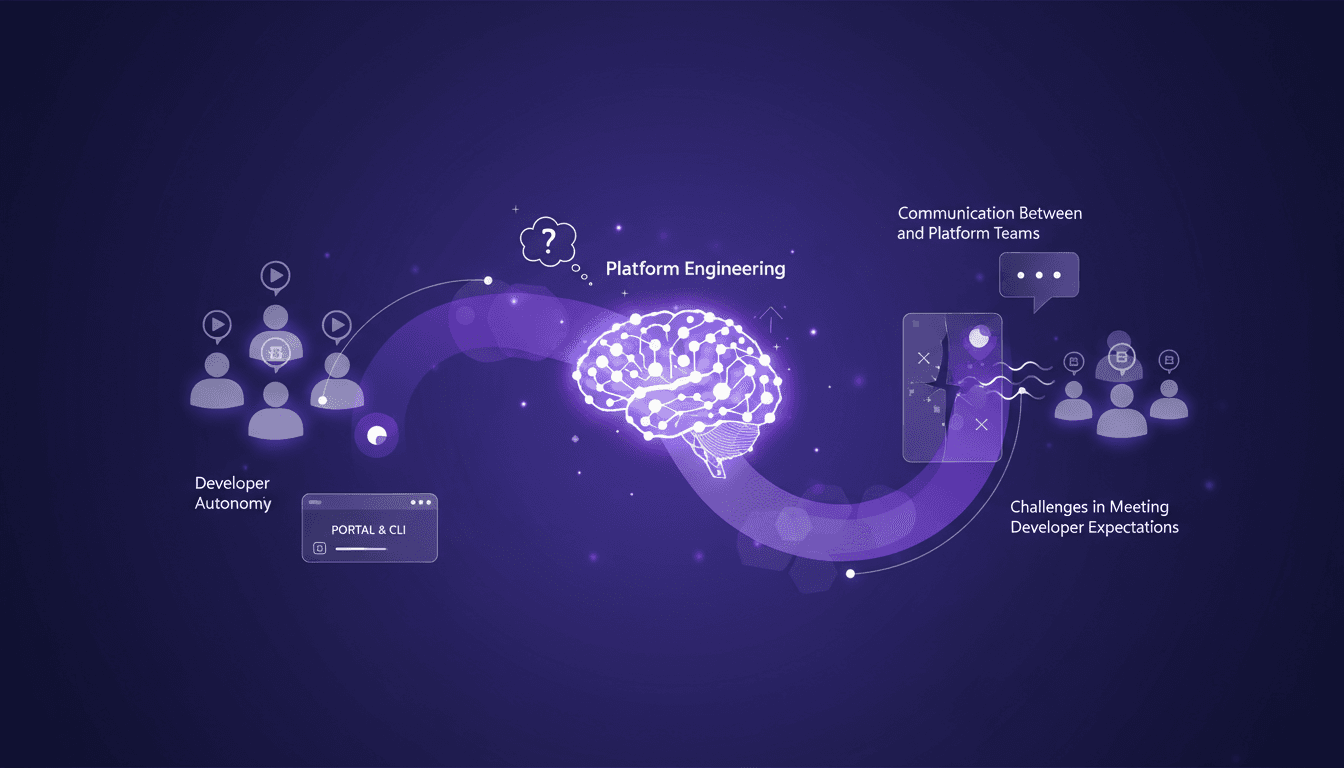

Platform Engineering's Impact on Developer Autonomy

I remember the first time our platform team rolled out a new self-service portal. It felt like a game changer. But soon, I realized it was nibbling away at our autonomy. Platform engineering is reshaping how we deliver capabilities, often enhancing efficiency but sometimes at the cost of developer autonomy. Let's dive into the impact this has on our daily work. I'll walk you through self-service capabilities, the communication between developers and platform teams, and the challenges in meeting developer expectations. We'll also look at tools like portals and CLI interfaces for delivering these capabilities.