Adopting AI: Challenges and Solutions

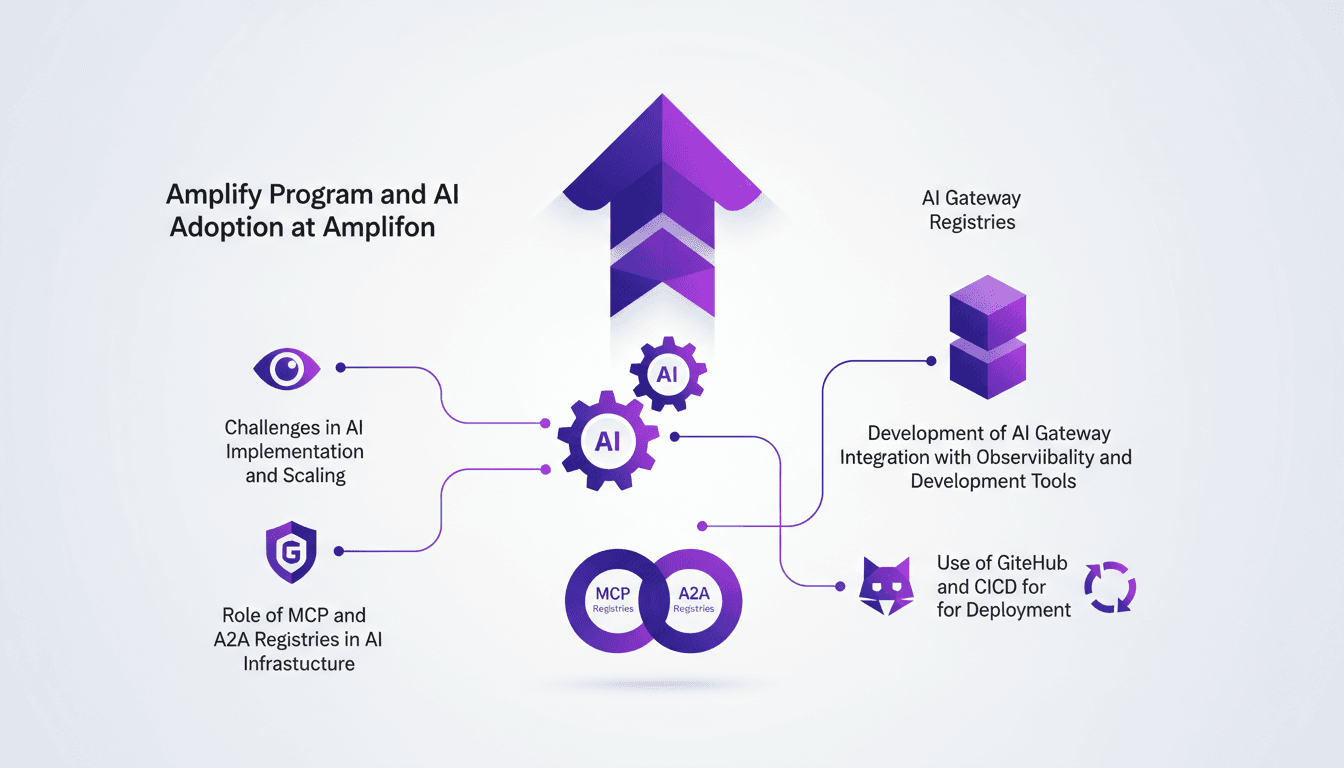

I remember when we first tackled AI at Amplifon. It was 2025, and we were staring down the monumental task of deploying AI across 26 countries with over 20,000 team members. Technology was only part of the puzzle: infrastructure, governance, compliance, and deployment tooling such as GitHub and CI/CD made the real difference. I got burned more than once, especially with our private MCP and A2A registries. But the Amplify Program wasn't just a project; it was a real learning journey. Each challenge pushed us to adapt our strategies, tweak our governance, and, most importantly, make the most of our tools. And let me tell you, having a single registry to orchestrate everything was a real game changer. Join me as I walk you through how we turned these challenges into success stories.

Launching the Amplify Program: A Strategic Move

In 2025, we kicked off the Amplify Program to spearhead AI integration across our global operations. With a presence in 26 countries and more than 20,000 employees, a unified approach was essential. The first step was setting up a dedicated team focused on AI infrastructure. I was directly involved in assembling this team to ensure our priorities were clear from the start: creating a secure and accessible AI Gateway. But watch out, aligning AI initiatives with business goals is no small feat.

"The Amplify program is designed to set clear rules for AI adoption."

The main challenge was ensuring that each initiative aligned with the business goals of its region while maintaining a global vision. It was not straightforward, but this first step was crucial for the rest of the journey.

- Formation of a dedicated AI infrastructure team.

- Creation of a secure AI Gateway.

- Alignment of AI initiatives with business goals.

Building the AI Gateway: Unified Access and Security

The AI Gateway was the linchpin. It enabled us to provide unified access to AI tools across all our stores. By integrating robust authentication protocols, we secured access while simplifying AI deployments. Initially, integration was a real headache, but with a phased approach, we overcame these hurdles.

The Gateway became the backbone of AI operations at Amplifon. Every developer could now access AI models from a single point, greatly easing our resource management.

- Unified access to AI tools.

- Secured by robust authentication protocols.

- Simplified AI deployment.
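
To make the pattern concrete, here's a minimal sketch of a gateway as a single entry point that authenticates the caller and then routes the request to a model backend. Everything in it is hypothetical (the `AIGateway` class, the token grants, the `echo-model` backend); it illustrates the idea and is not a description of the actual Amplifon gateway.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


class AuthError(Exception):
    """Raised when a token has no access to the requested model."""


@dataclass
class AIGateway:
    """Hypothetical single entry point: authenticate first, then route."""

    backends: Dict[str, Backend] = field(default_factory=dict)
    # token -> names of the models that token may call (illustrative only)
    grants: Dict[str, Set[str]] = field(default_factory=dict)

    def register_backend(self, name: str, backend: Backend) -> None:
        self.backends[name] = backend

    def grant(self, token: str, model: str) -> None:
        self.grants.setdefault(token, set()).add(model)

    def complete(self, token: str, model: str, prompt: str) -> str:
        # Access check happens before any backend is touched.
        if model not in self.grants.get(token, set()):
            raise AuthError(f"token is not allowed to call {model!r}")
        if model not in self.backends:
            raise KeyError(f"unknown model {model!r}")
        return self.backends[model](prompt)


gateway = AIGateway()
gateway.register_backend("echo-model", lambda prompt: f"echo: {prompt}")
gateway.grant("store-app-token", "echo-model")
print(gateway.complete("store-app-token", "echo-model", "hello"))
```

A real gateway would verify tokens against an identity provider and call remote model APIs; the point here is only that every caller goes through one door.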

Developing MCP and A2A Registries: Core Infrastructure

To manage our AI models and the tools they rely on, we built a private MCP (Model Context Protocol) registry. This was essential for maintaining governance and compliance in our AI operations. The A2A (agent-to-agent) registry facilitated communication between agents within our AI systems, strengthening our operational structure.

We encountered scalability issues initially, but iterative improvements helped us overcome these challenges. This infrastructure now supports our AI's operational and strategic needs.

- Efficient management of AI models with the MCP registry.

- Improved communication with the A2A registry.

- Scalability issues resolved through continuous improvements.
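
As an illustration of what a single registry can hold, the sketch below keeps MCP servers and A2A agents in one catalog, with an owner and an approval flag as governance metadata. The `Registry` class, the field names, and the example URLs are assumptions made for this example, not the actual Amplifon schema.

```python
from dataclasses import dataclass, replace
from typing import Dict, List


@dataclass(frozen=True)
class Entry:
    name: str
    kind: str  # "mcp" for an MCP server, "a2a" for an agent
    url: str
    owner: str
    approved: bool = False  # new entries start unapproved


class Registry:
    """Hypothetical in-memory catalog of MCP servers and A2A agents."""

    def __init__(self) -> None:
        self._entries: Dict[str, Entry] = {}

    def register(self, entry: Entry) -> None:
        if entry.kind not in ("mcp", "a2a"):
            raise ValueError(f"unsupported kind {entry.kind!r}")
        if entry.name in self._entries:
            raise ValueError(f"{entry.name!r} is already registered")
        self._entries[entry.name] = entry

    def approve(self, name: str) -> None:
        # Entries are immutable, so approval stores an updated copy.
        self._entries[name] = replace(self._entries[name], approved=True)

    def discover(self, kind: str) -> List[Entry]:
        """Return approved entries of one kind, sorted by name."""
        found = [e for e in self._entries.values() if e.kind == kind and e.approved]
        return sorted(found, key=lambda e: e.name)


registry = Registry()
registry.register(
    Entry("crm-tools", "mcp", "https://mcp.example.internal/crm", owner="platform-team")
)
registry.register(
    Entry("booking-agent", "a2a", "https://agents.example.internal/booking", owner="retail-team")
)
registry.approve("crm-tools")
print([e.name for e in registry.discover("mcp")])  # only approved MCP servers
```

Keeping both kinds of entry behind the same `register`, `approve`, and `discover` calls is what lets one governance process cover tools and agents alike.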

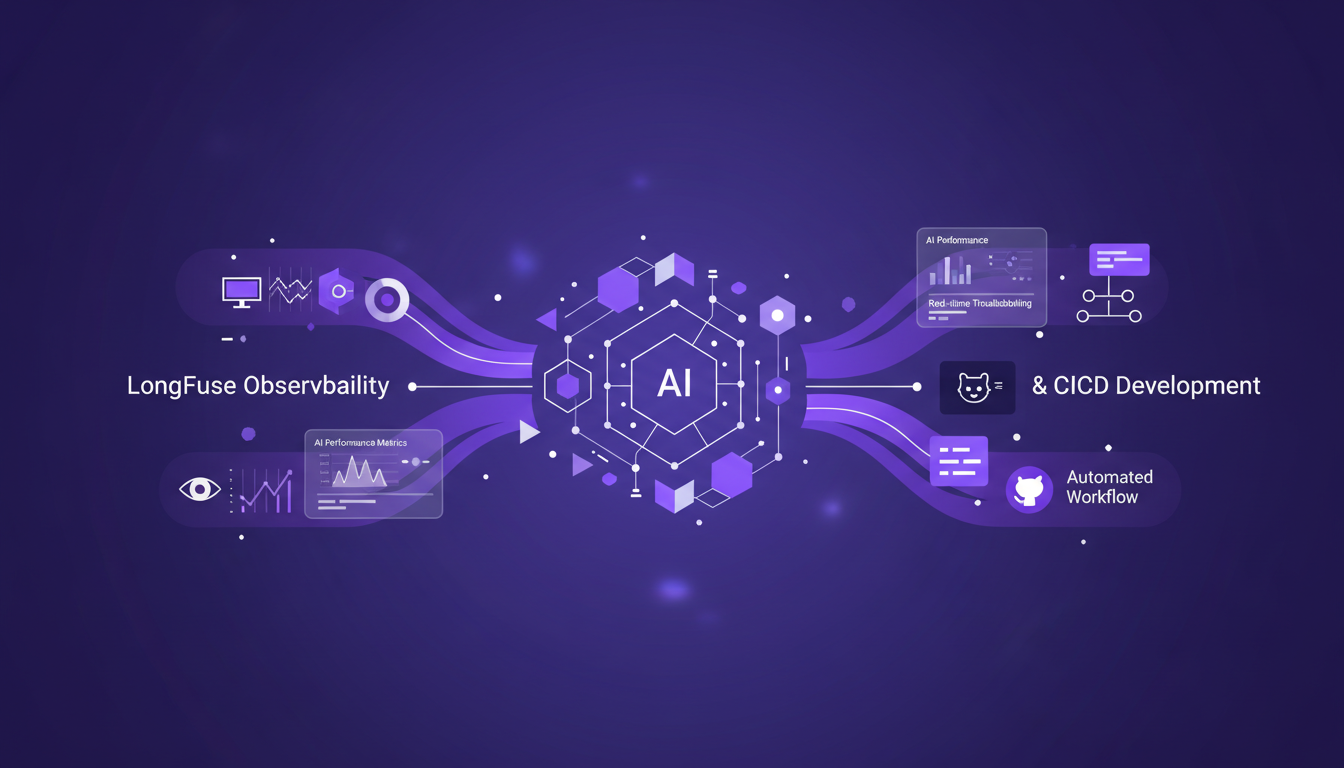

Integrating Observability and Development Tools

Integrating Langfuse improved the observability of our AI processes. With this tool, we could monitor AI performance and troubleshoot in real time. GitHub templates and CI/CD pipelines streamlined our development workflow, though they are no substitute for thorough testing to ensure reliability.

Integration challenges taught us valuable lessons in tool orchestration. I've learned from experience that sometimes it pays to slow down and orchestrate the entire technology stack properly.

- Improved observability with Langfuse.

- Streamlined development workflow with GitHub and CI/CD.

- Importance of thorough testing to ensure reliability.
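
The kind of signal an observability tool collects can be sketched with the standard library alone. The decorator below records latency and success for each call in an in-memory list. It does not use any vendor's API, and `traced`, `TRACES`, and `summarize` are names invented for this example.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# In-memory trace store; a real setup would ship these to an observability backend.
TRACES: List[Dict[str, Any]] = []


def traced(name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
    """Record duration and success/failure of every call to the wrapped function."""

    def decorator(func: Callable[..., Any]) -> Callable[..., Any]:
        @functools.wraps(func)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            ok = False
            try:
                result = func(*args, **kwargs)
                ok = True
                return result
            finally:
                # Runs on both success and failure, so errors are traced too.
                TRACES.append(
                    {"name": name, "ok": ok, "seconds": time.perf_counter() - start}
                )

        return wrapper

    return decorator


@traced("summarize")
def summarize(text: str) -> str:
    # Stand-in for a model call.
    if not text:
        raise ValueError("empty input")
    return text[:20]


summarize("A long customer note that needs a summary")
print(TRACES[-1]["name"], TRACES[-1]["ok"])
```

Because the trace is written in a `finally` block, failed calls show up next to successful ones, which is what makes troubleshooting possible.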

Ensuring Governance and Compliance in AI Solutions

Governance was always a priority to ensure compliance with global standards. We implemented metadata management to track AI model usage. Regular audits and updates were necessary to maintain compliance.

It's not easy to balance innovation with regulatory requirements. But today, our governance framework has become a model for AI compliance.

- Priority on governance for global compliance.

- Metadata management for tracking AI models.

- Regular audits to maintain compliance.
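
Here's a minimal sketch of how usage metadata can feed an audit, assuming each model call is logged with the model name, team, and country. The `UsageRecord` fields, the model names, and the approved list are all hypothetical; a real audit would read from the gateway's logs instead of a hard-coded list.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, Iterable, List, Set


@dataclass(frozen=True)
class UsageRecord:
    model: str
    team: str
    country: str


def usage_by_model(records: Iterable[UsageRecord]) -> Dict[str, int]:
    """Count how many calls each model received."""
    return dict(Counter(r.model for r in records))


def audit(records: Iterable[UsageRecord], approved: Set[str]) -> List[UsageRecord]:
    """Return every record that used a model outside the approved set."""
    return [r for r in records if r.model not in approved]


records = [
    UsageRecord("model-a", "audiology", "IT"),
    UsageRecord("model-a", "retail", "FR"),
    UsageRecord("model-x", "retail", "FR"),
]
print(usage_by_model(records))
print(audit(records, approved={"model-a"}))
```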

Ultimately, the Amplify program enabled us to tackle these challenges and create a robust and efficient AI infrastructure. Innovation should never come at the expense of security and compliance, and that's where our success lies.

Scaling AI at Amplifon was no small feat. Through the Amplify Program, we built a robust infrastructure around the AI Gateway and the MCP and A2A registries. Here's what I learned:

- First, building our own private MCP registry was crucial for managing AI at scale.

- Next, integrating observability tools became essential for real-time performance monitoring.

- Also, maintaining compliance was a constant challenge, but I found effective ways to do it without stifling innovation.

With over 20,000 people across 26 countries, these solutions allowed us to standardize and manage AI on a global scale. Honestly, it’s a real game changer, but watch out for initial implementation costs.

Considering scaling AI in your organization? These strategies worked for us, and I'm confident they can work for you too. Check out the full video for a deeper dive with my colleagues Sonny, Mauro, and Mattia.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He focuses on two areas: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

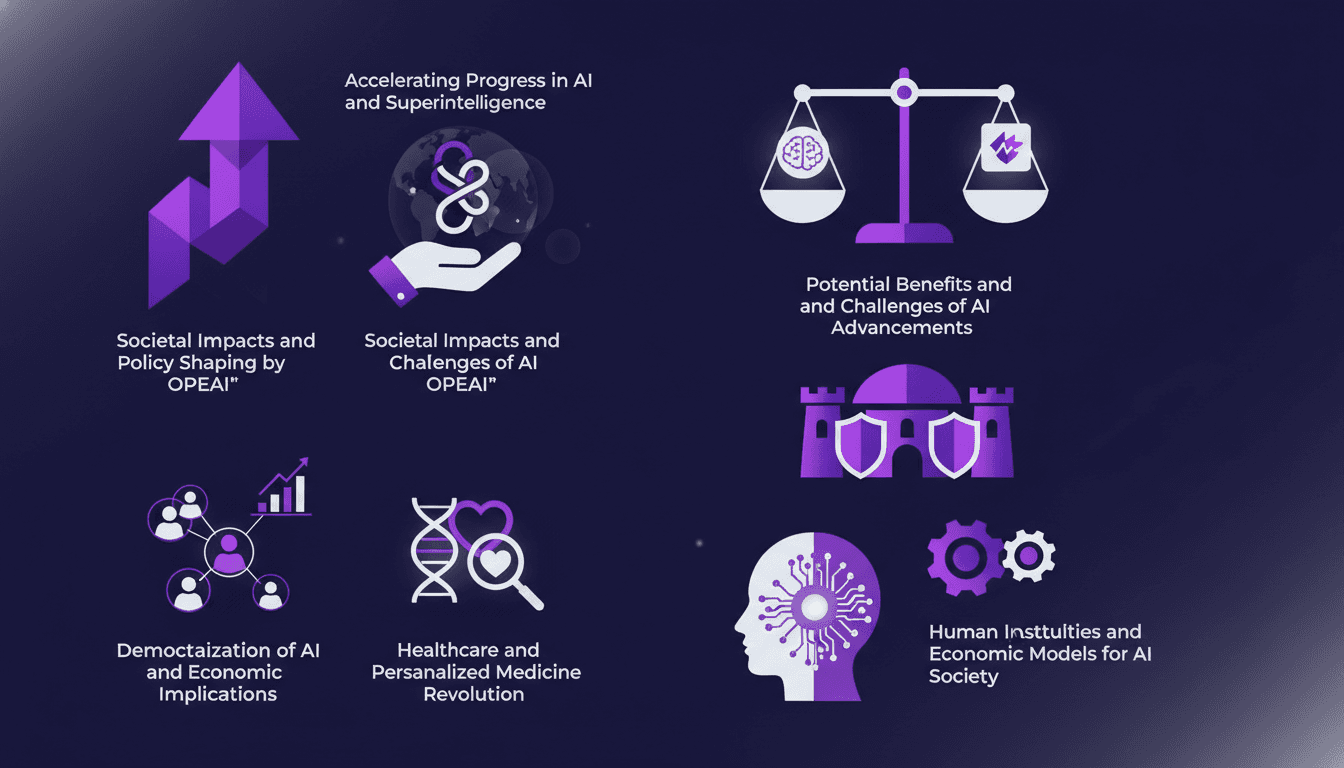

Accelerating AI: Building a Superintelligent Future

I've spent countless hours building AI systems that push boundaries. Imagine accelerating a decade of scientific progress in just one year. That's what Sam Altman and his OpenAI team are showcasing in their talk on the future of AI. We're diving into real-world applications, tangible challenges, and the potential societal impact. From AI democratization to personalized medicine and resilience against threats, the AI era is here. But watch out, there are challenges to tackle. Join me in exploring how we're shaping policies and economic models to integrate AI into our daily lives.

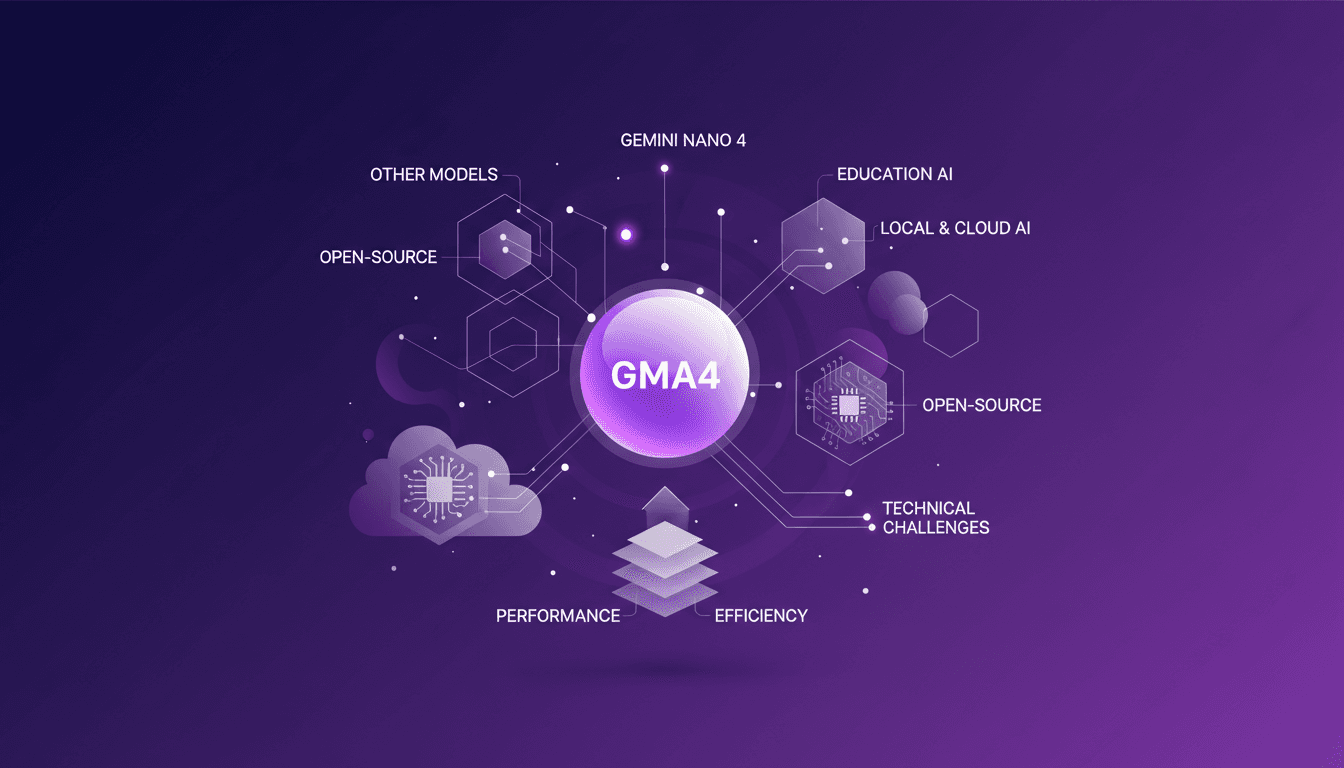

GMA4: Google's Open-Source AI Revolution

When Google released the GMA4 model, I knew it would be a game changer. I dove right into integrating it with Gemini Nano 4 for Android. And let me tell you, it wasn't a walk in the park. GMA4 is not just another AI model. With its open-source structure, it redefines local AI processing. But watch out, there are technical traps to avoid. I got burned several times, especially on performance optimization and efficiency. Yet, when you truly get a handle on these aspects, the impact is massive. Imagine a model 30 times smaller than its competitors but just as powerful. That's GMA4, a revolution in local and cloud AI. We'll talk about the educational opportunities for integrating this technology and what it means for the future of AI.

API Platform Engineering: A Practical Case

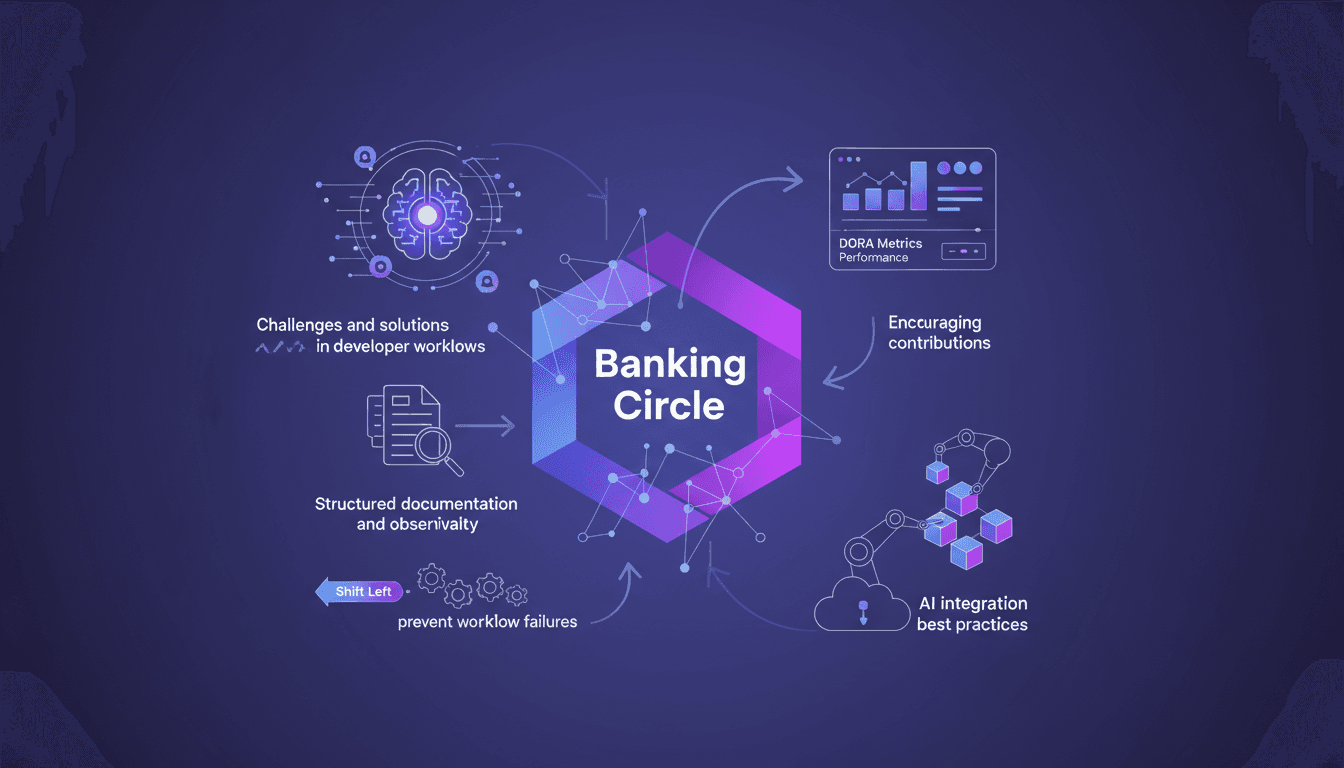

I've been knee-deep in platform engineering at Banking Circle, where we handle a staggering €1 trillion annually. With 700 financial institutions counting on us, our mission is clear: streamline workflows through API-based solutions and AI integration. But it's no walk in the park. Let me show you how we tackle these challenges: self-service, APIs, and AI agents. Our team of 250 engineers is at the forefront, leveraging metrics like Dora to measure success. Dive into how we preempt workflow failures with a 'shift left' approach and encourage contributions to our internal platforms.

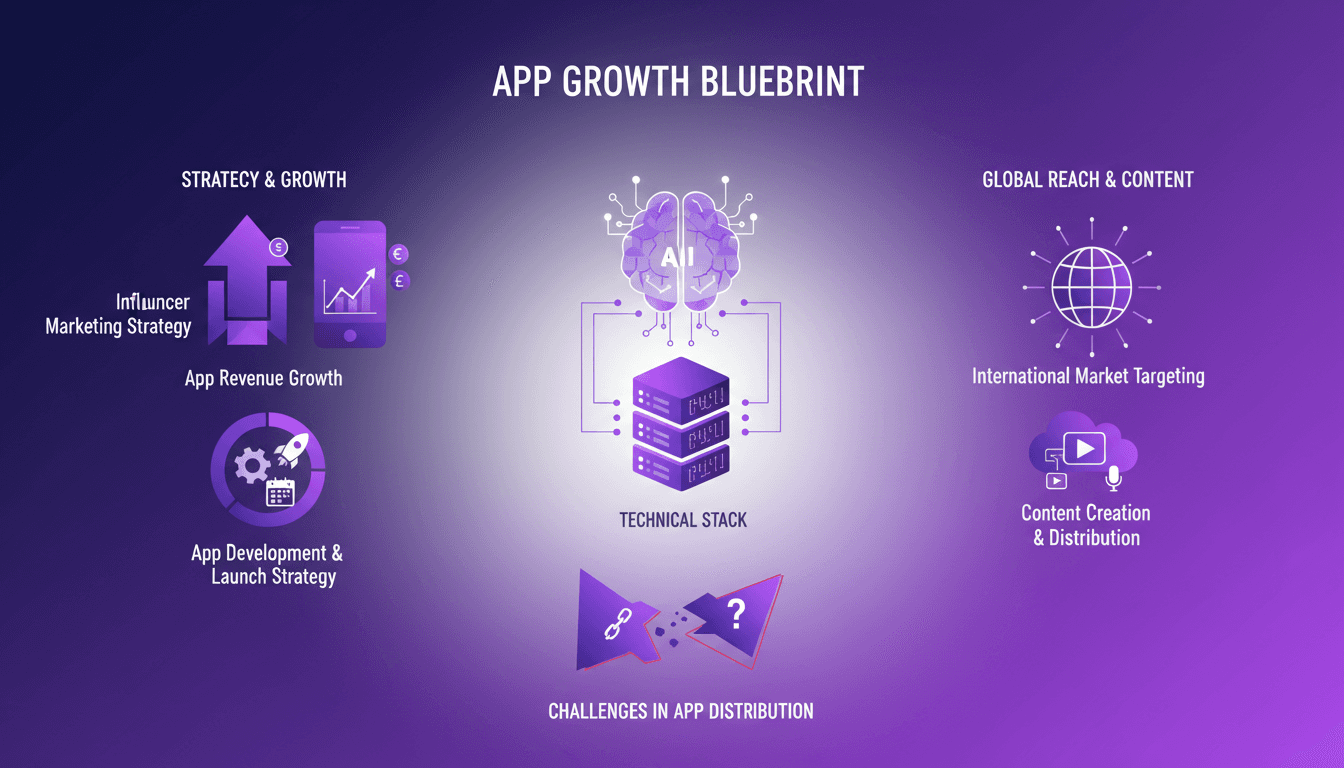

Influencer Strategy: $35K/Month with One App

I turned an app idea into a $35K/month cash machine by partnering with just one influencer. How? By orchestrating an influencer marketing strategy that skyrocketed our revenue by 10,000%. I'll walk you through how I navigated the challenges of app development, international market launch, and powerful content creation. And watch out—there are pitfalls to avoid (I've been burned more than once)!

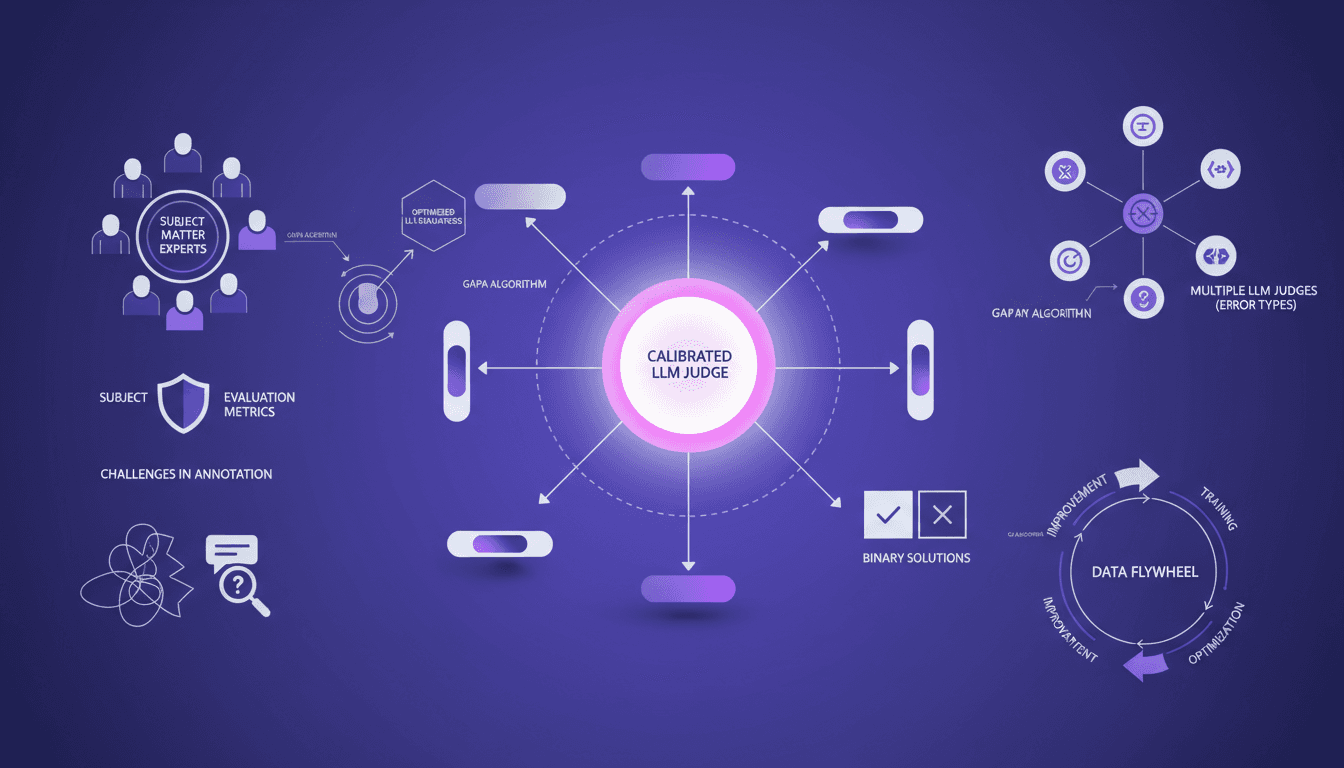

Building LLM Judges: Optimize with GAPA

I've spent years building AI systems, and if there's one thing I've learned, it's that having calibrated LLM judges is crucial. Let me walk you through how I use tools like the GAPA algorithm to optimize these evaluators, ensuring they're not just functional but effective. In AI development, the accuracy and reliability of LLM judges can make or break your system. With tools like GAPA and insights from subject matter experts, we can refine these judges to handle specific error types and continuously improve through a data flywheel approach. I'll detail how experts define evaluation metrics, the challenges of annotating conversation traces, and strategies for building multiple LLM judges for specific error types. With the GI algorithm reaching 61% accuracy on the validation set, we're hitting par to frontier. It's a game changer, but watch out for rushing through the steps.