OpenAI Audio Models: Real-Time Integration

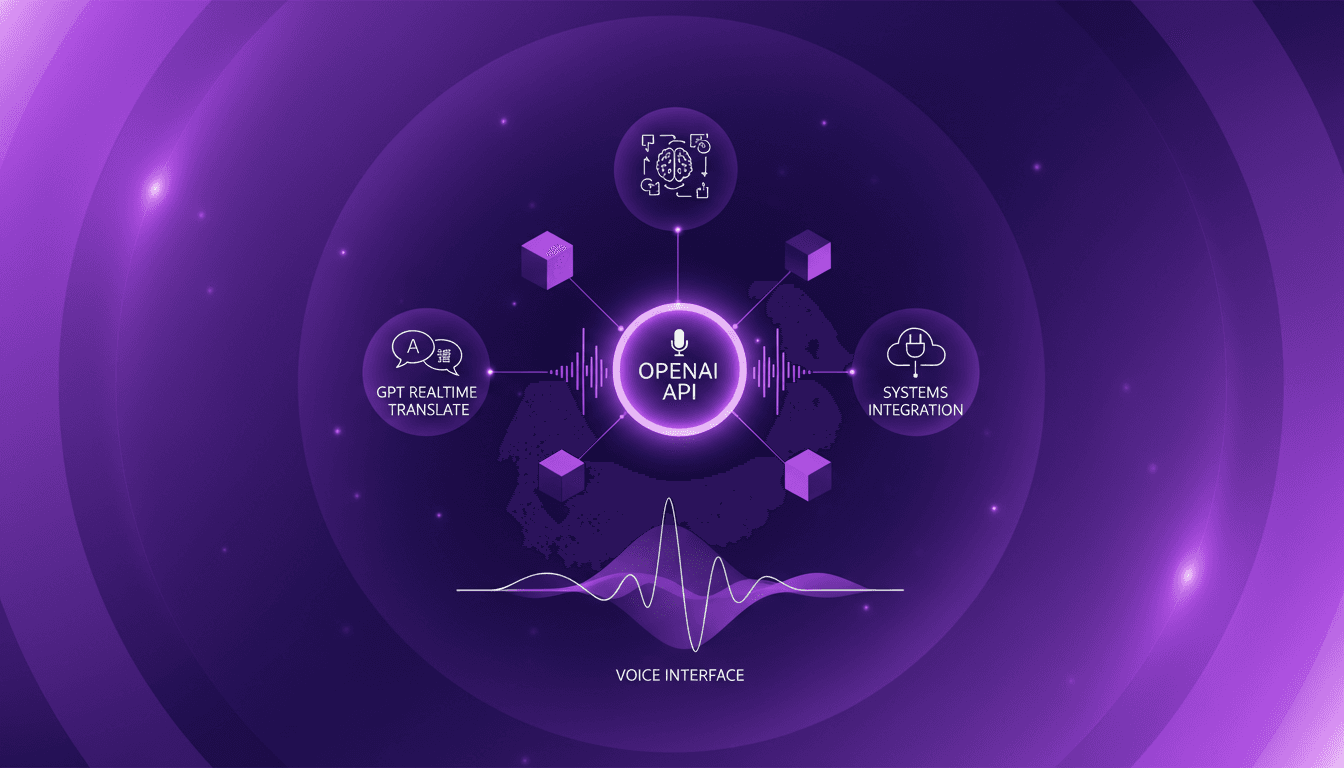


I still remember the first time I integrated voice models into my system. It was chaos, but the results were a game changer. Now, with OpenAI's new real-time audio models, we're taking it to a whole new level. Imagine translating live across 70 languages, or deploying voice agents that reason in real time. That's exactly what these models promise. In this article, I'll show you how to integrate these new solutions into your workflow. First up is the GPT Realtime Translate model for live translation. Then comes the GPT Realtime 2 model, which brings reasoning to voice agents. But watch out for technical terms and language switching: they can turn into a real headache if not managed properly. When orchestrated correctly, though, voice becomes the primary interface. Ready to transform your system? Let's dive in, integrate these solutions, and see their direct business impact.

Getting Started with Real-Time Audio Models

OpenAI's introduction of real-time audio models in its API is a game changer. It's fascinating to see how these models can transform our approach to audio and language tasks. To get started, you need to set up your integration environment correctly. I found that a machine with sufficient processing power is essential, especially when handling real-time audio streams. But that's not all: understanding what these models can actually accomplish is the first step toward a successful integration.

What struck me as particularly impressive is how effortlessly the models handle technical terms without breaking the flow of the conversation. However, watch out for early challenges: latency can become an issue if your network isn't optimized. I overcame this by tweaking my network settings and using servers closer to my location. Think about this before you dive in.
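To tell whether network tuning actually helps, it's worth measuring latency instead of guessing. Here is a minimal sketch, assuming you already log the time between sending an audio chunk and receiving the first response event. The helper names (`percentile`, `summarize_latency`) are hypothetical, and the sample values are illustrative, not measurements from my setup. The helpers compute the median and a nearest-rank 95th percentile.

```python
import math
from statistics import median


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of values <= it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize_latency(samples_ms: list[float]) -> dict[str, float]:
    """Summarize round-trip latencies (milliseconds) collected during a streaming session."""
    return {
        "p50": median(samples_ms),
        "p95": percentile(samples_ms, 95),
        "max": max(samples_ms),
    }


# Illustrative samples only, with one slow outlier.
samples = [180.0, 210.0, 195.0, 640.0, 205.0, 190.0, 220.0, 200.0, 185.0, 215.0]
print(summarize_latency(samples))  # {'p50': 202.5, 'p95': 640.0, 'max': 640.0}
```

Tracking the p95 rather than the average surfaces the occasional slow round trip, which is what users actually notice in a live conversation.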

- Configure adequate processing power

- Optimize network to reduce latency

- Understand model capabilities for seamless integration
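Real-time audio is streamed in small chunks rather than uploaded as one file. Here is a minimal sketch of preparing those chunks. It assumes the API expects 16-bit little-endian mono PCM at 24 kHz, sent base64-encoded inside `input_audio_buffer.append` events, which matches my understanding of the Realtime API documentation. Verify the audio format and the event name against the current reference before relying on them. The 20 ms frame size is an arbitrary choice, and the WebSocket transport is left out.

```python
import base64
import json
import struct

SAMPLE_RATE_HZ = 24_000  # assumption: pcm16 input rate expected by the Realtime API
FRAME_MS = 20            # chunk duration; an illustrative choice for streaming


def float_to_pcm16(samples: list[float]) -> bytes:
    """Convert floats in [-1.0, 1.0] to 16-bit little-endian PCM, clipping out-of-range values."""
    clipped = (max(-1.0, min(1.0, s)) for s in samples)
    ints = [int(round(s * 32767)) for s in clipped]
    return struct.pack(f"<{len(ints)}h", *ints)


def append_events(pcm: bytes, sample_rate: int = SAMPLE_RATE_HZ, frame_ms: int = FRAME_MS) -> list[str]:
    """Split PCM16 bytes into fixed-duration frames, each wrapped in a JSON append event."""
    bytes_per_frame = sample_rate * frame_ms // 1000 * 2  # 2 bytes per 16-bit sample
    events = []
    for start in range(0, len(pcm), bytes_per_frame):
        chunk = pcm[start:start + bytes_per_frame]
        events.append(json.dumps({
            "type": "input_audio_buffer.append",  # assumed event name; check the API reference
            "audio": base64.b64encode(chunk).decode("ascii"),
        }))
    return events


# One second of silence becomes 50 frames of 20 ms each.
events = append_events(float_to_pcm16([0.0] * SAMPLE_RATE_HZ))
print(len(events))  # 50
```

Smaller frames reduce the delay before the model hears speech, but they also mean more events per second on the wire.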

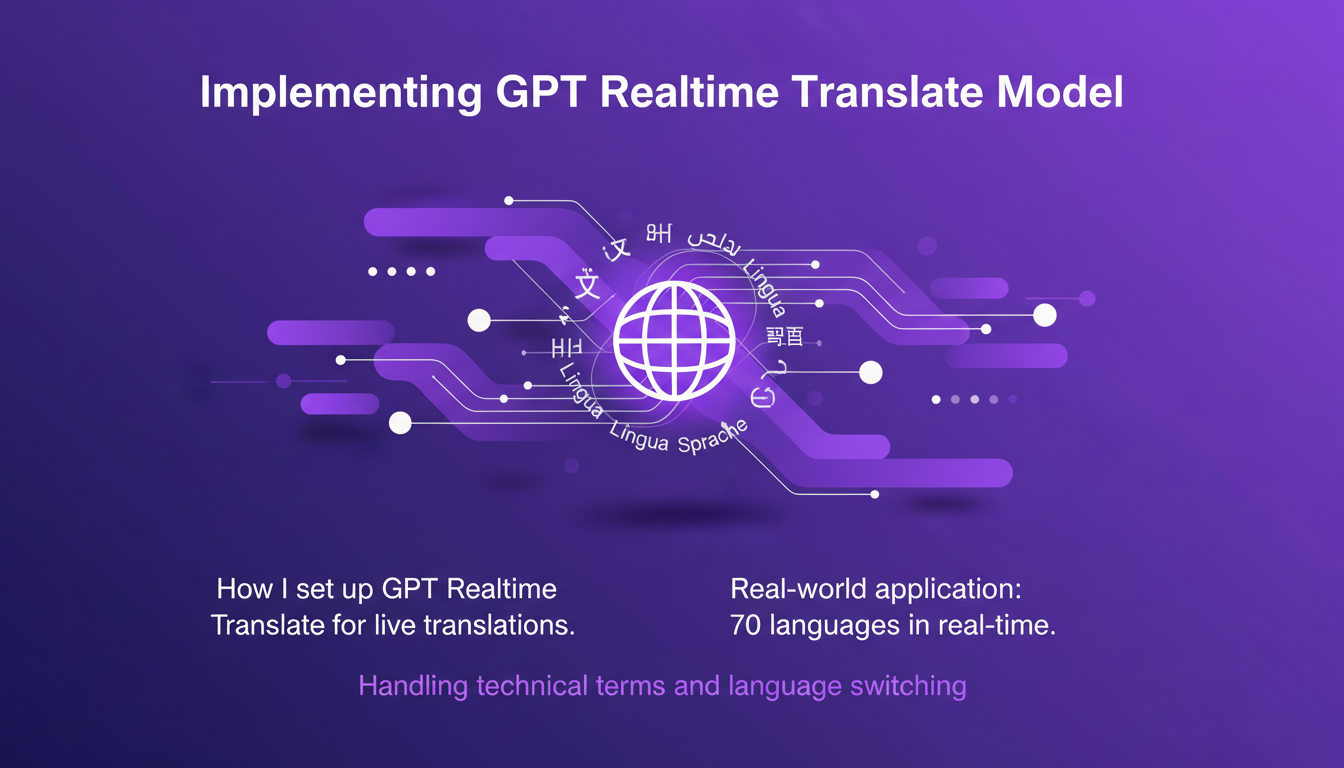

Implementing the GPT Realtime Translate Model

Implementing the GPT Realtime Translate model for live translation is a unique experience. I set it up to provide instant translation across 70 languages. Imagine the reach this offers in contexts like international conferences or multilingual customer support. But beware: switching languages on the fly requires careful handling of technical terms and linguistic context.
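One pragmatic safeguard for technical terms is a glossary check on the transcripts. Here is a minimal sketch, assuming you can get text transcripts of both the source speech and the translation. The glossary and the `missing_terms` helper are hypothetical and not part of the model or the API. The helper flags protected terms that appear in the source but did not survive translation, so you can log them or review them.

```python
import re

# Hypothetical glossary: terms that should come through translation unchanged.
PROTECTED_TERMS = ("Kubernetes", "API", "CRM", "OAuth")


def missing_terms(source: str, translation: str, terms: tuple[str, ...] = PROTECTED_TERMS) -> list[str]:
    """Return protected terms present in the source text but absent from the translation."""
    def contains(text: str, term: str) -> bool:
        # Whole-word, case-insensitive match so "API" does not match inside "rapid".
        return re.search(rf"\b{re.escape(term)}\b", text, re.IGNORECASE) is not None

    return [t for t in terms if contains(source, t) and not contains(translation, t)]


src = "Please sync the CRM through the API before the Kubernetes rollout."
fr = "Veuillez synchroniser le CRM via l'interface avant le déploiement Kubernetes."
print(missing_terms(src, fr))  # ['API']
```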

The model can translate live across 70 different languages, offering natural communication between speakers.

What I've noticed is that the model handles interruptions and rapid language changes very well. However, it can struggle in high-noise environments. To address this, I integrated additional noise filters, which significantly improved translation quality.
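One simple way to cut background noise before it reaches the model is an energy-based noise gate. This is a swapped-in illustration, not necessarily the filtering described above. It mutes any frame whose RMS energy falls below a threshold. The 0.02 threshold and the sample values are illustrative assumptions that you would tune for your microphone and room.

```python
import math


def rms(frame: list[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1.0, 1.0])."""
    return math.sqrt(sum(s * s for s in frame) / len(frame)) if frame else 0.0


def noise_gate(frames: list[list[float]], threshold: float = 0.02) -> list[list[float]]:
    """Replace frames whose RMS energy is below `threshold` with silence.

    This only suppresses low-level noise between utterances; it does nothing
    about noise that overlaps speech.
    """
    return [frame if rms(frame) >= threshold else [0.0] * len(frame) for frame in frames]


hiss = [0.005, -0.004, 0.006, -0.005]  # quiet background noise
speech = [0.3, -0.25, 0.28, -0.31]     # louder voiced segment
gated = noise_gate([hiss, speech])
print(gated[0], gated[1] == speech)  # [0.0, 0.0, 0.0, 0.0] True
```

A gate like this is cheap enough to run on every frame. In genuinely noisy rooms, though, dedicated noise suppression will do far more than a threshold.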

- Live translations in 70 languages

- Handling of technical terms

- Potential issues in noisy environments

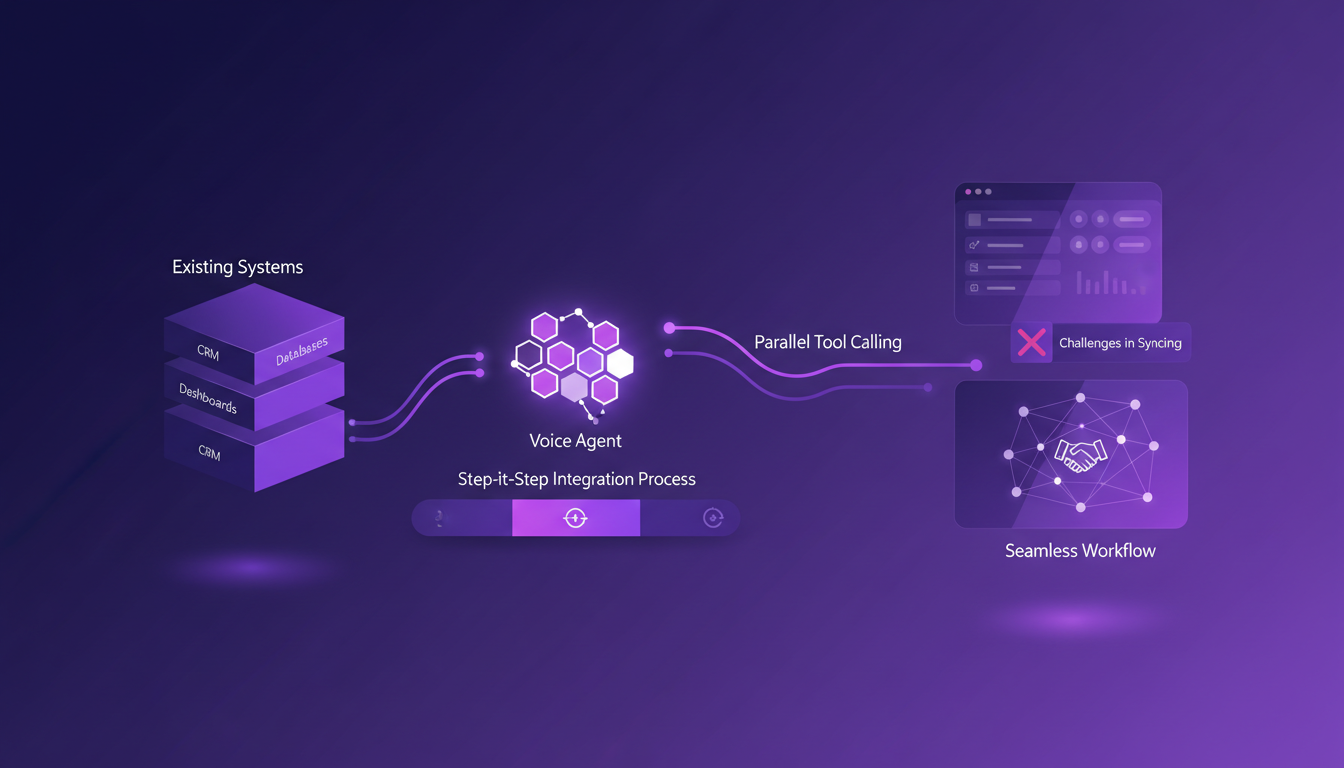

Leveraging GPT Realtime 2 for Intelligent Voice Agents

The GPT Realtime 2 model goes beyond basic voice commands. Integrated into existing systems like CRMs and dashboards, it takes user interaction to a new level. The model reasons in real time, which greatly improves user engagement. But let's be clear: there are trade-offs between processing power and response time.

I integrated this model with our CRM, and the way it reasons and acts in real time is impressive. However, expect heavy compute requirements, especially if you're handling large data volumes. It's a balance between performance and speed.

- Integration with existing systems

- Real-time reasoning for better interaction

- Trade-offs between processing power and response time
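Integrating with a CRM mostly comes down to exposing its operations as tools the agent can call. Here is a minimal sketch, assuming the Realtime API's function-calling flow as I understand it. The session declares tools. The model then emits a function call carrying a `call_id` and JSON-encoded `arguments`, and your code answers with a `function_call_output` item. The `lookup_customer` tool, the in-memory `FAKE_CRM`, and the dispatcher are hypothetical, so check the field names against the current API reference.

```python
import json
from typing import Any, Callable

# Hypothetical tool declaration, in the flat function-tool layout the Realtime API uses (as I understand it).
LOOKUP_CUSTOMER_TOOL = {
    "type": "function",
    "name": "lookup_customer",
    "description": "Fetch a customer's account status from the CRM by email.",
    "parameters": {
        "type": "object",
        "properties": {"email": {"type": "string"}},
        "required": ["email"],
    },
}

# Stand-in for a real CRM client (hypothetical data).
FAKE_CRM = {"ana@example.com": {"plan": "enterprise", "open_tickets": 2}}


def lookup_customer(email: str) -> dict[str, Any]:
    return FAKE_CRM.get(email, {"error": "customer not found"})


HANDLERS: dict[str, Callable[..., dict[str, Any]]] = {"lookup_customer": lookup_customer}


def handle_function_call(item: dict[str, Any]) -> dict[str, Any]:
    """Run the local handler for a model-issued function call and wrap the result
    in a conversation.item.create event carrying a function_call_output item."""
    handler = HANDLERS.get(item["name"])
    if handler is None:
        result = {"error": f"unknown tool {item['name']}"}
    else:
        args = json.loads(item["arguments"])  # arguments arrive as a JSON string
        result = handler(**args)
    return {
        "type": "conversation.item.create",
        "item": {
            "type": "function_call_output",
            "call_id": item["call_id"],
            "output": json.dumps(result),
        },
    }


call = {"name": "lookup_customer", "call_id": "call_123", "arguments": '{"email": "ana@example.com"}'}
print(handle_function_call(call)["item"]["output"])  # {"plan": "enterprise", "open_tickets": 2}
```

If my reading of the event flow is right, you would then send a `response.create` event so the agent can speak the result back to the user.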

Integrating Voice Agents with Existing Systems

Integrating voice agents with current systems requires a methodical approach. I started by mapping out our existing systems, then proceeded with a step-by-step integration process. The main challenge was syncing data with our CRM and dashboards. However, with proper orchestration, it becomes seamless.

The key is to use parallel tool calling to keep the process smooth. But watch out for costs: every call and every additional integration can add up quickly. Still, the efficiency gains are often worth it.
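When the agent requests several independent lookups in the same turn, running them concurrently keeps the voice response snappy. Here is a minimal sketch using Python's `asyncio.gather`. The two handlers are hypothetical stand-ins that sleep to simulate CRM and dashboard API calls. Each call dictionary is assumed to carry a `name`, a `call_id`, and JSON-encoded `arguments`.

```python
import asyncio
import json
import time
from typing import Any


# Hypothetical async handlers standing in for CRM and dashboard API calls.
async def fetch_crm_record(customer_id: str) -> dict[str, Any]:
    await asyncio.sleep(0.2)  # simulate network latency
    return {"customer_id": customer_id, "plan": "pro"}


async def fetch_dashboard_metrics(customer_id: str) -> dict[str, Any]:
    await asyncio.sleep(0.2)  # simulate network latency
    return {"customer_id": customer_id, "weekly_active": True}


HANDLERS = {"fetch_crm_record": fetch_crm_record, "fetch_dashboard_metrics": fetch_dashboard_metrics}


async def run_calls_in_parallel(calls: list[dict[str, Any]]) -> dict[str, str]:
    """Execute every requested tool call concurrently; map call_id -> JSON output."""
    async def run_one(call: dict[str, Any]) -> tuple[str, str]:
        handler = HANDLERS[call["name"]]
        result = await handler(**json.loads(call["arguments"]))
        return call["call_id"], json.dumps(result)

    pairs = await asyncio.gather(*(run_one(c) for c in calls))
    return dict(pairs)


calls = [
    {"name": "fetch_crm_record", "call_id": "a", "arguments": '{"customer_id": "42"}'},
    {"name": "fetch_dashboard_metrics", "call_id": "b", "arguments": '{"customer_id": "42"}'},
]
start = time.perf_counter()
outputs = asyncio.run(run_calls_in_parallel(calls))
elapsed = time.perf_counter() - start
print(sorted(outputs), f"{elapsed:.2f}s")
```

Both simulated 0.2 s calls finish in roughly the time of one, instead of stacking up sequentially. That saved time is what keeps a voice agent from pausing awkwardly mid-conversation.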

- Step-by-step integration process

- Sync challenges with CRM

- Cost considerations and efficiency gains
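A back-of-the-envelope model makes "costs add up" concrete. Here is a minimal sketch in which every rate is a placeholder you would fill in from your provider's pricing page. For simplicity, audio is priced per minute here, although the actual billing unit may differ (audio tokens, for example). The `UsageRates` and `monthly_cost` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UsageRates:
    """Placeholder unit prices; replace with current figures from your provider."""
    audio_per_minute: float  # cost per minute of streamed audio (simplified billing unit)
    per_tool_call: float     # cost per downstream CRM/dashboard API call


def monthly_cost(conversations: int, avg_minutes: float, tool_calls_per_conv: float, rates: UsageRates) -> float:
    """Estimate monthly spend: audio time plus every tool call the agent makes."""
    audio = conversations * avg_minutes * rates.audio_per_minute
    tools = conversations * tool_calls_per_conv * rates.per_tool_call
    return audio + tools


# Illustrative rates only, not real prices.
rates = UsageRates(audio_per_minute=0.10, per_tool_call=0.002)
base = monthly_cost(10_000, 3.0, 4, rates)
more_tools = monthly_cost(10_000, 3.0, 12, rates)
print(round(base, 2), round(more_tools, 2))  # 3080.0 3240.0
```

Tripling the tool calls per conversation in this toy example adds 160 to the monthly bill. That's the kind of creep to watch for as you wire in more integrations.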

The Future of Voice as a Primary Interface

With these advancements, voice is poised to become the primary interface. These models can radically reshape how users interact with products. Preparing businesses for these advancements is crucial for staying competitive. But let's not forget to balance innovation with practical implementation.

It's fascinating to see how these models can not only preserve context but also act directly within the products we already use. Voice is on the brink of becoming the main channel, and it's exciting to think about what we can build with these new models.

- Voice as the primary interface

- Reshaping user interaction

- Balance between innovation and practical implementation

So, after setting up and integrating OpenAI's real-time audio models, I can say we've really hit a turning point in how we interact with technology. First, the GPT Realtime Translate model is a real feat: translating in real time across 70 languages is just massive for international meetings. Then, the GPT Realtime 2 model for intelligent voice agents adds a layer of reasoning that's a game changer. But watch out, handling technical terms and language switching can be tricky.

Look, with these models, we're not just talking efficiency anymore; we're talking about a radical transformation. Ready to transform your workflow with voice integration? Start experimenting with these models and see the impact firsthand. And if you really want to grasp how this can integrate into your daily routine, I recommend watching the full video here: YouTube link.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

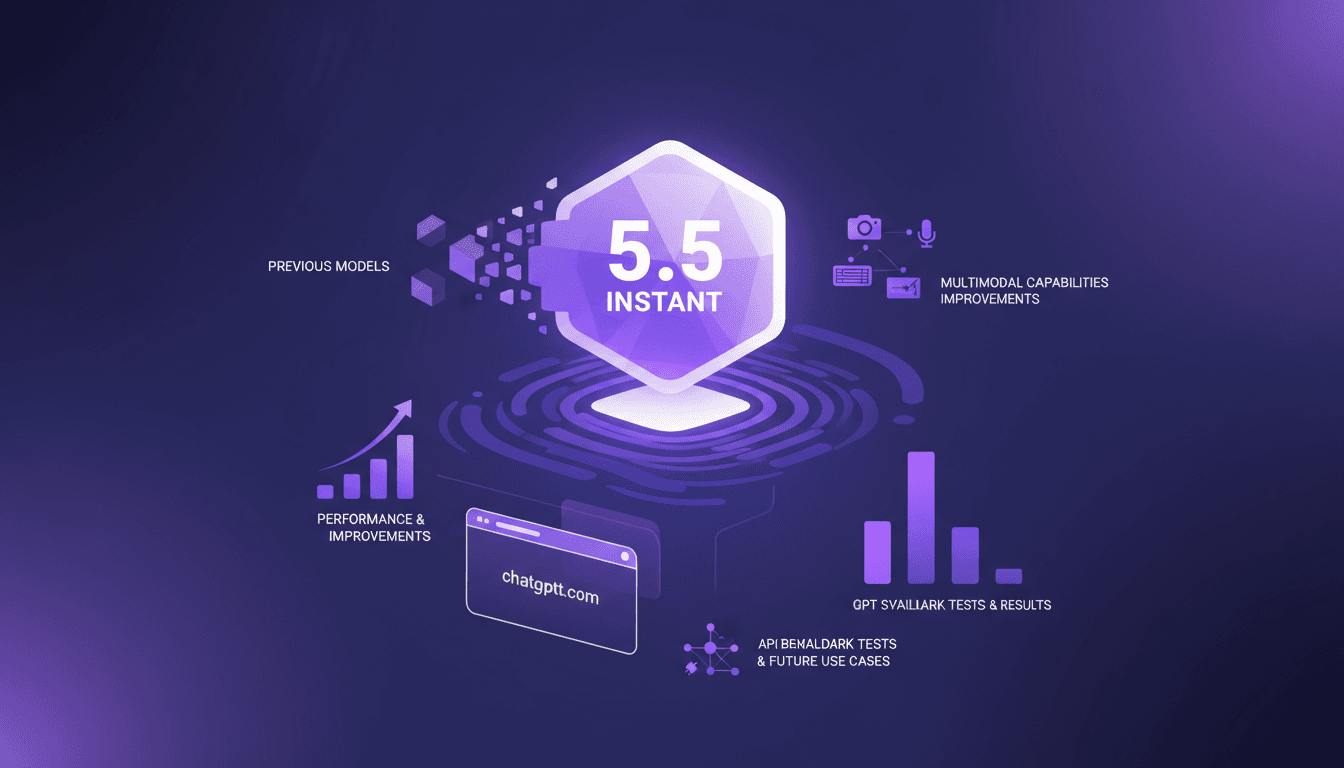

GPT 5.5 Instant: Revolution and Comparison

I've been diving deep into OpenAI's latest release, the GPT 5.5 Instant model. It's not just another upgrade; it's a genuine game changer in the AI world. Let me walk you through what I've discovered. With its multimodal capabilities and performance enhancements, the promises are big. But how does it really stack up against its predecessors? I'll show you how it performs in benchmark tests, how its API might revolutionize our future use cases, and why it might just outdo the Claude Haiku 4.5 model. Get ready, because this journey is intriguing.

IBM Granite ASR: Setup and Optimization

I dove into IBM's Granite Series ASR models to see if they're as fast as they claim. Spoiler: they're impressive, but let's break it down. With AI-driven ASR models becoming crucial for real-time applications, IBM's Granite Series promises speed and accuracy. But how do they really perform in a practical setup? I connect my environment, set up the technical requirements, and put the Granite Speech 4.1 model to the test. Result: a 5.33 word error rate and 95% accuracy. But watch out, there are trade-offs. Set it up right or you'll get disappointed. It's a balancing act between performance and resources.

GPT-5.5 Instant: What's New and Improved

I dove into the new GPT-5.5 Instant, and let me tell you, it's a game changer. But like any tool, it has its quirks. Transitioning from GPT-5.3 to 5.5 isn't as straightforward as it seems. I'll break down how I navigated this technological leap. With this update, OpenAI is pushing us further into AI capabilities. Whether you're a free or paid user, these changes have a direct impact on our everyday applications. Let's dissect the new features of the 5.5 model, the performance enhancements, and I'll share my tips for getting the most out of this advancement.

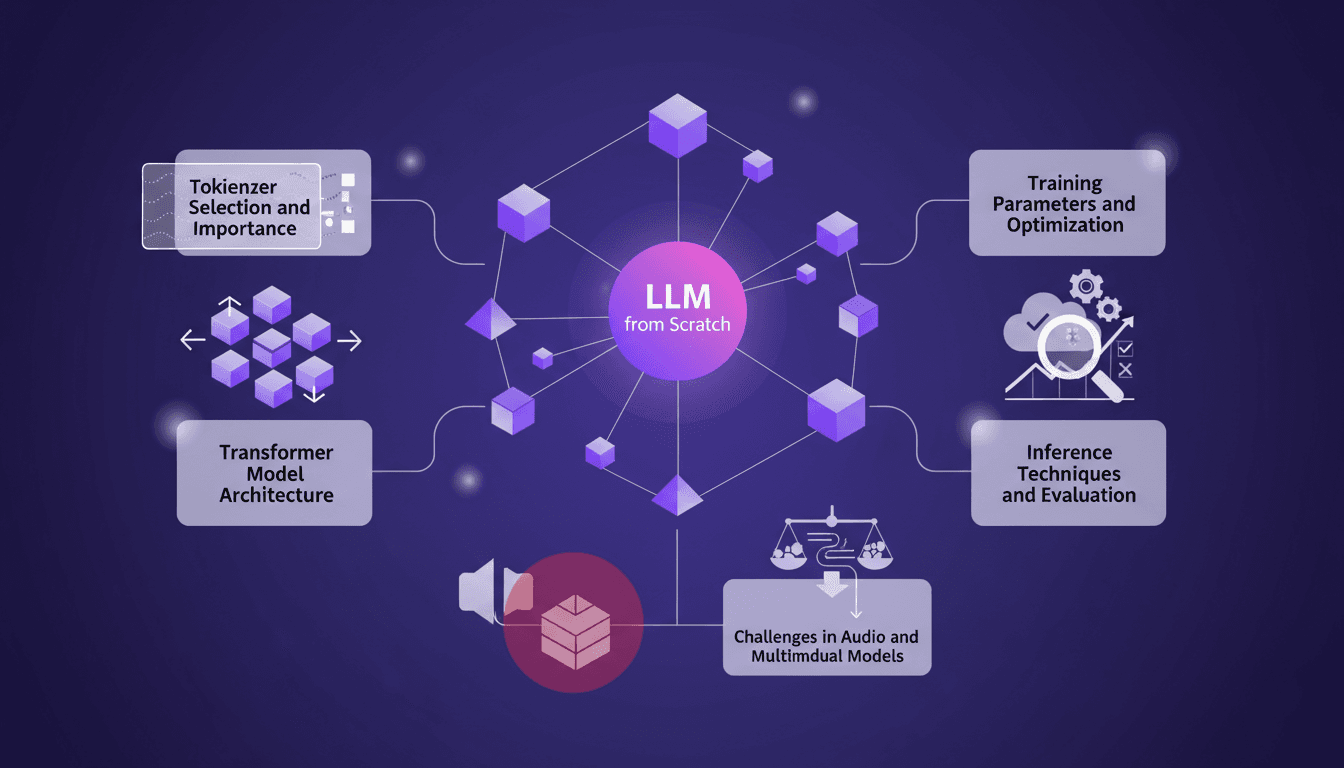

Training an LLM from Scratch: Practical Guide

I remember the first time I decided to train a large language model from scratch. It felt like climbing a mountain with no map. But once you get the hang of it, it's like orchestrating a symphony. In this guide, I'll take you through my journey of building an LLM locally, inspired by Andre Karpathy's Nano GPT. We'll dive into tokenizer selection, Transformer model architecture, training parameters, and much more. I'll share the mistakes I made, the solutions I found, and how I optimized for efficiency. This is a practical guide for anyone wanting to truly understand each step of the process without wasting time on unnecessary details.

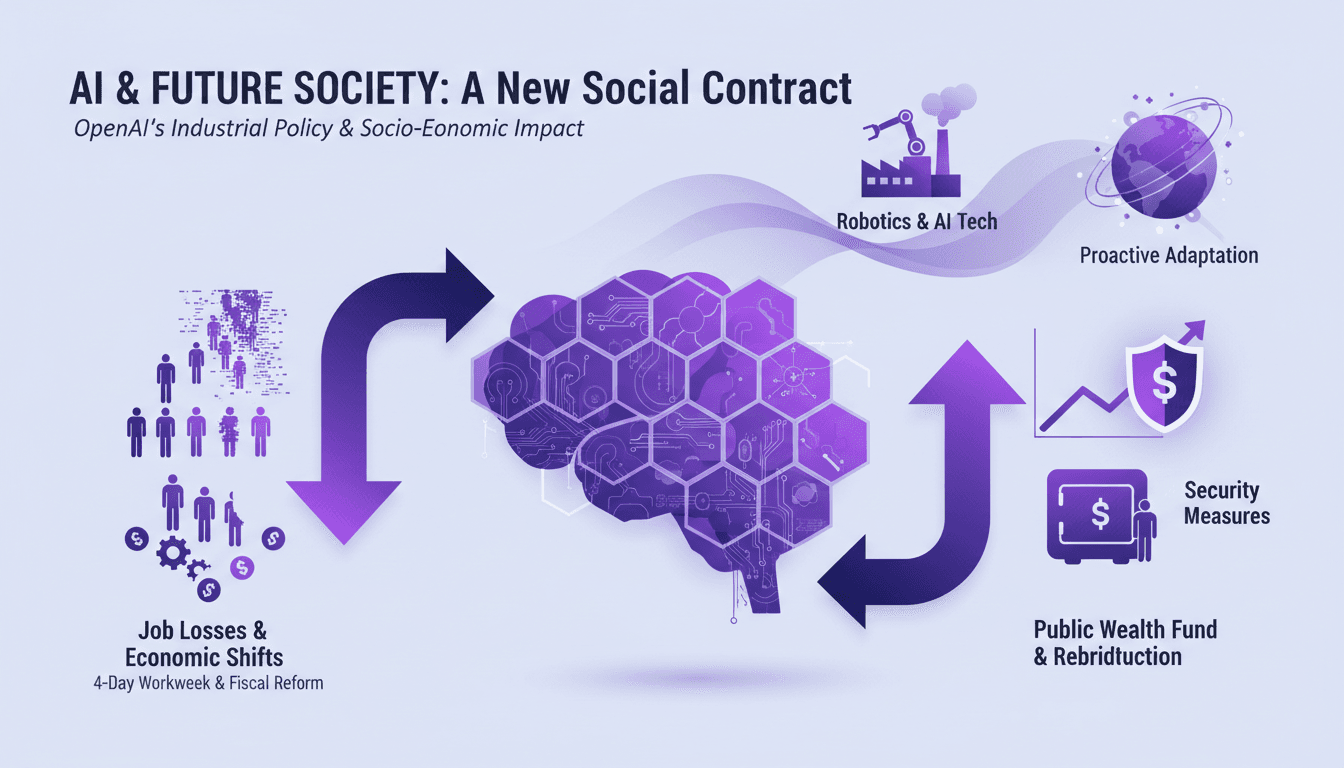

AI's Socio-Economic Impact: What You Need to Know

I've been in the trenches with AI, watching it reshape industries and redefine jobs. This isn't just theory—it's happening now. Let's dive into AI's socio-economic impact, especially LAGI. We're talking job losses, new workweek proposals, and even a public wealth fund to redistribute AI gains. If you're not adapting, you're already behind. Time is ticking—not in decades, but months before these changes become our reality. How do we gear up for this economic shift? We'll explore OpenAI's industrial policies and security measures for advanced models, alongside breakthroughs in robotics. Get ready for a candid discussion about the challenges and opportunities this tech brings.