Flux Models: Revolutionizing Visual AI

I remember the first time I saw Flux in action. It felt like magic: images generated and edited in less than a second. But it's not just about speed; it's about the doors it opens for visual AI. With Stephen Batifol at the helm, Black Forest Labs is redefining what's possible, and Flux models unlock capabilities we thought were out of reach. Imagine manipulating images in real time, with no perceptible lag.

This article covers the mechanics of these models, the challenges we faced, and the future they promise. Flux 2 brought spectacular performance gains, but not without difficulty: training generative models is no walk in the park, and we had to rethink our approach, especially with Selfflow for real-time multimodal generative models. No academic fluff here; this is practical, lived experience with a direct impact on how we work with visual AI.

Introduction to Black Forest Labs and Flux Models

Working at Black Forest Labs (BFL) means being part of a technological revolution. From the start, BFL has aimed for disruptive advances, and the Flux models marked a turning point in 2024. Flux 1, our first model, redefined industry standards by combining image generation and editing. The team behind it has accumulated over 200,000 academic citations. This breakthrough owes much to Stephen Batifol, who steered our research toward concrete applications and tangible results.

Flux 1 was released in August 2024 and was already a major breakthrough. It was the first model to enable open-source image editing, fusing text and visuals. With it, we surpassed existing models while offering a faster, more accessible solution.

"The Flux models have been a game changer in the sector of image editing and generation."

Advancements in Image Generation and Editing with Flux 2

Moving to Flux 2 was like going from a scooter to a rocket. Where previous models took 40 to 50 seconds to generate or edit an image, Flux 2 does it in less than a second. Imagine the impact in sectors like finance, where time is crucial.

Flux 2 can edit up to 10 images simultaneously, a significant time saver. Not everything is perfect, though: output quality can still suffer when images or requests are very complex. It's a powerful tool, but it has limits and calls for judicious use.

- Generates and edits images in under a second.

- Edits up to 10 images simultaneously.

- Large savings in time and cost compared with slower models.
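
To put those latency figures in perspective, here is a back-of-the-envelope sketch. The 40 to 50 second range comes from the text above. The 1 second figure for Flux 2 is an assumed upper bound, since the text only says "less than a second", and the function names are purely illustrative.

```python
def images_per_hour(seconds_per_image: float) -> float:
    """Throughput of one worker processing images one after another."""
    return 3600.0 / seconds_per_image


def min_speedup(old_latency_s: float, new_latency_s: float = 1.0) -> float:
    """Lower bound on the speedup when new_latency_s is an upper bound."""
    return old_latency_s / new_latency_s


# Figures from the text: earlier models took 40 to 50 s per image.
OLD_LATENCY_RANGE_S = (40.0, 50.0)
# Assumption: treat "less than a second" as a 1 s upper bound.
NEW_LATENCY_UPPER_S = 1.0

if __name__ == "__main__":
    for old in OLD_LATENCY_RANGE_S:
        print(
            f"{old:.0f} s per image: at least "
            f"{min_speedup(old, NEW_LATENCY_UPPER_S):.0f}x faster, "
            f"{images_per_hour(old):.0f} vs at least "
            f"{images_per_hour(NEW_LATENCY_UPPER_S):.0f} images per hour"
        )
```

Under these assumptions, a single sequential worker goes from fewer than a hundred images per hour to several thousand, which is where the time and cost savings come from.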

Challenges and Solutions in Training Generative Models

Training generative models is a bit like juggling with live grenades. Every mistake can be costly. At BFL, we've encountered numerous challenges, but we've adapted our approach through innovative solutions.

External alignment has become a key tool: with it, our models converge and reduce their loss 70 times faster. However, the approach is constrained by the external encoders it relies on, and by specialized modalities. Finding the balance between innovation and practical constraints has been a valuable lesson.

- Use of external alignment to improve convergence speed.

- Importance of balancing innovation with practical constraints.

- Lessons learned: never underestimate the challenges of scaling.
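
The text does not spell out how external alignment is implemented. One plausible reading is an auxiliary loss that pulls the generative model's intermediate features toward those of a frozen external encoder. The sketch below illustrates that idea only and should not be read as BFL's actual method: the function names, the cosine-based loss, and the weight `lam` are all hypothetical.

```python
import math
from typing import Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def alignment_loss(model_feats: Sequence[Vector],
                   encoder_feats: Sequence[Vector]) -> float:
    """Mean of (1 - cosine similarity) over paired feature vectors.

    0.0 means the model's features point the same way as the external
    encoder's; 2.0 means they point in opposite directions.
    """
    if not model_feats or len(model_feats) != len(encoder_feats):
        raise ValueError("need two non-empty feature lists of equal length")
    total = sum(1.0 - cosine_similarity(m, e)
                for m, e in zip(model_feats, encoder_feats))
    return total / len(model_feats)


def total_loss(generation_loss: float,
               model_feats: Sequence[Vector],
               encoder_feats: Sequence[Vector],
               lam: float = 0.5) -> float:
    """Generation loss plus a weighted alignment term (lam is hypothetical)."""
    return generation_loss + lam * alignment_loss(model_feats, encoder_feats)
```

The sketch also makes the limitation mentioned above concrete: the alignment term can only be as informative as the external encoder's features, so a modality without a good pretrained encoder gets little benefit from it.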

Selfflow: A New Approach to Multimodal Generative Models

Selfflow is our answer to the limits of traditional models. By integrating multiple modalities seamlessly, Selfflow enriches AI applications by combining representation learning and generation in the same flow.

This model is particularly effective for audio, image, and video applications, where it outperforms traditional methods. It is not without limits, however: integrating several modalities is complex and can lead to uneven performance across them. We are continuously working to improve this.

- Seamless integration of multiple modalities.

- Improved performance in various fields (audio, images, video).

- Current limitations: complexity of integration.

Real-Time Image Editing and Generation: The Future

Today, real-time image editing is a reality thanks to the Flux models. This has revolutionized sectors like robotics and visual media, enabling instant interaction and reaction.

However, as adoption grows, new challenges arise, particularly around scalability and resource management. Still, I am convinced that the future of visual AI and robotics lies in these real-time technologies, which add a new dimension to human-machine interaction.

- Significant impact on robotics and visual media.

- Challenges: scalability and resource management.

- Promising future for real-time visual AI.

Diving into Flux models, I realized they're not just about tech achievements; they're a peek into the future of visual AI. Generating and editing images in less than a second with Flux 2? That's game-changing. But let's be real, training these generative models isn't a walk in the park. There are challenges, but solutions like Selfflow are moving us forward. And seeing the BFL team with 200,000 academic citations already? That's reassuring.

- Flux 2 delivers image generation and editing in under a second.

- Flux 1, released in 2024, marked a pivotal point.

- Selfflow's multimodal models open new doors.

The future is bright, especially if we keep pushing boundaries. I encourage you to dive deeper into Flux models and explore their potential applications in your field. Check out Stephen Batifol's full video on "FLUX, Open Research, and the Future of Visual AI" for more insights: YouTube link.


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Google's $40B Cloud Move: Impacts Unveiled

I never thought I'd see Google throw $40 billion at a cloud competitor. But here we are, and it's shaking up the tech landscape like never before. I'm diving into how this move, alongside advancements in AI and robotics, is reshaping the industry. We'll unpack Google's massive investment, explore the performance of cutting-edge AI models like Happy Horse and Grock 4.3, and examine the latest innovations in robotics. We'll also touch on tech giants' infrastructure investments and a new approach to AI collaboration.

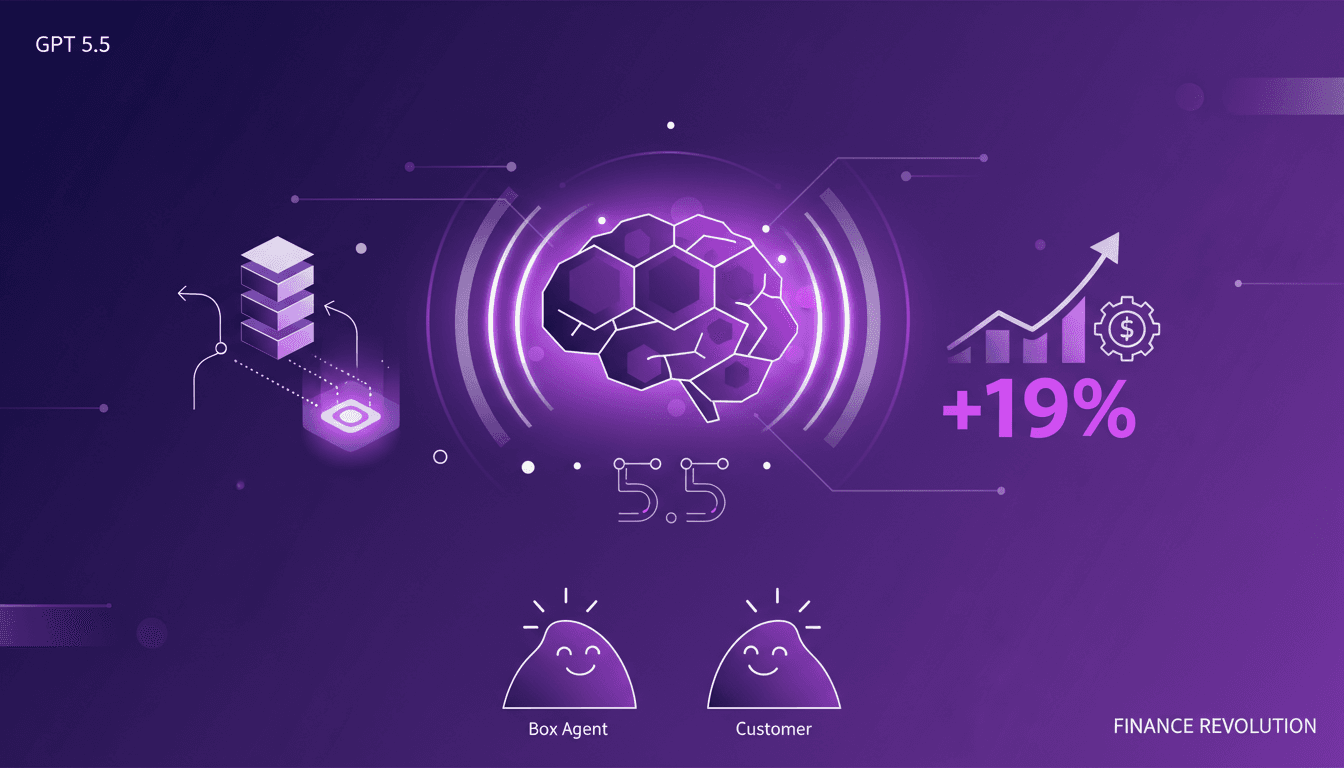

GPT 5.5: Transforming Finance Sector

When I first integrated GPT 5.5 into my workflow, it was like adding a turbocharger to a classic engine. Tasks that used to take hours were suddenly done in minutes, and the precision was off the charts. With the arrival of GPT 5.5, we're witnessing a paradigm shift in how AI handles complex reasoning and data tasks, especially in the finance sector. We're looking at a 19 percentage point improvement from the previous version, transforming our approach to daily efficiency and quality.

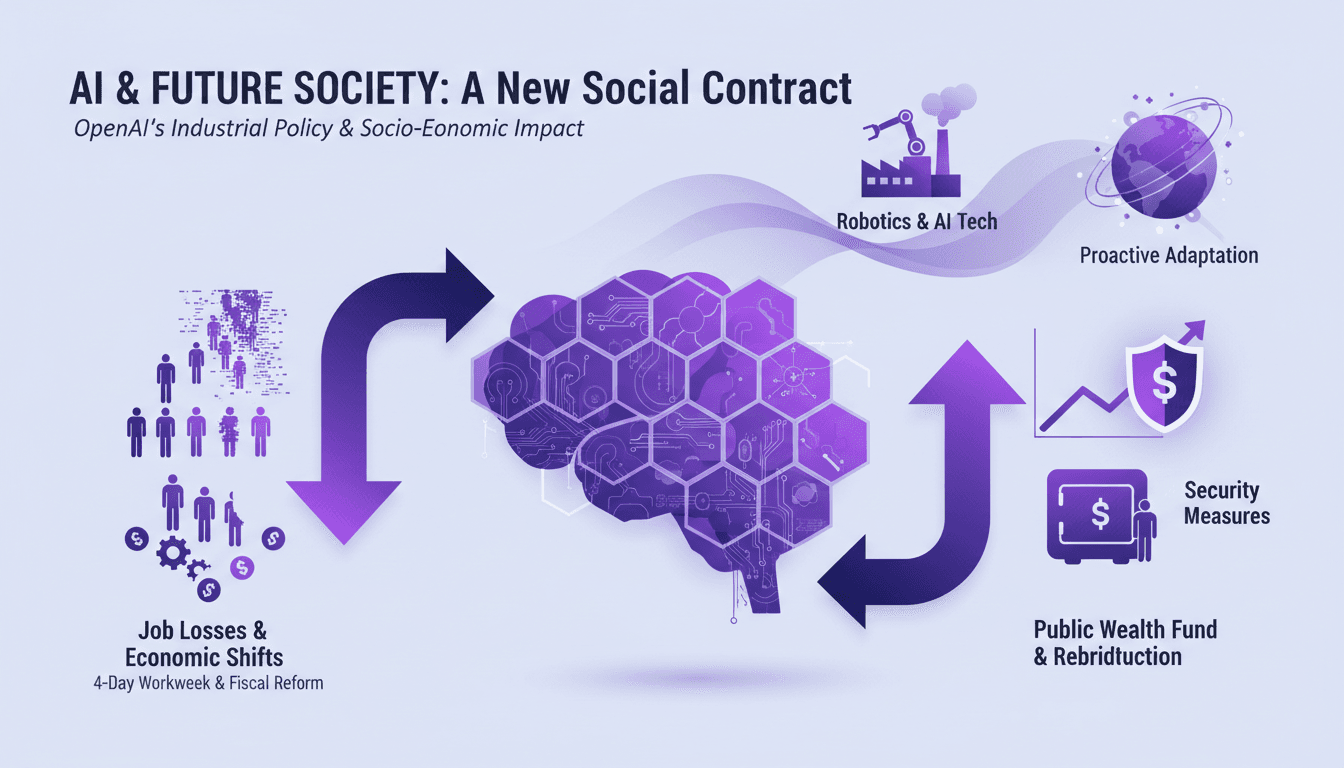

AI's Socio-Economic Impact: What You Need to Know

I've been in the trenches with AI, watching it reshape industries and redefine jobs. This isn't just theory—it's happening now. Let's dive into AI's socio-economic impact, especially LAGI. We're talking job losses, new workweek proposals, and even a public wealth fund to redistribute AI gains. If you're not adapting, you're already behind. Time is ticking—not in decades, but months before these changes become our reality. How do we gear up for this economic shift? We'll explore OpenAI's industrial policies and security measures for advanced models, alongside breakthroughs in robotics. Get ready for a candid discussion about the challenges and opportunities this tech brings.

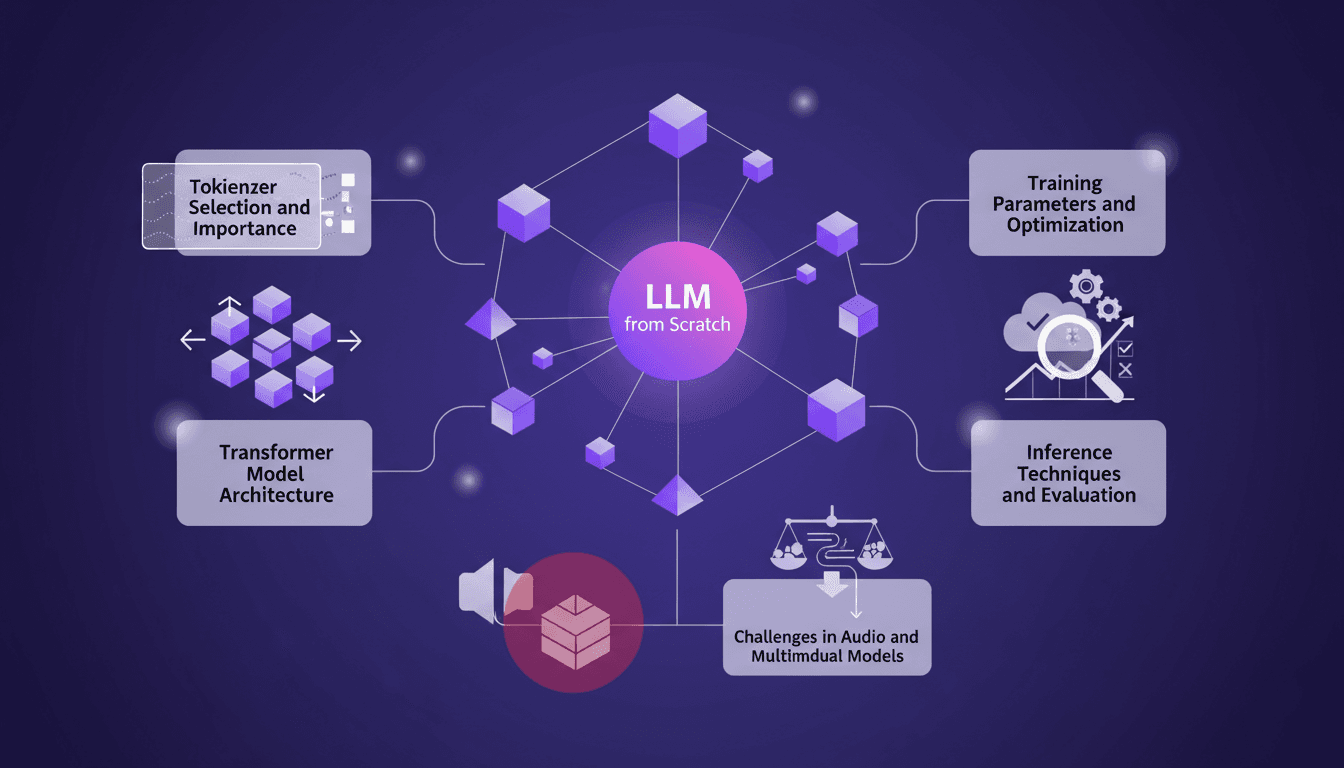

Training an LLM from Scratch: Practical Guide

I remember the first time I decided to train a large language model from scratch. It felt like climbing a mountain with no map. But once you get the hang of it, it's like orchestrating a symphony. In this guide, I'll take you through my journey of building an LLM locally, inspired by Andre Karpathy's Nano GPT. We'll dive into tokenizer selection, Transformer model architecture, training parameters, and much more. I'll share the mistakes I made, the solutions I found, and how I optimized for efficiency. This is a practical guide for anyone wanting to truly understand each step of the process without wasting time on unnecessary details.

Token Maxing: Building Software Efficiently

I returned to coding after years in management, and it felt like coming home. But the landscape had shifted. Tools evolved, and so did my approach. In this journey, I'll show you how I developed 'Gary's List' and tackled the challenge of token maxing. We'll dive into my plan-ge-review method and the impact of personal AI. This is a hands-on guide to navigating modern software development, comparing tools, and reflecting on quality education. And yes, I spent 200 dollars on a Claude Code Max account, but the payoff was worth it.