Self-Improving AI Models: Revolution or Risk?

I've been on the front lines of AI development, and self-improving AI models like MiniMax's M2.7 are shaking things up. Initially skeptical, I integrated M2.7 into our workflows, and the impact was undeniable. As the AI industry buzzes with excitement, how do these models really stack up in practical applications? This article looks at that question, compares M2.7 with models like Gemini 3.1 and ChatGPT 5.4, and analyzes their role in transforming businesses.

I've been deep in the trenches of AI development for years, and when I first heard about self-improving models like MiniMax's M2.7, I was skeptical. After integrating them into our workflows, I can't deny the impact they've had. Imagine a model that learns and improves without supervision: M2.7 ran more than 100 improvement iterations without human intervention. That's the promise, and it's fascinating. It's not all perfect, though. Set against Google's Gemini 3.1 or ChatGPT 5.4, the differences become clear. These models improve efficiency, but they also raise questions about autonomy and about how far human intervention should be reduced. I'll share how it really works, the pitfalls to avoid, and how it's changing perspectives in industry and research. It's not just hype, but there are limits you shouldn't overlook.

Understanding Self-Improving AI Models

With self-improving AI models, machines don't just follow instructions; they rewrite them. Take the MiniMax M2.7 model, for instance. In my experience, this model represents a significant breakthrough: it conducted over 100 autonomous iterations without any human intervention. I've seen this firsthand. It's akin to a race car that upgrades itself on every lap.

MiniMax used this model to run a continuous improvement process. At each iteration, the model proposed hypotheses, tested them, and adjusted its methods. The result was a 30% improvement on MiniMax's internal tests. That's massive when you consider that all of this happened without human intervention. Automation at this level changes how we design AI.
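The propose, test, adjust cycle described above can be sketched in a few lines. This is only an illustration of the general idea, not MiniMax's actual method: the `evaluate` benchmark, the `lr` setting, and the `propose` and `self_improve` helpers are all hypothetical stand-ins invented for this example.

```python
import random


def evaluate(config):
    """Hypothetical benchmark (assumption): higher is better, best at lr = 0.1."""
    return 1.0 - abs(config["lr"] - 0.1)


def propose(config, rng):
    """Propose a hypothesis: a small random change to one setting."""
    candidate = dict(config)
    candidate["lr"] = max(0.0, candidate["lr"] + rng.uniform(-0.05, 0.05))
    return candidate


def self_improve(config, iterations=100, seed=0):
    """Run the loop with no human in it: propose, test, keep only improvements."""
    rng = random.Random(seed)
    best, best_score = config, evaluate(config)
    history = [best_score]  # best score after each iteration
    for _ in range(iterations):
        candidate = propose(best, rng)   # propose a hypothesis
        score = evaluate(candidate)      # test it
        if score > best_score:           # adjust only when it helps
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


best, score, history = self_improve({"lr": 0.5})
print(f"start={history[0]:.3f} final={score:.3f}")
```

Because a change is kept only when the score improves, the best score can never go down between iterations. The flip side is that everything depends on the benchmark: if `evaluate` measures the wrong thing, the loop optimizes the wrong thing just as diligently.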

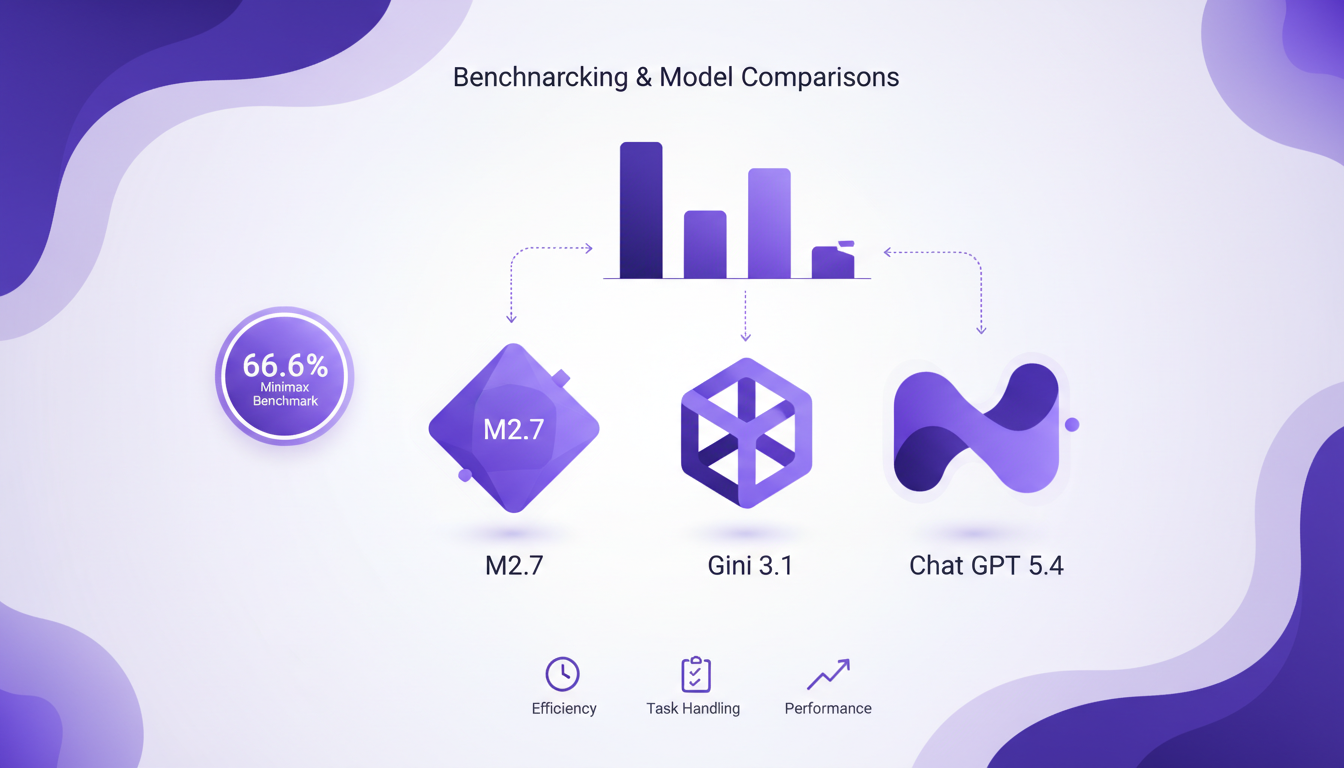

Benchmarking and Model Comparisons

Let's get down to business: how does M2.7 compare to other top-tier models like Gemini 3.1 or ChatGPT 5.4? On the benchmark, M2.7 scored 66.6%, on par with Gemini 3.1. And this is a model running on a single, affordable GPU, which is no small feat. I've often had to tune my hardware configurations carefully, but with M2.7, the entry cost for similar performance is drastically reduced.

That said, each model has its strengths and weaknesses. M2.7 excels at continuous optimization, but for certain creative tasks ChatGPT 5.4 may remain more effective thanks to its text generation. Choosing a model for a specific application is like choosing the right tool for a precise job.

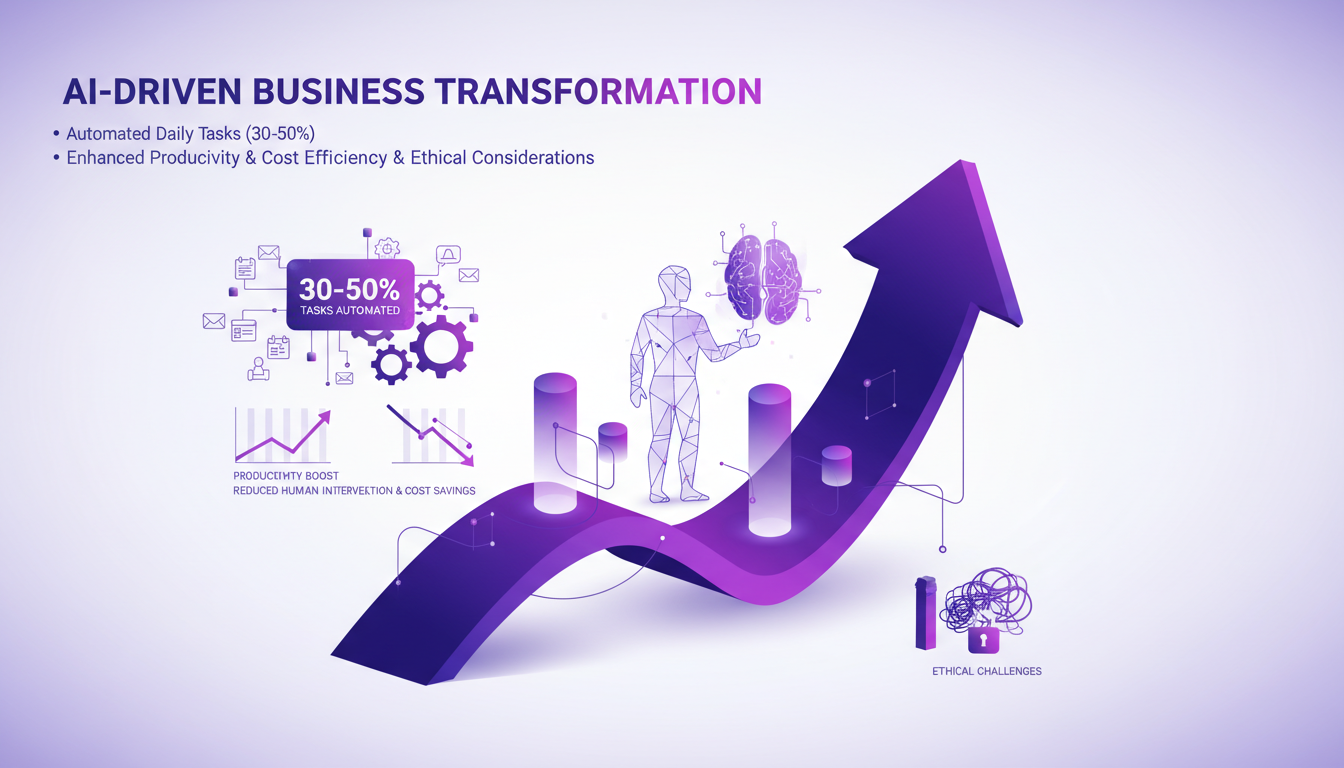

AI-Driven Business Transformation

Want to see the concrete impact? Companies are using AI models like M2.7 to handle 30 to 50% of their daily tasks. In my experience, it's like having a personal assistant take care of the repetitive work while I focus on innovation. One startup has reached a valuation of $12 billion with fewer than 100 employees thanks to these AI agents. That's a real revolution.

But be cautious: too much automation can also cause problems. I've found that human oversight remains necessary, especially to catch systematic errors that an AI might otherwise keep repeating. Finding the right balance between automation and human oversight is crucial.
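One common way to strike that balance is to let the agent finish tasks on its own only when it is confident, and to queue everything else for a person. The sketch below assumes each result carries a self-reported confidence score; the `TaskResult` type, the `route` helper, and the 0.8 threshold are hypothetical choices for illustration, not part of M2.7 or any particular product.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    task_id: str
    output: str
    confidence: float  # hypothetical self-reported score in [0, 1]


def route(results, threshold=0.8):
    """Split results into auto-approved ones and ones queued for human review."""
    auto, review = [], []
    for result in results:
        if result.confidence >= threshold:
            auto.append(result)
        else:
            review.append(result)
    return auto, review


results = [
    TaskResult("invoice-1", "approved", 0.95),
    TaskResult("invoice-2", "rejected", 0.55),
]
auto, review = route(results)
print([r.task_id for r in auto], [r.task_id for r in review])
```

The threshold is the dial between automation and oversight: raising it sends more work to humans, lowering it automates more. A confidence score will not catch errors the model is confidently wrong about, so periodic spot checks of the auto-approved queue are still worth keeping.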

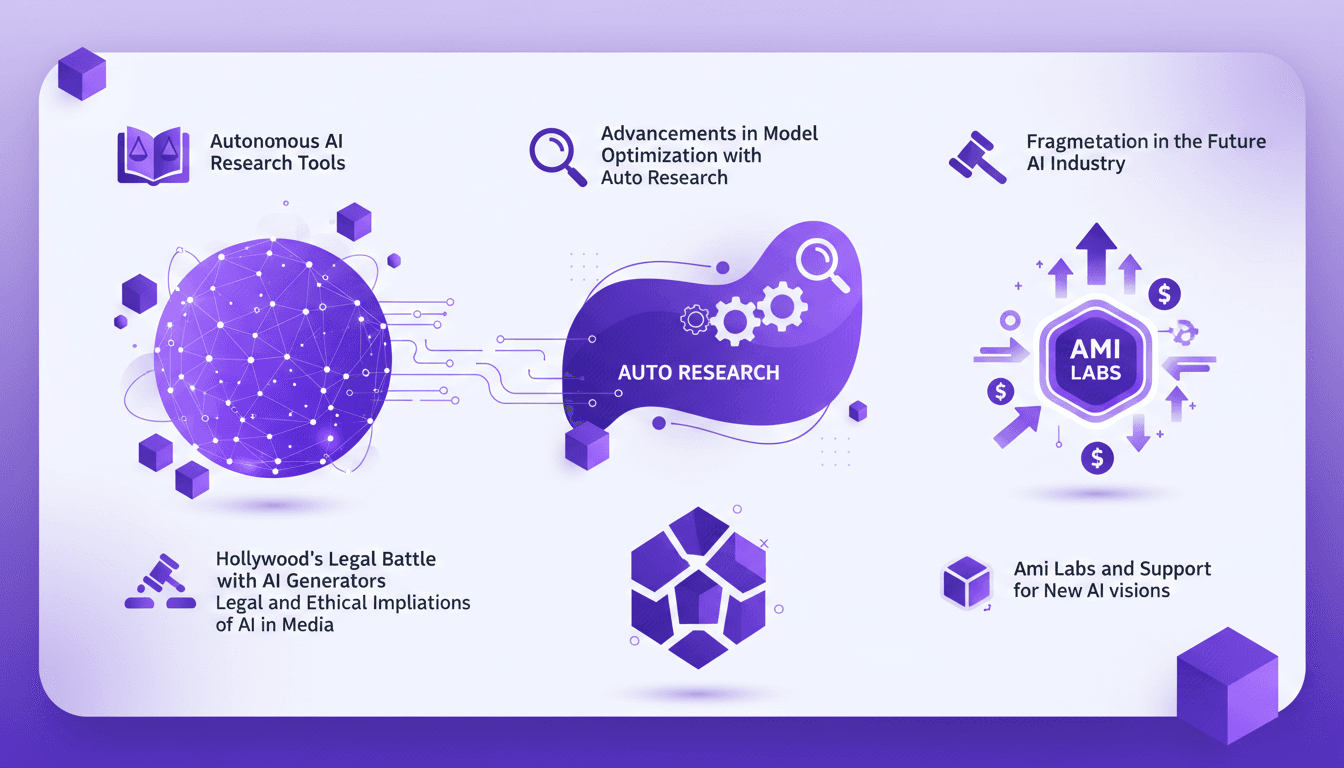

Autonomous Research and Development

In the research field, AI is redefining the rules of the game. I've seen R&D teams reduce their workload by a third thanks to models like M2.7. AI can handle repetitive tasks, freeing researchers to focus on innovation. Imagine a lab where experiments run day and night without human intervention.

Still, be wary of the biases and errors that AI can amplify if left unchecked. I've learned that AI is a powerful tool, but it needs a rigorous ethical and methodological framework around it.

Future Implications and Accessibility

So, what does the future hold for AI? From what I've seen, the accessibility of AI learning programs is improving, attracting a diverse audience, including retirees. But there are still barriers to widespread adoption, notably the ethical questions surrounding self-improving AI. Can we really let a machine improve without oversight? That's the million-dollar question.

The implications for industry and academia are enormous. AI promises to transform how we work and learn, but it requires a thoughtful approach to avoid repeating past mistakes.

Self-improving AI models like MiniMax's M2.7 aren't just a tech upgrade; they're a real paradigm shift. From what I've experienced, they hold the potential to seriously transform our workflows. Here are the key takeaways, challenges included:

- Efficiency: M2.7 ran through over 100 iterations without human supervision, and the efficiency gains are massive.

- Comparison: With a score of 66.6%, M2.7 stands on par with Google's Gemini 3.1. That gives you a sense of its performance.

- Ethical considerations: Don't skip this part. Self-improving models raise real ethical issues, starting with how much oversight they need.

So if you're in the AI field, now's the time to get hands-on with these models. Start small, iterate, and see how they can transform your processes. Check out the video "Cette IA Auto-Améliorante vient de faire EXPLOSER l'industrie de l'IA" on YouTube for deeper insights. It's a step into the future, but we need to stay aware of the limits too.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

AI Tools: Hollywood Blocks, China Reacts

I've been in the trenches with AI tools, and when Hollywood throws a wrench into the works, it's more than a headline—it's a wake-up call. Hollywood just slammed the brakes on AI video generators, and it's sending shockwaves through the tech world. Meanwhile, China is fuming, and autonomous AI research tools are shifting how we build and deploy our models. With Ami Labs and other players pushing boundaries, this is a pivotal moment for our industry. Let's dig into what's happening, from legal implications to advancements in model optimization, and how this could redefine how we work with AI.

GPT 5.4 Mini: Performance and Cost Compared

I've been diving into the GPT 5.4 Mini and Nano models—talk about game changers. But, like always, there are trade-offs. With AI models evolving fast, the GPT 5.4 series offers intriguing options for devs who need to balance performance and cost. First, I set up the Mini to get a feel for its performance. Scoring 54.4%, it holds its own, especially considering the lower cost. Then I tested the Nano, which comes in at 52.5%. It's perfect for apps where every millisecond counts. But watch out for latency. We'll dive into how these models can fit into your workflows, and especially, where they truly stand out against competitors.

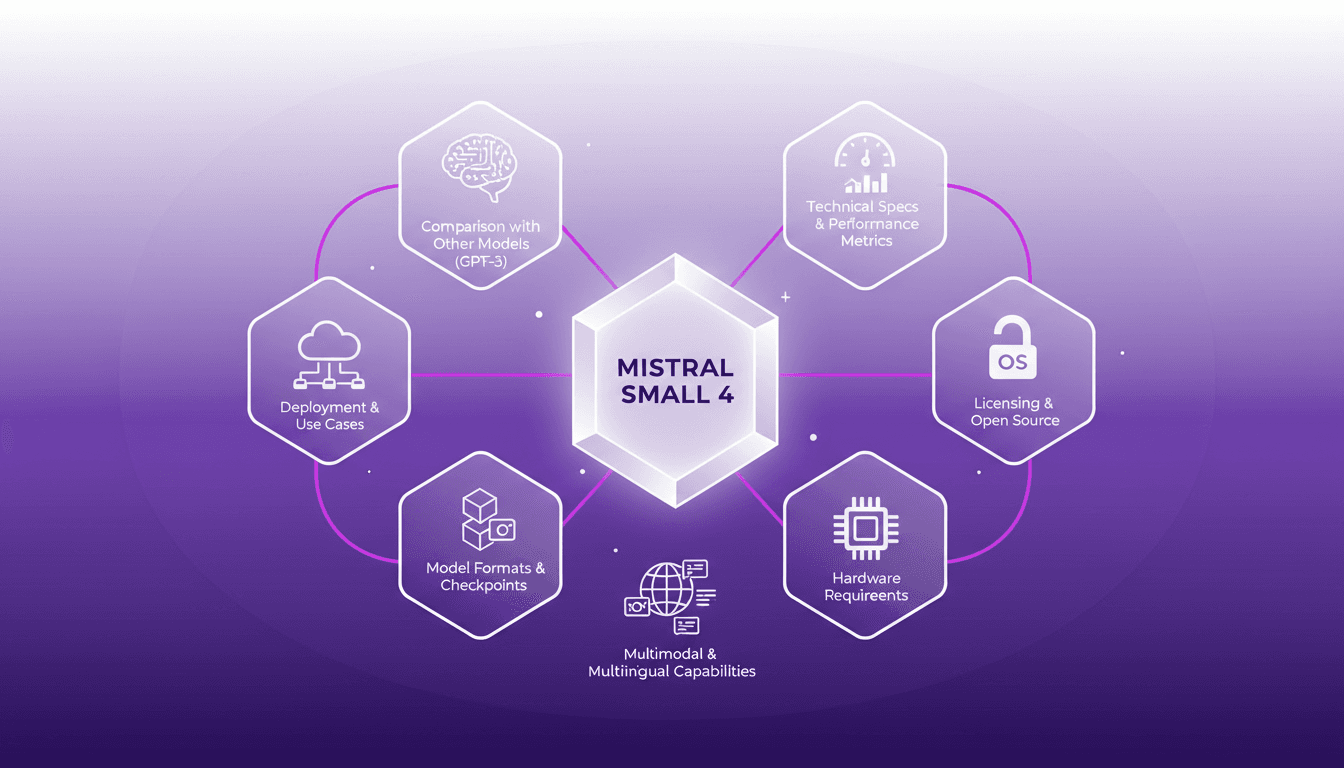

Deploying Mistral Small 4: Practical Use Cases

I dove into the Mistral Small 4 model recently, and let me tell you, it's a beast with its 119 billion parameters. But don't let that scare you; it’s all about how you harness it. With its multimodal and multilingual capabilities, this model is truly a game changer. I'll walk you through its setup, the trade-offs I encountered, and where it truly shines. Whether you're comparing it to GPT-3 or trying to grasp the hardware requirements, there's plenty here to optimize your AI approach. Watch out, though—underestimating the technical specs can hit you hard on performance.

How AI Booked $1.2M: My Revenue Journey

I was pretty skeptical at first. But when AI booked $1.2 million in revenue for us, I knew we hit on something big. This isn't just about automating tasks; it's about transforming our sales approach. AI in appointment scheduling has redefined efficiency and effectiveness in sales processes. Imagine thousands of closings in just a few weeks, with revenue pouring in. In this article, I'll take you behind the scenes of this digital revolution, sharing client success stories and objection-proof strategies. Don't get lost in the buzz; dive into the concrete.

AI Lead Calls in 8 Seconds: Advantage or Disaster?

I remember the first time I set up an AI to call leads. It was a game changer—calls made in 8 seconds flat. But not everyone was thrilled. The catch? Speed isn't always the hero in sales. In a world where responsiveness is key, AI promises to revolutionize lead engagement. However, this rapidity can also backfire. As a practitioner, I've found that clients often want to tweak this speed to better match their specific needs. Let's dive into the world of AI calls: the competitive edge, client reactions to blistering speed, and how to balance responsiveness with client satisfaction.