Managing AI Agent Context: Strategies and Challenges


I remember the first time I tried to manage context in an AI agent. Honestly, it was like juggling a dozen balls, each one a crucial piece of data. First, I had to grasp what context engineering really meant in this space. It's not just about storing data; it's about making that data work efficiently for you. In today's AI landscape, handling context well is a real game changer. But beware: the answer is not to throw more data at the problem. We need smart solutions like hierarchical memory and sub-agents to manage this complexity. Below, I dig into how smart truncation memory, strategic data selection, and clever use of sub-agents can turn these challenges into successes, and how to avoid the common pitfalls that can derail your AI projects.

Understanding Context Engineering in AI

In AI today, context engineering is the hot topic. I found this out while working on our agent, Alex. Context engineering is the art of deciding what the AI needs to see and what it should ignore. Why does it matter? Because it often makes the difference between an agent that succeeds and one that fails. Sally-Ann Delucia summed it up well: strategic data selection is crucial to overcoming context management challenges, notably data overload and over-truncation of context.

Context management is what lets an AI agent remember essential data while forgetting irrelevant information. Imagine trying to squeeze a massive amount of data into a small context window: that's exactly the challenge. Push too far and you break the agent's reasoning. Bottom line: be strategic, and don't just fill the window up to the token limit.

"Context engineering has become more popular, emphasizing the importance of context over prompts in agent success."

Challenges and Solutions in Context Management

Ah, over-truncation. It's the classic mistake: we think we're solving the problem by cutting the context, but we end up forgetting crucial information. That's where smart truncation memory comes in. Essentially, it lets us trim unnecessary data while keeping the essentials. I've seen this work with Alex, where trimming context blobs down to their first 100 characters kept the core information and maintained performance.

Here's how I implemented smart truncation: first, prioritize critical data, then apply truncation rules based on how important each piece of information is. But watch out: too much truncation can cause significant context loss, so you need to test and adjust constantly.

- Avoid over-truncating.

- Keep a priority list for critical data.

- Adapt based on observed results.
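The prioritize-then-truncate process above can be sketched in Python. This is a minimal illustration under stated assumptions, not Alex's actual implementation: `ContextItem`, `smart_truncate`, the integer priority scale (0 = critical), and the character budget are all hypothetical. The `head_chars=100` default echoes the "first 100 characters of the context blob" rule mentioned later in this article.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    priority: int  # hypothetical scale: 0 = critical, higher = less important


def smart_truncate(items: list[ContextItem], budget: int, head_chars: int = 100) -> str:
    """Fit context items into a character budget, most important first.

    Critical items (priority 0) are kept whole; everything else is cut to
    its first `head_chars` characters. Items that no longer fit are skipped.
    """
    kept: list[str] = []
    used = 0
    # sorted() is stable: items with equal priority keep their original order.
    for item in sorted(items, key=lambda i: i.priority):
        text = item.text if item.priority == 0 else item.text[:head_chars]
        sep = 1 if kept else 0  # newline separator between kept items
        if used + sep + len(text) > budget:
            continue  # skip rather than cut mid-item; a smaller item may still fit
        kept.append(text)
        used += sep + len(text)
    return "\n".join(kept)


items = [
    ContextItem("System: user is on the Pro plan.", priority=0),
    ContextItem("x" * 500, priority=2),  # e.g. a long tool output
    ContextItem("Recent error: timeout in step 3", priority=1),
]
print(smart_truncate(items, budget=50))  # → System: user is on the Pro plan.
```

With a 50-character budget only the critical item survives; with a larger budget the long tool output is cut to its first 100 characters instead of being dropped. In this sketch even a critical item is skipped if it alone overflows the budget, so raising an error in that case may be the safer choice.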

Leveraging Sub-Agents for Data Handling

Let's talk about sub-agents. These little workers lighten the main agent's heavy data load. Integrating sub-agents into Alex was a game changer: they take on the heavy workloads, leaving the main agent free to focus on strategic data analysis.

With sub-agents, I could distribute data processing tasks. For example, one sub-agent extracts the relevant data while another analyzes it. This streamlines the workflow and avoids slowdowns. But be careful: orchestrate them well and don't overload them, or you'll fall right back into the overload trap.

- Implement sub-agents for heavy tasks.

- Orchestrate their work to avoid overloads.

- Use them for specific tasks, not everything.
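One way the extract-then-analyze split could look is sketched below, assuming plain Python functions stand in for LLM-backed sub-agents. `extractor_subagent`, `analyzer_subagent`, `orchestrate`, and the `chunk_size`/`max_workers` limits are hypothetical names chosen for illustration; only `ThreadPoolExecutor` comes from the standard library.

```python
from concurrent.futures import ThreadPoolExecutor


def extractor_subagent(chunk: list[str], keyword: str) -> list[str]:
    """Hypothetical extraction sub-agent: returns only the lines mentioning `keyword`.

    In a real system this would be an LLM call with its own small context.
    """
    return [line for line in chunk if keyword.lower() in line.lower()]


def analyzer_subagent(findings: list[str]) -> str:
    """Hypothetical analysis sub-agent: condenses findings into a short report."""
    if not findings:
        return "No relevant records found."
    return f"{len(findings)} relevant record(s); first: {findings[0]!r}"


def orchestrate(records: list[str], keyword: str,
                chunk_size: int = 50, max_workers: int = 4) -> str:
    """Fan records out to extractor sub-agents, then hand the results to an analyzer.

    `chunk_size` caps how much each sub-agent sees and `max_workers` caps how
    many run at once, so no single sub-agent gets overloaded. The main agent
    only ever receives the analyzer's short report.
    """
    chunks = [records[i:i + chunk_size] for i in range(0, len(records), chunk_size)]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in chunk order, so findings stay in input order.
        results = pool.map(extractor_subagent, chunks, [keyword] * len(chunks))
        findings = [line for chunk_result in results for line in chunk_result]
    return analyzer_subagent(findings)


records = [f"log {i}: ok" for i in range(120)] + ["log 120: ERROR disk full"]
print(orchestrate(records, "error"))
# → 1 relevant record(s); first: 'log 120: ERROR disk full'
```

The two caps are the orchestration knobs here: each sub-agent handles one specific, bounded task, and the main agent's context holds a one-line report instead of 121 raw records.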

Long-Term Memory and Context Selection

Long-term memory is a game changer in AI. In Alex, I've integrated context selection heuristics that keep only essential information over the long term. This improves performance in long sessions, but be aware that the technology has limits.

To implement long-term memory, start by defining data retention criteria. Then test those criteria in long sessions to evaluate their effectiveness. But remember, AI isn't perfect: sometimes it forgets important details, and you need to adjust.

- Define criteria for data retention.

- Test in long sessions.

- Adjust based on results.
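Below is a minimal sketch of a two-tier (hierarchical) memory with an explicit retention criterion, assuming the criterion can be written as a predicate over a single turn. `Turn`, `HierarchicalMemory`, `default_retention`, its marker words, and the window size are hypothetical; you would swap in whatever criteria your long-session tests support.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Turn:
    role: str
    text: str


def default_retention(turn: Turn) -> bool:
    """Hypothetical retention criterion: keep user statements of preferences or decisions."""
    markers = ("prefer", "always", "never", "decided")
    return turn.role == "user" and any(m in turn.text.lower() for m in markers)


@dataclass
class HierarchicalMemory:
    """Two tiers: recent turns verbatim, older turns only if they pass `retain`."""
    window: int = 10  # assumed size of the short-term window
    retain: Callable[[Turn], bool] = default_retention
    recent: deque = field(default_factory=deque)
    long_term: list = field(default_factory=list)

    def add(self, turn: Turn) -> None:
        self.recent.append(turn)
        if len(self.recent) > self.window:
            evicted = self.recent.popleft()
            if self.retain(evicted):
                self.long_term.append(evicted)  # survives beyond the window

    def context(self) -> list[Turn]:
        """What the agent sees: retained long-term turns first, then recent turns."""
        return self.long_term + list(self.recent)


memory = HierarchicalMemory(window=2)
for role, text in [("user", "I prefer metric units"), ("assistant", "Noted."),
                   ("user", "What's the weather?"), ("assistant", "Sunny.")]:
    memory.add(Turn(role, text))
print([t.text for t in memory.context()])
# → ['I prefer metric units', "What's the weather?", 'Sunny.']
```

Keeping `retain` pluggable is what makes the "test in long sessions, then adjust" loop practical: you can replay the same session with different criteria and compare what survives.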

Strategic Data Selection: The Key to AI Success

Strategic data selection is central to the success of an AI application. With Alex, I've learned to balance data quantity and quality. Too much data can drown the AI; too little can leave it ineffective. Here's how I do it: first, I define the AI's goals. Then, I select the data that directly serves those goals. Finally, I evaluate the impact on performance.

Here's a guide for effective selection: start by identifying critical data, assess its relevance, and adjust based on the results obtained. This not only improves AI performance but also avoids common mistakes like data overload.

- Balance data quantity and quality.

- Select relevant data for the goals.

- Adjust based on results.
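The goal-first selection steps can be sketched with a deliberately crude relevance score. Word overlap is an assumption here, standing in for whatever relevance signal you actually use (embeddings, an LLM judge); `select_for_goal`, `min_score`, and `max_items` are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for a real relevance model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_for_goal(goal: str, candidates: list[str],
                    min_score: float = 0.2, max_items: int = 3) -> list[str]:
    """Keep candidates whose word overlap with the goal clears `min_score`.

    Score = fraction of the goal's words that appear in the candidate.
    Results are returned best-first and capped at `max_items`.
    """
    goal_words = tokenize(goal)
    if not goal_words:
        return []
    scored = []
    for text in candidates:
        score = len(goal_words & tokenize(text)) / len(goal_words)
        if score >= min_score:
            scored.append((score, text))
    # Python's sort stays stable with reverse=True: ties keep input order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:max_items]]


goal = "Reduce checkout latency"
candidates = [
    "Checkout latency p95 rose to 2s",
    "Marketing newsletter draft",
    "Latency dashboards for checkout service",
    "Team lunch menu",
]
print(select_for_goal(goal, candidates))
# → ['Checkout latency p95 rose to 2s', 'Latency dashboards for checkout service']
```

The last step, evaluating the impact, would then mean comparing your agent's results with and without the selected context and tuning `min_score` and `max_items` accordingly.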

Agentic search is a method I've used to boost context engineering efficiency, and it makes a noticeable difference.

Managing context in AI agents isn't just about the tech; it's about making smart choices with the tools we have. First, the smart truncation trick: I keep the first 100 characters of a context blob, which preserves the core information without overload. Then, sub-agents: the 40 skills built into Alex show how to distribute the load and optimize data handling. Watch out for limits, like the 10-turn limit, which can sometimes become a bottleneck. But orchestrate all of this well and efficiency is within reach.
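If the 10-turn limit is read as a cap on agent loop iterations (an assumption; the text doesn't specify), one way to keep it from becoming a silent bottleneck is to report when the cap, rather than the task, ended the run. `run_agent` and its `step` callback are hypothetical names for this sketch.

```python
from typing import Callable, Optional


def run_agent(step: Callable[[int], Optional[str]],
              max_turns: int = 10) -> tuple[Optional[str], bool]:
    """Run an agent loop until `step` returns an answer or the turn cap is hit.

    `step(turn)` is a hypothetical stand-in for one LLM/tool round-trip; it
    returns a final answer, or None to request another turn.
    Returns (answer, hit_cap).
    """
    for turn in range(1, max_turns + 1):
        answer = step(turn)
        if answer is not None:
            return answer, False  # task finished within the cap
    return None, True  # cap reached: a signal to inspect, not a silent failure


# A task that would need 12 turns hits the 10-turn cap.
answer, hit_cap = run_agent(lambda turn: "done" if turn == 12 else None)
print(answer, hit_cap)  # → None True
```

Logging how often `hit_cap` is true tells you whether the limit is actually the bottleneck, or whether the tasks themselves need breaking up, for instance across sub-agents.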

Looking forward, I see massive potential in optimizing AI context management, but we must remain mindful of the trade-offs. So, ready to optimize your AI context management? Start implementing these strategies today and watch your AI agents perform at their best. I encourage you to watch Sally-Ann Delucia's video for deeper insights: https://www.youtube.com/watch?v=esY99nYXxR4


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Agentic Search: Boosting Context Engineering Efficiency

I remember diving into context engineering with a fixed pipeline mindset. It was limiting, cumbersome, and frankly, a bit outdated. But then, I discovered agentic search—game changer. In this article, I walk you through how I transformed my approach. We shift from rigid logic to dynamic tools like Lang Chain that enhance our search capabilities. We'll talk challenges, parameter complexity, and hybrid tools that make a difference. If you're in the field, you know that context engineering is about 80% agentic search.

Scaling AI Skills: A Builder's Guide

I've been in the trenches, building AI workflows that don't just work but scale. Let's talk about skills in AI—those discrete units of work that are game-changers if you know how to manage them right. In a world where AI is reshaping industries, understanding how to efficiently develop, manage, and share these skills is crucial. This isn't about theory; it's about real-world application. Nick Nisi and Zack Proser from WorkOS delve into the structure and components of skills, context management, confidence scoring, and how to share them within teams. If you're tired of empty talk and want tools that deliver in day-to-day operations, this is where you want to be.

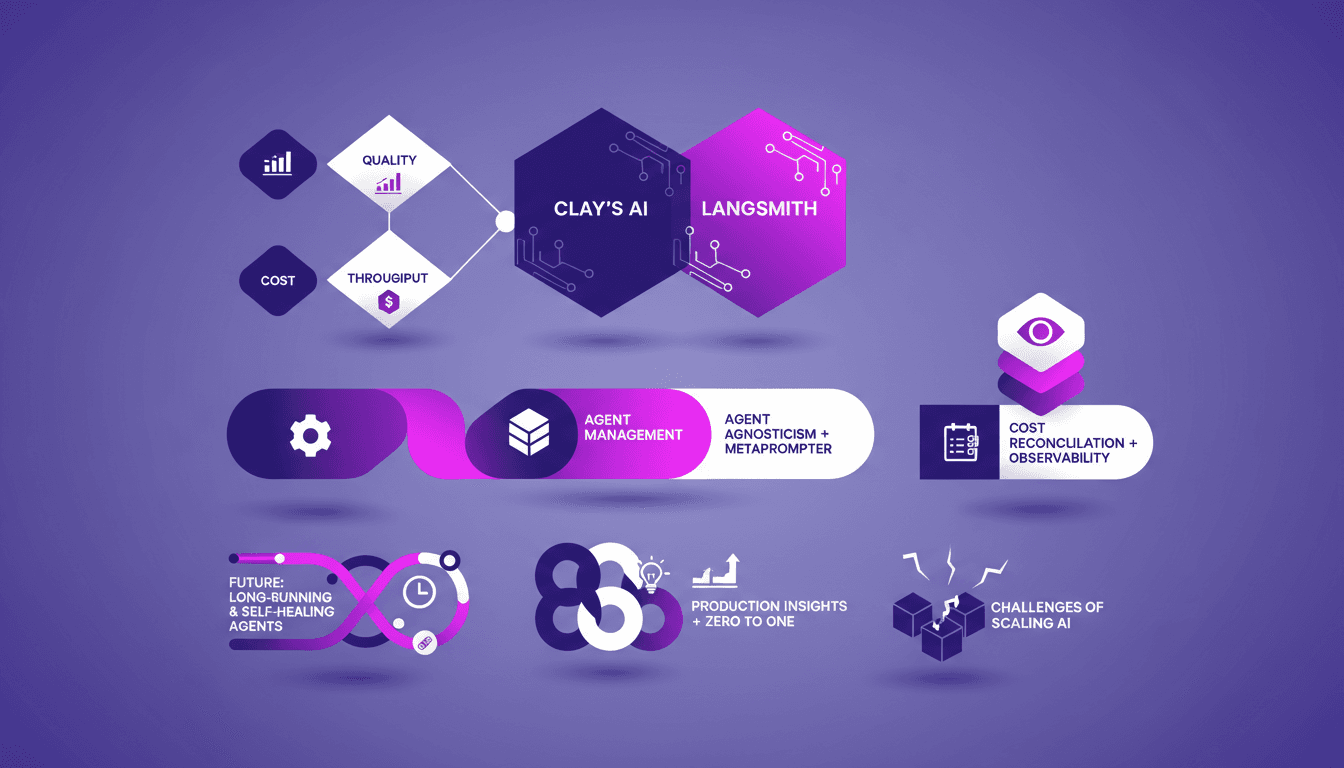

Managing 300M Agent Runs with LangSmith: Clay

I still remember the first time we hit 300 million agent runs in a month at Clay. It was exhilarating but a logistical nightmare. Yet, we orchestrated this massive operation with LangSmith. Every day, we juggle AI integration and cost optimization while maintaining impeccable quality. LangSmith is our ally, handling everything from agent orchestration to cost reconciliation. This isn't theoretical; it's our daily grind. If you're wondering how we manage at this scale, the answer lies in our ability to tweak every detail, anticipate errors, and always aim higher.

Real-Time Transcriptions: Efficiency with Cronulla

I dove into Cronulla's real-time transcription capabilities and found ways to enhance efficiency. Let's talk about how I transformed our Electron app and tackled chatbot customization. In a world where real-time data and customization are king, finding the right tools and workflows can make or break your project. I had to build internal tracing tools for better visibility and consider re-platforming our app from Electron to Tauri. You'll see, when LLMs start self-verifying, testing efficiency skyrockets. It's a journey I'm ready to share with you.

Building a $20K/Month App in 83 Days

I built an app that hit $20K in monthly revenue in just 83 days. Sounds like a wild ride? It was. I'm going to take you through the strategy that got me there, the commitment it took, and the tools that made it possible. I connected my technical stack, orchestrated my development tools — and yes, I got burned a few times along the way. But every mistake was a lesson learned. I'll share insights on idea validation, engagement metrics, and the pricing model I implemented. Plus, the challenges faced and the marketing strategies that really worked. This is practical, hands-on stuff, not just theory. Ready to dive into the world of app development and see how to turn an idea into a profitable product?