GPT-5.5: Evolution and First Impressions

When GPT-5.5 landed, it felt like finding a new tool in my AI toolkit, and an impressive one. I've been connecting, orchestrating, and tweaking AI models for years, and this one changes how I work. I'm going to walk you through how it's transforming our workflows, especially at Ramp, where we're pushing its limits. We've moved from basic tab completion to handling ambiguous tasks, and GPT-5.5 goes further by solving problems autonomously while maintaining context continuity. But beware, it's not magic: there are pitfalls to avoid and benchmarks to watch. If you're in the AI space, you'll want to keep a close eye on this evolution.

Evolution of AI Models: From Tabs to Tasks

Just two years ago, AI assistance was primarily about tab completion. Today, it's about managing complex tasks, and GPT-5.5 is the clearest example of that shift. It's no longer about filling in gaps but about understanding context. Previously, we had to provide manual instructions; now the model works out for itself what needs to be done. Why does this matter? Because it redefines how both developers and businesses work.

We've seen AI move from mere completion to handling ambiguous tasks. GPT-5.5 can work through such tasks without detailed instructions and propose multiple solutions. For developers, this means saving time. For businesses, it's a clear strategic asset.

First Impressions of GPT-5.5: A Game Changer?

When I first integrated GPT-5.5 into my workflow, its ability to handle context and solve problems stood out immediately. The 'wow' moment was seeing it autonomously discover solutions. Sometimes, I'd give it vague tasks, and it would break them down, offering multiple solutions. But beware, it's not perfect.

GPT-5.5 excels at understanding context, yet it still has limitations, especially with highly complex tasks where some guidance is needed. However, the efficiency gains and potential cost savings it offers are undeniable.

"GPT-5.5 offers a much more intuitive user experience, a real leap in coding."

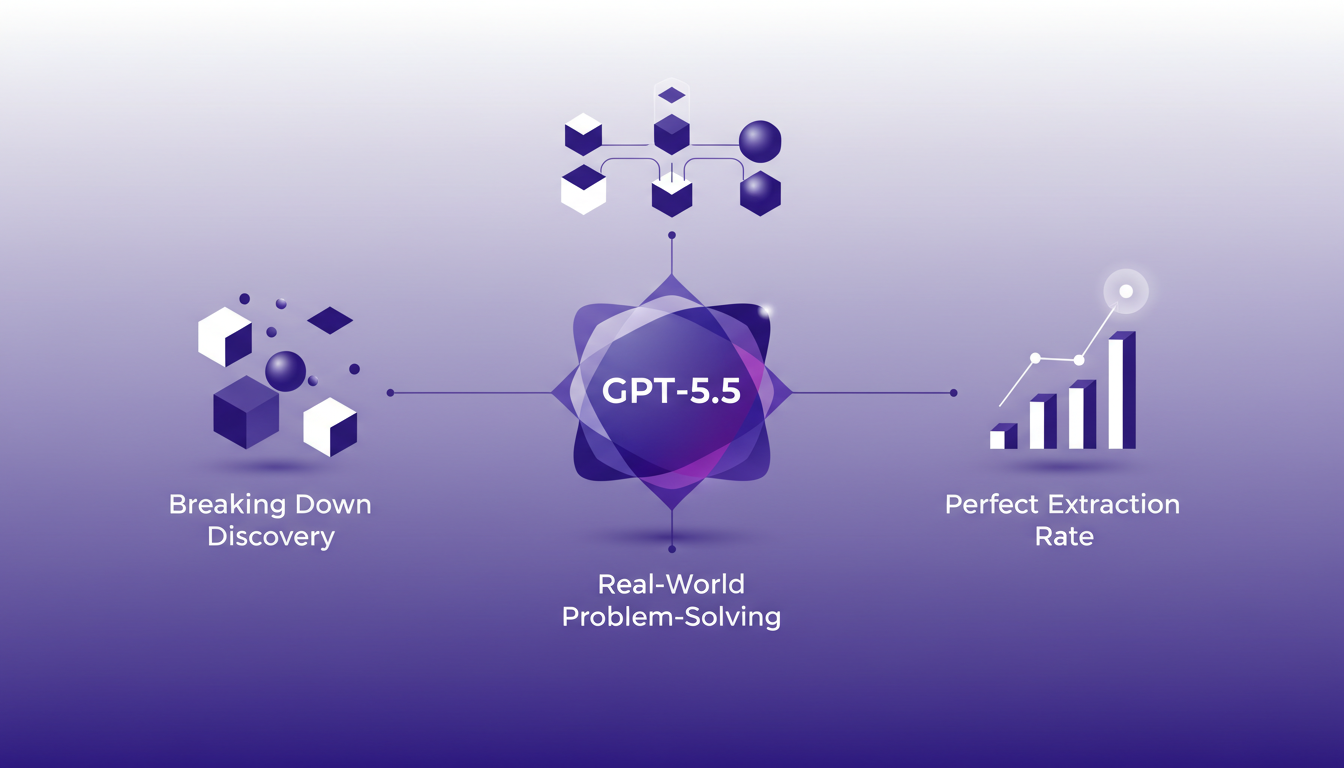

Autonomous Problem-Solving: How GPT-5.5 Does It

So how does GPT-5.5 solve problems autonomously? Simple: it explores and uses the tools at its disposal. For instance, in my projects, it used our databases and telemetry tools to find novel ways to solve problems. This is where the perfect extraction rate benchmark comes into play. GPT-5.5 scores impressively on this benchmark, though it sometimes needs a bit of human guidance.
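To make the metric concrete, here is a minimal sketch. It assumes "perfect extraction rate" means the share of documents in which every expected field is extracted exactly right. That definition, the function name, the field names, and the sample invoices are all assumptions made for illustration, not Ramp's actual benchmark.

```python
from typing import Mapping, Sequence


def perfect_extraction_rate(
    predictions: Sequence[Mapping[str, str]],
    ground_truth: Sequence[Mapping[str, str]],
) -> float:
    """Share of documents where every expected field matches exactly.

    Assumed definition: a document counts as "perfect" only if all of its
    labeled fields are present in the prediction with identical values.
    """
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground_truth must have the same length")
    if not ground_truth:
        return 0.0
    perfect = sum(
        1
        for pred, truth in zip(predictions, ground_truth)
        if all(pred.get(field) == value for field, value in truth.items())
    )
    return perfect / len(ground_truth)


# Hypothetical labeled invoices and model outputs.
truth = [
    {"vendor": "Acme", "total": "120.00"},
    {"vendor": "Globex", "total": "75.50"},
]
preds = [
    {"vendor": "Acme", "total": "120.00"},    # every field correct
    {"vendor": "Globex", "total": "77.50"},   # one wrong field, so not perfect
]

print(perfect_extraction_rate(preds, truth))  # 0.5
```

Under this definition the metric is deliberately strict: a single wrong field disqualifies the whole document, which is why it tends to be lower than per-field accuracy.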

It's crucial to find the right balance between autonomy and control. Too much autonomy without oversight can lead to errors. Tip: always verify the results to avoid unpleasant surprises.
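One way to put "always verify" into practice is a small automated gate that only escalates to a human when something looks off. The sketch below is an illustration under assumptions: the schema (`vendor`, `total`, `line_items`) and the function name `find_issues` are hypothetical, and the checks are examples rather than a complete validation policy.

```python
from decimal import Decimal, InvalidOperation
from typing import Any, Dict, List

# Hypothetical schema for an extracted invoice.
REQUIRED_FIELDS = ("vendor", "total", "line_items")


def find_issues(extraction: Dict[str, Any]) -> List[str]:
    """Return a list of problems found in one extraction (empty if none)."""
    issues = [f"missing field: {f}" for f in REQUIRED_FIELDS if f not in extraction]
    if issues:
        return issues
    try:
        total = Decimal(str(extraction["total"]))
        items_sum = sum(
            (Decimal(str(x)) for x in extraction["line_items"]), Decimal("0")
        )
    except (InvalidOperation, TypeError):
        return ["non-numeric amount"]
    # Cross-check: the line items should add up to the stated total.
    if items_sum != total:
        issues.append(f"line items sum to {items_sum}, but total is {total}")
    return issues


good = {"vendor": "Acme", "total": "120.00", "line_items": ["40.00", "80.00"]}
bad = {"vendor": "Acme", "total": "120.00", "line_items": ["40.00", "70.00"]}

for name, extraction in (("good", good), ("bad", bad)):
    issues = find_issues(extraction)
    status = "auto-accept" if not issues else "send to human review"
    print(name, status, issues)
```

The design choice here is to keep autonomy as the default and reserve human attention for the cases that fail a cheap consistency check.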

Performance Benchmarks and Context Continuity

Performance metrics from Ramp show that GPT-5.5 excels, notably in maintaining context continuity across compaction, the point at which earlier conversation history is condensed to fit the context window. In practice, it carries important details from one task to the next, even after the original context has been condensed away. But watch out for pitfalls in context management: details can still be lost, so tuning how context is handled is key.

To optimize GPT-5.5's performance, I recommend always testing and adjusting settings for the specific task. It's often faster to tweak a few settings than to lose time correcting wrong outputs.
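To illustrate what compaction involves, here is a generic sketch, not a description of how GPT-5.5 handles it internally. It assumes older messages are collapsed into a single summary placeholder, while messages explicitly marked as pinned are carried over verbatim. The `Message` type, the `pinned` flag, and the `compact` function are hypothetical names, and the summary is a stand-in string rather than a real summarization step.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Message:
    role: str
    text: str
    pinned: bool = False  # details that must survive compaction


def compact(history: List[Message], keep_recent: int) -> List[Message]:
    """Collapse older messages into one summary, keeping pinned ones verbatim."""
    if keep_recent < 0:
        raise ValueError("keep_recent must be non-negative")
    if len(history) <= keep_recent:
        return list(history)
    cut = len(history) - keep_recent
    older, recent = history[:cut], history[cut:]
    pinned = [m for m in older if m.pinned]
    dropped = len(older) - len(pinned)
    # Stand-in for a real summarization step.
    summary = Message("system", f"[summary of {dropped} earlier messages]")
    return [summary, *pinned, *recent]


history = [
    Message("user", "Budget cap is 5,000 USD.", pinned=True),
    Message("assistant", "Noted."),
    Message("user", "Draft the report."),
    Message("assistant", "Here is a draft."),
]

for message in compact(history, keep_recent=2):
    print(message.role, "|", message.text)
```

In this toy version, the budget cap survives compaction because it was pinned. An unpinned detail in the older half would be reduced to whatever the summary retains, which is exactly where data loss can creep in.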

Real-World Applications at Ramp: A Case Study

At Ramp, we leverage GPT-5.5 in various real-world scenarios. For example, in extracting information from financial documents, we've seen a notable improvement in efficiency. What worked was the AI's adaptation to our internal tools without needing major adjustments. However, some limitations persist, particularly in managing tasks where context frequently changes.

For teams considering adopting GPT-5.5, I advise starting with pilot projects to understand its strengths and limitations. The future looks promising, but it's essential to stay vigilant and maintain control to make the most of this technology.

In summary, GPT-5.5 is a powerful tool that, when used wisely, can radically transform how we approach complex tasks. But like any tool, it needs to be used judiciously.

GPT-5.5 isn't just another AI model; it changes how we approach problem-solving. First, its ability to handle ambiguous tasks autonomously is well beyond what we saw just two years ago. Next, it hits a perfect extraction rate with zero touch, meaning no manual intervention, which lets us focus on core work. But watch out: these efficiency gains come with trade-offs, and there's always a context limit, especially when pushing boundaries. As I integrate GPT-5.5 into my workflows, I already see how it's transforming my daily operations. Ready to see how GPT-5.5 can transform yours? Dive in and start experimenting. For a deeper dive, I'd recommend Will Koh's original video: https://www.youtube.com/watch?v=Aq0Q_G-rtfA. It was crucial in helping me grasp the full extent of this model.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

First Steps with GPT-5.5: Boosting Efficiency

When I first integrated GPT-5.5 into my daily workflow, I wasn't just looking for a new tool—I was looking for a game changer. And let me tell you, it didn't disappoint. As an engineer, I'm always hunting for ways to streamline processes and boost productivity. GPT-5.5 promises to do just that by handling ambiguous prompts and autonomously completing tasks. Early tests showed increased efficiency, a direct impact on my engineering workflows, and noticeable improvements in decision-making and code writing. But watch out, the unexpected surge in pull requests caught me off guard. Here, I share my first impressions and how GPT-5.5 can transform our workways.

Imagen 2.0: Revolutionizing Image Generation

When I first got my hands on Imagen 2.0, I was blown away by its potential. We're talking about generating 2K resolution images with multilingual support. The first thing I did was integrate it into my workflow, and the improvement is tangible. The advancement in resolution and detail is a real game changer, but watch out for technical limits in multi-image generation. Compared to previous models and DALL-E, Imagen 2.0 really stands out. This isn't about theory; I'm talking about daily impact on my practice. If you're aiming to innovate, this is the tool to explore.

LLMs Evolution: 8 Years of AI Progress

I remember when GPT-2 first hit the scene. It was a game changer, but not without its quirks. Fast forward a few years, and we're knee-deep in LLMs that are reshaping our interaction with technology. So, let's dive into what these models are really about, what they can do, and where they might trip you up. From GPT-2 to GPT-4, these models have transformed text generation and public perception of AI. But with great power comes great complexity. We'll explore the evolution, training challenges, anthropomorphism, and ethical considerations, while dissecting the impact of LLMs on philosophical and logical discourse.

Balancing Info for AI Effectiveness

I've been deep in the trenches with AI systems, and if there's one thing I've learned, it's that waiting for the 'perfect' AI is a fool's game. I build around what we have now, balancing information input to optimize performance. In this episode, I share how this approach can transform your workflow. AI is evolving at breakneck speed, but sitting on our hands waiting for the next big breakthrough is a mistake. By leveraging existing AI capabilities effectively, we can achieve significant gains in efficiency and performance today. We'll dive into balancing information for AI effectiveness, building architecture around existing AI, the risks of waiting for AI advancements, the impact of excessive data on AI performance, and the importance of speed in human interaction and sales.

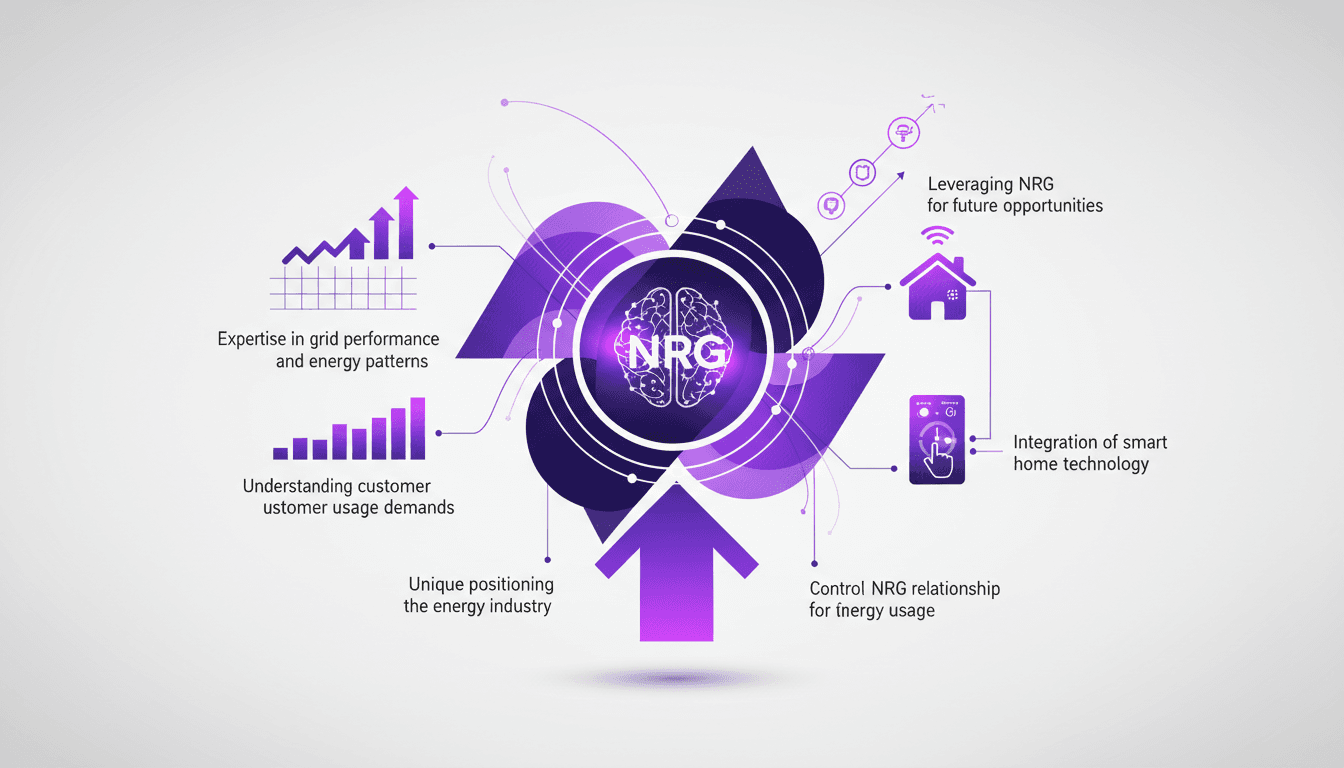

Integrating AI in Energy: My NRG Experience

Joining the NRG family wasn't just a career move; it was like stepping into a new era of energy innovation. I connect our AI expertise directly to grid performance, and every day, I'm hands-on with smart home tech that reshapes how we think about energy. In this article, I'll walk you through how we've leveraged our position within NRG to enhance grid performance, understand energy patterns, and meet evolving customer demands, all while integrating cutting-edge smart home technology. We'll discuss the impact of our acquisition by NRG, our expertise in grid performance and energy patterns, and our unique positioning in the energy industry. This exploration will give you insight into our true competitive edge and how we're setting the stage for future opportunities.