Building RL Environments for LLMs: A Practical Guide

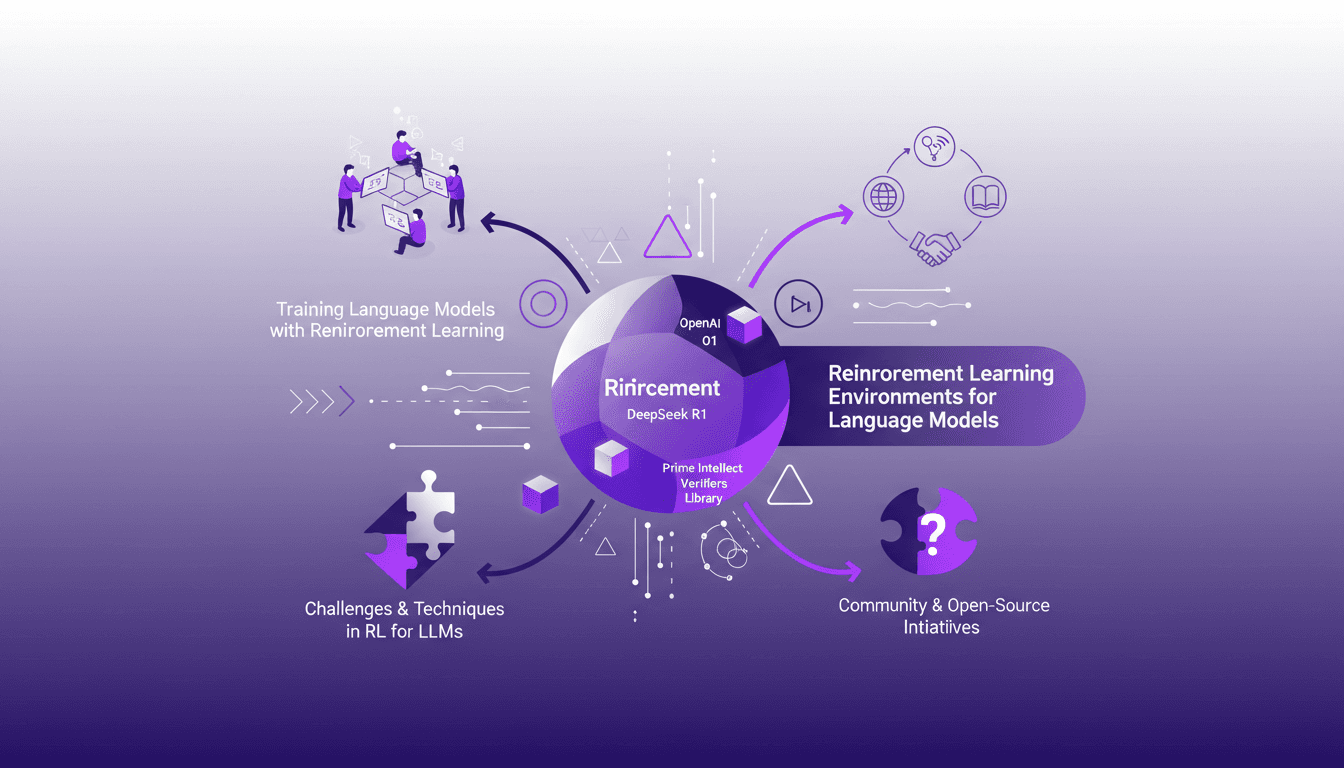

When I first dove into engineering RL environments for language models, the complexity was overwhelming. I found my way through it with the help of models like OpenAI's O1 series and DeepSeek R1. Reinforcement learning is a game-changer for LLMs, but it is hard to get right. In this guide I'll show you how I built and optimized these environments: we'll cover RL environments for LLMs, Prime Intellect's Verifiers library, and the main challenges and techniques of RL in this context. I've worked with thousands of RL environments, and I'll share both my wins and my mistakes.

Understanding Reinforcement Learning for LLMs

For large language models (LLMs), reinforcement learning (RL) has become indispensable. Why? Because it lets a model learn by interacting and exploring, much like a child playing to understand their environment. The key concept is verifiable rewards: rewards computed by a programmatic check (for example, whether a final answer is correct), so that a high reward really does mean the model did what it was supposed to. But watch out: without a constrained update rule such as proximal policy optimization (PPO), which limits how far each update can move the policy, training can easily drift into inefficient patterns. This is also where batch size becomes crucial.
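To make the PPO point concrete, here is a minimal sketch of the clipped surrogate objective in plain Python. The function name, the argument names, and the default `clip_eps=0.2` are illustrative assumptions, not values taken from my own runs or from any particular library.

```python
import math

def ppo_clipped_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Mean PPO clipped surrogate objective over a batch (to be maximized).

    logp_new / logp_old: log-probabilities of the sampled actions under the
    current and the behaviour policy. clip_eps is an assumed default.
    """
    total = 0.0
    for ln, lo, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(ln - lo)  # pi_new(a|s) / pi_old(a|s)
        clipped = max(1.0 - clip_eps, min(1.0 + clip_eps, ratio))
        # Take the more pessimistic of the clipped and unclipped terms.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

With a positive advantage, the objective stops growing once the probability ratio exceeds `1 + clip_eps`, so a single batch cannot pull the policy arbitrarily far from the one that generated the samples.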

I discovered that without proper batch size management, learning becomes unstable. Fixing this took several rounds of trial and error on my parameters, and believe me, I learned it the hard way. RL environments for language models are also a hot topic right now, with startups raising significant funding to build them.
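One common way batch size affects stability is through reward normalization: many RL setups for LLMs turn raw rewards into advantages by subtracting the batch mean and dividing by the batch standard deviation. The sketch below illustrates that general idea, not my exact setup; the function name and the `eps` value are assumptions.

```python
from statistics import mean, pstdev

def normalize_advantages(rewards, eps=1e-8):
    """Turn a batch of scalar rewards into zero-mean, unit-scale advantages.

    eps (an assumed value) avoids division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]
```

With only a handful of samples per batch, the mean and standard deviation are noisy estimates, so the same completion can receive very different advantages from one batch to the next. Larger batches make these estimates, and therefore the updates, steadier.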

Leveraging OpenAI's O1 Model Series

Integrating OpenAI's O1 model series into my RL environments was an enriching experience. These models stand out for using RL to improve chain-of-thought performance. Be mindful of the trade-off, though: more performance often means more complexity. In practice, that translates to efficiency gains, but also higher resource costs.

I integrated O1 models into various RL environments, and I found that these models do show improved reasoning capabilities. But take care not to overcomplicate your infrastructure, as it can quickly become a resource drain.

DeepSeek R1 and Verifiable Rewards

The verifiable rewards used for DeepSeek R1 are a real game changer. Implementing them in my RL environments led to a marked improvement in the models' reasoning strategies. But beware: a poorly structured reward can lead to unexpected results. I learned to avoid these pitfalls by adjusting my parameters gradually.
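Here is a minimal sketch of what a verifiable reward can look like: a correctness check plus a smaller format reward. The `<answer>` tag convention and all function names are assumptions made for illustration. The 0.2 default reflects the weight mentioned in my takeaways, read here as the weight on the format term.

```python
import re

# Assumed output convention: the final answer sits inside <answer>...</answer>.
ANSWER_RE = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)

def correctness_reward(completion: str, expected: str) -> float:
    """1.0 if the extracted answer matches the expected one exactly, else 0.0."""
    match = ANSWER_RE.search(completion)
    if match is None:
        return 0.0
    return 1.0 if match.group(1).strip() == expected.strip() else 0.0

def format_reward(completion: str) -> float:
    """1.0 if the completion follows the assumed answer-tag format, else 0.0."""
    return 1.0 if ANSWER_RE.search(completion) else 0.0

def total_reward(completion: str, expected: str, format_weight: float = 0.2) -> float:
    """Correctness dominates; formatting adds a small weighted bonus."""
    return correctness_reward(completion, expected) + format_weight * format_reward(completion)
```

Keeping the format weight well below the correctness reward is one way to avoid the pitfall described above: the model gets a nudge toward parseable output, but cannot score well by formatting alone.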

Against random opponents, performance held at a similar level, around 85%, once I had correctly adjusted the rewards. What's crucial here is that the model can evolve its strategies without relying solely on supervised training data.

Exploring Prime Intellect's Verifiers Library

Prime Intellect's Verifiers library is a fantastic tool for building RL environments. While building and evaluating environments for games like Tic-Tac-Toe, I ran into challenges, but the library's modular design helped me overcome them.
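To give a feel for what such a game environment involves, here is a self-contained Tic-Tac-Toe sketch in plain Python with a uniformly random opponent. It deliberately does not use the Verifiers API: the class name, the method names, and the reward values (+1 win, 0 draw, -1 loss or illegal move) are all hypothetical choices for illustration.

```python
import random

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
             (0, 3, 6), (1, 4, 7), (2, 5, 8),
             (0, 4, 8), (2, 4, 6)]

def winner(board):
    """Return 'X' or 'O' if a line is complete, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None

class TicTacToeEnv:
    """Hypothetical env: the agent plays X, the opponent plays O at random."""

    def __init__(self, seed=None):
        self.rng = random.Random(seed)
        self.board = ["."] * 9
        self.done = False

    def reset(self):
        self.board = ["."] * 9
        self.done = False
        return self.render()

    def render(self):
        """Text observation that could be placed in an LLM prompt."""
        return "\n".join("".join(self.board[i:i + 3]) for i in (0, 3, 6))

    def legal_moves(self):
        return [i for i, cell in enumerate(self.board) if cell == "."]

    def step(self, move):
        """Apply the agent's move (0-8); returns (observation, reward, done)."""
        if self.done:
            raise RuntimeError("episode finished; call reset()")
        if move not in self.legal_moves():
            self.done = True  # an illegal move ends the episode with a penalty
            return self.render(), -1.0, True
        self.board[move] = "X"
        if winner(self.board) == "X":
            self.done = True
            return self.render(), 1.0, True
        if not self.legal_moves():
            self.done = True  # board full: draw
            return self.render(), 0.0, True
        self.board[self.rng.choice(self.legal_moves())] = "O"
        if winner(self.board) == "O":
            self.done = True
            return self.render(), -1.0, True
        return self.render(), 0.0, False
```

Because the outcome is checked by code rather than judged by a model, the reward is verifiable in the sense used earlier. Running many seeded episodes against this opponent is a simple way to track a win rate during evaluation.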

One major challenge was managing the growing complexity of environments without fragmenting efforts. Fortunately, the open-source community is a major asset, and I was able to leverage many shared tools to streamline my process.

Community and Open-Source Initiatives

The community plays a crucial role in advancing RL environments. Open-source tools not only saved me time but also spared me many headaches. Contributing to and benefiting from community resources is a balance between innovation and pragmatism.

For those looking to get involved, I recommend starting with open-source projects like Verifiers. Contributing takes not just technical skills but also a pragmatic understanding of the real needs in the field.

Building RL environments for language models is complex but rewarding. I've leveraged OpenAI's O1 series and tapped into the community to streamline my workflow and maximize efficiency. Here's what I picked up:

- Weighting the format reward at 0.2 in the reward function was a game changer.

- Against a random opponent, performance remains very similar at 85%, which is impressive.

- Effectively using thousands of RL environments is doable and significantly boosts our capabilities.

Looking ahead, there is real potential to optimize these environments further. Ready to optimize your own RL environments? Dive in and start experimenting today. For more insights, check out Stefano Fiorucci's video "Let LLMs Wander"; it's like a peer-to-peer chat full of practical tips.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

From Coding to Solution-Focused Engineering

I've spent enough sleepless nights coding to know that the real challenge isn't about how much code we write, but the solutions we deliver. In a world where you can code 55 times faster, the mistake is focusing solely on churning out lines of code. What really matters is solution-focused software engineering, AI adoption, and integrating all this into our platforms. If you've ever wondered why your productivity only improves by 14% despite all your efforts, maybe it's because you haven't yet embraced this holistic approach that pushes beyond just coding.

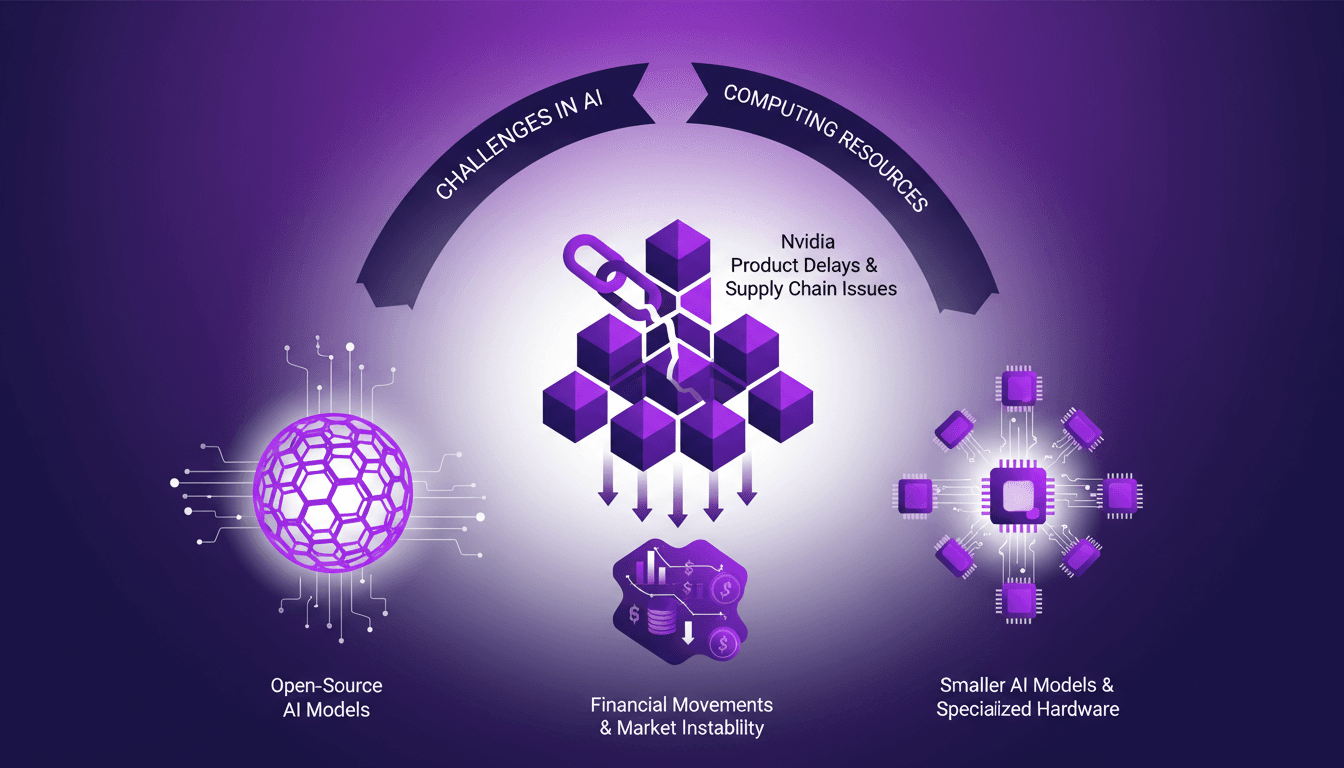

AI Resource Struggles: Nvidia Delays, Open Source

I remember the first time I hit a wall with AI compute resources. It felt like trying to run a marathon on a treadmill stuck at walking speed. In this rapidly evolving AI landscape, we're facing real challenges—from Nvidia's delays to the growing allure of open-source models. The market is in flux, with financial movements like Mistral's debt announcements adding another layer of complexity. We need to navigate resource shortages, the emergence of smaller AI models, and supply chain issues affecting component lead times. Let's dive into these dynamics from a practitioner’s perspective, focusing on practical solutions and trade-offs.

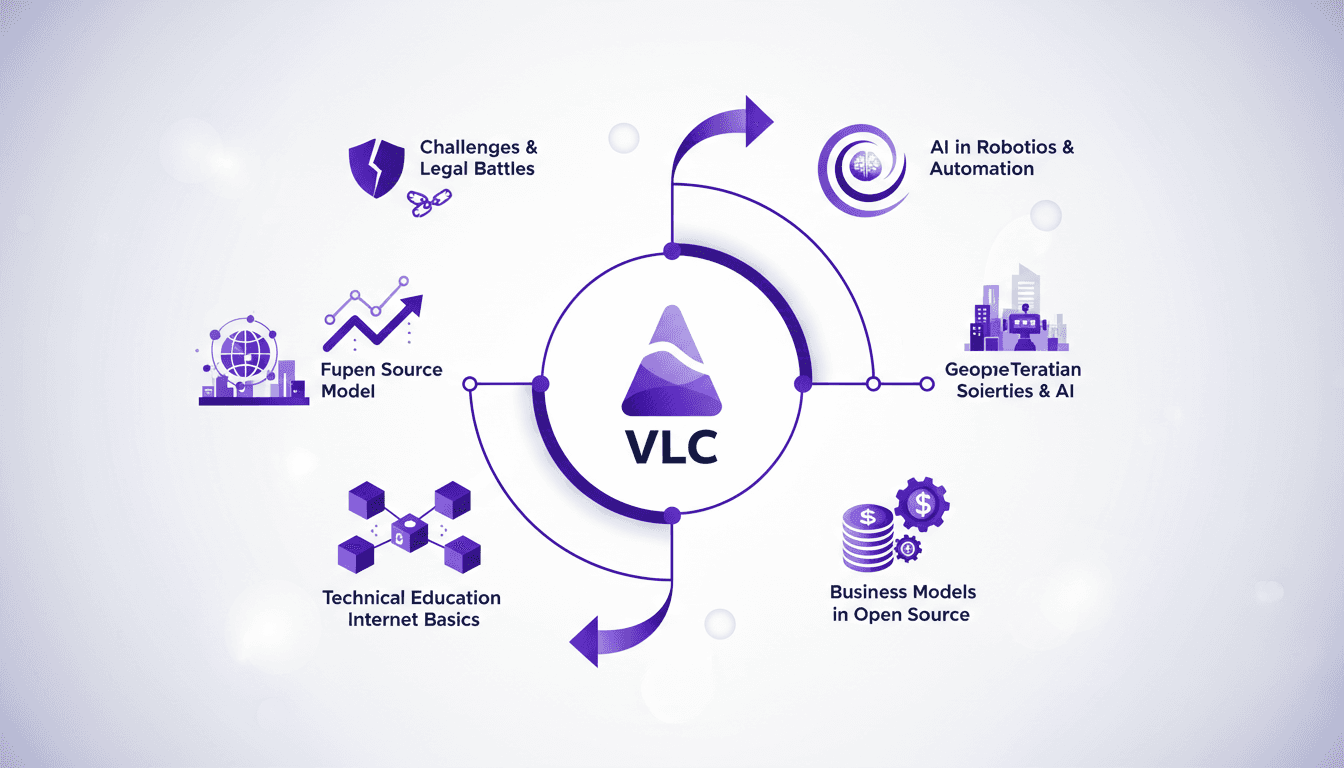

Building an App Downloaded 7 Billion Times

I remember the first time I saw the download numbers for VLC skyrocket. It was a real game changer, but it wasn't all smooth sailing. This is where you realize that behind every phenomenal success, there are mountains of challenges to overcome. Between legal battles and the implications of open source, VLC's journey is anything but ordinary. As a developer, we often think the hardest part is coding, but sustaining and growing an app downloaded 7 billion times is a whole different ball game. Let's dive into the story of VLC, an adventure where technology and perseverance are tightly intertwined.

Modeling Emotions in AI: Hands-On Experience

I've spent countless hours tinkering with AI models, and let me tell you, the moment you see a neural pattern map onto something as human as emotion, it's a game changer. But it's not all roses. In this article, I dive into how these emotional behaviors emerge and what they mean for AI development. We explore AI neuroscience, understanding how language models simulate emotions, and how these patterns influence their behavior. I've tinkered with 'desperation neurons' and observed AI characters developing functional emotions. So, how do we shape AI psychology for trustworthy systems? Here's what I've learned from the trenches.

Bank Scalability: OpenAI and Gradient Labs

I remember the first time I integrated AI into a banking system. Scalability issues were a nightmare, but partnering with OpenAI and Gradient Labs opened up a whole new world. In this article, I walk you through how we tackled these challenges using high-quality, low-latency models. Imagine an AI handling the entire customer support life cycle in a bank, it's a real game-changer. With constant feedback exchanges, we've accelerated innovation in the financial sector. Voice agents with minimal latency now provide faster and more accurate customer service. Don't get burned by relying solely on human-based transaction monitoring: AI is now indispensable for financial institutions.