Bank Scalability: OpenAI and Gradient Labs

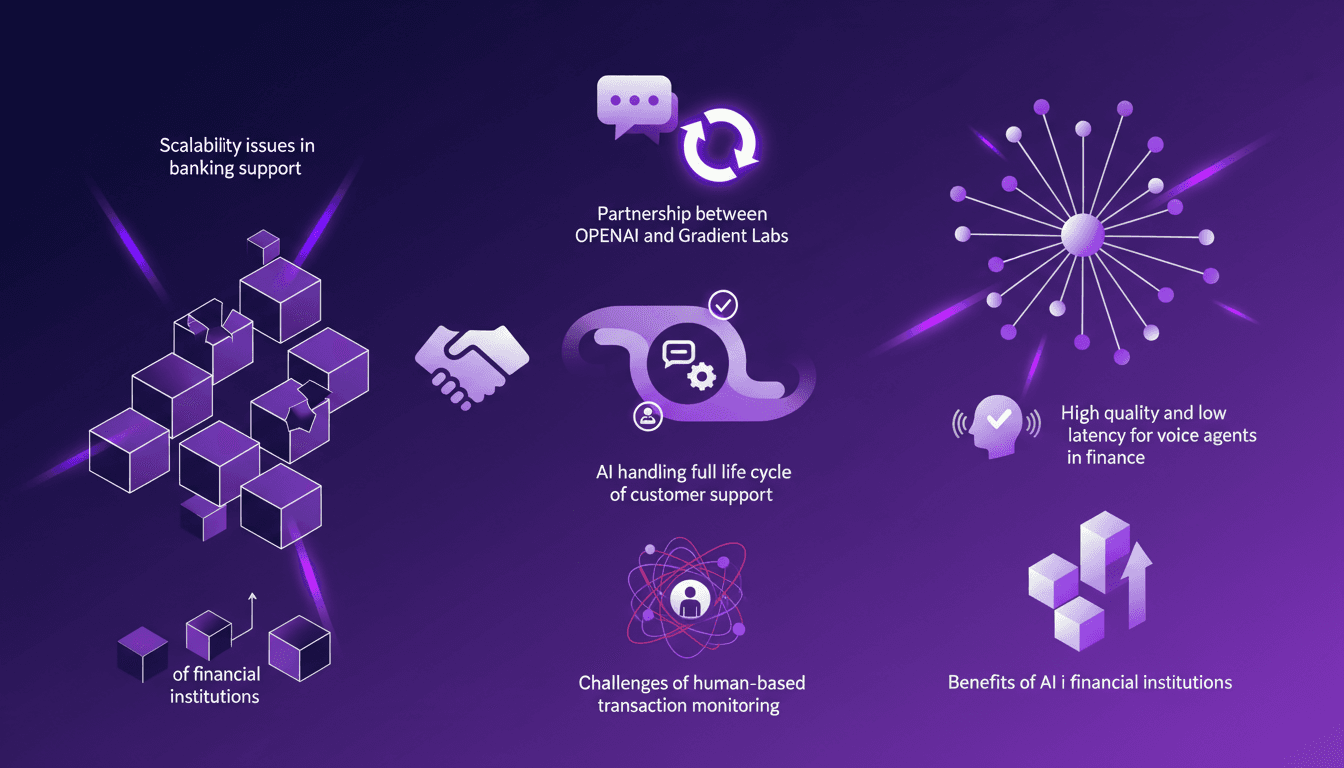


I remember the first time I tried to integrate an AI solution into a banking system. Scalability was a real headache, and I got burned a few times before I figured out how to handle the volume without crashing everything. Then I discovered OpenAI and Gradient Labs, and it was a game-changer. In this article, I'll show you how we tackled this challenge using high-quality, low-latency AI models. The idea is simple: an AI that can handle the entire customer support life cycle in a bank. Yes, it's possible. With regular feedback exchanges, we've been able to boost innovation in the financial sector. For voice agents, minimal latency is crucial, especially in finance where every millisecond counts. And frankly, financial institutions can no longer do without AI, especially when it comes to monitoring transactions.

Tackling Scalability in Banking Support

Scalability in banking support can cripple systems if it isn't managed properly. I've seen systems buckle under increased load. AI models, like those from OpenAI, offer a scalable solution, and frankly that changes the game. But watch out: the initial challenges include latency and model quality. What I found essential was partnering with domain experts like Gradient Labs to overcome these hurdles. First insight: you can't do it all alone.

"Banking support is impossible to scale with humans today."

When I started working with Gradient Labs, we quickly realized that their domain expertise perfectly complemented the power of OpenAI's models. It's like having a team of AI "super agents" handling complex issues without breaking a sweat.
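
To make the load problem concrete, here is a minimal sketch of one common way to keep a support backend from buckling during a traffic spike: cap the number of in-flight model calls with a semaphore. This is an illustration only, not a description of how OpenAI or Gradient Labs handle load. The names `answer_ticket` and `handle_all` and the limit of 3 are hypothetical, and `asyncio.sleep` stands in for a real model call.

```python
import asyncio


async def answer_ticket(ticket_id: int, limiter: asyncio.Semaphore, stats: dict) -> str:
    """Handle one ticket while respecting the concurrency cap."""
    async with limiter:
        stats["in_flight"] += 1
        stats["peak"] = max(stats["peak"], stats["in_flight"])
        await asyncio.sleep(0.01)  # placeholder for a real model call (assumption)
        stats["in_flight"] -= 1
        return f"ticket {ticket_id} answered"


async def handle_all(ticket_ids: list[int], max_concurrent: int = 3) -> tuple[list[str], int]:
    """Answer every ticket, never running more than max_concurrent calls at once."""
    limiter = asyncio.Semaphore(max_concurrent)
    stats = {"in_flight": 0, "peak": 0}
    replies = await asyncio.gather(
        *(answer_ticket(t, limiter, stats) for t in ticket_ids)
    )
    return list(replies), stats["peak"]


if __name__ == "__main__":
    replies, peak = asyncio.run(handle_all(list(range(10))))
    print(len(replies), "tickets answered, peak concurrency", peak)
```

The point of the cap is that extra requests wait their turn instead of piling onto the model endpoint all at once. The right limit depends on your provider's rate limits and your own latency targets.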

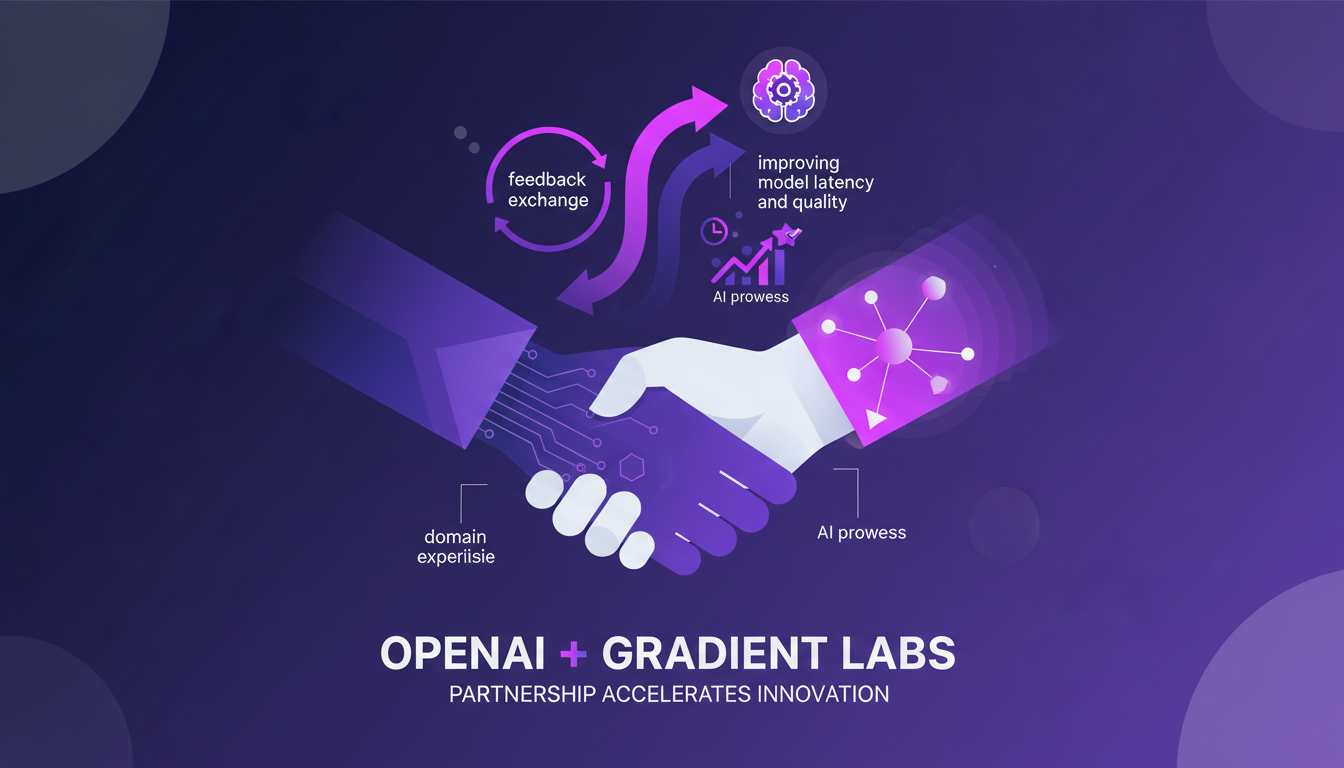

The Power of Partnership: OpenAI and Gradient Labs

Partnerships aren't just for show. They accelerate innovation through feedback exchange. With Gradient Labs, it's more than just a customer-provider relationship. They bring domain expertise; OpenAI brings top-notch AI. Together, we've worked on improving model latency and quality. Real-world impact: faster, more efficient customer support systems.

I recall a discussion with Gradient Labs where we exchanged insights about latency. It opened my eyes to optimization potential. They have a very pragmatic, results-oriented approach, and that's refreshing.

- Constant feedback for refining models

- Continuous improvement of latency

- Real impact on support system efficiency

Understanding Latency in AI Models

Latency is crucial for real-time applications like voice agents. OpenAI offers models that combine high quality with very low latency. Why is this important? Because low latency improves the customer experience in financial services. But remember, you have to balance model complexity against cost.

I've often seen teams get burned by aiming too high on model complexity, forgetting that latency is key. The article "Why Low Latency Matters in AI Voice Agents" explains this well.
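
One way to keep latency honest is to look at tail percentiles rather than the average, since a single slow response is what a caller actually notices. The sketch below is a minimal example using the nearest-rank percentile. The sample timings and the 300 ms budget are made-up values for illustration, not measurements of any OpenAI model.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of the data at or below it."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_budget(samples_ms: list[float], budget_ms: float, pct: float = 95) -> bool:
    """True if the chosen percentile stays within the latency budget."""
    return percentile(samples_ms, pct) <= budget_ms


# Hypothetical response times in milliseconds, with one slow outlier.
samples = [120, 135, 128, 140, 132, 125, 138, 130, 127, 900]

print(sum(samples) / len(samples))   # 207.5 (the mean hides how bad the outlier is)
print(percentile(samples, 50))       # 130
print(percentile(samples, 95))       # 900
print(meets_budget(samples, 300))    # False
```

Here the median looks healthy, but the 95th percentile blows through the budget, which is the signal that matters for a live voice conversation.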

Voice Agents Transforming Financial Institutions

Voice agents aren't just eye candy. They handle the full life cycle of customer interactions. AI-driven efficiency gains cut operational costs. However, latency needs to be low for seamless interactions. Watch out: integrating AI with existing systems isn't always a walk in the park.

The key is ensuring agents can manage the entire customer journey without hiccups. "One agent, one cycle" is the mantra.

- Cost reduction through AI efficiency

- Complex integration needed for smooth operation
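
The "one agent, one cycle" idea can be sketched as a single conversation object that the same agent carries through every stage. The stage names and their order below are assumptions made for illustration. They are not Gradient Labs' actual design, and a real agent would call a model and banking systems at each step.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    VERIFY = "verify identity"
    DIAGNOSE = "diagnose issue"
    RESOLVE = "resolve issue"
    FOLLOW_UP = "follow up"
    DONE = "done"


# Order in which the single agent walks the conversation (assumed for illustration).
NEXT_STAGE = {
    Stage.VERIFY: Stage.DIAGNOSE,
    Stage.DIAGNOSE: Stage.RESOLVE,
    Stage.RESOLVE: Stage.FOLLOW_UP,
    Stage.FOLLOW_UP: Stage.DONE,
}


@dataclass
class Conversation:
    customer_id: str
    stage: Stage = Stage.VERIFY
    history: list = field(default_factory=list)


def advance(conv: Conversation) -> Conversation:
    """Record the current stage as handled and move to the next one."""
    if conv.stage is Stage.DONE:
        return conv
    conv.history.append(conv.stage)
    conv.stage = NEXT_STAGE[conv.stage]
    return conv


def run_full_cycle(conv: Conversation) -> Conversation:
    """One agent carries the same conversation from first contact to DONE."""
    while conv.stage is not Stage.DONE:
        advance(conv)
    return conv


conv = run_full_cycle(Conversation(customer_id="c-1"))
print([stage.value for stage in conv.history])
```

Keeping the whole history on one object is what lets a single agent pick up context at every step instead of handing the customer from one system to another.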

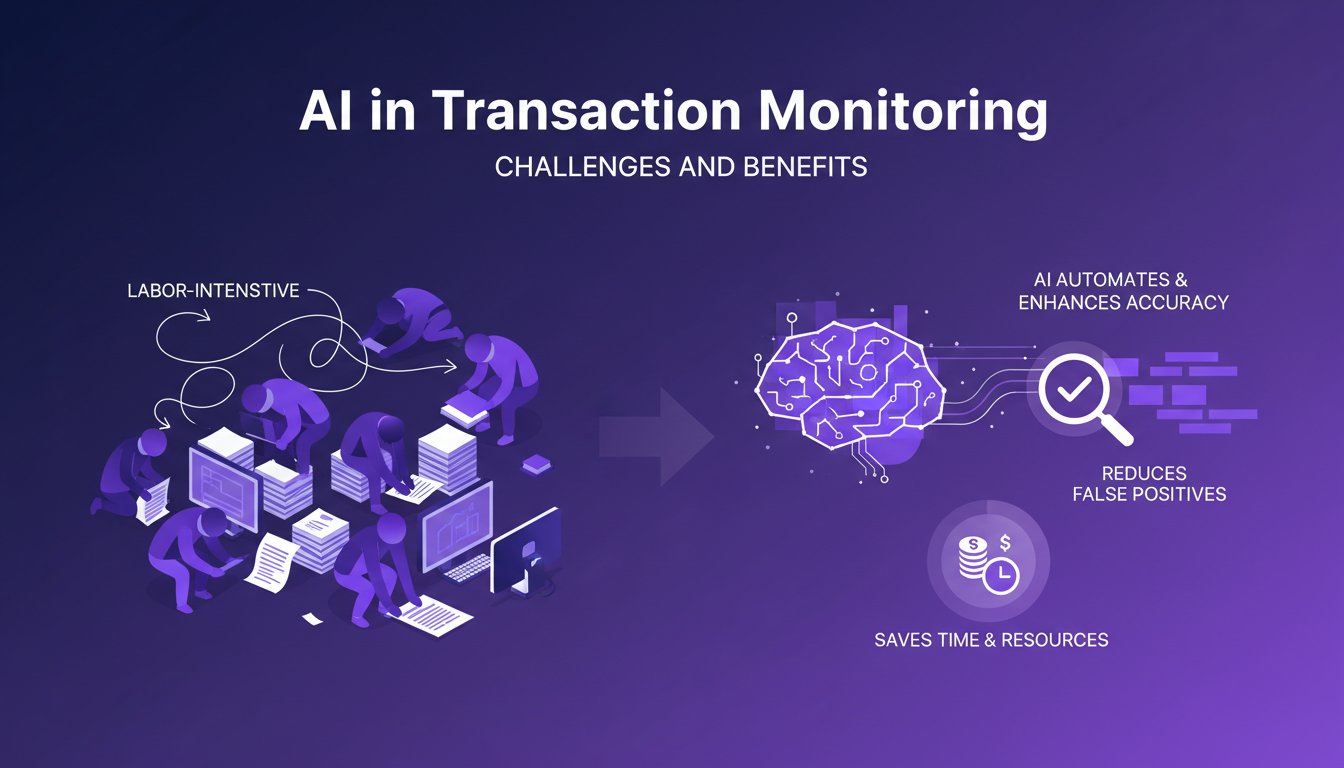

AI in Transaction Monitoring: Challenges and Benefits

Traditional transaction monitoring is labor-intensive. AI automates and enhances accuracy in detecting anomalies. Models reduce false positives, saving time and resources. Limitation: AI needs continuous updates to handle new fraud patterns.

Whenever I set up a new AI monitoring system, I remind myself it needs constant tweaking. Fraud patterns evolve, and AI must keep up. But the time and accuracy gains are worth the effort.

- Increased automation and accuracy

- Reduction in false positives

- Continuous updates needed to track evolving fraud
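
To show what "detecting anomalies" and "false positives" mean in practice, here is a minimal sketch that flags unusual transaction amounts with a modified z-score (based on the median and the median absolute deviation) and then measures the false positive rate against known labels. This is a simple statistical stand-in, far cruder than an AI model and not the method used by any vendor named in this article. The amounts, labels, and the 3.5 threshold are illustrative assumptions.

```python
from statistics import median


def flag_anomalies(amounts: list[float], threshold: float = 3.5) -> list[bool]:
    """Flag amounts whose modified z-score exceeds the threshold."""
    med = median(amounts)
    mad = median(abs(a - med) for a in amounts)
    if mad == 0:
        # All amounts are (nearly) identical, so nothing stands out.
        return [False] * len(amounts)
    return [0.6745 * abs(a - med) / mad > threshold for a in amounts]


def false_positive_rate(flags: list[bool], is_fraud: list[bool]) -> float:
    """Share of legitimate transactions that were wrongly flagged."""
    legit_flags = [flag for flag, fraud in zip(flags, is_fraud) if not fraud]
    if not legit_flags:
        return 0.0
    return sum(legit_flags) / len(legit_flags)


# Hypothetical card payments; only the last one is labeled as fraud.
amounts = [42.0, 38.5, 45.0, 40.0, 39.0, 44.0, 41.0, 5000.0]
is_fraud = [False] * 7 + [True]

flags = flag_anomalies(amounts)
print(flags)
print(false_positive_rate(flags, is_fraud))
```

Tracking the false positive rate over time is also a practical way to notice when fraud patterns have shifted and the detector needs updating.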

Ultimately, combining high-performing AI with strong partnerships like the one with Gradient Labs allows us to overcome scalability challenges in banking support. For more insights on AI improvement, check out the article "AI Improvement: Cutting Costs and Time."

Integrating AI into banking systems feels like turbocharging an already powerful engine. With partners like OpenAI and Gradient Labs, I'm scaling and streamlining operations in ways I couldn't before. But don't overlook the nuances of latency and transaction monitoring; those can trip you up. Here are my main takeaways:

- Scalability: By using a single AI agent to handle the full lifecycle, I optimize both human and technical resources.

- Low Latency: OpenAI's models combine high quality with fast response times, but watch out for bottlenecks elsewhere in your stack.

- Feedback-Driven Innovation: The feedback loop between OpenAI and Gradient Labs accelerates innovation, but staying alert to feedback is key for real-time adjustments.

Consider how these AI advancements can be applied to your organization to enhance customer support and operational efficiency. Think of it as a lever for your digital transformation. I highly recommend watching the full "OpenAI x Gradient Labs Founder Spotlight" video on YouTube for deeper insights.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

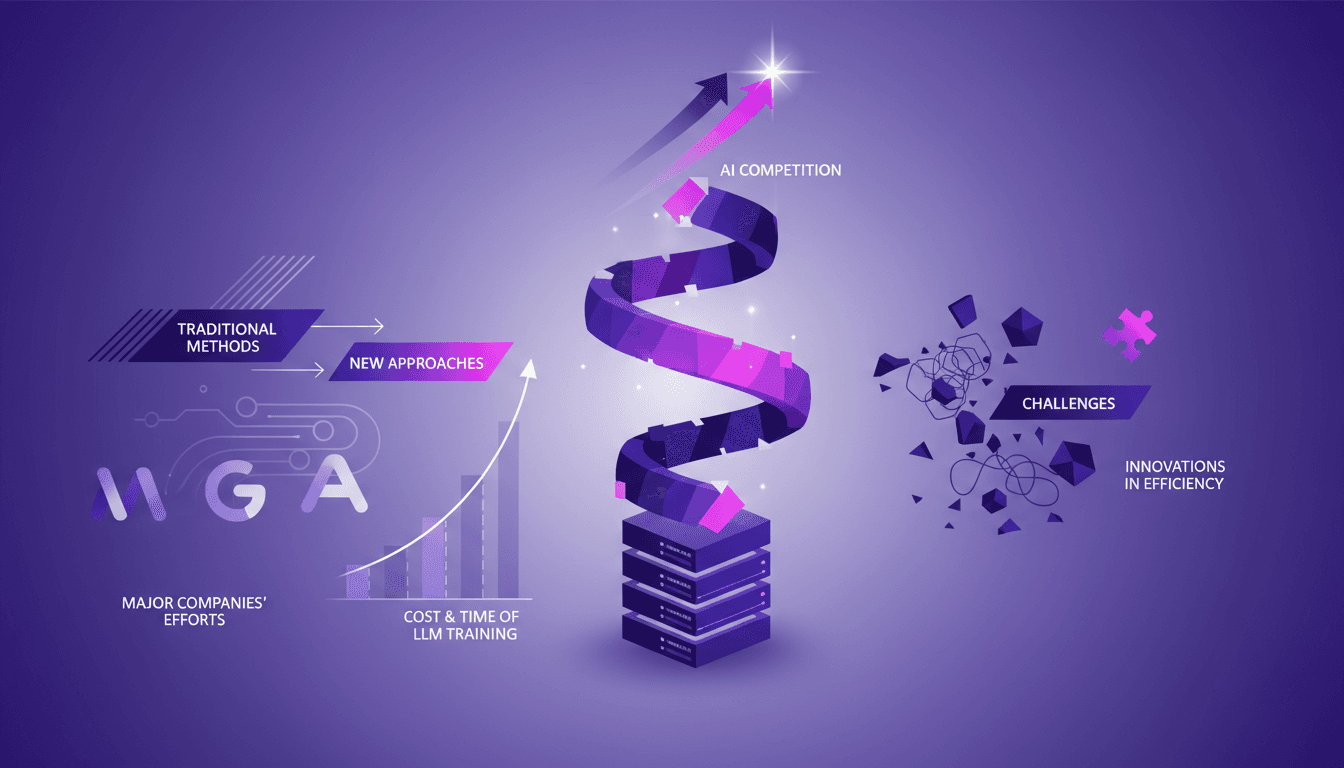

AI Improvement: Cutting Costs and Time

I remember the first time I heard about 'recursive self-improvement' in AI—it sounded like sci-fi. But diving into it, I realized it's the future—if we can manage the costs and time. Training an LLM costs hundreds of millions and takes months. I'm right in the thick of this race to develop faster and cheaper solutions. Let's break down how we're tackling this, the challenges in model training, and why traditional methods just won't cut it anymore. Trust me, this is practical stuff, not corporate fluff.

Codex: Revolutionizing Code Review at RAMP

When I first integrated Codex with GPT 5.4 at RAMP, I knew we were onto something big. The way it slashed code review times from hours to minutes was a game changer. That's not just talk. By pairing Codex with GPT 5.4, we've not only optimized our workflows but also developed an AI-driven on-call assistant that changed how we tackle complex problems. Codex has become the industry standard for code review, and at RAMP, our engineers swear by it. Let's dig into how this setup works and why it's winning over everyone here.

AI in Sales: Mastering the VRP=C Strategy

I've spent years refining my sales techniques, but nothing has surprised me quite like the impact AI is having on our methods. We often talk about automation, but what interests me is how AI can truly transform our sales conversations at a deep level. I used AI to close a sale where humans traditionally stumble. The key here is the VRP=C strategy. In this article, I'm going to break down how AI is redefining our approach, not just in terms of speed, but also in terms of precision and strategy. Get ready to discover how adjusting conversation pace can make all the difference.

Building a $14K/Month App in 4 Months: My Journey

I built an app that hit $14K a month in just four months. Sounds unreal? Let me show you how I did it, from the tech stack to influencer marketing strategies. In today's tech world, launching an app isn't just about coding. It's smart marketing, efficient development, and a dash of hustle. Join me on my journey with the Locked app, from my development path, gamification strategies, to influencer deal negotiations. I've been burned by common pitfalls so you don't have to. If you're looking to grasp the gears of a successful launch, this is your playbook.

Ethical Smartphones: Challenges and Solutions

I've spent years building tech that respects privacy and sustainability. Let me walk you through how we developed Murena OS, an ethical smartphone solution. In an era where smartphones are becoming advertising fortresses, developing an ethical alternative is both a challenge and a necessity. Here's how we tackled it, integrating data protection and regulatory pressures, while harnessing the power of open source software. It's a journey where every line of code aims to safeguard our digital integrity.