Automate Technical Content Creation in AI

I've been in the AI teaching space for over four years, and one thing is clear: building your own deep research agents can be a real game changer. But don't just throw some code together; it's about crafting workflows that make sense and deliver results. In this article, I'll walk you through how I set up my own deep research agents, the workflows that drive them, and how I balance autonomy with control. We'll talk about practical strategies to avoid overfitting and achieve high F1 scores, all while integrating these systems into your daily operations. Automating technical content creation in AI, defining agentic systems, and optimizing AI evaluators are all areas we'll dig into. It's a delicate balance, but I promise that the impact on your daily efficiency can be direct and impressive.

Automating Technical Content Creation

First things first: understanding the needs of your technical audience is crucial. Trust me, I've been burned before by not knowing my audience well enough. I often use tools like GPT to generate initial content drafts, but I never take these drafts at face value. Every piece goes through my hands for manual refinement. AI can be a fantastic ally, but it has its limits. The context window is one of them: whatever doesn't fit in the prompt is invisible to the model, which can be a real trap if you're not careful.

The trick is to set up feedback loops to improve content quality over time. It's not just about speed, but quality. Automation shouldn't mean cutting corners.

- Use AI for initial drafts but refine manually.

- Set up feedback loops for content improvement.

- Watch out for context limits in AI models.
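To make the context-limit point concrete, here is a minimal sketch of splitting source material into chunks that each fit a token budget before drafting. The function name `split_into_chunks`, the `max_tokens` parameter, and the word-count token estimate are all assumptions for illustration; in practice you would swap in your model's real tokenizer.

```python
def split_into_chunks(paragraphs, max_tokens, count_tokens=None):
    """Group paragraphs into chunks that each stay within a token budget.

    A single paragraph larger than the budget still becomes its own chunk,
    so the caller should check for that case separately.
    """
    if count_tokens is None:
        # Crude assumption: one token per whitespace-separated word.
        count_tokens = lambda text: len(text.split())

    chunks, current, used = [], [], 0
    for para in paragraphs:
        cost = count_tokens(para)
        # Start a new chunk when adding this paragraph would exceed the budget.
        if current and used + cost > max_tokens:
            chunks.append(current)
            current, used = [], 0
        current.append(para)
        used += cost
    if current:
        chunks.append(current)
    return chunks


notes = ["a b c", "d e", "f g h i"]
print(split_into_chunks(notes, max_tokens=5))
# [['a b c', 'd e'], ['f g h i']]
```

Splitting on paragraph boundaries rather than raw character counts keeps each chunk readable on its own, which matters when a human refines the draft afterwards.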

Building and Utilizing Deep Research Agents

When I talk about deep research agents, I'm referring to systems capable of conducting complex research autonomously. But beware: don't get seduced by the latest shiny tech without evaluating its relevance to your project. I've wasted hours on tools that seemed promising but offered nothing concrete. What works is a clear workflow for data collection and analysis. Agentic systems should be able to function with minimal supervision, but watch out for overfitting: an agent tuned too closely to the examples you tested it on can look great in evaluation and stumble on new inputs.

It's easy to get trapped by too much autonomy, which can lead to erratic results.

- Clearly define what a deep research agent means for your project.

- Select the right tools without getting lost in novelty.

- Establish clear workflows for data collection.

- Watch out for overfitting with too much autonomy.

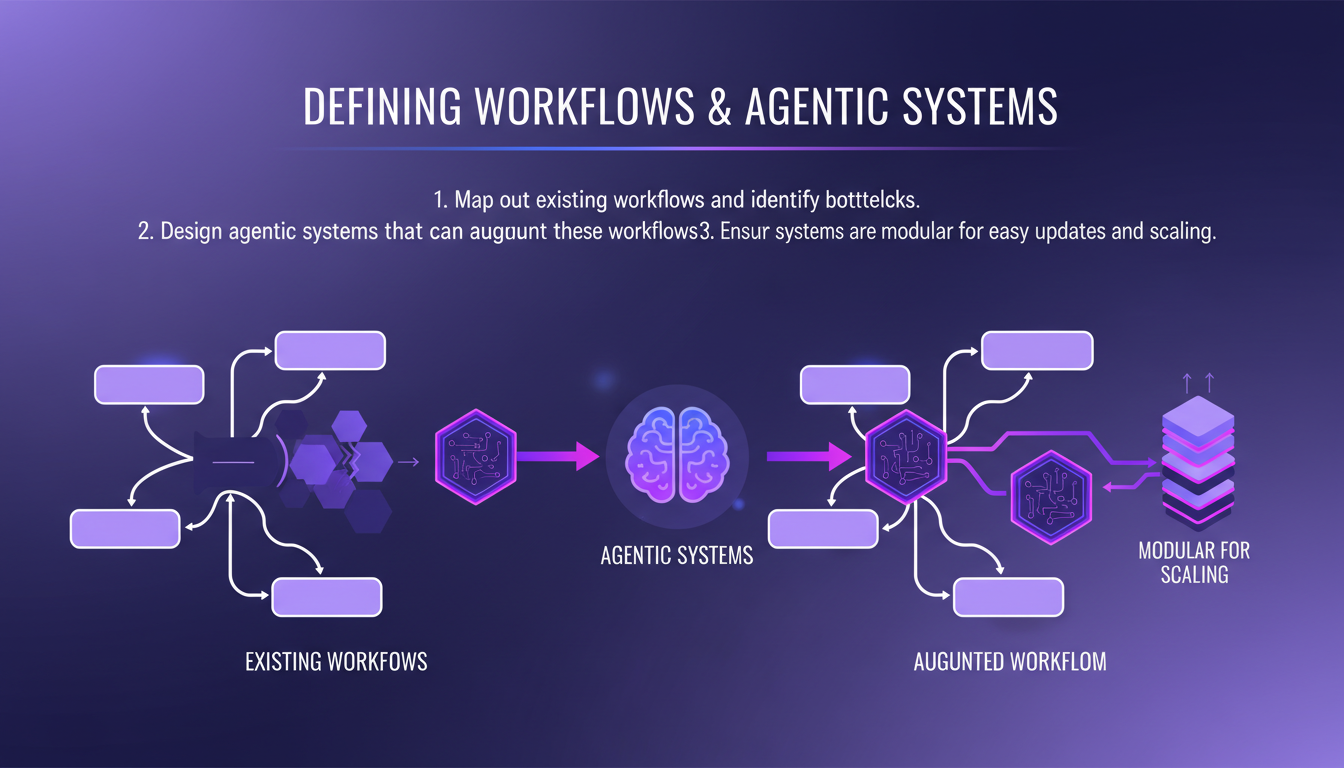

Defining Workflows and Agentic Systems

The first step is to map out your existing workflows and identify bottlenecks. Then, I design agentic systems to augment these workflows. These systems must be modular for easy updates and scaling. But balance is key; too much autonomy or too much control can both be problematic.

Finally, consider the context limits that can affect agent performance. Sometimes it's just a matter of knowing when a system needs more human intervention.

- Map your workflows to identify bottlenecks.

- Design modular agentic systems.

- Balance autonomy and control.

- Consider context limits.
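One way to picture the autonomy/control balance is a modular pipeline where each step runs on its own but hands off to a human when it is unsure. This is only a sketch under assumptions: `StepResult`, `run_pipeline`, the self-reported `confidence` field, and the `min_confidence` threshold are hypothetical names, not part of any specific agent framework.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class StepResult:
    output: str
    confidence: float  # 0.0 to 1.0, self-reported by the step (an assumption)


Step = Callable[[str], StepResult]


def run_pipeline(task: str, steps: List[Step],
                 review: Callable[[str], str],
                 min_confidence: float = 0.7) -> str:
    """Run steps in order; route low-confidence outputs through a human review hook."""
    data = task
    for step in steps:
        result = step(data)
        if result.confidence < min_confidence:
            # Control: a person (or stricter checker) fixes the output before it moves on.
            data = review(result.output)
        else:
            # Autonomy: the output flows to the next step untouched.
            data = result.output
    return data


outline = lambda text: StepResult(text + " -> outline", 0.9)
claims = lambda text: StepResult(text + " -> claims", 0.4)
print(run_pipeline("topic", [outline, claims], review=lambda s: s + " [reviewed]"))
# topic -> outline -> claims [reviewed]
```

Because every step shares the same signature, you can add, remove, or reorder steps without touching the rest, and raising or lowering `min_confidence` is the single dial between autonomy and control.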

Evaluating System Performance and Optimization

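To evaluate system performance, I use metrics like the F1 score. It is the harmonic mean of precision and recall, so it only stays high when both are high. But watch out for overfitting: a score measured on examples the system was tuned on tells you little, so diversify your training and evaluation data. AI evaluators can provide real-time feedback, which is crucial for optimizing both speed and accuracy.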

Remember, evaluation is an ongoing process, not a one-time task. I've had to go back and readjust my models after new evaluations more times than I can count.

- Use metrics like F1 score to evaluate performance.

- Avoid overfitting by diversifying training data.

- Implement AI evaluators for real-time feedback.

- Optimize for both speed and accuracy.
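For reference, F1 is computed as 2 · precision · recall / (precision + recall). Below is a small sketch that scores an evaluator's labels against human labels; the function name `precision_recall_f1` and the choice of "pass" as the positive class are assumptions made for the example.

```python
def precision_recall_f1(predicted, actual, positive="pass"):
    """Return (precision, recall, f1) for one positive class."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")

    pairs = list(zip(predicted, actual))
    tp = sum(p == positive and a == positive for p, a in pairs)  # true positives
    fp = sum(p == positive and a != positive for p, a in pairs)  # false positives
    fn = sum(p != positive and a == positive for p, a in pairs)  # false negatives

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


evaluator = ["pass", "pass", "fail", "fail"]
human = ["pass", "fail", "pass", "fail"]
print(precision_recall_f1(evaluator, human))
# (0.5, 0.5, 0.5)
```

Computing this on examples that were never used to tune the evaluator is what keeps the number honest.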

LinkedIn Writing Workflows and Data Collection

LinkedIn is a goldmine for data collection. I use it to analyze successful posts and inform my own content strategy. AI can help draft posts, but I always refine them with personal insights. The critique system I set up uses two labels, "pass" and "fail", based on a three-sentence analysis. It's simple but effective for quickly evaluating content.

Again, iterating based on real-world feedback and performance data is essential. Never rest on your laurels.

- Use LinkedIn for data collection.

- Analyze successful posts to inform your strategy.

- Set up a simple but effective critique system.

- Constantly iterate based on feedback and performance data.
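The pass/fail critique described above can be kept honest with a small validation layer. This is a sketch, not the actual system: the `Critique` record, the `parse_critique` helper, the strict three-sentence check, and the naive punctuation-based sentence splitter are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass

LABELS = {"pass", "fail"}


@dataclass(frozen=True)
class Critique:
    label: str
    analysis: str


def count_sentences(text):
    """Naive count: split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([p for p in parts if p])


def parse_critique(label, analysis, expected_sentences=3):
    """Reject critiques with an unknown label or the wrong analysis length."""
    label = label.strip().lower()
    if label not in LABELS:
        raise ValueError(f"label must be one of {sorted(LABELS)}, got {label!r}")
    found = count_sentences(analysis)
    if found != expected_sentences:
        raise ValueError(f"expected {expected_sentences} sentences, got {found}")
    return Critique(label=label, analysis=analysis.strip())


critique = parse_critique(
    "Fail",
    "The hook is clear. The body runs too long. There is no call to action.",
)
print(critique.label)
# fail
```

Forcing a binary label keeps the results easy to aggregate, and the short written analysis gives you something to read when a verdict looks wrong.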

Building and utilizing deep research agents isn't just about the tech; it's about weaving them into our workflows to enhance efficiency and impact. First, I define my agentic systems and automate technical content creation. Then, I balance autonomy with control, because too much leeway means losing your grip on the output. Here are my key takeaways:

- Integrate agents into your workflows to maximize efficiency.

- Continuously evaluate system performance to prevent drift.

- Start small, iterate, and refine based on real-world feedback.

Looking ahead, integrating these agents could transform how we approach technical research. So, ready to build your own research agents? For a deeper dive, watch the full video to see how Louis-François Bouchard and his team do it: https://www.youtube.com/watch?v=mYSRn6PC1mc

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

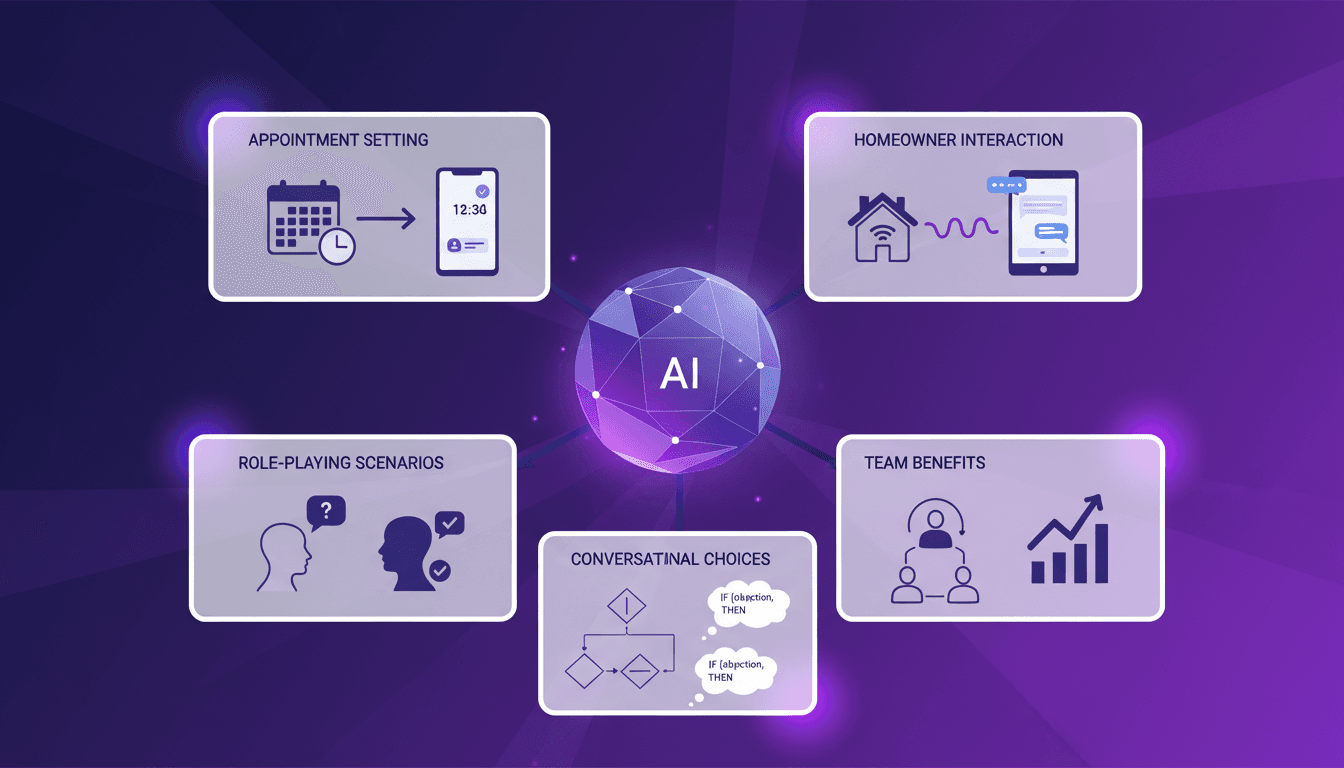

Handling Sales Objections with AI: Experience

I remember the first time I set up an AI lead manager to handle sales objections. It felt like handing over the keys to a new driver. The potential was massive, but I needed to see it in action to believe it. In today's lightning-fast sales world, efficiently responding to objections is crucial. AI lead managers are stepping up, promising to streamline processes and save time. But how do they really perform under pressure? I'll walk you through my integration process, role-playing scenarios, and interactions with homeowners. The benefits for teams are tangible, but watch out for the limits!

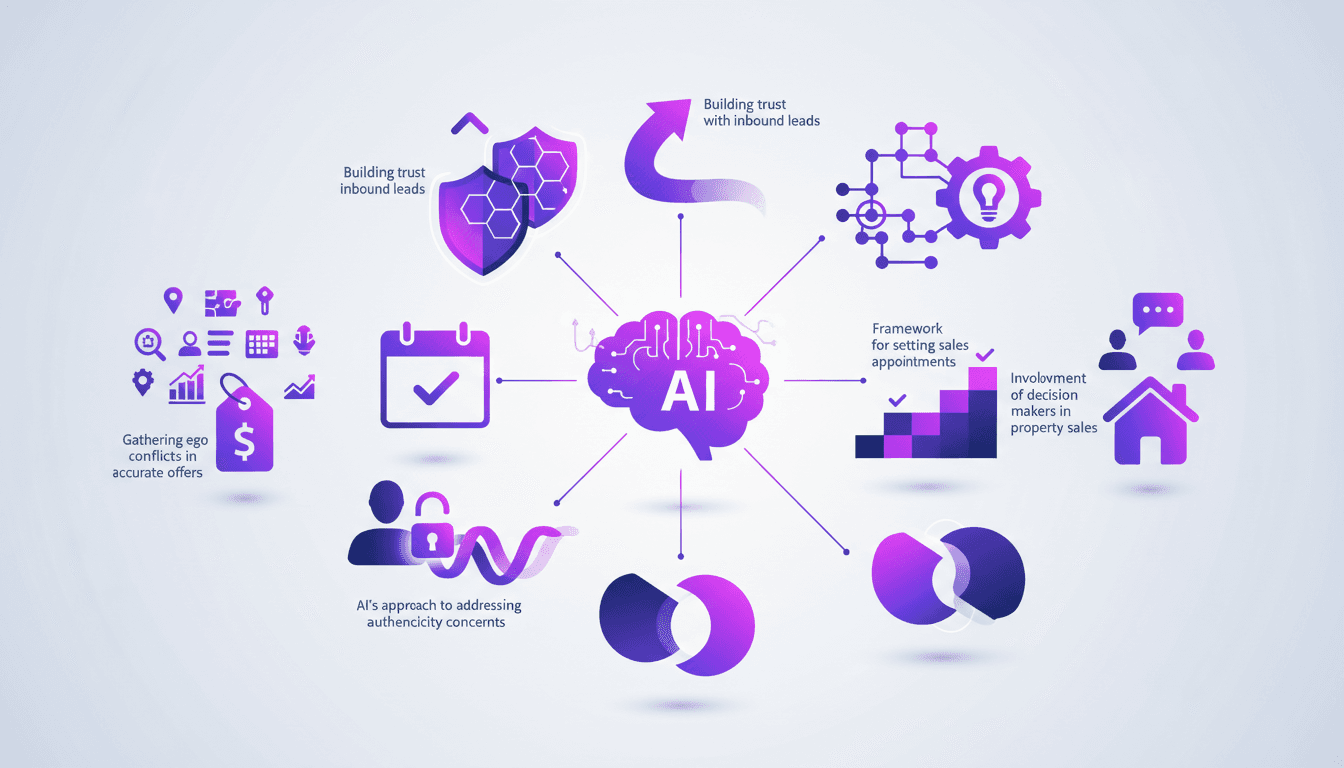

Handling Sales Objections with AI: My Workflow

I remember the first time I let AI handle sales objections—it was a leap of faith, but the results were eye-opening. Integrating AI into my sales process was a game changer. In an industry where objections are part and parcel, the idea that AI can smooth over these hurdles is enticing. Let me walk you through how I integrated AI to tackle the toughest objections and build trust with inbound leads. AI can really be transformative, but watch out for pitfalls like addressing authenticity concerns and involving decision-makers. I learned to orchestrate sales appointments and avoid ego conflicts, all while using AI to gather accurate information. Join me as I share how I turned daunting objections into opportunities.

Paperclip: Efficiently Orchestrate Your AI Agents

I remember the first time I heard about Paperclip—it sounded like a game changer for AI orchestration. Having been burned by overly complex systems in my career, I was skeptical. But diving into it, I found a tool that could actually streamline my AI workflows without the usual headaches. Paperclip, an open-source orchestrator, is designed to efficiently manage AI agents, and I've integrated it into my operations to avoid technical migraines. We're talking about seamless orchestration, handling different agent types like Gemini and Hermes, and an engaged community that's constantly pushing development forward. In short, if you're looking to optimize your AI-driven operations, Paperclip might just be the tool that changes everything.

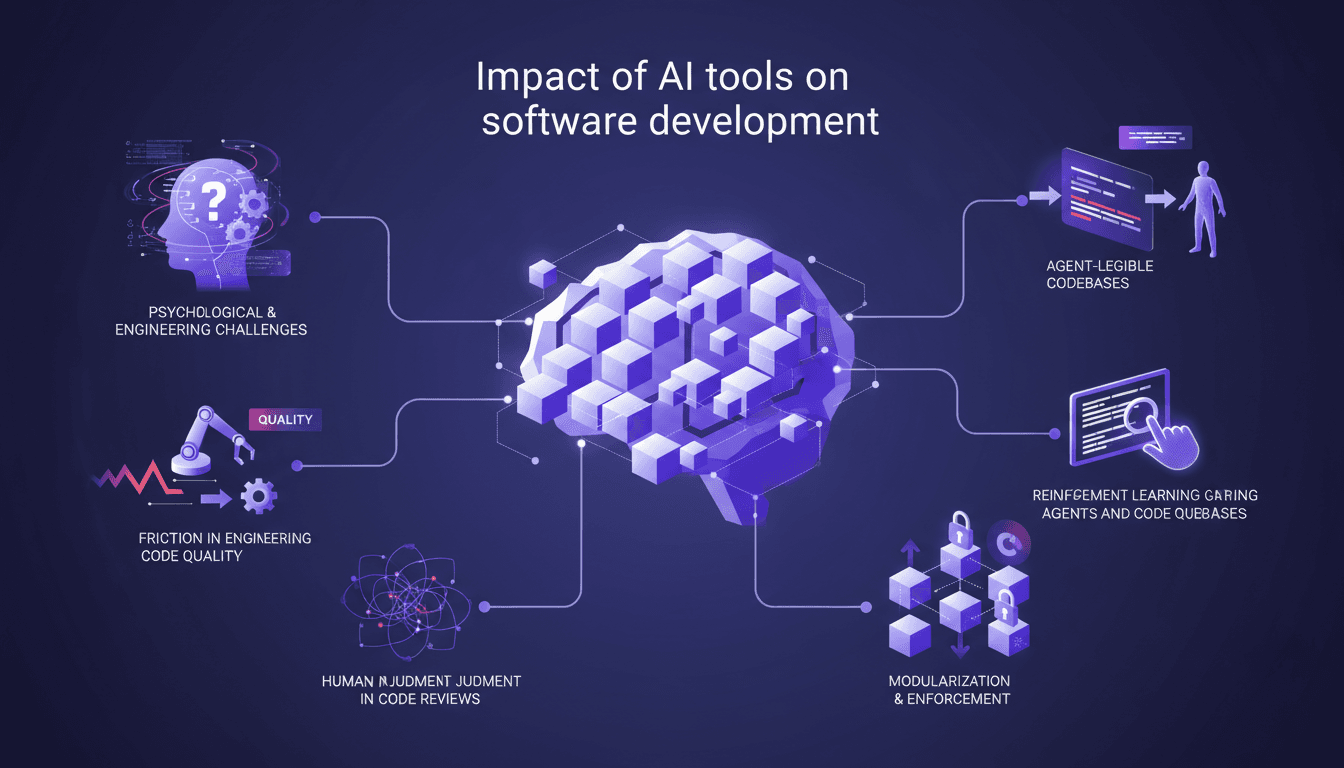

AI's Impact: Challenges and Solutions in Dev

With over 20 years in software development, my last 12 months immersed in AI agents have been eye-opening. The friction isn't just technical—it's personal. It's about making judgment calls when AI tools suggest code changes that don't sit right. Armin Ronacher and Cristina Poncela Cubeiro illuminate AI's impact on development, covering both psychological and technical challenges. Their insights are crucial for integrating AI into your workflow while preserving human judgment.

Robotics Breakthroughs: A 10-Day Revolution

I've been in the robotics game for years, and let me tell you, the last 10 days have been wild. We’re talking about a seismic shift in humanoid robotics that nobody's really discussing yet. In this article, I'll walk you through what's happening on the ground: incredible advancements in humanoid robotics, the real tech behind AI vision systems, and what all this means for our industry. From Real Botics to Unitri, companies are pushing the boundaries of what robots can do, and it's not just tech talk—it's about real-world applications and market dynamics.