2025: Voice Agents, How I'm Preparing

I still remember the first time I integrated a chat agent with a voice interface. It felt like giving a soul to my lines of code. With 2025 being hailed as the year of chat agents, it's high time we treated voice as a first-class medium. This isn't science fiction anymore: voice agents are already making interactions more natural and engaging. I've experienced the advantages of voice over text firsthand, and the natural flow is hard to match. But watch out, this transition doesn't happen overnight. In this talk, I'll show you how to prepare for the shift: first, understanding the options available with the Voice Engine product; then, exploring the higher abstraction bundles that make voice agents easier for developers to implement. Trust me, we're on the brink of an era where human-machine interactions become as fluid as our daily conversations. Ready to give your code a voice?

Why 2025 is the Year of Chat Agents

Looking ahead to 2025, it's clear that chat agents are set to dominate the landscape. I've witnessed firsthand how projects have transitioned from simple chat interfaces to full-fledged voice agents over recent years. Voice brings a layer of personality and accessibility that purely text-based interactions often lack. With technologies like Text to Speech (TTS) and Speech to Text (STT) advancing at breakneck speed, we can now offer interactions in thousands of voices and languages. This opens up unprecedented opportunities to reach a global audience.

What's truly fascinating is that a single prompt can now analyze and deploy a voice agent. This has been a game changer for many companies, especially those keen to integrate AI more deeply into their apps. It's almost like everyone has to become AI-first to stay competitive. But beware, this transition doesn't come without its challenges.

It's a great tool, but watch out for context limits: beyond 100K tokens, things get tricky.
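
One way to stay under that kind of limit is to trim older turns before each request. Here's a minimal sketch, assuming a conversation stored as a list of message dicts and a crude four-characters-per-token estimate. The helper names and the estimate are my own assumptions, not part of any voice product; swap in your model's real tokenizer for accurate counts.

```python
from typing import Dict, List

# Assumption: ~4 characters per token is a crude estimate, not a real tokenizer.
CHARS_PER_TOKEN = 4
# Budget taken from the 100K-token figure mentioned above.
MAX_CONTEXT_TOKENS = 100_000


def estimate_tokens(text: str) -> int:
    """Rough token estimate; replace with your model's tokenizer for real counts."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def trim_history(
    messages: List[Dict[str, str]], budget: int = MAX_CONTEXT_TOKENS
) -> List[Dict[str, str]]:
    """Keep the most recent messages whose combined estimate fits the budget."""
    kept: List[Dict[str, str]] = []
    used = 0
    # Walk backwards so the newest turns are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

Dropping whole turns from the oldest end keeps the logic simple. If early context matters for your agent, summarizing the dropped turns is a common alternative.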

Voice vs Text: Natural Interaction Advantages

I have to say, voice is inherently more interactive and engaging than text. As a developer, I see it all the time: real-time communication better mimics human conversation. This significantly reduces the cognitive load on users. Sometimes, voice can be much more efficient for quick tasks.

However, there are trade-offs. Not all environments are conducive to voice interactions. For instance, in noisy settings, text might still have its place. But for anything involving real-time integration and quick tasks, voice is a natural choice.

- Voice reduces cognitive load.

- Ideal for quick and interactive tasks.

- Not suitable for all environments.

Getting Started with the Voice Engine

When I integrated the Voice Engine into my system, I was impressed by its capabilities. The V3 model is particularly powerful for text to speech, and it's easy to customize. I had it integrated in just a few steps.

The key advantages are scalability and ease of use. But watch out: over-customization can lead to performance issues, so it's crucial to find the right balance between customization and efficiency.

- V3 model for advanced features.

- Easy integration and customization.

- Beware of over-customization.

Developer Options for Voice Integration

When it comes to integrating voice capabilities, you mainly have two options: SDK integration or higher abstraction bundles. The SDK offers more control, but requires deeper technical knowledge. On the other hand, bundles simplify the process, making them ideal for rapid deployment.

Personally, I chose my approach based on the specific needs of the project. But caution is needed, as balancing control with simplicity can be tricky.

- SDK for greater control.

- Bundles for simplified deployment.

- Balancing control and simplicity is crucial.
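
To make the SDK-versus-bundle trade-off concrete, here's a minimal sketch, assuming a voice turn flows through speech to text, a reply step, and text to speech. None of these names come from the Voice Engine SDK; they're hypothetical stand-ins, with stub stages so the example runs without any audio or model dependency.

```python
from typing import Callable

# Hypothetical stage signatures; a real SDK will define its own.
SpeechToText = Callable[[bytes], str]
Reply = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


def handle_turn(audio: bytes, stt: SpeechToText, reply: Reply, tts: TextToSpeech) -> bytes:
    """SDK-style: the caller wires every stage and can swap any of them."""
    transcript = stt(audio)
    answer = reply(transcript)
    return tts(answer)


class VoiceAgentBundle:
    """Bundle-style: stages are chosen once, callers only see `respond`."""

    def __init__(self, stt: SpeechToText, reply: Reply, tts: TextToSpeech) -> None:
        self._stt = stt
        self._reply = reply
        self._tts = tts

    def respond(self, audio: bytes) -> bytes:
        return handle_turn(audio, self._stt, self._reply, self._tts)


# Stub stages (illustrative only): text stands in for audio.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_reply(text: str) -> str:
    return f"You said: {text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")
```

With the first form you pass the stages on every call, so you can swap one per request. With the second you configure once and call `VoiceAgentBundle(fake_stt, fake_reply, fake_tts).respond(audio)`. That's the control-versus-simplicity trade in miniature.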

Leveraging Higher Abstraction Bundles

The higher abstraction bundles for voice agents allow for quick deployment without deep technical dives. I recently piloted these bundles in a project, and they truly simplified agent orchestration with LLMs and tool calling.

However, these solutions might not be suitable for highly customized or niche applications. It's essential to thoroughly evaluate your needs before opting for this route.

- Quick and simplified deployment.

- Agent orchestration with LLMs and tool calling.

- Limitations for highly customized applications.
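
For readers who haven't seen tool calling up close, here's a minimal sketch of the dispatch step a bundle would handle for you. It assumes the model emits a tool call as JSON of the form `{"name": ..., "arguments": {...}}`. That format, the registry, and the `get_opening_hours` tool are all hypothetical and only there for illustration.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tool: name and data are illustrative only.
def get_opening_hours(day: str) -> str:
    hours = {"saturday": "10:00-16:00", "sunday": "closed"}
    return hours.get(day.lower(), "09:00-18:00")


# Registry mapping the names the model may call to Python functions.
TOOLS: Dict[str, Callable[..., Any]] = {"get_opening_hours": get_opening_hours}


def dispatch_tool_call(raw_call: str) -> str:
    """Run one tool call emitted by the model as JSON and return its result as text.

    Assumed format: {"name": "<tool>", "arguments": {...}}.
    """
    call = json.loads(raw_call)
    tool = TOOLS.get(call["name"])
    if tool is None:
        return f"Unknown tool: {call['name']}"
    return str(tool(**call.get("arguments", {})))
```

In a voice agent, the returned text would typically be fed back to the LLM, which then phrases the spoken answer. Returning a message for unknown tools instead of raising keeps the conversation going when the model asks for something that doesn't exist.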

Voice agents are set to change how we interact with technology, and 2025 is already being called the year of chat agents. Why? Because with text-to-speech models like V3, you can create incredibly engaging user experiences. Imagine thousands of voices and languages at your fingertips. But watch out: don't get lost in the myriad of options. Choose the ones that fit seamlessly into your workflow.

- Start experimenting with voice integration today so you don't miss the boat.

- Leverage the V3 text-to-speech model and try different voices.

- Keep in mind that there are limits, particularly in terms of complexity and integration cost.

Personally, I believe these agents will transform our way of working soon. So why wait? Watch the full video by Luke Harries (https://www.youtube.com/watch?v=DCZZ3AJKzuc) to really dive into the topic. You'll see, it's a real game changer.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has built expertise in AI solution architecture that he now brings to large organizations (Atos, BNP Paribas, beta.gouv). His work has two focuses: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Scaling AI Skills: A Builder's Guide

I've been in the trenches, building AI workflows that don't just work but scale. Let's talk about skills in AI—those discrete units of work that are game-changers if you know how to manage them right. In a world where AI is reshaping industries, understanding how to efficiently develop, manage, and share these skills is crucial. This isn't about theory; it's about real-world application. Nick Nisi and Zack Proser from WorkOS delve into the structure and components of skills, context management, confidence scoring, and how to share them within teams. If you're tired of empty talk and want tools that deliver in day-to-day operations, this is where you want to be.

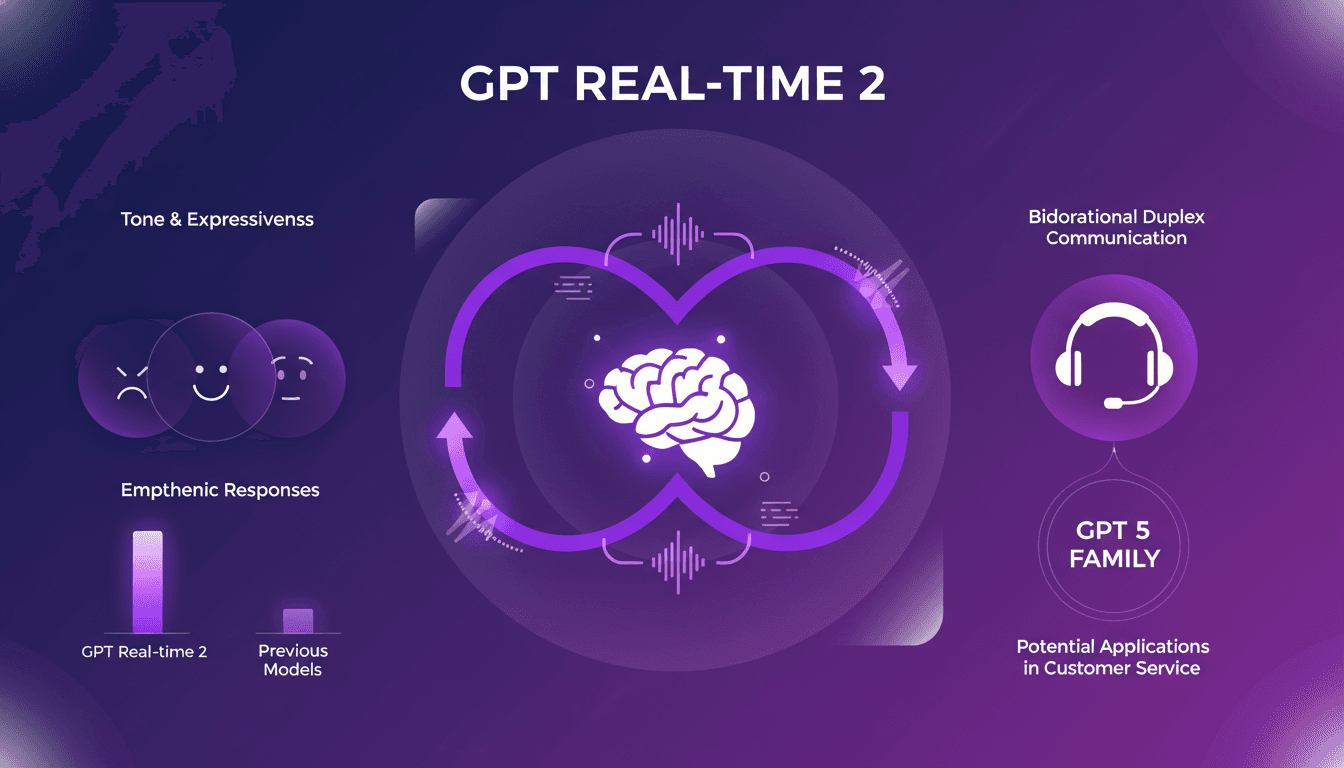

Integrate GPT Realtime-2 into Your Voice Agents

I've been hands-on with GPT Realtime-2, and let me tell you, it's a game changer for voice agents. When I first integrated it, the fluidity and responsiveness blew me away. As someone who's in the trenches with AI models, I know the pain points of latency and lack of expressiveness. GPT Realtime-2 directly addresses these, and it's not just hype. The bidirectional duplex communication and improved tone expressiveness are significant. Responses are more empathetic, conversations more lifelike. Compared to previous models, it's a leap forward. In customer service, the potential applications are vast. Integrated into the GPT 5 family, this model redefines the limits of what voice agents can achieve.

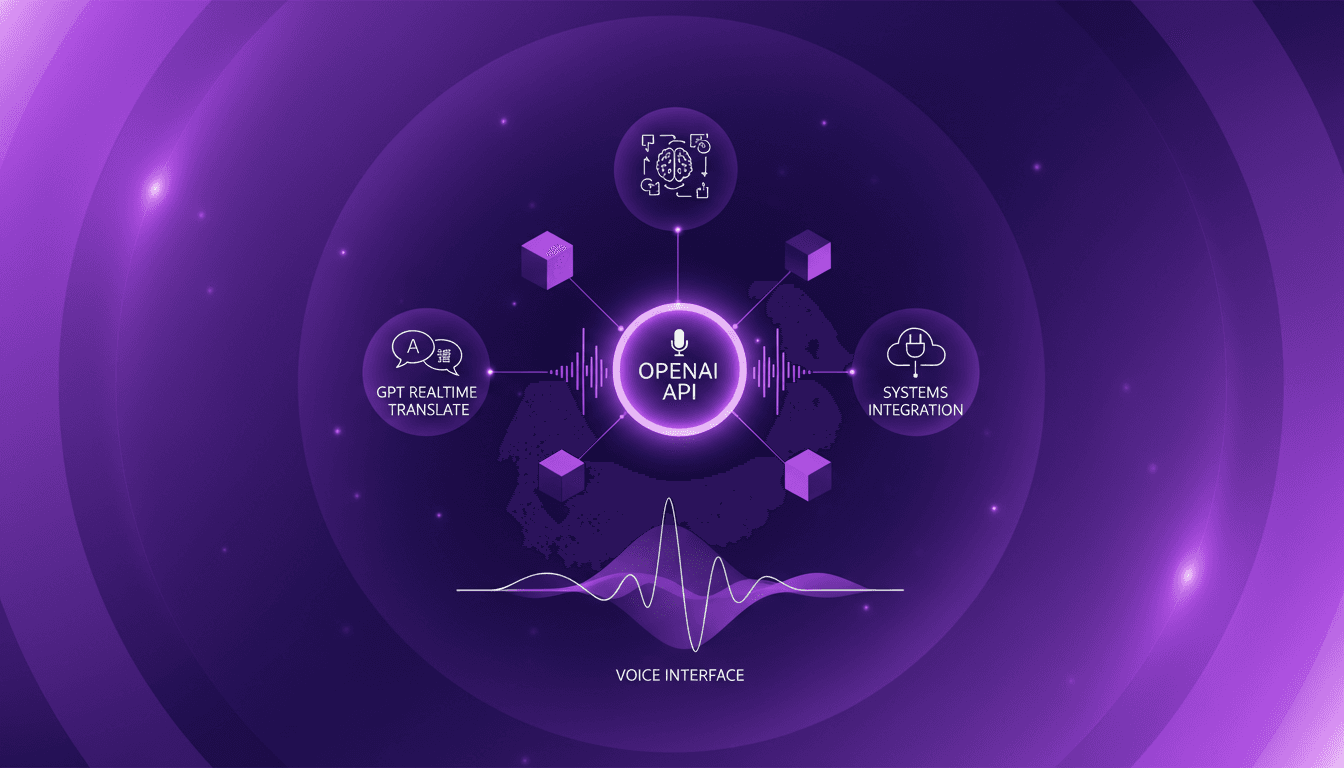

OpenAI Audio Models: Real-Time Integration

I still remember the first time I integrated voice models into my system. It was utter chaos, but the results were a game changer. Now, with OpenAI's new real-time audio models, we're taking it to a whole new level. Imagine translating across 70 languages live or using voice agents with intelligent reasoning. In this article, I'll show you how these models can revolutionize your workflow. From real-time translation to intelligent voice agents, every integration step is crucial. Watch out for technical terms and language switching—it can become a headache if mishandled. But when orchestrated well, voice becomes the primary interface for interaction. Ready to transform your system? Let's dive in!

Transformers in Vision: Evolution and Challenges

I remember the first time I transitioned from CNNs to Transformers. It felt like stepping into a new world, full of potential but also pitfalls. Here, I'll walk you through how these models evolved and what it means for us in the field. Transformers have revolutionized vision tasks, and understanding their evolution and application is crucial for effective deployment. I'll take you through my journey, highlighting key moments and practical insights. From ViT and pretraining techniques to Swin and ConvNeXt models, down to the deployment challenges of the SAM Series Models, and how Roboflow's RF100VL dataset impacts model flexibility, we've got a lot to cover.

Opening a Dance Studio: Act Now or Never

I've been in Phil's shoes, dreaming big while hesitating. When it comes to opening a dance studio, he has to act now, not tomorrow. I've witnessed firsthand how confidence and accountability can turn dreams into reality. Phil has this burning aspiration, but without action, it'll just remain a wish. The key lies in turning that urgent need into a success driver, leveraging social media influence to gather the necessary support. The urgency to act is real, and it requires quick decision-making. If you've spent four years dreaming, it's time to move into action.