Optimizing Networks for AI: Challenges and Solutions

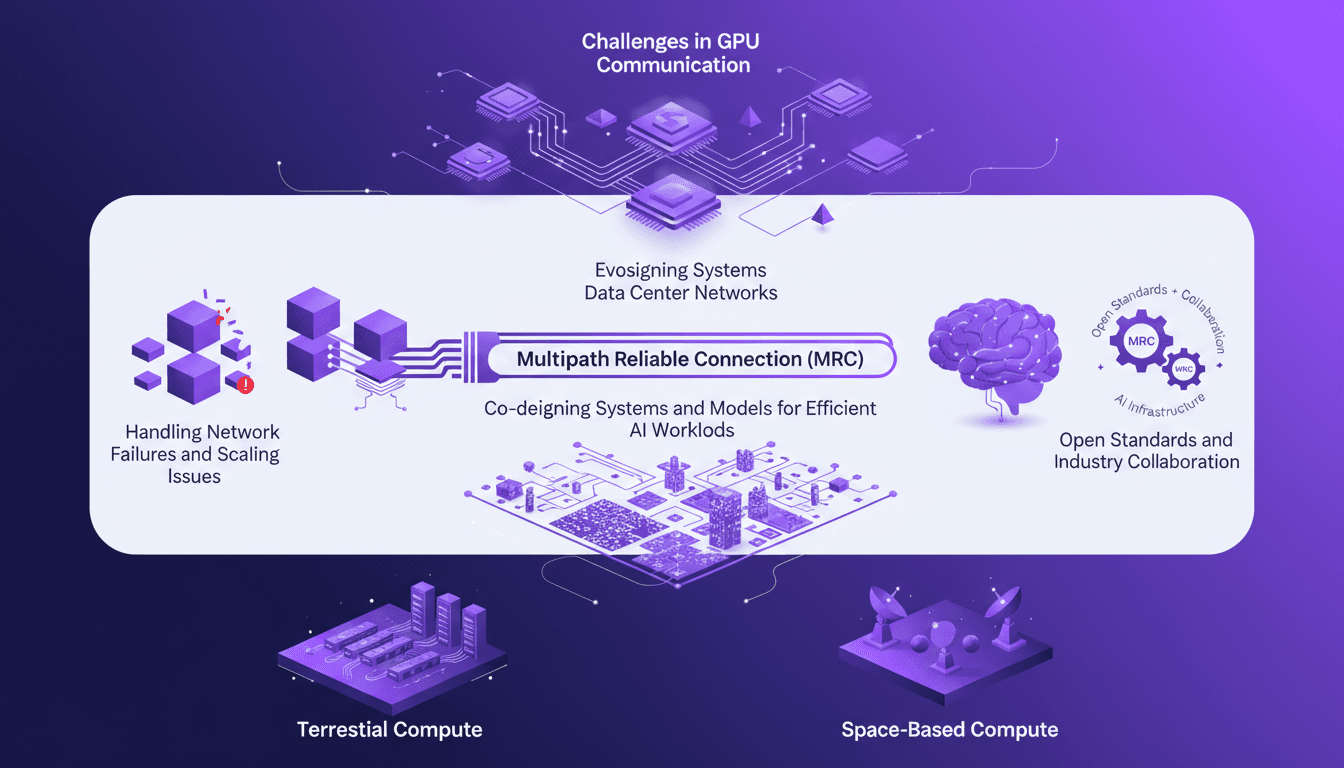

I've spent countless hours wrestling with the intricacies of AI model training, and if there's one thing I've learned, it's that our current network infrastructure just doesn't cut it. It's like driving a Formula 1 car through city traffic: AI models need racetracks, not congestion. With AI evolving at breakneck speed, it's time to rethink how we design and build data center networks. First up are the challenges in GPU communication: sometimes ten flows pick the same path and the network crawls to a halt. Then there's Multipath Reliable Connection (MRC) and its impact. We also need to co-design systems and models for truly efficient AI workloads. Finally, there's handling network failures and scaling issues, the question of vertical integration, and why open standards and industry collaboration are crucial. Let's dive into this podcast and see why AI needs a new kind of supercomputer network.

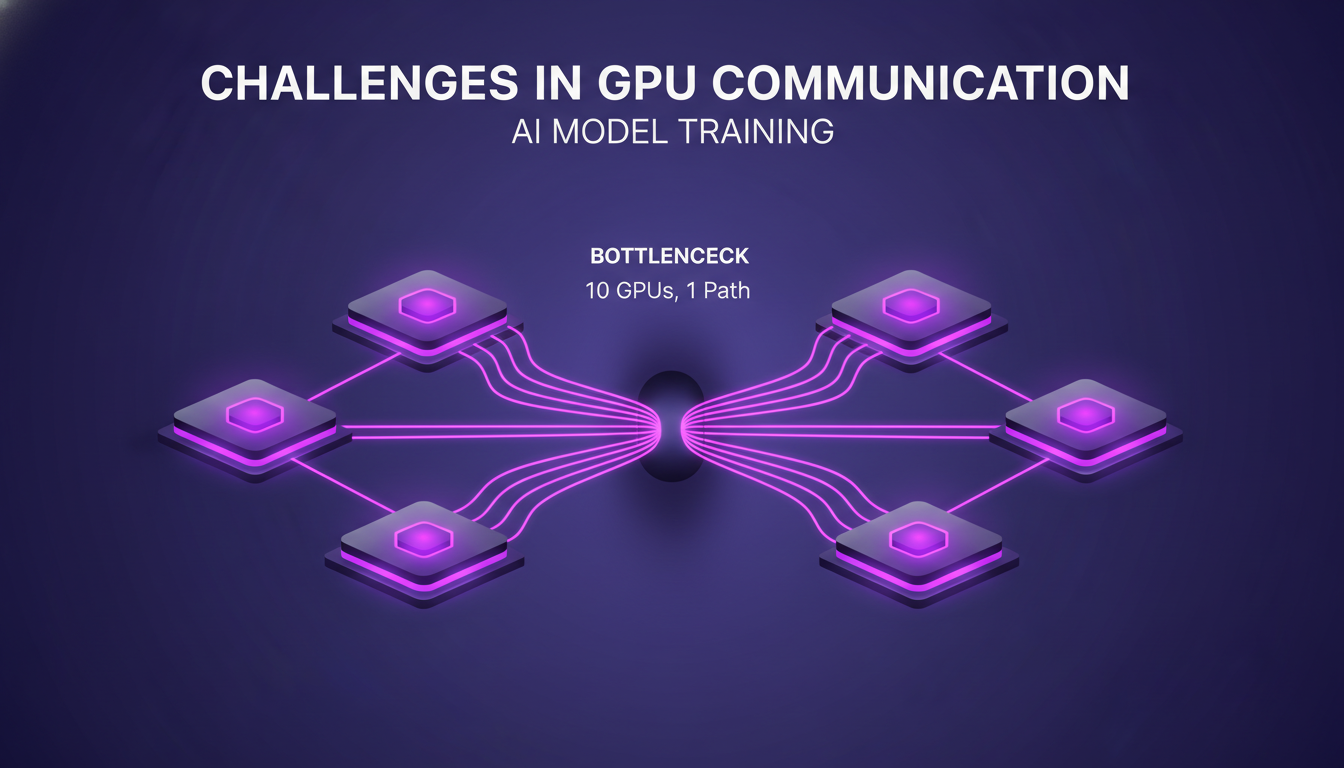

Challenges in GPU Communication for AI Model Training

I remember my first experience training AI models on GPU clusters. It was like trying to herd elephants through a garden gate. High-speed, low-latency communication between GPUs is crucial, but current networks often create bottlenecks that slow down training. When I saw ten flows pick the same path, I knew it was going to be a disaster: that one link becomes the bottleneck and performance plummets. In this field, every millisecond counts. Even a slight increase in latency on a link is noticeable, and it can be enough for that link to stop being used.
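A minimal sketch of why one crowded path hurts so much, assuming static per-flow hashing (as in ECMP-style load balancing) and flows that split a shared link's bandwidth equally. The function names, flow IDs, SHA-256 hash, 1 MB flow size, and 100 Gb/s link speed are illustrative assumptions, not details from the podcast. Because a collective operation only finishes when its slowest flow does, the most-loaded path sets the pace for every GPU.

```python
import hashlib
from collections import Counter


def pick_path(flow_id: str, num_paths: int) -> int:
    """Static per-flow path choice: hash the flow ID, take it modulo the path count."""
    digest = hashlib.sha256(flow_id.encode()).digest()
    return int.from_bytes(digest[:4], "big") % num_paths


def path_loads(flow_ids: list[str], num_paths: int) -> Counter:
    """Count how many flows land on each path."""
    return Counter(pick_path(f, num_paths) for f in flow_ids)


def slowest_flow_seconds(loads: Counter, bytes_per_flow: int, link_gbps: float) -> float:
    """Completion time of the slowest flow, assuming flows on a link share it equally.

    k flows of B bytes on one link of C bit/s all finish after k * B * 8 / C seconds.
    """
    worst = max(loads.values())
    return worst * bytes_per_flow * 8 / (link_gbps * 1e9)


if __name__ == "__main__":
    # Hypothetical flow IDs: 16 GPUs each sending to a peer over 16 equal-cost paths.
    flows = [f"gpu{i}->gpu{i + 16}" for i in range(16)]
    loads = path_loads(flows, num_paths=16)
    ideal = slowest_flow_seconds(Counter({p: 1 for p in range(16)}), 1_000_000, 100.0)
    hashed = slowest_flow_seconds(loads, 1_000_000, 100.0)
    print(f"busiest path carries {max(loads.values())} flows")
    print(f"ideal: {ideal * 1e3:.3f} ms, hashed: {hashed * 1e3:.3f} ms")
```

With one flow per path, a 1 MB transfer on a 100 Gb/s link takes 0.08 ms; if ten flows collide on the same path, all ten take 0.8 ms, and every GPU waiting on that collective waits with them.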

So what's the solution? Part of it is exploring alternatives to traditional Ethernet standards and rethinking how our networks are designed to support these massive workloads. That is where the evolution of data center networks comes in.

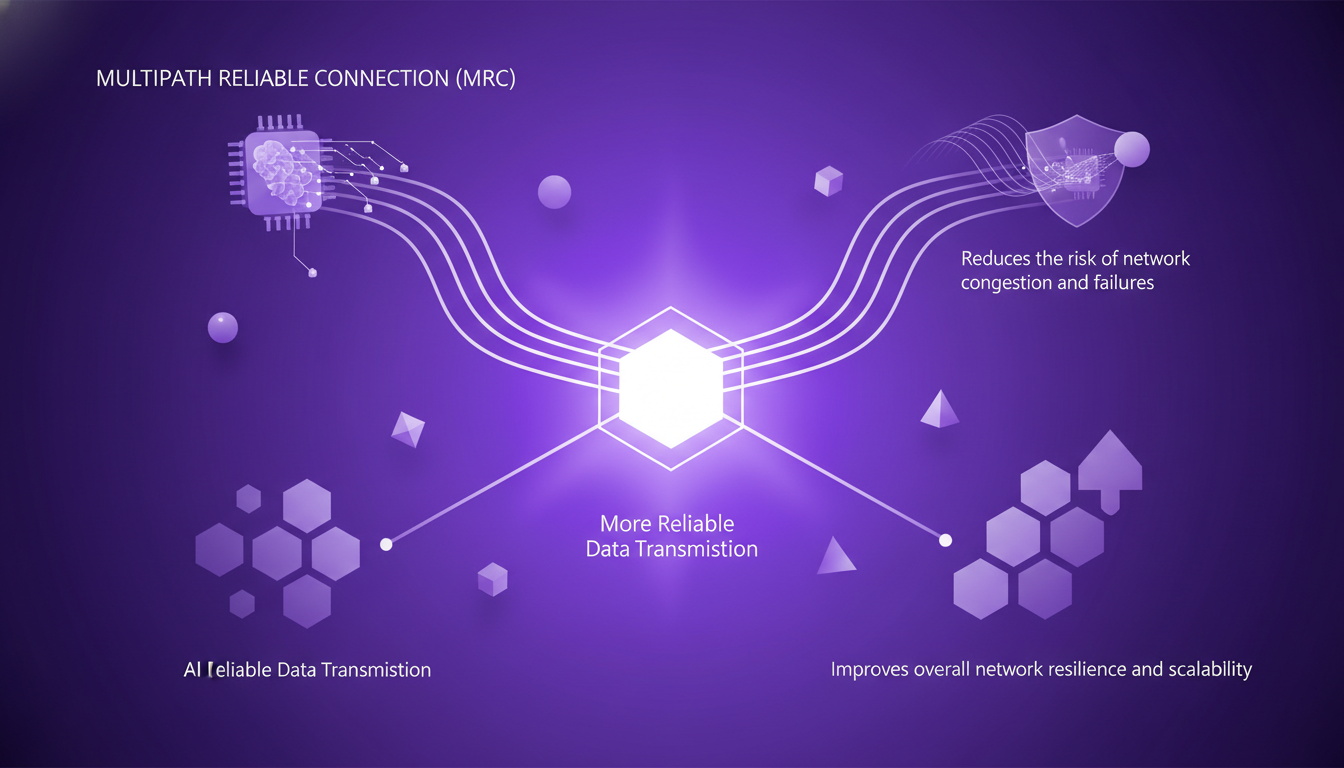

Evolution and Design of Data Center Networks

I've always believed that data centers must evolve in step with the needs of AI workloads. Designing networks that can handle immense data throughput is a constant challenge. Recently, I discovered that Multipath Reliable Connection (MRC) could be a real game changer: by spreading traffic over multiple paths, it offers a more reliable connection, which is crucial for large-scale data center networks.

IPv6 Segment Routing (SRv6) adds another layer to this discussion by improving routing efficiency. However, even with these innovations, you always have to weigh cost against performance.
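To make the segment-routing idea concrete: in SRv6 the sender places an ordered list of segments (IPv6 addresses) in a Segment Routing Header, so the source, rather than per-hop hashing, decides the path. The sketch below models only a simplified endpoint ("End") behavior in the spirit of RFC 8754 and RFC 8986: the segment list is stored in reverse order, a Segments Left counter points at the active segment, and the destination address is rewritten at each segment endpoint. The class and function names and the 2001:db8:: documentation addresses are illustrative; real routers also check hop limits, flags, and TLVs.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class SRv6Packet:
    dst: ipaddress.IPv6Address
    # Stored in reverse order, as in the SRH: segment_list[0] is the final segment.
    segment_list: list[ipaddress.IPv6Address]
    segments_left: int


def build_packet(path: list[str]) -> SRv6Packet:
    """Encode a source-chosen path; the first hop becomes the initial destination."""
    segments = [ipaddress.IPv6Address(a) for a in reversed(path)]
    last = len(segments) - 1
    return SRv6Packet(dst=segments[last], segment_list=segments, segments_left=last)


def process_end(pkt: SRv6Packet) -> bool:
    """Simplified 'End' behavior at the node that owns pkt.dst.

    Returns True if the packet was steered to the next segment, False if this node
    is the final segment and the packet should be handed to the upper layer.
    """
    if pkt.segments_left == 0:
        return False
    pkt.segments_left -= 1
    pkt.dst = pkt.segment_list[pkt.segments_left]
    return True


def walk(pkt: SRv6Packet) -> list[str]:
    """Follow the packet through every segment endpoint, recording each destination."""
    hops = [str(pkt.dst)]
    while process_end(pkt):
        hops.append(str(pkt.dst))
    return hops


if __name__ == "__main__":
    pkt = build_packet(["2001:db8::1", "2001:db8::2", "2001:db8::3"])
    print(walk(pkt))  # ['2001:db8::1', '2001:db8::2', '2001:db8::3']
```

The reverse ordering lets each endpoint find the next segment with a single decrement and index, without rewriting the list.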

Multipath Reliable Connection (MRC) and Its Impact

MRC is a tool I've recently started integrating, and the results are impressive. It makes data transmission across the network more reliable, reducing the risk of congestion and failures, which improves overall resilience and scalability. Compared to traditional single-path connections, MRC offers a level of reliability I hadn't seen before.

But watch out: integrating MRC into existing infrastructure isn't without its challenges. Be ready to adjust and tune your setup to get the most out of this technology.
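The podcast doesn't spell out MRC's wire protocol, so what follows is only a sketch of the general multipath-reliable idea, not MRC itself: split a message into sequence-numbered packets, spray them round-robin across several paths, reassemble them in order at the receiver regardless of arrival order, and retransmit whatever went missing over the paths that still work. The names (`spray`, `reassemble`, `retransmit`), the round-robin policy, and the simulated failure of path 2 are assumptions for illustration.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Packet:
    seq: int        # position of this chunk in the original message
    path: int       # which network path carried it
    payload: bytes


def spray(message: bytes, mtu: int, paths: list[int]) -> list[Packet]:
    """Split the message into MTU-sized chunks and assign them round-robin to paths."""
    chunks = [message[i:i + mtu] for i in range(0, len(message), mtu)]
    return [Packet(seq, paths[seq % len(paths)], chunk) for seq, chunk in enumerate(chunks)]


def reassemble(received: list[Packet], expected: int) -> tuple[Optional[bytes], list[int]]:
    """Rebuild the message in sequence order, or report the missing sequence numbers."""
    by_seq = {p.seq: p.payload for p in received}
    missing = [s for s in range(expected) if s not in by_seq]
    if missing:
        return None, missing
    return b"".join(by_seq[s] for s in range(expected)), []


def retransmit(original: list[Packet], missing: list[int], healthy: list[int]) -> list[Packet]:
    """Resend lost chunks, spreading them over the paths that are still healthy."""
    missing_set = set(missing)
    lost = [p for p in original if p.seq in missing_set]
    return [Packet(p.seq, healthy[i % len(healthy)], p.payload) for i, p in enumerate(lost)]


if __name__ == "__main__":
    msg = bytes(range(256)) * 40              # 10,240-byte message -> 10 packets
    sent = spray(msg, mtu=1024, paths=[0, 1, 2, 3])
    failed_path = 2                           # simulate a link failure on path 2
    arrived = [p for p in sent if p.path != failed_path]
    random.shuffle(arrived)                   # multipath delivery arrives out of order
    data, missing = reassemble(arrived, expected=len(sent))
    print("missing after failure:", missing)  # [2, 6]
    resent = retransmit(sent, missing, healthy=[0, 1, 3])
    data, missing = reassemble(arrived + resent, expected=len(sent))
    print("recovered intact:", data == msg)   # True
```

Sequence numbers are what make spraying safe: the receiver never depends on packets arriving in order, so a slow or failed path costs a retransmission rather than a stalled connection.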

Handling Network Failures and Scaling Issues

Nothing is more frustrating than watching AI training grind to a halt because of a network failure; it costs time and resources. I've learned that anticipating and mitigating these failures is crucial. Adding more GPUs isn't always the solution. Sometimes it makes things worse, because the network isn't prepared for that scale.

Focusing on open standards and industry collaboration can really help here. It's about finding the right balance between vertical integration and interoperability.
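A back-of-the-envelope sketch of why scale alone can make things worse: if each of N network components independently fails during a job with some small probability p, the chance that at least one fails is 1 - (1 - p)^N, which climbs quickly as N grows. The independence assumption and the example value of p are hypothetical, chosen only to show the shape of the curve, not measured figures.

```python
def prob_any_failure(p: float, n: int) -> float:
    """P(at least one of n independent components fails), each failing with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability in [0, 1]")
    return 1.0 - (1.0 - p) ** n


if __name__ == "__main__":
    p = 1e-5  # hypothetical per-link failure probability over one job
    for n in (1_000, 10_000, 100_000):
        print(f"{n:>7} links -> {prob_any_failure(p, n):.1%} chance of at least one failure")
```

Under these assumed numbers, the chance of at least one failure goes from about 1% at 1,000 links to about 9.5% at 10,000 and about 63% at 100,000, which is why failure handling has to be designed in rather than bolted on.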

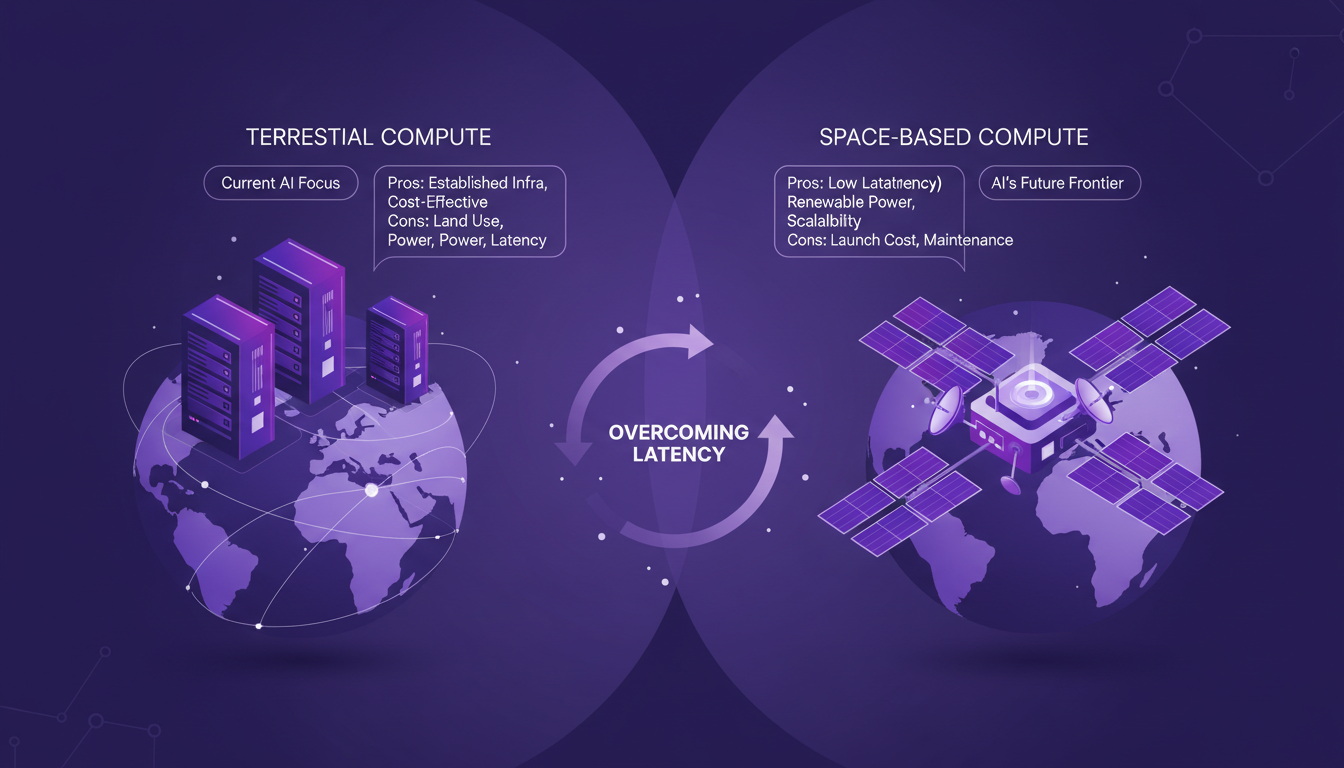

Terrestrial vs. Space-Based Compute Centers

Now, let's explore a more futuristic idea: space-based compute centers. The idea is appealing: eliminate terrestrial latency issues by moving compute into space. But is it really feasible? Cost and technological feasibility remain major hurdles.

These centers might play a role in the future, but for now the challenges are numerous.

Revolutionizing our AI infrastructure takes more than just adding GPUs. First, tackling communication challenges is key. I've seen it firsthand: if ten flows pick the same path, the network slows down, and within milliseconds everyone abandons that path. Next, evolving our data centers is vital. I design my networks to be more adaptable, and innovations like Multipath Reliable Connection (MRC) are true game changers when properly leveraged. But watch out, there are limits: systems and models need to be co-designed in sync to truly optimize AI workloads.

Looking ahead, it's on us to rethink these systems for more efficient AI. I urge you to join the conversation on how we can collectively drive these changes forward. Check out Episode 18 of the OpenAI Podcast to dive deeper into these insights. Let's build the future of AI infrastructure together.

Link: YouTube


Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now applies at large organizations (Atos, BNP Paribas, beta.gouv). He works on two fronts: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

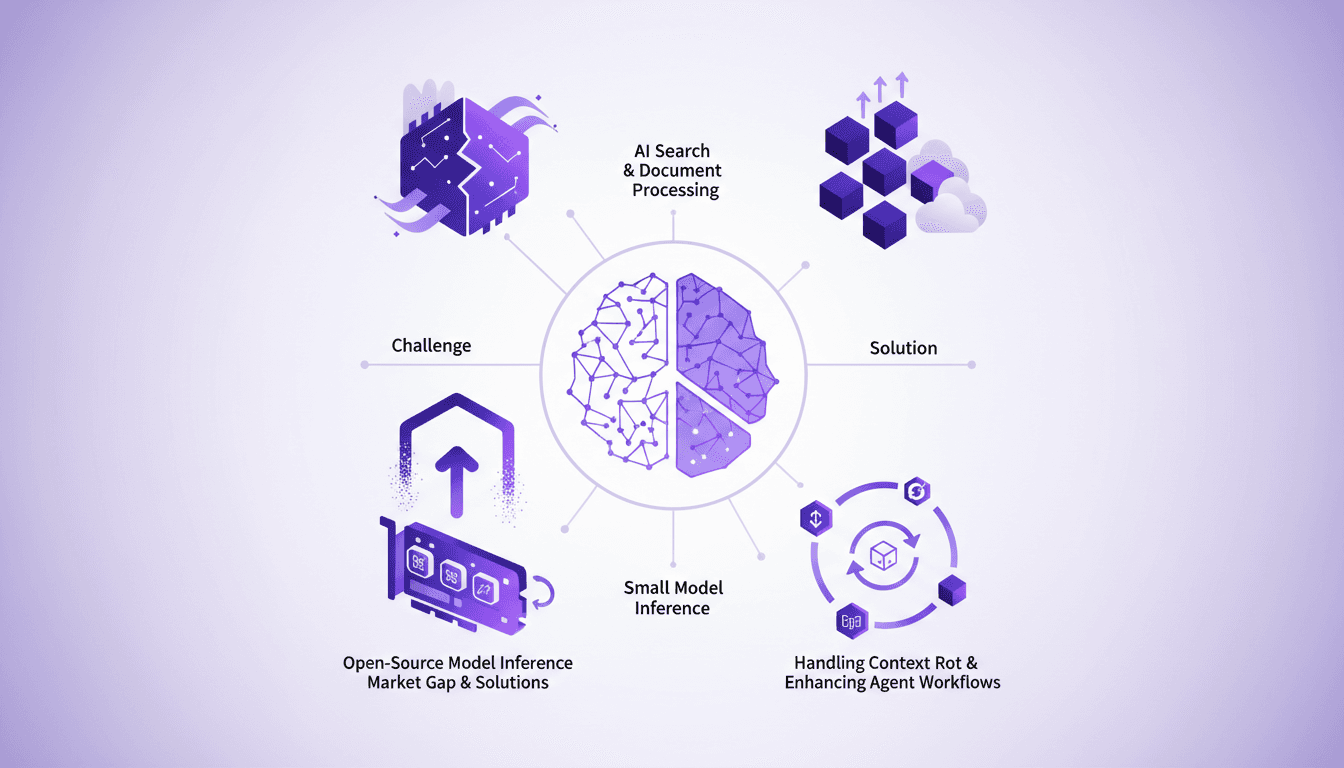

Optimizing AI Models: Our Practical Approach

I saw a gap in the market for small model inference, so I decided to build the infrastructure myself. With over 3 million models on Hugging Face, the lack of effective infrastructure was glaring. Why is this important? Because small models play a crucial role in AI search and document processing. And the challenges? There were plenty, but I tackled them head-on. From context rot to optimizing agent workflows, each step was a learning curve. I orchestrated model swapping for efficient GPU usage while supporting various open-source architectures. In short, we filled a market gap with a robust, scalable solution.

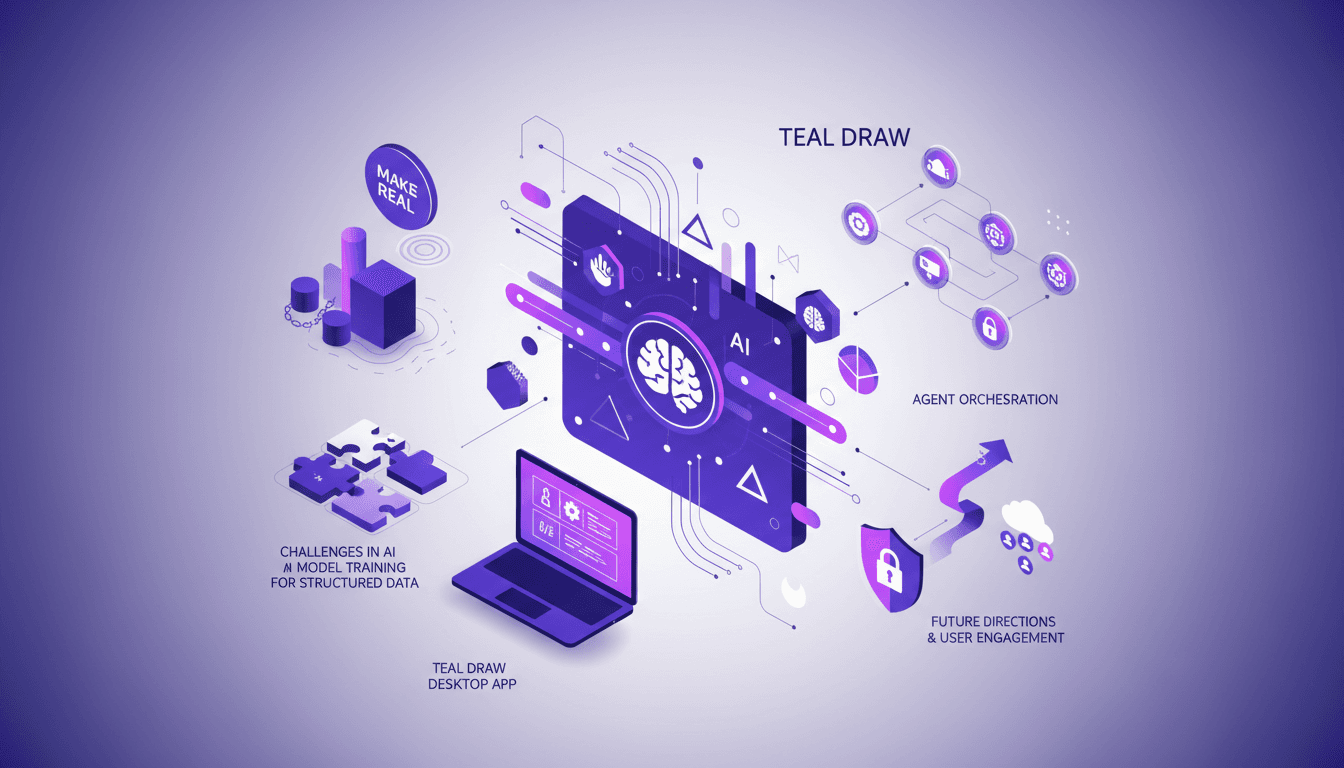

Agents on Canvas: Orchestrating in tldraw

I remember the first time I played with tldraw — I connected my React components and watched as the canvas came alive. It's not just about drawing; it's about orchestrating AI agents right there in real-time. Let's dive into how I got this setup working and what you can expect. In this journey, we'll explore AI integration, agent orchestration, and practical applications. I'll share insights from the Make Real project, AI model training challenges, and the security measures I implemented. It's all about making complex AI concepts actionable and efficient.

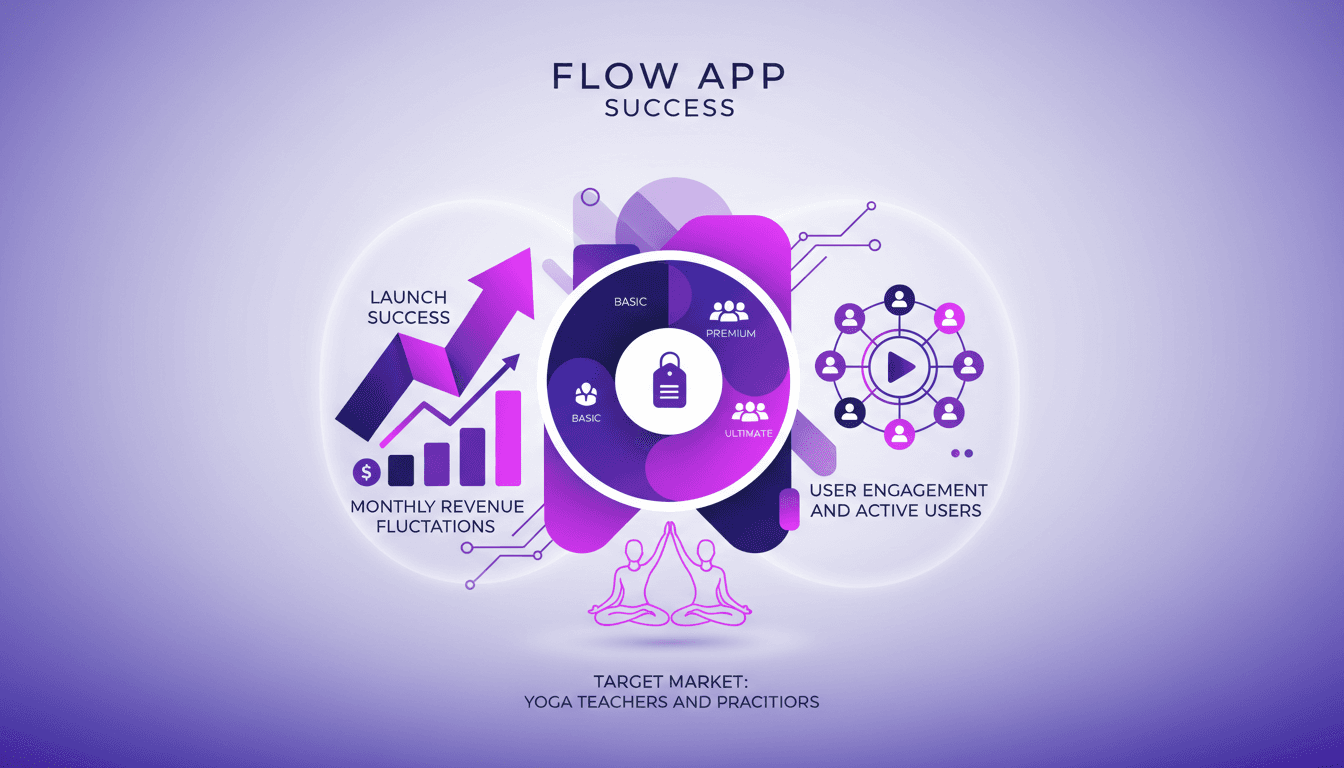

Flow App's Rapid Success: $120K in 24 Hours

I launched Flow App and hit $120K in 24 hours. Sounds crazy, right? Trust me, it was a wild ride with plenty of bumps. We built this app for yoga teachers and practitioners, transforming their workflow with a subscription model. Here’s the full story: the launch strategy, the hiccups, the wins, and the lessons learned along the way. It was a rollercoaster (I definitely got burned a few times). But ultimately, the business impact was direct: engaged users, revenue ups and downs, and a clearly defined target market. No theory here, just concrete reality.

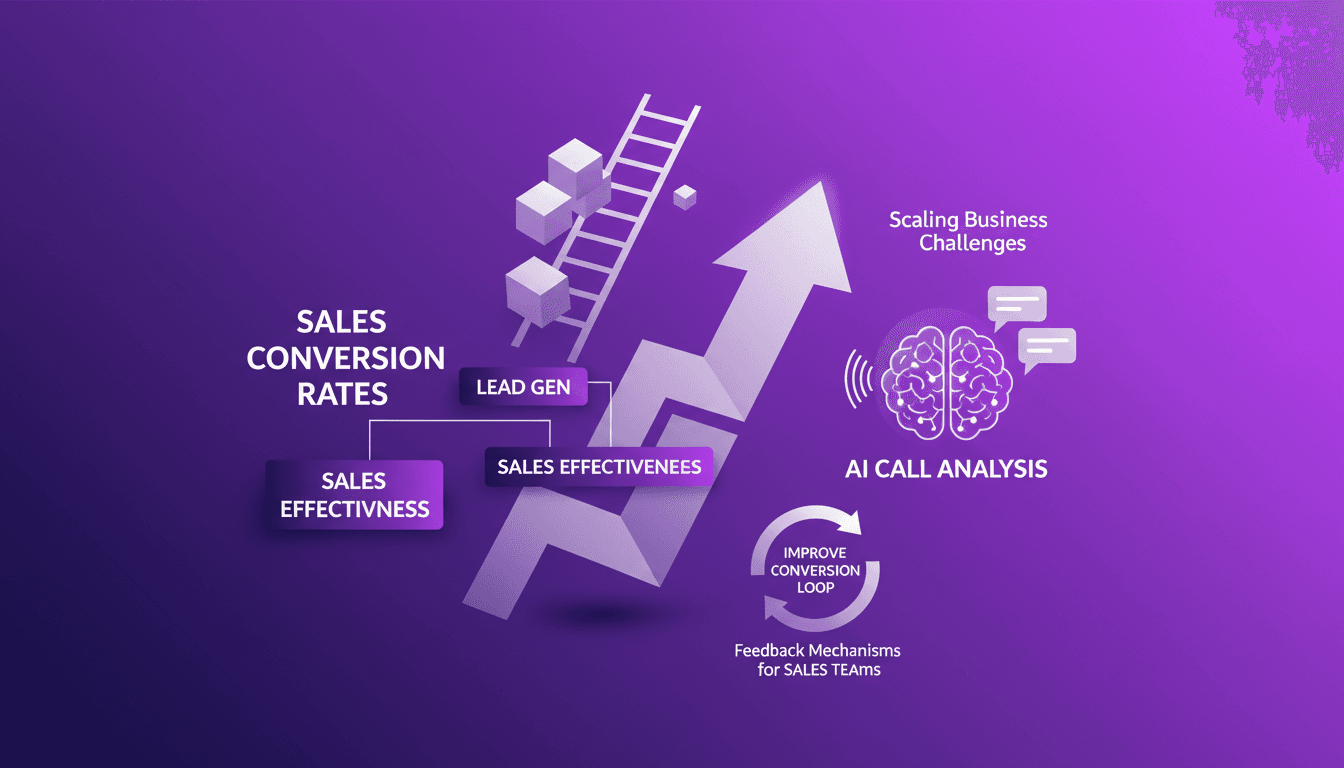

Boosting Conversion Rates with AI

I've been in the trenches of sales for years, and nothing stings more than seeing a pile of leads convert to dust. But here's the kicker: AI can turn that around if you know how to wield it. In this article, I'll walk you through leveraging AI to boost your conversion rates. We'll dive into real-world workflows, dodge common pitfalls, and see how AI can truly make a difference, especially in analyzing sales calls. Basically, if you want your stack of 100 leads to convert beyond just 15 closings, it's time to harness these AI tools.

Singing Training: Becoming Pro & Overcoming Doubts

I remember the first time I dreamed of standing on a big stage, the lights, the crowd, the music. It felt like a distant fantasy. Yet, after a decade of intense training and personal hurdles, I'm set to face an audience of 1000 people on May 22nd. Let me share how I overcame my doubts, secured financial support as an artist, and kept that Grammy dream alive. My journey hasn't been a smooth ride, but it's taught me to orchestrate my own successes and navigate the choppy waters of the music industry. Stick around to see what's in store on that stage.