Voice AI: Practical Challenges and Opportunities

I've been knee-deep in voice AI for a while now, and let me tell you, it’s a wild ride. You think we're close to the 'Her' moment? Not quite yet, but we're getting there. At Gradian AI, we're pushing the boundaries of voice technology, but real-world challenges like latency and tool call unpredictability are still very much part of the picture, and you need to understand them to make real progress. Full duplex models are a game changer, if you know how to handle them. I've been burned before, trust me. We'll also look at on-device processing for privacy and cost-effectiveness, and what all this means for the future of voice AI. So let's dig into Neil Zeghidour's talk and see what's really happening behind the scenes at Gradian AI.

Gradian's Mission and Voice AI Potential

Gradian is like the craftsman of the future. We're not just commenting; we're building. Our mission? To unlock the unrealized potential of voice AI. Imagine a world where human-computer interaction is as natural as a conversation with friends. That's our goal. We focus on voice models, from speech-to-text to speech-to-speech and translation. But let's not kid ourselves: current limitations like latency and tool call unpredictability remind us that the 'Her' moment is still a bit off. Understanding the underlying technology is crucial to harnessing its full potential.

"Man, have you seen what Gradian is doing with voice cloning? It's kind of crazy."

Gradian was founded two years ago, supported by philanthropists like Eric Schmidt. Our approach is open and research-oriented, and we develop solutions like Moshi and Pocket TTS. We aim to be the model provider for anyone building voice agents.

Challenges in Voice AI: Latency and Tool Call Unpredictability

In practice, latency is our arch-nemesis. Picture 200 ms of latency for text-to-speech, plus anywhere from 500 ms to 4 s for a tool call. In a human conversation, delays like that are deal-breakers, so we need strategies to hide or reduce them. One is to use fillers that keep the conversation flowing while the system waits for results. But watch out: tool call unpredictability is still a headache. When the same call can take half a second on one turn and four seconds on the next, the system feels inconsistent from turn to turn.
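To make the filler idea concrete, here is a minimal sketch of how I'd wire it up with asyncio. Everything in it is an assumption for illustration: `slow_tool_call` is a hypothetical stand-in for a real tool, the 0.2 s threshold is arbitrary, and "speaking" is just appending text to a list instead of calling a TTS engine. It is not how Gradian does it, just one way to express the pattern.

```python
import asyncio

# Assumed threshold: if the tool call is still running after this long, say a filler.
FILLER_AFTER_S = 0.2


async def slow_tool_call(delay_s: float) -> str:
    """Hypothetical tool call; the sleep stands in for a real network request."""
    await asyncio.sleep(delay_s)
    return "Your order ships tomorrow."


async def answer_with_filler(tool_delay_s: float, spoken: list[str]) -> None:
    """Start the tool call, speak a filler if it is slow, then speak the result."""
    task = asyncio.create_task(slow_tool_call(tool_delay_s))
    # Wait up to FILLER_AFTER_S; the task keeps running if the timeout expires.
    done, _pending = await asyncio.wait({task}, timeout=FILLER_AFTER_S)
    if not done:
        spoken.append("Let me check that for you...")
    spoken.append(await task)


def run_demo(tool_delay_s: float) -> list[str]:
    """Return everything the agent 'said' for one turn, in order."""
    spoken: list[str] = []
    asyncio.run(answer_with_filler(tool_delay_s, spoken))
    return spoken


if __name__ == "__main__":
    print(run_demo(0.0))  # fast tool call: no filler needed
    print(run_demo(0.5))  # slow tool call: filler first, then the answer
```

The point of the design is that the filler is only spoken when the call is actually slow, so fast turns stay snappy and slow turns don't leave dead air.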

Full Duplex vs Half Duplex Models: The Real Deal

Let's talk about full duplex and half duplex models. Full duplex models listen and speak at the same time, which allows more natural interactions with overlapping speech, like in a real conversation. Half duplex models, on the other hand, can only listen or speak, not both simultaneously. Moshi, for example, is described in the talk as the only full duplex model currently available. Gradian uses full duplex models to create more human-like interactions, but you must be ready to manage the trade-offs in complexity and in the resources needed.

- Full duplex models are more natural but complex to implement.

- Moshi is an example of a full duplex model.

- Half duplex models are simpler but less natural.
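The difference is easiest to see with an interruption. The toy simulation below is a sketch under my own assumptions (the `Frame` type and both functions are hypothetical, and real models work on audio streams, not strings): it splits a conversation into frames and shows which user speech each kind of model gets to hear.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Frame:
    """One time slice of the conversation (hypothetical, for illustration only)."""
    user_audio: Optional[str]  # what the user says in this frame, if anything
    agent_speaking: bool       # whether the agent is producing audio in this frame


def heard_half_duplex(frames: List[Frame]) -> List[str]:
    """Half duplex: the agent listens only in frames where it is not speaking."""
    return [f.user_audio for f in frames if f.user_audio and not f.agent_speaking]


def heard_full_duplex(frames: List[Frame]) -> List[str]:
    """Full duplex: the agent listens in every frame, even while it is speaking."""
    return [f.user_audio for f in frames if f.user_audio]


# The user asks a question, then interrupts while the agent is answering.
conversation = [
    Frame("what's", False),
    Frame("the weather", False),
    Frame(None, True),
    Frame("wait, stop", True),
    Frame(None, False),
]

if __name__ == "__main__":
    print(heard_half_duplex(conversation))  # the interruption is missed
    print(heard_full_duplex(conversation))  # the interruption is heard
```

In this toy setup the half duplex agent never hears "wait, stop" because it arrived while the agent was talking. That is the naturalness gap, and handling both streams at once is where the extra complexity comes from.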

Speech-to-Speech Models and On-Device Processing

Speech-to-speech models cut latency by handling speech input and output in a single model instead of chaining separate stages, which significantly reduces processing time. But the real game-changer is on-device processing, which is both more private and more cost-effective. Gradian introduced Gradion Phonon, an on-device text-to-speech solution, which promises to be a turning point in terms of cost and privacy.
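Here is the back-of-the-envelope arithmetic behind that claim, as a small sketch. Only the 200 ms text-to-speech figure comes from this article; every other number is a placeholder I made up to show the calculation, not a measurement of any real system, and the stage names are hypothetical.

```python
from typing import Dict


def total_latency_ms(stages: Dict[str, float]) -> float:
    """Sequential stages add up: total latency is the sum of the stage latencies."""
    return sum(stages.values())


# Cascaded pipeline: each stage waits for the previous one to finish.
cascaded = {
    "speech_to_text": 300.0,   # PLACEHOLDER, not a measurement
    "language_model": 400.0,   # PLACEHOLDER, not a measurement
    "text_to_speech": 200.0,   # figure quoted in this article
}

# Single speech-to-speech model: one stage, no hand-offs between models.
speech_to_speech = {
    "speech_to_speech_model": 350.0,  # PLACEHOLDER, not a measurement
}

saving_ms = total_latency_ms(cascaded) - total_latency_ms(speech_to_speech)

if __name__ == "__main__":
    print(f"cascaded:         {total_latency_ms(cascaded):.0f} ms")
    print(f"speech-to-speech: {total_latency_ms(speech_to_speech):.0f} ms")
    print(f"saving:           {saving_ms:.0f} ms")
```

Swap in your own measured numbers before drawing conclusions. The structure is what matters: in a cascade every stage adds to the wait, while a single model has only one stage to pay for.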

The Future of Voice AI: Are We Close to the 'Her' Moment?

So, where are we with voice AI, and what's next? To reach the 'Her' moment, we still need advancements in latency, complexity, and contextual understanding. Continuous improvement and iteration are key to achieving these future goals. The societal impacts of advanced voice AI could be massive, changing how we work, communicate, and even learn. But we have a long road ahead, and each step is crucial.

- Continuous improvement and iteration are essential.

- Latency and contextual understanding need to be improved.

- Societal impacts could transform our habits.

So where are we with voice AI? Not quite at the 'Her' level yet, but we're getting there. Here's my breakdown:

- Latency and models: Understanding latency and the type of model you use is crucial. Too much latency can kill the user experience, and choosing between full duplex and half duplex models can be a game changer.

- On-device processing: Models processing on-device hold promise, but watch out for limitations in processing power.

- Practical applications: Speech-to-speech models are already transforming specific sectors, but keep an eye on unpredictability in tool calls.

Looking forward, it's all about continuing to test and understand these complexities. Every iteration gets us closer to that 'Her' moment. Watch the full video for the deep dive; it’s worth it. Keep experimenting and sharing your findings with the community.

Thibault Le Balier

Co-founder & CTO

Coming from the tech startup ecosystem, Thibault has developed expertise in AI solution architecture that he now puts at the service of large companies (Atos, BNP Paribas, beta.gouv). He works on two axes: mastering AI deployments (local LLMs, MCP security) and optimizing inference costs (offloading, compression, token management).

Related Articles

Discover more articles on similar topics

Daily Routine: Maximize Productivity

I wake up every day with the same routine, and it's not just a habit—it's my secret weapon. No phone, no distractions, just pure focus. Let me walk you through how I turned this into a $77K/month gig. In our fast-paced tech world, distractions are endless. Yet, maintaining a consistent routine can be your biggest ally in achieving productivity and financial success. I'll show you how I structure my days to maximize efficiency, dodge FOMO, and ensure every day kicks off with unwavering focus.

Token Maxing: Building Software Efficiently

I returned to coding after years in management, and it felt like coming home. But the landscape had shifted. Tools evolved, and so did my approach. In this journey, I'll show you how I developed 'Gary's List' and tackled the challenge of token maxing. We'll dive into my plan-ge-review method and the impact of personal AI. This is a hands-on guide to navigating modern software development, comparing tools, and reflecting on quality education. And yes, I spent 200 dollars on a Claude Code Max account, but the payoff was worth it.

Agentic Search: Boosting Context Engineering Efficiency

I remember diving into context engineering with a fixed pipeline mindset. It was limiting, cumbersome, and frankly, a bit outdated. But then, I discovered agentic search—game changer. In this article, I walk you through how I transformed my approach. We shift from rigid logic to dynamic tools like Lang Chain that enhance our search capabilities. We'll talk challenges, parameter complexity, and hybrid tools that make a difference. If you're in the field, you know that context engineering is about 80% agentic search.

Claude Design Intro: 10-Minute Quickstart Guide

I jumped into Claude Design by Anthropic, curious if it could really deliver what it promised in just 10 minutes. Spoiler: It did, and here's how. As someone who's navigated the design landscape for years, I was eager to see if Claude Design could streamline my workflow and save time without compromising quality. In just 10 minutes, I explored its features, created projects with simple prompts, and optimized my designs with AI tools. Compared to other design tools, Claude Design stands out for its simplicity and efficiency. I'm sharing it all, step-by-step, like chatting with a colleague over coffee.

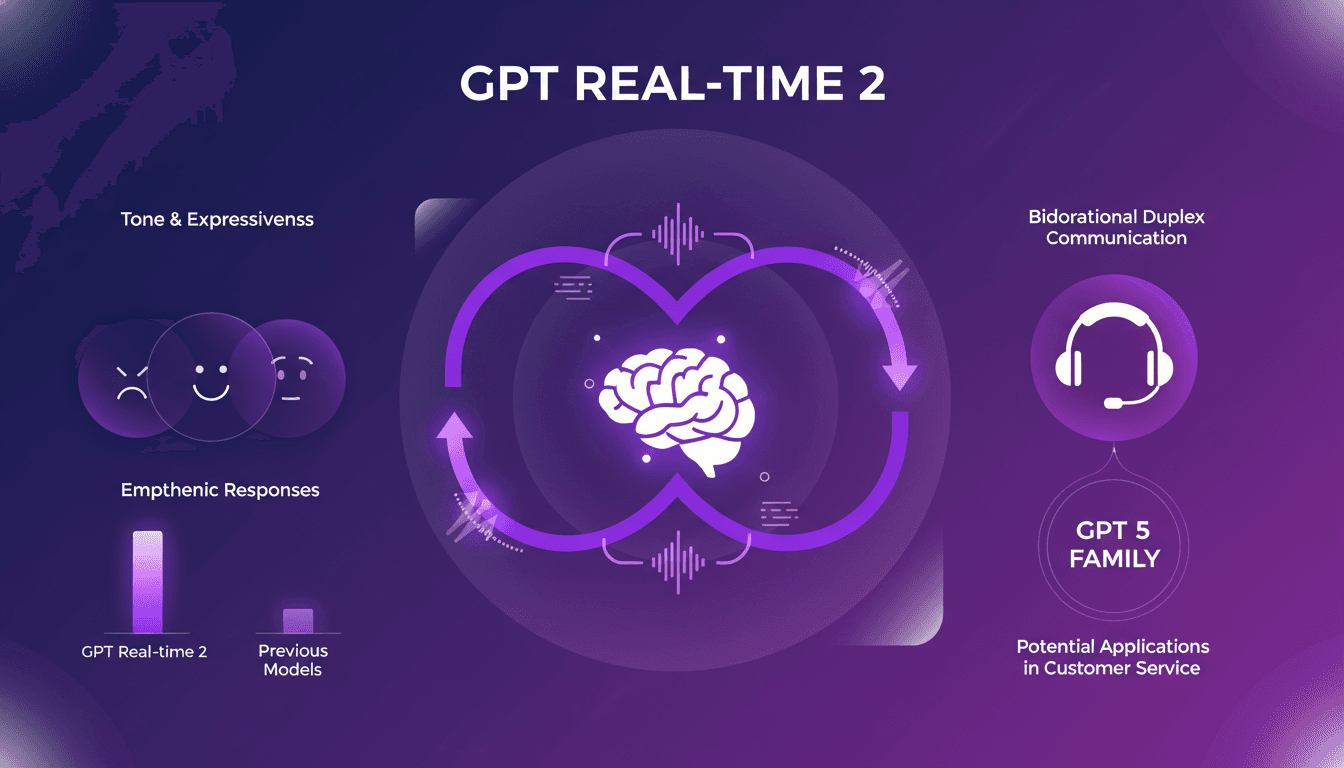

Integrate GPT Realtime-2 into Your Voice Agents

I've been hands-on with GPT Realtime-2, and let me tell you, it's a game changer for voice agents. When I first integrated it, the fluidity and responsiveness blew me away. As someone who's in the trenches with AI models, I know the pain points of latency and lack of expressiveness. GPT Realtime-2 directly addresses these, and it's not just hype. The bidirectional duplex communication and improved tone expressiveness are significant. Responses are more empathetic, conversations more lifelike. Compared to previous models, it's a leap forward. In customer service, the potential applications are vast. Integrated into the GPT 5 family, this model redefines the limits of what voice agents can achieve.